DeepSWIR: A Deep Learning Based Approach for the Synthesis of Short-Wave InfraRed Band using Multi-Sensor Concurrent Datasets

Convolutional Neural Network (CNN) is achieving remarkable progress in various computer vision tasks. In the past few years, the remote sensing community has observed Deep Neural Network (DNN) finally taking off in several challenging fields. In this…

Authors: Litu Rout, Yatharath Bhateja, Ankur Garg

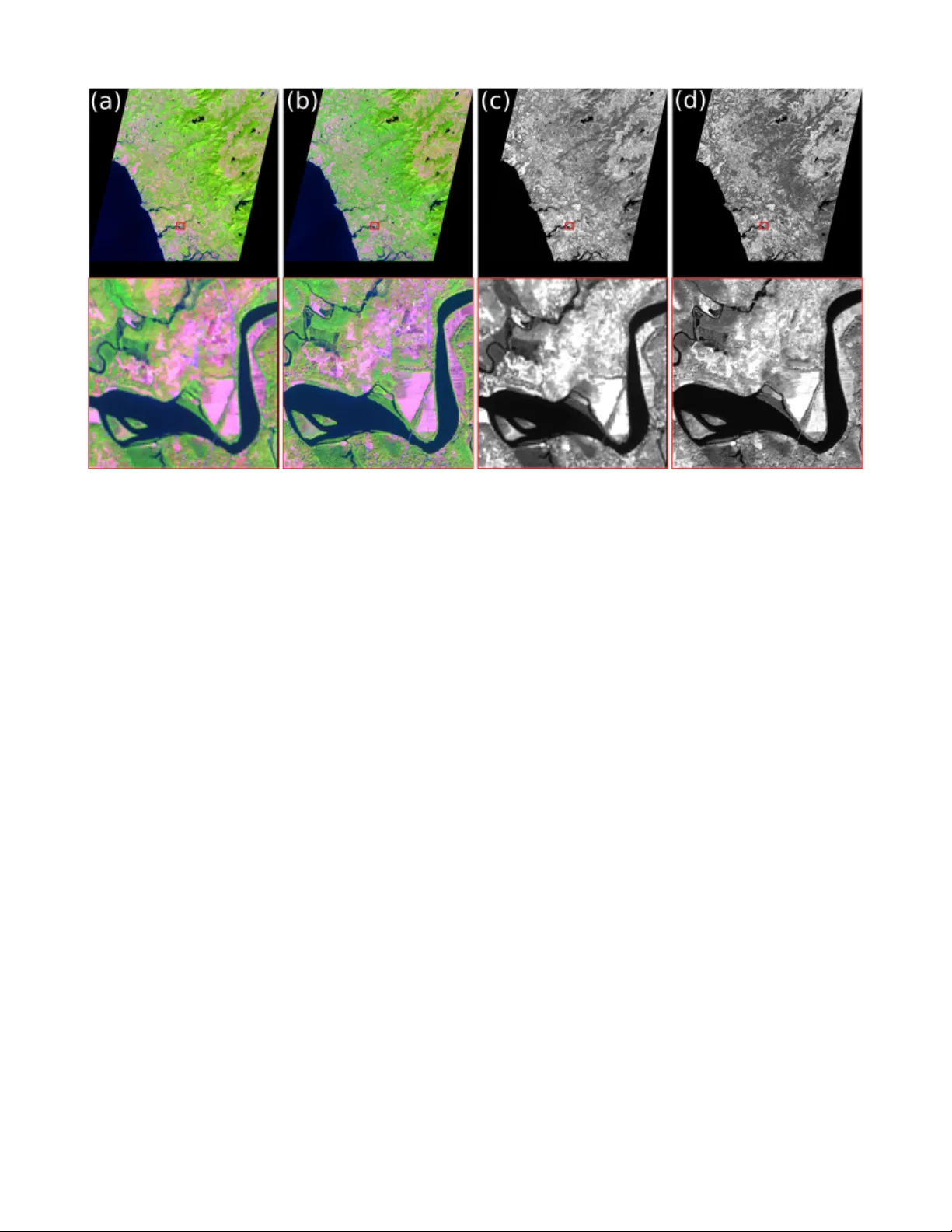

Litu Rout, Yatharath Bhateja, Ankur Garg, Indranil Mishra, S Manthira Moorthi, and Debjyoti Dhar

Abstract: Convolutional Neural Networks (CNNs) are achieving remarkable progress in various computer vision tasks. In the past few years, the remote sensing community has observed Deep Neural Networks (DNNs) finally taking off in several challenging fields. In this study, we propose a DNN to generate a predefined High Resolution (HR) synthetic spectral band using an ensemble of concurrent Low Resolution (LR) bands and existing HR bands. Of particular interest, the proposed network, namely DeepSWIR, synthesizes the Short-Wave InfraRed (SWIR) band at 5m Ground Sampling Distance (GSD) using Green (G), Red (R) and Near InfraRed (NIR) bands at both 24m and 5m GSD, and the SWIR band at 24m GSD. To our knowledge, the highest spatial resolution of a commercially deliverable SWIR band is 7.5m GSD. Also, we propose a Gaussian feathering based image stitching approach for processing large satellite imagery. To experimentally validate the synthesized HR SWIR band, we critically analyse the qualitative and quantitative results produced by DeepSWIR using state-of-the-art evaluation metrics. Further, we convert the synthesized DN values to Top Of Atmosphere (TOA) reflectance and compare them with the corresponding band of Sentinel-2B. Finally, we show one real world application of the synthesized band by using it to map wetland resources over our region of interest.

Index Terms: Remote Sensing, Convolutional Neural Network, Band Synthesis, Super Resolution, Image Stitching.

I. INTRODUCTION

Numerous Remote Sensing (RS) applications rely strongly on earth observation images that are highly resolved in the spatial domain and preserve the spectral characteristics of individual objects [1], [2], [3], [4].
In the recent decade, several optical and Synthetic Aperture Radar (SAR) satellites were launched with high spatio-spectral resolution, opening unprecedented opportunities in applications including Object Detection [5], Image Retrieval [6], Automatic Target Recognition [7], Semantic Segmentation [8], Terrain surface classification [9], detection of archaeological features in modern landscapes [10], biomass assessment [11], identification of forest cutting [12] etc.

(All authors are with the Optical Data Processing Division, Signal and Image Processing Group, Space Applications Center, Indian Space Research Organisation, Ahmedabad, India - 380015. Corresponding mail ids: (lr, ybhateja, agarg, indranil, smmoorthi, deb)@sac.isro.gov.in)

The extrapolation of Visible and Near InfraRed (VNIR) bands farther into the InfraRed (IR) spectrum makes it possible to capture rich features uniquely associated with applications such as material identification, wildfire response, mining/geology etc. Due to the minimal influence of atmospheric noise, fog, smoke etc. on the SWIR band, this band is relatively more suitable for the aforementioned applications than the VNIR bands. Despite the usefulness of the SWIR band, very few RS satellites are capable of providing high resolution spectral bands in this particular range of the Electro Magnetic Spectrum (EMS). To our knowledge, the highest spatial resolution of a commercially deliverable SWIR band is 7.5m, which is provided by WorldView-3 [13]. Of particular interest, the Indian Remote Sensing (IRS) satellite Resourcesat-2A provides VNIR and SWIR at 24m spatial resolution using the Linear Imaging and Self Scanning Sensor (LISS-III). However, the LISS-IV sensor present in Resourcesat-2A provides only VNIR bands at 5m spatial resolution.
For this reason, generation of a synthetic High Resolution (HR) SWIR (5m) band that preserves the spectral characteristics of the Low Resolution (LR) SWIR (24m) band, beyond naive interpolation [14] and data fusion [15], is the primary motive of this study. To tackle this problem, we propose a customized deep neural network (DeepSWIR) that borrows integral parts from state-of-the-art CNN architectures [16], [17], [18], [19], is trained only on the LR concurrent bands of LISS-III (24m), and is specifically designed to synthesize LISS-IV SWIR at 5m spatial resolution.

A vast majority of the RS community addresses the issue of generating HR spectral bands by posing it as a super resolution problem and hallucinating the missing HR details in the LR bands. In the context of the current scenario, a similar objective would be to super-resolve the LISS-III bands at 24m GSD to the corresponding LISS-IV bands at 5m GSD, thereby meeting our objective of synthesizing the required spectral band, i.e., $SWIR_{5m}$. To construct training data for such supervised learning, both LR and HR bands corresponding to the same region on the ground and acquired at the same time are necessary, which is not practically viable even with sophisticated technology. In particular, this approach, without any assumption, is not feasible at present for faithful super-resolution of our band of interest due to the unavailability of $SWIR_{5m}$. However, a practically viable way of solving the problem, as proposed by Charis et al. [20], is to assume that the mapping from LR to HR bands is roughly scale-invariant and depends only on the relative difference in resolution, i.e., $\{20m\} \rightarrow \{10m\} \equiv \{40m\} \rightarrow \{20m\}$. Thus, virtually unlimited data can be prepared by downsampling our 24m bands to a required level and learning how to transfer the necessary HR details from the concurrent LR bands at the required level to the existing bands at 24m.
Then the trained model is applied to bands at 24m and expected to improve the resolution by the same factor as from the required level to 24m. Nevertheless, the process of constructing training data in such a manner involves downsampling, filtering (e.g. Gaussian blurring) etc., which inherently injects noise into the training data. Though this method is quite successful in learning the inverse mapping, it reduces the scientific correctness necessary for our application with the required degree of realism. Therefore, we propose to learn the spectral mapping directly from real bands of LISS-III, $\{G, R, NIR\}_{24m} \rightarrow \{SWIR\}_{24m}$, while preserving the spatial characteristics. Then we feed the existing HR bands of LISS-IV, $\{G, R, NIR\}_{5m}$, to the trained model and expect a virtual band that establishes the learned LR spectral mapping while preserving the input spatial resolution, i.e., 5m GSD. The underlying hypothesis is that the model establishes, in the hidden layers of a very deep neural network, a robust spectral mapping of various objects as a function of the concurrent LR bands, and that this mapping does not undergo drastic changes across spatial resolution. Fig. 1 illustrates the pipeline of our overall framework. Fig. 2 pictorially depicts the qualitative assessment of the proposed HR band synthesis using our customized DeepSWIR.

Fig. 1. Pipeline of the proposed framework. The optimal set of trainable parameters, $\theta^{*}$, is obtained by training the DeepSWIR model end to end on the low resolution concurrent multi-spectral bands of LISS-III. The learned set of parameters is then used to synthesize a virtual high resolution band, $SWIR_{5m}$, by leveraging the spectral characteristics from LISS-III and the spatial characteristics from LISS-IV.

Our contributions are summarized as three-fold.
• First, we propose a unified deep learning based framework for synthesizing required HR spectral band(s) using LR and existing HR concurrent bands.
The proposed model, namely DeepSWIR, leverages the spectral characteristics from LR bands and the spatial characteristics from HR bands.
• In this process, we propose an architecture that performs both global and local residual learning in order to fill in the missing high frequency details of the LR band. Thus, the combined residual learning allows adaptive fusion of deep and shallow features for the synthesis of the required band.
• Further, we propose a simple yet effective approach for processing large satellite images. By this approach, we process a large number of overlapping crops ([32x32]) of a large satellite image and stitch them together using our Gaussian feather mosaicing scheme for seamless band generation.

In the following Section II, we briefly discuss various approaches intended to address problems similar to ours, i.e., generating required HR spectral band(s) using LR bands and existing HR bands. Thereafter, we detail the proposed methodology in Section III and the experimental details in Section IV to critically analyse the performance of the proposed DeepSWIR. Finally, we draw inferences from the discussion and present concluding remarks in Section V.

II. RELATED WORK

Super-resolution enhancement techniques have gradually developed from naive interpolation [21] to CNN based supervised [22] and unsupervised approaches [23]. A popular class of conventional algorithms, designed to address similar RS applications, attempts to enhance the spatial resolution of the LR bands by fusing the high frequency details from Panchromatic (Pan) images. The smaller pixel size that leads to high spatial resolution in Pan images is the primary contributing factor in image fusion. This method of pan-sharpening, however, is affected by dissimilar modalities caused by different spectral and temporal acquisition even after geometric registration. In addition, the lack of Pan bands in Resourcesat-2A and the unavoidable shortcomings of fusion based schemes call for a new technique for our application.

Fig. 2. Synthesis of $SWIR_{5m}$ by leveraging spatial and spectral characteristics from $\{G, R, NIR\}_{5m}$ and $\{G, R, NIR, SWIR\}_{24m}$, respectively. (a,b) and (c,d) represent the paired False Colour Composite (FCC) of LISS-III (24m) ($SWIR(R), NIR(G), R(B)$) and LISS-IV (5m) ($DeepSWIR(R), NIR(G), R(B)$) satellite imagery. (e,f) and (g,h) represent their corresponding $SWIR$ bands. The FCC verifies that the synthesized $SWIR_{5m}$ is auto-registered with the existing LISS-IV bands and hence maintains the spatial resolution. The paired $SWIR_{24m}$ and $SWIR_{5m}$ assure that the spectral characteristics are preserved.

As per recent studies [24], [25], [26], CNN based approaches offer considerable gain over bicubic and sophisticated neighbourhood regression methods on non-remote sensing benchmarks such as CelebA [27] or ImageNet [28] in similar tasks. Dong et al. [22] proposed the Super-Resolution Convolutional Neural Network (SRCNN), which outperformed the then state-of-the-art methods by a large margin. Among generative models, Christian et al. [23] proposed Photo-Realistic Single Image Super-Resolution using a Generative Adversarial Network (SRGAN), which pushed the synthetic images towards the natural image manifold and made them perceptually more convincing. Kim et al. [29] discussed the idea of recursive or shared weights in the Deeply-Recursive Convolutional Network (DRCN), which performed favourably against state-of-the-art methods with relatively fewer parameters. Mao et al.
[30] proposed a very deep encoder-decoder architecture with residual connections, which performed better than SRCNN in super resolution tasks. Shi et al. [31] developed the Efficient Sub-Pixel Convolutional Neural Network (ESPCN), which increases the resolution only in its last layer, thereby reducing the computational and space complexity by a large margin. Jin et al. [32] proposed a Deep CNN with Skip Connection and Network in Network (DCSCN), which extracts cues from both local and global areas by leveraging a CNN with skip connections and the Network in Network (NIN) architecture [33].

Among RS satellite imagery, Charis et al. [20] integrated residual blocks in a customized CNN, namely DSen2, to generate super-resolved imagery of Sentinel-2 at 10m GSD. This method, however, operates under the assumption of scale-invariance, which diverges from our objective of learning the spectral mapping from the existing bands directly while preserving the spatio-spectral characteristics. In contrast, the proposed deep learning model (DeepSWIR), unlike DSen2, remains less susceptible to the adverse effects of the noise injected into the training data by resampling, mainly because existing bands are used in training DeepSWIR. Thus, deep learning being a data driven process, relatively more appropriate information regarding the spatial and spectral characteristics of individual objects flows from the LR LISS-III data to the DeepSWIR model, which is transferred to the HR LISS-IV data without loss of generality. Darren et al. [34] assessed the effectiveness of shallow and deep CNNs for super-resolution tasks on satellite imagery. As per their exploratory analysis, the SRCNN [22] with deep connectivity and residual connections (DCR SRCNN) [34] provided the best results across their regions of interest.
In this study, we observed a similar trend in performance with respect to the depth of the network, and hence we implemented a deeper network that borrows concepts from a few of the latest developments in this area [16], [17], [18], [19].

III. PROPOSED METHODOLOGY

Here, we detail our method by expanding the fundamental components of the end-to-end framework. First, we briefly discuss the satellite imagery under the scope of our study in Section III-A. Thereafter, we describe the datasets used in training and cross-validation in Section III-B, the customized DeepSWIR architecture in Section III-C, and the image stitching mechanism using Gaussian weights in Section III-D.

A. Resourcesat-2A Satellite Imagery

The proposed DeepSWIR exploits the similarity and concurrency associated with the sensors LISS-III and LISS-IV on board the Indian Space Research Organisation's (ISRO) Resourcesat-2A mission. In particular, we take advantage of the identical wavelength ranges in the VNIR part of the EMS and thus create a representative dataset for training the model without altering the pure spectra of the acquired bands. Due to simultaneous acquisition, the LISS-IV sensor's 70 km swath overlaps with the 140 km swath of the LISS-III sensor, thereby providing a unique opportunity to create concurrent datasets under near identical environmental conditions [35].

TABLE I
LISS-III AND LISS-IV SENSOR CHARACTERISTICS

| Characteristic | LISS-III | LISS-IV |
|---|---|---|
| Band2 (G) wavelength range (µm) | 0.52-0.59 | 0.52-0.59 |
| Band3 (R) wavelength range (µm) | 0.62-0.68 | 0.62-0.68 |
| Band4 (NIR) wavelength range (µm) | 0.77-0.86 | 0.77-0.86 |
| Band5 (SWIR) wavelength range (µm) | 1.55-1.70 | - |
| Swath Width (km) | 140 | 70 |
| Spatial Resolution (m) | 24 | 5 |
| Radiometric Resolution (bit) | 10 | 10 |
| Temporal Resolution (days) | 24 | 48 |

The Resourcesat-2A satellite orbits in a sun-synchronous orbit at an altitude of 817 km.
The satellite completes about 14 orbits per day, taking 101.35 minutes for one revolution around the earth. To cover the entire earth, it takes around 341 orbits during a 24-day cycle. Both LISS-III and LISS-IV have VNIR bands in the same range of the EMS, but the SWIR band is present only in the LISS-III sensor. The LISS-III sensor covers a 140 km swath with a deliverable product at 24m spatial resolution, whereas the LISS-IV sensor covers a 70 km swath at 5m spatial resolution. Both LISS-III and LISS-IV have the same radiometric resolution of 10 bits. The characteristics of the LISS-III and LISS-IV sensors are given in Table I [35].

B. Preparation of Representative Dataset

One of the major challenges in supervised deep learning algorithms is the preparation of paired representative datasets that cover the area of interest. As our area of interest is limited to different regions of India, we choose a few representative path & row combinations as per the Worldwide Reference System (WRS), which cover different unique object signatures in the SWIR range of the EMS. Of particular interest, the training dataset is constructed to learn the spectral characteristics of urban areas, water bodies (lake, river, ocean etc.), vegetation, barren land, snow, ice, cloud, desert sand, white sand etc. For validation and testing purposes, a similar representative dataset is constructed to analyze the network's generalization performance across seasonal variation, different path & row combinations and different spatial resolutions. The Date of Pass (DOP), path, row and area covered by the tiles used in training, validation and testing of DeepSWIR are presented in Table II, and a sample subset of the data is shown in Fig. 3.

C. Network Architecture

The overall architecture of the proposed DeepSWIR is shown in Fig. 4. As shown in Fig. 4, the DeepSWIR model encompasses two major learning mechanisms: global and local residual learning.
The local residual learning, as part of a Local Residual Feature Extraction unit ($F_{LRFE}(\cdot)$), is guided by a Shallow Feature Extraction module ($F_{SFE}(\cdot)$) and cascaded Residual Blocks ($F_{RB}(\cdot)$). The global residual learning is undertaken by a Global Residual Feature Extraction unit ($F_{GRFE}(\cdot)$). Thereafter, a dense fusion of the features extracted from local and global residual learning directly predicts the intensities within the dynamic range of the required spectral band.

Local residual learning operates on the features $F_0$ extracted by the shallow feature extraction layer,

$$F_0 = F_{SFE}(I_{SB}). \tag{1}$$

The $F_{SFE}(\cdot)$ unit consists of a single convolutional layer without any non-linear activation to extract shallow features. The shallow features are then fed as inputs to the Residual Blocks,

$$F_n = F_{RB,n}(F_{n-1}), \quad n = 1, \dots, N$$
$$\phantom{F_n} = F_{RB,n}(F_{RB,n-1}(F_{RB,n-2}(F_{RB,n-3}(\dots F_{RB,1}(F_0))))). \tag{2}$$

Here, $F_{RB,n}(\cdot)$ denotes the operations of the $n^{th}$ residual block inside the LRFE unit. The number of channels present in the internal layers of a residual block is given as the feature size in Section IV-C, Table III. As shown in Fig. 5, the residual block used in DeepSWIR is a slight variant of the original residual block with skip connections [18], in the sense that it does not use activation units at the output and is expected to adaptively capture the dynamic range of radiometric values. Hence, the output of $F_{RB,n}(\cdot)$ is computed by fusing the global features $F_{GF}$ with the scaled local features $F_{LF}$,

$$F_n = F_{n-1,GF} + F_{n-1,LF}. \tag{3}$$

Thus, the adverse effects which may arise due to deeper convolution are suppressed to some extent through guided residual learning at each stage of the LRFE module. In addition, we verify that the residual block in a deep neural network, as shown in several recent studies [18], [34], [32], introduces fast convergence and better information flow.
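The residual update of Eq. (3) can be illustrated with a small NumPy sketch. This is a minimal sketch under stated assumptions, not the authors' implementation: the internal conv-ReLU-conv layout, the helper names `conv2d_same` and `residual_block`, and the scale factor of 0.1 are all hypothetical, since the text specifies only that the local branch is scaled and that no activation is applied at the block output.

```python
import numpy as np


def conv2d_same(x, w, b):
    """Zero-padded 'same' convolution (cross-correlation, as in CNN libraries).

    x: (H, W, C_in), w: (k, k, C_in, C_out) with odd k, b: (C_out,).
    """
    k = w.shape[0]
    p = k // 2
    xp = np.pad(x, ((p, p), (p, p), (0, 0)))
    h, wd = x.shape[:2]
    out = np.zeros((h, wd, w.shape[3]))
    for i in range(k):
        for j in range(k):
            # Contribution of kernel tap (i, j) to every output pixel.
            out += xp[i:i + h, j:j + wd, :] @ w[i, j]
    return out + b


def residual_block(f_prev, w1, b1, w2, b2, scale=0.1):
    """F_n = F_{n-1,GF} + F_{n-1,LF}: identity path plus scaled local branch."""
    local = conv2d_same(f_prev, w1, b1)
    local = np.maximum(local, 0.0)      # ReLU inside the block (assumed layout)
    local = conv2d_same(local, w2, b2)
    # No activation at the output, so negative and large values pass through.
    return f_prev + scale * local


rng = np.random.default_rng(0)
features = rng.normal(size=(8, 8, 4))
w1 = rng.normal(size=(3, 3, 4, 4))
w2 = rng.normal(size=(3, 3, 4, 4))
bias = np.zeros(4)
print(residual_block(features, w1, bias, w2, bias).shape)  # (8, 8, 4)
```

With all weights set to zero the block reduces to the identity, which is the property that lets a deep cascade of such blocks start from a well-behaved mapping.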
We therefore use these blocks to address issues of a similar kind. Further, the final stage of local residual learning consists of a Local Feature Fusion ($F_{LFF}(\cdot)$) layer that operates on the features extracted from the previous layers and fuses them to maintain the channel dimension (here, 1) of the target band ($I_{TB}$),

$$F_{LFF} = F_{LFF}(F_N). \tag{4}$$

TABLE II
STATISTICS OF THE DATA USED FOR TRAINING, VALIDATION AND TESTING THE PROPOSED DEEPSWIR.

| Split | S. No. | DOP | Path | Row | Major Features (Area) |
|---|---|---|---|---|---|
| Train | 1 | 02.03.18 | 93 | 56 | Urban, Land, Vegetation (Ahmedabad) |
| Train | 2 | 19.01.18 | 99 | 67 | Water, Vegetation, Land (Kerala) |
| Train | 3 | 08.01.18 | 91 | 55 | Desert Sand, White Sand (Rajasthan) |
| Train | 4 | 05.10.17 | 97 | 49 | Snow, Ice, Cloud, Mountain (Himalaya) |
| Train | 5 | 29.01.17 | 100 | 58 | Vegetation, Water (Madhya Pradesh) |
| Train | 6 | 11.01.18 | 107 | 52 | Mountain, Snow, Vegetation (Sikkim) |
| Test | 1 | 17.11.17 | 96 | 62 | Wetland, Water, Vegetation (Goa) |
| Test | 2 | 26.11.17 | 93 | 56 | Urban, Seasonal Variation (Ahmedabad) |
| Test | 3 | 24.11.17 | 107 | 52 | Mountain, Cloud, Snow, Vegetation (Sikkim) |
| Test | 4 | 14.04.17 | 91 | 52 | Desert Sand (Jaisalmer) |

Fig. 3. Samples of the dataset used in training and cross validation of DeepSWIR. Here, $x_i$ represents the False Colour Composite (FCC) of $\{NIR(R), Red(G), Green(B)\}_{24m}$ and $y_i$ represents the corresponding concurrent $SWIR_{24m}$.

Fig. 4. Synthesis of the target band ($I_{TB}$) by fusing global ($F_{GFF}$) and local ($F_{LFF}$) features extracted from the source bands ($I_{SB}$) with carefully crafted Global Residual Feature Extraction (GRFE) and Local Residual Feature Extraction (LRFE) blocks.

Fig. 5. Residual Blocks (RB) used in LRFE.

Global Residual Learning, in contrast, operates on the source bands $I_{SB}$ and extracts shallower features while preserving the higher level structures present in the source imagery. The GRFE unit is built upon fully convolutional layers with kernel size [1x1]. One way to understand the operations of $F_{GRFE}(\cdot)$ is that it learns to preserve the necessary spatial characteristics of the source imagery. Thereby, it contributes significantly to reconstructing the target band at the same spatial resolution as that of the source band without deteriorating the spatial characteristics,

$$F_{GFF} = F_{GRFE}(I_{SB}). \tag{5}$$

On the other hand, the LRFE unit is expected to contribute mainly towards learning the spectral mapping from the source bands to the target band. Thus, the required target band is synthesized by combining the spatial and spectral information in the form of global and local residual feature fusion, respectively,

$$I_{TB} = F_{GFF} + F_{LFF}. \tag{6}$$

D. Gaussian Feathering for Image Stitching

One of the major difficulties in employing deep learning on satellite imagery is processing large volumes of data. Unlike non-remote sensing datasets, it is rarely feasible to efficiently process a full satellite image through deep convolutional networks. A common solution is to randomly crop several small portions of each individual large tile and train neural networks on this exhaustive cropped dataset. Though this approach reduces the computational complexity to some extent while training, it gives rise to a typical problem while reconstructing a seamless full tile from the predicted crops. In particular, it produces blocky effects when small crops are stitched to generate a full tile. To tackle this problem, we crop overlapping patches in row major order and stitch them together in the same order by our feather mosaicing scheme with Gaussian weights.

Let $x_i \in \mathbb{R}^{d \times d \times c}$, $i = 1, 2, \dots, N$ represent the ordered crops (row major) of a full tile $X \in \mathbb{R}^{m \times n \times c}$, where $d$, $c$, $N$, and $(m, n)$ represent the size of a small patch, the number of input channels (bands), the total number of crops, and the size of the input tile, respectively. The crops of the target band, $Y \in \mathbb{R}^{m \times n \times 1}$ corresponding to $X$, are synthesized by,

$$y_i = F_{DeepSWIR}(x_i), \quad y_i \in \mathbb{R}^{d \times d \times 1}. \tag{7}$$

For 50%, i.e., $d/2$ pixel overlap between two adjacent patches in a row, we define the Gaussian feathering of $y_i$ and $y_{i+1}$ to construct $y_{i,i+1} \in \mathbb{R}^{d \times (d + \frac{d}{2})}$ by,

$$
y_{i,i+1}(u,v)\big|_{u \in [1,d]} =
\begin{cases}
y_i(u,v) & \text{if } v \in [1, \frac{d}{2}) \\
y_i(u,v) \cdot \omega_i(v - \frac{d}{2} + 1) + y_{i+1}(u, v - \frac{d}{2} + 1) \cdot \omega_{i+1}(v - \frac{d}{2} + 1) & \text{if } v \in [\frac{d}{2}, d) \\
y_{i+1}(u, v - \frac{d}{2} + 1) & \text{if } v \in [d, d + \frac{d}{2}].
\end{cases}
$$

Here, $u \in [1, d]$, and $\omega_i \in \mathbb{R}^{1 \times \frac{d}{2}}$ and $\omega_{i+1} \in \mathbb{R}^{1 \times \frac{d}{2}}$ represent the Gaussian weights associated with the $i^{th}$ and $(i+1)^{th}$ patch, respectively, i.e.,

$$\omega_i = \exp\left(-\frac{(\hat{d} - \mu)^2}{2\sigma^2}\right), \quad \omega_{i+1} = 1 - \omega_i \quad \text{for } \hat{d} \in [\tfrac{d}{2}, d].$$

The reason for selecting Gaussian weights, instead of linear or sigmoid weights, is to preserve the source distribution of individual optical image patches, which is reasonably assumed to follow a Gaussian distribution [36], [37], [38], [39]. In our case, we set $\mu = \frac{d}{2}$ and $\sigma = \frac{d}{4}$ so as to meet the computational demand. Note that we first feather adjacent patches in the horizontal direction to construct horizontal strips, $\tilde{y}_j \in \mathbb{R}^{d \times n}$, where $j = 1, 2, \dots, M$ and $M$ represents the total number of horizontal strips. Further, we stitch these horizontal strips ($\tilde{y}$) in the vertical direction in a similar manner and thus reconstruct a seamless full tile without any blocky effect. To maintain continuity at the boundary along the horizontal and vertical directions, we simply replace the pixels of equivalent size from the corresponding edges with the final predicted patches. Fig. 6 shows the relative improvement of the proposed Gaussian feathering over naively stitching individual patches to form a complete tile. In particular, the proposed Gaussian feathering preserves the source radiometry while eliminating abrupt transitions between adjacent patches.

IV.
EXPERIMENTS AND ANALYSIS

In this section, we elaborate on the necessary implementation details in Section IV-A, the baseline and evaluation metrics in Section IV-B, the ablation study of the proposed architecture in Section IV-C, the evaluation on LISS-III and LISS-IV in Sections IV-D and IV-E, the cross validation with Sentinel-2B in Section IV-F, and finally, one real world application of the synthesized virtual band in Section IV-G.

Fig. 6. Qualitative analysis of Gaussian feathering for image stitching. (a) Naive stitching and (b) Gaussian feathering of predicted patches. Though the blocky effect is not distinctly visible at native resolution, it degrades the data quality as it persists throughout the image. The proposed Gaussian feathering does not suffer from the blocky effect even at 4 times upsampling, as highlighted in the Red and Green bounding boxes.

A. Implementation Details

For faithful reproduction of the reported experimental results, we provide the exact hyper-parameter settings and implementation details as follows. All the experiments have been conducted on a local machine with 256GB RAM, 8GB GPU memory, and an i7 processor. We consider a patch size of $(32 \times 32)$, i.e. $d = 32$. The total number of randomly cropped images from the representative dataset, as discussed in Section III-B, is approximately 1M. For cross-validation purposes we randomly split the total crops into training ($\approx 800K$) and testing ($\approx 200K$) datasets. We use an initial learning rate of $1e{-}4$ and keep decreasing the rate on plateau with a scheduled decay of $0.004$. To avoid vanishing/exploding gradients, we normalize the gradient norm at each time step to 1 and clip each gradient value to $[-0.5, 0.5]$. As reported by D. P. Kingma and J. L. Ba [40], we use $\beta_1 = 0.9$ and $\beta_2 = 0.999$ to update the biased first and second moment estimates, respectively. We use the NADAM optimizer [41] for faster convergence and better stability.
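To make concrete how the patch size $d = 32$ enters the stitching stage, the Gaussian feathering of Section III-D can be sketched in NumPy. This is a minimal sketch rather than the authors' implementation: the function names `gaussian_weights`, `feather_row` and `feather_tile` are hypothetical, indexing is 0-based instead of the 1-based notation of the text, the crops are assumed to lie on a regular grid with stride $d/2$, and the boundary replacement step described in Section III-D is omitted.

```python
import numpy as np


def gaussian_weights(d):
    """Weights over the d/2 overlapping columns, with mu = d/2 and sigma = d/4."""
    half = d // 2
    d_hat = np.arange(half, d)                 # left-patch columns inside the overlap
    mu, sigma = d / 2.0, d / 4.0
    w_left = np.exp(-((d_hat - mu) ** 2) / (2.0 * sigma ** 2))
    return w_left, 1.0 - w_left                # omega_i and omega_{i+1} = 1 - omega_i


def feather_row(patches):
    """Blend a left-to-right list of (rows, d) patches that overlap by d/2 columns."""
    d = patches[0].shape[1]
    half = d // 2
    w_left, w_right = gaussian_weights(d)
    strip = patches[0].astype(np.float64)
    for nxt in patches[1:]:
        nxt = nxt.astype(np.float64)
        # Weighted sum inside the overlap, raw pixels outside it.
        blended = strip[:, -half:] * w_left + nxt[:, :half] * w_right
        strip = np.concatenate([strip[:, :-half], blended, nxt[:, half:]], axis=1)
    return strip


def feather_tile(grid):
    """grid[r][c] holds the (d, d) prediction for row r, column c (row major)."""
    strips = [feather_row(row) for row in grid]          # horizontal pass
    # Vertical pass: reuse the horizontal routine on the transposed strips.
    return feather_row([s.T for s in strips]).T


d, half = 32, 16
tile = np.random.default_rng(1).random((64, 64))
crops = [[tile[r:r + d, c:c + d] for c in range(0, 64 - d + 1, half)]
         for r in range(0, 64 - d + 1, half)]
print(feather_tile(crops).shape)  # (64, 64)
```

Because the two weights sum to one at every overlapping pixel, crops that already agree in the overlap are reproduced exactly; the blend only changes pixels where neighbouring predictions disagree.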
The $\epsilon$ in the NADAM optimizer is set to $1e{-}8$. The entire pipeline has been developed in Python using open-source libraries such as Keras [42] with a TensorFlow [43] backend. As reported by Charis et al. [20], the Mean Absolute Error (MAE) along with the Root Mean Square Error (RMSE) performs favourably on satellite imagery. We verify the efficacy of this combination and advocate using MAE as the primary objective function to be minimized and RMSE as the early stopping criterion (Section IV-B). The upper limit of training epochs is set to 10000. The patience value for the early stopping criterion is set to 5 epochs, which is an essential factor in controlling overfitting to some extent. We observed that deeper networks with smaller kernel sizes work reasonably better. So we use [3x3] convolutional kernels and 28 layers in this case (Section IV-C). We also observed that hourglass-like CNNs with pooling layers lose high frequency image details due to the several downsampling and upsampling steps involved in the feed-forward process. Therefore, we implemented DeepSWIR in a fully convolutional fashion.

B. Baseline and Evaluation Metrics

As we do not have the original $SWIR_{5m}$ to assess the performance of the synthesized SWIR band, we analyze the performance by comparing with the closest alternative, i.e. bicubic interpolated $SWIR_{24m}$ at 5m GSD. We primarily choose RMSE as our quantitative evaluation metric and therefore set it as our early stopping criterion while training the model. The RMSE is computed as follows,

$$RMSE = \sqrt{\frac{1}{n} \sum_{i=1}^{n} (x_i - \hat{x}_i)^2} \tag{8}$$

where $x$ and $\hat{x}$ are the vector representations of $SWIR_{24m}$ resampled at 5m GSD and the synthesized $SWIR_{5m}$, respectively. In this formulation, we do not apply any form of normalization and report the RMSE as such.
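Eq. (8) and the MAE objective can be written out directly in NumPy. The sketch below is illustrative only: the function names `rmse` and `mae` are hypothetical, and the cast to `float64` reflects an assumption that 10-bit DN values are stored as unsigned integers, where a plain subtraction would wrap around instead of going negative.

```python
import numpy as np


def rmse(x, x_hat):
    """Root Mean Square Error of Eq. (8), computed on raw DN counts."""
    diff = np.asarray(x, dtype=np.float64) - np.asarray(x_hat, dtype=np.float64)
    return float(np.sqrt(np.mean(diff ** 2)))


def mae(x, x_hat):
    """Mean Absolute Error, the training objective named in Section IV-A."""
    diff = np.asarray(x, dtype=np.float64) - np.asarray(x_hat, dtype=np.float64)
    return float(np.mean(np.abs(diff)))


reference = np.array([0, 0, 0, 0], dtype=np.uint16)
synthesized = np.array([3, 4, 0, 0], dtype=np.uint16)
print(rmse(reference, synthesized))  # 2.5
print(mae(reference, synthesized))   # 1.75
```

No normalization is applied, matching the statement above, so both values are in DN counts and depend on the 10-bit dynamic range of the sensor.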
Among other evaluation metrics, we use the SSIM [44], PSNR [44], SRE [20] and SAM [45] indexes to measure the reliability of both the spatial and spectral profile of the synthesized band.

C. Ablation Study

In order to assess the performance of DeepSWIR with different numbers of convolution layers, we train ablative DeepSWIR networks with varied numbers of residual blocks. For faster experimentation, we use the Ahmedabad and Kerala regions for training these ablative DeepSWIRs, and Goa for testing their generalization. This training subset is selected so as to cover the various objects of interest present in the Goa tile. As shown in Table III, both MAE and RMSE in cross-validation follow a decreasing trend as the number of residual blocks (ResBlk), or total convolution operations, increases. However, the relative reduction of MAE and RMSE keeps decreasing with the depth of the network. Thus, keeping in mind the computational resources, we opt for 24 ResBlks with 128 channels each in the customized DeepSWIR. Thereafter, we extend the capacity of the model to incorporate the whole representative dataset by increasing the number of channels/feature size from 128 to 256. The validation MAE and RMSE values of the final model, which we have used in the rest of our experimentation, are as low as 4.46 and 7.96, respectively. Note that the ablative models have been evaluated on the LISS-III dataset by comparing the synthesized $SWIR_{24m}$ with the real $SWIR_{24m}$.

TABLE III
COMPARISON OF ABLATIVE DEEPSWIR NETWORK ARCHITECTURES.

| No. Layer (No. ResBlk) | Feature Size | Parameters | MAE (train) | MAE (val) | RMSE (train) | RMSE (val) |
|---|---|---|---|---|---|---|
| 15 (6) | 128 | 1.77M | 8.64 | 9.78 | 11.56 | 12.73 |
| 27 (12) | 128 | 3.54M | 2.64 | 3.73 | 5.70 | 7.87 |
| 35 (16) | 128 | 4.72M | 2.09 | 3.62 | 4.54 | 7.59 |
| 51 (24) | 128 | 7.08M | 2.01 | 3.60 | 4.34 | 7.47 |
| 51 (24) | 256 | 28.3M | 4.13 | 4.46 | 7.27 | 7.96 |

D.
Evaluation on LISS-III

Since we train the network on the LR bands of LISS-III, we primarily draw a comparison between the synthesized $SWIR_{24m}$ and the existing $SWIR_{24m}$. In this regard, the MAE and RMSE have been reported in Section IV-C, and both are considerably low on approximately $200K$ patches covering the aforementioned representative dataset. Additionally, we analyze the network's potential to comprehend the homogeneous and heterogeneous structures in our region of interest. We consider all possible overlapping patches ($[32 \times 32]$) with 50% overlap along the horizontal and vertical directions, and measure their respective variances. As shown in Fig. 7, we observed that the synthesized $SWIR_{24m}$ closely resembles the original $SWIR_{24m}$ in terms of homogeneity. This supports the model's capability to discriminate between homogeneous and heterogeneous textures/features in a tile.

Fig. 7. Performance of the model on homogeneous and heterogeneous patches. Variance distribution of (a) LISS-III DeepSWIR and (b) LISS-III SWIR. The histogram of the synthesized band follows the histogram of the original to a large extent.

The similarity in variance distribution, however, does not fully characterize the reliability of the DN counts. For this reason, we also compare the individual DN counts of the synthesized and original $SWIR_{24m}$. As shown in Fig. 8, the scatter plot of DN counts follows the ideal curve (Red), with 97% of the population falling within a tolerance of 5 counts. Thus, the combined measure of variance distribution and pixel wise cross referencing of DN counts indicates that the model effectively learns the spatio-spectral characteristics of individual objects with the necessary degree of realism.

E. Evaluation on LISS-IV

As we focus on synthesizing a high resolution $SWIR_{5m}$, we evaluate the model's transfer learning ability to generalize across the different sensors: LISS-III and LISS-IV.
Since the model learns to preserve the spatial resolution of the input bands through global residual learning during the training phase, it synthesizes the virtual band at the same spatial resolution as that of the input. Thus, the DeepSWIR model generates a virtual band at 5m GSD when $\{G, R, NIR\}_{5m}$ is fed as input. In addition to global residual learning, the DeepSWIR model comprises a local residual learning module, which generates the radiometry of SWIR as a complex combination of $\{G, R, NIR\}_{5m}$ and fuses it onto the synthesized virtual band in the high dimensional feature space. The virtual $SWIR_{5m}$ thus synthesized has been compared with the $SWIR_{24m}$ interpolated to 5m GSD. As given in Table IV, the values of state-of-the-art metrics such as RMSE, SSIM, PSNR, SRE, and SAM between the compared bands are reasonable, given the additional structures/details present in the synthesized Band5 ($SWIR_{5m}$) which are missing in the cubic-interpolated $SWIR_{24m}$ at 5m GSD. Nevertheless, the statistics of these metrics for Band5 are comparable to those of the other bands, which verifies the consistency in the spatial and spectral characteristics of the synthesized $SWIR_{5m}$.

Fig. 8. Cross-referencing of the predicted and original SWIR band of LISS-III. The predicted DN count closely resembles the original DN count.

TABLE IV
STATISTICS OF VARIOUS STATE-OF-THE-ART METRICS USED TO EVALUATE THE PERFORMANCE OF THE SYNTHESIZED SWIR BAND BASED ON THE INTERPOLATED LISS-III $SWIR_{24m}$ AT 5M GSD.

| Metric | Band2 | Band3 | Band4 | Band5 |
|---|---|---|---|---|
| RMSE | 5.057 | 5.565 | 16.488 | 23.367 |
| SSIM | 0.989 | 0.979 | 0.898 | 0.879 |
| PSNR (dB) | 46.305 | 45.804 | 36.725 | 34.723 |
| SRE (dB) | 77.279 | 73.464 | 70.919 | 62.263 |
| SAM (deg) | 2.548 | 5.222 | 5.467 | 10.339 |

Further, we analyze the consistency in the spectral characteristics of various terrain features in our region of interest, as shown in Fig. 9.
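
For readers who wish to reproduce metrics of the kind listed in Table IV, the sketch below gives common definitions of RMSE, PSNR, SRE and SAM. It is a hedged illustration rather than the authors' implementation: the function names are hypothetical, `max_value` (the peak DN used for PSNR) must be supplied by the user, SRE is written in the form we understand from [20] (squared mean of the reference over the mean squared error), and SAM is computed here by treating each band as one long vector, which is only one possible way of obtaining a per-band angle. SSIM is omitted because it is usually taken from an image processing library.

```python
import numpy as np


def rmse(pred, ref):
    """Root mean squared error between two arrays of DN values."""
    pred = np.asarray(pred, dtype=np.float64)
    ref = np.asarray(ref, dtype=np.float64)
    return float(np.sqrt(np.mean((pred - ref) ** 2)))


def psnr(pred, ref, max_value):
    """Peak signal-to-noise ratio in dB; max_value is the assumed peak DN."""
    err = rmse(pred, ref)
    if err == 0.0:
        return float("inf")
    return float(20.0 * np.log10(max_value / err))


def sre(pred, ref):
    """Signal-to-reconstruction error in dB: 10 log10(mean(ref)^2 / MSE)."""
    ref = np.asarray(ref, dtype=np.float64)
    mse = rmse(pred, ref) ** 2
    if mse == 0.0:
        return float("inf")
    return float(10.0 * np.log10(ref.mean() ** 2 / mse))


def sam_degrees(pred, ref):
    """Angle in degrees between the two inputs viewed as flattened vectors."""
    p = np.asarray(pred, dtype=np.float64).ravel()
    r = np.asarray(ref, dtype=np.float64).ravel()
    cos_angle = np.dot(p, r) / (np.linalg.norm(p) * np.linalg.norm(r))
    return float(np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0))))
```

Because the reference here is an interpolated low resolution band, lower scores for the synthesized band do not necessarily indicate a worse product; they also reflect detail that the interpolated reference lacks.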
For robust measurement, we consider the mean DN count of 10 pixels randomly selected from each of the compared categories, which include Vegetation (Fig. 9(a)), River Water (Fig. 9(b)), Land (Fig. 9(c)), and Deep Ocean Water (Fig. 9(d)). As per our experimentation, the spectral characteristics of the synthesized band are consistent with the existing high resolution bands (LISS-IV) and also follow a pattern in the frequency domain similar to that of the actual low resolution sensor DN counts (LISS-III).

Fig. 9. Spectral response of various terrain features from the Goa region (DOP=17.11.17, path=96, row=62). The synthesized $SWIR_{5m}$ is consistent with the other bands of LISS-IV and closely follows the spectral response of LISS-III.

F. Cross Validation with Sentinel-2B

Since the model has been trained on LISS-III data only, it may merely learn a blind mapping to synthesize the target band without learning the actual physical characteristics, such as the reflectance of various objects. To examine such behaviour, we converted the DN values of $SWIR_{5m}$ to Top Of Atmosphere (TOA) reflectance and compared them with the Sentinel-2B (level 1C) data product in the nearest wavelength range (Sentinel-2B: 1.64-1.68 µm, LISS-IV: 1.55-1.70 µm) and at the closest date of pass (Sentinel-2B: 01.11.17, LISS-IV: 17.11.17). In order to generate the TOA reflectance, we used the saturation radiance of LISS-III $SWIR_{24m}$ and the date of pass (DOP) of the concurrent LISS-IV bands. For robustness, we considered the mean reflectance of 10 randomly selected pixels from the homogeneous regions of the chosen categories. Since the native resolution of the Sentinel-2B band is 20m, we resampled it to the resolution of LISS-IV (5m) using cubic interpolation. As shown in Fig. 10, the TOA reflectance of the virtual sensor, DeepSWIR, closely follows that of the real sensor, Sentinel-2B, over homogeneous regions: Stagnant Water, River Water, and Land.
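
The DN-to-TOA conversion and the 10-pixel averaging described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' processing chain: it assumes a linear, zero-offset DN-to-radiance calibration scaled by the saturation radiance, the widely used cosine approximation for the Earth-Sun distance, and user-supplied values for the maximum DN, the band's exo-atmospheric solar irradiance (`esun`) and the sun elevation, with radiance and irradiance expressed in consistent units. All function and parameter names are hypothetical.

```python
import math

import numpy as np


def earth_sun_distance_au(day_of_year):
    """Approximate Earth-Sun distance in astronomical units."""
    return 1.0 - 0.01672 * math.cos(math.radians(0.9856 * (day_of_year - 4)))


def dn_to_toa_reflectance(dn, saturation_radiance, max_dn, esun,
                          sun_elevation_deg, day_of_year):
    """Convert DN to TOA reflectance: rho = pi * L * d^2 / (esun * cos(zenith))."""
    # Assumed linear calibration with zero offset: L = DN * Lsat / DNmax.
    radiance = np.asarray(dn, dtype=np.float64) * (saturation_radiance / max_dn)
    d = earth_sun_distance_au(day_of_year)
    cos_zenith = math.cos(math.radians(90.0 - sun_elevation_deg))
    return math.pi * radiance * d ** 2 / (esun * cos_zenith)


def mean_of_random_pixels(values, n=10, seed=0):
    """Mean of n pixels drawn without replacement from a homogeneous region."""
    rng = np.random.default_rng(seed)
    flat = np.asarray(values, dtype=np.float64).ravel()
    idx = rng.choice(flat.size, size=min(n, flat.size), replace=False)
    return float(flat[idx].mean())
```

In use, a region of the synthesized band would be cropped over one land cover class, converted with `dn_to_toa_reflectance`, and summarised with `mean_of_random_pixels` before being compared with the corresponding Sentinel-2B value.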
The relatively smaller TOA reflectance of the Vegetation regions of LISS-IV is due to the additional structures/details present in our DeepSWIR, which has a native resolution of 5m unlike Sentinel-2B.

Fig. 10. Comparison of percentage TOA reflectance of LISS-IV-DeepSWIR and Sentinel-2B-SWIR. The virtual band closely follows the output of the real sensor over homogeneous regions.

G. Application of Synthesized Band in Wetland Inventory

In order to verify the usefulness of the synthesized band in real world applications, we used the generated $SWIR_{5m}$ to delineate wetland resources in selected regions of the Goa tile (path=96, row=62). Since the primary scope of this study is not to develop a wetland delineation algorithm but to synthesize the high resolution $SWIR_{5m}$ band, we used a simple yet very effective algorithm proposed by Panigrahy et al. [46] for mapping wetland resources. As shown in Fig. 11, the use of the high resolution $NIR_{5m}$ in the absence of $SWIR_{5m}$ fails to generate accurate maps of wetland resources and fails to delineate narrow wetlands, as highlighted by the arrow heads. On the contrary, the synthesized $SWIR_{5m}$ captures the unique features associated with various classes of wetland resources and hence suppresses false positives and delineates even narrow wetlands to a great extent. In Fig. 12 we show a qualitative comparison between the original and synthesized bands, where minute structures/details present in the synthesized band are clearly distinguishable from the interpolated band at 5m GSD.

Fig. 11. Comparison of $NIR_{5m}$ and synthesized $SWIR_{5m}$ in wetland mapping. Unlike $NIR_{5m}$, the synthesized high resolution $SWIR_{5m}$ assists in delineating narrow wetland resources and suppresses false positives to a great extent.

V. CONCLUDING REMARKS

In this study, we demonstrated an efficient way to utilize the power of deep learning techniques in synthesizing a virtual band using the existing bands of multi-sensor satellite imagery. Further, we critically analyzed the qualitative and quantitative results produced by the proposed DeepSWIR with extensive experimentation and appropriate evaluation standards. Additionally, we proposed a Gaussian feathering based image stitching mechanism for seamless band generation. As per our experiments, we report that the synthesized high resolution $SWIR_{5m}$ possesses the spectral characteristics of LISS-III $SWIR_{24m}$ and the spatial characteristics of the LISS-IV sensor to a great extent. We also observed a significant resemblance between the TOA reflectance of the virtual band, $SWIR_{5m}$, and that of the real sensor, Sentinel-2B, over homogeneous features. Finally, we showed a real world application of the synthesized high resolution band by using it to delineate wetland resources over our region of interest.

However, although the computationally intensive deep convolutional neural networks are highly effective in synthesizing the high resolution virtual band, $SWIR_{5m}$, which closely mimics the spatio-spectral characteristics of real bands, this method should not be considered an alternative to launching remote sensing satellites. First, these models are data driven approaches and hence strongly depend upon representative training datasets, which are acquired by remote sensing satellites. Second, the model extracts spatial information from the existing high resolution concurrent bands, $\{G, R, NIR\}_{5m}$, and spectral information from the existing low resolution bands, $\{G, R, NIR, SWIR\}_{24m}$. This model can generate a high resolution band at one particular time provided it has access to the other accurate high resolution bands, $\{G, R, NIR\}_{5m}$, at that instant of time.
Therefore, unlike a real SWIR band sensor, the DeepSWIR model cannot produce $SWIR_{5m}$ in the highly unlikely scenario where all its source band sensors fail. Nevertheless, our study shows that a deep neural network can be successfully employed to synthesize virtual bands in most cases and, more importantly, that it shows appealing results in both qualitative and quantitative assessments. Therefore, these biologically inspired techniques can be used to broaden our vision in the EMS with a certain degree of accuracy when technology reaches its limit.

Fig. 12. (a) FCC of LISS-III $\{SWIR, NIR, R\}_{24m}$ resampled at 5m. (b) FCC of LISS-IV $\{SWIR\,(Synthesized), NIR, R\}_{5m}$. (c) LISS-III $SWIR_{24m}$ resampled at 5m. (d) LISS-IV $SWIR_{5m}$ synthesized. The bottom row represents the selected region highlighted by the red bounding box. The FCCs ensure the consistency in both radiometric resemblance (a-b) and geometric registration of the synthesized band with respect to the existing high resolution bands (b). The radiometric resemblance between the interpolated and synthesized SWIR bands (c-d) verifies enhanced spatial resolution with the desired spectral characteristics.

ACKNOWLEDGMENT

We are immensely grateful to our colleagues Ashwin Gujrati and Vibhuti Bhushan Jha, who provided expertise that greatly assisted the research, although any errors are our own and should not tarnish the reputation of these esteemed professionals. We would also like to express our gratitude to all the members of the Optical Data Processing Division (ODPD), Signal and Image Processing Group (SIPG), for their continuous support throughout this research.

REFERENCES

[1] J. E. Patino and J. C. Duque, "A review of regional science applications of satellite remote sensing in urban settings," Computers, Environment and Urban Systems, vol. 37, pp. 1–17, 2013.
[2] C. Pohl and J. L. Van Genderen, "Review article multisensor image fusion in remote sensing: concepts, methods and applications," International journal of remote sensing, vol. 19, no. 5, pp. 823–854, 1998.
[3] T. Blaschke, "Object based image analysis for remote sensing," ISPRS journal of photogrammetry and remote sensing, vol. 65, no. 1, pp. 2–16, 2010.
[4] I. Colomina and P. Molina, "Unmanned aerial systems for photogrammetry and remote sensing: A review," ISPRS Journal of photogrammetry and remote sensing, vol. 92, pp. 79–97, 2014.
[5] G. Cheng and J. Han, "A survey on object detection in optical remote sensing images," ISPRS Journal of Photogrammetry and Remote Sensing, vol. 117, pp. 11–28, 2016.
[6] Y. Yang and S. Newsam, "Geographic image retrieval using local invariant features," IEEE Transactions on Geoscience and Remote Sensing, vol. 51, no. 2, pp. 818–832, 2013.
[7] S. A. Wagner, "Sar atr by a combination of convolutional neural network and support vector machines," IEEE Transactions on Aerospace and Electronic Systems, vol. 52, no. 6, pp. 2861–2872, 2016.
[8] M. Volpi and V. Ferrari, "Semantic segmentation of urban scenes by learning local class interactions," in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, pp. 1–9, 2015.
[9] J. Geng, H. Wang, J. Fan, and X. Ma, "Deep supervised and contractive neural network for sar image classification," IEEE Transactions on Geoscience and Remote Sensing, vol. 55, no. 4, pp. 2442–2459, 2017.
[10] D. Tapete and F. Cigna, "Trends and perspectives of space-borne sar remote sensing for archaeological landscape and cultural heritage applications," Journal of Archaeological Science: Reports, vol. 14, pp. 716–726, 2017.
[11] K. K. Kumar, M. Nagai, A. Witayangkurn, K. Kritiyutanant, S. Nakamura, et al., "Above ground biomass assessment from combined optical and sar remote sensing data in surat thani province, thailand," J. Geogr. Inf. Syst., vol. 8, p. 506, 2016.
[12] U. Khati, V. Kumar, D. Bandyopadhyay, M. Musthafa, and G. Singh, "Identification of forest cutting in managed forest of haldwani, india using alos-2/palsar-2 sar data," Journal of environmental management, vol. 213, pp. 503–512, 2018.
[13] B. Ye, S. Tian, J. Ge, and Y. Sun, "Assessment of worldview-3 data for lithological mapping," Remote Sensing, vol. 9, no. 11, p. 1132, 2017.
[14] G. Anbarjafari and H. Demirel, "Image super resolution based on interpolation of wavelet domain high frequency subbands and the spatial domain input image," ETRI journal, vol. 32, no. 3, pp. 390–394, 2010.
[15] C. Thomas, T. Ranchin, L. Wald, and J. Chanussot, "Synthesis of multispectral images to high spatial resolution: A critical review of fusion methods based on remote sensing physics," IEEE Transactions on Geoscience and Remote Sensing, vol. 46, no. 5, pp. 1301–1312, 2008.
[16] A. Krizhevsky, I. Sutskever, and G. E. Hinton, "Imagenet classification with deep convolutional neural networks," in Advances in neural information processing systems, pp. 1097–1105, 2012.
[17] C. Szegedy, W. Liu, Y. Jia, P. Sermanet, S. Reed, D. Anguelov, D. Erhan, V. Vanhoucke, and A. Rabinovich, "Going deeper with convolutions," in Proceedings of the IEEE conference on computer vision and pattern recognition, pp. 1–9, 2015.
[18] K. He, X. Zhang, S. Ren, and J. Sun, "Deep residual learning for image recognition," in Proceedings of the IEEE conference on computer vision and pattern recognition, pp. 770–778, 2016.
[19] G. Huang, Z. Liu, L. Van Der Maaten, and K. Q. Weinberger, "Densely connected convolutional networks," in CVPR, vol. 1, p. 3, 2017.
[20] C. Lanaras, J. Bioucas-Dias, S. Galliani, E. Baltsavias, and K. Schindler, "Super-resolution of sentinel-2 images: Learning a globally applicable deep neural network," arXiv preprint, 2018.
[21] L. Zhang and X. Wu, "An edge-guided image interpolation algorithm via directional filtering and data fusion," IEEE transactions on Image Processing, vol. 15, no. 8, pp. 2226–2238, 2006.
[22] C. Dong, C. C. Loy, K. He, and X. Tang, "Learning a deep convolutional network for image super-resolution," in European conference on computer vision, pp. 184–199, Springer, 2014.
[23] C. Ledig, L. Theis, F. Huszár, J. Caballero, A. Cunningham, A. Acosta, A. P. Aitken, A. Tejani, J. Totz, Z. Wang, et al., "Photo-realistic single image super-resolution using a generative adversarial network," in CVPR, vol. 2, p. 4, 2017.
[24] H. Zhang, V. Sindagi, and V. M. Patel, "Image de-raining using a conditional generative adversarial network," arXiv preprint, 2017.
[25] H. Zhang and V. M. Patel, "Density-aware single image de-raining using a multi-stream dense network," arXiv preprint, 2018.
[26] H. Zhang and V. M. Patel, "Densely connected pyramid dehazing network," in The IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2018.
[27] S. Escalera, M. Torres Torres, B. Martinez, X. Baró, H. Jair Escalante, I. Guyon, G. Tzimiropoulos, C. Corneou, M. Oliu, M. Ali Bagheri, et al., "Chalearn looking at people and faces of the world: Face analysis workshop and challenge 2016," in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops, pp. 1–8, 2016.
[28] J. Deng, W. Dong, R. Socher, L.-J. Li, K. Li, and L. Fei-Fei, "Imagenet: A large-scale hierarchical image database," in Computer Vision and Pattern Recognition, 2009. CVPR 2009. IEEE Conference on, pp. 248–255, IEEE, 2009.
[29] J. Kim, J. Kwon Lee, and K. Mu Lee, "Deeply-recursive convolutional network for image super-resolution," in Proceedings of the IEEE conference on computer vision and pattern recognition, pp. 1637–1645, 2016.
[30] X. Mao, C. Shen, and Y.-B. Yang, "Image restoration using very deep convolutional encoder-decoder networks with symmetric skip connections," in Advances in neural information processing systems, pp. 2802–2810, 2016.
[31] W. Shi, J. Caballero, F. Huszár, J. Totz, A. P. Aitken, R. Bishop, D. Rueckert, and Z. Wang, "Real-time single image and video super-resolution using an efficient sub-pixel convolutional neural network," in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1874–1883, 2016.
[32] J. Yamanaka, S. Kuwashima, and T. Kurita, "Fast and accurate image super resolution by deep cnn with skip connection and network in network," in Neural Information Processing, pp. 217–225, Springer, 2017.
[33] M. Lin, Q. Chen, and S. Yan, "Network in network," arXiv preprint arXiv:1312.4400, 2013.
[34] D. Pouliot, R. Latifovic, J. Pasher, and J. Duffe, "Landsat super-resolution enhancement using convolution neural networks and sentinel-2 for training," Remote Sensing, vol. 10, no. 3, p. 394, 2018.
[35] M. Pandya, K. Murali, and A. Kirankumar, "Quantification and comparison of spectral characteristics of sensors on board resourcesat-1 and resourcesat-2 satellites," Remote sensing letters, vol. 4, no. 3, pp. 306–314, 2013.
[36] H. Permuter, J. Francos, and I. H. Jermyn, "Gaussian mixture models of texture and colour for image database retrieval," in Acoustics, Speech, and Signal Processing, 2003. Proceedings.(ICASSP'03). 2003 IEEE International Conference on, vol. 3, pp. III–569, IEEE, 2003.
[37] H. Permuter, J. Francos, and I. Jermyn, "A study of gaussian mixture models of color and texture features for image classification and segmentation," Pattern Recognition, vol. 39, no. 4, pp. 695–706, 2006.
[38] B. Storvik, G. Storvik, and R. Fjørtoft, "On the combination of correlated images using meta-gaussian distributions," 2008.
[39] T. Celik, "Image change detection using gaussian mixture model and genetic algorithm," Journal of visual communication and image representation, vol. 21, no. 8, pp. 965–974, 2010.
[40] D. P. Kingma and J. Ba, "Adam: A method for stochastic optimization," arXiv preprint arXiv:1412.6980, 2014.
[41] T. Dozat, "Incorporating nesterov momentum into adam," 2016.
[42] F. Chollet, "keras." https://github.com/fchollet/keras, 2015.
[43] M. Abadi, A. Agarwal, P. Barham, E. Brevdo, Z. Chen, C. Citro, G. S. Corrado, A. Davis, J. Dean, M. Devin, S. Ghemawat, I. Goodfellow, A. Harp, G. Irving, M. Isard, Y. Jia, R. Jozefowicz, L. Kaiser, M. Kudlur, J. Levenberg, D. Mané, R. Monga, S. Moore, D. Murray, C. Olah, M. Schuster, J. Shlens, B. Steiner, I. Sutskever, K. Talwar, P. Tucker, V. Vanhoucke, V. Vasudevan, F. Viégas, O. Vinyals, P. Warden, M. Wattenberg, M. Wicke, Y. Yu, and X. Zheng, "TensorFlow: Large-scale machine learning on heterogeneous systems," 2015. Software available from tensorflow.org.
[44] A. Hore and D. Ziou, "Image quality metrics: Psnr vs. ssim," in Pattern recognition (icpr), 2010 20th international conference on, pp. 2366–2369, IEEE, 2010.
[45] R. H. Yuhas, A. F. Goetz, and J. W. Boardman, "Discrimination among semi-arid landscape endmembers using the spectral angle mapper (sam) algorithm," 1992.
[46] S. Panigrahy, T. Murthy, J. Patel, and T. Singh, "Wetlands of india: inventory and assessment at 1: 50,000 scale using geospatial techniques," Current science, pp. 852–856, 2012.
