Hybrid Compression Techniques for EEG Data Based on Lossy/Lossless Compression Algorithms

The recorded Electroencephalography (EEG) data comes with a large size due to the high sampling rate. Therefore, large space and more bandwidth are required for storing and transmitting the EEG data. Thus, preprocessing and compressing the EEG data i…

Authors: Madyan Alsenwi, Tawfik Ismail, M. Saeed Darweesh

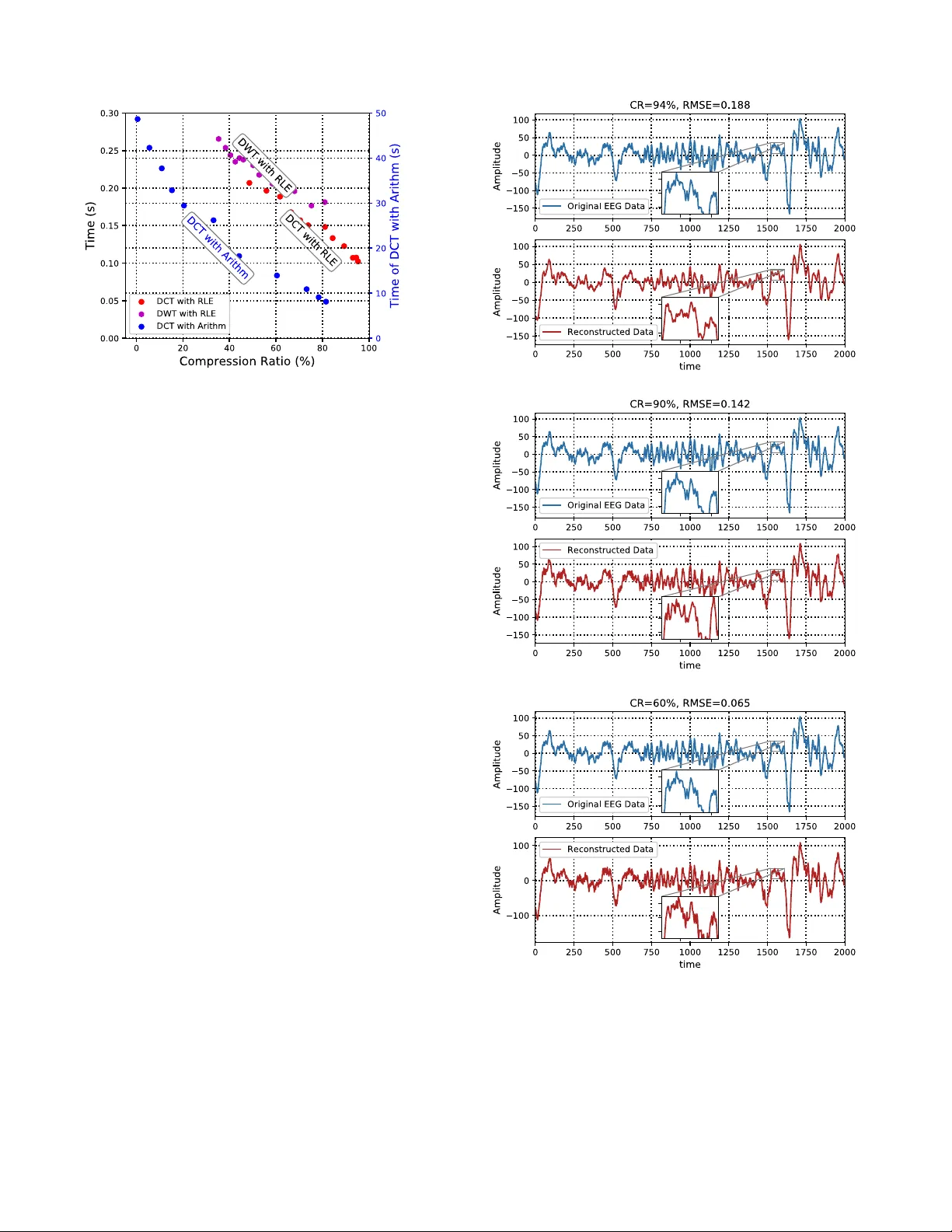

Madyan Alsenwi (1), Tawfik Ismail (2), and M. Saeed Darweesh (3, 4)

(1) Department of Computer Science and Engineering, Kyung Hee University, Gyeonggi-do 17104, South Korea; (2) National Institute of Laser Enhanced Science, Cairo University, Giza, Egypt; (3) Institute of Aviation Engineering and Technology, Giza, Egypt; (4) Nanotechnology Department, Zewail City for Science and Technology, Egypt

Email: malsenwi@khu.ac.kr; tismail@niles.cu.edu.eg; msaeed@ieee.org

Abstract: Recorded electroencephalography (EEG) data are large because of the high sampling rate, so considerable storage space and bandwidth are required to store and transmit them. Preprocessing and compressing the EEG data is therefore an important step toward transmitting and storing it efficiently with less bandwidth and space. The objective of this paper is to develop an efficient system for EEG data compression. In this system, the recorded EEG data are first standardized and segmented in the preprocessing unit. The resulting EEG data are then passed to the compression unit, which consists of a lossy compression algorithm followed by a lossless compression algorithm. The lossy compression algorithm transforms the random EEG data into data with high redundancy. A lossless compression algorithm is then added to exploit this redundancy and reach a high compression ratio (CR) without any additional loss. In this paper, the discrete cosine transform (DCT) and the discrete wavelet transform (DWT) are proposed as lossy compression algorithms, and arithmetic encoding and run length encoding (RLE) are proposed as lossless compression algorithms.
We calculate the total compression and reconstruction time (T), the root mean square error (RMSE), and the CR in order to evaluate the proposed system. Simulation results show that adding RLE after the DCT algorithm gives the best performance in terms of compression ratio and complexity. Using the DCT as the lossy compression algorithm followed by RLE as the lossless compression algorithm gives CR = 90% at RMSE = 0.14 and a CR of more than 95% at RMSE = 0.2.

Index Terms: EEG data compression, lossy compression, lossless compression, DCT, DWT, RLE, arithmetic encoding.

I. INTRODUCTION

Electroencephalography (EEG) is an electrophysiological monitoring mechanism used to record and evaluate the electrical activity of the brain. A number of sensors, called electrodes, are attached to the scalp or placed inside the human body. These electrodes record the electrical impulses in the brain and send them to an external station for analysis and recording. The recorded EEG data are large because of the high sampling frequency, and transmitting such large amounts of data is difficult because of channel capacity and power limitations.

Data compression is proposed as a solution to the problem of limited channel capacity and power consumption. Generally, compression methods are classified as lossless or lossy. With lossless compression, the original data can be reconstructed perfectly, without any distortion. Conversely, lossy compression leads to imperfect reconstruction, since some parts of the original data may be lost. However, lossy compression algorithms can achieve a higher compression ratio (CR) than lossless techniques [1]. The random nature of EEG data makes it difficult to achieve a high CR using only lossless compression techniques [2], [3].
Thus, lossy compression algorithms with an acceptable level of distortion are used in this work. First, the original EEG data are standardized, so that they have zero mean and unit standard deviation as in a standard normal distribution, and segmented in the preprocessing unit. Then, a lossy compression algorithm followed by a lossless compression algorithm is applied to the preprocessed data. In this paper, the discrete cosine transform (DCT) and the discrete wavelet transform (DWT) are used as lossy compression algorithms. The output of the lossy compression algorithm (DCT/DWT) has high redundancy. Therefore, adding a lossless compression algorithm after the lossy one gives a high CR without any additional loss in the EEG data. Run length encoding (RLE) and arithmetic encoding (Arithm) are used as lossless compression algorithms in this work.

Several works have studied the compression of EEG data [4]. The authors in [4] used only the DCT algorithm, which is a lossy compression technique, for EEG data compression. Using only lossy compression cannot give as high a CR as lossy compression followed by a lossless algorithm. The authors in [5] proposed a compression system composed of DCT and RLE followed by Huffman encoding. This system can give a high CR, but it is more complex and requires a long time for compression and reconstruction. The authors in [6] presented a comparative analysis of DWT, DCT, and a hybrid (DWT+DCT). High distortion can occur in the EEG data when one lossy compression algorithm (DCT) is followed by another lossy compression algorithm (DWT). In this work, we propose a lossy compression algorithm followed by a lossless compression algorithm in order to balance data loss, CR, and system complexity. Different combinations of lossy and lossless compression algorithms are studied in order to find the combination that gives the best performance.

The rest of this paper is organized as follows. Section II gives an overview of the compression techniques; the DWT, DCT, arithmetic coding, and RLE are described in that section. Section III presents the proposed compression model and the performance metrics. Simulation results are discussed in Section IV. Finally, Section V concludes the paper.

II. DATA COMPRESSION TECHNIQUES

This section gives an overview of the data compression algorithms used in this paper. Generally, data compression techniques are classified as lossy or lossless [7], [8]. A brief description of the lossy and lossless compression algorithms used in this work is given below.

A. Discrete Cosine Transform (DCT)

The discrete cosine transform (DCT) is a transformation technique that transforms a time series signal into its frequency components. Its main feature is the ability to concentrate the energy of the input signal in the first few coefficients of the output signal, a feature that is widely exploited in data compression. Let $f(x)$ be the input EEG signal, consisting of $N$ EEG data samples, and let $Y(u)$ be the output of the DCT, consisting of $N$ coefficients. The one-dimensional DCT is given by the following equation [9], [10]:

$$Y(u) = \sqrt{\frac{2}{N}}\,\alpha(u) \sum_{x=0}^{N-1} f(x) \cos\left(\frac{\pi (2x+1) u}{2N}\right) \tag{1}$$

where

$$\alpha(u) = \begin{cases} \dfrac{1}{\sqrt{2}}, & u = 0 \\ 1, & u > 0 \end{cases}$$

The first coefficient, $Y(0)$, is the DC component, and the remaining coefficients are referred to as AC components. The DC component is proportional to the mean value of the original signal ($Y(0)$ equals $\sqrt{N}$ times the mean of $f(x)$), whereas the AC components represent the frequency content of $f(x)$ and are independent of the mean. The inverse DCT takes the coefficients $Y(u)$ as input and transforms them back into $f(x)$.
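
As a rough illustration of Eq. (1), the forward transform can be written directly from the definition. The sketch below is a plain-Python, O(N^2) version intended only to show the energy compaction and the DC relationship; the function name `dct_1d` and the sample values are hypothetical and are not taken from the paper.

```python
import math

def dct_1d(f):
    """Orthonormal 1-D DCT following Eq. (1); returns the N coefficients Y(u)."""
    n = len(f)
    scale = math.sqrt(2.0 / n)
    out = []
    for u in range(n):
        alpha = 1.0 / math.sqrt(2.0) if u == 0 else 1.0
        acc = sum(f[x] * math.cos(math.pi * (2 * x + 1) * u / (2 * n))
                  for x in range(n))
        out.append(scale * alpha * acc)
    return out

# Hypothetical smooth segment (illustrative values, not real EEG data).
segment = [1.0, 1.1, 1.3, 1.2, 1.0, 0.9, 0.8, 0.9]
coeffs = dct_1d(segment)

# Y(0) equals sqrt(N) times the mean of the segment.
dc_expected = math.sqrt(len(segment)) * sum(segment) / len(segment)

# Share of the total energy carried by the first two coefficients.
energy = [c * c for c in coeffs]
share_first_two = sum(energy[:2]) / sum(energy)
```

For a slowly varying segment like this one, nearly all of the energy sits in the first coefficients, which is what makes the later thresholding step effective. A practical implementation would more likely call a fast library routine than evaluate the double loop above.
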
The inverse transform of the DCT is given as follows:

$$f(x) = \sqrt{\frac{2}{N}} \sum_{u=0}^{N-1} \alpha(u)\, Y(u) \cos\left(\frac{\pi (2x+1) u}{2N}\right) \tag{2}$$

Most of the coefficients produced by the DCT have small values and are usually approximated to zero.

B. Discrete Wavelet Transform (DWT)

The discrete wavelet transform (DWT) decomposes the input signal into a high-frequency part, called the details, and a low-frequency part, called the approximation, as shown in Fig. 1. This decomposition allows each frequency component to be studied with a resolution matched to its scale, and it is exploited in data compression [11], [12]. The DWT with the Haar basis function is used in this study because it is less complex and gives good performance. For every two consecutive samples $(S(2m), S(2m+1))$, the Haar DWT produces two coefficients defined as [6], [13]:

$$C_A(m) = \frac{1}{\sqrt{2}} \left[ S(2m) + S(2m+1) \right] \tag{3}$$

$$C_D(m) = \frac{1}{\sqrt{2}} \left[ S(2m) - S(2m+1) \right] \tag{4}$$

where $C_A(m)$ is the low-frequency component (approximation coefficient) and $C_D(m)$ is the high-frequency component (detail coefficient). Equations (3) and (4) show that calculating $C_A(m)$ and $C_D(m)$ is equivalent to passing the signal through low-pass and high-pass filters, respectively, subsampling by a factor of 2, and normalizing by $1/\sqrt{2}$.

Fig. 1: DWT tree

C. Run Length Encoding (RLE)

Run length encoding (RLE) is the simplest lossless compression algorithm. The idea of RLE is to replace each sequence of identical data values with a single value followed by the number of occurrences, as shown in Fig. 2. RLE is efficient for data that contain many repeated values [5], [9], [14].

Fig. 2: RLE

D. Arithmetic Coding

Arithmetic coding is an entropy encoding algorithm used in lossless data compression. It takes a message composed of symbols as input and converts it into a floating-point number greater than zero and less than one.
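
The mapping from a message to a sub-interval of [0, 1) can be sketched as follows. This is only an illustration of the interval narrowing, under the assumption of a fixed, known symbol model; it uses exact fractions and omits everything a practical coder needs (bit output, renormalization, adaptive probabilities). The function name `narrow_interval` and the two-symbol model are hypothetical.

```python
from fractions import Fraction

def narrow_interval(message, probs):
    """Return the final [low, high) interval after coding `message`.

    `probs` maps each symbol to its probability (a fixed, assumed model).
    """
    # Cumulative start of each symbol's sub-range within [0, 1).
    starts, total = {}, Fraction(0)
    for sym, p in probs.items():
        starts[sym] = total
        total += p
    low, width = Fraction(0), Fraction(1)
    for sym in message:
        # Move into the symbol's sub-range, then shrink by its probability.
        low += width * starts[sym]
        width *= probs[sym]
    return low, low + width

# Hypothetical model: "0" is frequent, "1" is rare.
model = {"0": Fraction(9, 10), "1": Fraction(1, 10)}
low_a, high_a = narrow_interval("0000", model)  # frequent symbols only
low_b, high_b = narrow_interval("0100", model)  # one rare symbol
```

With this model the message of frequent symbols ends in an interval of width 6561/10000, while the message containing one rare symbol ends in a much narrower interval of width 729/10000. Any number inside the final interval identifies the message, and a narrower interval needs more digits to pin down.
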
First, the arithmetic coding algorithm reads the input message (data file) symbol by symbol, starting with a certain interval. It then narrows the interval based on the probability of each symbol. Specifying a narrower interval needs more bits. Therefore, the algorithm lets low-probability symbols narrow the interval more than high-probability symbols, so that frequent symbols cost fewer bits. This is the key idea behind using arithmetic encoding in data compression [15], [16], [17].

III. PROPOSED COMPRESSION SYSTEM FOR EEG DATA

The proposed compression system is composed of a preprocessing unit, a compression unit, a reconstruction unit, and a data combiner unit, as shown in Fig. 3.

A. Preprocessing Unit

The functions of this unit are reading the recorded EEG data, standardizing it, and segmenting it.

Standardization puts the EEG data on a common scale, with the zero mean and unit standard deviation of a standard normal distribution, which helps the compression unit reach a high compression ratio. It shifts the mean of the EEG data to zero and scales the standard deviation to one, as illustrated in Algorithm 1. Let $X$ be a vector of EEG data. The standardized EEG data $X_s$ are given by:

$$X_s = \frac{X - \mu}{\sigma} \tag{5}$$

where $\mu$ is the mean and $\sigma$ is the standard deviation of $X$.

The standardized EEG data are then segmented with sampling time $T_s$ in order to improve the compression performance. As shown in Fig. 3, the preprocessing unit produces one segment of EEG data every $T_s$ seconds. Therefore, the size of each EEG segment depends on $T_s$. Decreasing $T_s$ improves the total compression time.
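
The two preprocessing steps can be sketched as below. This is a minimal illustration rather than the authors' code: it assumes the population standard deviation in Eq. (5), and it takes the number of segments as a parameter in place of the sampling time $T_s$ (fewer samples per segment corresponds to a smaller $T_s$). The function names and sample values are hypothetical.

```python
import math

def standardize(x):
    """Shift to zero mean and scale to unit standard deviation, as in Eq. (5)."""
    mu = sum(x) / len(x)
    # Population standard deviation (an assumption; the paper does not specify).
    sigma = math.sqrt(sum((v - mu) ** 2 for v in x) / len(x))
    return [(v - mu) / sigma for v in x]

def segment(x, n_segments):
    """Split x into n_segments contiguous pieces; the last absorbs any remainder."""
    sp = len(x) // n_segments
    pieces = [x[k * sp:(k + 1) * sp] for k in range(n_segments)]
    pieces[-1] = pieces[-1] + x[n_segments * sp:]
    return pieces

samples = [4.0, 6.0, 5.0, 7.0, 3.0, 5.0, 6.0, 4.0, 5.0, 5.0]  # hypothetical values
xs = standardize(samples)
parts = segment(xs, 3)
```

Here ten samples split into three segments give pieces of length 3, 3, and 4, with the leftover sample appended to the last segment.
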
The value of $T_s$ cannot, however, be decreased below a threshold value. Each incoming EEG segment must reach a unit only after that unit has finished processing the previous segment, which requires:

$$T_s \geq \max\left(T_{\mathrm{Lossy}}, T_{\mathrm{thr}}, T_{\mathrm{Lossless}}, T_{\mathrm{Ilossless}}, T_{\mathrm{Ilossy}}\right) \tag{6}$$

where $T_{\mathrm{Lossy}}$ is the time of the lossy compression algorithm, $T_{\mathrm{thr}}$ is the thresholding time, $T_{\mathrm{Lossless}}$ is the time of the lossless compression algorithm, $T_{\mathrm{Ilossless}}$ is the time of the inverse lossless algorithm, and $T_{\mathrm{Ilossy}}$ is the time of the inverse lossy algorithm. Therefore, the minimum sampling time is obtained as follows:

$$T_{\min} = \max\left(T_{\mathrm{Lossy}}, T_{\mathrm{thr}}, T_{\mathrm{Lossless}}, T_{\mathrm{Ilossless}}, T_{\mathrm{Ilossy}}\right) \tag{7}$$

The smallest compression and reconstruction time is achieved at $T_s = T_{\min}$.

Algorithm 1: Preprocessing Algorithm

    Input: Recorded EEG Data
    Output: Preprocessed EEG Data
    Standardization of EEG Data
    x ← EEGData
    μ ← mean of x
    σ ← standard deviation of x
    x_s ← (x − μ) / σ
    Sampling
    N ← NumberOfRequiredSamples
    L ← LengthOfEEGData
    sp ← floor(L / N)
    k ← 1
    while k ≤ N do
        if k = 1 then
            Data ← EEGData(1 : sp)
        else
            initial ← (k − 1) * sp + 1
            final ← k * sp
            Data ← EEGData(initial : final)
        end if
        if k = N then
            vector ← EEGData(k * sp + 1 : L)
            Data ← [Data vector]
        end if
        k ← k + 1
    end while

B. Compression Unit

The compression unit consists of a lossy compression algorithm followed by a lossless compression algorithm. Both the DCT and the DWT are used as lossy compression algorithms. Thresholding is then applied after the lossy compression algorithm in order to increase the redundancy of the transformed data: values of the transformed data below a threshold value are set to zero. Varying the threshold value therefore increases or decreases the number of zero coefficients. Consequently, the accuracy of the compression system can be controlled through the threshold value. Finally, a lossless compression algorithm is applied.
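
The thresholding step followed by run length encoding as the lossless stage might be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes an absolute magnitude threshold, whereas Algorithm 2 in the paper compares each coefficient with the largest one, and the function names and coefficient values are hypothetical.

```python
def threshold(coeffs, thr):
    """Zero every coefficient whose magnitude is below thr."""
    return [c if abs(c) >= thr else 0.0 for c in coeffs]

def rle_encode(values):
    """Collapse runs of equal values into [value, count] pairs."""
    runs = []
    for v in values:
        if runs and runs[-1][0] == v:
            runs[-1][1] += 1
        else:
            runs.append([v, 1])
    return runs

def rle_decode(runs):
    """Expand [value, count] pairs back into the original sequence."""
    out = []
    for v, count in runs:
        out.extend([v] * count)
    return out

# Hypothetical transform output: two large coefficients, then small ones.
coeffs = [2.9, -0.41, 0.03, -0.01, 0.02, 0.004, -0.03, 0.01]
sparse = threshold(coeffs, 0.05)
runs = rle_encode(sparse)
```

Eight coefficients collapse into three runs because the six small coefficients become one run of zeros. The loss comes entirely from `threshold`; `rle_decode(runs)` returns `sparse` exactly, which is why the lossless stage adds no further distortion.
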

Both RLE and arithmetic coding are proposed in this system. The lossless compression algorithms give a high compression ratio because of the high redundancy of the transformed data.

C. Reconstruction Unit

The inverse process of the compression unit is applied in this unit to reconstruct the EEG data. First, the inverse of the RLE/arithmetic algorithm is applied. Then, the inverse of the DCT/DWT is applied to reconstruct the EEG data, as shown in Fig. 4 and Algorithm 2.

Fig. 3: Block diagram of the proposed compression system

Fig. 4: Compression and reconstruction units

D. Performance Metrics

The performance metrics used to evaluate the proposed compression system are described below.

1) Root Mean Square Error (RMSE): The RMSE measures the error between two signals. It is used in this paper to measure the error between the original data and the reconstructed data. The RMSE is given by:

$$\mathrm{RMSE} = \sqrt{\frac{\sum_{i=1}^{N} (X_i - Y_i)^2}{N}} \tag{8}$$

where $X$ and $Y$ are the original and recovered data, respectively.

2) Compression Ratio (CR): The CR is calculated as the difference in size between the original data and the compressed data, divided by the size of the original data:

$$\mathrm{CR} = \frac{\mathrm{OriginalDataSize} - \mathrm{CompDataSize}}{\mathrm{OriginalDataSize}} \times 100 \tag{9}$$

3) Compression and Reconstruction Time (T): The last performance metric used in this paper is the compression and reconstruction time, which is given as follows:

$$T = T_{\mathrm{comp}} + T_{\mathrm{reconst}} \tag{10}$$

where $T_{\mathrm{comp}}$ and $T_{\mathrm{reconst}}$ are the compression and recovery times, respectively, defined as:

$$T_{\mathrm{comp}} = T_{\mathrm{Lossy}} + T_{\mathrm{thr}} + T_{\mathrm{Lossless}} \tag{11}$$

$$T_{\mathrm{reconst}} = T_{\mathrm{Ilossless}} + T_{\mathrm{Ilossy}} \tag{12}$$

Finally, the total time is given as follows:

$$T = T_{\mathrm{Lossy}} + T_{\mathrm{thr}} + T_{\mathrm{Lossless}} + T_{\mathrm{Ilossy}} + T_{\mathrm{Ilossless}} \tag{13}$$

IV. PERFORMANCE EVALUATION

The performance of the proposed compression system is evaluated using Python running on an Intel(R) Core(TM) i3 3.9 GHz CPU with 8 GB of RAM.
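
For reference when reading the results, the RMSE and CR definitions in Eqs. (8) and (9) can be sketched in a few lines. This is an illustrative version with hypothetical function names and toy inputs, not the evaluation code used in the paper; sizes may be given in any unit as long as both use the same one.

```python
import math

def rmse(original, recovered):
    """Root mean square error between two equal-length sequences, Eq. (8)."""
    n = len(original)
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(original, recovered)) / n)

def compression_ratio(original_size, compressed_size):
    """Percentage reduction in size, Eq. (9)."""
    return 100.0 * (original_size - compressed_size) / original_size

# Toy inputs: one of four samples is off by 2, and the size shrinks tenfold.
original = [0.0, 1.0, 2.0, 3.0]
recovered = [0.0, 1.0, 2.0, 1.0]
err = rmse(original, recovered)
cr = compression_ratio(1000, 100)
```

Under this definition a CR of 90% means the compressed data occupy one tenth of the original size, so higher values indicate stronger compression.
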
The size of the EEG data used is 1 MB. Fig. 5 shows the CR for different values of the RMSE. As shown in this figure, using the DCT as the lossy compression algorithm and RLE as the lossless compression technique gives the best CR compared with DCT/Arithm and DWT/RLE. The high CR of DCT/RLE comes from the ability of the DCT to produce highly redundant data, which suits RLE. DCT/Arithm and DWT/RLE have approximately the same CR at high RMSE. The RMSE values are controlled by changing the threshold value; in these results, the threshold values are chosen between 0.005 and 0.05.

Fig. 5: CR versus RMSE

Fig. 6 shows the CR versus segment size. As shown in this figure, the CR increases slightly as the segment size increases. The segment size depends on the sampling time ($T_s$): increasing $T_s$ gives larger segments, and vice versa. This figure also shows that DCT/RLE has the highest CR. The compression and reconstruction time ($T$) versus RMSE for all studied cases is shown in Fig. 7. This figure shows that both DCT/RLE and DWT/RLE take a short time because of their simplicity, whereas DCT/Arithm is more complex and takes longer. Fig. 8 shows the compression and reconstruction time versus segment size. As shown in this figure, increasing the segment size increases the compression time. Therefore, there is a trade-off between CR and compression time when setting the sampling time ($T_s$), which controls the segment size. The comparison of all the proposed compression algorithms in terms of CR and $T$ is shown in Fig. 9. As shown in this figure, DCT/RLE gives the best results in both CR and compression time. DWT/RLE also gives a good compression time and an acceptable CR, whereas DCT/Arithm takes a long time compared with DCT/RLE and DWT/RLE. Finally, Fig. 10 shows the original and the reconstructed EEG data at different CR values for DCT with RLE.
As shown in Fig. 10, there is a small distortion in the recovered EEG data at CR = 95%, i.e., RMSE = 0.188, whereas at CR = 60% the distortion is smaller and the original and recovered EEG data are approximately the same, i.e., RMSE = 0.065.

Fig. 6: CR with segment size

Fig. 7: Compression and reconstruction time with RMSE

Fig. 8: Compression and reconstruction time with segment size

Fig. 9: Compression and reconstruction time versus compression ratio with different values of RMSE

Fig. 10: Original and reconstructed EEG data with different CR in case of DCT/RLE

Algorithm 2: Compression and Reconstruction Algorithm

    Input: Preprocessed EEG Data
    Output: Recovered EEG Data
    Lossy Compression
    if DCT is selected then
        TransformedData ← DCT(PreprocessedData)
    else
        TransformedData ← DWT(PreprocessedData)
    end if
    Thresholding
    Thr ← threshold value
    [SortedData, index] ← sort(|TransformedData|) in descending order
    i ← 1
    for length of data do
        if |SortedData(i) / SortedData(1)| > Thr then
            i ← i + 1
            continue
        else
            break
        end if
    end for
    TransformedData(index(i : end)) ← 0
    Lossless Compression
    if RLE is required then
        CompressedData ← RLE(TransformedData)
    else
        CompressedData ← Arithm(TransformedData)
    end if
    Reconstruction Unit
    if RLE is used then
        DecodedData ← IRLE(CompressedData)
    else
        DecodedData ← IArithm(CompressedData)
    end if
    if DCT is used then
        ReconstructedData ← IDCT(DecodedData)
    else
        ReconstructedData ← IDWT(DecodedData)
    end if
    Data Combining
    FinalOutput ← [FinalOutput ReconstructedData]

V. CONCLUSION

A compression system composed of both lossy and lossless compression algorithms is designed in this article. The DCT and DWT transforms followed by thresholding are used as the lossy compression technique. RLE and arithmetic encoding are used as the lossless compression algorithms. The data produced by the lossy compression part contain high redundancy, which suits the lossless algorithms. The CR, RMSE, and compression time are calculated in order to check the performance of the system. We conclude that using the DCT as the lossy compression algorithm followed by RLE as the lossless compression algorithm gives the best performance compared with the DWT and arithmetic encoding. As future work, we will implement the DCT with RLE technique in hardware for EEG data compression and check its performance in a real implementation.

ACKNOWLEDGEMENT

This work was supported by the Egyptian Information Technology Industry Development Agency (ITIDA) under ITAC Program CFP 96.

REFERENCES

[1] M. Alsenwi, M. Saeed, T. Ismail, H. Mostafa, and S. Gabran, "Hybrid compression technique with data segmentation for electroencephalography data," in 2017 29th International Conference on Microelectronics (ICM). IEEE, Dec. 2017.

[2] D. Birvinskas, I. Jusas, and Damasevicius, "Fast DCT algorithms for EEG data compression in embedded systems," Computer Science and Systems, vol. 12, no. 1, pp. 49–62, 2015.

[3] M. Alsenwi, T. Ismail, and H. Mostafa, "Performance analysis of hybrid lossy/lossless compression techniques for EEG data," in 2016 28th International Conference on Microelectronics (ICM). IEEE, Dec. 2016.

[4] L. J. Hadjileontiadis, "Biosignals and compression standards," in M-Health. Springer, 2006, pp. 277–292.

[5] S. Akhter and M. Haque, "ECG compression using run length encoding," in Signal Processing Conference, 2010 18th European. IEEE, 2010, pp. 1645–1649.

[6] A. Deshlahra, G. Shirnewar, and A. Sahoo, "A comparative study of DCT, DWT & hybrid (DCT-DWT) transform," 2013.

[7] G. Antoniol and P. Tonella, "EEG data compression techniques," Biomedical Engineering, IEEE Transactions on, vol. 44, no. 2, pp. 105–114, 1997.

[8] L. Koyrakh, "Data compression for implantable medical devices," in Computers in Cardiology, 2008. IEEE, 2008, pp. 417–420.

[9] D. Salomon, Data Compression: The Complete Reference. Springer Science and Business Media, 2004.

[10] S. Fauvel and Ward, "An energy efficient compressed sensing framework for the compression of electroencephalogram signals," Sensors, vol. 14, no. 1, pp. 1474–1496, 2014.

[11] B. A. Rajoub, "An efficient coding algorithm for the compression of ECG signals using the wavelet transform," Biomedical Engineering, IEEE Transactions on, vol. 49, no. 4, pp. 355–362, 2002.

[12] M. A. Shaeri and A. M. Sodagar, "A method for compression of intra-cortically-recorded neural signals dedicated to implantable brain–machine interfaces," Neural Systems and Rehabilitation Engineering, IEEE Transactions on, vol. 23, no. 3, pp. 485–497, 2015.

[13] O. O. Khalifa, S. H. Harding, and A.-H. Abdalla Hashim, "Compression using wavelet transform," International Journal of Signal Processing, vol. 2, no. 5, pp. 17–26, 2008.

[14] Z. T. Drweesh and L. E. George, "Audio compression based on discrete cosine transform, run length and high order shift encoding," International Journal of Engineering and Technology (IJEIT), vol. 4, no. 1, pp. 45–51, 2014.

[15] P. G. Howard and J. S. Vitter, "Analysis of arithmetic coding for data compression," in Data Compression Conference, 1991. DCC'91. IEEE, 1991, pp. 3–12.

[16] A. Moffat, R. M. Neal, and Witten, "Arithmetic coding revisited," Transactions on Information Systems (TOIS), vol. 16, no. 3, pp. 256–294, 1998.

[17] I. H. Witten, R. M. Neal, and Cleary, "Arithmetic coding for data compression," Communications of the ACM, vol. 30, no. 6, pp. 520–540, 1987.
