Transparent pronunciation scoring using articulatorily weighted phoneme edit distance

For researching effects of gamification in foreign language learning for children in the "Say It Again, Kid!" project we developed a feedback paradigm that can drive gameplay in pronunciation learning games. We describe our scoring system based on th…

Authors: Reima Karhila, Anna-Riikka Smol, er

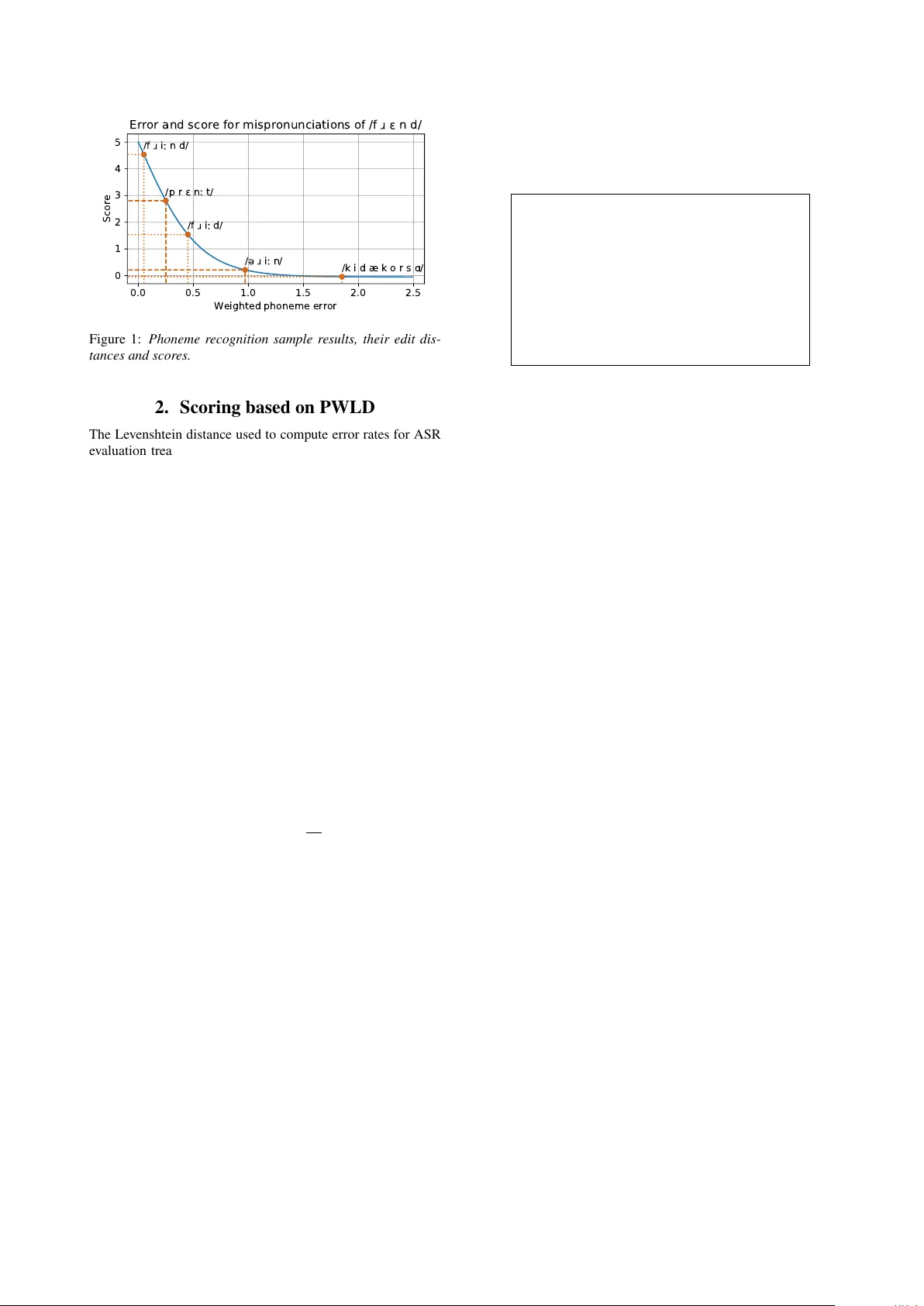

T ranspar ent pr onunciation scoring using articulatorily weighted phoneme edit distance Reima Karhila 1 , Anna-Riikka Smolander 2 , Sari Ylinen 2 , Mikko K urimo 1 1 Aalto Uni versity School of Electrical Engineering 2 Uni versity of Helsinki firstname.lastname@aalto.fi, firstname.lastname@helsinki.fi Abstract For researching effects of gamification in foreign language learning for children in the “Say It Again, Kid!” project we de- veloped a feedback paradigm that can drive gameplay in pro- nunciation learning games. W e describe our scoring system based on the difference between a reference phone sequence and the output of a multilingual CTC phoneme recogniser . W e present a white-box scoring model of mapped weighted Lev- enshtein edit distance between reference and error with error weights for articulatory differences computed from a training set of scored utterances. The system can produce a human- readable list of each detected mispronunciation’ s contribution to the utterance score. W e compare our scoring method to es- tablished black box methods. Index T erms : Computer Assisted Pronunciation T raining, Mispronunciation Detection, Speech Recognition, Multilingual Phoneme Recognition 1. Introduction In Computer Assisted Pronunciation Training (CAPT) our goal is to give speakers feedback on how to improve their pronunci- ation skills. W ith the assumption that mispronunciations are detectable as phoneme detection or classification errors, we can apply variants deriv ed from Automatic Speech Recogni- tion (ASR) acoustic models to ev aluate pronunciation skills of a speaker . For example mispronunciation detection with Ex- tended Recognition Networks (ERN) [1] or Goodness of Pro- nunciation (GOP) scoring based on posterior likelihoods [2, 3] are based normal ASR acoustic models. Deep Neural Network (DNN) based Acoustic modelling has improv ed ASR perfor- mance vastly in the recent decade and DNN models form an excellent basis for ne w experiments in CAPT tasks. Our CAPT game “Say It Again, Kid!" [4, 5] is used to study children’ s foreign language acquisition. W e hav e dev eloped a feedback mechanism with constraints set by the project: (1) The feedback must be usable as a gaming element: W e need a 0-5 star feedback score for each individual utterances. W e also need to compute the score quickly , so we use single pass unidirectional processing. (2) The system must pr oduce meaningful analytics for teachers and resear chers: W e need statistics on what phones are difficult for a speaker and what has been learned during game play . As International Phonetic Alphabet (IP A) is widely known among teachers, we report mispronunciations in IP A characters. (3) W e do not have r esour ces to generate phonetic tran- scriptions of pr onunciation irre gularities in L2 data: Our ana- lytics system is trained on large native databases and the scoring system is trained on a collection of roughly scored utterances. (4) The single utterance annotation scores are not reliable: The scoring system must be robust so it can be trained on noisy annotations, and must produce scores that the users find justi- fied. Describing utterances as chains of articulatory or phono- logical feature vectors has been a popular approach for mis- pronunciation detection [6, 7, 8, 9]. Noting that any realisable articulatory feature vector can be represented by a phonetic unit from the IP A alphabet, we settled on a simpler phone-based ap- proach, extracting articulatory features by mapping phones to feature vectors. For L2 CAPT , accurate scoring based on de- tected phonemes requires a recogniser that covers the phones of the target language as well as the phonetic space for mispro- nunciations. T o e xtend the phone set, the L1 of the speak ers is a natural choice, but including more languages will cov er a larger space of mispronunciations and decrease the L1 dependency . The Connectionist T emporal Classification (CTC) [10] is an end-to-end ASR framework that models all aspects of the speech sequence – both acoustic and linguistic aspects. It does not require segmentation of its training inputs nor does it pro- duce phone alignments in the same way as Hidden Markov Model (HMM) based models do. Duration information is es- sential for language learning for languages where phone dura- tion is important in distinguishing words from others. As our CAPT game aims to teach a standardised w ay of pronunciation, feedback for phoneme duration is valuable also in languages, where duration does not have such a phonemic role. As phone duration is encoded within the netw ork itself and can be learned from nati ve speech samples directly , CTC pro vides a good base for producing duration based feedback. W e can use the list of string edit operations that show the difference between the phonetic forms of scored and reference utterance as analytical feedback. Additionally we can use the list to compute Phonologically W eighted Levenshtein Distance (PWLD) [11] based error , where the effect of ev ery mispronun- ciation is fully transparent, and use it as a basis for a point-based feedback score. By computing error costs for phones based on articulatory/phonological feature weights shared between all phones, we can train a robust lo w-dimensional regression sys- tem from a small number of annotated L2 learner samples. In this paper we present an experiment on scoring utter- ances in a L2 CAPT game, using multilingual CTC phoneme recognition and a data-driven variant of PWLD. There are few academic reports on CAPT in learning games, we found only one [12]. And generally work on scoring of L2 utterances has been done on in-house data sets and reported in statistical man- ner . Though we are unable to share our audio data, by shar- ing our recognition results and scoring experiments as an online compendium 1 to the paper, we hope to giv e some concrete sam- ples for the field of automatic scoring of L2 learners’ utterances. 1 The online compendium can be found at https://github. com/aalto- speech/interspeech2019_karhila_et_al 0.0 0.5 1.0 1.5 2.0 2.5 Weighted phoneme error 0 1 2 3 4 5 Score /f i n d/ /p r n t/ /f i d/ / i n/ /k i d æ k o r s / Error and score for mispronunciations of /f n d/ Figure 1: Phoneme reco gnition sample results, their edit dis- tances and scor es. 2. Scoring based on PWLD The Lev enshtein distance used to compute error rates for ASR ev aluation treats all insertion, deletion and substitution opera- tions identically with a unit cost of 1. Phoneme error rate based on PWLD has been used for automatic prediction of intelligi- bility in [11], where the substitution weights are based on the number of differing articulatory features between each phone pair . W e propose using a corpus of L2 utterances with anno- tated scores to optimise weights for PWLD to form Data Driv en Phonologically W eighted Levenshtein Distance (DD-PWLD). In our approach also insertion and deletion costs are computed based on articulatory features. Thus the edit distance is com- puted as D = O sub F sub + O ins F ins + O del F del (1) where O sub , O ins and O del are n × p v ectors of substitution, insertion and deletion operations for each utterance, F sub , F ins and F del are the f -dimensional vectors of articulatory error costs for substitution, insertion and deletion costs. The oper- ation matrices O are composed from edit operation lists of the hypotheses with the smallest errors in N -best hypothesis lists for the utterances. The mapping to a score in the range [0 , r ] is ˆ y = r 1 − tanh a D L l (2) where L is a vector representing phoneme counts of the ref- erence utterances, l is the length compensation variable, a is general scaling for the mapping, y the human annotations for the utterances. W e want to choose F sub , F ins , F del , l and a to minimise the error between predictions ˆ y and reference annota- tions. Samples of the mapped scores for dif ferent mispronuncia- tions of the utterance "friend" are shown in Figure 1. The scor- ing can be broken down as described by the list of edit opera- tions that is a byproduct of the weighted Levenshtein distance. One sample analytical scoring is shown in T able 1. 3. Experiments W e present an experiment of scoring L2 learner utterances where target language is UK English and the speakers have a Finnish background. Recognition results and scoring training utilities are av ailable at the compendium repository . T able 1: Sample br eakdown of scoring for a pr onunciation at- tempt using the transpar ent scoring scheme in a format that can be shown to a user or a teacher . T ar get pronunciation: / f ô E n d / Y our attempt: / p r E n : t / T otal errors: 2.3 Length compensation multiplier: 6.4 Mapped score: 2.8 Breakdown: / f / → / p / Plosiv e instead of fricative -0.85 / ô / → / r / T rill instead of approximant -0.63 / n / → / n : / T oo long -0.44 / d / → / t / Not voiced -0.38 3.1. Data UK English as the target language is the most important. This is cov ered by WSJCAM0 corpus for adult speech and PF-ST AR for children’ s speech. Finnish as a the nativ e language of the language learner data set is also important, and is cov ered by the Finnish SPEECON and SPEECHD A T databases, both of which contain both adult and child speech. As the CAPT game aims for UK pronunciation, we added American English to the training pool to better detect non-UK pronunciations. This was easily av ailable in WSJ1 for adults and TI-DIGITS for children (adult data from TI-DIGITS was not used). As our aim is to cover as much of the IP A acoustic- phonetic space for non-tonal languages as possible using the re- sources easily available to us, we added Swedish from Spraak- banken as Swedish includes vo wels and retroflex consonants that do not exist in the other languages av ailable to us. This is by far not a complete co verage of the IP A space, b ut is a solid start for our current purposes. L2 UK English data w as collected from Finnish 10-12 year old children using the game prototype. Additional game data was collected from native English speakers of the same age group with Southern English dialect. The dictionaries used are CMUdict for American English, COMBILEX for American and UK English [13], Språkbanken for Swedish and a combination of Speechdat lexicon and rule- based mapping for Finnish. 3.2. Annotations Annotations were made by a single annotator . As it is gener- ally known that the reliability of labels produced by a single annotator is questionable, we pay attention extra attention to downplay outlier influence when training our scoring system in Section 3.8. The annotations were first made on a 0-100 scale and later mapped to the 0-5 scale. The utterances were grouped by ref- erence phrase. The annotator listened to all the samples in a group, and tried to find samples that represent the best pronunci- ations and samples that represent typical errors. For most utter- ance groups, there were one or more mispronunciation patterns that showed up repeatedly These were usually one phoneme away from the model pronunciation. If the attempt differed from the model with one feature e.g. voicedness, the score was deducted from 100 to 90. If the attempt differed more than that the reduction w as bigger , depending on the distance of articu- lation place or manner . This kind of reduction according the phonemes was possible with the best pronounced samples. The more mistakes there were, the more difficult it was to calculate the labelling with such a simple system. This scoring framework was possible as the samples were mainly one-word long. With longer sentences the labelling was more complicated and in these the main focus w as on the words that were pronounced mostly correctly . Because the samples were short, prosody was not used as a criterion in the labelling. Even though e.g. stress was in many cases foreign, it mostly did not reduce the intelligibility and clarity of the phones. 3.3. Phoneme set The phone set consists of all the phones used in the v arious pro- nunciation dictionaries of the four languages or dialects. The grouping of phones between languages is based on the IP A al- phabet. Some phones are combined to form more meaningful units for pronunciation learning. For example, all diphthongs and many vo wel sequences like / iø / and / i A / as well as some combined consonants like / > d Z / are represented as single units. The phonetic units are listed in the online compendium. Of the 157 different phonetic units used, 28 are unique to one lan- guage, the rest are shared between at least two languages or dialects. The dictionary mappings and phone combinations are ev olving work, and the list should not be used as a definite ref- erence. For example, the geminated / > t : s / present in the Finnish language is represented by a single unit, b ut the more ubiquitous normal length variation / > ts / is represented by / t / and / s / sepa- rately . Also rhoticity at the end of vo wel sequences needs a closer look. Future v ersions will see these improv ed. 3.4. Models Three model sets were used in the experiment. A HMM-GMM model is trained to select correct pronunciation v ariants and pro- duce alignments, a CTC model is trained to do phoneme recog- nition, and a regression model to turn the recognition outputs into scores. 3.5. HMM-GMM training The speaker -adaptiv e HMM-GMM model is trained with Kaldi [14]. It is only used to to choose best pronunciation alternativ es and provide segmentations for the training utter- ances that are fed into the CTC training. Sev eral model sets are trained. First, a combined model set trained with all the data is used for segmenting the training data. This is trained with long and short variations of phonetic units merged. Another model set is trained only on languages where duration informa- tion is phonetically relevant. Where it matters, each segment of training utterances as se gmented by the combined system is postprocessed to be short or long variation. This is more nor- mativ e than descriptive. Our interest lies in guiding the users of our system to attune the durations of their uttered phones to- wards some standard, which becomes thus defined by the data. By including the duration information in the model set, we en- able the CTC recognition network to learn it. T able 2 shows the reduction in performance caused by the merged phone set. For most of the test sets, language mod- els (LM) used for the decoding are trained from training data labels. The table shows that data pooling ov er languages de- grades performance. It also shows that performance for close microphones is better than table microphones. T able 2: Err or rates for the recognition systems. These should not be compared to the state-of-the-art, as the only function for the HMM-GMM models is alignment. What the results show is that the extended phoneme set is not ideal for basic recogni- tion tasks and how the performance dr ops when speech data is pooled acr oss languages. WER for HMM-GMMs is computed on test sets, the PER for CTC on development sets. HMM HMM CTC Unmrg. Mer ged Rel. Merged Data set WER WER change PER* UK ENGLISH PF-star 7.83 14.72 +87% 55.2 WSJ0 si mic1 26.47 31.43 +18% 51.5 WSJ0 si mic2 27.68 32.28 +17% 51.4 US ENGLISH WSJ1 si mic1 - 31.43 - 51.5 WSJ1 si mic2 - 26.21 - 51.0 TIDIGITS - 0.83 - - FINNISH Speechdat 7.60 8.54 +12% 45.8 Speecon - clean mic0 6.07 7.72 +27% 48.0 - clean mic1 8.41 10.47 +24% 51.0 - café mic0 6.98 7.72 +11% 50.1 - café mic1 7.55 9.70 +28% 49.2 - children mic0 11.69 12.90 +10% 48.1 - children mic1 12.83 15.45 +20% 48.2 SWEDISH Spraakbanken - 41.46 - 53.4 3.6. CTC model training The CTC model is a 3 layer deep and 600 units wide Gated Re- current Unit (GRU) Recurrent Neural Network (RNN) running on T ensorflow [15]. The model is trained with batches of 64 ran- domly cut 2 second segments with random resampling between 0.95 and 1.05 speed. A random linear sum of noise signals is added to the samples [16] and the final wa veform is filtered by a random filter to emulate microphone differences [17]. The ref- erence labels are subsections of label strings and are cut based on time alignments produced by basic HMM-GMM model. 40 Mel-weighted spectral bins computed on 512 point FFT on 25 ms frames at 10 ms interv al on 8 kHz audio were computed and appended with a binary vector of Speaker age groups (-5, 6-8, 9-12, 13-17, 18-64, 65-) and gender . T able 2 shows the CTC Phoneme Error Rate (PER) performance. Already it per - forms surprisingly consistently for all the nati ve data sets, get- ting around 50% of the phones right, and the performance drop between close and table microphones is much smaller than with the HMM-GMM systems. 3.7. Articulatory Featur es Each phone is described by an articulatory feature vector . Our articulatory feature set is based on question sets used in model clustering parametric speech synthesis. Additional features were added for diphthongs and vo wel sequenecs, describing the direction of movement of the articulation place. T able 3 lists the features used in this work. Compared to the 18 articulatory parameters in [9] or 14 in [11], our selection of 55 descriptors is substantially larger and can cov er a much lar ger se gment of the IP A space without duplicate mappings between phones and feature vectors. T able 3: Articulatory features used in this work Articulatory features for vowels dipthong, long, rhotic, unround-schwa front, nearfront, central, nearback, back, open, nearopen, openmid, mid, closemid, nearclose, close, rounded, unrounded, diphthong-forward, diphthong-backward, diphthong-opening, diphthong-closing, diphthong-rounding, diphthong-unrounding, Articulatory features for non-vowels affricate, approximant, fricati ve, plosiv e, nasal, trill, alveolar , bilabial, coronal, dental, dorsal, labial, labiodental, lateral, postalveolar , velar pulmonic, retroflex ed, syllabic, palatalized, aspirated, lenis, fortis, labialized, voiced, un voiced, geminated T able 4: Corr elation between pr edictions and human annota- tions for the scoring test set. Outliers System All remo ved PWLD-LR 0.31 0.36 DDPWLD-LR 0.47 0.54 PWLD-SVM 0.52 0.61 PWLD-RF 0.49 0.56 3.8. Scoring Scoring was done by two linear regressors (LR) and two non- linear ones. All optimisations are done on the L2 speech database, with training data used once with real labels and scores, and once with shuffled labels and rejected scores. One tenth of the training data is split to be the dev elopment set. PWLD-LR uses the default phonological weights and only terms a and l used for mapping the distance to a score are op- timised. DDPWLD-LR additionally optimises the phonologi- cal weights F for computing the distance. Optimal values are found with the BFGS optimiser . T o reduce outlier influence in optimisation, Cauchy norm (using log of error) and appropri- ate bounds for values are used. The initial best paths from the N -best list are computed with the cost presented in [11]. Sup- port V ector Machine Re gressors (SVR) and Random Forest Re- gressors (RF) are trained on the same parameterisations as the PWLD, concatenated with the length of the reference utterance in number of phones. Rob ust parameters are selected using the separate dev elopment set. 3.9. Results T able 4 lists the correlations between human annotations and predictions. The left result column sho ws the correlation for all data and the right column shows the results when outliers have been removed from the test set. If at least two of the regressors produced an error more than 2 standard de viations above their mean error, the data item in question was marked as an outlier . Out of the 4313 test items, 205 were considered outliers. Com- pared to PWLD, data-driv en estimation of weights for DDP- WLD improved the performance by around 50%, almost to the lev el of SVM and RF performance. The black box methods still beat the DDPWLD by a margin, but the drop in performance is compensated in DDPWLD by the ability to assign a cost for ev ery individual operation. 4. Discussion The description of errors as string operations and describing the sum of string operations as vectors of articulatory differences between reference and hypothesis is an efficient way of param- eterising pronunciation mistakes. It giv es a robust basis for a scoring system. The system’ s leniency is dependent on the qual- ity of the underlying phoneme recogniser . Most recognition er- rors reduce the score rather than improv e it. Therefore the worse the recogniser, the harder it is to get a high score. At the moment the recogniser is performing at around 50% phoneme error rate for the nativ e test sets, which is still considerably higher than the 30% letter error rates reported for the original CTC setups [10]. By using N -best lists, the system works well enough for de- ployment in a g ame. As mentioned in Section 3.3, the phoneme merging between the data sets is not finished and some perfor- mance gains are expected from correcting errors there. Another problem lies mostly on the recogniser’ s side. Al- lowing mid-utterance code-switching is dif ficult with only na- tiv e utterances in the training data. The CTC network relies too much on past information and is not able to correctly find phoneme sequences that contain phone sequences unique to different languages. An example is a mispronunciation of the Finnish word “korkea” / korkea / with the trill / r / replaced by an alveolar approximant / ô / results in / ko ô k h a /. Here, the vo wel sequence / ea /, which is foreign to UK English, is replaced by / k h a /. Another is a mispronunciation of the English word “friend” / f ôE nd / with the alveolar approximant replaced by a trill resulting in the recognition as / pr E n : t /. As the phone combi- nation / fr / is not adequately presented in the training data, the whole utterance is recognised as if it were in the Finnish pho- netic space. The quality of the test data gathered from language learn- ers using an early version of the CAPT data is very variable in speaking and recording quality , and the recordings can start and stop in the middle of the utterance. The code switching issue needs to be inv estigated. The fact that the GR U internal states are reset to zero for e very training batch probably makes the system less prone to code-switching. Reusing the internal state as is for the next batch might also be problematic, as the extra speaker information is included at a lo w level. A revie w of best practises needs to be done. W ord boundaries are also problematic. F or example the Finnish utterance, “on koala” is pronounced either / on ko A l A / or / o N ko A l A /, but always with a vo wel sequence / o A /, whereas the utterance “onko ala?” is pronounced either as / o N ko A l A / or / o N ko A l A /, with the / o A / combination either as vo wel sequence or as slightly separated phones. 5. Conclusions W e ha ve presented a rather simple two-component system for scoring L2 learner utterances using a CTC phone recogniser and DDPWLD as an error measure. W e found that this simple linear scoring method performs almost as well as the established black box regression methods. Future work includes improving the integration of the dif- ferent phoneme sets and dictionaries and develop further our phoneme recogniser . An in-depth comparison to more estab- lished pronunciation quality methods (DTW -based path costs and forced alignment of canonical transcription) using the same data sets is an on-going work. Future work includes also solving the code-switching issue for the CTC training. 6. References [1] A. M. Harrison, W .-K. Lo, X.-j. Qian, and H. Meng, “Imple- mentation of an extended recognition network for mispronunci- ation detection and diagnosis in computer-assisted pronunciation training, ” in Pr oceedings of 2nd W orkshop on Speech and Lan- guage T ec hnology in Education (SLaTE) . W arwickshire, Eng- land, United Kingdom: ISCA, September 2009. [2] S. M. Witt and S. J. Y oung, “Phone-le vel pronunciation scoring and assessment for interactiv e language learning, ” Speech com- munication , vol. 30, no. 2-3, pp. 95–108, 2000. [3] S. Kanters, C. Cucchiarini, and H. Strik, “The goodness of pro- nunciation algorithm: a detailed performance study , ” in Proceed- ings of 2nd W orkshop on Speech and Language T echnology in Education (SLaTE) . W arwickshire, England, United Kingdom: ISCA, September 2009. [4] R. Karhila, S. Ylinen, S. Enarvi, K. Palomäki, A. Nikulin, O. Rantula, V . V iitanen, K. Dhinakaran, A.-R. Smolander , H. Kallio, K. Junttila, M. Uther, P . Hämäläinen, and M. Kurimo, “Siak — a game for foreign language pronunciation learning, ” in Pr oc. Interspeech 2017 , 2017, pp. 3429–3430. [5] S. Ylinen and M. Kurimo, Oppimisen tulevaisuus . Gaudeamus, 2017, ch. Kielenoppiminen vauhtiin puheteknologian avulla, pp. 57–69. [6] R. Duan, T . Kawahara, M. Dantsuji, and J. Zhang, “Multi-lingual and multi-task DNN learning for articulatory error detection, ” in 2016 Asia-P acific Signal and Information Processing Association Annual Summit and Confer ence (APSIP A) , Dec 2016, pp. 1–4. [7] ——, “Effecti ve articulatory modeling for pronunciation error detection of L2 learner without non-native training data, ” in Pr oceedings of the IEEE International Conference on Acous- tics, Speech and Signal Processing (ICASSP) . Ne w Orleans, Louisiana, United States: IEEE, 2017, pp. 5815–5819. [8] V . Arora, A. Lahiri, and H. Reetz, “Phonological feature based mispronunciation detection and diagnosis using multi-task DNNs and active learning, ” in Pr oceedings of INTERSPEECH . Stock- holm, Sweden: ISCA, 2017, pp. 1432–1436. [9] ——, “Phonological feature-based speech recognition system for pronunciation training in non-nativ e language learning, ” The Jour- nal of the Acoustical Society of America , vol. 143, no. 1, pp. 98– 108, 2018. [10] A. Grav es, S. Fernández, F . Gomez, and J. Schmidhuber , “Con- nectionist temporal classification: labelling unsegmented se- quence data with recurrent neural networks, ” in Pr oceedings of the 23rd international conference on Machine learning (ICML) . A CM, 2006, pp. 369–376. [11] L. Fontan, I. Ferrané, J. Farinas, J. Pinquier , and X. Aumont, “Using phonologically weighted lev enshtein distances for the pre- diction of microscopic intelligibility , ” in Proceedings of INTER- SPEECH , San Francisco, California, United States, 2016. [12] S. S.-C. Y oung and Y . H. W ang, “The game embedded CALL system to facilitate English vocab ulary acquisition and pronun- ciation. ” Educational T echnology & Society , vol. 17, no. 3, pp. 239–251, 2014. [13] S. Fitt and K. Richmond, “Redundancy and productivity in the speech technology lexicon-can we do better?” in Pr oceedings of INTERSPEECH . Pittsburgh, Pennsylvania, United States: ISCA, 2006. [14] D. Pove y , A. Ghoshal, G. Boulianne, L. Burget, O. Glembek, N. Goel, M. Hannemann, P . Motlicek, Y . Qian, P . Schwarz, J. Silo vsky , G. Stemmer, and K. V esely , “The Kaldi speech recog- nition toolkit, ” in Pr oceedings of IEEE W orkshop on Automatic Speech Recognition and Understanding (ASR U) , Hilton W aikoloa V illage, Big Island, Hawaii, United States, Dec. 2011, iEEE Cat- alog No.: CFP11SR W -USB. [15] M. Abadi, A. Agarwal, P . Barham, E. Brevdo, Z. Chen, C. Citro, G. S. Corrado, A. Davis, J. Dean, M. Devin, S. Ghemawat, I. Goodfello w , A. Harp, G. Irving, M. Isard, Y . Jia, R. Jozefo wicz, L. Kaiser , M. Kudlur , J. Levenber g, D. Mané, R. Monga, S. Moore, D. Murray , C. Olah, M. Schuster, J. Shlens, B. Steiner, I. Sutskev er , K. T alwar , P . Tuck er , V . V anhoucke, V . V asudev an, F . V iégas, O. Vin yals, P . W arden, M. W attenberg, M. Wick e, Y . Y u, and X. Zheng, “T ensorFlo w: Large-scale machine learning on heterogeneous systems, ” 2015, software available from tensorflow .or g. [Online]. A vailable: http://tensorflow .org/ [16] A. Hannun, C. Case, J. Casper, B. Catanzaro, G. Diamos, E. Elsen, R. Prenger, S. Satheesh, S. Sengupta, A. Coates et al. , “Deep speech: Scaling up end-to-end speech recognition, ” arXiv pr eprint arXiv:1412.5567 , 2014. [17] O. Räsänen, S. Seshadri, and M. Casillas, “Comparison of syllab- ification algorithms and training strategies for robust word count estimation across dif ferent languages and recording conditions, ” in Pr oceedings of INTERSPEECH . Hyderabad, India: ISCA, 2018.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment