Joint High Dynamic Range Imaging and Super-Resolution from a Single Image

This paper presents a new framework for jointly enhancing the resolution and the dynamic range of an image, i.e., simultaneous super-resolution (SR) and high dynamic range imaging (HDRI), based on a convolutional neural network (CNN). Because both tasks hinge on reconstructing high-frequency details, we train a single CNN for joint HDRI and SR with that focus. Specifically, the high-frequency component in our work is the reflectance component of a Retinex-based image decomposition; only the reflectance is manipulated by the CNN, while the other component (illumination) is processed in a conventional way. In training the CNN, we devise a loss function that improves the naturalness of the resulting images. Experiments show that our algorithm outperforms the cascade implementation of CNN-based SR and HDRI.

💡 Research Summary

This paper introduces a unified framework that simultaneously performs high‑dynamic‑range imaging (HDRI) and single‑image super‑resolution (SISR) from a single low‑resolution, low‑dynamic‑range (LDR‑LR) input. Recognizing that both HDRI and SISR fundamentally aim to recover lost high‑frequency details, the authors adopt a Retinex‑based decomposition to separate the input luminance into a low‑frequency illumination component (I) and a high‑frequency reflectance component (R). The illumination, being smooth, is upscaled using bicubic interpolation followed by a simple gamma correction (γ = ½) to approximate HDR‑HR illumination (ILL‑E). The reflectance, which encodes fine textures and details, is processed by a dedicated convolutional neural network called REF‑Net.
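The decomposition described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the summary does not say how the illumination is estimated, so a small mean filter stands in for it here, and `EPS`, `box_blur`, `retinex_decompose`, and `gamma_correct` are hypothetical names. Bicubic upscaling of the illumination is omitted.

```python
import math

EPS = 1e-6  # hypothetical constant that keeps log() away from zero


def box_blur(img, radius=1):
    """Mean-filter a 2-D list of floats, averaging only in-bounds neighbours."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        total += img[yy][xx]
                        count += 1
            out[y][x] = total / count
    return out


def retinex_decompose(lum, radius=1):
    """Split luminance into smooth illumination and log-ratio reflectance.

    Assumption: illumination is a blurred copy of the luminance; the paper
    may use a different estimator.
    """
    illum = box_blur(lum, radius)
    refl = [
        [math.log(l + EPS) - math.log(i + EPS) for l, i in zip(l_row, i_row)]
        for l_row, i_row in zip(lum, illum)
    ]
    return illum, refl


def gamma_correct(illum, gamma=0.5):
    """Apply the gamma = 1/2 correction mentioned in the text."""
    return [[v ** gamma for v in row] for row in illum]
```

With this definition the luminance is recovered as `(illum + EPS) * exp(refl) - EPS`, so the reflectance carries exactly the detail that the smoothing removed.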

REF‑Net employs a stacked hourglass‑U‑Net architecture: two hourglass‑shaped U‑Nets are placed sequentially, with the first network’s output concatenated to the second via skip connections, and the final feature map upscaled through sub‑pixel convolution. Because reflectance is defined as a log‑ratio and can assume unbounded values, the authors first apply a hyperbolic tangent (tanh) non‑linearity to map it into (‑1, 1), stabilizing training. The network predicts the tanh‑scaled HDR‑HR reflectance, which is then inverse‑tanh transformed back to the original scale.
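The tanh mapping and its inverse can be illustrated as follows. This is a sketch under assumptions: the function names are hypothetical, and the clamping `limit` is an added safeguard (not mentioned in the summary) so that a network output of exactly ±1 does not produce an infinite value when inverted.

```python
import math


def to_tanh_domain(refl):
    """Squash unbounded log-ratio reflectance into (-1, 1)."""
    return [[math.tanh(v) for v in row] for row in refl]


def from_tanh_domain(pred, limit=1.0 - 1e-7):
    """Map tanh-domain predictions back to the original reflectance scale.

    Values are clamped to [-limit, limit] first, because atanh is
    undefined at exactly -1 and +1 (the clamp is an assumption).
    """
    out = []
    for row in pred:
        out.append([math.atanh(max(-limit, min(limit, v))) for v in row])
    return out
```

Applying `from_tanh_domain(to_tanh_domain(r))` returns `r` up to floating-point error for moderate values, which is what lets the network work in a bounded range without losing the original scale.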

Training leverages the MMPSG dataset, which provides multi-exposure sequences and corresponding HDR images. For each scene, the standard-exposed image is downsampled to create the LDR-LR input, while the HDR-HR reflectance derived from the HDR ground truth serves as the target. Because the camera response functions (CRFs) of the source images are unknown, the authors approximate each CRF by a simple gamma curve, f(x) = x^{1/2.2} (an exponent below 1), and linearize with its inverse, f⁻¹(x) = x^{2.2}, which reduces inconsistency across the dataset.
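A rough sketch of this data preparation is shown below. The summary names neither the downsampling filter nor the order of the two steps, so block averaging and the function names `linearize` and `downsample_box` are assumptions made purely for illustration.

```python
def linearize(img, inv_gamma=2.2):
    """Undo an assumed gamma-curve CRF: f^-1(x) = x ** 2.2 for x in [0, 1]."""
    return [[v ** inv_gamma for v in row] for row in img]


def downsample_box(img, factor=2):
    """Shrink a 2-D list by averaging non-overlapping factor x factor blocks.

    Block averaging is a stand-in; the paper's downsampling kernel is not
    given in the summary. Rows or columns that do not fill a block are dropped.
    """
    h, w = len(img) // factor, len(img[0]) // factor
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            block = [
                img[y * factor + dy][x * factor + dx]
                for dy in range(factor)
                for dx in range(factor)
            ]
            row.append(sum(block) / len(block))
        out.append(row)
    return out
```

For example, `downsample_box(linearize(ldr), 2)` would turn a gamma-encoded image `ldr` with values in [0, 1] into a half-resolution, approximately linear input.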

The loss function combines two terms. The reconstruction loss (L_recon) is the mean absolute error (MAE) between the predicted and ground-truth reflectance in the tanh domain. To enhance perceptual sharpness and generate realistic textures, a relativistic average GAN (RaGAN) loss (L_G) is added: rather than judging each sample in isolation, the discriminator scores how much more realistic a sample looks than the average sample of the opposite class, and the generator is trained so that its predicted reflectance scores as more realistic than the ground-truth reflectance on average. The overall loss is L = L_recon + μ L_G with μ = 10⁻³. Two model variants are reported: HDRI-SR-B (reconstruction loss only) and HDRI-SR-C (including adversarial loss).
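The combined objective can be sketched as below. The summary does not spell out the exact form of L_G, so this example uses the commonly cited relativistic average generator loss as an assumption; the function names, and the flat lists standing in for reflectance maps and raw discriminator logits, are likewise hypothetical.

```python
import math


def softplus(x):
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def mae(pred, target):
    """Mean absolute error between two equal-length lists (L_recon)."""
    return sum(abs(p - t) for p, t in zip(pred, target)) / len(pred)


def ragan_generator_loss(real_logits, fake_logits):
    """Relativistic average generator loss on raw discriminator outputs.

    Assumed form: L_G = -E[log(1 - D(x_r, x_f))] - E[log D(x_f, x_r)],
    where D(a, b) = sigmoid(C(a) - mean(C(b))).
    """
    mean_real = sum(real_logits) / len(real_logits)
    mean_fake = sum(fake_logits) / len(fake_logits)
    # -log(1 - sigmoid(z)) equals softplus(z)
    real_term = sum(softplus(r - mean_fake) for r in real_logits) / len(real_logits)
    # -log(sigmoid(z)) equals softplus(-z)
    fake_term = sum(softplus(mean_real - f) for f in fake_logits) / len(fake_logits)
    return real_term + fake_term


def total_loss(pred, target, real_logits, fake_logits, mu=1e-3):
    """L = L_recon + mu * L_G, with mu = 1e-3 as stated in the text."""
    return mae(pred, target) + mu * ragan_generator_loss(real_logits, fake_logits)
```

The small weight μ keeps the reconstruction term dominant, so the adversarial term acts mainly as a texture-sharpening correction. Setting `mu=0` corresponds to the reconstruction-only variant.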

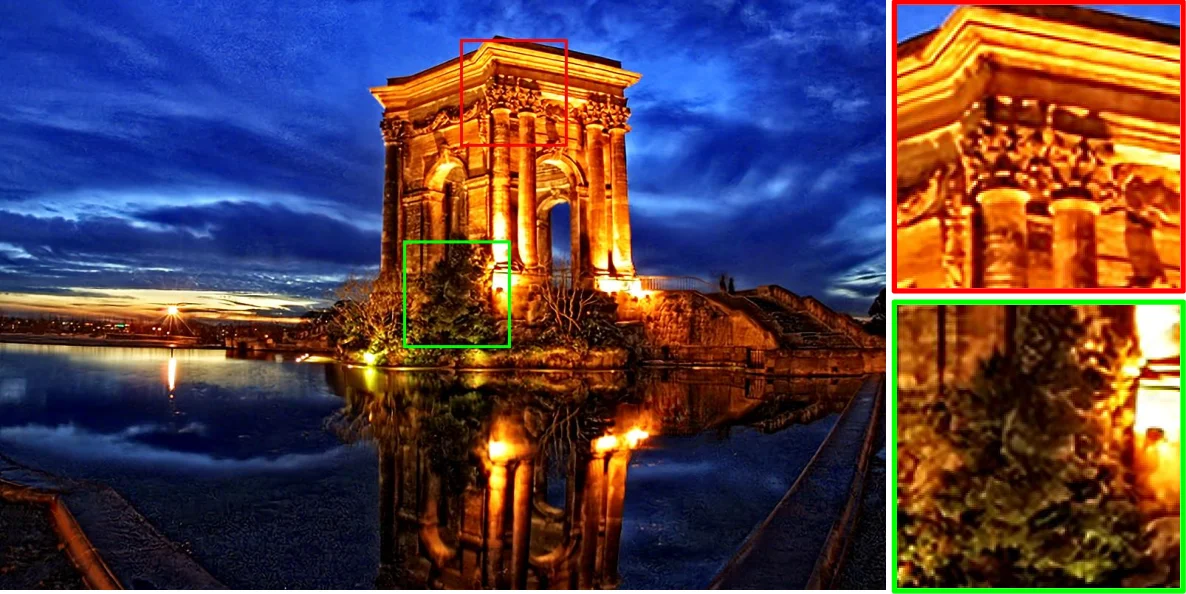

Experiments compare the proposed joint model against cascaded pipelines that apply state‑of‑the‑art SISR (e.g., EDSR) and HDRI networks sequentially. Quantitative metrics (PSNR, SSIM, LPIPS, NIQE) show consistent superiority of the joint approach, especially in preserving high‑frequency textures and handling saturated highlights. Moreover, the unified network uses roughly 30 % fewer parameters than the combined cascaded systems, leading to faster inference suitable for real‑time applications. Qualitative user studies also favor the joint method.

The paper concludes that integrating HDRI and SR into a single CNN, guided by Retinex decomposition and a carefully designed loss, yields both efficiency and higher visual fidelity. Limitations include reliance on simple interpolation for illumination, which may leave residual artifacts in scenes with extreme lighting variations, and the need for broader HDR datasets to further validate generalization. Future work may explore learned illumination enhancement or multi‑scale illumination modeling to address these issues.
