Attention-based Audio-Visual Fusion for Robust Automatic Speech Recognition

Automatic speech recognition can potentially benefit from the lip motion patterns, complementing acoustic speech to improve the overall recognition performance, particularly in noise. In this paper we propose an audio-visual fusion strategy that goes…

Authors: George Sterpu, Christian Saam, Naomi Harte

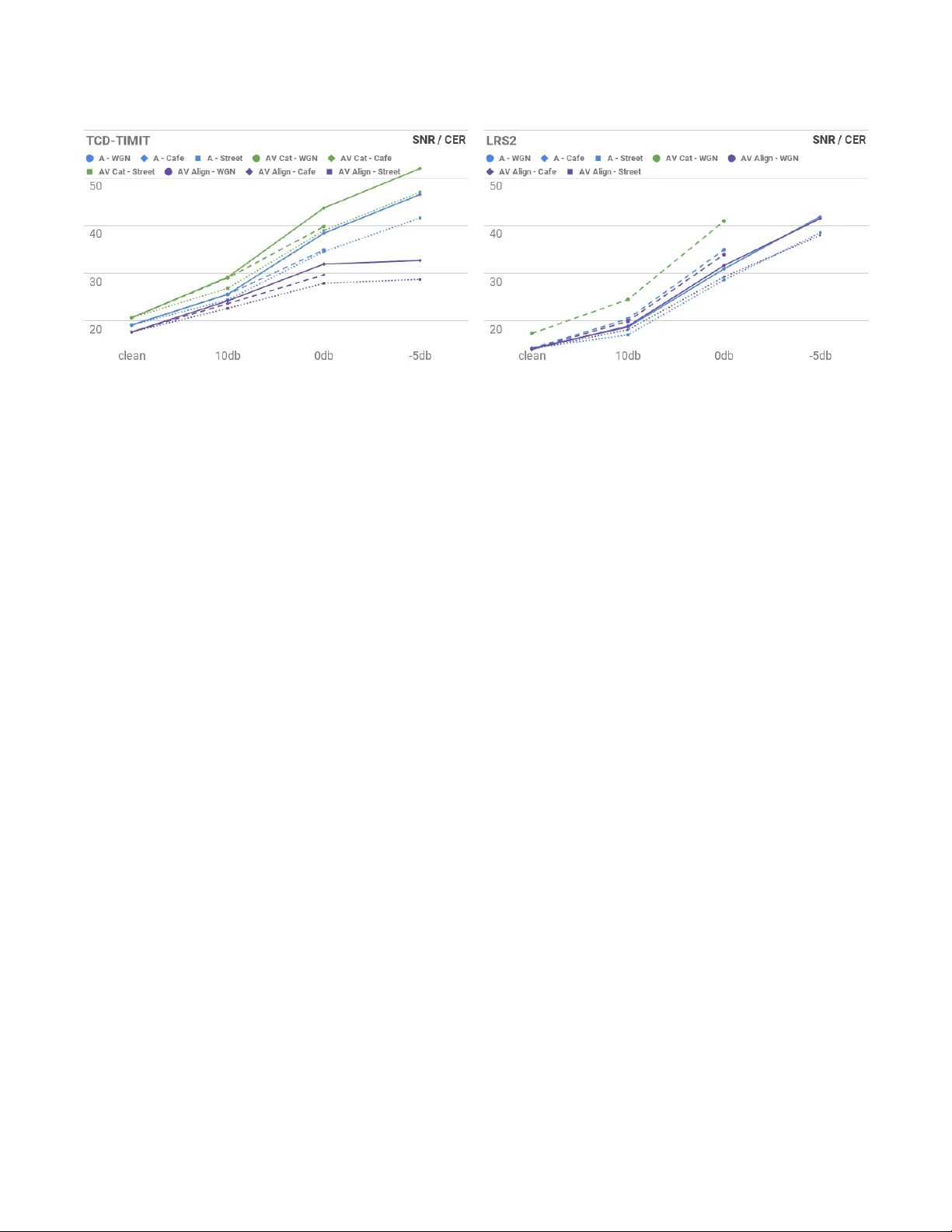

Aention-based A udio- Visual Fusion for Robust A utomatic Spee ch Recognition George Sterpu ∗ AD APT Centre, Sigmedia Lab, EE Engineering, Trinity College Dublin Dublin, Ireland sterpug@tcd.ie Christian Saam ∗ AD APT Centre, Sigmedia Lab, Trinity College Dublin Dublin, Ireland saamc@scss.tcd.ie Naomi Harte AD APT Centre, Sigmedia Lab, EE Engineering, Trinity College Dublin Dublin, Ireland nharte@tcd.ie ABSTRA CT A utomatic spe ech recognition can potentially benet from the lip motion patterns, complementing acoustic sp eech to improve the overall recognition performance, particularly in noise. In this paper we propose an audio-visual fusion strategy that go es beyond simple feature concatenation and learns to automatically align the two modalities, leading to enhanced repr esentations which increase the recognition accuracy in both clean and noisy conditions. W e test our strategy on the TCD- TIMI T and LRS2 datasets, designed for large vocabulary continuous spee ch recognition, applying three types of noise at dierent power ratios. W e also exploit state of the art Sequence-to-Sequence architectures, showing that our method can be easily integrated. Results show relative improvements from 7% up to 30% on TCD- TIMI T over the acoustic modality alone, depending on the acoustic noise level. W e anticipate that the fusion strategy can easily generalise to many other multimodal tasks which involve correlated modalities. Code available online on GitHub: https://github.com/georgesterpu/Sigmedia- A VSR KEY W ORDS Lipreading; A udio- Visual Spe ech Recognition; Multimodal Fusion; Multimodal Interfaces A CM Reference Format: George Sterpu, Christian Saam, and Naomi Harte. 2018. Attention-based A udio-Visual Fusion for Robust Automatic Spe ech Recognition. In 2018 International Conference on Multimodal Interaction (ICMI ’18), October 16–20, 2018, Boulder , CO, USA. ACM, New Y ork, N Y , USA, 6 pages. https://doi.org/ 10.1145/3242969.3243014 1 IN TRODUCTION Human speech interaction is inherently multimodal in nature: we both watch and listen when communicating with other people. Under clean acoustic conditions, the auditory mo dality carries most of the useful information, and recent state of art systems [ 5 ] are capable of automatically transcribing spoken utterances with an accuracy above 95%. The visual modality becomes most eective when the audio channel is corrupted by noise, as it allows us to recover some of the suppressed linguistic features. ∗ Both authors contributed equally to this work. ICMI ’18, Octob er 16–20, 2018, Boulder , CO, USA © 2018 Copyright held by the owner/author(s). Publication rights licensed to the Association for Computing Machinery . This is the author’s version of the work. It is posted here for your personal use. Not for redistribution. The denitiv e V ersion of Record was published in 2018 International Conference on Multimo dal Interaction (ICMI ’18), October 16–20, 2018, Boulder , CO, USA , https://doi.org/10.1145/3242969.3243014. Exploiting both modalities in the context of A utomatic A udio- Visual Sp eech Recognition (A VSR) has been a challenge. One reason is the inconclusive research on what are good visual features for Large V ocabulary Continuous Speech Recognition (LVCSR) [ 14 ] that match the well established Mel-frequency cepstral co ecients for acoustic speech. Another reason is the need for a fusion strategy of two correlated data streams running at dierent frame rates [ 11 ]. Our work addresses b oth these challenges and we make a numb er of contributions. W e introduce an audio-visual fusion strategy that learns to align the two modalities in the featur e space. W e embe d this into a state of the art attention-based Sequence-to-Sequence (Seq2seq) Automatic Speech Recogition (ASR) system consisting of Re current Neural Network (RNN) encoders and de coders. W e evaluate the strategy on a LVCSR task involving the two largest pub- licly available audio-visual datasets, TCD- TIMI T and LRS2, which contain complex sentences of b oth read speech and in-the-wild recordings. Using b oth of these datasets oers repeatability and allows other researchers to compare their systems dir ectly to ours. W e demonstrate improved performance over an acoustic-only ASR system for clean sp eech and also for three types of noise: white noise, cafe noise and street noise. The paper is organised as follows: In section 2 we review related work in the eld of A VSR. In se ction 3 we describe our framework and introduce our audio-visual fusion strategy . Section 4 presents our experimental results, and we discuss our ndings in Se ction 5. 2 RELA TED W ORK ASR. The work of [ 5 , 15 ] shows that attention-based Seq2seq mod- els [ 1 ] can surpass the performance of traditional ASR systems on challenging speech transcription tasks such as dictation and voice search. These models ar e characterised by a high degree of simplicity and generality , requiring minimal domain knowledge and making no strong assumptions about the modelled se quences. These properties make them go od candidates for the A VSR task, where the potential benet of the visual mo dality has been limited by an inability to exploit human domain knowledge for modelling. In our work, we use attention-base d Se q2seq models to encode representations of both modalities. Lip-reading. Transcribing speech from the visual modality alone, i.e. lip-reading, has impr oved signicantly in recent years thanks to the advancements in neural networks. While there have b een multiple contributions to the wor d classication task [ 7 , 13 , 20 , 22 ], the work of [ 19 ] transcribes large vocabular y speech at the charac- ter level, and this is the work most comparable to ours. The visual processing front-end consists of a Conv olutional Neural Network (CNN) which learns a hierarchy of features fr om the training data. W e adopt the CNN variant with residual connections [ 10 ], as it attains competitive results in many computer vision tasks. A udio- Visual fusion. Some of the earliest audio-visual fusion strategies are reviewed in [ 11 , 14 ], broadly classied into feature fusion and decision fusion methods. In A VSR, feature fusion is the dominant approach [ 13 , 19 ]. Y et, as pointe d out by [ 14 ], concate- nating features does not explicitly model the stream reliabilities. Furthermore, [ 19 ] observe that the system be comes over-r eliant on the audio modality and apply a r egularisation technique where one of the streams is randomly dr oppe d out during training [ 12 ]. Our method diers by always learning from the tw o str eams at the same time. In addition, while [ 19 ] concatenates the sentence summaries, we learn to correlate the acoustic with the visual features at ev er y time step, an opportunity to make corrections at much ner detail. Several other approaches from related areas focus on modelling cross-view or cross-modality temporal dynamics and alignment with modications of gated memor y cells [ 16 , 18 , 23 , 24 ]. While these RNN architectures were intr oduce d to address diculties in learning long-term dependencies e.g. Long Short- T erm Memory (LSTM) cells [ 8 ] or Gated Recurrent Units [ 6 ], such dependencies were still found problematic in Seq2seq models based on gated RNN cells and lead to the introduction of temporal attention mechanisms [ 1 ]. Thus it seems r easonable to explore such attention mechanisms for potentially long-range cross-stream interactions rather than putting additional memorisation burdens on the basic building blocks. A gating unit is introduced in [ 21 ], designed to lter out un- reliable features. How ever , it still takes concatenated audio-visual inputs, and the synchrony of the streams is only ensured through identical sampling rate, limiting the temporal context. 3 METHOD W e begin by reviewing attention-based Seq2seq networks in order to introduce our fusion strategy more clearly in Section 3.5. 3.1 Background The network consists of a sequence encoder , a sequence deco der and an attention me chanism. The enco der is based on an RNN which consumes a sequence of feature vectors, generating interme- diate latent representations (coined as memory ) and a nal state representing the sequence latent summary . The decoder is also an RNN, initialised from the sequence summary , which predicts the language units of interest ( e.g. characters). Because the encoding process tends to b e lossy on long input se quences, an attention mechanism was introduced to soft-sele ct from the encoder memory a context vector to assist each decoding step. Attention mechanisms. Attention typically consists in com- puting a context v e ctor as a combination of state vectors ( values) taken from a memory , weighted by their correlation score with a target state (or query ). α i j = s c or e ( v alu e j , que r y i ) (1) c i = Õ j α i j v alu e j (2) The context vector c is then mixed with the query to form a context-aware state vector . So far , this has been used successfully T able 1: CNN Architecture. All convolutions use 3x3 kernels, except the nal one. The Residual Blo ck is taken from [10] in its full preactivation variant. layer operation output shape 0 Rescale [ -1 ... +1] 36x36x 3 1 Conv 36x36x 8 2-3 Res block 36x36x 8 4-5 Res block 18x18x 16 6-7 Res block 9x9x 24 8-9 Res block 5x5x 32 10 Conv 5x5 1x1x 128 in Seq2seq decoders, where the quer y is the current decoder state and the values represent the encoder memory . 3.2 Inputs Our system takes auditory and visual input concurrently . The audio is the raw waveform signal of an entire sentence. The visual input consists of video frame se quences, centred on the speaker’s face, which correspond to the audio track. W e use the OpenFace toolkit [ 3 ] to dete ct and align the faces, then we cr op around the lip region. 3.3 Input pre-processing A udio input. The audio waveforms are re-sampled at 22,050 Hz, with the option at this step to add several types of acoustic noise at dierent Signal to Noise Ratios (SNR). Similar to [ 5 ], we compute the log magnitude spe ctrogram of the input, choosing a window length of 25ms with 10ms stride and 1024 frequency bins for the Short-time Fourier T ransform, and a frequency range from 80Hz to 11,025Hz with 30 bins for the mel scale warp. Finally , we append the rst and se cond order derivatives of the log mel featur es, ending up with a feature of size 90 computed every 10 ms. Visual input. The lip regions are 3-channel RGB images down- sampled to 36x36 pixels. A ResNet CNN [ 10 ] processes the images to produce a feature vector of 128 units per frame. The details of the architecture are presented in T able 1. 3.4 Sequence enco ders A udio and visual feature sequences dier in length, b eing sampled at 100 and 30 Frames per Second (FPS) r espe ctively . Across training examples, the sequences also have variable lengths. W e process them using two LSTM [ 8 ] RNNs. The architecture of the LSTMs consists of 3 layers of 256 units . W e collect the top-layer output sequences of both LSTMs and also their nal states, referring to them as encoding memories and sequence summaries respe ctively . 3.5 A udio- Visual fusion strategy This subsection describes the key architectural contribution. Our premise is that conv entional dual-attention mechanisms, such as the one in [ 19 ], overburden the decoder in Seq2seq architectures. In the uni-modal case, a typical decoder has to perform both lan- guage modelling and acoustic deco ding. Adding another attention mechanism that attends to a second mo dality requires the decoder to also learn correlations among the input modalities. Figure 1: Proposed A udio- Visual Fusion Strategy . The top layer cells of the A udio Encoder (red) attend to the top layer outputs of the Video Encoder (green). The Decoder receives only the A udio Encoder outputs after fusion with the Video outputs. For clarity , the Decoder is not fully shown. W e aim to make the mo delling of the audio-visual correlation more explicit, while completely separating it from the de coder . Thus, we move this task to the encoder side. Our strategy is to decouple one modality from the decoder and to introduce a supplementary attention mechanism to the top layer of the couple d modality that attends to the enco ding memory of the decoupled mo dality . The decoder only receives the nal state and memory of the couple d encoder’s top layer like a standard uni-modal attention decoder . A diagram of the fusion strategy is shown in Figure 1. The queries from Eq. (1) come from the states of the top audio encoder layer , while the values represent the visual encoder memor y . The acoustic encoder’s top layer can no longer be considered to hold acoustic-only representations. They are fused audio-visual representations based on corresponding high le vel features from the two modalities matched via attention. This layer could also be viewed separately as a higher lev el enco der (red layer in Figur e 1) operating on acoustic and visual hidden representations. The following intuition motivates our choice. The top lay ers of stacked RNNs encode higher order features, which are easier to cor- relate than low er levels. They provide speech related abstractions from visual and acoustic features. In addition, any time one feature stream is corrupted by noise its enco dings may be automatically corrected by correlated encodings of the other stream. The audio and visual modalities can have two-way interactions. Howev er , by design only the acoustic mo dality learns from the visual. This is b ecause in clean sp eech, the acoustic modality is dom- inant and sucient for recognition, while the visual one presents intrinsic ambiguities: the same mouth shape can explain multiple sounds. The design assumes that acoustic encodings can be par- tially corrected or even reconstructed from visual encodings. One disadvantage is that an alignment score has to be computed for each timestep of the much longer audio sequence. W e also note that this fusion strategy explicitly models the align- ment b etween each acoustic and all visual enco dings. This elegantly addresses the problem of dierent frame rates, that traditionally required slower modalities to be interp olated in order to match the frame rate of the fastest modality . 3.6 Decoding Our de coder is a single layer LSTM of 256 units . As in [ 5 ], we use four attention heads to improve the o verall performance, while still attending to a single enhanced memor y . The decoder predicts characters, word lev el results are inferred by splitting at blanks. 4 EXPERIMEN TS AND RESULTS W e apply our audio-visual fusion metho d in the context of LV CSR. This is a well suited task for measuring the fusion p erformance, as correlations between modalities can be captured at the sub-word level. W e also consider that the visual modality has a lot more to benet from full sentences instead of isolated wor ds, as longer contexts are critical to disambiguate the spoken message, thus supplying more informative features to the auditory modality . 4.1 Datasets TCD- TIMI T [ 9 ] and LRS2 [ 4 ] are the largest publicly available audio-visual datasets suitable for continuous speech recognition. W e report our results on both datasets. TCD- TIMI T consists of 62 speakers and more than 6,000 ex- amples of read speech, typically 4-5 seconds long, from both pho- netically balanced and natural sentences. The video is laboratory recorded at 1080p resolution from two xed viewpoints. W e follow the speaker-dependent train/test protocol of [9]. LRS2 contains more than 45,000 sp oken sentences from BBC television. Unlike TCD- TIMI T it contains more challenging head poses, but a much lower image resolution of 160x160 pixels. Our results on LRS2 are not directly comparable with the ones on LRS [ 19 ], which is completely dierent from LRS2 and was never publicly released. In addition, LRS2 has a lot more diversity in content and head poses than LRS. T o the b est of our knowledge, this is the rst audio-visual baseline on LRS2. W e used the suggested evaluation protocol on LRS2 with one dierence. The face detection [ 3 ] failed on several challenging videos, so we kept only those where the detection was successful for at least 90% of the frames. With this rule, we kept approximately 87% of the training vide os and 91% of the test videos. T o foster reproducibility , we make available the list of les used in our subset. 4.2 Training and evaluation procedures W e train several uni- and bimodal Se q2seq systems. The unimodal networks process only the acoustic input, either in clean form or additively corrupted by three types of noise: a) White Gaussian Noise (W GN) , b) Cafeteria Noise , and c) Street Noise . The bimo dal networks take both audio and visual inputs at the same time . W e compare our method ( A V Align ) to an audio-only system ( A ) and dual attention feature concatenation [19] ( A V Cat ). In training, we directly optimise the cross entropy loss between ground-truth character transcription and predicted character se- quence via the AMSGrad optimiser [ 17 ]. In evaluation, we measure the Levenshtein edit distance b etween them, normalised by the ground truth length. Our r esults report this Character Error Rate Figure 2: Character Error Rate (CER) [%] on TCD- TIMI T Figure 3: Character Error Rate (CER) [%] on LRS2 (CER) metric in percents. Our results are plotted in Figures 2-3, while complete numerical values are listed in the appendix. 5 DISCUSSION W e begin by analysing our results on TCD- TIMI T . W e rst notice relative impro vements starting at 7% on clean speech (17.7% CER down from 19.16%), up to 30% at -5db SNR (32.68% CER down fr om 46.52%) using our fusion strategy ( A V Align ) over audio only ( A ). The feature concatenation approach ( A V Cat ) performed between 8% and 14% worse than A , suggesting that the A V Cat strategy is far from optimal and may be dicult to train. Indeed, [ 19 ] reported training for 500,000 iterations. They also used a stream dropout regularisation technique which failed to impro ve convergence in our case after training for longer than A V Align took to converge. Despite again clearly outperforming A V Cat , we see no benet of A V Align over audio-only recognition ( A ) on LRS2. Our visual front-end, while eective on the limited variability conditions of TCD- TIMI T , may not b e large enough to cope with the much more demanding conditions, varying poses and larger number of faces. Although not directly comparable , [ 19 ] used a more p owerful front- end architecture with additional pre-training. It is plausible that an under-performing video feature extraction cannot provide str ong enough support to the audio stream. Further , we observe in the network outputs a progression through several stages of learning. At rst, the decoder forms a strong lan- guage model learning correct wor ds and phrases. Later , the inu- ence of the acoustic de coding increases and the network learns letter to sound rules, over-generalising like a child and unlearning some of the correct spellings for wor ds. The comparatively much larger size of LRS2 allows re-learning the multitude of letter to sound rules much more reliably , such that it may become the factor dominating the error rate and driving the training. W e may then expect that the network needs to train longer and past this stage to start to fully exploit the visual information on LRS2. With A V Align the network appears to learn how to cope with noise by leveraging the visual modality . Furthermore, few studies prior to [ 19 ] show improvements in the clean condition, prompting [ 2 ] to call the interactions supplementary rather than complemen- tary . That our model is able to consistently outperform audio only recognition on TCD- TIMI T even under clean conditions points to the inherent acoustic confusability of sp eech and the potentially sub-optimal speech recognition process for which the visual signal may oer some complementary information. W e conclude that the A V Align strategy eectively separates the stream alignment task from the speech sound classication and language mo delling tasks in the decoder , as opposed to the joint modelling in [19]. One shortcoming of our method is the lack of explicit mod- elling of the visual stream’s reliability . Howev er , the result on LRS2 suggests that the network may have learnt to discard noisy or uninformative visual features. An open question is whether the attention-based fusion strategy is p owerful enough to cope with visual noise, rendering unnecessary a more complex structure . 6 CONCLUSIONS In this w ork, w e introduce an audio-visual fusion strategy for speech recognition. The method uses an attention mechanism to automatically learn an alignment between acoustic and visual modalities, leading to an enhance d representation of speech. W e demonstrate its eectiveness on the TCD- TIMIT and LRS2 datasets, observing relative improvements up to 30% ov er an audio-only sys- tem on high quality images, and no signicant degradation when the visual information b ecomes harder to exploit. Since our strategy was able to discover structure in an audio-visual speech recogni- tion task, we expect it to generalise to others tasks where the input modalities are semantically correlated. Further to its performance, what makes our fusion strategy at- tractive is its straightfor ward formulation: it can be applied to attention-based Seq2seq approaches by reusing existing attention code. Not only may it eectively generalise to many other multi- modal applications, but it also allo ws researchers to easily integrate our method into their solutions. A CKNO WLEDGMEN TS The ADAPT Centre for Digital Content T echnology is funded under the SFI Research Centres Programme (Grant 13/RC/2106) and is co-funded under the European Regional Development Fund. REFERENCES [1] Dzmitry Bahdanau, Kyunghyun Cho , and Y oshua Bengio. 2018. Neural Machine Translation by Jointly Learning to Align and Translate. In International Conference on Learning Representations . http://arxiv .org/abs/1409.0473 [2] T . Baltru ˘ saitis, C. Ahuja, and L. P . Morency . 2018. Multimo dal Machine Learning: A Sur vey and Taxonomy. IEEE Transactions on Pattern Analysis and Machine Intelligence (2018), 1–1. [3] T . Baltru ˘ saitis, P. Robinson, and L. P. Mor ency. 2016. OpenFace: An open source facial behavior analysis toolkit. In 2016 IEEE Winter Conference on A pplications of Computer Vision (W ACV) . 1–10. https://doi.org/10.1109/W A CV .2016.7477553 [4] BBC and Oxford University . 2017. The BBC-Oxford Multi-View Lip Reading Sentences 2 (LRS2) Dataset. http://w ww .robots.ox.ac.uk/~vgg/data/lip_reading_ sentences/. (2017). Online, Accessed: 11 A ugust 2018. [5] Chung-Cheng Chiu, Tara Sainath, Y onghui Wu, Rohit Prabhavalkar , Patrick Nguyen, Zhifeng Chen, Anjuli Kannan, Ron J. W eiss, Kanishka Rao, Katya Gonina, Navdeep Jaitly , Bo Li, Jan Chorowski, and Michiel Bacchiani. 2018. State-of-the- art Speech Recognition With Sequence-to-Se quence Models. In ICASSP . https: //arxiv .org/pdf/1712.01769.pdf [6] K yunghyun Cho, Bart van Merrienboer, Caglar Gulcehre, Dzmitr y Bahdanau, Fethi Bougares, Holger Schwenk, and Y oshua Bengio. 2014. Learning Phrase Representations using RNN Encoder–Decoder for Statistical Machine T ranslation. In Proceedings of the 2014 Conference on Empirical Methods in Natural Language Processing (EMNLP) . Association for Computational Linguistics, 1724–1734. https: //doi.org/10.3115/v1/D14- 1179 [7] J. S. Chung and A. Zisserman. 2016. Lip Reading in the Wild. In Asian Conference on Computer Vision . [8] F.A. Gers, J. Schmidhuber , and F. Cummins. 1999. Learning to forget: continual prediction with LSTM. IET Conference Proceedings (January 1999), 850–855(5). http://digital- library.theiet.org/content/conferences/10.1049/cp_19991218 [9] Naomi Harte and Eoin Gillen. 2015. TCD- TIMI T: An Audio- Visual Corpus of Continuous Speech. IEEE Transactions on Multimedia 17, 5 (May 2015), 603–615. [10] Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun. 2016. Identity Map- pings in De ep Residual Networks. In Computer Vision – ECCV 2016 , Bastian Leibe, Jiri Matas, Nicu Sebe, and Max W elling (Eds.). Springer International Publishing, Cham, 630–645. [11] A. K. Katsaggelos, S. Bahaadini, and R. Molina. 2015. Audio visual Fusion: Challenges and New Approaches. Proc. IEEE 103, 9 (Sept 2015), 1635–1653. https://doi.org/10.1109/JPROC.2015.2459017 [12] Jiquan Ngiam, Aditya Khosla, Mingyu Kim, Juhan Nam, Honglak Lee, and Andrew Y . Ng. 2011. Multimo dal deep learning. In Proceedings of the 28th International Conference on Machine Learning, ICML 2011 . 689–696. [13] Stavros Petridis, Themos Stafylakis, Pingchuan Ma, Feip eng Cai, Georgios Tzimiropoulos , and Maja Pantic. 2018. End-to-end Audiovisual Speech Recogni- tion. In ICASSP . http://arxiv .org/abs/1802.06424 [14] G. Potamianos, C. Neti, G. Gravier , A. Garg, and A. W . Senior . 2003. Recent advances in the automatic recognition of audiovisual spe ech. Proc. IEEE 91, 9 (Sept 2003), 1306–1326. https://doi.org/10.1109/JPROC.2003.817150 [15] Rohit Prabhavalkar , Kanishka Rao, T ara N. Sainath, Bo Li, Leif Johnson, and Navdeep Jaitly . 2017. A Comparison of Sequence-to-Se quence Models for Speech Recognition. In Proc. Interspe ech 2017 . 939–943. https://doi.org/10.21437/ Interspeech.2017- 233 [16] Shyam Sundar Rajagopalan, Louis-Philipp e Morency , Tadas Baltru ˘ saitis, and Roland Goecke. 2016. Extending Long Short-T erm Memory for Multi-View Structured Learning. In Computer Vision – ECCV 2016 (Lecture Notes in Computer Science) . Springer , Cham, 338–353. https://doi.org/10.1007/978- 3- 319- 46478- 7_21 [17] Sashank J. Reddi, Satyen Kale, and Sanjiv Kumar. 2018. On the Convergence of Adam and Beyond. In International Conference on Learning Representations . https://openreview .net/forum?id=ryQu7f- RZ [18] Jimmy Ren, Y ongtao Hu, Yu- Wing Tai, Chuan W ang, Li Xu, W enxiu Sun, and Qiong Y an. 2016. Look, Listen and Learn – A Multimo dal LSTM for Speaker Identication. In Thirtieth AAAI Conference on Articial Intelligence . https: //www.aaai.org/ocs/index.php/AAAI/AAAI16/paper/view/12386 00014. [19] Joon Son Chung, Andrew Senior , Oriol Vinyals, and Andrew Zisserman. 2017. Lip Reading Sentences in the Wild. In The IEEE Conference on Computer Vision and Pattern Recognition (CVPR) . [20] Themos Stafylakis and Georgios Tzimiropoulos. 2017. Combining Residual Networks with LSTMs for Lipreading. In Proc. Interspe ech 2017 . 3652–3656. https: //doi.org/10.21437/Interspeech.2017- 85 [21] F. Tao and C. Busso. 2018. Gating Neural Network for Large V ocabular y Au- diovisual Spe ech Recognition. IEEE/A CM Transactions on A udio, Speech, and Language Processing 26, 7 (July 2018), 1286–1298. https://doi.org/10.1109/T ASLP. 2018.2815268 [22] Michael W and and Jürgen Schmidhuber. 2017. Improving Speaker-Independent Lipreading with Domain- Adversarial Training. In Pr oc. Interspeech 2017 . 3662– 3666. https://doi.org/10.21437/Interspeech.2017- 421 [23] Amir Zadeh, Paul Pu Liang, Navonil Mazumder , Soujanya Poria, Erik Cambria, and Louis-Philippe Morency . 2018. Memory Fusion Network for Multi-view Sequential Learning. In AAAI Conference on Articial Intelligence . https://aaai. org/ocs/index.php/AAAI/AAAI18/paper/view/17341 [24] Amir Zadeh, Paul Pu Liang, Soujanya Poria, Prateek Vij, Erik Cambria, and Louis-Philippe Morency . 2018. Multi-attention Recurrent Network for Human Communication Comprehension. In AAAI Conference on Articial Intelligence . https://aaai.org/ocs/index.php/AAAI/AAAI18/paper/view/17390 A N UMERICAL RESULTS T able 2: Character Error Rate (CER) / W ord Error Rate (WER) [%] on TCD-TIMIT clean 10db 0db -5db A - WGN 19.16 / 45.53 25.58 / 53.89 34.92 / 64.98 A - Cafe 19.16 / 45.53 25.61 / 54.48 38.39 / 68.70 46.52 / 76.44 A - Street 19.16 / 45.53 24.44 / 52.46 34.56 / 64.81 41.58 / 71.06 A V Cat - WGN 20.69 / 51.88 29.02 / 63.77 39.81 / 75.55 A V Cat - Cafe 20.69 / 51.88 29.19 / 63.12 43.69 / 79/26 51.95 / 86.78 A V Cat - Street 20.69 / 51.88 26.87 / 60.61 38.95 / 74.31 47.00 / 81.06 A V Align - WGN 17.70 / 41.90 23.65 / 49.95 29.68 / 57.07 A V Align - Cafe 17.70 / 41.90 24.23 / 51.94 31.93 / 60.88 32.68 / 60.58 A V Align - Street 17.70 / 41.90 22.66 / 49.73 27.92 / 55.19 28.72 / 55.63 T able 3: Character Error Rate (CER) / W ord Error Rate (WER) [%] on LRS2 clean 10db 0db -5db A - WGN 14.29 / 29.90 20.45 / 39.17 34.95 / 57.90 A - Cafe 14.29 / 29.95 18.72 / 36.73 30.93 / 53.10 41.88 / 66.64 A - Street 14.29 / 29.95 17.11 / 34.12 28.62 / 50.19 38.51 / 62.25 A V Cat - WGN 17.40 / 37.43 24.52 / 47.61 40.96 / 68.64 A V Align - WGN 14.11 / 30.48 19.96 / 39.68 33.94 / 57.89 A V Align - Cafe 14.11 / 30.48 18.93 / 38.11 31.65 / 54.35 41.47 / 66.10 A V Align - Street 14.11 / 30.48 18.18 / 36.47 29.26 / 51.29 37.97 / 61.83

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment