Adversarial Feature-Mapping for Speech Enhancement

Feature-mapping with deep neural networks is commonly used for single-channel speech enhancement, in which a feature-mapping network directly transforms the noisy features to the corresponding enhanced ones and is trained to minimize the mean square …

Authors: Zhong Meng, Jinyu Li, Yifan Gong

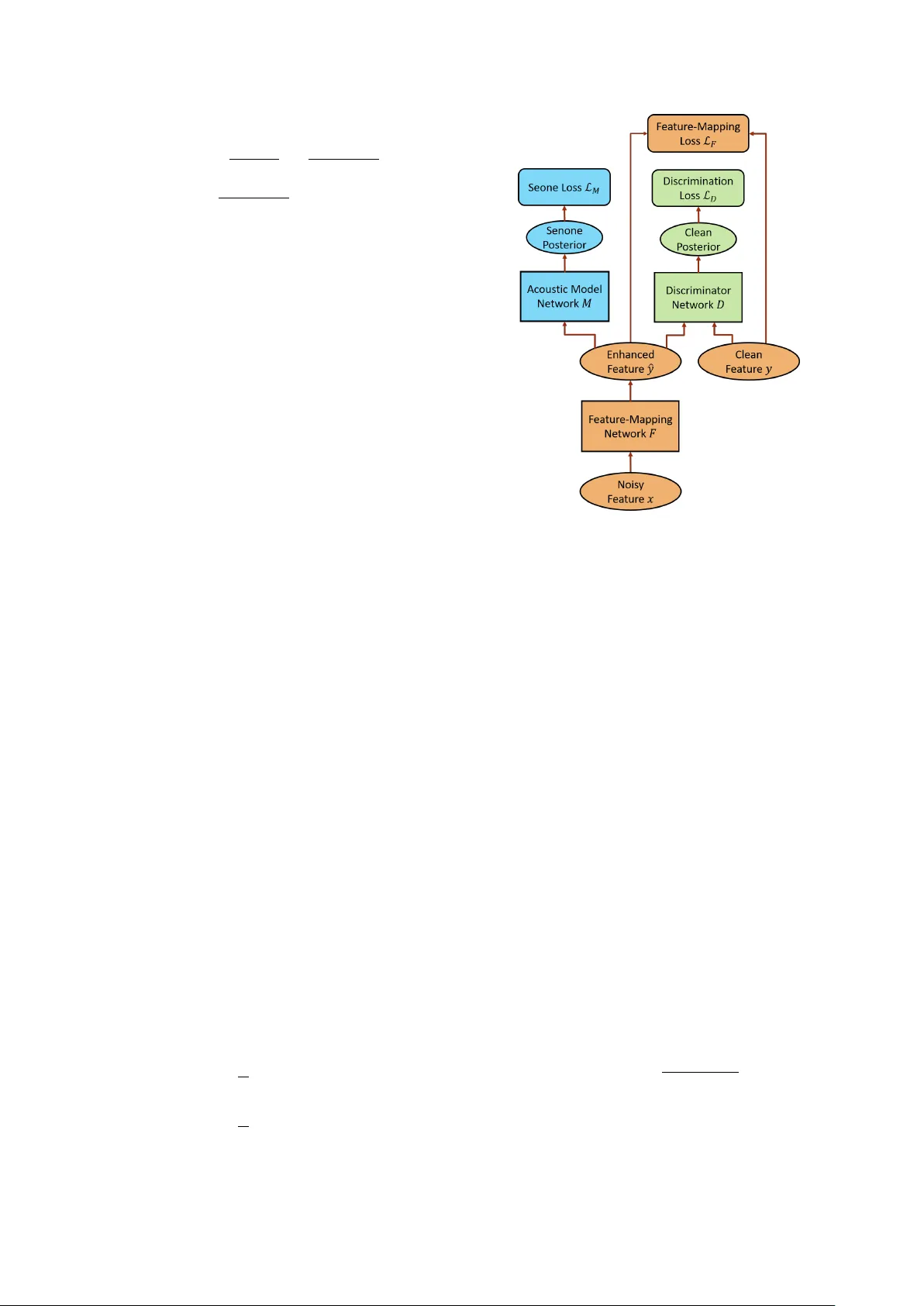

Zhong Meng¹,², Jinyu Li¹, Yifan Gong¹, Biing-Hwang (Fred) Juang²
¹ Microsoft AI and Research, Redmond, WA, USA
² Georgia Institute of Technology, Atlanta, GA, USA
zhongmeng@gatech.edu, {jinyli, yifan.gong}@microsoft.com, juang@ece.gatech.edu

Zhong Meng performed the work while he was a research intern at Microsoft AI and Research, Redmond, WA, USA.

Abstract

Feature-mapping with deep neural networks is commonly used for single-channel speech enhancement, in which a feature-mapping network directly transforms the noisy features to the corresponding enhanced ones and is trained to minimize the mean square errors between the enhanced and clean features. In this paper, we propose an adversarial feature-mapping (AFM) method for speech enhancement which advances the feature-mapping approach with adversarial learning. An additional discriminator network is introduced to distinguish the enhanced features from the real clean ones. The two networks are jointly optimized to minimize the feature-mapping loss and simultaneously mini-maximize the discrimination loss. The distribution of the enhanced features is further pushed towards that of the clean features through this adversarial multi-task training. To achieve better performance on ASR task, senone-aware (SA) AFM is further proposed in which an acoustic model network is jointly trained with the feature-mapping and discriminator networks to optimize the senone classification loss in addition to the AFM losses. Evaluated on the CHiME-3 dataset, the proposed AFM achieves 16.95% and 5.27% relative word error rate (WER) improvements over the real noisy data and the feature-mapping baseline respectively and the SA-AFM achieves 9.85% relative WER improvement over the multi-conditional acoustic model.

Index Terms: speech enhancement, paralleled data, adversarial learning, speech recognition

1. Introduction

Single-channel speech enhancement aims at attenuating the noise component of noisy speech to increase the intelligibility and perceived quality of the speech component [1]. It is commonly used to improve the quality of mobile speech communication in noisy environments and enhance the speech signal before amplification in hearing aids and cochlear implants. More importantly, speech enhancement is widely applied as a front-end pre-processing stage to improve the performance of automatic speech recognition (ASR) [2, 3, 4, 5, 6] and speaker recognition under noisy conditions [7, 8].

With the advance of deep learning, deep neural network (DNN) based approaches have achieved great success in single-channel speech enhancement. The mask learning approach [9, 10, 11] is proposed to estimate the ideal ratio mask or ideal binary mask based on noisy input features using a DNN. The mask is used to filter out the noise from the noisy speech and recover the clean speech. However, it has the presumption that the scale of the masked signal is the same as the clean target and the noise is strictly additive and removable by the masking procedure, which is generally not true for real recorded stereo data. To deal with this problem, the feature-mapping approach [12, 13, 14, 15, 16, 17] is proposed to train a feature-mapping network that directly transforms the noisy features to enhanced ones. The feature-mapping network serves as a non-linear regression function trained to minimize the feature-mapping loss, i.e., the mean square error (MSE) between the enhanced features and the paralleled clean ones.
The application of the MSE estimator is based on the homoscedasticity and no auto-correlation assumption of the noise, i.e., the noise needs to have the same variance for each noisy feature and the noise needs to be uncorrelated between different noisy features [18]. This assumption is in general violated for real speech signals (a kind of time series data) under non-stationary unknown noise.

Recently, adversarial training [19] has become a hot topic in deep learning with its great success in estimating generative models. It was first applied to image generation [20, 21], image-to-image translation [22, 23] and representation learning [24]. In the speech area, it has been applied to speech enhancement [25, 26, 27, 28], voice conversion [29, 30], acoustic model adaptation [31, 32, 33], and noise-robust [34, 35] and speaker-invariant [36, 37] ASR using the gradient reversal layer (GRL) [38]. In these works, adversarial training is used to learn a feature or an intermediate representation in a DNN that is invariant to the shift among different domains (e.g., environments, speakers, image styles, etc.). In other words, a generator network is trained to map data from different domains to features with similar distributions via adversarial learning.

Inspired by this, we advance the feature-mapping approach with adversarial learning to further diminish the discrepancy between the distributions of the clean features and the enhanced features generated by the feature-mapping network given non-stationary and auto-correlated noise at the input. We call this method adversarial feature-mapping (AFM) for speech enhancement. In AFM, an additional discriminator network is introduced to distinguish the enhanced features from the real clean ones. The feature-mapping network and the discriminator network are jointly trained to minimize the feature-mapping loss and simultaneously mini-maximize the discrimination loss with adversarial multi-task learning.
With AFM, the feature-mapping network can generate pseudo-clean features that the discriminator can hardly distinguish from real clean features. To achieve better performance on the ASR task, senone-aware adversarial feature-mapping (SA-AFM) is proposed, in which an acoustic model network is introduced and is jointly trained with the feature-mapping and discriminator networks to optimize the senone classification loss in addition to the feature-mapping and discrimination losses.

Note that AFM is different from [26] in that: (1) In AFM, the inputs to the discriminator are enhanced and clean features, while in [26] the inputs to the discriminator are the concatenation of enhanced and noisy features and the concatenation of clean and noisy features. (2) The primary task of AFM is feature-mapping, i.e., to minimize the $L_2$ distance (MSE) between enhanced and clean features, and it is advanced with adversarial learning to further reduce the discrepancy between the distributions of the enhanced and clean features, while in [26] the primary task is to generate enhanced features that are similar to clean features with a generative adversarial network (GAN), and it is regularized with the minimization of the $L_1$ distance between noisy and enhanced features. (3) AFM performs adversarial multi-task training using the GRL method as in [38], while [26] conducts conditional GAN iterative optimization as in [19]. (4) In this paper, AFM uses long short-term memory (LSTM) recurrent neural networks (RNNs) [39, 40, 41] to generate the enhanced features and a feed-forward DNN as the discriminator, while [26] uses convolutional neural networks for both.

We perform ASR experiments with features enhanced by AFM on the CHiME-3 dataset [42].
Evaluated on a clean acoustic model, AFM achieves 16.95% and 5.27% relative word error rate (WER) improvements over the noisy features and the feature-mapping baseline, respectively, and SA-AFM achieves a 9.85% relative WER improvement over the multi-conditional acoustic model.

2. Adversarial Feature-Mapping Speech Enhancement

With the feature-mapping approach for speech enhancement, we are given a sequence of noisy speech features $X = \{x_1, \ldots, x_T\}$ and a sequence of clean speech features $Y = \{y_1, \ldots, y_T\}$ as the training data. $X$ and $Y$ are parallel to each other, i.e., each pair of $x_i$ and $y_i$ is frame-by-frame synchronized. The goal of speech enhancement is to learn a non-linear feature-mapping network $F$ with parameters $\theta_f$ that transforms $X$ to a sequence of enhanced features $\hat{Y} = \{\hat{y}_1, \ldots, \hat{y}_T\}$ such that the distribution of $\hat{Y}$ is as close to that of $Y$ as possible:

$$\hat{y}_i = F(x_i), \quad i = 1, \ldots, T \tag{1}$$

$$P_{\hat{Y}}(\hat{y}) \rightarrow P_Y(y). \tag{2}$$

To achieve that, we minimize the noisy-to-clean feature-mapping loss $\mathcal{L}_F(\theta_f)$, which is commonly defined as the MSE between $\hat{Y}$ and $Y$ as follows:

$$\mathcal{L}_F(\theta_f) = \frac{1}{T} \sum_{i=1}^{T} (\hat{y}_i - y_i)^2 = \frac{1}{T} \sum_{i=1}^{T} \left[ F(x_i) - y_i \right]^2. \tag{3}$$

However, the MSE that the feature-mapping approach minimizes is based on the homoscedasticity and no auto-correlation assumption of the noise, i.e., the noise has the same variance for each noisy feature and the noise is uncorrelated between different noisy features. This assumption is in general invalid for real speech signals (time series data) under non-stationary unknown noise. To address this problem, we further advance the feature-mapping network with an additional discriminator network and perform adversarial multi-task training to further reduce the discrepancy between the distribution of the enhanced features and that of the clean ones when non-stationary and auto-correlated noise is present at the input.

As shown in Fig. 1, the discriminator network $D$ with parameters $\theta_d$ takes enhanced features $\hat{Y}$ and clean features $Y$ as the input and outputs the posterior probability that an input feature belongs to the clean set, i.e.,

$$P(y_i \in \mathbb{C}) = D(y_i) \tag{4}$$

$$P(\hat{y}_i \in \mathbb{E}) = 1 - D(\hat{y}_i) \tag{5}$$

Figure 1: The framework of AFM for speech enhancement.

where $\mathbb{C}$ and $\mathbb{E}$ denote the sets of clean and enhanced features, respectively. The discrimination loss $\mathcal{L}_D(\theta_f, \theta_d)$ for $D$ is formulated below using cross-entropy, i.e., as the negative log-likelihood of the correct clean/enhanced decisions, which is the sign convention that the minimization over $\theta_d$ and the update rules in this section assume:

$$\mathcal{L}_D(\theta_f, \theta_d) = -\frac{1}{T} \sum_{i=1}^{T} \left[ \log P(y_i \in \mathbb{C}) + \log P(\hat{y}_i \in \mathbb{E}) \right] = -\frac{1}{T} \sum_{i=1}^{T} \left\{ \log D(y_i) + \log \left[ 1 - D(F(x_i)) \right] \right\}. \tag{6}$$

To make the distribution of the enhanced features $\hat{Y}$ similar to that of the clean ones $Y$, we perform adversarial training of $F$ and $D$, i.e., we minimize $\mathcal{L}_D(\theta_f, \theta_d)$ with respect to $\theta_d$ and maximize $\mathcal{L}_D(\theta_f, \theta_d)$ with respect to $\theta_f$. This minimax competition will first increase the generation capability of $F$ and the discrimination capability of $D$, and will eventually converge to the point where $F$ generates enhanced features so confusing that $D$ is unable to tell whether a given feature is clean or not.

The total loss of AFM, $\mathcal{L}_{\text{AFM}}(\theta_f, \theta_d)$, is formulated as the weighted sum of the feature-mapping loss and the discrimination loss below:

$$\mathcal{L}_{\text{AFM}}(\theta_f, \theta_d) = \mathcal{L}_F(\theta_f) - \lambda \mathcal{L}_D(\theta_f, \theta_d) \tag{7}$$

where $\lambda > 0$ is the gradient reversal coefficient that controls the trade-off between the feature-mapping loss and the discrimination loss in Eq. (3) and Eq. (6), respectively.
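The three losses in Eq. (3), Eq. (6) and Eq. (7) can be evaluated directly once the network outputs are available. The following is a minimal sketch, not the authors' implementation: it assumes frames are plain lists of floats and that the discriminator outputs $D(y_i)$ and $D(F(x_i))$ are already computed, and it takes $\mathcal{L}_D$ as the cross-entropy (negative log-likelihood) that $D$ minimizes. The function names and the `eps` guard are illustrative choices.

```python
import math

def feature_mapping_loss(enhanced, clean):
    """Eq. (3): squared error between enhanced and clean frames, averaged over T frames."""
    total = 0.0
    for y_hat, y in zip(enhanced, clean):
        total += sum((a - b) ** 2 for a, b in zip(y_hat, y))
    return total / len(clean)

def discrimination_loss(d_clean, d_enhanced, eps=1e-12):
    """Eq. (6) as a cross-entropy.

    d_clean[i] = D(y_i) and d_enhanced[i] = D(F(x_i)) are probabilities in (0, 1).
    eps is an assumed numerical guard against log(0), not part of the paper.
    """
    total = 0.0
    for p_c, p_e in zip(d_clean, d_enhanced):
        total += math.log(p_c + eps) + math.log(1.0 - p_e + eps)
    return -total / len(d_clean)

def afm_loss(enhanced, clean, d_clean, d_enhanced, lam):
    """Eq. (7): L_F - lambda * L_D."""
    l_f = feature_mapping_loss(enhanced, clean)
    l_d = discrimination_loss(d_clean, d_enhanced)
    return l_f - lam * l_d
```

When $D$ is maximally confused, i.e., it outputs 0.5 for every frame, `discrimination_loss` evaluates to $2 \ln 2$, which is the value the adversarial training of $F$ pushes towards.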
$F$ and $D$ are jointly trained to optimize the total loss through adversarial multi-task learning as follows:

$$\hat{\theta}_f = \arg\min_{\theta_f} \mathcal{L}_{\text{AFM}}(\theta_f, \hat{\theta}_d) \tag{8}$$

$$\hat{\theta}_d = \arg\max_{\theta_d} \mathcal{L}_{\text{AFM}}(\hat{\theta}_f, \theta_d) \tag{9}$$

where $\hat{\theta}_f$ and $\hat{\theta}_d$ are the optimal parameters for $F$ and $D$, respectively, and are updated as follows via back-propagation through time (BPTT) with stochastic gradient descent (SGD):

$$\theta_f \leftarrow \theta_f - \mu \left[ \frac{\partial \mathcal{L}_F(\theta_f)}{\partial \theta_f} - \lambda \frac{\partial \mathcal{L}_D(\theta_f, \theta_d)}{\partial \theta_f} \right] \tag{10}$$

$$\theta_d \leftarrow \theta_d - \mu \frac{\partial \mathcal{L}_D(\theta_f, \theta_d)}{\partial \theta_d} \tag{11}$$

where $\mu$ is the learning rate.

Note that the negative coefficient $-\lambda$ in Eq. (10) induces a reversed gradient that maximizes $\mathcal{L}_D(\theta_f, \theta_d)$ in Eq. (6) and makes the enhanced features similar to the real clean ones. Without the reversed gradient, SGD would make the enhanced features different from the clean ones in order to minimize Eq. (6).

For easy implementation, a gradient reversal layer is introduced in [38], which acts as an identity transform in the forward propagation and multiplies the gradient by $-\lambda$ during the backward propagation. During testing, only the optimized feature-mapping network $F$ is used to generate the enhanced features given the noisy test features.

3. Senone-Aware Adversarial Feature-Mapping Enhancement

For AFM speech enhancement, we only need parallel clean and noisy speech for training and we do not need any information about the content of the speech, i.e., the transcription. With the goal of improving the intelligibility and perceived quality of the speech, AFM can be used in a broad range of applications including ASR, mobile communication, hearing aids, cochlear implants, etc. However, for the most important ASR task, AFM does not necessarily lead to the best WER performance because its feature-mapping and discrimination objectives are not directly related to the classification of speech units (i.e., words, phonemes, senones, etc.).
In fact, with AFM, some decision boundaries among speech units may be distorted in searching for an optimal separation between speech and noise.

To compensate for this mismatch, we incorporate a DNN acoustic model into the AFM framework and propose the senone-aware adversarial feature-mapping (SA-AFM), in which the acoustic model network $M$, the feature-mapping network $F$ and the discriminator network $D$ are trained to jointly optimize the primary task of feature-mapping, the secondary task of clean/enhanced data discrimination and the third task of senone classification in an adversarial fashion. The transcription of the parallel clean and noisy training utterances is required for SA-AFM speech enhancement.

Specifically, as shown in Fig. 2, the acoustic model network $M$ with parameters $\theta_m$ takes in the enhanced features $\hat{Y}$ as the input and predicts the senone posteriors $P(q \mid \hat{y}_i; \theta_m)$, $q \in \mathcal{Q}$, as follows:

$$M(\hat{y}_i) = P(q \mid \hat{y}_i; \theta_m) \tag{12}$$

After the integration with the feature-mapping network $F$, we have

$$M(F(x_i)) = P(q \mid x_i; \theta_f, \theta_m). \tag{13}$$

We want to make the enhanced features $\hat{Y}$ senone-discriminative by minimizing the cross-entropy loss between the predicted senone posteriors and the senone labels as follows:

$$\mathcal{L}_M(\theta_f, \theta_m) = -\frac{1}{T} \sum_{i=1}^{T} \log P(s_i \mid x_i; \theta_f, \theta_m) = -\frac{1}{T} \sum_{i=1}^{T} \log M(F(x_i)) \tag{14}$$

Figure 2: The framework of SA-AFM for speech enhancement.

where $S = \{s_1, \ldots, s_T\}$ is a sequence of senone labels aligned with the noisy data $X$ and the enhanced data $\hat{Y}$.

Simultaneously, we minimize the feature-mapping loss $\mathcal{L}_F(\theta_f)$ defined in Eq. (3) with respect to $F$ and perform adversarial training of $F$ and $D$, i.e., we minimize $\mathcal{L}_D(\theta_f, \theta_d)$ defined in Eq. (6) with respect to $\theta_d$ and maximize $\mathcal{L}_D(\theta_f, \theta_d)$ with respect to $\theta_f$, to make the distribution of the enhanced features $\hat{Y}$ similar to that of the clean ones $Y$.
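The adversarial updates of $F$ and $D$ in Eq. (10) and Eq. (11), which SA-AFM reuses, can be illustrated on a toy problem. The sketch below is not the authors' LSTM setup: it assumes a scalar linear mapping $F(x) = w x$ and a logistic discriminator $D(y) = \sigma(a y + b)$, uses hand-derived gradients instead of BPTT, and takes $\mathcal{L}_D$ as the cross-entropy that $D$ minimizes. The names `afm_sgd_step`, `w`, `a` and `b` are hypothetical.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def afm_sgd_step(w, a, b, noisy, clean, mu, lam):
    """One simultaneous SGD step of Eq. (10) and Eq. (11) on a toy scalar model.

    Assumed toy model (not from the paper): F(x) = w * x, D(y) = sigmoid(a * y + b).
    """
    n = len(noisy)
    grad_f_w = 0.0  # dL_F/dw
    grad_d_w = 0.0  # dL_D/dw (reaches F only through the enhanced features)
    grad_d_a = 0.0  # dL_D/da
    grad_d_b = 0.0  # dL_D/db
    for x, y in zip(noisy, clean):
        y_hat = w * x
        p_clean = sigmoid(a * y + b)    # D(y_i)
        p_enh = sigmoid(a * y_hat + b)  # D(F(x_i))
        grad_f_w += 2.0 * (y_hat - y) * x / n
        # L_D = -mean[log D(y) + log(1 - D(F(x)))]
        grad_d_w += p_enh * a * x / n
        grad_d_a += ((p_clean - 1.0) * y + p_enh * y_hat) / n
        grad_d_b += ((p_clean - 1.0) + p_enh) / n
    # Eq. (10): the -lam factor reverses the discriminator gradient for F.
    new_w = w - mu * (grad_f_w - lam * grad_d_w)
    # Eq. (11): plain gradient descent on L_D for D.
    new_a = a - mu * grad_d_a
    new_b = b - mu * grad_d_b
    return new_w, new_a, new_b
```

With `lam = 0` the step on `w` reduces to ordinary MSE regression; a positive `lam` adds a push towards outputs that the discriminator scores as clean, while `a` and `b` are still updated to tell the two sets apart.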
The total loss of SA-AFM, $\mathcal{L}_{\text{SA-AFM}}(\theta_f, \theta_d, \theta_m)$, is formulated as the weighted sum of $\mathcal{L}_F(\theta_f)$, $\mathcal{L}_D(\theta_f, \theta_d)$ and the senone classification loss $\mathcal{L}_M(\theta_f, \theta_m)$ as follows:

$$\mathcal{L}_{\text{SA-AFM}}(\theta_f, \theta_d, \theta_m) = \mathcal{L}_F(\theta_f) - \lambda_1 \mathcal{L}_D(\theta_f, \theta_d) + \lambda_2 \mathcal{L}_M(\theta_f, \theta_m) \tag{15}$$

where $\lambda_1 > 0$ is the gradient reversal coefficient that controls the trade-off between $\mathcal{L}_F(\theta_f)$ and $\mathcal{L}_D(\theta_f, \theta_d)$, and $\lambda_2 > 0$ is the weight for $\mathcal{L}_M(\theta_f, \theta_m)$.

$F$, $D$ and $M$ are jointly trained to optimize the total loss through adversarial multi-task learning as follows:

$$(\hat{\theta}_f, \hat{\theta}_m) = \arg\min_{\theta_f, \theta_m} \mathcal{L}_{\text{SA-AFM}}(\theta_f, \hat{\theta}_d, \theta_m) \tag{16}$$

$$\hat{\theta}_d = \arg\max_{\theta_d} \mathcal{L}_{\text{SA-AFM}}(\hat{\theta}_f, \theta_d, \hat{\theta}_m) \tag{17}$$

where $\hat{\theta}_f$, $\hat{\theta}_d$ and $\hat{\theta}_m$ are the optimal parameters for $F$, $D$ and $M$, respectively, and are updated via BPTT with SGD as in Eq. (10), Eq. (11) and Eq. (18) below:

$$\theta_m \leftarrow \theta_m - \mu \frac{\partial \mathcal{L}_M(\theta_f, \theta_m)}{\partial \theta_m}. \tag{18}$$

During decoding, only the optimized feature-mapping network $F$ and acoustic model network $M$ are used to take in the noisy test features and generate the acoustic scores.

4. Experiments

In the experiments, we train the feature-mapping network $F$ with the parallel clean and noisy training utterances in the CHiME-3 dataset [42] using different methods. The real far-field noisy speech from the 5th microphone channel in the CHiME-3 development data set is used for testing. We use a pre-trained clean DNN acoustic model to evaluate the ASR WER performance of the test features enhanced by $F$. The standard WSJ 3-gram language model with a 5K-word lexicon is used in our experiments.

4.1. Feedforward DNN Acoustic Model

To evaluate the ASR performance of the features enhanced by AFM, we first train a feedforward DNN-hidden Markov model (HMM) acoustic model using 8738 clean training utterances in CHiME-3 with the cross-entropy criterion. The 29-dimensional log Mel filterbank (LFB) features together with 1st and 2nd order delta features (87 dimensions in total) are extracted.
Each feature frame is spliced together with 5 left and 5 right context frames to form a 957-dimensional feature. The spliced features are fed as the input of the feed-forward DNN after global mean and variance normalization. The DNN has 7 hidden layers with 2048 hidden units for each layer. The output layer of the DNN has 3012 output units corresponding to 3012 senone labels. Senone-level forced alignment of the clean data is generated using a Gaussian mixture model-HMM system. A WER of 29.44% is achieved when evaluating the clean DNN acoustic model on the test data.

4.2. Adversarial Feature-Mapping Speech Enhancement

We use parallel data consisting of 8738 pairs of noisy and clean utterances in CHiME-3 as the training data. The 29-dimensional LFB features are extracted for the training data. For the noisy data, the 29-dimensional LFB features are appended with 1st and 2nd order delta features to form 87-dimensional feature vectors. $F$ is an LSTM-RNN with 2 hidden layers and 512 units for each hidden layer. A 256-dimensional projection layer is inserted on top of each hidden layer to reduce the number of parameters. $F$ has 87 input units and 29 output units. The features are globally mean and variance normalized before being fed into $F$. The discriminator $D$ is a feedforward DNN with 2 hidden layers and 512 units in each hidden layer. $D$ has 29 input units and one output unit.

We first train $F$ with 87-dimensional LFB features as the input and 29-dimensional LFB features as the target to minimize the feature-mapping loss $\mathcal{L}_F(\theta_f)$ in Eq. (3). This serves as the feature-mapping baseline. Evaluated on the clean DNN acoustic model trained in Section 4.1, the feature-mapping enhanced features achieve 25.81% WER, which is a 12.33% relative improvement over the noisy features. Then we jointly train $F$ and $D$ to optimize $\mathcal{L}_{\text{AFM}}(\theta_f, \theta_d)$ as in Eq. (7) using the same input features and targets.
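Two of the figures quoted in Sections 4.1 and 4.2 follow from simple arithmetic: splicing an 87-dimensional frame with 5 left and 5 right context frames gives 87 × 11 = 957 dimensions, and going from 29.44% to 25.81% WER is a 12.33% relative improvement. The sketch below checks both. It is illustrative only: the function names are hypothetical, and padding utterance edges by repeating the first or last frame is an assumption, since the paper does not say how edges are handled.

```python
def splice(frames, left=5, right=5):
    """Concatenate each frame with its left and right context frames.

    Edge frames are padded by repeating the first/last frame; this padding
    rule is an assumption, not something stated in the paper.
    """
    last = len(frames) - 1
    spliced = []
    for t in range(len(frames)):
        window = []
        for k in range(t - left, t + right + 1):
            window.extend(frames[min(max(k, 0), last)])
        spliced.append(window)
    return spliced

def relative_improvement(baseline_wer, new_wer):
    """Relative WER reduction, in percent, of new_wer with respect to baseline_wer."""
    return 100.0 * (baseline_wer - new_wer) / baseline_wer

# A dummy 20-frame utterance of 87-dimensional features.
utterance = [[float(t)] * 87 for t in range(20)]
spliced = splice(utterance)
print(len(spliced), len(spliced[0]))
print(round(relative_improvement(29.44, 25.81), 2))
```

The same `relative_improvement` helper can be applied to any pair of averages in the result tables to reproduce the relative gains reported in the text.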
The gradient reversal coefficient $\lambda$ is fixed at 60 and the learning rate is $5 \times 10^{-7}$ with a momentum of 0.5 in the experiments. As shown in Table 1, AFM enhanced features achieve 24.45% WER, which is 16.95% and 5.27% relative improvements over the noisy features and the feature-mapping baseline, respectively.

| Test Data | BUS | CAF | PED | STR | Avg. |
|---|---|---|---|---|---|
| Noisy | 36.25 | 31.78 | 22.76 | 27.18 | 29.44 |
| FM | 31.35 | 28.64 | 19.80 | 23.61 | 25.81 |
| AFM | 30.97 | 26.09 | 18.40 | 22.53 | 24.45 |

Table 1: The ASR WER (%) performance of the real noisy dev set in CHiME-3 enhanced by different methods, evaluated on a clean DNN acoustic model. FM represents feature-mapping.

4.3. Senone-Aware Adversarial Feature-Mapping Speech Enhancement

The SA-AFM experiment is conducted on top of the AFM system described in Section 4.2. In addition to the LSTM $F$ and feedforward DNN $D$, we train a multi-conditional LSTM acoustic model $M$ using both the 8738 clean and 8738 noisy training utterances in the CHiME-3 dataset. The LSTM $M$ has 4 hidden layers with 1024 units in each layer. A 512-dimensional projection layer is inserted on top of each hidden layer to reduce the number of parameters. The output layer has 3012 output units predicting senone posteriors. The senone-level forced alignment of the training data is generated using a GMM-HMM system. As shown in Table 2, the multi-conditional acoustic model achieves 19.28% WER on the CHiME-3 simulated dev set.

| System | BUS | CAF | PED | STR | Avg. |
|---|---|---|---|---|---|
| Multi-Condition | 18.44 | 23.37 | 16.81 | 18.50 | 19.28 |
| SA-FM | 18.19 | 22.29 | 15.31 | 18.26 | 18.51 |
| SA-AFM | 17.02 | 21.01 | 14.41 | 17.13 | 17.38 |

Table 2: The ASR WER (%) performance of the simulated noisy dev set in CHiME-3 using the multi-conditional acoustic model and different enhancement methods.

Then we perform senone-aware feature-mapping (SA-FM) by jointly training $F$ and $M$ to optimize the feature-mapping loss and the senone classification loss, in which $M$ takes the enhanced LFB features generated by $F$ as the input to predict the senone posteriors.
The SA-FM achieves 18.51% WER on the same testing data. Finally, SA-AFM is performed as described in Section 3 and it achieves 17.38% WER, which is 9.85% and 6.10% relative improvements over the multi-conditional acoustic model and the SA-FM baseline, respectively.

5. Conclusions

In this paper, we advance the feature-mapping approach with adversarial learning by proposing the AFM method for speech enhancement. In AFM, we have a feature-mapping network $F$ that transforms the noisy speech features to clean ones with parallel noisy and clean training data and a discriminator $D$ that distinguishes the enhanced features from the clean ones. $F$ and $D$ are jointly trained to minimize the feature-mapping loss (i.e., MSE) and simultaneously mini-maximize the discrimination loss. On top of feature-mapping, AFM pushes the distribution of the enhanced features further towards that of the clean features with adversarial multi-task learning. To achieve better performance on the ASR task, SA-AFM is further proposed to optimize the senone classification loss in addition to the AFM losses.

We perform ASR experiments with features enhanced by AFM on the CHiME-3 dataset. AFM achieves 16.95% and 5.27% relative WER improvements over the noisy features and the feature-mapping baseline when evaluated on a clean DNN acoustic model. Furthermore, the proposed SA-AFM achieves 9.85% relative WER improvement over the multi-conditional acoustic model.

As we show in [43], teacher-student (T/S) learning [44] is better for robust model adaptation without the need of transcription. We are now working on the combination of AFM with T/S learning to further improve the ASR model performance.

6. References

[1] P. C. Loizou, Speech Enhancement: Theory and Practice. CRC Press, 2013.
[2] G. Hinton, L. Deng, D. Yu et al., "Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups," IEEE Signal Processing Magazine, vol. 29, no. 6, pp. 82–97, 2012.
[3] N. Jaitly, P. Nguyen, A. Senior, and V. Vanhoucke, "Application of pretrained deep neural networks to large vocabulary speech recognition," in Proc. Interspeech, 2012.
[4] T. Sainath, B. Kingsbury, B. Ramabhadran et al., "Making deep belief networks effective for large vocabulary continuous speech recognition," in Proc. ASRU, 2011, pp. 30–35.
[5] L. Deng, J. Li, J.-T. Huang et al., "Recent advances in deep learning for speech research at Microsoft," in ICASSP. IEEE, 2013.
[6] D. Yu and J. Li, "Recent progresses in deep learning based acoustic models," IEEE/CAA Journal of Automatica Sinica, vol. 4, no. 3, pp. 396–409, 2017.
[7] J. Li, L. Deng, Y. Gong, and R. Haeb-Umbach, "An overview of noise-robust automatic speech recognition," IEEE/ACM Trans. ASLP, vol. 22, no. 4, pp. 745–777, April 2014.
[8] J. Li, L. Deng, R. Haeb-Umbach, and Y. Gong, Robust Automatic Speech Recognition: A Bridge to Practical Applications. Academic Press, 2015.
[9] A. Narayanan and D. Wang, "Ideal ratio mask estimation using deep neural networks for robust speech recognition," in ICASSP. IEEE, 2013.
[10] Y. Wang, A. Narayanan, and D. Wang, "On training targets for supervised speech separation," IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 22, no. 12, pp. 1849–1858, Dec 2014.
[11] F. Weninger, H. Erdogan, S. Watanabe et al., "Speech enhancement with LSTM recurrent neural networks and its application to noise-robust ASR," in International Conference on Latent Variable Analysis and Signal Separation, 2015.
[12] Y. Xu, J. Du, L. R. Dai, and C. H. Lee, "A regression approach to speech enhancement based on deep neural networks," IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 23, no. 1, pp. 7–19, Jan 2015.
[13] X. Lu, Y. Tsao, S. Matsuda, and C. Hori, "Speech enhancement based on deep denoising autoencoder," in Proc. Interspeech, 2013.
[14] A. L. Maas, Q. V. Le et al., "Recurrent neural networks for noise reduction in robust ASR," in Proc. Interspeech, 2012.
[15] X. Feng, Y. Zhang, and J. Glass, "Speech feature denoising and dereverberation via deep autoencoders for noisy reverberant speech recognition," in Proc. ICASSP, 2014, pp. 1759–1763.
[16] F. Weninger, F. Eyben, and B. Schuller, "Single-channel speech separation with memory-enhanced recurrent neural networks," in Proc. ICASSP. IEEE, 2014, pp. 3709–3713.
[17] Z. Chen, Y. Huang, J. Li, and Y. Gong, "Improving mask learning based speech enhancement system with restoration layers and residual connection," in Proc. Interspeech, 2017.
[18] D. A. Freedman, Statistical Models: Theory and Practice. Cambridge University Press, 2009.
[19] I. Goodfellow, J. Pouget-Abadie et al., "Generative adversarial nets," in NIPS, 2014.
[20] A. Radford, L. Metz, and S. Chintala, "Unsupervised representation learning with deep convolutional generative adversarial networks," CoRR, vol. abs/1511.06434, 2015.
[21] E. Denton, S. Chintala, A. Szlam, and R. Fergus, "Deep generative image models using a Laplacian pyramid of adversarial networks," in Proceedings of the 28th International Conference on Neural Information Processing Systems - Volume 1, ser. NIPS'15. MIT Press, 2015, pp. 1486–1494.
[22] P. Isola, J.-Y. Zhu, T. Zhou, and A. A. Efros, "Image-to-image translation with conditional adversarial networks," CVPR, 2017.
[23] J.-Y. Zhu, T. Park, P. Isola, and A. A. Efros, "Unpaired image-to-image translation using cycle-consistent adversarial networks," in ICCV, 2017.
[24] X. Chen, Y. Duan et al., "InfoGAN: Interpretable representation learning by information maximizing generative adversarial nets," in NIPS, 2016.
[25] S. Pascual, A. Bonafonte, and J. Serrà, "SEGAN: Speech enhancement generative adversarial network," in Interspeech, 2017.
[26] C. Donahue, B. Li, and R. Prabhavalkar, "Exploring speech enhancement with generative adversarial networks for robust speech recognition," arXiv preprint arXiv:1711.05747, 2017.
[27] M. Mimura, S. Sakai, and T. Kawahara, "Cross-domain speech recognition using nonparallel corpora with cycle-consistent adversarial networks," in Proc. ASRU, Dec 2017, pp. 134–140.
[28] Z. Meng, J. Li, Y. Gong, and B.-H. F. Juang, "Cycle-consistent speech enhancement," in Proc. Interspeech, 2018.
[29] T. Kaneko and H. Kameoka, "Parallel-data-free voice conversion using cycle-consistent adversarial networks," arXiv preprint arXiv:1711.11293, 2017.
[30] C.-C. Hsu, H.-T. Hwang, Y.-C. Wu, Y. Tsao, and H.-M. Wang, "Voice conversion from unaligned corpora using variational autoencoding Wasserstein generative adversarial networks," arXiv preprint arXiv:1704.00849, 2017.
[31] S. Sun, B. Zhang, L. Xie et al., "An unsupervised deep domain adaptation approach for robust speech recognition," Neurocomputing.
[32] Z. Meng, Z. Chen, V. Mazalov, J. Li, and Y. Gong, "Unsupervised adaptation with domain separation networks for robust speech recognition," in Proceeding of ASRU, Dec 2017.
[33] Z. Meng, J. Li, Y. Gong, and B.-H. F. Juang, "Adversarial teacher-student learning for unsupervised domain adaptation," in Proc. ICASSP. IEEE, 2018.
[34] Y. Shinohara, "Adversarial multi-task learning of deep neural networks for robust speech recognition," in Interspeech, 2016, pp. 2369–2372.
[35] D. Serdyuk, K. Audhkhasi, P. Brakel, B. Ramabhadran et al., "Invariant representations for noisy speech recognition," in NIPS Workshop, 2016.
[36] G. Saon, G. Kurata, T. Sercu et al., "English conversational telephone speech recognition by humans and machines," Proc. Interspeech, 2017.
[37] Z. Meng, J. Li, Z. Chen et al., "Speaker-invariant training via adversarial learning," in Proc. ICASSP, 2018.
[38] Y. Ganin and V. Lempitsky, "Unsupervised domain adaptation by backpropagation," in ICML, 2015.
[39] H. Sak, A. Senior, and F. Beaufays, "Long short-term memory recurrent neural network architectures for large scale acoustic modeling," in Interspeech, 2014.
[40] Z. Meng, S. Watanabe, J. R. Hershey, and H. Erdogan, "Deep long short-term memory adaptive beamforming networks for multichannel robust speech recognition," in ICASSP, 2017.
[41] H. Erdogan, T. Hayashi, J. R. Hershey et al., "Multi-channel speech recognition: LSTMs all the way through," in CHiME-4 workshop, 2016, pp. 1–4.
[42] J. Barker, R. Marxer, E. Vincent, and S. Watanabe, "The third CHiME speech separation and recognition challenge: Dataset, task and baselines," in Proc. ASRU, 2015, pp. 504–511.
[43] J. Li, M. L. Seltzer, X. Wang et al., "Large-scale domain adaptation via teacher-student learning," in Proc. Interspeech, 2017.
[44] J. Li, R. Zhao, J.-T. Huang, and Y. Gong, "Learning small-size DNN with output-distribution-based criteria," in Proc. Interspeech, 2014, pp. 1910–1914.
