Attention-based Transfer Learning for Brain-computer Interface

Different functional areas of the human brain play different roles in brain activity, a fact that has received insufficient research attention in the brain-computer interface (BCI) field. This paper presents a new approach for electroencephalography (EE…

Authors: Chuanqi Tan, Fuchun Sun, Tao Kong

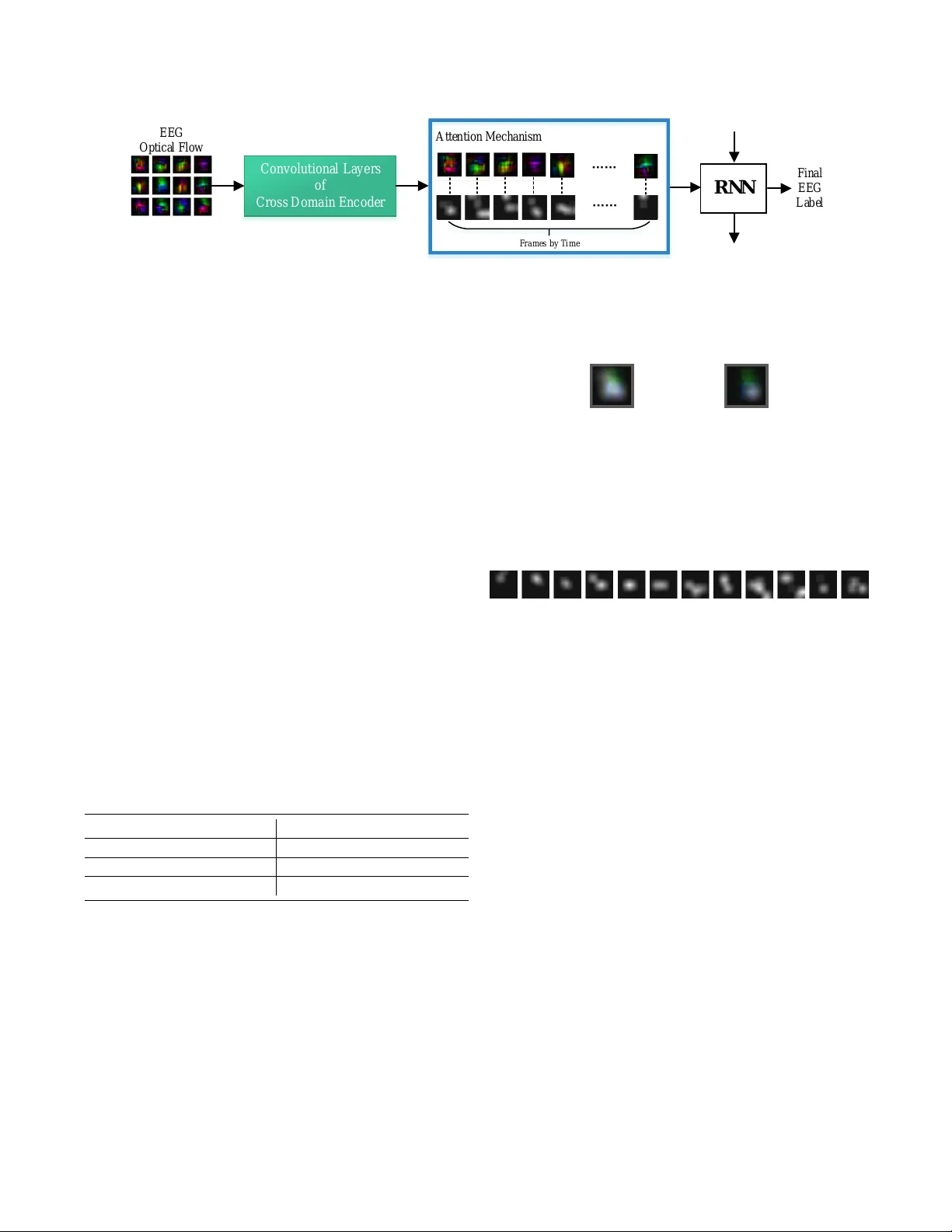

Chuanqi Tan, Fuchun Sun, Tao Kong, Bin Fang, Wenchang Zhang

State Key Laboratory of Intelligent Technology and Systems, Tsinghua National Laboratory for Information Science and Technology (TNList), Department of Computer Science and Technology, Tsinghua University

{tcq15@mails, fcsun@mail, kt14@mails, fangbin@mail, zhangwc14@mails}.tsinghua.edu.cn

ABSTRACT

Different functional areas of the human brain play different roles in brain activity, a fact that has received insufficient research attention in the brain-computer interface (BCI) field. This paper presents a new approach for electroencephalography (EEG) classification that applies attention-based transfer learning. Our approach considers the importance of different brain functional areas to improve the accuracy of EEG classification, and provides an additional way to automatically identify brain functional areas associated with new activities without the involvement of a medical professional. We demonstrate empirically that our approach outperforms state-of-the-art approaches in the task of EEG classification, and the visualization results indicate that our approach can detect brain functional areas related to a certain task.

Index Terms: Attention Mechanism, Brain-computer Interface, Transfer Learning, Adversarial Network

1. INTRODUCTION

Brain-computer interface (BCI) based systems can read the brain information of the subject and decode it into instructions for controlling an external device, thereby interacting more naturally with the user. The key issue in BCI-based systems is the accuracy of electroencephalography (EEG) classification. One of the most important problems in EEG classification methods is that the relationship between the functional areas of the human brain and specific activities is not effectively utilized.
This problem makes it difficult to find key electrodes with higher signal-to-noise ratios, and therefore difficult to obtain an effective EEG classifier. Medical research has shown that the functional areas of the human brain have strong regional correlation with specific activities. In the past, designing a BCI-based system required medical experts to specify the key electrodes involved in a particular activity. For a new activity, it is difficult to construct a usable system without the help of medical experts, which severely limits the applicability of BCI-based systems. It would be meaningful to have a non-medical approach that automatically discovers activity-related functional areas from brain signals. In addition, another key issue is the lack of training data. Because the cost of biosignal acquisition and labeling is extremely high, it is almost impossible to construct a large, high-quality EEG signal dataset. It is difficult to train advanced classifiers without sufficient training samples.

Fig. 1. Overview of our approach. We applied ImageNet as the source domain and EEG optical flow as the target domain to an attention-based transfer learning framework. In addition to the EEG label, it produces an extra attention map that reflects the activity of the human brain.

This work is jointly supported by the National Natural Science Foundation of China under Grants No. 91848206, 61621136008, and U1613212.

To solve these problems, we propose an attention-based transfer learning framework that includes two main components: a cross domain encoder and an attention-based decoder with a recurrent neural network (RNN). An overview of our approach is shown in Figure 1. The cross domain encoder transfers knowledge from the natural image domain by representing the original EEG signal in a new form, EEG optical flow. It uses a large amount of training data in the source domain (image classification) to help train the complex feature extractor in the target domain (EEG classification), which addresses the lack of training data through adversarial transfer learning. The feature extractor is transferred as knowledge to the target domain. The attention-based decoder uses the attention mechanism to automatically discover the weights of the brain functional areas, which effectively improves the accuracy of EEG classification. This mechanism can reflect the brain functional areas related to a specific activity and overcomes the reliance on medical experts when dealing with a new activity.

The main contributions of this paper are as follows: (1) We introduce attention-based transfer learning to the EEG classification task. (2) Our approach provides a novel way to automatically discover brain functional areas associated with new activities, reducing reliance on medical experts. (3) Experiments show that our approach outperforms the state-of-the-art approaches in an EEG classification task and verify the usability of our approach.

2. RELATED WORK

Many works have been conducted to improve EEG classification accuracy, and a great variety of hand-designed features have been proposed. With the rapid development of deep learning in recent years, researchers have presented many excellent networks, and many published works have discussed deep learning applications in bioinformatics research [1].

Transfer learning [2] and deep transfer learning [3] enable the use of different domains, tasks, and distributions for training and testing. [4] reviewed the current state-of-the-art transfer learning approaches in BCI. [5] proposed a novel EEG representation that reduces the EEG classification problem to an image classification problem, which makes transfer learning applicable. [6] transferred general features via a convolutional network across subjects and experiments. [7] evaluated the transferability between subjects by calculating distance and transferred knowledge in comparable feature spaces to improve accuracy. [8] designed a deep transfer learning framework that is suitable for transferring knowledge by joint training.

[9] and [10] discussed whether the human visual system has an attention mechanism. [11] reviewed recent works on attention-based RNNs and their applications in computer vision, and categorized the approaches into four classes: item-wise soft attention, item-wise hard attention, location-wise hard attention, and location-wise soft attention. [12] applied the visual attention mechanism in an RNN to extract information from images or video by adaptively selecting a sequence of regions or locations. In [13], an attention-based model is applied to identify multiple objects in an image by using reinforcement learning to identify the most relevant regions of the input image. [12] demonstrated that attention works not only on object detection tasks but also on many other computer vision tasks, such as image classification. [14] introduced an attention-based model to an image caption task. [15] proposed extracting the feature vector from an intermediate layer of VGG, so that the feature can be associated with a specific region in the image through the network map. In natural language processing tasks, [16] applied a soft attention mechanism to machine translation.
[17] presented the latest attention model that Google uses in machine translation, which relies only on attention, without a convolutional neural network (CNN) or an RNN, in a traditional encoder-decoder model.

To the best of our knowledge, no researchers have attempted to automatically discover brain functional areas associated with new activities.

3. METHOD

Our approach has a traditional encoder-decoder structure that consists of two main components: a cross domain encoder and an attention-based decoder.

3.1. Cross domain encoder

To enable transfer learning, the raw EEG signal was converted to a new representation, EEG optical flow, which was proposed in our previous work [5]. Many benefits can be gained from using the EEG optical flow. In particular, EEG optical flow can enhance the ability of transfer learning from natural images.

Many studies have demonstrated that the front layers in a CNN can extract the general features of images, such as edges and corners. Therefore, we were able to transfer the front layers of a CNN trained on ImageNet to extract the general features of the EEG optical flow. However, the general feature extractor trained on natural images does not fully match the EEG optical flow. Inspired by generative adversarial nets (GAN), we apply an adversarial network to train a better general feature extractor, as described in our previous work [8]. The pipeline of adversarial transfer learning is shown in Figure 2.

Fig. 2. Pipeline of adversarial transfer learning. The adversarial network is used to identify the origins of input features.

We use features extracted from natural images and from the EEG optical flow as the inputs for the adversarial network and train it to identify their origins. If the adversarial network achieves inferior performance, it indicates a small difference between the two types of feature and better transferability, and vice versa. This can be achieved by optimizing the following loss function:

$$L = -\sum_{k} I[y = k] \log p_k + \alpha L_{adver} + \beta \Re(v), \tag{1}$$

where $k$ indexes the categories, $p_k$ is the softmax value of the classifier activations, $L_{adver}$ is the cross entropy of the adversarial network, $\Re(v)$ is the regularization of manifold constraints, and $\alpha$ and $\beta$ are hyperparameters.

Manifold constraints force the learning algorithm to transfer useful knowledge from the source domain and ignore the knowledge that may destroy the manifold structure of the target domain. [18] demonstrated that keeping the geometric structure can be reduced to the regularization of:

$$\Re(v) = \frac{1}{2} \sum_{i,j=1}^{n} \zeta(v_{i*}, v_{j*}) (W)_{ij}, \tag{2}$$

where $v_i$ is the embedded representation of sample $x_i$, $\zeta(v_{i*}, v_{j*})$ is the loss function that measures the Euclidean distance between $v_{i*}$ and $v_{j*}$, and $(W)_{ij}$ is the cosine similarity measure of the $p$-nearest neighbors in the adjacency matrix.

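To make the structure of Eqs. (1) and (2) concrete, the following is a minimal, dependency-free sketch rather than the authors' implementation. It assumes that ζ is the squared Euclidean distance and that the adjacency weights W and the adversarial cross entropy are already computed elsewhere; all function names and toy values are hypothetical.

```python
import math


def cross_entropy(probs, label):
    """First term of Eq. (1): -sum_k I[y = k] * log(p_k), which is -log(p_y)."""
    return -math.log(probs[label])


def squared_distance(u, v):
    """Assumed form of zeta: squared Euclidean distance between embeddings."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def manifold_regularizer(embeddings, weights):
    """Eq. (2): 0.5 * sum over i, j of zeta(v_i, v_j) * W_ij."""
    n = len(embeddings)
    total = 0.0
    for i in range(n):
        for j in range(n):
            total += squared_distance(embeddings[i], embeddings[j]) * weights[i][j]
    return 0.5 * total


def total_loss(probs, label, adversarial_loss, embeddings, weights, alpha, beta):
    """Eq. (1): classification loss + alpha * L_adver + beta * R(v)."""
    return (cross_entropy(probs, label)
            + alpha * adversarial_loss
            + beta * manifold_regularizer(embeddings, weights))


# Toy values (all hypothetical): three classes, three 2-D embeddings.
probs = [0.5, 0.25, 0.25]
embeddings = [[0.0, 0.0], [1.0, 0.0], [0.0, 2.0]]
weights = [[0.0, 1.0, 0.0], [1.0, 0.0, 0.5], [0.0, 0.5, 0.0]]
reg = manifold_regularizer(embeddings, weights)
loss = total_loss(probs, 0, adversarial_loss=0.2, embeddings=embeddings,
                  weights=weights, alpha=0.1, beta=0.01)
print(reg, loss)
```

With these toy inputs the regularizer only penalizes pairs that the adjacency matrix marks as neighbors, which is the sense in which it preserves local geometry: embeddings of neighboring samples are pulled together, while non-neighbors contribute nothing.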
To train this adversarial network, we applied an iterative optimization algorithm with two steps, which is described in our previous work [8]. In this section, we have introduced a cross domain encoder that extracts features suitable for both the source and target domains and obtains high-quality features of an EEG signal with help from natural images.

3.2. Attention-based decoder

In this section, we use the features extracted by the cross domain encoder to obtain the final EEG label and the attention map of the brain through the attention-based decoder. The attention-based decoder is an RNN: we feed the features obtained from each EEG optical flow frame into the RNN and treat the output of the last time step as the final EEG label.

In a traditional encoder-decoder network, the input of the decoder is the output of the last fully connected layer of the encoder, which raises a crucial problem. The features extracted from the last fully connected layer lose the location information of the brain functional areas, so these features do not reflect the importance of different brain functional areas for specific activities.

Encouraged by the recent success of attention in computer vision and natural language processing, we applied an attention-based decoder that can attend to salient parts of an EEG optical flow while carrying out EEG classification. The attention mechanism provides a powerful tool to overcome the issue mentioned above. The location-wise attention mechanism allows us to consider the weights of different parts of the EEG optical flow, which reflect the different functional areas of the human brain. The pipeline of our attention-based decoder is shown in Figure 3.

The encoder can obtain feature vectors of each EEG optical flow.
In order to link the items in the feature vector to the parts of the EEG optical flow one by one, we use the feature map of the convolutional layer instead of the output of the fully connected layer, because a low-level feature retains more information that would be lost in the fully connected layer. In this way, we can extract L feature vectors of D dimensions, each dimension of a feature vector corresponding to a part of the EEG optical flow, as shown in the following equation:

$$a = \{a_1, \ldots, a_L\}, \quad a_i \in \mathbb{R}^D, \tag{3}$$

where L is the number of frames and D is the number of areas on the EEG optical flow. The items of the feature vector are linked to the spatial location of the EEG optical flow by the convolutional operation, which is demonstrated in Figure 4.

Fig. 4. Link between items in the feature vector $\{a_1, a_2, \ldots, a_L\}$ and spatial location of the EEG optical flow.

In the attention mechanism, we need to obtain the context vector as the input of the RNN at each time t. The following equations are applied to calculate the context vector $\hat{z}_t$:

$$e_{ti} = f_{att}(a_i, h_{t-1}) \tag{4}$$

$$\alpha_{ti} = \frac{\exp(e_{ti})}{\sum_{k=1}^{L} \exp(e_{tk})} \tag{5}$$

$$\hat{z}_t = \phi(\{a_i\}, \{\alpha_{ti}\}), \tag{6}$$

where $h_{t-1}$ is the hidden state of the previous step, $f_{att}$ is the map of a multilayer perceptron (MLP), $e_{ti}$ is the output of the MLP, $\alpha_{ti}$ are the attention weights, and $\phi$ is the function combining feature vectors and attention weights.

There are two types of attention mechanism: the soft attention mechanism and the hard attention mechanism. The main difference is the definition of the function $\phi$. In the soft attention mechanism, $\phi(\{a_i\}, \{\alpha_{ti}\}) = \sum_{i}^{L} \alpha_{ti} a_i$, which means that all parts of the EEG optical flow are considered in the context vector $\hat{z}_t$. In the hard attention mechanism, $\phi$ is a function that returns a sampled $a_i$ at every point in time according to the multinoulli distribution parameterized by $\alpha$.

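The difference between the two definitions of φ can be seen in a small dependency-free sketch of Eqs. (4) to (6). This is an illustration under stated assumptions, not the authors' code: the MLP f_att is replaced by a hypothetical dot-product score (`dot_score`), and the feature vectors and hidden state are toy values.

```python
import math
import random


def softmax(scores):
    """Eq. (5): normalize scores into attention weights that sum to one."""
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(features, hidden, score_fn):
    """Eqs. (4)-(5): score each a_i against h_{t-1}, then apply the softmax."""
    return softmax([score_fn(a, hidden) for a in features])


def soft_context(features, alphas):
    """Soft phi: the weighted sum over all a_i, so every part contributes."""
    dim = len(features[0])
    return [sum(w * a[d] for w, a in zip(alphas, features)) for d in range(dim)]


def hard_context(features, alphas, rng):
    """Hard phi: sample a single a_i from the multinoulli given by alphas."""
    index = rng.choices(range(len(features)), weights=alphas, k=1)[0]
    return features[index]


def dot_score(a, h):
    """Hypothetical stand-in for the MLP f_att (a plain dot product)."""
    return sum(x * y for x, y in zip(a, h))


# Toy inputs (hypothetical): three feature vectors and a previous hidden state.
features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
hidden = [math.log(2.0), 0.0]
alphas = attention_weights(features, hidden, dot_score)
z_soft = soft_context(features, alphas)
z_hard = hard_context(features, alphas, random.Random(0))
print(alphas, z_soft, z_hard)
```

Here the scores are (ln 2, 0, ln 2), so the weights come out as roughly (0.4, 0.2, 0.4) and the soft context is roughly (0.8, 0.6), a blend of all three vectors. The hard context is always exactly one of the input vectors, which is why it cannot express a combination of several areas at a single time step.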
The soft attention mechanism is a smooth function; it can be solved directly by the back propagation algorithm, which is equivalent to optimizing the following loss function:

$$L = -\log(P(y \mid x)) + \alpha \sum_{i}^{L} \Big(1 - \sum_{t}^{C} \alpha_{ti}\Big)^2. \tag{7}$$

The hard attention mechanism is a non-smooth function that can be approximated by the Monte Carlo algorithm.

Fig. 3. Pipeline of the attention-based decoder. First, the feature vectors that can maintain spatial information are produced by the convolutional layers of the cross domain encoder. Then, they are combined with the location-wise attention mechanism on each frame. Finally, these feature vectors are sent to the RNN one by one to obtain the final EEG label.

4. EXPERIMENTS

We applied our approach to a dataset called Open Music Imagery Information Retrieval (OpenMIIR) [19]. OpenMIIR was recorded during music perception and imagination: 10 subjects listened to and imagined 12 short music fragments taken from well-known pieces. These signals were recorded using 64 EEG electrodes at 512 Hz, and 240 trials were recorded per subject.

The following parameters were used in our approach. We converted raw EEG signals into EEG videos with thirteen frames and a resolution of 32×32. These frames were converted to EEG optical flow with twelve frames. We employed VGG16 and VGG19 [20] as the targets of the cross domain encoder.

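The frame counts above are consistent with one flow field per pair of consecutive frames, so thirteen video frames give twelve flow frames. The sketch below only illustrates that bookkeeping. It assumes consecutive-frame pairing, which the text does not state explicitly, and it uses a plain temporal difference as a crude stand-in for a real optical flow estimator; the function names and the synthetic video are hypothetical.

```python
def pair_consecutive_frames(frames):
    """Return the (previous, next) pairs from which flow fields are estimated."""
    return list(zip(frames[:-1], frames[1:]))


def frame_difference(prev_frame, next_frame):
    """Stand-in for optical flow: per-pixel temporal difference (not real flow)."""
    return [[b - a for a, b in zip(row_prev, row_next)]
            for row_prev, row_next in zip(prev_frame, next_frame)]


NUM_FRAMES, SIZE = 13, 32  # frame count and resolution stated in the text

# Synthetic video: frame t is a 32x32 grid filled with the value t.
video = [[[float(t) for _ in range(SIZE)] for _ in range(SIZE)]
         for t in range(NUM_FRAMES)]

flows = [frame_difference(p, n) for p, n in pair_consecutive_frames(video)]
print(len(flows), len(flows[0]), len(flows[0][0]))
```

A real pipeline would replace `frame_difference` with a dense optical flow method that returns a displacement vector per pixel; the number of outputs would still be one fewer than the number of input frames.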
The OpenMIIR dataset does not distinguish between training and test sets, so we randomly selected 10% of the dataset to use as the test dataset. As the baseline, we tested some recently proposed approaches: the deep neural network (DNN) described in [19] and the CNN described in [21]. In addition, we made comparisons to our previous work [8], which does not use an attention mechanism. Experiments on the OpenMIIR dataset were conducted to compare the performance of our approach and that of the baseline approaches, and the results are shown in Table 1.

Table 1. Classification accuracy (%) on the OpenMIIR dataset and comparisons to the baseline approaches. For example, Ours (Soft + VGG16) refers to use of the soft attention mechanism and application of the VGG16 network as the encoder.

| Approach | Accuracy (%) |
| --- | --- |
| [19] | 27.22 |
| [21] | 27.80 |
| [8] (VGG16) | 32.08 |
| [8] (VGG19) | 35.00 |
| Ours (Soft + VGG16) | 37.92 |
| Ours (Soft + VGG19) | 36.67 |
| Ours (Hard + VGG16) | 37.08 |
| Ours (Hard + VGG19) | 35.84 |

As the results in Table 1 show, the soft attention mechanism achieves better classification results than the hard attention mechanism. One possible reason is that the soft attention mechanism considers the interaction between multiple functional areas, while the hard attention mechanism only considers one functional area, as shown in Figure 5. Medical knowledge tells us that the reflection of an activity in the brain is the result of a combination of multiple functional areas, which is more in line with the soft attention mechanism.

Fig. 5. Visualization of the soft attention mechanism (a) and the hard attention mechanism (b) on the same frame.

We visualized an attention map while a subject was listening to an intense piece of music, as shown in Figure 6. It was found that the learned attention weights are somewhat similar to the result from medical experts [22].

Fig. 6. Visualization of the soft attention mechanism when listening to an intense music fragment.

We can draw the following conclusions from the experimental results presented in this section: (1) The experimental results shown in Table 1 demonstrate that our proposed approach performs better than traditional approaches; (2) VGG16 is a better choice of encoder than VGG19 in our attention-based transfer learning for the EEG classification task; (3) The performance of the soft attention mechanism is better than that of the hard attention mechanism; (4) Attention mechanisms can be used to automatically discover brain functional areas associated with new activities and reduce the dependence on medical experts.

5. CONCLUSIONS

We propose a novel approach to improve the accuracy of EEG classification in BCI. This approach takes advantage of the medical fact that different brain functional areas play different roles in activities. It applies an attention mechanism to automatically assess the importance of functional areas of the brain during activity. Our experiments on the OpenMIIR dataset indicate that our approach outperforms the compared state-of-the-art approaches. In addition, our approach can be used to automatically discover brain functional areas associated with activities, which is very useful when dealing with EEG data related to a new activity.

6. REFERENCES

[1] P Mamoshina, A Vieira, E Putin, and A Zhavoronkov, "Applications of deep learning in biomedicine," Molecular Pharmaceutics, vol. 13, no. 5, pp. 1445, 2016.
[2] Sinno Jialin Pan and Qiang Yang, "A survey on transfer learning," IEEE Transactions on Knowledge and Data Engineering, vol. 22, no. 10, pp. 1345–1359, 2010.
[3] Chuanqi Tan, Fuchun Sun, Tao Kong, Wenchang Zhang, Chao Yang, and Chunfang Liu, "A survey on deep transfer learning," in International Conference on Artificial Neural Networks. Springer, 2018, pp. 270–279.
[4] Vinay Jayaram, Morteza Alamgir, Yasemin Altun, Bernhard Schölkopf, and Moritz Grosse-Wentrup, "Transfer learning in brain-computer interfaces," IEEE Computational Intelligence Magazine, vol. 11, no. 1, pp. 20–31, 2016.
[5] Chuanqi Tan, Fuchun Sun, Wenchang Zhang, Jianhua Chen, and Chunfang Liu, "Multimodal classification with deep convolutional-recurrent neural networks for electroencephalography," in International Conference on Neural Information Processing. Springer, 2017, pp. 767–776.
[6] Mehdi Hajinoroozi, Zijing Mao, Yuan-Pin Lin, and Yufei Huang, "Deep transfer learning for cross-subject and cross-experiment prediction of image rapid serial visual presentation events from EEG data," in International Conference on Augmented Cognition. Springer, 2017, pp. 45–55.
[7] Yuan-Pin Lin and Tzyy-Ping Jung, "Improving EEG-based emotion classification using conditional transfer learning," Frontiers in Human Neuroscience, vol. 11, 2017.
[8] Chuanqi Tan, Fuchun Sun, and Wenchang Zhang, "Deep transfer learning for EEG-based brain computer interface," in 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2018, pp. 916–920.
[9] Ronald A Rensink, "The dynamic representation of scenes," Visual Cognition, vol. 7, no. 1-3, pp. 17–42, 2000.
[10] Maurizio Corbetta and Gordon L Shulman, "Control of goal-directed and stimulus-driven attention in the brain," Nature Reviews Neuroscience, vol. 3, no. 3, pp. 201, 2002.
[11] Feng Wang and David MJ Tax, "Survey on the attention based RNN model and its applications in computer vision," arXiv preprint, 2016.
[12] Volodymyr Mnih, Nicolas Heess, Alex Graves, et al., "Recurrent models of visual attention," in Advances in Neural Information Processing Systems, 2014, pp. 2204–2212.
[13] Jimmy Ba, Volodymyr Mnih, and Koray Kavukcuoglu, "Multiple object recognition with visual attention," arXiv preprint arXiv:1412.7755, 2014.
[14] Ryan Kiros, Ruslan Salakhutdinov, and Rich Zemel, "Multimodal neural language models," in International Conference on Machine Learning, 2014, pp. 595–603.
[15] Kelvin Xu, Jimmy Ba, Ryan Kiros, Kyunghyun Cho, Aaron Courville, Ruslan Salakhudinov, Rich Zemel, and Yoshua Bengio, "Show, attend and tell: Neural image caption generation with visual attention," in International Conference on Machine Learning, 2015, pp. 2048–2057.
[16] Dzmitry Bahdanau, Kyunghyun Cho, and Yoshua Bengio, "Neural machine translation by jointly learning to align and translate," arXiv preprint arXiv:1409.0473, 2014.
[17] Ashish Vaswani, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N Gomez, Łukasz Kaiser, and Illia Polosukhin, "Attention is all you need," in Advances in Neural Information Processing Systems, 2017, pp. 5998–6008.
[18] Deng Cai, Xiaofei He, Jiawei Han, and Thomas S Huang, "Graph regularized nonnegative matrix factorization for data representation," IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 33, no. 8, pp. 1548–1560, 2011.
[19] Sebastian Stober, "Learning discriminative features from electroencephalography recordings by encoding similarity constraints," in Acoustics, Speech and Signal Processing (ICASSP), 2017 IEEE International Conference on. IEEE, 2017, pp. 6175–6179.
[20] Yanming Guo, Yu Liu, Ard Oerlemans, Songyang Lao, Song Wu, and Michael S Lew, "Deep learning for visual understanding: A review," Neurocomputing, vol. 187, pp. 27–48, 2016.
[21] Sebastian Stober, Avital Sternin, Adrian M Owen, and Jessica A Grahn, "Deep feature learning for EEG recordings," arXiv preprint, 2015.
[22] Vernon L Towle, José Bolaños, Diane Suarez, Kim Tan, Robert Grzeszczuk, David N Levin, Raif Cakmur, Samuel A Frank, and Jean-Paul Spire, "The spatial location of EEG electrodes: locating the best-fitting sphere relative to cortical anatomy," Electroencephalography and Clinical Neurophysiology, vol. 86, no. 1, pp. 1–6, 1993.
