Listen to the Image

Visual-to-auditory sensory substitution devices can assist the blind in sensing the visual environment by translating the visual information into a sound pattern. To improve the translation quality, the task performances of the blind are usually empl…

Authors: Di Hu, Dong Wang, Xuelong Li

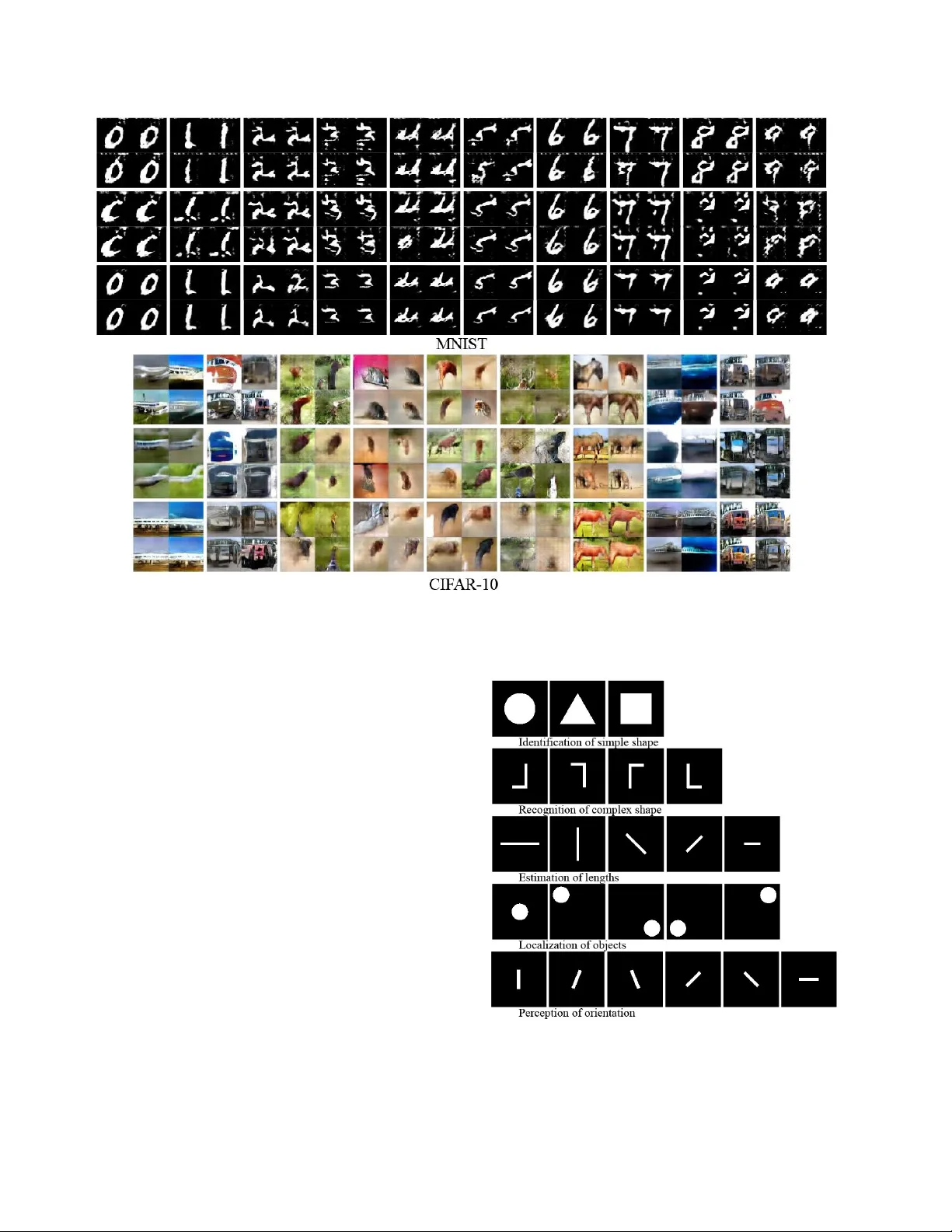

Di Hu, Dong Wang, Xuelong Li, Feiping Nie†, Qi Wang

School of Computer Science and Center for OPTical IMagery Analysis and Learning (OPTIMAL), Northwestern Polytechnical University

hdui831@mail.nwpu.edu.cn, nwpuwangdong@gmail.com, xuelong_li@ieee.org, feipingnie@gmail.com, crabwq@gmail.com

† Corresponding author.

© 2019 IEEE. Personal use of this material is permitted. Permission from IEEE must be obtained for all other uses, in any current or future media, including reprinting/republishing this material for advertising or promotional purposes, creating new collective works, for resale or redistribution to servers or lists, or reuse of any copyrighted component of this work in other works.

Abstract

Visual-to-auditory sensory substitution devices can assist the blind in sensing the visual environment by translating the visual information into a sound pattern. To improve the translation quality, the task performances of the blind are usually employed to evaluate different encoding schemes. In contrast to the toilsome human-based assessment, we argue that a machine model can also be developed for evaluation, and that it is more efficient. To this end, we first propose two distinct cross-modal perception models w.r.t. the late-blind and congenitally-blind cases, which aim to generate concrete visual contents based on the translated sound. To validate the functionality of the proposed models, two novel optimization strategies w.r.t. the primary encoding scheme are presented. Further, we conduct sets of human-based experiments to evaluate them and compare the results with the machine-based assessments in the cross-modal generation task. The highly consistent results w.r.t. different encoding schemes indicate that using a machine model to accelerate optimization evaluation and reduce experimental cost is feasible to some extent, which could dramatically promote the upgrading of encoding schemes and thereby help the blind improve their visual perception ability.

1. Introduction

There are millions of blind people all over the world, and helping them to “re-see” the outside world is a significant but challenging task. In general, the main causes of blindness are various eye diseases [35]. That is to say, the visual cortex is largely unwounded. Hence, it becomes possible to use other organs (e.g., ears) as the sensor to “visually” perceive the environment, according to the theory of cross-modal plasticity [4]. In the past decades, there have been several projects attempting to help the disabled recover their lost senses via other sensory channels, and the relevant equipment is usually called a Sensory Substitution (SS) device. In this paper, we mainly focus on the visual-to-auditory SS device vOICe¹ (the upper-case OIC means “Oh! I See!”).

¹ www.seeingwithsound.com

Figure 1. An illustration of the vOICe device. A camera mounted on the forehead captures the scene in front of the blind user, which is then converted to sound and transmitted to headphones. After 10-15 hours of training, regions of the visual cortex become active due to cross-modal plasticity. Best viewed in color.

The vOICe is an auditory sensory substitution device that encodes a 2D gray image into a 1D audio signal. Concretely, it translates vertical position to frequency, left-right position to scan time, and brightness to sound loudness, as shown in Fig. 1. After training with the device, both blindfolded sighted and blind participants can identify different objects just via the encoded audio signal [33]. More surprisingly, neural imaging studies (e.g., fMRI and PET) show that the visual cortex of trained participants is activated when listening to the vOICe audio [1, 28]. Some participants, especially the late-blind, report that they can “see” the shown objects, even their colorful appearance [18].
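The three translation rules stated above (vertical position to frequency, left-right position to scan time, brightness to loudness) can be illustrated with a minimal sketch. It is not the actual vOICe implementation: the function name `voice_encode`, the sample rate, the 500-5000 Hz range, and the use of pure sinusoids are all assumptions made for illustration; only the 1.05 s default duration is taken from the primary setting reported later in the paper.

```python
import numpy as np

def voice_encode(image, duration=1.05, sample_rate=16000,
                 f_min=500.0, f_max=5000.0):
    """Encode a 2D grayscale image (values in [0, 1]) as a 1D audio signal.

    Hypothetical vOICe-style encoder: columns are scanned left to right
    over `duration` seconds, each row drives one sinusoid, and pixel
    brightness sets the amplitude of that sinusoid.
    """
    image = np.asarray(image, dtype=np.float64)
    rows, cols = image.shape
    n_samples = int(round(duration * sample_rate))
    t = np.arange(n_samples) / sample_rate

    # Exponentially spaced frequencies (assumed range); row 0 is the top
    # of the image and receives the highest pitch.
    exponent = np.arange(rows)[::-1] / max(rows - 1, 1)
    freqs = f_min * (f_max / f_min) ** exponent

    # Index of the image column under the scan line at each audio sample.
    col_at_t = np.minimum((t / duration * cols).astype(int), cols - 1)

    tones = np.sin(2.0 * np.pi * freqs[:, None] * t[None, :])  # (rows, n_samples)
    loudness = image[:, col_at_t]                              # (rows, n_samples)
    audio = (loudness * tones).sum(axis=0)

    # Normalize to [-1, 1] unless the image is entirely black.
    peak = np.abs(audio).max()
    return audio / peak if peak > 0 else audio
```

With this sketch, a single bright pixel in the top-left corner produces a short high-pitched tone at the very start of the scan and silence afterwards, which is the behavior the three rules describe.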
As a consequence of these findings, the vOICe device is considered to provide a novel and effective way to assist the blind in “visually” sensing the world via hearing [36].

However, the current vOICe encoding scheme is identified as a primary solution [33]. More optimization efforts should be considered and evaluated to improve the translation quality. Currently, we have to resort to the cognitive assessment of participants, but two characteristics make it difficult to adopt in practice. First, the human-based assessment consists of complicated training and testing procedures, during which the participants have to remain highly concentrated and under pressure for a long period of time. Such toilsome evaluation is unfriendly to the participants, and it is also inefficient. Second, a large number of control experiments are required to provide convincing assessments, which demands much effort as well as a huge employment cost. As a result, there are few works focusing on the optimization of the current encoding scheme, although it indeed plays a crucial role in improving the visual perception ability of the blind [33, 18].

By contrast, with the rapid development of machine learning techniques, artificial perception models offer efficiency, economy, and convenience, which exactly address the above problems of human-based evaluation. Hence, can we design a proper machine model for human-like cross-modal perception, and view it as an assessment reference for different audio encoding schemes? In this paper, to answer these questions, we develop two cross-modal perception models w.r.t. the distinct late-blind and congenitally-blind cases, respectively. The late-blind have seen the environment before they went blind, so the learned visual experience can help them imagine the corresponding objects when listening to the translated sound via the vOICe [1, 18].
Based on this, we propose a Late-Blind Model (LBM), where the visual generative model is initialized from abundant visual scenes to imitate the conditions before blindness, and is then employed for visual generation based on separately learned audio embeddings. The congenitally-blind, in contrast, have never seen the world, and the absence of visual knowledge makes it difficult for them to imagine the shown objects. In this case, we propose a novel Congenitally-Blind Model (CBM), where the visual generation depends on the feedback of an audio discriminator via a derivable (differentiable) sound translator. Without any prior knowledge in the visual domain, the CBM can generate concrete visual contents related to the audio signal. Finally, we employ the proposed models to evaluate the different encoding schemes presented by us and compare the assessments with sets of conducted human-based evaluations. Briefly, our contributions are summarized as follows:

• We propose a novel computer-assisted evaluation task about the encoding schemes of visual-to-auditory SS devices for the blind community, which aims to vastly improve the efficiency of evaluation.
• Two novel cross-modal perception models are developed for the late-blind and congenitally-blind conditions, respectively. The proper visual content generated w.r.t. the audio signal confirms their effectiveness.
• We present two novel optimization strategies w.r.t. the current encoding scheme, and validate them on the proposed models.
• We conduct a large number of human-based evaluation experiments for the presented encoding schemes. The highly consistent results with the machine model verify the feasibility of machine-based assessments.

2. Related Works

2.1. Sensory Substitution

To remedy the disabled visual perception ability of the blind, many attempts have been made to convert the visual information into other sensory perception, among which visual-to-auditory sensory substitution is the most attractive and promising. The early echolocation approaches provide a considerable conversion manner [16, 17], but the required sound emission makes them impractical in many scenarios, such as noisy environments. Another important attempt is to convert the visual information into speech, e.g., the Seeing-AI project². However, such direct semantic description is developed only for the low-vision community, as it is hard for blind people, especially the congenitally blind, to imagine the concrete visual shape. It also suffers from the limited object and scene categories of the training set. Hence, more researchers suggest encoding the visual appearance into a sequential audio signal according to specific rules, such as the vOICe.

Most researchers consider cross-modal plasticity to be the essential reason for the success of SS devices [1, 21, 5, 34, 29], and it is also the neurophysiological criterion for judging the effectiveness of these devices [28]. Cross-modal plasticity refers to the fact that sensory deprivation of one modality can affect the cortical development of the remaining modalities [4]. For example, the auditory cortex of deaf subjects is activated by visual stimuli [9], while the visual cortex of blind people is activated by auditory messages [34]. To explore the practical effects, Arno et al. [2] proposed to detect the neural activations via Positron Emission Tomography (PET) when the participants are required to recognize patterns and shapes with visual-to-auditory SS devices.
For both blindfolded sighted and congenitally blind participants, they found that a variety of cortex regions related to visual and spatial imagery were activated relative to the auditory baselines. More related works can be found in [1, 21]. This evidence shows that regions of the brain normally dedicated to vision can be used to process visual information passed by sound [36, 25]. In addition, the above studies also show that there exist effective interactions among modalities in the brain [4, 14].

² https://www.microsoft.com/en-us/seeing-ai

Given that cross-modal plasticity provides a convincing explanation for sensory substitution, how to effectively utilize it for disabled people in practice becomes the key problem. The visual-to-auditory SS device vOICe provides one feasible encoding scheme that has been verified in practical usage, but it is difficult to guarantee that the current scheme is the most effective one. By conducting sets of control experiments on the current scheme, such as reversing the encoding direction (e.g., the highest pitch is set to the bottom instead of the top of the image), Stiles et al. [33] found that the primary scheme did not achieve the best performance in the task of matching images and sounds, which indicates that a better encoding scheme is expected to further improve the cross-modal perception ability of blind people. However, the evaluation of encoding schemes based on human feedback is relatively complicated and inefficient. In this paper, a kind of machine model is proposed to accelerate the assessment process, which is also convenient and economical.

2.2. Cross-modal Machine Learning

In the machine learning community, it is also expected to build effective cross-modal learning models similar to the brain, and many attempts have been made in different cross-modal tasks. Ngiam et al. [24] introduced a novel learning setting to effectively evaluate the shared representation across modalities, named “hearing to see”. Therein, the model was trained with one provided modality but tested on the other modality, which confirmed the existing semantic correlation across modalities. Inspired by this, Srivastava et al. [32] proposed to generate the missing text for a given image, where the shared semantics provided the probability to predict the corresponding descriptions. Further, Owens et al. [27] focused on the more complicated sound generation task. They presented an algorithm to synthesize sound for silent videos of hitting objects. To make the generated sound realistic enough, they resorted to a feature-based exemplar retrieval strategy instead of direct generation [27], while more recent work proposed a direct way to generate natural sound for videos in the wild [38]. Conversely, Chung et al. [7] proposed to generate a visually talking face based on an initial frame and a speech signal. For a given audio sequence, the proposed model could generate a determined image frame that best represents the speech sample at each time step [7]. Recently, the impressive Generative Adversarial Networks (GANs) [12] have provided more possibilities in cross-modal generation. The early works focused on text-based image generation [30, 37], and were all developed based on the conditional GAN [22]. Moreover, it becomes possible to generate images that did not exist before. Chen et al. [6] extended the above model to the more complicated audiovisual perception task. The proposed model [6] showed noticeable performance in generating musical sounds based on the input image and vice versa.

Although the cross-modal machine learning models above have shown impressive generation ability for the missing modality, they do not satisfy the blind condition.
This is because blind people cannot receive visual information, while the above models have to utilize this information during training. By contrast, we focus on this intractable cross-modal generation problem, where the missing modality is unavailable throughout the whole lifecycle.

3. Cross-modal Machine Perception

3.1. Late-blind Model

People who had visual experience of the outside world but went blind because of disease or physical injury are called late-blind people. Hence, when they wear the vOICe SS device to perceive objects, the pre-existing visual experience in their brain can provide an effective reference for cross-modal perception. The relevant cognitive experiments also confirm this; in particular, some blind participants unconsciously colored the objects although the color information was not encoded into sound [18]. This probably comes from the participants’ memory of object color [18]. This significant characteristic inspires us to build a three-stage Late-Blind Model (LBM), as shown in Fig. 2. Concretely, we propose to model the late-blind case by decoupling the cross-modal perception into separated sound perception and out-of-sample visual perception, then coupling them for visual generation. In the first stage, as the category labels of the translated sound are available, the audio convolutional network VGGish [13] is employed as the perception model to learn effective audio embeddings via a typical sound classification task, where the input sound is represented as a log-mel spectrogram³. For the visual modality, to obtain diverse visual experience, the perception model is trained in the second stage to model abundant visual imagery via an adversarial mechanism, which aims to imitate the circumstances before going blind.
Further, in the third stage, the learned audio embeddings are viewed as the input to visual generation, and the whole cross-modal perception model is fine-tuned by identifying the generated images with an off-the-shelf visual classifier⁴. Obviously, the generated images w.r.t. the translated sound should be quite similar to the ones used to train the visual generator, which accordingly provides the possibility to automatically color the shown objects.

³ The mel-scale depicts the characteristics of human hearing.
⁴ The visual classifier is trained with the same dataset used in the second stage but fixed when fine-tuning.

Figure 2. The diagram of the proposed late-blind model. The image and vOICe translator outside the dashed boxes indicate the circumstance of blindness when using SS devices, while the three-stage perception model within the boxes is constituted by sound embedding, visual knowledge learning, and cross-modal generation. Best viewed in color.

Figure 3. Generated visual examples using our late-blind model. Top two rows are digit images generated from the translated sound of MNIST and visual knowledge of EMNIST, while bottom two rows are object images generated from the sound of CIFAR-10 and visual knowledge of ImageNet.

Results. To evaluate the proposed LBM, we start with the simple handwritten digit generation task. Concretely, the MNIST images [20] are first translated into sounds via the vOICe for training the audio subnet and the cross-modal generation model, while the more challenging Extended MNIST (EMNIST) Digits dataset [8] is employed for training the visual generator and classifier. The official training/testing splits of both datasets are adopted [20, 8]. Fig. 3 shows some generated digit examples w.r.t. the translated sounds in the testing set of MNIST⁵.
Obviously, the generated digit contents can be easily recognized and also correspond to the labels of the translated sounds, which confirms that the visual experience learned from EMNIST indeed helps to build visual content from audio embeddings. Further, we attempt to generate realistic objects by training the LBM with more difficult datasets, i.e., using CIFAR-10 [19] for cross-modal generation and ImageNet [10] for visual knowledge learning. To increase the complexity of the visual experience, apart from the nine categories of CIFAR-10⁶, we randomly select another ten classes from ImageNet for training the visual networks. As expected, although the translated sounds do not encode the color information of the original objects, the generated objects are automatically colored due to the prior visual knowledge from ImageNet, which provides a kind of confirmation of the theory of experience-driven multi-sensory associations [18]. On the other hand, due to the absence of real images, our generated images are not as good as the ones generated by directly discriminating images, which confirms the difficulty of cross-modal generation in the blind case.

⁵ Network settings and training details are in the materials.
⁶ The deer class of CIFAR-10 is removed due to its absence in the ImageNet dataset.

3.2. Congenitally-blind Model

Figure 4. The diagram of the proposed congenitally-blind model. The image and vOICe translator outside the dashed boxes represent the circumstance of blindness with SS devices, while the two-stage model within the boxes consists of preliminary sound embedding and cross-modal generative adversarial perception.

Different from late-blind people, congenitally-blind people were born blind. Their absent visual experience makes it extremely difficult for them to imagine the visual appearance of shown objects.
However, cross-modal plasticity provides the possibility to effectively sense concrete visual content via the audio channel [18, 36], which depends on specific image-to-sound translation rules. In practice, before training the blind to “see” objects with the vOICe device, they should first learn the translation rules by identifying simple shapes, which helps them sense objects more precisely [33]. In other words, the cross-modal translation helps to bridge visual and audio perception in the brain. Based on this, we propose a two-stage Congenitally-Blind Model (CBM), as shown in Fig. 4. Similarly to the LBM, the VGGish network is first utilized to model the translated sound via a classification task, and the extracted embeddings are then used as the conditional input to cross-modal generation. In the second stage, without resorting to prior visual knowledge, we propose to directly generate concrete visual contents with a novel cross-modal GAN, where the generator and discriminator deal with different modalities. By encoding the generated images into the sound modality with a derivable (differentiable) cross-modal translation, it becomes feasible to directly compare the generated visual image and the original translated sound. Meanwhile, to generate correct visual content for the translated sound, the visual generator takes the audio embeddings as the conditional input and the audio discriminator takes softmax regression as an auxiliary classifier, which accordingly constitutes a variant of the auxiliary classifier GAN [26].

Results. As visual experience is not required in the congenitally-blind case, the datasets employed for pre-training the visual generator are no longer needed. Hence, our proposed CBM is directly evaluated on MNIST and CIFAR-10, following the traditional training/testing splits in [20, 19]. As the vOICe translation just deals with gray images, the images discriminated by sound do not contain RGB information, as shown in Fig. 5. Obviously, these image samples on both datasets are not as good as the ones of the LBM, due to the visual content compressed by the sound translator and the absent visual experience. Even so, our CBM can still generate concrete visual content according to the input sound, e.g., the distinct digit forms. As the objects in CIFAR-10 suffer from complex backgrounds that distract the sound translation of objects, the generated appearances become low-quality, while the outlines can still be captured, such as horse, airplane, etc. On the contrary, a clean background can dramatically help to generate high-quality object images, and more examples can be found in the following sections.

Figure 5. Generated visual examples using our congenitally-blind model. Top two rows are digit images generated from the translated sound of the MNIST dataset, while bottom two rows are object images generated from the sound of the CIFAR-10 dataset.

4. Evaluation of Encoding Schemes

Given that Stiles et al. have shown that the current encoding scheme can be further optimized to improve its applicability for the blind community [33], it becomes indispensable to efficiently evaluate different schemes according to the task performance of cross-modal perception. Traditionally, the evaluation has to be based on inefficient participant feedback. In contrast, as the proposed cross-modal perception models have shown impressive visual generation ability, especially the congenitally-blind one, it is worth considering whether the machine model can be used to evaluate the encoding scheme. More importantly, such machine-based assessment is more convenient and efficient than the manual fashion. Hence, in this section, we compare machine- and human-based assessment, performed with modified encoding schemes.
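Using the CBM of Section 3.2 as an evaluator requires that each encoding scheme remain derivable, so that feedback computed on the sound can flow back to the generated image. The sketch below illustrates why this holds for a vOICe-style translation: the audio is linear in pixel brightness, so the gradient of any audio-domain loss with respect to the image has a closed form. The function names, the frequency range, and the squared-error loss (a simple stand-in for the audio discriminator's feedback) are all assumptions for illustration, not the paper's implementation.

```python
import numpy as np

def translation_basis(rows, cols, n_samples, sample_rate=8000,
                      f_min=500.0, f_max=3500.0):
    """Return B of shape (rows, cols, n_samples) with audio = sum_ij img[i, j] * B[i, j].

    Hypothetical vOICe-style basis: pixel (i, j) contributes a sinusoid of
    row-dependent frequency during the time slot of column j.
    """
    t = np.arange(n_samples) / sample_rate
    exponent = np.arange(rows)[::-1] / max(rows - 1, 1)
    freqs = f_min * (f_max / f_min) ** exponent
    tones = np.sin(2.0 * np.pi * freqs[:, None] * t[None, :])   # (rows, n_samples)
    col_at_t = np.minimum(np.arange(n_samples) * cols // n_samples, cols - 1)
    mask = col_at_t[None, :] == np.arange(cols)[:, None]        # (cols, n_samples)
    return tones[:, None, :] * mask[None, :, :]

def translate(image, basis):
    """Image-to-sound translation as a linear map over pixel values."""
    return np.tensordot(image, basis, axes=([0, 1], [0, 1]))

def loss_and_grad(image, target_audio, basis):
    """Squared error in the audio domain and its gradient w.r.t. the image.

    The squared error stands in for discriminator feedback (an assumption).
    """
    residual = translate(image, basis) - target_audio
    loss = 0.5 * np.sum(residual ** 2)
    grad = np.tensordot(basis, residual, axes=([2], [0]))       # (rows, cols)
    return loss, grad
```

Because the gradient is available in closed form here, a generator placed in front of such a translator could in principle be trained with ordinary backpropagation, whatever loss is applied to the sound.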
Different from the simple reversal of the encoding direction [33], we aim to explore more possibilities in optimizing the primary scheme. First of all, it is well known that there exist large differences in bandwidth between vision and audition [18]. When 2D images are projected into a 1D audio sequence of limited length, a large amount of image content and detail is compressed or lost. One direct approach is to increase the audio length, which lets the vOICe encode more detailed visual content with more audio frames. Meanwhile, blind people also have more time to imagine the corresponding image content. On the other hand, such augmentation cannot be unlimited, for the sake of efficiency. Hence, the time length is roughly doubled from the primary setting of 1.05s to 2s in the first modified encoding scheme.

Apart from the bandwidth discrepancy, another crucial but previously neglected fact deserves attention. Generally, humans are most sensitive (i.e., able to discern at the lowest intensity) to sound frequencies between 2 kHz and 5 kHz [11]. As the blind participants perceive the image content via their ears, it is necessary to provide a high-quality audio signal that lies in the sensitive frequency area. However, due to the bandwidth discrepancy between modalities, it is difficult to precisely perceive and imagine all the visual content in front of the blind via the translated sound. To address this issue, we argue that the center of the visual field should be more important than other visual areas for the convenience of practical use. Hence, we aim to effectively project the central areas to the sensitive frequencies of human ears.
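The goal of placing the central rows inside the 2-5 kHz band can be checked numerically. The following sketch evaluates the rectified tanh projection of Eq. (1) against an exponential mapping. All parameter values (64 rows, s = 7000 Hz, α = 0.035, a 500-5000 Hz exponential range) are hypothetical choices for illustration and are not stated in the text; the row index i is assumed to increase toward the top of the image.

```python
import numpy as np

def tanh_pf(rows=64, s=7000.0, alpha=0.035):
    """Rectified-tanh position-frequency map of Eq. (1):
    s/2 * tanh(alpha * (i - rows/2)) + s/2, with assumed parameter values."""
    i = np.arange(rows)
    return s / 2.0 * np.tanh(alpha * (i - rows / 2.0)) + s / 2.0

def exponential_pf(rows=64, f_min=500.0, f_max=5000.0):
    """Exponentially spaced frequencies, a stand-in for the primary scheme."""
    i = np.arange(rows)
    return f_min * (f_max / f_min) ** (i / (rows - 1))

def band_coverage(freqs, first_row, last_row, lo=2000.0, hi=5000.0):
    """Fraction of rows first_row..last_row whose frequency lies in [lo, hi] Hz."""
    central = freqs[first_row:last_row + 1]
    return float(np.mean((central >= lo) & (central <= hi)))

if __name__ == "__main__":
    for name, freqs in [("tanh", tanh_pf()), ("exponential", exponential_pf())]:
        step = np.diff(freqs)
        print(name,
              "central rows in band:", band_coverage(freqs, 20, 40),
              "Hz per row at center:", round(float(step[len(step) // 2]), 1))
```

Under these assumed parameters, the tanh map keeps every row from 20 to 40 inside the sensitive band and gives the central rows a larger frequency step per row than the peripheral ones, while the exponential map leaves most of those rows below 2 kHz.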
Inspired by [15], a novel rectified tanh distribution is proposed for the Position-Frequency (PF) projection, i.e.,

$$\mathit{frequency} = \frac{s}{2} \cdot \tanh\left(\alpha \cdot \left(i - \frac{\mathit{rows}}{2}\right)\right) + \frac{s}{2}, \tag{1}$$

where $s$ is the frequency range of the encoded sound, $\alpha$ is the scaling parameter, $i$ is the position of the translated pixel, and $\mathit{rows}$ is the image height. As shown in Fig. 6, the image centers (rows 20-40) fall into the most sensitive frequency area, where the highest pitch corresponds to the top position of the image. More importantly, compared with the suppressed frequency response of the peripheral regions of images, the central regions enjoy a larger frequency range and are accordingly given more attention. In contrast, the exponential function adopted in the primary setting takes no account of the auditory perception characteristics of humans. The translated sound of most image areas is suppressed in the low-frequency area below 2 kHz, which neither focuses on the sensitive frequency area nor highlights the central regions of images. Hence, such a function may not be an appropriate choice.

Figure 6. Different position-frequency functions of the vOICe translation.

To effectively evaluate the proposed encoding schemes, a large number of evaluation tests should be conducted with participants. Accordingly, if more modified schemes were provided, much more training and testing effort would be required, which could go beyond what we can support. Hence, we choose to focus on these two modifications w.r.t. audio length and position-frequency function, as well as the primary one.

4.1. Machine Assessment

Figure 7. Comparison among the generated image examples using our congenitally-blind model in terms of different encoding schemes.

The quality of the generated modality depends on the quality of the other encoded modality in the cross-modal generative model [38, 30, 6].
Hence, different encoding schemes can be evaluated by comparing the generated images. In this section, the proposed CBM is chosen as the evaluation reference, as the encoding scheme directly impacts the quality of the translated sound for the audio discriminator, and in turn affects the performance of the visual generator, as shown in Fig. 4. However, the adopted MNIST and CIFAR-10 datasets suffer from the absence of real objects or from quite complex backgrounds, respectively. These weaknesses make it quite hard to effectively evaluate the practical effects of different encoding schemes. Therefore, we choose the Columbia Object Image Library (COIL-20) [23] as the evaluation dataset, which consists of 1,440 gray-scale images of 20 objects. These images are taken by placing the objects in the frame center in front of a clean black background, and each object is rotated in fixed steps of 5 degrees, which leads to 72 images per object. Considering that this dataset will also be adopted in the human-based assessment, we select 10 object categories from COIL-20 for efficiency (i.e., COIL-10), where the testing set consists of 10 selected images of each object, and the remaining ones constitute the training set.

Results. To comprehensively evaluate the generated images, both qualitative and quantitative evaluation are considered. As shown in Fig. 7, in general outlook as well as in matters of detail, the images of the modified schemes are superior to the primary ones. Obviously, the images generated with the primary scheme suffer from horizontal texture noise. This is because most image areas are suppressed in the low-frequency domain, which makes it difficult for the audio network to obtain effective embeddings for visual generation. By contrast, a longer audio track or a more effective PF function helps to resolve this issue.
In addition, compared with the longer audio signal, the improvement attained with the proposed tanh PF function is more significant, e.g., in the details of the toy car and the fortune cat. This superiority comes from the effective frequency representation of pixel positions.

For the quantitative evaluation, we compute the inception score [31] and conduct a human-based evaluation, as shown in Fig. 8. Concretely, we ask 18 participants to grade the quality of the generated images from 1 to 5 for each scheme, corresponding to {beyond recognition, rough outline, clear outline, certain detail, legible detail}. Then, the mean value and standard deviation are computed for comparison. In Fig. 8, it is clear that the qualitative and quantitative evaluations show consistent results. In particular, both the inception score and the human evaluation show that the modified encoding scheme with the PF function enjoys the largest improvement, which further confirms the significance of the projection function. The enlarged audio length also helps to refine the primary scheme.

Figure 8. Evaluation of the generated images by our CBM, where different encoding schemes are compared in human evaluation and inception score.

4.2. Cognitive Assessment

The blind participants’ feedback or task performance usually serves as the indicator of the quality of encoding schemes in the conventional assessment [3, 33]. Following the conventional evaluation strategy [33], 9 participants are randomly divided into three groups (3 participants per group). Each group corresponds to one of the three encoding schemes. The entire evaluation process takes about 11 hours. Before the formal evaluation, the participants are first asked to complete preliminary training lessons to become familiar with the translation rules and simple visual concepts.
The preliminary lessons include: identification of simple shapes, i.e., triangle, square, and circle; recognition of complex shapes, e.g., a normal “L”, an upside-down “L”, a backward “L”, and a backward and upside-down “L”; perception of orientation, e.g., a straight white line of fixed length at different rotation angles; estimation of lengths, e.g., horizontal white lines with different lengths; and localization of objects, i.e., circles in different places of images. During training, the assistant of each participant plays the pre-translated sound for them, then tells them the concrete visual content within the corresponding image⁷. After finishing a large number of repetitive preliminary lessons, the participants proceed to advanced training in recognizing real objects. Concretely, the COIL-10 dataset is adopted for training and testing the participants, where the training procedure is the same as in the preliminary lessons. Note that the evaluation test is conducted after finishing the training of each object category instead of all the categories. Finally, the evaluation results are viewed as the quality estimation of the encoded sound and used as the reference for machine-based assessment.

Results. Fig. 9 shows the evaluation results in precision, recall and F1-score w.r.t. different encoding schemes. Specifically, the scheme with the modified PF function performed significantly better than the primary scheme in precision (p < 0.005, with Bonferroni multiple comparisons correction) and in recall (p < 0.01, with Bonferroni multiple comparisons correction). Similar results are observed on F1-score. According to the conventional criterion [33], it can be concluded that the introduced modifications indeed help to improve the quality of cross-modal translation.
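For readers who want to reproduce the three reported metrics from participants' answers, a minimal sketch follows. The text does not state how per-class scores are aggregated, so macro-averaging over object categories is an assumption here, and the function name and integer label encoding are hypothetical.

```python
import numpy as np

def macro_precision_recall_f1(y_true, y_pred, n_classes):
    """Macro-averaged precision, recall and F1 over n_classes categories.

    y_true holds the object category of each played sound and y_pred the
    participant's answer, both as integer labels (assumed encoding).
    """
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    precisions, recalls, f1s = [], [], []
    for c in range(n_classes):
        tp = np.sum((y_pred == c) & (y_true == c))   # correct answers for class c
        fp = np.sum((y_pred == c) & (y_true != c))   # wrongly answered as class c
        fn = np.sum((y_pred != c) & (y_true == c))   # class c sounds that were missed
        precision = tp / (tp + fp) if tp + fp > 0 else 0.0
        recall = tp / (tp + fn) if tp + fn > 0 else 0.0
        if precision + recall > 0:
            f1 = 2 * precision * recall / (precision + recall)
        else:
            f1 = 0.0
        precisions.append(precision)
        recalls.append(recall)
        f1s.append(f1)
    return float(np.mean(precisions)), float(np.mean(recalls)), float(np.mean(f1s))
```

Computing the scores per encoding scheme with such a helper would yield three values per group of participants, which is the form in which the results are compared across schemes.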
The human results further confirm the assumptions about audio length and the characteristics of human hearing. Nevertheless, there remains a large disparity in classification performance between normal visual perception and indirect auditory perception. Hence, more effective encoding schemes are expected for the blind community.

Footnote 7. The training details and image samples can be found in the supplementary material.

Figure 9. Human image classification performance by hearing the translated sound via different encoding schemes.

| Correlation Coefficient | Machine (IS) | Machine (Eva.) |
|---|---|---|
| Human (Recall) | 0.947 | 1.000 |
| Human (Precision) | 0.952 | 0.805 |
| Human (F1-score) | 0.989 | 0.889 |

Table 1. The comparison analysis between machine- and human-based assessment in terms of correlation coefficient.

4.3. Assessment Analysis

The main motivation for designing the cross-modal perception model is to liberate the participants from the tedious and inefficient human assessments. According to the results shown above, the modified scheme with the PF function achieves the best performance while the primary scheme is the worst on both assessments. A quantitative comparison is also provided in Table 1. Both assessments reach a consensus in terms of the correlation coefficient, especially in the Inception Score (IS) column, which confirms the validity of machine-based assessments to some extent.

5. Conclusion

In this paper, we propose a novel and effective machine-assisted evaluation approach for visual-to-auditory SS schemes. Compared with conventional human-based assessments, the machine-based approach is more efficient and convenient. On the other hand, this paper gives a new direction for the auto-evaluation of SS devices through limited comparisons; more possibilities should be explored in the future, including more optimization schemes and more effective machine evaluation models.
Further, the evaluation should be combined with a derivable encoding module to constitute a completely automated solver of the encoding scheme without any human intervention, just like seeking high-quality models via AutoML.

Acknowledgments

This work was supported in part by the National Natural Science Foundation of China under Grants 61772427, 61751202, U1864204, and 61773316, the Natural Science Foundation of Shaanxi Province under Grant 2018KJXX-024, and the Project of Special Zone for National Defense Science and Technology Innovation.

References

[1] A. Amedi, W. M. Stern, J. A. Camprodon, F. Bermpohl, L. Merabet, S. Rotman, C. Hemond, P. Meijer, and A. Pascual-Leone. Shape conveyed by visual-to-auditory sensory substitution activates the lateral occipital complex. Nature neuroscience, 10(6):687, 2007.
[2] P. Arno, A. G. De Volder, A. Vanlierde, M.-C. Wanet-Defalque, E. Streel, A. Robert, S. Sanabria-Bohórquez, and C. Veraart. Occipital activation by pattern recognition in the early blind using auditory substitution for vision. Neuroimage, 13(4):632–645, 2001.
[3] P. Bach-y-Rita and S. W. Kercel. Sensory substitution and the human–machine interface. Trends in cognitive sciences, 7(12):541–546, 2003.
[4] D. Bavelier and H. J. Neville. Cross-modal plasticity: where and how? Nature Reviews Neuroscience, 3(6):443, 2002.
[5] D. Brown, T. Macpherson, and J. Ward. Seeing with sound? exploring different characteristics of a visual-to-auditory sensory substitution device. Perception, 40(9):1120–1135, 2011.
[6] L. Chen, S. Srivastava, Z. Duan, and C. Xu. Deep cross-modal audio-visual generation. In Proceedings of the on Thematic Workshops of ACM Multimedia 2017, pages 349–357. ACM, 2017.
[7] J. S. Chung, A. Jamaludin, and A. Zisserman. You said that? arXiv preprint arXiv:1705.02966, 2017.
[8] G. Cohen, S. Afshar, J. Tapson, and A. van Schaik. Emnist: an extension of mnist to handwritten letters. arXiv preprint arXiv:1702.05373, 2017.
[9] L. G. Cohen, P. Celnik, A. Pascual-Leone, B. Corwell, L. Faiz, J. Dambrosia, M. Honda, N. Sadato, C. Gerloff, M. D. Catala, et al. Functional relevance of cross-modal plasticity in blind humans. Nature, 389(6647):180, 1997.
[10] J. Deng, W. Dong, R. Socher, L.-J. Li, K. Li, and L. Fei-Fei. Imagenet: A large-scale hierarchical image database. In Computer Vision and Pattern Recognition, 2009. CVPR 2009. IEEE Conference on, pages 248–255. IEEE, 2009.
[11] S. A. Gelfand. Essentials of audiology. Thieme New York, 2001.
[12] I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, and Y. Bengio. Generative adversarial nets. In Advances in neural information processing systems, pages 2672–2680, 2014.
[13] S. Hershey, S. Chaudhuri, D. P. Ellis, J. F. Gemmeke, A. Jansen, R. C. Moore, M. Plakal, D. Platt, R. A. Saurous, B. Seybold, et al. Cnn architectures for large-scale audio classification. In Acoustics, Speech and Signal Processing (ICASSP), 2017 IEEE International Conference on, pages 131–135. IEEE, 2017.
[14] N. P. Holmes and C. Spence. Multisensory integration: space, time and superadditivity. Current Biology, 15(18):R762–R764, 2005.
[15] D. Hu, F. Nie, and X. Li. Deep binary reconstruction for cross-modal hashing. IEEE Transactions on Multimedia, 2018.
[16] T. Ifukube, T. Sasaki, and C. Peng. A blind mobility aid modeled after echolocation of bats. IEEE Transactions on biomedical engineering, 38(5):461–465, 1991.
[17] A. J. Kolarik, M. A. Timmis, S. Cirstea, and S. Pardhan. Sensory substitution information informs locomotor adjustments when walking through apertures. Experimental brain research, 232(3):975–984, 2014.
[18] Á. Kristjánsson, A. Moldoveanu, Ó. I. Jóhannesson, O. Balan, S. Spagnol, V. V. Valgeirsdóttir, and R. Unnthorsson. Designing sensory-substitution devices: Principles, pitfalls and potential. Restorative neurology and neuroscience, 34(5):769–787, 2016.
[19] A. Krizhevsky and G. Hinton. Learning multiple layers of features from tiny images. Technical report, Citeseer, 2009.
[20] Y. LeCun. The mnist database of handwritten digits. http://yann.lecun.com/exdb/mnist/, 1998.
[21] L. B. Merabet, L. Battelli, S. Obretenova, S. Maguire, P. Meijer, and A. Pascual-Leone. Functional recruitment of visual cortex for sound encoded object identification in the blind. Neuroreport, 20(2):132, 2009.
[22] M. Mirza and S. Osindero. Conditional generative adversarial nets. arXiv preprint arXiv:1411.1784, 2014.
[23] S. A. Nene, S. K. Nayar, H. Murase, et al. Columbia object image library (coil-20). 1996.
[24] J. Ngiam, A. Khosla, M. Kim, J. Nam, H. Lee, and A. Y. Ng. Multimodal deep learning. In Proceedings of the 28th international conference on machine learning (ICML-11), pages 689–696, 2011.
[25] U. Noppeney. The effects of visual deprivation on functional and structural organization of the human brain. Neuroscience & Biobehavioral Reviews, 31(8):1169–1180, 2007.
[26] A. Odena, C. Olah, and J. Shlens. Conditional image synthesis with auxiliary classifier gans. arXiv preprint arXiv:1610.09585, 2016.
[27] A. Owens, P. Isola, J. McDermott, A. Torralba, E. H. Adelson, and W. T. Freeman. Visually indicated sounds. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pages 2405–2413, 2016.
[28] C. Poirier, A. G. De Volder, and C. Scheiber. What neuroimaging tells us about sensory substitution. Neuroscience & Biobehavioral Reviews, 31(7):1064–1070, 2007.
[29] M. Ptito, S. M. Moesgaard, A. Gjedde, and R. Kupers. Cross-modal plasticity revealed by electrotactile stimulation of the tongue in the congenitally blind. Brain, 128(3):606–614, 2005.
[30] S. Reed, Z. Akata, X. Yan, L. Logeswaran, B. Schiele, and H. Lee. Generative adversarial text to image synthesis. arXiv preprint arXiv:1605.05396, 2016.
[31] T. Salimans, I. Goodfellow, W. Zaremba, V. Cheung, A. Radford, and X. Chen. Improved techniques for training gans. In Advances in Neural Information Processing Systems, pages 2234–2242, 2016.
[32] N. Srivastava and R. R. Salakhutdinov. Multimodal learning with deep boltzmann machines. In Advances in neural information processing systems, pages 2222–2230, 2012.
[33] N. R. Stiles and S. Shimojo. Auditory sensory substitution is intuitive and automatic with texture stimuli. Scientific reports, 5:15628, 2015.
[34] E. Striem-Amit, L. Cohen, S. Dehaene, and A. Amedi. Reading with sounds: sensory substitution selectively activates the visual word form area in the blind. Neuron, 76(3):640–652, 2012.
[35] B. Thylefors, A. Negrel, R. Pararajasegaram, and K. Dadzie. Global data on blindness. Bulletin of the world health organization, 73(1):115, 1995.
[36] J. Ward and T. Wright. Sensory substitution as an artificially acquired synaesthesia. Neuroscience & Biobehavioral Reviews, 41:26–35, 2014.
[37] H. Zhang, T. Xu, H. Li, S. Zhang, X. Huang, X. Wang, and D. Metaxas. Stackgan: Text to photo-realistic image synthesis with stacked generative adversarial networks. arXiv preprint, 2017.
[38] Y. Zhou, Z. Wang, C. Fang, T. Bui, and T. L. Berg. Visual to sound: Generating natural sound for videos in the wild. arXiv preprint arXiv:1712.01393, 2017.

6. Network Setting and Training

6.1. Late-blind Model

In this section, we provide the architectural details of the proposed late-blind model. First, the audio ConvNet follows the VGGish architecture proposed in [13], which achieves excellent results on the audio classification task.
Second, the visual generator and discriminator mostly adopt the same networks as in the DCGAN framework [?], except that the output channel size of the last deconvolution layer is set to 1 in the MNIST experiments. Third, due to the different complexities of the MNIST and CIFAR-10 data, we use different visual classifiers for the two datasets. Specifically, a small ConvNet with two convolution layers followed by two fully-connected layers is utilized to classify MNIST digits, and ResNet-18 [?] is employed as the visual classifier for the CIFAR-10 dataset.

The training procedure of the whole late-blind model is composed of three steps. First, the audio ConvNet is pre-trained on the MNIST/CIFAR-10 dataset for audio classification, where the audio is obtained by transforming the images with vOICe, and the visual classifier is pre-trained on the EMNIST/ImageNet dataset for image classification. Second, the adversarial training strategy in [?] is used to train the visual generator and discriminator on the EMNIST/ImageNet dataset for visual knowledge learning. Finally, the audio ConvNet, visual generator, and visual classifier are concatenated for cross-modal generation. We first fix the visual generator and visual classifier and fine-tune the audio ConvNet with the image classification loss for several epochs. Then we train the visual generator and audio ConvNet together with the visual classifier fixed. To be specific, we use a small initial learning rate of 0.001 with the Adam optimizer [?] for fine-tuning the audio ConvNet, which decreases by a factor of 1/10 when training the visual generator and audio ConvNet together.

6.2. Congenitally-blind Model

The proposed congenitally-blind model consists of one sound perception module and one cross-modal generation module.
For the former, the off-the-shelf large-scale audio classification network VGGish [13] is employed, but the embedding dimension of the second FC layer is set to 128 and the output dimension is set to the number of classes, i.e., 10. To effectively train this sound model, we set the batch size to 100 and choose the Adam optimizer with a learning rate of 0.0002 and beta_1 of 0.5. For the latter, we propose a variant of the Auxiliary Classifier GAN (ACGAN) [26], where the input conditional label is replaced with audio embeddings. More importantly, different from the unimodal processing of ACGAN, the generator deals with visual information while the discriminator focuses on the audio messages. Concretely, the visual generator first projects and reshapes the input audio embeddings and noise into a certain image shape (e.g., 8 × 8 × 128 for the MNIST dataset) via one Fully Connected (FC) layer and one reshape layer, and the result is then processed by three up-sampling modules. Each up-sampling layer is followed by one convolutional layer, as well as batch normalization and ReLU activation. The last layer projects the generated samples into single-channel (grayscale) images, where the sigmoid function is adopted for activation. The audio discriminator is developed based on the VGGish network, where the activation function of the convolutional layers is replaced with Leaky ReLU (with alpha of 0.2) and the discrimination layer and softmax layer are applied directly over the last Flatten layer. The entire cross-modal generation model is optimized via the Adam optimizer (the learning rate is set to 0.00002 and beta_1 is 0.5), and the batch size is set to 100. Moreover, the derivable vOICe translation is derived from the official encoding scheme, and we refactor the official code into a computational graph to make it derivable.

Figure 10. Inception scores of the generated images by our LBM with different encoding schemes.

7. Encoding Scheme Evaluation by LBM

In this section, we evaluate different encoding schemes quantitatively and qualitatively. As shown in Fig. 10, we first compute the inception score of the intermediate generated images by our LBM with different encoding schemes. The results show improvements similar to those of the quantitative evaluation by CBM and the human-based evaluation, i.e., the modified encoding scheme with the PF function achieves the largest improvement, which indicates that our LBM framework can also be used for machine-based assessment to some extent. For qualitative evaluation, we show more generated image examples using our late-blind model with different encoding schemes in Fig. 12. Generally, the images of the modified schemes are better than those of the primary scheme in most classes on the MNIST/CIFAR-10 datasets. The digit images generated using the modified encoding schemes show cleaner backgrounds than those of the primary scheme, especially for the digits 2, 3, 4, and 6. In addition, compared with the longer audio length, the proposed tanh PF function achieves more significant improvement in almost every class, which agrees with the quantitative results in Fig. 10. As for the CIFAR-10 dataset, images of the modified schemes contain more detail, such as windows in airplane images, legs in dog images, and meadows in horse images. Meanwhile, there are no obvious improvements in several difficult classes, such as automobile, ship, and truck, which confirms the difficulty of generating realistic objects. This is because the LBM learns the visual generator and visual classifier from the EMNIST/ImageNet datasets, and it is hard to transfer the learned knowledge to the MNIST/CIFAR-10 datasets in cross-modal generation, especially when there are many extra classes in the ImageNet dataset.
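The inception score reported in Fig. 10 can be sketched as follows. This is a minimal illustration of the standard definition from [31], IS = exp(E_x[KL(p(y|x) || p(y))]), assuming that the class probabilities p(y|x) have already been produced by some classifier. The function name and the toy probabilities are hypothetical, and this is not the exact evaluation code behind the figure.

```python
import math


def inception_score(probs):
    """Inception score from per-image class probabilities.

    probs: list of rows, each row is p(y|x) for one generated image
    (non-negative values summing to 1).
    """
    n = len(probs)
    num_classes = len(probs[0])
    # Marginal class distribution p(y), averaged over all images.
    marginal = [sum(row[k] for row in probs) / n for k in range(num_classes)]
    # Mean KL divergence between p(y|x) and p(y);
    # zero-probability terms contribute nothing.
    mean_kl = 0.0
    for row in probs:
        mean_kl += sum(
            p * math.log(p / q) for p, q in zip(row, marginal) if p > 0
        )
    mean_kl /= n
    return math.exp(mean_kl)


# Toy probabilities (hypothetical): confident and diverse predictions
# score high, identical uninformative predictions score 1.
confident = [[1.0, 0.0], [0.0, 1.0]]
uniform = [[0.5, 0.5], [0.5, 0.5]]
score_confident = inception_score(confident)
score_uniform = inception_score(uniform)
```

With K classes the score lies between 1 and K, so a higher value indicates that the generated images are both individually recognizable and diverse across classes.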
As for failure cases, the modified schemes obtain worse results in the digit 8, bird, and frog classes. The reason could be that the trained LBM tends to capture the detailed structure of objects, so the overall object contour is missed.

8. Dataset Examples

In this section, we show some digit examples of MNIST [20] and EMNIST [8] in Fig. 11. The MNIST dataset is a subset of the much larger NIST Special Database 19 [?], while EMNIST is an extended MNIST dataset and a variant of the full NIST dataset. Hence, the EMNIST Digits enjoy increased variability (e.g., size, style, rotation, etc.) and are more challenging [8]. In the handwritten digit generation task, the EMNIST Digits are adopted to provide abundant digit samples for training the visual models of LBM, while the MNIST dataset is used for training the cross-modal perception model.

Figure 11. Some samples in the MNIST and EMNIST Digits dataset.

9. Cognitive Evaluation Details

9.1. The preliminary training lessons

In this section, we briefly introduce the preliminary training lessons employed for training the participants. The images used are shown in Fig. 13, and the corresponding sounds can be found in the “examples” folder.

The first lesson.
This lesson focused on the initial identification of simple shapes, i.e., circle, triangle, and square. The assistant of each participant randomly selected and played one translated sound for the participant. After each sound was played, the participant was told the concrete content of the corresponding image. During the whole first training lesson, each sound (with image) was played 15 times. Hence, the translated sounds were played 45 times in total. After finishing this lesson, the participants rested for 5 minutes.

The second lesson.
Based on the first lesson, the second lesson aimed at the recognition of more complex shapes, i.e., a normal “L”, an upside-down “L”, a backward “L”, and a backward and upside-down “L” (i.e., 7). The assistants randomly selected and played translated sounds for each participant. After each sound was played, the assistants told the participants the concrete shape of the corresponding image. In the second lesson, each sound was played 15 times. Hence, the translated sounds were played 60 times in total. After this lesson, the participants rested for 5 minutes.

The third lesson.
The third lesson aimed at the perception of orientation, i.e., a straight white bar of fixed length at 0, 22, -22, 45, -45, or 90 degrees relative to vertical (positive angles correspond to clockwise rotations). The assistants randomly selected and played translated sounds for each participant. After each sound was played, the participants were told the concrete orientation of the corresponding bar. In the third lesson, each sound was played 15 times. Hence, the translated sounds were played 90 times in total. After this lesson, the participants rested for 5 minutes.

The fourth lesson.
This lesson focused on the estimation of different lengths, i.e., five bars with different lengths. To improve the sensitivity to lengths, these five bars were also placed in one of four orientations, i.e., 0, 90, 45, and -45 degrees, as in the third lesson. During training, the assistants randomly selected and played translated sounds for each participant. After each sound was played, the assistants told the participants the concrete length of the corresponding bar (by touch). The translated sound of each image was played 15 times. Hence, the sounds were played 75 times in total. After finishing this lesson, the participants rested for 5 minutes.

The fifth lesson.
In the last lesson, the participants were trained in localization, where circles in different places of the image (i.e., upper-left, upper-right, bottom-left, bottom-right, and center) were considered. During training, the assistants first randomly selected and played translated sounds for each participant. After each sound was played, the participants were told the position of the corresponding circle. The translated sound of each image was played 15 times. Hence, the sounds were played 75 times in total.

Figure 12. Comparison among the generated image examples of the MNIST/CIFAR-10 datasets using our late-blind model in terms of different encoding schemes. For each dataset, the first row represents the primary encoding scheme, the second row represents the modified scheme w.r.t. longer audio length, and the third row represents the modified scheme w.r.t. the position-frequency function of tanh.

9.2. The advanced training lessons

In the advanced training lessons, all the participants were asked to perform the image classification task by hearing the translated sounds, where the COIL-10 dataset was employed for training and testing. Concretely, the COIL-10 dataset consisted of 10 real objects, such as a toy car, a fortune cat, a bottle, etc. In the training set, each category had 70 image-sound pairs. Before training, the participants were told that the images of each object were taken from different angles. Note that the evaluation test was conducted after finishing the training of each object category rather than after all the categories. During training, the assistant played the translated sounds of each object for each participant in a certain order. After each sound was played, the assistant told the participant the concrete object and the corresponding angle.

Figure 13. The images used for training the participants in the preliminary training lessons.
In the testing process, 100 sounds (of 10 objects) in the testing set were successively played for the participants, and the participants were asked to answer whether the played sound corresponded to the object in the training process. After training and testing all the 10 objects, we evaluated the classification performance in terms of recall, precision, and F1-score.
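The recall, precision, and F1-score above can be computed from the participants' yes/no answers as in the following minimal sketch. The function name and the toy answers are hypothetical and only illustrate the standard definitions; this is not the exact script used in the experiments.

```python
def precision_recall_f1(truth, answers):
    """Compute precision, recall, and F1 from binary ground truth and answers.

    truth[i] is True if the i-th sound really belongs to the trained object;
    answers[i] is True if the participant answered "yes".
    """
    tp = sum(t and a for t, a in zip(truth, answers))
    fp = sum((not t) and a for t, a in zip(truth, answers))
    fn = sum(t and (not a) for t, a in zip(truth, answers))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1


# Toy example (hypothetical answers, not experimental data).
truth = [True, True, True, False, False, False]
answers = [True, True, False, True, False, False]
precision, recall, f1 = precision_recall_f1(truth, answers)
```

Averaging these per-object values over the 10 objects is one possible way to obtain a single score per encoding scheme; the averaging choice is an assumption and is not specified in the text.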
