AttoNets: Compact and Efficient Deep Neural Networks for the Edge via Human-Machine Collaborative Design

While deep neural networks have achieved state-of-the-art performance across a large number of complex tasks, it remains a big challenge to deploy such networks for practical, on-device edge scenarios such as on mobile devices, consumer devices, drones, and vehicles. In this study, we take a deeper exploration into a human-machine collaborative design approach for creating highly efficient deep neural networks through a synergy between principled network design prototyping and machine-driven design exploration. The efficacy of human-machine collaborative design is demonstrated through the creation of AttoNets, a family of highly efficient deep neural networks for on-device edge deep learning. Each AttoNet possesses a human-specified network-level macro-architecture comprising custom modules with unique machine-designed module-level macro-architecture and micro-architecture designs, all driven by human-specified design requirements. Experimental results for the task of object recognition showed that the AttoNets created via human-machine collaborative design have significantly fewer parameters and lower computational costs than state-of-the-art networks designed for efficiency while achieving noticeably higher accuracy (with the smallest AttoNet achieving ~1.8% higher accuracy while requiring ~10x fewer multiply-add operations and parameters than MobileNet-V1). Furthermore, the efficacy of the AttoNets is demonstrated for the tasks of instance-level object segmentation and object detection, where an AttoNet-based Mask R-CNN network was constructed with significantly fewer parameters and lower computational costs (~5x fewer multiply-add operations and ~2x fewer parameters) than a ResNet-50 based Mask R-CNN network.

💡 Research Summary

The paper introduces AttoNets, a family of ultra‑compact deep neural networks designed for edge devices through a Human‑Machine Collaborative Design methodology. Instead of relying solely on handcrafted efficiency‑focused architectures or fully automated neural architecture search (NAS), the authors propose a two‑stage process that combines human intuition at the macro‑architecture level with machine‑driven fine‑grained exploration of module‑level designs.

In the first stage, designers specify a high‑level network skeleton: a deep network of 16 modules, each with a residual shortcut, followed by a pooling and classification head. The focus at this stage is on high representational capacity rather than immediate efficiency. The designers also define the requirements that any generated network must meet, such as a minimum Top‑1 accuracy (≥ 65 % on ImageNet).
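The human-specified part of the design can be pictured as a small data structure. The sketch below is only an illustration of that idea: the class and field names (`ModuleSpec`, `MacroArchitecture`, `DesignRequirements`, `build_prototype`) are hypothetical and do not come from the paper. It records what the first stage fixes (16 modules, residual shortcuts, a pooling and classification head, an accuracy floor) and leaves each module's internals empty for the machine-driven stage to fill in.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class ModuleSpec:
    """One module of the skeleton; its internal design is not decided yet."""
    index: int
    residual_shortcut: bool = True
    # Hypothetical container for the machine-designed internals (left empty here).
    design: Dict[str, int] = field(default_factory=dict)


@dataclass
class MacroArchitecture:
    """Network-level skeleton fixed by the human designer."""
    modules: List[ModuleSpec]
    head: Tuple[str, str] = ("global_pool", "classifier")


@dataclass
class DesignRequirements:
    """Human-specified requirements; 65% Top-1 is the floor quoted in the text."""
    min_top1_accuracy: float = 0.65


def build_prototype(num_modules: int = 16) -> MacroArchitecture:
    """Create the skeleton with every module carrying a residual shortcut."""
    return MacroArchitecture(modules=[ModuleSpec(index=i) for i in range(num_modules)])


prototype = build_prototype()
requirements = DesignRequirements()
print(len(prototype.modules), prototype.head, requirements.min_top1_accuracy)
```

Separating the skeleton from the per-module `design` field mirrors the division of labour described above: the human fixes the outer structure and the requirements, and only the inner fields are open to exploration.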

The second stage employs Generative Synthesis, a constrained optimization framework that learns a generator G capable of producing network instances that maximize a universal performance function U, subject to the human‑specified requirements encoded by the indicator function 1_r(·). U combines modeling performance, computational complexity (measured in multiply‑add operations), and architectural complexity (parameter count), with the weights α, β, and γ controlling their relative influence. The authors set α = 2, β = γ = 0, thus heavily favoring accuracy. Starting from the human‑defined prototype, the generator iteratively refines module designs, exploring variations in bottleneck structures, channel widths, depthwise separable convolutions, squeeze‑expand patterns, and other efficiency techniques.
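The constrained selection described above (maximize U among candidates for which 1_r(·) = 1) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation. The NetScore-style form of U, the assignment of β to parameters and γ to multiply-adds, and every name in the code are assumptions. Random sampling stands in for the learned generator G, which this sketch does not implement.

```python
import math
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Candidate:
    top1: float      # Top-1 accuracy in percent
    params_m: float  # parameters, in millions
    macs_m: float    # multiply-add operations, in millions


def universal_performance(c: Candidate, alpha: float = 2.0,
                          beta: float = 0.0, gamma: float = 0.0) -> float:
    """Assumed NetScore-style score: 20*log10(a^alpha / (p^beta * m^gamma)).

    Defaults follow the weights quoted in the summary (alpha=2, beta=gamma=0).
    """
    ratio = c.top1 ** alpha / (c.params_m ** beta * c.macs_m ** gamma)
    return 20.0 * math.log10(ratio)


def satisfies_requirements(c: Candidate, min_top1: float = 65.0) -> bool:
    """Indicator function 1_r: True when the accuracy floor is met."""
    return c.top1 >= min_top1


def select_best(candidates: List[Candidate], alpha: float = 2.0,
                beta: float = 0.0, gamma: float = 0.0) -> Optional[Candidate]:
    """Return the feasible candidate with the highest score, or None."""
    feasible = [c for c in candidates if satisfies_requirements(c)]
    if not feasible:
        return None
    return max(feasible, key=lambda c: universal_performance(c, alpha, beta, gamma))


def sample_candidates(n: int, seed: int = 0) -> List[Candidate]:
    """Stand-in for the generator: draws made-up candidates at random."""
    rng = random.Random(seed)
    return [Candidate(top1=rng.uniform(55.0, 75.0),
                      params_m=rng.uniform(1.0, 6.0),
                      macs_m=rng.uniform(50.0, 600.0)) for _ in range(n)]


best = select_best(sample_candidates(200))
print(best)
```

With β = γ = 0 the score depends on accuracy alone, so the most accurate feasible candidate wins. Passing non-zero values such as `beta=0.5, gamma=0.5` makes the same function trade accuracy against size and compute.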

The resulting AttoNet variants (e.g., AttoNet‑A, AttoNet‑B, AttoNet‑C) exhibit dramatically reduced resource footprints while maintaining or improving accuracy. On ImageNet, the smallest AttoNet‑A achieves a Top‑1 accuracy 1.8 percentage points higher than MobileNet‑V1 while requiring roughly ten times fewer multiply‑add operations and parameters (≈ 1.2 M parameters vs. 4.2 M, ≈ 40 M MACs vs. 569 M). Larger AttoNets provide comparable accuracy to MobileNet‑V2 with similar or lower computational budgets.
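The reduction factors implied by the figures in parentheses can be checked with simple arithmetic. The snippet below uses only the approximate values quoted in this summary; the helper name `reduction_factor` is illustrative.

```python
def reduction_factor(baseline: float, compact: float) -> float:
    """How many times smaller the compact model is than the baseline."""
    return baseline / compact


# Approximate figures as quoted in the summary (MobileNet-V1 vs. smallest AttoNet).
param_factor = reduction_factor(4.2, 1.2)    # millions of parameters
mac_factor = reduction_factor(569.0, 40.0)   # millions of multiply-adds

print(f"parameters: {param_factor:.1f}x fewer")
print(f"multiply-adds: {mac_factor:.1f}x fewer")
```

With the figures as quoted, this gives about 3.5x for parameters and about 14x for multiply-adds. The "roughly ten times" statement is therefore best read as an approximate, order-of-magnitude summary rather than an exact ratio for each quantity.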

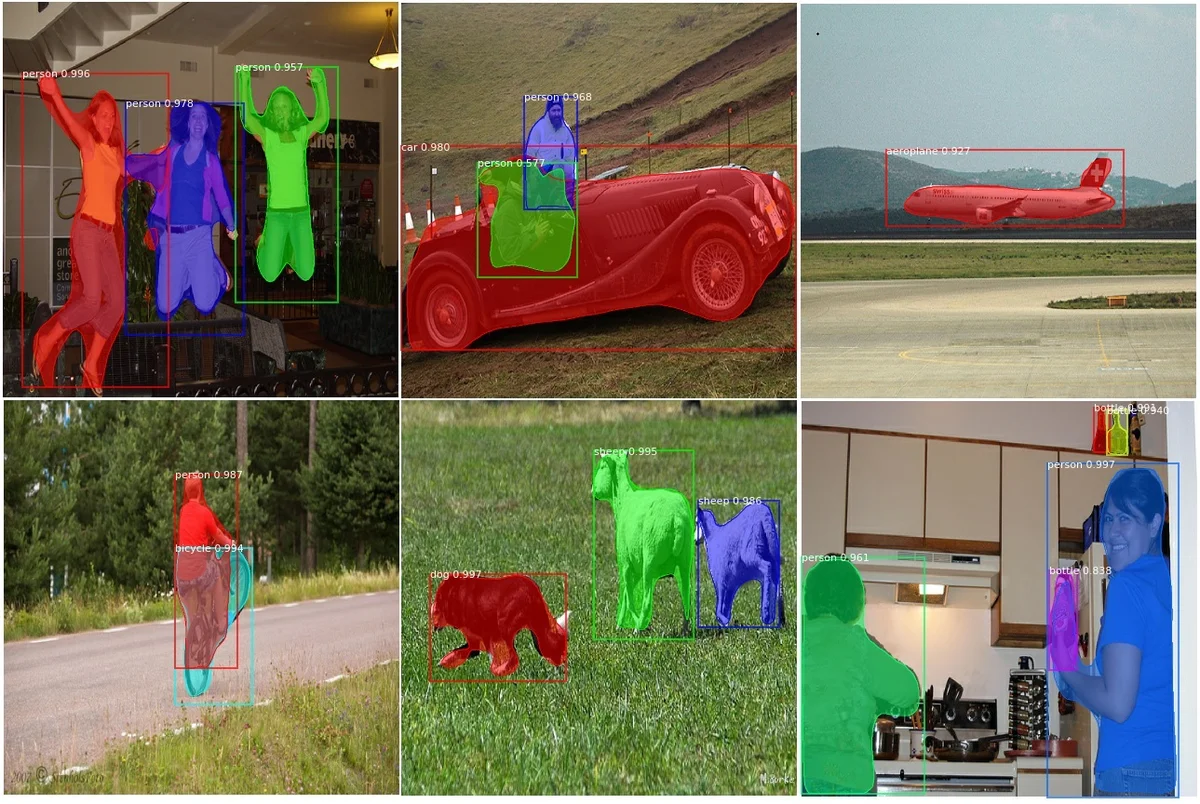

To demonstrate applicability beyond classification, the authors replace the ResNet‑50 backbone in a Mask R‑CNN pipeline with an AttoNet‑B backbone. The resulting detector reduces multiply‑add operations by a factor of five and parameter count by a factor of two relative to the ResNet‑50 baseline, with only a marginal drop (< 1 % absolute) in COCO mAP for object detection and instance segmentation.

Key contributions of the work are: (1) a novel collaborative design paradigm that leverages human expertise for high‑level structural decisions while delegating exhaustive low‑level architectural search to an automated generator; (2) the adaptation of Generative Synthesis to enforce explicit performance constraints, enabling rapid convergence to efficient designs without the massive computational expense typical of NAS; (3) empirical validation that AttoNets outperform state‑of‑the‑art efficient models (MobileNet, ShuffleNet) in both computational and parameter efficiency, and that they can serve as effective backbones for downstream vision tasks.

The paper also outlines future directions, including extending the framework to hardware‑specific constraints (e.g., DSP, NPU, FPGA resource limits), applying the collaborative methodology to other domains such as speech and natural language processing, and incorporating richer human feedback (e.g., layer reordering, memory access patterns) to further accelerate the search process.

Overall, AttoNets demonstrate that a balanced synergy between human design intuition and machine‑driven exploration can produce deep neural networks that are both highly accurate and exceptionally lightweight, making them well‑suited for deployment on resource‑constrained edge platforms.
