Truly unsupervised acoustic word embeddings using weak top-down constraints in encoder-decoder models

We investigate unsupervised models that can map a variable-duration speech segment to a fixed-dimensional representation. In settings where unlabelled speech is the only available resource, such acoustic word embeddings can form the basis for "zero-r…

Authors: Herman Kamper

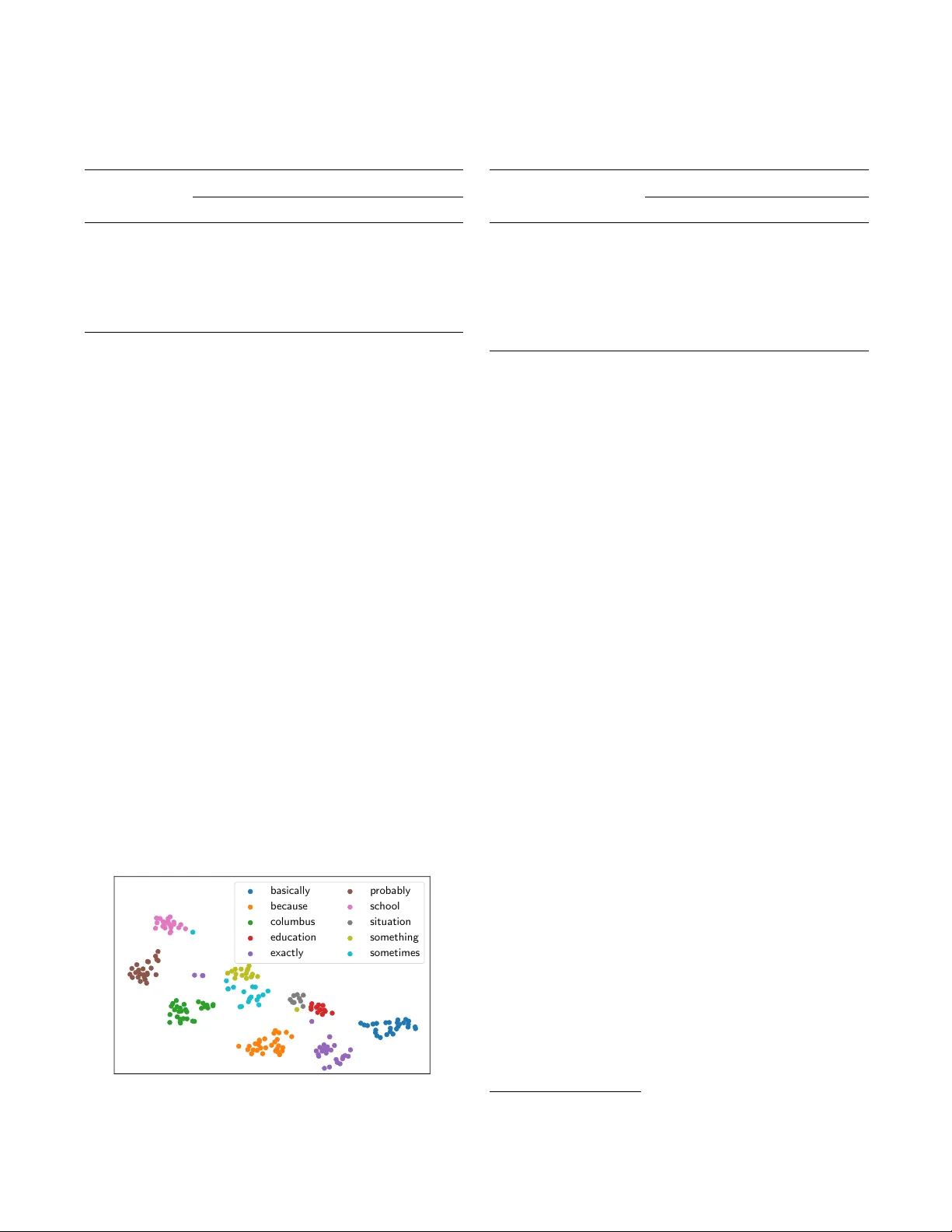

Herman Kamper, E&E Engineering, Stellenbosch University, South Africa (kamperh@sun.ac.za)

ABSTRACT

We investigate unsupervised models that can map a variable-duration speech segment to a fixed-dimensional representation. In settings where unlabelled speech is the only available resource, such acoustic word embeddings can form the basis for "zero-resource" speech search, discovery and indexing systems. Most existing unsupervised embedding methods still use some supervision, such as word or phoneme boundaries. Here we propose the encoder-decoder correspondence autoencoder (EncDec-CAE), which, instead of true word segments, uses automatically discovered segments: an unsupervised term discovery system finds pairs of words of the same unknown type, and the EncDec-CAE is trained to reconstruct one word given the other as input. We compare it to a standard encoder-decoder autoencoder (AE), a variational AE with a prior over its latent embedding, and downsampling. EncDec-CAE outperforms its closest competitor by 29% relative in average precision on two languages in a word discrimination task.

Index Terms: Acoustic word embeddings, zero-resource speech processing, unsupervised learning, query-by-example.

1. INTRODUCTION

Current speech recognition models require large amounts of annotated resources. For many languages, the transcription of speech audio remains a major obstacle [1]. This has prompted research into zero-resource speech processing, which aims to develop methods that can discover linguistic structure and representations directly from unlabelled speech [2–4]. This problem also has strong links with early language acquisition, since infants learn language without explicit hard supervision [5, 6].

Several zero-resource tasks have been tackled. In unsupervised term discovery (UTD), the aim is to find recurring word- or phrase-like patterns in an unlabelled speech collection [7]. In query-by-example search, the goal is to identify utterances containing instances of a given spoken query [8–11]. Full-coverage segmentation and clustering aims to tokenise an entire speech set into word-like units [6, 12–15].

In all these tasks, a system needs to compare speech segments of variable length. Dynamic time warping (DTW) is traditionally used, but is computationally expensive and has known limitations [16]. Recent studies have therefore explored an alignment-free methodology where an arbitrary-length speech segment is embedded in a fixed-dimensional space such that segments of the same word type have similar embeddings [17–25]. Segments can then be compared by simply calculating a distance in this acoustic word embedding space.

Several unsupervised methods have been proposed, ranging from using distances to a fixed reference set [18], to unsupervised auto-encoding encoder-decoder recurrent neural networks [19, 20]. Downsampling, where a fixed number of equidistant frames are used to represent a segment, has proven to be an effective and strong baseline [13, 24]. Many of the more advanced unsupervised models, however, still use some form of supervision, normally in the form of known word boundaries [18, 19, 23].

We propose a new neural model which is truly unsupervised, assuming no labelled speech data or word boundary information. We use a UTD system (itself unsupervised) to find pairs of word-like segments predicted to be of the same unknown type. Each pair is presented to an autoencoder-like encoder-decoder network: one word in the pair is used as the input and the other as the target output. The latent representation between the encoder and decoder is used as acoustic embedding. We call this model the encoder-decoder correspondence autoencoder (EncDec-CAE).
The idea is that the model should learn an intermediate representation that is invariant to properties not common to the two segments (e.g. speaker, channel), while capturing aspects that are (e.g. word identity).

We compare this model to downsampling [18] and an encoder-decoder autoencoder [19] trained on random segments. We also propose another model: an encoder-decoder variational autoencoder, which incorporates a prior over its latent embedding. We show that the EncDec-CAE outperforms the other models in a word discrimination task on English and Xitsonga data. In Xitsonga, it even outperforms a DTW approach which uses full alignment to discriminate words.

2. ACOUSTIC WORD EMBEDDING METHODS

We consider three unsupervised neural models in this work, all using an encoder-decoder recurrent neural network (RNN) architecture [26, 27]. The first model was proposed as an acoustic embedding method in [19], while the other two are new.

2.1. Encoder-decoder autoencoder

An encoder-decoder RNN consists of an encoder RNN, which reads in an input sequence while sequentially updating its internal hidden state, and a decoder RNN, which produces an output sequence conditioned on the final output of the encoder [26]. Chung et al. [19] trained an encoder-decoder as an autoencoder (EncDec-AE) on unlabelled speech segments, using the input of the network as the target, as shown in Figure 1(a). The final fixed-dimensional output vector $z$ from the encoder (red in Figure 1) gives an acoustic word embedding.

More formally, an input segment $X = x_1, x_2, \ldots, x_T$ consists of a sequence of frame-level acoustic feature vectors $x_t \in \mathbb{R}^D$ (e.g. MFCCs). The loss for a single training example is $\ell(X) = \sum_{t=1}^{T} \| x_t - f_t(X) \|^2$, with $f_t(X)$ the $t$th decoder output conditioned on the latent embedding $z$, which itself is produced as the output of the encoder when it is fed with $X$.
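The loss above can be illustrated with a small forward-pass sketch in NumPy. This is only an illustration under stated assumptions, not the paper's implementation: the weights are random and untrained, biases are omitted, the embedding $z$ is assumed to be a linear map of the encoder's final hidden state, and the decoder is assumed to receive $z$ as its input at every time step. All names (`init_gru`, `gru_step`, `encode`, `decode`, `reconstruction_loss`) and the sizes `D`, `H`, `M` are hypothetical. Passing a different target segment shows how the same squared-error loss could be applied to a discovered word pair, as described in the introduction.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def init_gru(rng, in_dim, hid_dim):
    """Random GRU weights (no biases); each gate sees [input, hidden]."""
    shape = (hid_dim, in_dim + hid_dim)
    return {name: rng.normal(scale=0.1, size=shape) for name in ("Wr", "Wu", "Wc")}

def gru_step(x, h, p):
    """One gated-recurrent-unit update of hidden state h given input x."""
    r = sigmoid(p["Wr"] @ np.concatenate([x, h]))          # reset gate
    u = sigmoid(p["Wu"] @ np.concatenate([x, h]))          # update gate
    cand = np.tanh(p["Wc"] @ np.concatenate([x, r * h]))   # candidate state
    return (1.0 - u) * h + u * cand

def encode(X, params):
    """Read all frames of X, then map the final hidden state to z in R^M."""
    h = np.zeros(params["enc"]["Wr"].shape[0])
    for x_t in X:
        h = gru_step(x_t, h, params["enc"])
    return params["W_z"] @ h  # assumption: linear transformation of final state

def decode(z, T, params):
    """Produce T output frames f_1..f_T, feeding z in at every step (assumption)."""
    h = np.zeros(params["dec"]["Wr"].shape[0])
    outputs = []
    for _ in range(T):
        h = gru_step(z, h, params["dec"])
        outputs.append(params["W_out"] @ h)
    return np.stack(outputs)

def reconstruction_loss(X_in, X_target, params):
    """Sum over t of ||target_t - f_t(X_in)||^2; returns (loss, embedding z)."""
    z = encode(X_in, params)
    F = decode(z, len(X_target), params)
    return float(np.sum((X_target - F) ** 2)), z

rng = np.random.default_rng(0)
D, H, M = 13, 32, 16  # feature, hidden and embedding sizes (illustrative only)
params = {
    "enc": init_gru(rng, D, H),
    "dec": init_gru(rng, M, H),
    "W_z": rng.normal(scale=0.1, size=(M, H)),
    "W_out": rng.normal(scale=0.1, size=(D, H)),
}
X = rng.normal(size=(50, D))        # stand-in for a 50-frame MFCC segment
X_pair = rng.normal(size=(42, D))   # stand-in for a paired segment of another length

ae_loss, z = reconstruction_loss(X, X, params)             # autoencoder: target = input
pair_loss, z_pair = reconstruction_loss(X, X_pair, params)  # pair: target = other segment
```

In both calls the embedding depends only on the input segment, so `z` and `z_pair` are identical; only the reconstruction target changes. Training would minimise this loss with respect to the weights by gradient descent, which the sketch leaves out.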
In our implementation of the EncDec-AE we use a transformation of the final hidden state of the encoder RNN to produce the embedding $z \in \mathbb{R}^M$, as also in [20]. We use gated recurrent units [28] as the RNN cell type in all the models here. This worked better than LSTMs in validation experiments. Most importantly, instead of using true word segments [19], we train the EncDec-AE on random speech segments.

2.2. Encoder-decoder variational autoencoder

A variational autoencoder (VAE) is a generative neural model which uses a variational approximation for inference and training [29]. A complete treatment of VAEs is outside the scope of this work, so we refer readers to [29, 30]. Here we only describe how we develop the encoder-decoder VAE (EncDec-VAE), where the decoder can be seen as a generative sequence model conditioned on a latent representation $z$, which we use as acoustic embedding. We show that by choosing specific distributions, the model can be interpreted as a standard EncDec-AE with a prior over its latent embedding.

For the EncDec-VAE, it is useful to think of the encoder and decoder as separate networks with parameters $\phi$ and $\theta$, respectively. The encoder density is denoted as $q_\phi(z \mid X)$ and the decoder as $p_\theta(X \mid z)$, as in Figure 1(b). For a single training sequence $X$, the EncDec-VAE maximises a lower bound for $\log p_\theta(X) = \log \prod_{t=1}^{T} p_\theta(x_t \mid x$
