A Simple Baseline for Audio-Visual Scene-Aware Dialog

The recently proposed audio-visual scene-aware dialog task paves the way to a more data-driven way of learning virtual assistants, smart speakers and car navigation systems. However, very little is known to date about how to effectively extract meaningful information from a plethora of sensors that pound the computational engine of those devices. Therefore, in this paper, we provide and carefully analyze a simple baseline for audio-visual scene-aware dialog which is trained end-to-end. Our method differentiates in a data-driven manner useful signals from distracting ones using an attention mechanism. We evaluate the proposed approach on the recently introduced and challenging audio-visual scene-aware dataset, and demonstrate the key features that permit to outperform the current state-of-the-art by more than 20% on CIDEr.

💡 Research Summary

The paper addresses the emerging task of Audio‑Visual Scene‑Aware Dialog (AVSD), which requires a system to answer questions about short video clips while taking into account both visual and auditory streams as well as dialog history. While prior work has introduced sophisticated pipelines—often chaining speech recognition, dialog management, and language generation modules—and complex multimodal fusion strategies, little analysis has been offered on how to extract the most useful signals from the abundant sensor data. In response, the authors propose a remarkably simple yet highly effective baseline that is trained end‑to‑end and outperforms the previous state‑of‑the‑art by more than 20 % on the CIDEr metric.

The core ideas are fourfold: (1) the question text alone carries a strong prior that can dominate performance; (2) preserving spatial features of video frames (using either VGG‑19 for static features or I3D‑Kinetics for spatiotemporal features) is crucial—collapsing each frame to a single vector dramatically hurts results; (3) temporally subsampling the video to a small, uniformly spaced set of frames (typically 4–6) improves both efficiency and accuracy; and (4) a high‑order multimodal attention mechanism that jointly considers local evidence, a log‑prior, and cross‑modal evidence across all modalities (audio, question, each video frame) yields a richer representation.

The architecture consists of three components: (i) data representation extraction, (ii) multimodal attention, and (iii) answer generation. For representation, the question is embedded via a word‑embedding layer, audio is processed with a pretrained VGGish network, and each sampled video frame is passed through a pretrained CNN (VGG‑19) or a 3‑D CNN (I3D‑Kinetics) to obtain spatial feature maps. The attention module treats each video frame as a separate modality alongside audio and question. For each modality α, a probability distribution over its entities (words for text, temporal slices for audio, spatial locations for video) is computed as pα(k) ∝ exp(ŵα·πα(k) + lα(k) + cα(k)), where πα is a log‑prior, lα is a learned local score, and cα aggregates cross‑modal interactions via bilinear forms with learned projection matrices Lα and Rβ. This factor‑graph‑style attention yields attended vectors aA, aQ, and aVf for each frame f.

These attended vectors are fed into an “Audio‑Visual LSTM” that processes the audio vector first, followed by the sequence of attended video vectors. The final hidden state (h0, c0) of this LSTM serves as the initial state for a second LSTM that generates the answer word‑by‑word. At each decoding step, the answer LSTM receives the previously generated word, the concatenated attended question and history vector aT, and the current hidden state, producing a distribution over the vocabulary via a fully‑connected layer, dropout, and softmax.

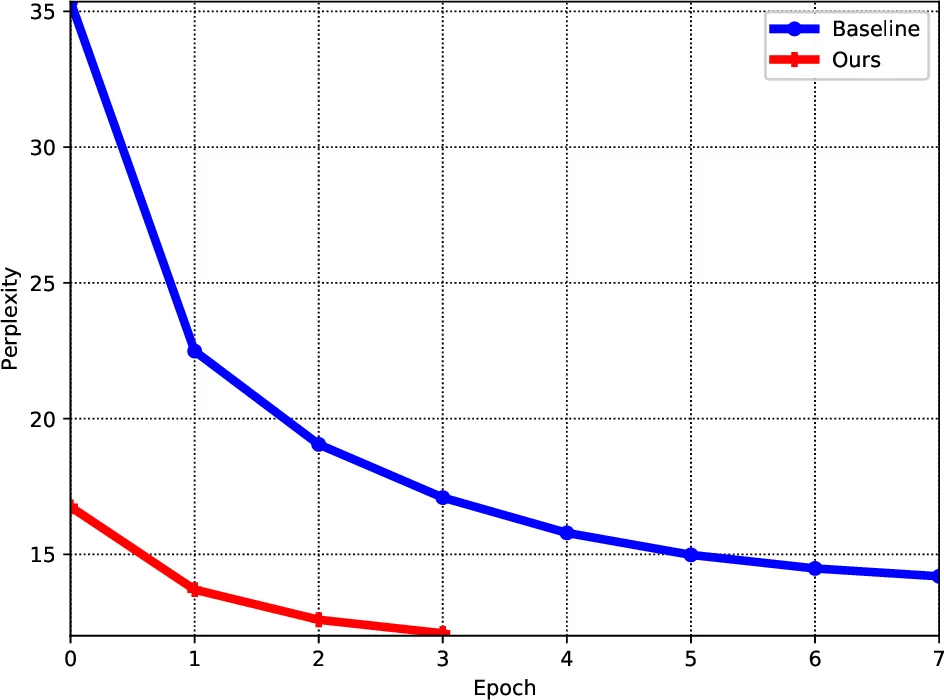

Extensive experiments on the AVSD dataset demonstrate that this minimalist design surpasses the previous multimodal attention baseline (which also used I3D‑Kinetics features) across all standard metrics. CIDEr improves by more than 20 %, while BLEU‑4, METEOR, and ROUGE‑L also show consistent gains. Qualitative visualizations reveal that the attention maps focus on relevant words in the question and on appropriate spatial regions in the video frames; for example, when asked “Is there a person in the video?” the model attends strongly to frames containing a human figure. Moreover, the model’s attention over different frames varies: some frames receive broad attention (capturing overall context), while others focus narrowly on specific objects, illustrating the benefit of treating each frame as an independent modality.

Ablation studies confirm each design choice: removing the question‑only baseline drops performance dramatically, collapsing video frames into a single vector reduces CIDEr by a large margin, using dense temporal sampling (instead of subsampling) hurts both speed and accuracy, and omitting cross‑modal evidence in the attention computation leads to weaker results. Interestingly, adding attention over dialog history did not improve performance, suggesting that the current dataset’s history component is less informative than the visual and auditory streams.

The paper acknowledges limitations: it operates on textual questions rather than raw speech, so integration of speech‑to‑text modules and robustness to ASR errors remain open challenges. Additionally, while the high‑order attention captures interactions among modalities, it does not explicitly model temporal dynamics across frames beyond the simple sequential LSTM; future work could incorporate relational networks or transformer‑style temporal encoders to better capture action sequences.

In conclusion, the authors demonstrate that a carefully designed, end‑to‑end trainable system—leveraging question‑driven priors, spatially rich video features, temporal subsampling, and a principled multimodal attention mechanism—can set a new performance benchmark on AVSD with far less architectural complexity than prior work. This contribution highlights the importance of signal selection and efficient multimodal fusion, offering a solid baseline for future research in audio‑visual dialog systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment