Adaptive Algorithm for Sparse Signal Recovery

Spike and slab priors play a key role in inducing sparsity for sparse signal recovery. The use of such priors results in hard, non-convex, mixed-integer programming problems. Most existing algorithms for these optimization problems rely on simplifying assumptions or relaxations, or incur high computational expense. We propose a new adaptive alternating direction method of multipliers (AADMM) algorithm to directly solve the presented optimization problem. The algorithm is based on the one-to-one mapping between the support and the non-zero elements of the signal. At each step of the algorithm, we update the support by either adding an index to it or removing an index from it, and use the alternating direction method of multipliers to recover the signal corresponding to the updated support. Experiments on synthetic data and real-world images show that the proposed AADMM algorithm outperforms the recently developed iterative convex refinement (ICR) algorithm and is computationally cheaper.

💡 Research Summary

The paper addresses sparse signal recovery using spike‑and‑slab priors, which lead to a non‑convex mixed‑integer optimization problem. By formulating a hierarchical Bayesian model with a Bernoulli indicator ω and a Laplace slab, the authors derive a MAP objective consisting of a data‑fidelity term, an ℓ₁ regularizer, and a weighted sum of ω‑dependent penalties. Because ω is binary, the problem is intrinsically combinatorial. The key insight is the one‑to‑one mapping between the support set S of non‑zero coefficients and the subvector x_S: once the support is fixed, the binary variables drop out and only a convex problem in x_S remains.

The proposed Adaptive Alternating Direction Method of Multipliers (AADMM) alternates between greedy support updates and solving a convex ℓ₁‑regularized subproblem via ADMM. At each iteration, the algorithm evaluates the potential decrease in the objective from adding any index not yet in the support (U_S) or removing any index currently in it (V_S). To avoid exhaustive recomputation, upper‑bound approximations are employed. The index yielding the larger reduction is selected; if neither an addition nor a removal improves the cost, the algorithm stops. Because every accepted update lowers the cost, the objective decreases monotonically and the procedure converges. The support is initialized from the condition γ_i < 0, which forces inclusion of the corresponding indices. The ADMM subproblem solves min_{x_S} ‖y‑A_S x_S‖₂² + λ‖x_S‖₁ + δ_S using standard primal‑dual updates and soft‑thresholding.
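The loop described above can be sketched in Python. This is an illustrative sketch under stated assumptions, not the authors' implementation: it assumes δ_S is the sum of per‑index penalties γ_i over the support, it re‑solves the subproblem for every candidate support instead of using the paper's upper‑bound approximations, and all function names (`soft_threshold`, `admm_lasso`, `support_cost`, `greedy_support_admm`) and the ADMM penalty `rho` are hypothetical.

```python
import numpy as np

def soft_threshold(v, t):
    """Elementwise soft-thresholding, the proximal operator of t * ||.||_1."""
    return np.sign(v) * np.maximum(np.abs(v) - t, 0.0)

def admm_lasso(B, y, lam, rho=1.0, n_iter=200):
    """Approximately solve min_x ||y - B x||_2^2 + lam * ||x||_1 (scaled ADMM)."""
    k = B.shape[1]
    if k == 0:
        return np.zeros(0)
    z = np.zeros(k)                        # sparse copy of x
    u = np.zeros(k)                        # scaled dual variable
    M = 2.0 * B.T @ B + rho * np.eye(k)    # system matrix of the x-update
    Bty2 = 2.0 * B.T @ y
    for _ in range(n_iter):
        x = np.linalg.solve(M, Bty2 + rho * (z - u))
        z = soft_threshold(x + u, lam / rho)
        u = u + x - z
    return z

def support_cost(A, y, S, lam, gamma):
    """Objective for a fixed support S. ASSUMPTION: delta_S = sum of gamma_i over S."""
    idx = sorted(S)
    x_S = admm_lasso(A[:, idx], y, lam)
    r = y - A[:, idx] @ x_S
    return r @ r + lam * np.abs(x_S).sum() + gamma[idx].sum(), x_S

def greedy_support_admm(A, y, lam, gamma, max_steps=50, tol=1e-9):
    """Greedy add/remove search over supports (exhaustive, no upper bounds)."""
    n = A.shape[1]
    S = {i for i in range(n) if gamma[i] < 0}   # indices forced in by gamma_i < 0
    best_cost, x_S = support_cost(A, y, S, lam, gamma)
    for _ in range(max_steps):
        candidates = [S | {i} for i in range(n) if i not in S]
        candidates += [S - {i} for i in S]
        best_T = None
        for T in candidates:
            cost, x_T = support_cost(A, y, T, lam, gamma)
            if cost < best_cost - tol:          # accept only strict improvements
                best_cost, best_T, best_x = cost, T, x_T
        if best_T is None:                      # no single add/remove helps: stop
            break
        S, x_S = best_T, best_x
    x = np.zeros(n)
    x[sorted(S)] = x_S
    return x, S
```

Because only strictly improving moves are accepted, the cost sequence is non‑increasing and the loop cannot cycle, which mirrors the monotonic‑descent argument in the summary. The exhaustive candidate evaluation is the expensive part that the paper's upper bounds are meant to avoid.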

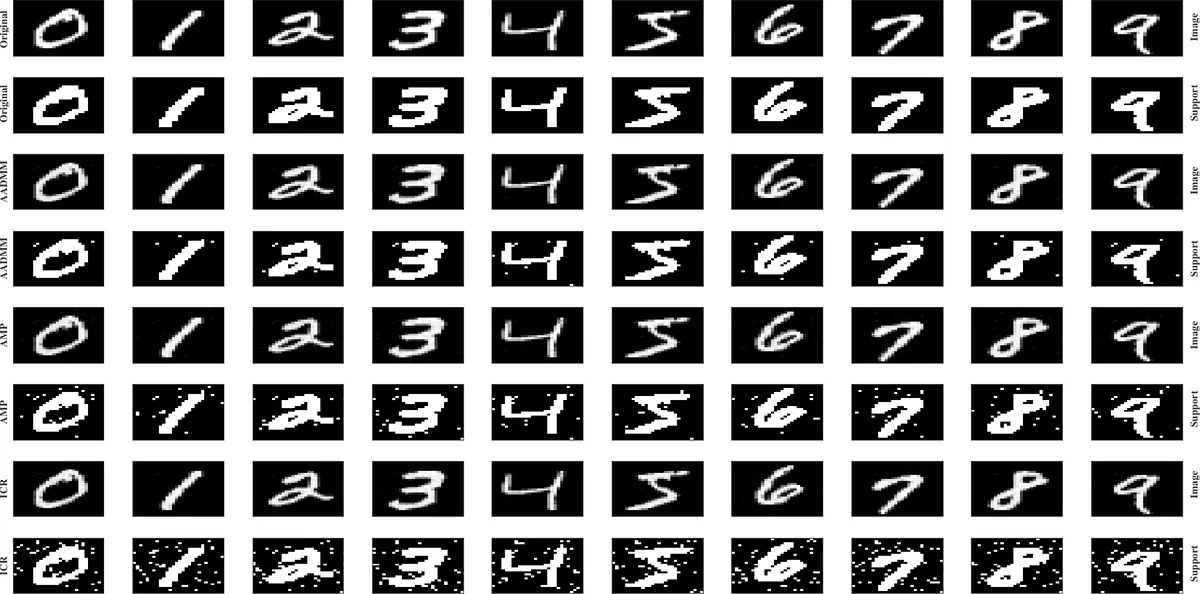

Experimental evaluation on synthetic data and real images (including MRI) compares AADMM with Iterative Convex Refinement (ICR) and Adaptive Matching Pursuit (AMP). Results show that AADMM achieves 2–3 dB higher PSNR than ICR while reducing runtime by roughly 30–40%. Unlike AMP, which relies on ℓ₂ regularization and cannot directly handle the proposed non‑convex formulation, AADMM solves the original problem without relaxation, yielding sparser reconstructions. The paper concludes that the combination of greedy support selection and ADMM offers a practical, theoretically sound solution to spike‑and‑slab based sparse recovery, and suggests extensions such as multi‑scale support strategies, automatic parameter tuning, and GPU acceleration for future work.
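For readers unfamiliar with the metric, PSNR can be computed as below. This is a minimal sketch of the standard definition, assuming images scaled so that the maximum pixel value is `peak`; the function name `psnr` is illustrative, and the summary does not state which peak value or normalization the paper uses.

```python
import numpy as np

def psnr(reference, estimate, peak=1.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(peak^2 / MSE)."""
    ref = np.asarray(reference, dtype=float)
    est = np.asarray(estimate, dtype=float)
    mse = np.mean((ref - est) ** 2)
    if mse == 0:
        return float("inf")   # identical images
    return 10.0 * np.log10(peak ** 2 / mse)
```

Since PSNR is logarithmic in the mean squared error, a gain of about 3 dB corresponds to roughly halving the MSE.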
