Smart, Deep Copy-Paste

In this work, we propose a novel system for smart copy-paste, enabling the synthesis of high-quality results given a masked source image content and a target image context as input. Our system naturally resolves both shading and geometric inconsistencies between source and target image, resulting in a merged result image that features the content from the pasted source image, seamlessly pasted into the target context. Our framework is based on a novel training image transformation procedure that allows to train a deep convolutional neural network end-to-end to automatically learn a representation that is suitable for copy-pasting. Our training procedure works with any image dataset without additional information such as labels, and we demonstrate the effectiveness of our system on two popular datasets, high-resolution face images and the more complex Cityscapes dataset. Our technique outperforms the current state of the art on face images, and we show promising results on the Cityscapes dataset, demonstrating that our system generalizes to much higher resolution than the training data.

💡 Research Summary

The paper introduces a novel “smart deep copy‑paste” system that enables high‑quality image synthesis by seamlessly merging a masked source region into a target context. The core contribution lies in a training data generation pipeline that requires only a single, unlabeled image dataset. For each training sample, a random rectangular mask is placed on an image, the masked region is subjected to a composite transformation T consisting of (i) global color adjustments (brightness, contrast, saturation, hue), (ii) locally varying shading simulated by mixing two independently color‑transformed copies with a random low‑resolution noise mask, and (iii) a small random homography to emulate geometric misalignment, missing content, and duplicated clutter. The transformed patch becomes the “source image” I_S, while the original image with the masked region removed serves as the “target image” I_T. The network receives (I_T, I_S, mask) and learns to predict a residual that, when added to I_S and composited with I_T, reconstructs the original image.

The architecture follows a U‑Net‑style encoder‑decoder with skip connections, outputting the residual map. Training combines an L1 reconstruction loss with a conditional GAN loss, encouraging both pixel‑wise fidelity and realistic texture synthesis. No semantic labels or paired images are needed, making the approach domain‑agnostic.

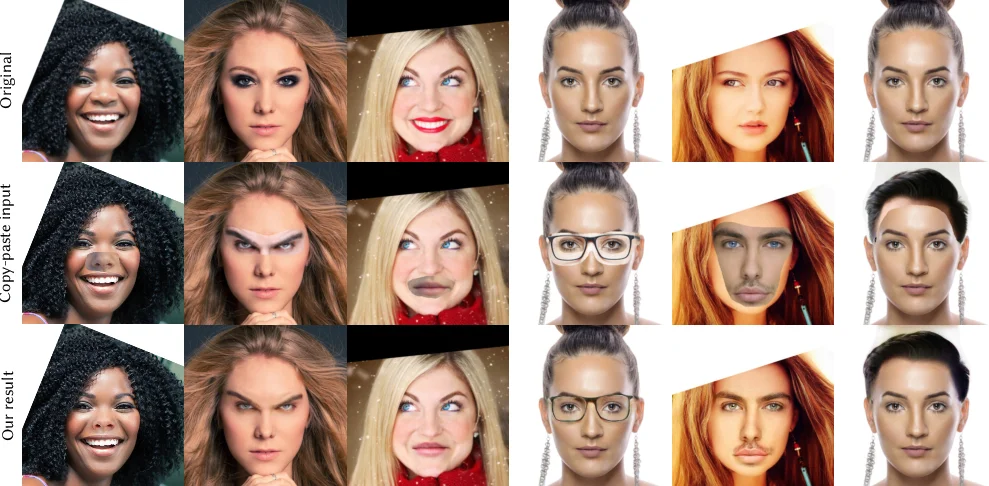

Experiments are conducted on two datasets: a high‑resolution face dataset (21 k images at 512 × 512) and the Cityscapes street‑view dataset (5 k images, down‑sampled to 512 × 1024). On faces, the method outperforms the prior FaceShop system both qualitatively and quantitatively (higher PSNR/SSIM), handling eyes, noses, mouths, and even low‑contrast textures. On Cityscapes, it successfully copies and pastes complex structures such as building facades, road markings, and vehicles, while correcting color mismatches, geometric pose differences, and background clutter. Visual results show smooth boundaries, consistent illumination, and plausible hallucination of missing content when the homography removes parts of the source.

The paper’s strengths include (1) a clever, fully automatic data synthesis strategy that mimics real‑world copy‑paste challenges, (2) an end‑to‑end trainable model that jointly resolves shading and geometry, and (3) demonstrated generalization from faces to high‑resolution urban scenes. Limitations are noted: the transformation range must be carefully balanced—too weak makes the task trivial, too strong reduces it to unconditional inpainting; the homography‑based geometry handling may not capture large 3D pose changes; and training at very high resolutions remains computationally demanding.

Overall, the work advances image editing by removing the need for hand‑crafted datasets or domain‑specific tricks, offering a versatile copy‑paste tool that can be extended to other domains and potentially integrated into consumer‑grade photo‑editing applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment