AdaFlow: Domain-Adaptive Density Estimator with Application to Anomaly Detection and Unpaired Cross-Domain Translation

We tackle unsupervised anomaly detection (UAD), a problem of detecting data that significantly differ from normal data. UAD is typically solved by using density estimation. Recently, deep neural network (DNN)-based density estimators, such as Normali…

Authors: Masataka Yamaguchi, Yuma Koizumi, Noboru Harada

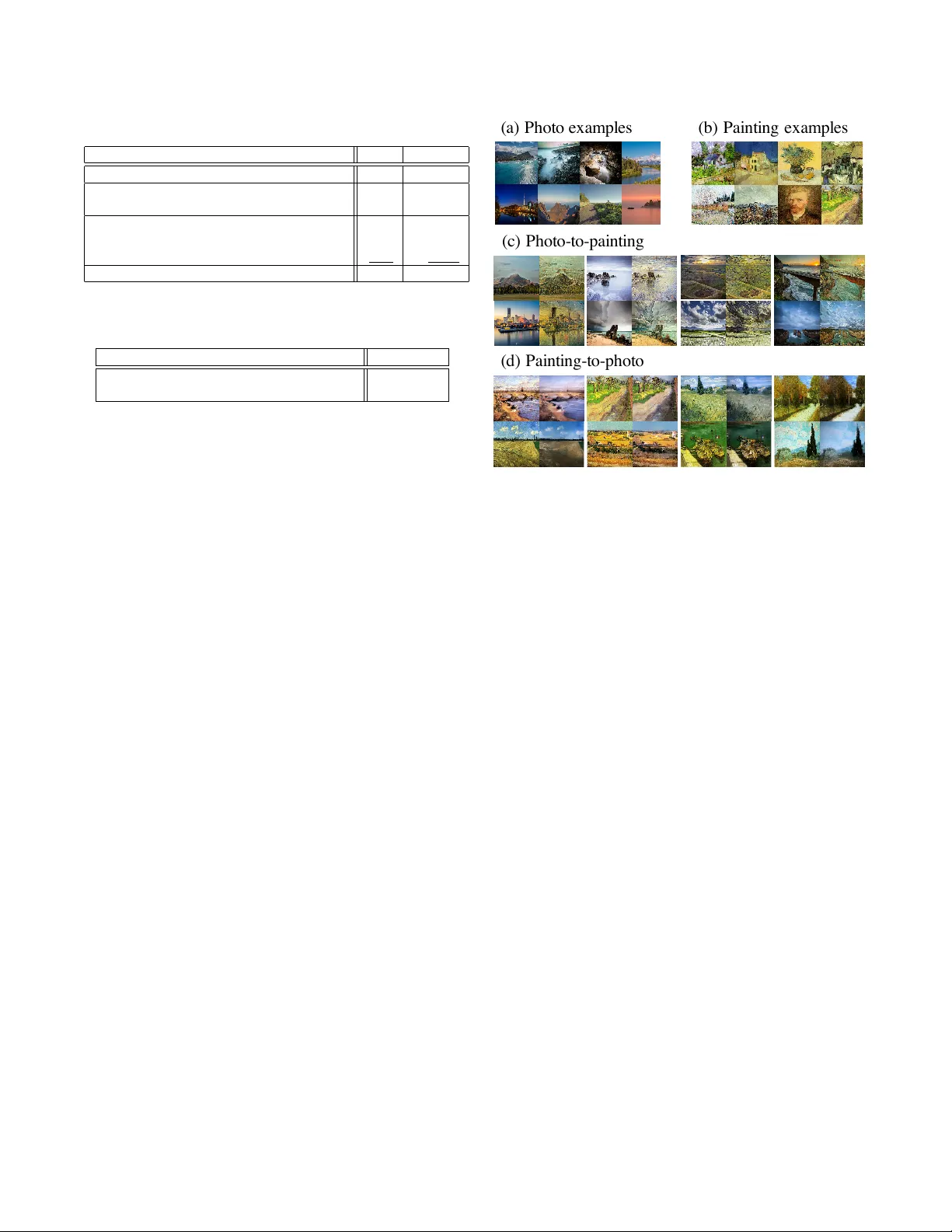

Masataka Yamaguchi (1), Yuma Koizumi (2), and Noboru Harada (2)
1: NTT Communication Science Laboratories, Kanagawa, Japan
2: NTT Media Intelligence Laboratories, Tokyo, Japan

ABSTRACT

We tackle unsupervised anomaly detection (UAD), the problem of detecting data that differ significantly from normal data. UAD is typically solved by using density estimation. Recently, deep neural network (DNN)-based density estimators, such as Normalizing Flows, have been attracting attention. However, one of their drawbacks is the difficulty of adapting them to changes in the distribution of the normal data. To address this difficulty, we propose AdaFlow, a new DNN-based density estimator that can be easily adapted to such changes. AdaFlow is a unified model of a Normalizing Flow and Adaptive Batch Normalizations, a module that enables DNNs to adapt to new distributions. AdaFlow can be adapted to a new distribution by conducting forward propagation only once per sample; hence, it can be used on devices that have limited computational resources. We have confirmed the effectiveness of the proposed model on an anomaly detection in sound task. We also propose a method of applying AdaFlow to the unpaired cross-domain translation problem, in which one has to train a cross-domain translation model with only unpaired samples. We have confirmed that our model can be used for the cross-domain translation problem through experiments on image datasets.

Index Terms: Deep learning, normalizing flow, domain adaptation, anomaly detection, and cross-domain translation.

1. INTRODUCTION

Anomaly detection, also known as outlier detection, is the problem of detecting data that differ significantly from normal data [1–3].
Since such anomalies might indicate symptoms of mistakes or malicious activities, their prompt detection may prevent such problems. Therefore, anomaly detection has received much attention and has been applied for various purposes.

In this paper, we specifically consider unsupervised anomaly detection (UAD), in which only normal data can be used for training anomaly detection models. UAD is typically solved by first training a normal model with normal data and then estimating the deviance of each test sample from the trained model. In the anomaly detection field, many types of normal models have been investigated. Early studies used a Gaussian distribution [4, 5], and more flexible statistical models, such as the Gaussian mixture model (GMM) [6, 7], followed. More recently, deep neural network (DNN)-based methods have been investigated, such as the Auto-Encoder (AE) [8, 9], the Variational Auto-Encoder (VAE) [10–12], and Generative Adversarial Networks (GANs) [13–15].

In the typical UAD setting, one assumes that training and test data are sampled from the same distribution. However, this assumption does not hold in certain practical scenarios. Consider anomaly detection for facility equipment. Typically, such equipment has various operation patterns, and the environmental noise around it may change with factors such as the season and the weather. In this case, the above assumption does not always hold; hence, simply applying existing normal models to such problems may significantly decrease the anomaly detection accuracy. A naïve way to avoid this is to adapt the normal model to the new distribution by fine-tuning it with newly collected normal data.
However, fine-tuning requires high memory and computational costs and cannot be easily conducted on devices installed in facility equipment, which typically have only limited computational resources. Therefore, a more efficient adaptation method is needed.

To address this problem, we propose a new density estimator named AdaFlow, a unified model of Normalizing Flows (NFs) [16, 17], a powerful DNN-based density estimator, and Adaptive Batch Normalization (AdaBN) [18], a module that enables DNNs to handle data from different domains. AdaBN alleviates the difference between domains by scaling and shifting each domain's input data so that each domain's mean and variance are zero and one, respectively. Since AdaBN can be adapted to a new domain by just adjusting its statistics with the domain's data, the adaptation step of AdaFlow requires only one forward propagation per sample. Therefore, AdaFlow can be used on devices that have limited computational resources.

We also propose a method of applying AdaFlow to the unpaired cross-domain translation problem, in which one has to train a cross-domain translation model with only unpaired data. We show the effectiveness of using AdaFlow for this problem through cross-domain translation experiments on image datasets.

2. RELATED WORK

2.1. Unsupervised anomaly detection

In UAD, the deviation of an observation from a normal model is computed; this deviation is often called the “anomaly score”. One way of computing anomaly scores is the density estimation-based approach. This approach first trains a density estimator $q_\theta(\cdot)$, such as a Gaussian distribution function, with normal data, and then computes the negative log-likelihood (NLL) of each test sample $\mathbf{x} \in \mathbb{R}^D$ under $q_\theta(\cdot)$. The NLL is used as the anomaly score $A(\mathbf{x}, \theta)$, i.e.,

$$A(\mathbf{x}, \theta) = -\ln q_\theta(\mathbf{x}). \tag{1}$$

Then, $\mathbf{x}$ is determined to be anomalous when the anomaly score reaches or exceeds a pre-defined threshold $\phi$:

$$H(\mathbf{x}, \phi) = \begin{cases} 0 \ (\text{Normal}) & A(\mathbf{x}, \theta) < \phi \\ 1 \ (\text{Anomaly}) & A(\mathbf{x}, \theta) \geq \phi. \end{cases} \tag{2}$$

Recently, deep learning has also been investigated for defining normal models for UAD. Several studies on deep-learning-based UAD employed an AE [8, 9] (or a VAE [11, 12]). The AE-based anomaly detection framework defines the anomaly score as follows:

$$A(\mathbf{x}, \theta) = \left\| \mathbf{x} - D_{\theta_D}(E_{\theta_E}(\mathbf{x})) \right\|^2, \tag{3}$$

where $\|\cdot\|$ denotes the $L_2$ norm, $E$ and $D$ are the encoder and decoder of the AE, and $\theta_E$ and $\theta_D$ are their parameters, namely $\theta = \{\theta_E, \theta_D\}$. Then, $\theta$ is trained to minimize the anomaly scores of normal data as follows:

$$\theta \leftarrow \arg\min_\theta \frac{1}{N} \sum_{n=1}^{N} A(\mathbf{x}_n, \theta), \tag{4}$$

where $\mathbf{x}_n$ is the $n$-th training sample and $N$ is the number of training samples.

Although it has been empirically shown that AE-based methods can detect anomalies, one drawback is that there is no guarantee that minimizing Eq. (4) makes the anomaly scores of normal data lower than those of anomalous data, because anomalous data are not considered in Eq. (4). In contrast, in the density estimation-based approach, minimizing the NLL of normal data encourages the NLL of other data, including anomalous data, to increase, since the likelihood always integrates to 1 over the input space. Therefore, instead of the AE-based approach, we adopt the density estimation-based approach. Specifically, in this paper, we adopt a Normalizing Flow (NF), a flexible DNN-based density estimator. We explain its details in Section 3.

2.2. Domain adaptation on DNN-based density estimator

Although a DNN is a powerful tool for anomaly score computation, it may be problematic for practical use. One problem occurs when adjusting the normal model to a new domain.
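The effect of a domain change on the density-based score of Eqs. (1) and (2) can be illustrated with a toy example. The sketch below is not from the paper: it assumes a diagonal Gaussian as the normal model, synthetic data, and a threshold set at the 99th percentile of the training scores (a hypothetical choice), and all function names are illustrative.

```python
import numpy as np

def fit_diag_gaussian(x):
    """Fit a diagonal Gaussian q_theta to normal data x of shape (N, D)."""
    return x.mean(axis=0), x.var(axis=0) + 1e-6  # small floor avoids division by zero

def anomaly_score(x, mean, var):
    """Eq. (1): A(x, theta) = -ln q_theta(x), evaluated for each row of x."""
    return 0.5 * np.sum(np.log(2.0 * np.pi * var) + (x - mean) ** 2 / var, axis=1)

def detect(scores, phi):
    """Eq. (2): 1 (anomaly) if the score is >= phi, otherwise 0 (normal)."""
    return (scores >= phi).astype(int)

rng = np.random.default_rng(0)
train = rng.normal(0.0, 1.0, size=(2000, 8))       # normal data, original domain
same_domain = rng.normal(0.0, 1.0, size=(500, 8))  # normal data, same domain
new_domain = rng.normal(1.5, 2.0, size=(500, 8))   # normal data, shifted domain

mean, var = fit_diag_gaussian(train)
# Hypothetical threshold choice: 99th percentile of the training scores.
phi = np.quantile(anomaly_score(train, mean, var), 0.99)

false_alarm_same = detect(anomaly_score(same_domain, mean, var), phi).mean()
false_alarm_new = detect(anomaly_score(new_domain, mean, var), phi).mean()
print(false_alarm_same, false_alarm_new)
```

In this toy setting, normal samples from the shifted domain are flagged far more often than normal samples from the original domain, although neither set contains anomalies. This is the failure mode that motivates adapting the normal model.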
The distribution of normal data often varies due to aging of the target and/or changes in environmental noise. Therefore, we need to adapt the normal model to such fluctuations.

Let us formulate this problem. Suppose that we have a normal model $q_\theta$ trained on $K \geq 1$ dataset(s), each collected in an individual domain. When the distribution changes, we need to adapt $q_\theta$ to the new domain (the $(K+1)$-th domain) to obtain a new normal model $q'_\theta$. This problem can be regarded as analogous to domain adaptation [19]. Although several domain adaptation methods have been investigated [20–22], most require iterative optimization and a large amount of memory, so they cannot be easily used on devices deployed in most practical conditions, which typically have limited computational resources. Therefore, in terms of computational cost and required memory, a more efficient adaptation method is needed.

3. PROPOSED METHOD

3.1. Normalizing Flow

We adopt a Normalizing Flow (NF) as a density estimator. An NF represents a probability density by transforming a base probability density function $q_0(\mathbf{z}^{(0)})$ with a series of $M$ invertible projections $\{f_m\}_{m=1}^{M}$ with parameters $\{\theta_m\}_{m=1}^{M}$. In an NF, $\mathbf{x}$ is regarded as a variable transformed by $\{f_m\}_{m=1}^{M}$ as follows:

$$\mathbf{x} = \mathbf{z}^{(M)} = f_{M,\theta_M} \circ \cdots \circ f_{1,\theta_1}(\mathbf{z}^{(0)}); \tag{5}$$

thus, $\mathbf{z}^{(0)}$ can be obtained by the inverse transform of Eq. (5). Following prior works [16, 17], we employ a Gaussian distribution $\mathcal{N}(\mathbf{z}^{(0)}; \mathbf{0}, \mathbf{I})$ for $q_0(\mathbf{z}^{(0)})$. Then, the likelihood of a given sample $\mathbf{x}$ is obtained by repeatedly applying the change-of-variables rule as follows:

$$q_\theta(\mathbf{x}) = q_0(\mathbf{z}^{(0)}) \prod_{m=1}^{M} \left| \det \frac{\partial f_{m,\theta_m}}{\partial \mathbf{z}^{(m-1)}} \right|^{-1}. \tag{6}$$

Thus, the anomaly score computed by the NF can be expressed as

$$A(\mathbf{x}, \theta) = -\ln q_0(\mathbf{z}^{(0)}) - \sum_{m=1}^{M} \ln \left| \det \frac{\partial f_{m,\theta_m}}{\partial \mathbf{z}^{(m-1)}} \right|^{-1}. \tag{7}$$

The parameters $\theta = \{\theta_m\}_{m=1}^{M}$ can be trained by minimizing the anomaly scores as follows:

$$\theta \leftarrow \arg\min_\theta \sum_{k=1}^{K} \frac{1}{N_k} \sum_{n=1}^{N_k} A(\mathbf{x}_{n,k}, \theta), \tag{8}$$

where $\mathbf{x}_{n,k}$ and $N_k$ are the $n$-th training sample and the number of training samples of the $k$-th dataset, respectively.

Fig. 1. Simplified concept of AdaFlow: (a) pre-training, (b) adaptation, and (c) cross-domain translation. In pre-training, all parameters $\{\theta_m\}_{m=1}^{3}$ are trained with $K = 2$ domain datasets. For adaptation, the BN statistics of the second projection $f_2$ are computed from the third domain dataset. For cross-domain translation, the BN statistics of the input domain are used for the inverse projection, and those of the target domain are used for the forward projection.

3.2. AdaFlow

We consider domain adaptation for NFs. A naïve method of adapting an NF to the $(K+1)$-th dataset is to fine-tune all $\{\theta_m\}_{m=1}^{M}$ with that dataset. However, fine-tuning requires high memory and computational costs and cannot be easily conducted on devices installed in facility equipment, which typically have only limited computational resources.
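As a concrete check of the change-of-variables rule in Eqs. (6) and (7), consider the simplest case of a single affine projection. The sketch below is an illustration under stated assumptions, not the paper's implementation: it assumes $M = 1$ and an inverse projection $\mathbf{z}^{(0)} = \mathbf{W}\mathbf{x} + \mathbf{b}$ with arbitrary values for `W` and `b`, and the function names are hypothetical. In this case the flow density is a Gaussian with mean $-\mathbf{W}^{-1}\mathbf{b}$ and covariance $(\mathbf{W}^\top\mathbf{W})^{-1}$, so the score can be compared with a directly computed Gaussian NLL.

```python
import numpy as np

def nf_anomaly_score(x, W, b):
    """Eq. (7) for M = 1 with the inverse projection z0 = W x + b.

    The Jacobian of f (latent -> data) is W^{-1}, so |det df/dz|^{-1} = |det W|.
    """
    z0 = x @ W.T + b                                    # inverse transform of Eq. (5)
    d = x.shape[1]
    log_q0 = -0.5 * (np.sum(z0 ** 2, axis=1) + d * np.log(2.0 * np.pi))  # ln N(z0; 0, I)
    _, logabsdet = np.linalg.slogdet(W)                 # ln |det W|
    return -log_q0 - logabsdet

def gaussian_nll(x, mean, cov):
    """Reference value: -ln N(x; mean, cov), computed directly."""
    d = x.shape[1]
    diff = x - mean
    maha = np.sum((diff @ np.linalg.inv(cov)) * diff, axis=1)
    _, logdet = np.linalg.slogdet(cov)
    return 0.5 * (maha + logdet + d * np.log(2.0 * np.pi))

rng = np.random.default_rng(0)
W = rng.normal(size=(3, 3)) + 3.0 * np.eye(3)   # arbitrary invertible matrix (assumption)
b = rng.normal(size=3)
x = rng.normal(size=(5, 3))

# x = W^{-1}(z0 - b) with z0 ~ N(0, I) is Gaussian with this mean and covariance.
mean = -np.linalg.solve(W, b)
cov = np.linalg.inv(W.T @ W)

scores = nf_anomaly_score(x, W, b)
reference = gaussian_nll(x, mean, cov)
print(np.max(np.abs(scores - reference)))
```

The two computations agree up to floating-point error, which is what Eq. (6) predicts for an affine flow. Deeper flows add one log-determinant term per projection in the same way.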
A more efficient adaptation method is therefore needed.

To address this problem, we propose AdaFlow, a Normalizing Flow-based density estimator that utilizes Adaptive Batch Normalizations (AdaBNs). An AdaBN converts data as follows:

$$f^{-1}_{m,\theta_m}(\mathbf{z}) = \mathrm{diag}(\boldsymbol{\gamma})\, \mathrm{diag}(\boldsymbol{\sigma}_k)^{-\frac{1}{2}} (\mathbf{z} - \boldsymbol{\mu}_k) + \boldsymbol{\beta}, \tag{9}$$

where $\boldsymbol{\mu}_k \in \mathbb{R}^D$ and $\boldsymbol{\sigma}_k \in \mathbb{R}^D$ are the mean and variance vectors computed with the data in the $k$-th domain, respectively, and $\boldsymbol{\gamma} \in \mathbb{R}^D$ and $\boldsymbol{\beta} \in \mathbb{R}^D$ are learnable parameters shared by all domains. The function $\mathrm{diag}(\boldsymbol{\lambda})$ converts $\boldsymbol{\lambda}$ into a diagonal matrix whose $(i, j)$-th entry is $(\boldsymbol{\lambda})_i$ if $i = j$ and $0$ otherwise. Note that $\boldsymbol{\mu}_k$ and $\boldsymbol{\sigma}_k$ are calculated individually for each domain, whereas the same $\boldsymbol{\gamma}$ and $\boldsymbol{\beta}$ are used for all $K$ domains. By training all the projections in this manner, $\boldsymbol{\mu}_k$ and $\boldsymbol{\sigma}_k$ alleviate the difference between domains up to the second-order moment in the hidden layers. In addition, adapting AdaFlow to a given $(K+1)$-th domain can be achieved by just computing the AdaBNs' statistics $\boldsymbol{\mu}_{K+1}$ and $\boldsymbol{\sigma}_{K+1}$ with data sampled from that domain.

We summarize the overall procedure of pre-training and adapting AdaFlow as follows and in Fig. 1: (i) pre-train the AdaFlow projections with $K$ datasets by Eq. (8), and (ii) adapt the AdaBN statistics $\boldsymbol{\mu}'$ and $\boldsymbol{\sigma}'$ with the $(K+1)$-th dataset.

3.3. Examples of projection implementations

We next explain projections that can be used for implementing AdaFlow. If each projection is easy to invert and the determinant of its Jacobian is easy to compute, exact density estimation at each data point can be conducted easily. We introduce two projections that satisfy these requirements.

Linear Transformation: A linear transformation can be used as a projection for NFs as follows:

$$f^{-1}_{m,\theta_m}(\mathbf{z}) = \mathbf{W}\mathbf{z} + \mathbf{b}, \tag{10}$$

where $\mathbf{W} \in \mathbb{R}^{D \times D}$ and $\mathbf{b} \in \mathbb{R}^D$ are a weight matrix and a bias vector, respectively. The determinant of the Jacobian of this projection is $|\mathbf{W}|^{-1} = 1/|\mathbf{W}|$.
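Combining the two projections introduced so far, the AdaBN of Eq. (9) and the linear transformation of Eq. (10), gives a minimal flow on which the adaptation step (ii) can be tried out. The sketch below is a simplified illustration, not the authors' implementation: the class name `TinyAdaFlow`, the fixed (untrained) values of `W`, `gamma`, and `beta`, the `eps` variance floor, and the synthetic domains are all assumptions.

```python
import numpy as np

class TinyAdaFlow:
    """Linear projection (Eq. (10)) followed by AdaBN (Eq. (9)); illustrative only."""

    def __init__(self, W, b):
        self.W, self.b = W, b              # shared; would be learned via Eq. (8)
        self.gamma = np.ones(len(b))       # shared AdaBN scale (kept fixed here)
        self.beta = np.zeros(len(b))       # shared AdaBN shift (kept fixed here)
        self.stats = {}                    # domain -> (mu_k, sigma_k); sigma_k is a variance

    def adapt(self, domain, x, eps=1e-5):
        """Adaptation: one forward pass that records the AdaBN input statistics."""
        h = x @ self.W.T + self.b
        self.stats[domain] = (h.mean(axis=0), h.var(axis=0) + eps)

    def nll(self, x, domain):
        """Anomaly score of Eq. (7) under the statistics of the given domain."""
        mu, var = self.stats[domain]
        h = x @ self.W.T + self.b
        z0 = self.gamma * (h - mu) / np.sqrt(var) + self.beta
        log_q0 = -0.5 * np.sum(z0 ** 2 + np.log(2.0 * np.pi), axis=1)
        # ln|det dz0/dx| = ln|det W| + sum_i (ln|gamma_i| - 0.5 * ln sigma_{k,i})
        log_det = np.linalg.slogdet(self.W)[1] + np.sum(
            np.log(np.abs(self.gamma)) - 0.5 * np.log(var)
        )
        return -(log_q0 + log_det)

rng = np.random.default_rng(0)
dim = 4
W = 2.0 * np.eye(dim) + 0.3 * rng.normal(size=(dim, dim))  # arbitrary weights (assumption)
flow = TinyAdaFlow(W, np.zeros(dim))
flow.adapt("pretrain", rng.normal(0.0, 1.0, size=(1000, dim)))

new_domain = rng.normal(3.0, 0.5, size=(1100, dim))   # stand-in for the (K+1)-th domain
flow.adapt("new", new_domain[:100])                   # 100 adaptation samples, no gradients

held_out = new_domain[100:]
nll_before = flow.nll(held_out, "pretrain").mean()    # scored with the old statistics
nll_after = flow.nll(held_out, "new").mean()          # scored with the adapted statistics
print(nll_before, nll_after)
```

Here adaptation only recomputes a mean and a variance from forward activations, so no parameter is updated by gradient descent. In a deeper flow, the statistics of later AdaBN layers would be collected on the hidden activations in the same way.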
Since computing this determinant directly has a computational complexity of $O(D^3)$, we reparametrize $\mathbf{W}$ in LDU decomposition form, $\mathbf{W} = \mathbf{L}\, \mathrm{diag}(\mathbf{d})\, \mathbf{U}$, where $\mathbf{L}$ and $\mathbf{U}$ are lower and upper triangular matrices, respectively, whose diagonal elements are all one, and $\mathbf{d} \in \mathbb{R}^D$. Since $|\mathbf{U}| = |\mathbf{L}| = 1$ and $|\mathrm{diag}(\mathbf{d})| = \prod_{i=1}^{D} (\mathbf{d})_i$, this reparametrization reduces the computational complexity of the determinant of the Jacobian to $O(D)$.

Leaky ReLU: A Leaky Rectified Linear Unit (Leaky ReLU) is a module used in DNNs, defined as follows:

$$f^{-1}_{m,\theta_m}(\mathbf{z}) = \max(\mathbf{z}, \alpha \mathbf{z}), \tag{11}$$

where $\alpha \in (0, 1)$ is a hyperparameter, and $\max(\boldsymbol{\lambda}^{(1)}, \boldsymbol{\lambda}^{(2)})$ outputs the element-wise maximum of $\boldsymbol{\lambda}^{(1)}$ and $\boldsymbol{\lambda}^{(2)}$. Since the Leaky ReLU is easy to invert and the determinant of its Jacobian is easy to compute, it can also be used as a projection for NFs. The determinant of its Jacobian is $\alpha^{-\tau}$, where $\tau$ is the number of elements that are less than 0.

4. EXPERIMENTS

4.1. Experimental Settings

4.1.1. Dataset

Fig. 2. Photograph of toy car (left) and arrangement of toy car and loudspeakers for simulating environmental noise (right). Labels in the diagram: speaker, car model; distances 2.0 m, 1.0 m, 6.6 m, and 4.64 m.

To verify the effectiveness of AdaFlow, we conducted experiments on an anomaly detection in sound (ADS) task. For the training and test datasets, we constructed a toy-car-running sound dataset in a simulated factory room, as shown in Fig. 2. The toy cars were placed in the room, and two loudspeakers were arranged around a toy car to emit factory noise. For the target and noise sounds, we individually collected four types of car-running sounds and four types of factory noise emitted from the two loudspeakers. Then, $K = 9$ types of pre-training datasets were generated by mixing three of the four types of car sounds with three of the four types of environmental noise at a signal-to-noise ratio (SNR) of 0 dB.
The adaptation and test datasets were generated by mixing the remaining car sound and environmental noise at an SNR of 0 dB. All sounds were recorded at a sampling rate of 16 kHz.

Since it is difficult to collect various types of anomalous sounds, we created synthetic anomalous sounds in the same manner as in a previous study [9]. A part of the training dataset for the task of DCASE-2016 [23, 24] was used as anomalous sounds; 140 sounds (including slamming doors, knocking at doors, keys put on a table, keystrokes on a keyboard, drawers being opened, pages being turned, and phones ringing) were selected. To synthesize the test data, the anomalous sounds were mixed with normal sounds at an anomaly-to-normal power ratio (ANR) of -20 dB. We used the area under the ROC curve (AUROC) as an evaluation metric. We also used the negative log-likelihood (NLL). Note that a higher AUROC and a lower NLL indicate a better model.

The frame size of the discrete Fourier transform was 512 points, and the frame was shifted every 256 samples. The input vectors were the log amplitude spectra of 64-dimensional Mel-filterbank outputs with a context-window size of 5. Thus, with the current frame and five context frames on each side, the dimension of the input vector $\mathbf{x}$ was $D = 64 \times (2 \times 5 + 1) = 704$.

4.1.2. Comparison methods

We compared the following models.

• AdaFlow: each model is first trained with data sampled from the nine pre-training datasets and then adapted with data sampled from the target dataset. The architecture is a sequence of linear transformation, AdaBN, Leaky ReLU, linear transformation, and AdaBN. For adapting this model, the number of samples used was set to N = 10, 100, and 1000.
• Normalizing Flow: each model is trained with data sampled from the nine pre-training datasets (the target dataset is not included). The architecture is a sequence of linear transformation, BN, Leaky ReLU, linear transformation, and BN.
• Normalizing Flow (fine-tuned): a model is first trained in the same manner as above and then fine-tuned with data sampled from the target dataset. The architecture is the same as above. For fine-tuning this model, the number of samples used was set to N = 1000.
• Auto-encoder: each model is trained with data sampled from the nine pre-training datasets. Since this model cannot be used for density estimation, we only evaluate AUROC. The architecture is a sequence of linear transformation (output dimension 128), ReLU, linear transformation (output dimension 64), ReLU, linear transformation (output dimension 128), ReLU, and linear transformation (output dimension 704).

Table 1. Results from anomaly detection experiments.

| Method | NLL | AUROC |
|---|---|---|
| (Chance Rate) | N/A | 0.5 |
| Norm. Flow (Trained with 9 other datasets) | 53.9 | 0.835 |
| Auto-encoder (Trained with 9 other datasets) | N/A | 0.805 |
| AdaFlow (Adapted with 10 samples) | 92.4 | 0.816 |
| AdaFlow (Adapted with 100 samples) | 21.4 | 0.875 |
| AdaFlow (Adapted with 1000 samples) | 15.3 | 0.882 |
| Norm. Flow (Fine-tuned with 1000 samples) | 13.9 | 0.887 |

Table 2. Computational time for adapting each model to the target dataset. We ran these experiments with an Intel Xeon CPU (2.30 GHz) on a single thread.

| Method | Time [sec.] |
|---|---|
| Norm. Flow (Fine-tuned with 1000 samples) | 3.23 |
| AdaFlow (Adapted with 1000 samples) | 0.09 |

4.2. Objective evaluations

The experimental results are shown in Tables 1 and 2. From these results, we observed the following:

• Both Normalizing Flows and AdaFlow outperformed the Auto-encoder in AUROC. This observation indicates the superiority of Normalizing Flows over the Auto-encoder in anomaly detection.
• AdaFlow outperformed the Normalizing Flow trained only on the nine pre-training datasets when it was adapted with 100 or more samples (AUROC 0.875 and 0.882 vs. 0.835); with only 10 adaptation samples, it was worse on both metrics (Table 1). This indicates the superiority of AdaFlow over the non-fine-tuned Normalizing Flow when enough adaptation samples are available.
• The larger the amount of data used for adapting AdaFlow, the better both metrics were.
This indicates that the amount of data used for adaptation should be as large as possible.

• AdaFlow can be adapted to a new dataset about 36 times faster than fine-tuning-based Normalizing Flow adaptation (0.09 s versus 3.23 s in Table 2), with only a slight decrease in accuracy. This indicates that AdaFlow is nearly as accurate as, yet much more efficient than, fine-tuning-based adaptation.

5. APPLICATION TO UNPAIRED CROSS-DOMAIN TRANSLATION

Though AdaFlow was originally designed for conducting density estimation on multiple domains, we demonstrate that it can also be used for the unpaired cross-domain translation problem, in which one has to train a cross-domain translation model without paired data.

We propose the unpaired cross-domain translation framework with AdaFlow in Fig. 1 (c). Given a trained AdaFlow model, data belonging to one domain is first projected to the latent space with that domain's AdaBN statistics, and the obtained latent variable is then reprojected to the data space with the target domain's AdaBN statistics.

Fig. 3. Result examples of unpaired cross-domain translation: (a) training data examples of photos, (b) training data examples of paintings, (c) translation result examples of photo to painting, and (d) translation result examples of painting to photo. In both (c) and (d), input images are shown on the left side, and output images are shown on the right side. Best viewed on a monitor.

We used two datasets for these experiments: the first one consisted of 400 photos, and the second one consisted of 400 paintings by Van Gogh. Examples are shown in Fig. 3 (a) and (b). As an architecture for AdaFlow, we employed a variant of Glow [25], in which activation normalization layers are replaced with AdaBN. The cross-domain translation results are shown in Fig. 3 (c, d).
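The translation procedure described in this section can be illustrated with a minimal sketch. It is not the Glow variant used in the experiments: it assumes the small architecture from Sec. 4.1.2 (linear transformation, AdaBN, leaky ReLU, linear transformation, AdaBN), random invertible weights in place of trained ones, and toy Gaussian data in place of images. The names `ToyAdaFlow`, `adapt`, and `translate` are hypothetical and introduced only for illustration.

```python
import numpy as np


class ToyAdaFlow:
    """Hypothetical flow: linear -> AdaBN -> leaky ReLU -> linear -> AdaBN."""

    def __init__(self, dim, seed=0, slope=0.1, eps=1e-5):
        rng = np.random.default_rng(seed)
        # Assumption: random near-identity matrices stand in for trained weights.
        self.weights = [np.eye(dim) + 0.1 * rng.standard_normal((dim, dim))
                        for _ in range(2)]
        self.slope, self.eps = slope, eps

    def adapt(self, x):
        """Estimate per-layer AdaBN statistics for one domain in one forward pass."""
        stats, h = [], x
        for i, w in enumerate(self.weights):
            h = h @ w.T
            mean, var = h.mean(axis=0), h.var(axis=0)
            stats.append((mean, var))
            h = (h - mean) / np.sqrt(var + self.eps)
            if i == 0:  # leaky ReLU only after the first AdaBN
                h = np.where(h > 0, h, self.slope * h)
        return stats

    def forward(self, x, stats):
        """Project data to the latent space using a domain's AdaBN statistics."""
        h = x
        for i, (w, (mean, var)) in enumerate(zip(self.weights, stats)):
            h = (h @ w.T - mean) / np.sqrt(var + self.eps)
            if i == 0:
                h = np.where(h > 0, h, self.slope * h)
        return h

    def inverse(self, z, stats):
        """Reproject a latent variable to the data space using a domain's statistics."""
        h = z
        for i in reversed(range(len(self.weights))):
            if i == 0:  # undo the leaky ReLU
                h = np.where(h > 0, h, h / self.slope)
            mean, var = stats[i]
            h = h * np.sqrt(var + self.eps) + mean
            h = np.linalg.solve(self.weights[i], h.T).T  # undo h @ w.T
        return h


def translate(flow, x, src_stats, tgt_stats):
    """Source data -> latent (source statistics) -> target data (target statistics)."""
    return flow.inverse(flow.forward(x, src_stats), tgt_stats)


# Toy stand-ins for the two unpaired domains (assumption: 4-dimensional Gaussians).
rng = np.random.default_rng(1)
photos = rng.normal(0.0, 1.0, size=(400, 4))
paintings = rng.normal(3.0, 2.0, size=(400, 4))

flow = ToyAdaFlow(dim=4)
photo_stats = flow.adapt(photos)        # one forward pass per domain
painting_stats = flow.adapt(paintings)
fake_paintings = translate(flow, photos, photo_stats, painting_stats)
```

In this sketch the weights are shared by both domains and only the AdaBN statistics differ, so supporting an additional domain costs one forward pass over its samples. Because every layer is invertible, translating `fake_paintings` back with the statistics swapped recovers `photos` up to numerical precision.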
The results in Fig. 3 show that unpaired cross-domain translation can be achieved via AdaFlow, even though it is trained without paired data. These results indicate that AdaFlow can be a density-based alternative to other methods for this problem, such as CycleGAN [26].

6. CONCLUSIONS

We proposed a new DNN-based density estimator called AdaFlow, a unified model of the NF and AdaBN. Since AdaFlow can be adapted to a new domain by just adjusting the statistics used in AdaBNs, we can avoid iterative parameter updates for adaptation, unlike fine-tuning. Therefore, fast and computationally inexpensive domain adaptation is achieved. We confirmed the effectiveness of the proposed method through an anomaly detection task on sound data. We also proposed a method of applying AdaFlow to the unpaired cross-domain translation problem. We demonstrated the effectiveness of using AdaFlow for the task through cross-domain translation experiments on photo and painting datasets.

AdaFlow has the potential to resolve some problems of other important tasks. One possible example is source enhancement [27–29]. It is known that the performance of DNN-based source enhancement is degraded when target/noise characteristics of test data are different from those of training data. This problem is also a domain-adaptation problem; thus, it might be resolved by using AdaFlow. Therefore, in the future, we plan to apply AdaFlow to other tasks including source enhancement.

7. REFERENCES

[1] Victoria Hodge and Jim Austin, “A survey of outlier detection methodologies,” Artificial intelligence review, vol. 22, no. 2, pp. 85–126, 2004.
[2] Animesh Patcha and Jung-Min Park, “An overview of anomaly detection techniques: Existing solutions and latest technological trends,” Computer networks, vol. 51, no. 12, pp. 3448–3470, 2007.
[3] Jiawei Han, Jian Pei, and Micheline Kamber, Data mining: concepts and techniques, Elsevier, 2011.
[4] Walter Andrew Shewhart, Economic control of quality of manufactured product, ASQ Quality Press, 1931.
[5] Bovas Abraham and George EP Box, “Bayesian analysis of some outlier problems in time series,” Biometrika, vol. 66, no. 2, pp. 229–236, 1979.
[6] Deepak Agarwal, “Detecting anomalies in cross-classified streams: a bayesian approach,” Knowledge and information systems, vol. 11, no. 1, pp. 29–44, 2007.
[7] Yuma Koizumi, Shoichiro Saito, Hisashi Uematsu, and Noboru Harada, “Optimizing acoustic feature extractor for anomalous sound detection based on neyman-pearson lemma,” in EUSIPCO, 2017.
[8] Chong Zhou and Randy C Paffenroth, “Anomaly detection with robust deep autoencoders,” in KDD, 2017.
[9] Yuma Koizumi, Shoichiro Saito, Hisashi Uematsu, Yuta Kawachi, and Noboru Harada, “Unsupervised detection of anomalous sound based on deep learning and the neyman-pearson lemma,” IEEE/ACM Trans. ASLP, 2018.
[10] Diederik P Kingma and Max Welling, “Auto-encoding variational bayes,” in ICLR, 2014.
[11] Jinwon An and Sungzoon Cho, “Variational autoencoder based anomaly detection using reconstruction probability,” Special Lecture on IE, vol. 2, pp. 1–18, 2015.
[12] Yuta Kawachi, Yuma Koizumi, and Noboru Harada, “Complementary set variational autoencoder for supervised anomaly detection,” in ICASSP, 2018.
[13] Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio, “Generative adversarial nets,” in NIPS, 2014.
[14] Thomas Schlegl, Philipp Seeböck, Sebastian M Waldstein, Ursula Schmidt-Erfurth, and Georg Langs, “Unsupervised anomaly detection with generative adversarial networks to guide marker discovery,” in IPMI, 2017.
[15] Swee Kiat Lim, Yi Loo, Ngoc-Trung Tran, Ngai-Man Cheung, Gemma Roig, and Yuval Elovici, “Doping: Generative data augmentation for unsupervised anomaly detection with gan,” in ICDM, 2018.
[16] Danilo Rezende and Shakir Mohamed, “Variational inference with normalizing flows,” in ICML, 2015.
[17] Laurent Dinh, Jascha Sohl-Dickstein, and Samy Bengio, “Density estimation using real nvp,” in ICLR, 2017.
[18] Yanghao Li, Naiyan Wang, Jianping Shi, Jiaying Liu, and Xiaodi Hou, “Revisiting batch normalization for practical domain adaptation,” in ICLR Workshop, 2016.
[19] Sinno Jialin Pan, Qiang Yang, et al., “A survey on transfer learning,” IEEE Transactions on knowledge and data engineering, vol. 22, no. 10, pp. 1345–1359, 2010.
[20] Yaroslav Ganin and Victor Lempitsky, “Unsupervised domain adaptation by backpropagation,” in ICML, 2015.
[21] Konstantinos Bousmalis, George Trigeorgis, Nathan Silberman, Dilip Krishnan, and Dumitru Erhan, “Domain separation networks,” in NIPS, 2016.
[22] Kuniaki Saito, Yoshitaka Ushiku, and Tatsuya Harada, “Asymmetric tri-training for unsupervised domain adaptation,” in ICML, 2017.
[23] http://www.cs.tut.fi/sgn/arg/dcase2016/.
[24] http://www.cs.tut.fi/sgn/arg/dcase2016/download.
[25] Diederik P Kingma and Prafulla Dhariwal, “Glow: Generative flow with invertible 1x1 convolutions,” arXiv preprint arXiv:1807.03039, 2018.
[26] Jun-Yan Zhu, Taesung Park, Phillip Isola, and Alexei A Efros, “Unpaired image-to-image translation using cycle-consistent adversarial networks,” in ICCV, 2017.
[27] Yuma Koizumi, Kenta Niwa, Yusuke Hioka, Kazunori Kobayashi, and Yoichi Haneda, “Dnn-based source enhancement to increase objective sound quality assessment score,” IEEE/ACM Trans. ASLP, 2018.
[28] Yuma Koizumi, Noboru Harada, Yoichi Haneda, Yusuke Hioka, and Kazunori Kobayashi, “End-to-end sound source enhancement using deep neural network in the modified discrete cosine transform domain,” in ICASSP, 2018.
[29] Shinichi Mogami, Hayato Sumino, Daichi Kitamura, Norihiro Takamune, Shinnosuke Takamichi, Hiroshi Saruwatari, and Nobutaka Ono, “Independent deeply learned matrix analysis for multichannel audio source separation,” in EUSIPCO, 2018.
