A Spiking Network for Inference of Relations Trained with Neuromorphic Backpropagation

The increasing need for intelligent sensors in a wide range of everyday objects requires low-power information processing systems that can operate autonomously in their environment. In particular, merging and processing the outputs of different sensors efficiently is a necessary requirement for mobile agents with cognitive abilities. In this work, we present a multi-layer spiking neural network for inference of relations between stimulus patterns in dedicated neuromorphic systems. The system is trained with a new version of the backpropagation algorithm adapted to on-chip learning in neuromorphic hardware: error gradients are encoded as spike signals which are propagated through symmetric synapses, using the same integrate-and-fire hardware infrastructure as used during forward propagation. We demonstrate the strength of the approach on an arithmetic relation inference task and on visual XOR on the MNIST dataset. Compared to previous, biologically inspired implementations of networks for learning and inference of relations, our approach achieves better performance with fewer neurons. Our architecture is the first spiking neural network architecture with on-chip learning capabilities that is able to perform relational inference on complex visual stimuli. These features make our system interesting for sensor fusion applications and embedded learning in autonomous neuromorphic agents.

💡 Research Summary

The paper presents a neuromorphic spiking neural network capable of relational inference and on‑chip learning. The authors address the challenge of training spiking networks, whose activation functions are non‑differentiable, by introducing a spike‑based back‑propagation algorithm that encodes error gradients as signed spike events. The forward path uses a simple integrate‑and‑fire (IF) neuron with a threshold Θ_ff. Unlike many spiking models, both positive and negative spikes are permitted, so the accumulated membrane potential V reflects the net sum of inputs regardless of the order in which spikes arrive. A trace variable x, updated by a learning‑rate factor η whenever a spike occurs, acts as a surrogate for the ReLU activation and guarantees that the neuron’s output remains non‑negative.
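The forward dynamics described above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation. It assumes a reset-by-subtraction rule and assumes that negative spikes are only emitted while the trace x is positive, which is one way to keep x non-negative. The function name `simulate_if_neuron` and the integer parameter values are hypothetical.

```python
def simulate_if_neuron(inputs, theta_ff=4, eta=1):
    """Run one integrate-and-fire neuron over a sequence of signed inputs.

    Returns the emitted spikes (+1, -1 or 0 per step), the final membrane
    potential V and the final trace x.
    """
    v = 0  # membrane potential V
    x = 0  # spike trace x, the ReLU-like output
    spikes = []
    for current in inputs:
        v += current
        if v > theta_ff:
            s = 1
        elif v < -theta_ff and x > 0:
            # Assumption: a negative spike is only allowed while x > 0,
            # so the trace can never drop below zero.
            s = -1
        else:
            s = 0
        v -= theta_ff * s  # assumption: reset by subtraction
        x += eta * s       # trace moves by eta per spike
        spikes.append(s)
    return spikes, v, x


inputs = [3, 3, 3, -3, -3, -3, -3, -3]
spikes, v, x = simulate_if_neuron(inputs)
print(spikes, v, x)  # [0, 1, 1, 0, -1, 0, -1, 0] -6 0
```

Under these assumptions no input is lost: V plus Θ_ff times the net spike count always equals the sum of the inputs, which is the order-independence property mentioned in the summary.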

During the backward pass, each neuron contains a second compartment that integrates error signals into a variable U with its own threshold Θ_bp. When |U| exceeds Θ_bp, an error spike z = ±1 is emitted (z = 0 otherwise, which makes the signal ternary). This spike is gated by a surrogate derivative a′(t) that equals 1 when either V > 0 or the trace x > 0 and 0 otherwise, mimicking the derivative of a ReLU. The resulting error signal δ(t) = z·a′(t) is then propagated through the same symmetric synapses used in the forward direction. Weight updates follow a simple rule: Δw = −x for δ = +1, Δw = +x for δ = −1, and Δw = 0 otherwise. Because all operations are additions, subtractions, and comparisons, the algorithm avoids floating‑point multiplications entirely, making it well suited to digital neuromorphic hardware where only spike streams need to be transmitted.
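As a rough illustration of the backward step, the sketch below chains the three ingredients named in the summary: thresholding of U, gating by the ReLU-like surrogate derivative, and the sign-based weight update. The function names are hypothetical, the reset by subtraction is an assumption, and x in the update rule is taken to be the presynaptic trace, which the summary does not state explicitly.

```python
def error_spike(u, theta_bp):
    """Threshold the error variable U; return (z, new_u)."""
    if u > theta_bp:
        return 1, u - theta_bp   # assumption: reset by subtraction
    if u < -theta_bp:
        return -1, u + theta_bp
    return 0, u


def surrogate_derivative(v, x):
    """ReLU-like gate: 1 if V > 0 or the trace x > 0, else 0."""
    return 1 if (v > 0 or x > 0) else 0


def weight_delta(delta, x_pre):
    """Sign-based update: -x for delta = +1, +x for delta = -1, else 0."""
    if delta == 1:
        return -x_pre
    if delta == -1:
        return x_pre
    return 0


def backward_step(u, v, x_post, x_pre, theta_bp=2):
    """One error step for a single neuron and one incoming synapse."""
    z, u = error_spike(u, theta_bp)
    # Gating instead of multiplying: the spike passes only if the gate is open.
    delta = z if surrogate_derivative(v, x_post) else 0
    return delta, weight_delta(delta, x_pre), u


print(backward_step(5, v=0, x_post=1, x_pre=3))   # (1, -3, 3)
print(backward_step(-5, v=1, x_post=0, x_pre=3))  # (-1, 3, -3)
print(backward_step(5, v=0, x_post=0, x_pre=3))   # (0, 0, 3)
```

Every branch uses only comparisons, additions, and subtractions, which mirrors the multiplication-free property the summary attributes to the algorithm.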

The loss function is an L2 loss, L = ½‖y − t‖², between the accumulated activity of the output population (y = V_L + x_L) and a target spike pattern t. Its gradient ∂L/∂y = y − t is injected directly into the error integration variable U of the output neurons, so that the error magnitude is represented by the number and duration of error spikes. This dynamic quantization means that higher precision can be achieved simply by extending the error‑propagation window.
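One possible reading of this dynamic quantization is sketched below. It assumes the gradient y − t is injected into U once, that at most one error spike is emitted per time step, and that U is reset by subtraction. The function name `quantize_error` and the numbers are hypothetical and only show how a longer window leaves a smaller unrepresented residual.

```python
def quantize_error(gradient, theta_bp, window):
    """Turn an injected gradient into error spikes over a fixed window.

    Returns (net_spikes, residual), where residual is what remains in U
    and has not yet been communicated as spikes.
    """
    u = gradient  # inject dL/dy = y - t into the error variable U
    net_spikes = 0
    for _ in range(window):
        if u > theta_bp:
            u -= theta_bp
            net_spikes += 1
        elif u < -theta_bp:
            u += theta_bp
            net_spikes -= 1
        else:
            break  # |U| is at or below threshold, nothing left to send
    return net_spikes, u


print(quantize_error(7, theta_bp=2, window=2))    # (2, 3)
print(quantize_error(7, theta_bp=2, window=10))   # (3, 1)
print(quantize_error(-7, theta_bp=2, window=10))  # (-3, -1)
```

With a window of 2 steps the spikes represent 4 of the 7 units of error. With a longer window they represent 6, and the residual is bounded by Θ_bp.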

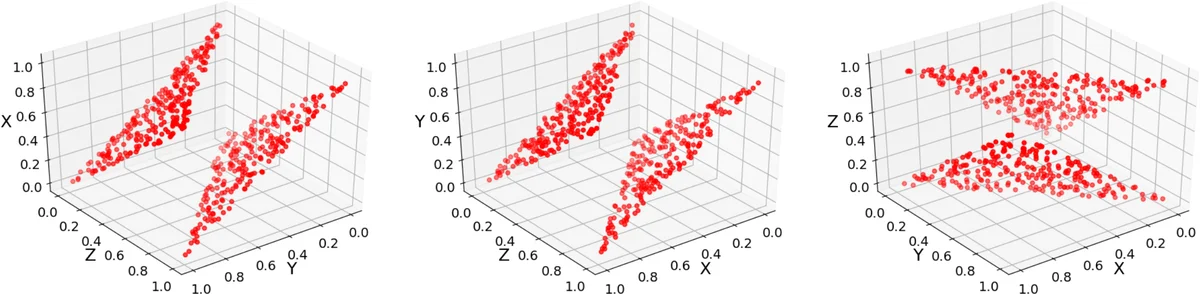

The relational network architecture consists of three types of populations: input‑output (IO) populations that either provide stimuli or reconstruct missing variables, peripheral populations that transform IO signals into higher‑level representations, and a hidden population that integrates peripheral signals and enforces the relationship among variables. For an n‑variable relation, n+1 populations are coupled; in the three‑variable case, two IO populations act as inputs during training while the third learns to reproduce the target pattern. Roles are rotated so that each variable can be inferred from the others, enabling bidirectional inference.

Two experimental domains validate the approach. First, an arithmetic relation (a + b = c) is learned, demonstrating that the network can infer a missing operand from the other two. Second, a visual XOR task is built using MNIST digit images: the network receives two digit images and must generate the XOR of their class labels as a third image. Compared with prior biologically inspired relational networks that rely on hard‑wired connections or spike‑timing‑dependent plasticity (STDP), the proposed method achieves comparable or higher accuracy while using fewer neurons (on the order of a few hundred). In the visual XOR experiment, the network reaches over 92 % success, showing that the spike‑based back‑propagation can handle complex, high‑dimensional visual data.
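The role rotation can be illustrated on the arithmetic relation a + b = c. The sketch below only generates training examples in which each variable takes a turn as the target. It uses plain integers and does not model how the values are encoded as spike patterns; the value range, the function names, and the dictionary layout are all assumptions made for illustration.

```python
import random


def make_rotated_examples(num_samples, max_value=10, seed=0):
    """Build (inputs, target_name, target_value) triples for a + b = c.

    Each sampled relation yields three examples, one per hidden variable.
    """
    rng = random.Random(seed)
    examples = []
    for _ in range(num_samples):
        a = rng.randint(0, max_value)
        b = rng.randint(0, max_value)
        full = {"a": a, "b": b, "c": a + b}
        for target in ("a", "b", "c"):
            inputs = {k: val for k, val in full.items() if k != target}
            examples.append((inputs, target, full[target]))
    return examples


def infer_missing(inputs):
    """Reference answer a trained network would be expected to reproduce."""
    if "c" not in inputs:
        return inputs["a"] + inputs["b"]
    if "a" not in inputs:
        return inputs["c"] - inputs["b"]
    return inputs["c"] - inputs["a"]


examples = make_rotated_examples(5)
print(len(examples))  # 15
print(all(infer_missing(inp) == val for inp, _, val in examples))  # True
```

The same rotation would apply to the visual XOR task, with digit images in place of integers.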

From a hardware perspective, the algorithm’s reliance on integer accumulations and threshold comparisons means it can be mapped directly onto existing digital neuromorphic platforms such as Intel’s Loihi or IBM’s TrueNorth, and can also be adapted to analog spiking circuits with minor modifications. The requirement for symmetric weights (the same forward and backward synaptic strengths) is the main biological compromise, but it enables the use of the same physical synapse array for both directions, simplifying chip design.

In summary, the authors deliver a practical, scalable method for training spiking neural networks to perform relational inference on both synthetic arithmetic and real visual tasks. By converting error gradients into spike events and using a ReLU‑like surrogate derivative, they retain the energy‑efficiency and event‑driven nature of neuromorphic hardware while achieving learning performance comparable to conventional deep networks. This work opens the door to low‑power sensor‑fusion, on‑device learning, and autonomous reasoning in embedded neuromorphic agents.
