Hallucinating robots: Inferring Obstacle Distances from Partial Laser Measurements

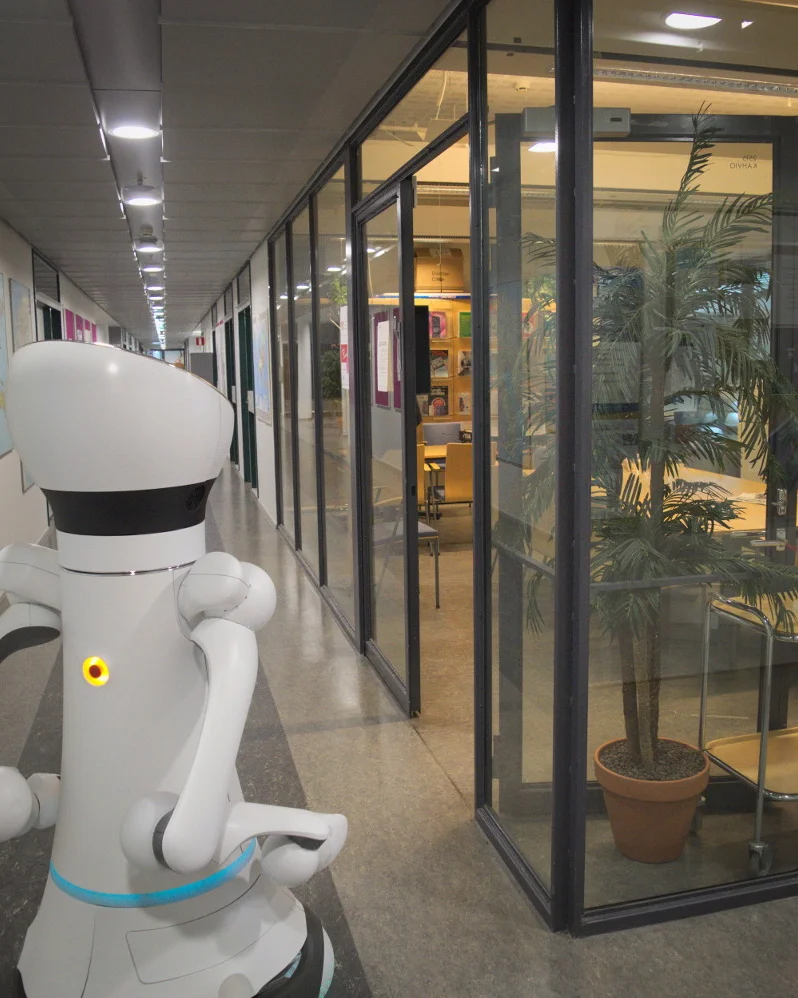

Many mobile robots rely on 2D laser scanners for localization, mapping, and navigation. However, those sensors are unable to correctly provide distance to obstacles such as glass panels and tables whose actual occupancy is invisible at the height the sensor is measuring. In this work, instead of estimating the distance to obstacles from richer sensor readings such as 3D lasers or RGBD sensors, we present a method to estimate the distance directly from raw 2D laser data. To learn a mapping from raw 2D laser distances to obstacle distances we frame the problem as a learning task and train a neural network formed as an autoencoder. A novel configuration of network hyperparameters is proposed for the task at hand and is quantitatively validated on a test set. Finally, we qualitatively demonstrate in real time on a Care-O-bot 4 that the trained network can successfully infer obstacle distances from partial 2D laser readings.

💡 Research Summary

This paper addresses a fundamental limitation of 2‑D laser range finders commonly used for mobile robot navigation: they only report distances to objects intersecting the laser plane, which means that obstacles such as glass panels, tabletops, or any structure that does not intersect the scanner height remain invisible. Traditional solutions rely on richer sensors (3‑D LiDAR, RGB‑D cameras) or sensor fusion, but these increase hardware cost, computational load, and often suffer from limited fields of view.

The authors propose a purely data‑driven approach that infers the true robot‑to‑obstacle distance directly from raw 2‑D laser scans. Formally, given an input vector x∈ℝᴺ (the N‑point laser scan, N=128) they aim to learn a mapping H:ℝᴺ→ℝᴺ such that the output y contains, for each angular direction, the minimum distance to any obstacle over the full vertical range of the robot (i.e., y_i = min_{h∈

Comments & Academic Discussion

Loading comments...

Leave a Comment