KT-Speech-Crawler: Automatic Dataset Construction for Speech Recognition from YouTube Videos

In this paper, we describe KT-Speech-Crawler: an approach for automatic dataset construction for speech recognition by crawling YouTube videos. We outline several filtering and post-processing steps, which extract samples that can be used for trainin…

Authors: Egor Lakomkin, Sven Magg, Cornelius Weber

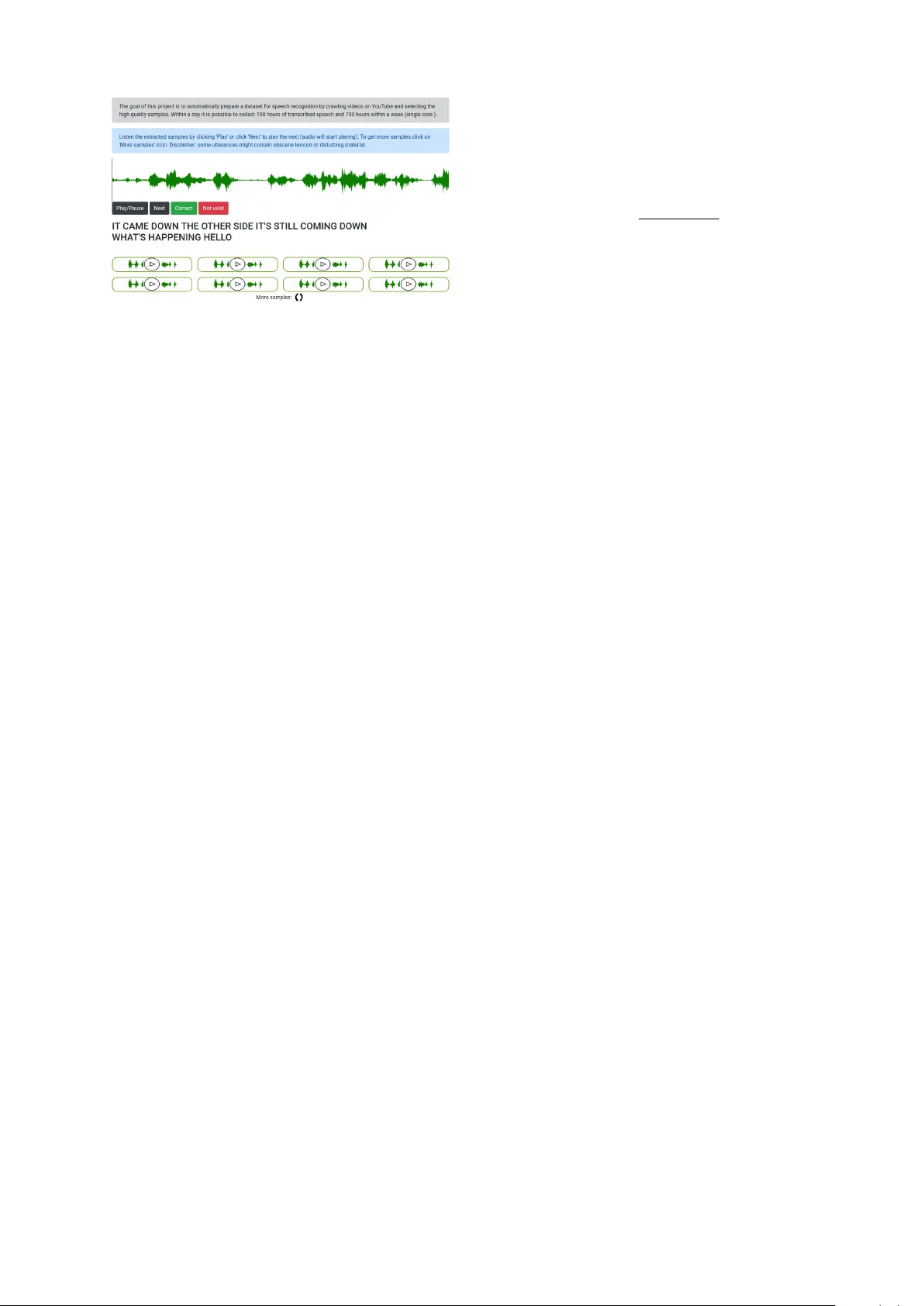

KT -Speech-Crawler: A utomatic Dataset Construction f or Speech Recognition fr om Y ouT ube V ideos Egor Lakomkin Sven Magg Cornelius W eber Stefan W ermter Department of Informatics, Kno wledge T echnology Uni versity of Hamb urg V ogt-K oelln Str . 30, 22527 Hambur g, German y { lakomkin, magg, weber, wermter } @informatik.uni-hamburg.de Abstract In this paper , we describe KT -Speech-Cra wler: an approach for automatic dataset construction for speech recognition by crawling Y ouTube videos. W e outline se veral filtering and post- processing steps, which extract samples that can be used for training end-to-end neural speech recognition systems. In our experi- ments, we demonstrate that a single-core ver - sion of the crawler can obtain around 150 hours of transcribed speech within a day , con- taining an estimated 3.5% word error rate in the transcriptions. Automatically col- lected samples contain reading and sponta- neous speech recorded in various conditions including background noise and music, distant microphone recordings, and a variety of ac- cents and reverberation. When training a deep neural netw ork on speech recognition, we ob- served around 40% word error rate reduction on the W all Street Journal dataset by integrat- ing 200 hours of the collected samples into the training set. The demo 1 and the crawler code 2 are publicly av ailable. 1 Introduction End-to-end neural networks significantly simpli- fied the development of automatic speech recog- nition (ASR) systems ( Grav es and Jaitly , 2014 ). T raditionally , ASR systems are based on Gaus- sian Mixture Models (GMM) or Deep Neural Net- works (DNN) for acoustic state representations follo wed by the Hidden Markov Model (HMM) for sequence-le vel learning. Though such systems are successful and achieve high performance ( Hin- ton et al. , 2012 ), they require word- or phoneme- le vel alignments between the acoustic signal and the transcription. As a result, dataset prepara- tion for such hybrid systems is a labor-intensi ve 1 http://emnlp- demo.lakomkin.me/ 2 https://github.com/EgorLakomkin/ KTSpeechCrawler Figure 1: Architecture of the proposed system cra wl- ing Y ouT ube to find videos with closed captions. Sev- eral filtering and post-processing steps are applied to select high-quality speech candidates. As a result, pairs of speech and corresponding transcriptions are col- lected. and error-prone process as the performance of the whole system is sensiti ve to the quality of the alignment. Also, each component is trained indi- vidually , which makes the whole process comple x and difficult to maintain. Recently , Connectionist T emporal Classification (CTC) loss ( Grav es et al. , 2006 ) has been introduced, which allo ws relax- ing the constraint of ha ving alignment between the spoken text and audio by introducing a sequence- le vel criterion. Also, recurrent neural network- based architectures that are state-of-the-art mod- els in machine translation hav e been applied to speech recognition ( Chan et al. , 2016 ). Conse- quently , neural networks can be trained end-to-end via backpropagation ( Graves and Jaitly , 2014 ). CTC maximizes the log-likelihood of the ground truth transcription and thus only the spoken text is required without an explicit alignment, which is easier and cheaper to obtain. Pre vious work outlined the importance of hav- ing large amounts of annotated data to train deep neural networks. For example, a ten times in- crease of the training data size from 1,200 hours to 12,000 hours resulted in improving the word error rate from 13.9% to 8.46% for clean and from 22.99% to 13.59% for noisy speech ( Amodei et al. , 2016 ). Collecting such large datasets is an expensi ve and labor-intensi ve process, which re- quires a significant amount of resources, usually not a va ilable for the research community com- pared to large industrial companies. F or exam- ple, Baidu’ s internal speech dataset ( Amodei et al. , 2016 ) contains around 10,000 hours of speech, while the largest dataset a v ailable for the re- search community does not exceed 2,000 hours ( David et al. , 2004 ). W e propose to utilize a vast amount of videos a vailable on Y ouT ube with user - provided closed captions as a source to extract speech datasets comparable in size to the ones av ailable in the industry . Our contribution in this paper is two-fold: 1) we pro vide a cra wler that automatically e x- tracts speech samples with transcriptions from Y ouT ube and filters high-quality samples with se veral heuristic measures, and 2) we extend the training data of two benchmark datasets with the extracted samples and validate the benefit of the collected data by training a deep neural network on the original and the combined data to mea- sure test performance dif ference. W e also ev alu- ate the amount of noise in transcriptions by manu- ally checking the word error rate of a random sub- set of the dataset. W e hope that our de veloped tool will foster research of large-scale automatic speech recognition systems 3 . 2 Related work Cro wdsourcing has been successfully used to con- struct speech datasets like V oxF orge 4 or Mozilla’ s Common V oice 5 , where users recorded them- selves through the pro vided web-interface, and up- loaded samples can be checked by other partic- 3 The code and the Dockerfile are av ailable by this link https://github.com/EgorLakomkin/ KTSpeechCrawler 4 http://www.voxforge.org 5 https://voice.mozilla.org/ ipants. While such an approach, in theory , can be a viable strate gy to acquire a large number of di verse speech samples, it has se veral draw- backs. The main limitation of this approach is the dif ficulty of engaging and acquiring users to donate samples to achieve a lar ge and di- verse dataset in terms of the number of differ - ent speakers, accents, en vironments and recording conditions. Another approach, which is widely adopted by the research community , is to make use of a v ast amount of a vailable multi-modal data which contains transcribed speech. For e xam- ple, TED talks ( Rousseau et al. , 2014 ) are care- fully transcribed and contain around 200 hours of speech from around 1,500 speakers (TED-LIUM v2 dataset). LibriSpeech ( Panayotov et al. , 2015 ) is composed of a large number of audiobooks and is the largest freely av ailable dataset: around 960 hours of English read speech. Google re- leased their Speech Commands dataset 6 contain- ing around 65,000 one-second-long utterances. It has already been demonstrated that Y ouT ube captions can be successfully used as a ground truth spoken text transcription to train large-scale ASR systems ( Liao et al. , 2013 ; Lecouteux et al. , 2012 ). Users upload closed captions for vari- ous reasons: to make video accessible for peo- ple ha ving some degree of hear loss, or to help non-nati ve speakers, or to increase the number of views (Y ouT ube search ranking algorithm in- dex es closed captions content 7 ). Ne vertheless, some videos contain inaccurate or ev en unrelated to speech captions, for example, advertisements. Se veral heuristics were proposed to remove low- quality samples: removing captions containing advertisements, language mismatch detection and using forced alignment to detect confident align- ment regions between the caption and the audio. In addition, Y ouT ube has been used previously in multiple ways to automatically collect multi- modal datasets, e.g. emotion recognition datasets by Barros et al. ( 2018 ) and Zadeh et al. ( 2016 ), or opinion mining ( Marrese-T aylor et al. , 2017 ), or video classification (Y ouT ube-8M 8 , or human ac- tion recognition ( Kay et al. , 2017 )). In this work, we combine se veral known heuris- 6 https://ai.googleblog.com/2017/08/ launching- speech- commands- dataset.html 7 https://www.3playmedia.com/customers/ case- studies/discovery- digital- networks/ 8 https://research.google.com/ youtube8m/ tics and propose some additional ones to select high quality samples in an automatic way . W e integrate it into an easy to use tool KT -Speech- Crawler , which can continuously scan ne w videos uploaded to Y ouTube and update the speech database. T o our knowledge, this is the first open- source tool av ailable for automatic speech dataset construction. 3 Crawler In this section, we describe the sample selection strategy , follo wed by sev eral filtering and post- processing heuristics to locate high-quality sam- ples and discard noisy ones from Y ouT ube. 3.1 Candidate selection Firstly , we do wnload candidate videos with En- glish closed captions, which are usually uploaded by the channel owner . T o reach as many videos as possible we use the Y ouTube Search API, where one of the top 100 most common English words is used as a search ke yword to match the video title (for e xample, the, but, have, not, and, ... ). Such frequent ke ywords allo w us to match many videos, ev en though, as a side effect, non-English videos with closed captions in English might be captured. The Y ouT ube Search API allo ws to do wnload the 600 most recent videos for each ke y- word, and since many videos are constantly be- ing uploaded to Y ouT ube it is possible to continu- ously collect speech samples. Also, we memorize Y ouT ube channels containing samples that passed all the filtering steps (see section 3.2) and use other videos from this channel. This leads to many di- verse candidates coming from TV shows and TV series, video blogs, ne ws, and liv e recordings. 3.2 Filtering steps W e perform se veral filtering steps to select suitable candidates: • we discard a caption if it ov erlaps with an- other caption, which sometimes happens due to incorrectly closed caption auto syncing, • we filter out captions that indicate that there is music content in this sample and captions containing non-ASCII characters or URLs, • we remove text chunks which do not corre- spond to the actual spoken te xt, like the infor - mation of the speaker name ( Speaker 1: ... ), annotations ( [laughs], *laughs*, (laughs) ), and punctuation, • we spell out numbers which are within the range from 1 to 100 as they ha ve non- ambiguous pronunciation (in contrast, for e x- ample, 1,500 can be uttered as fifteen hundred or one thousand and five hundr ed ), • we discard captions if they contain any char- acter that is not an English letter , apostrophe or a white space, • we filter segments which have less than one second duration or more than ten seconds, • in addition, we select randomly three phrases from the video and measure the Lev enshtein similarity between the provided closed cap- tion and the transcription generated by the Google ASR API. If the similarity is below a 70% threshold, we discard all the samples in this video. This step allows filtering videos which hav e English subtitles for non-English spoken text or videos with a bad alignment. Also, this filter removes videos with com- pletely misaligned captions. 3.3 Post-pr ocessing steps During our experiments on ev aluating the quality of the extracted samples, we spotted that one of the major problems is imprecise alignments be- tween caption and audio. For example, the first or the last word can be omitted on the recording due to incorrect caption timings. One possible way to reduce the number of samples with mis- aligned borders is to group together nearby cap- tions if they are at a distance of less than one sec- ond. W e stop grouping adjacent utterances if the ov erall length exceeds ten seconds. In addition, we perform a forced alignment 9 between the caption and the corresponding audio using Kaldi ( Pove y et al. , 2011 ) and if the first or the last word is not successfully mapped, we try to extend the caption boundaries (up to 500 milliseconds) until the bor- der word becomes mapped. If we cannot align the border word, we keep the caption boundaries un- changed. 4 Experiments and analysis T o ev aluate the usefulness of the collected sam- ples we conducted three types of e xperiments. W e 9 https://github.com/lowerquality/ gentle Figure 2: Architecture of the ASR model used in this work, follo wing the DeepSpeech 2 architecture. trained the deep neural network-based model on dif ferent training datasets: • on the original training data, • on the mix of the original and with the crawled samples, • only on the crawled samples. For benchmarking, we selected two well-kno wn datasets for training ASR systems: The W all Street Journal and TED-LIUM v2. In all experiments, we kept the same size and architecture of the neu- ral model and its hyperparameters. In this section, we outline the details of benchmark data used in our experiments, neural model architecture and the e valuation protocol and metrics, follo wed by the e valuation results and comparisons. 4.1 ASR model Our ASR model (see Figure 2 ) is a combination of con v olutional and recurrent layers inspired by the DeepSpeech 2 ( Amodei et al. , 2016 ) architecture. Our model contains two 2D con volutional layers for feature extraction from po wer FFT spectro- grams. Power spectrograms are extracted using a Hamming windo w of 20ms width and 10ms stride, resulting in 161 features for each speech frame. Con v olutional layers are followed by five recur- rent bi-directional Gated Recurrent Units ( Chung et al. , 2014 ) layers with a size of 1,024 followed by a softmax layer on top, predicting the char - acter distribution for each speech frame. Over - all, our model has around 61 million parameters. Connectionist T emporal Classification (CTC) loss ( Grav es et al. , 2006 ) is used as a loss criterion to measure how good the alignment produced by the network is compared to the ground truth transcrip- tion. The Stochastic Gradient Descent optimizer is used in all experiments with a learning rate of 0.0003, clipping the norm of the gradient at the T able 1: Evaluation results. W e ev aluated the effect of adding samples extracted from Y ouTube by our tool on two benchmarking datasets: WSJ and TED-LIUM v2. W e trained the deep neural network on the origi- nal training data, then combined the data with Y ouT ube samples ( WSJ+Y ouT ube , for example), and, finally , only on the Y ouT ube samples. W e report word and character error rate. T rain T est WER CER WSJ WSJ 27.4% 7.2% WSJ + Y ouT ube (200h) WSJ 15,8% 4.2 % Y ouT ube (200h) WSJ 31.5% 8.3% TED TED 32.6% 10.4% TED + Y ouT ube (300h) TED 28.1% 8.2% Y ouT ube (300h) TED 36.6% 10.6% le vel of 400 with a batch size of 32. During the training, we apply learning rate annealing with a factor of 1.1. W e apply the SortaGrad algorithm ( Amodei et al. , 2016 ) during the first epoch by sorting utterances by their duration ( Hannun et al. , 2014a ). W e select the model with the best word er- ror rate measured on the validation set to prev ent model ov erfitting. 4.2 Data and ev aluation measure 4.2.1 WSJ The W all Street Journal (WSJ) dataset is a well- kno wn dataset for ev aluating ASR systems, con- taining utterances of read speech coming from the ne ws domain. The WSJ training set ( train-si284 ) consists of 81 hours containing 37,318 sentences from 284 speakers (142 male and 142 female). W e used the de v93 de velopment set for v alidation and report the word error rate on the e v al92 test set. 4.2.2 TED talks W e also ev aluated our approach on the TED- LIUM v2 dataset, which contains around 200 hours of transcribed TED 10 talks of 1,495 speak- ers. In contrast to the WSJ dataset, it contains spontaneous speech rather than read speech. 4.3 Results W e summarize our results in T able 1 . Note that we did not use a language model for decoding in our experiments but used greedy decoding, where the most probable character at each timestep was emitted. It is well known that decoding with the 10 https://www.ted.com/ Figure 3: A screenshot of the web-based demo to browse the collected samples, presenting the extracted utterance and the corresponding transcription. language model and beam search significantly im- prov es the performance on the test set of character - based end-to-end models ( Hannun et al. , 2014b ), but as our goal was to demonstrate the impact of adding extracted samples within the same neural model and test set, we left it out. W e observed that adding samples from Y ouT ube positi vely con- tributed to the overall performance in both met- rics: word (WER) and character error rates (CER). For example, the word error rate improved from 34.2% to 15.8% on the WSJ test set by adding 200 hours of samples (108,617 utterances) to the WSJ training set. Similar results can be observ ed on the TED talks dataset: WER and CER improv ed from 32.6% to 28.1% and 10.4% to 8.2% by adding 300 hours of Y ouT ube samples. T o be sure that none of the TED videos appeared in the Y ouT ube set, which could lead to ov erestimation of the perfor- mance, we excluded videos that contain a TED to- ken in the title or in the description. Interestingly , if only Y ouT ube samples were used as the training set, we observed CER values of 8.3% and 10.6% for the WSJ and the TED datasets, respecti v ely (compared to 7.2% and 10.4% using original train- ing data), indicating that ha ving a domain-specific training set plays an important role and there is a room for impro vement in designing better filtering and post-processing steps. 4.4 T ranscriptions quality W e manually in vestigated samples by using de vel- oped a web-based demo, see Fig. 3 and analyzed the quality of the collected samples and their tran- scriptions. Our developed web-service presents random eight utterances and their corresponding transcriptions to the user and allows to load more samples if necessary . W e also integrated a simple functionality to validate the extracted samples: a user can confirm that the caption is correct or if not enter the right transcription. W E R = S + D + I S + D + C (1) W e computed the word error rate using equation 1 , where S, I, D, C is number of substitutions, insertions, deletions and correct words, respec- ti vely . W e estimated 3.5% word error rate on the small randomly selected subset of 600 samples. The most common type of error was missing or wrongly added one or two words at the beginning or at the end of the utterance. 5 Conclusions and future work In this work, we presented an open-source system that automatically constructs datasets for training end-to-end neural speech recognition systems. W e demonstrated the usefulness of the collected sam- ples on the WSJ and TED datasets. W e provide the code for the crawler and metadata and a script to easily construct a dataset of 500 hours. Future work includes extending the script to support other languages. A more sophisticated approach to identify wrongly added or missing words in transcriptions could also be used by using attention-based neural networks like pointer net- works. W e are also aware that some collected sam- ples may contain automatically generated utter- ances with T ext-T o-Speech software, which may require performing speaker recognition to balance the dataset. Furthermore, domain-specific speech datasets can be collected by selecting samples af- ter analyzing captions and video metadata (for ex- ample, in the financial domain). In addition, sam- ples with several people talking at the same time and noisy samples with low signal-to-noise ratio need to be filtered, which could be implemented as neural network-based modules. W e belie ve that ha ving lar ge, free and high- quality speech datasets av ailable to the research community will foster the dev elopment of new architectures and applications for speech under- standing, and we hope that our presented tool will contribute to that. Acknowledgments This project has receiv ed funding from the Eu- ropean Union’ s Horizon 2020 research and inno- v ation programme under the Marie Sklodowska- Curie grant agreement No 642667 (SECURE) and partial support from the German Research Foun- dation DFG under project CML (TRR 169). References Dario Amodei, Rishita Anubhai, Eric Battenberg, Carl Case, Jared Casper , Bryan Catanzaro, Jingdong Chen, Mike Chrzanowski, Adam Coates, and et al. 2016. Deep Speech 2: End-to-End Speech Recog- nition in English and Mandarin. Pr oceedings of The 33r d International Conference on Machine Learn- ing , 48:173–182. Pablo Barros, Nikhil Churamani, Egor Lakomkin, Henrique Siqueira, Alexander Sutherland, and Ste- fan W ermter . 2018. The OMG-Emotion Behavior Dataset. T o appear in International Joint Confer- ence on Neural Networks . W illiam Chan, Navdeep Jaitly , Quoc Le, and Oriol V inyals. 2016. Listen, attend and spell: A neural network for large vocabulary con versational speech recognition. In 2016 IEEE International Confer- ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , pages 4960–4964. IEEE. Junyoung Chung, Caglar Gulcehre, KyungHyun Cho, and Y oshua Bengio. 2014. Empirical Ev aluation of Gated Recurrent Neural Networks on Sequence Modeling. Deep Learning and Representation Learning W orkshop . Christopher Cieri David, Christopher Cieri David, David Miller, and K evin W alker . 2004. The Fisher Corpus: a Resource for the Next Generations of Speech-to-T ext. In Proceedings 4th International Confer ence On Languag e Resources and Evalua- tion , pages 69–71. Alex Grav es, Santiago Fern ´ andez, Faustino Gomez, and Jrgen Schmidhuber . 2006. Connectionist tem- poral classification. In Pr oceedings of the 23r d in- ternational confer ence on Machine learning - ICML ’06 , pages 369–376, New Y ork, New Y ork, USA. A CM Press. Alex Graves and Navdeep Jaitly . 2014. T owards end- to-end speech recognition with recurrent neural net- works. Pr oceedings of the 31st International Con- fer ence on International Confer ence on Machine Learning - V olume 32 , pages II–1764. A wni Hannun, Carl Case, Jared Casper , Bryan Catan- zaro, Greg Diamos, Erich Elsen, Ryan Prenger , Sanjeev Satheesh, Shubho Sengupta, Adam Coates, and Andrew Y Ng. 2014a. Deep Speech: Scal- ing up end-to-end speech recognition. CoRR , abs/1412.5567. A wni Y . Hannun, Andrew L. Maas, Daniel Ju- rafsky , and Andrew Y . Ng. 2014b. First-Pass Large V ocabulary Continuous Speech Recognition using Bi-Directional Recurrent DNNs. CoRR , abs/1408.2873. Geoffre y Hinton, Li Deng, Dong Y u, Geor ge Dahl, Abdel-rahman Mohamed, Na vdeep Jaitly , Andrew Senior , V incent V anhoucke, Patrick Nguyen, T ara Sainath, and Brian Kingsbury . 2012. Deep Neural Networks for Acoustic Modeling in Speech Recog- nition: The Shared V ie ws of Four Research Groups. IEEE Signal Pr ocessing Magazine , 29(6):82–97. W ill Kay , Joao Carreira, Karen Simonyan, Brian Zhang, Chloe Hillier , Sudheendra V ijaya- narasimhan, Fabio V iola, Tim Green, T rev or Back, Paul Natsev , Mustafa Suleyman, and Andrew Zisserman. 2017. The Kinetics Human Action V ideo Dataset. Benjamin Lecouteux, Georges Linar ` es, and Stanislas Oger . 2012. Integrating imperfect transcripts into speech recognition systems for building high-quality corpora. Computer Speech & Language , 26(2):67– 89. Hank Liao, Erik McDermott, and Andrew Senior . 2013. Lar ge scale deep neural network acous- tic modeling with semi-supervised training data for Y ouTube video transcription. In 2013 IEEE W ork- shop on Automatic Speech Recognition and Under- standing , pages 368–373. Edison Marrese-T aylor, Jor ge Balazs, and Y utaka Mat- suo. 2017. Mining fine-grained opinions on closed captions of Y ouTube videos with an attention-RNN. In Pr oceedings of the 8th W orkshop on Computa- tional Approac hes to Subjectivity , Sentiment and So- cial Media Analysis , pages 102–111, Stroudsb urg, P A, USA. Association for Computational Linguis- tics. V assil Panayotov , Guoguo Chen, Daniel Pove y , and Sanjeev Khudanpur . 2015. Librispeech: An ASR corpus based on public domain audio books. In 2015 IEEE International Conference on Acous- tics, Speech and Signal Pr ocessing (ICASSP) , pages 5206–5210. IEEE. Daniel Pov ey , Daniel Pove y , Arnab Ghoshal, Gilles Boulianne, Nagendra Goel, Mirko Hannemann, Y anmin Qian, Petr Schwarz, and Georg Stemmer . 2011. The kaldi speech recognition toolkit. In IEEE Automatic Speech Recognition and Understanding W orkshop . Anthony Rousseau, Anthon y Rousseau, Paul Del ´ eglise, and Y annick Est ` eve. 2014. Enhancing the TED- LIUM corpus with selected data for language mod- eling and more TED talks. In Pr oceedings 9th In- ternational Confer ence On Language Resour ces and Evaluation , pages 26–31. Amir Zadeh, Ro wan Zellers, Eli Pincus, and Louis- Philippe Morency . 2016. MOSI: Multimodal Cor- pus of Sentiment Intensity and Subjectivity Analysis in Online Opinion V ideos. IEEE Intelligent Systems , 31.6:82–88.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment