Robust Sound Source Localization considering Similarity of Back-Propagation Signals

We present a novel, robust sound source localization algorithm considering back-propagation signals. Sound propagation paths are estimated by generating direct and reflection acoustic rays based on ray tracing in a backward manner. We then compute th…

Authors: Inkyu An, Doheon Lee, Byeongho Jo

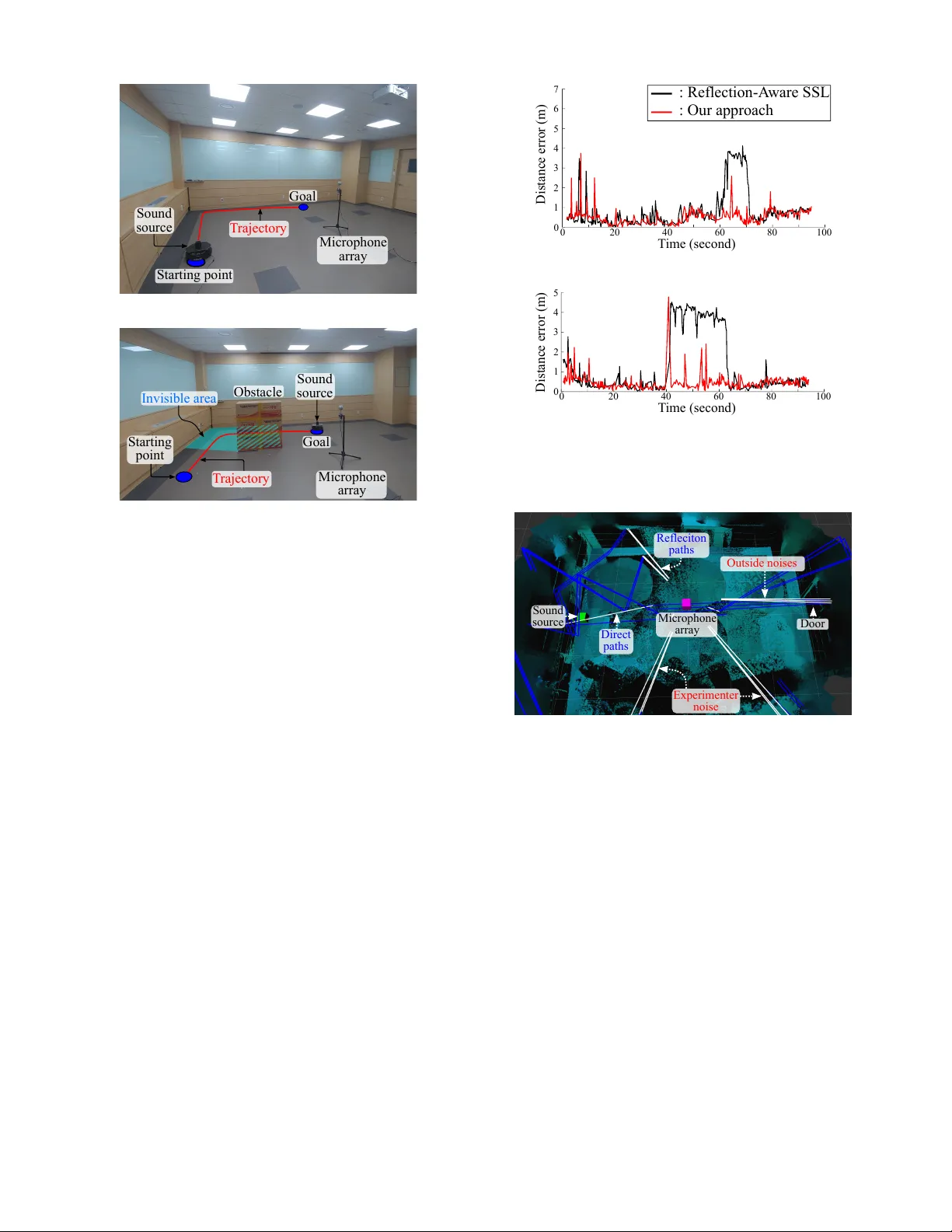

Inkyu An¹, Doheon Lee¹, Byeongho Jo², Jung-Woo Choi², and Sung-Eui Yoon¹
¹School of Computing, ²School of Electrical Engineering, Korea Advanced Institute of Science and Technology, Daejeon, South Korea
Email: {inkyu.an, doheonlee, byeongho, jwoo}@kaist.ac.kr, sungeui@kaist.edu

Abstract: We present a novel, robust sound source localization algorithm considering back-propagation signals. Sound propagation paths are estimated by generating direct and reflection acoustic rays based on ray tracing in a backward manner. We then compute the back-propagation signals by designing and using the impulse response of the backward sound propagation based on the acoustic ray paths. For identifying the 3D source position, we suggest a localization method based on the Monte Carlo localization algorithm. Candidates for a source position are determined by identifying the convergence regions of acoustic ray paths. These candidates are validated by measuring similarities between back-propagation signals, under the assumption that the back-propagation signals of different acoustic ray paths should be similar near the sound source position. Thanks to considering similarities of back-propagation signals, our approach can localize a source position with an average error of 0.51 m in a room of 7 m by 7 m area with 3 m height in tested environments. We also observe a 65% to 220% improvement in accuracy over the state-of-the-art method. This improvement is achieved in environments containing a moving source, an obstacle, and noises.

I. INTRODUCTION

As robots become more widely available, it is increasingly important for a robot to understand its environment for safe and accurate operation. Many research efforts have aimed to perceive the environment by acquiring and using data from hardware sensors.
One of the main research topics in understanding the environment is identifying the locations of the robot itself and of other objects from data collected by vision cameras and depth sensors. Departing from these approaches, acoustic data measured by acoustic sensors has recently attracted attention as an important clue for localizing various objects. The problem of identifying the location of a sound source from collected acoustic data is widely known as sound source localization (SSL). There have been many types of SSL research, including methods using the time difference of arrival (TDOA) of sound waves at microphones [6, 14] and advanced methods using a spherical microphone array based on the analysis of spherical harmonics functions [5, 8, 10, 15]. Unfortunately, these approaches are designed mainly to identify the incoming direction of sound rather than to estimate a 3D position. In particular, when a sound source is occluded by an obstacle or multiple sound sources are located in the same direction, most prior approaches cannot specify the location of the source generating the sound signal.

[Figure 1: separation signals 1 and 2, the microphone array, acoustic ray paths with their reflectivities, back-propagation signals 1 and 2, the estimated position, and the ground truth.]
Fig. 1: Our approach generates direct and indirect acoustic ray paths and localizes the sound source while considering back-propagation signals on the generated acoustic ray paths. Back-propagation signals are virtually computed signals that could be heard at particular locations. They are computed from separation signals, which are extracted from the signals measured at the microphones, by using impulse responses that account for the travel distance and the reflection amplification of the acoustic ray paths. When the back-propagation signals of two acoustic ray paths are highly correlated, we treat them as originating from the same source.

To address this issue, recent techniques have been proposed to find a 3D source location even if the sound source is in a non-line-of-sight state [1, 2]. These techniques estimate sound propagation paths from the source to the microphones as acoustic rays generated by ray tracing, and identify the 3D source location by using the generated acoustic rays. However, the accuracy of these methods decreases in noisy environments containing a moving sound source and obstacles.

Main Contributions. To robustly identify the sound source location, we present a novel sound source localization algorithm using back-propagation signals (Fig. 1). Using a beamforming algorithm, we first compute the incoming directions of the sound and the separation signals corresponding to those incoming directions (Sec. IV-A). We then estimate sound propagation paths by generating acoustic ray paths in the reverse of the incoming directions of the sound (Sec. IV-B), and compute the back-propagation signals from the separation signals using the impulse response of each acoustic ray path (Sec. IV-C). Intuitively speaking, back-propagation signals are virtually computed signals that could be heard at a particular location on an acoustic path, derived from the signals measured at the microphone array. Finally, we use the Monte Carlo localization algorithm to estimate the location of the sound source as a convergence region of the computed acoustic ray paths. In particular, we use the back-propagation signals of different acoustic ray paths to estimate the sound location accurately, under the intuitive assumption that acoustic paths coming from the same sound source should have similar back-propagation signals at the estimated location (Sec. IV-D).
In environments containing a moving source, an obstacle, and noises in a 7 m × 7 m × 3 m room, our localization algorithm estimates the location of the sound source with an average error of 0.5 m. The localization accuracy of our approach is improved by 65%–220% compared to prior work that does not consider the back-propagation signals.

II. RELATED WORK

In this section, we give a brief overview of prior work on sound source localization and sound propagation.

A. Sound source localization

There has been a significant amount of effort to localize a sound source by estimating the direction of sound. Many works are based on the time difference of arrival (TDOA) at a microphone array. Knapp et al. [6] suggested an efficient algorithm to estimate the time delay of sound arrivals at each microphone pair. When the microphone array is spherical, one can estimate the angles of sound sources uniformly over every azimuth and elevation angle. Therefore, many beamformer algorithms have been studied using the spherical Fourier transform, which transforms a function on the unit sphere into the spherical harmonics domain. Rafaely [9, 11] presented a theoretical framework for the analysis of spherical microphone arrays. This approach localized the source direction by computing the beam energy map on the unit sphere using steered beamformer algorithms. Valin et al. [14] suggested a memoryless localization algorithm based on a simple delay-and-sum beamformer in the space domain using 8 microphones located on the surface of a sphere, and Rafaely [10] extended the delay-and-sum localization method to the spherical harmonics domain. Yan et al. [15] suggested a localization algorithm using the minimum variance distortionless response (MVDR) power spectra in the spherical harmonics domain. Li et al.
[8] presented a MUSIC (Multiple Signal Classification) based beamformer algorithm, which exploits the orthogonality between a noise-only subspace and a signal-plus-noise subspace in the spherical harmonics domain. Khaykin et al. [5] presented a frequency smoothing technique for spherical microphone arrays. Unfortunately, these techniques were designed for detecting incoming directions, not the 3D location of a sound source in an arbitrary environment.

Recently, 3D sound source localization methods have emerged that consider not only the direct path but also indirect paths of sound propagation, such as reflection and diffraction. An et al. [2] suggested a reflection-aware SSL algorithm that approximates sound propagation as acoustic rays generated by a ray tracing technique. This technique was extended to a diffraction-aware localization method for handling a non-line-of-sight sound source [1]. These methods localize the 3D source position by identifying the convergence region of acoustic rays, based on the hypothesis that the acoustic rays are generated from the same source. However, we observe that the accuracy deteriorates when there are many convergence regions of acoustic rays in environments containing a moving sound, obstacles, and noises (Sec. V). In this paper, we aim to overcome this issue by utilizing the acoustic signals back-propagated to the source location.

B. Sound propagation

For the generation of acoustic rays and back-propagation signals, an accurate sound propagation model should be employed. Various sound propagation models have been studied for generating realistic sound in virtual environments; these methods focus on modeling how the sound emitted at the source propagates to the listener. At a broad level, sound propagation techniques can be categorized as numerical acoustic (NA) and geometric acoustic (GA) methods.
NA techniques model sound propagation by solving the acoustic wave equation. Since solving the wave equation requires a considerable amount of computation time, it is difficult to extend NA techniques to real-time applications. GA techniques are based on ray tracing algorithms and facilitate efficient sound simulation for real-time applications by estimating an acoustic impulse response between a source and a listener in an environment. Cao et al. [3] enabled efficient computation of sound simulation through bidirectional path tracing. Li et al. [7] proposed an efficient sound simulator for 360° videos by handling early and late reverberation separately. There have also been hybrid approaches supporting various low-frequency wave properties within GA methods. Yeh et al. [16] presented a hybrid approach that combines geometric and numeric methods for handling complex environments. Schissler et al. [12] suggested an efficient way to deal with reverberation by considering high-order diffraction and diffuse reflection in large environments. To improve the accuracy of sound source localization, which is an inverse problem to sound generation, we adopt the concept of an impulse response used in these sound generation methods. The main difference from these methods is that we need to propagate the signals measured at the microphone array in reverse, to obtain the signals that could be heard at particular locations on acoustic paths.

[Figure 2: pipeline from the audio stream through four stages: (1) computing sound directions and separation signals, (2) generating acoustic rays (direct and reflection rays), (3) computing back-propagation signals, and (4) estimating the source position. Signal panels plot sound pressure (Pa) against time (milliseconds).]
Fig. 2: An overview of the proposed method. Detailed explanations are in Sec. III.

III. OVERVIEW

Our sound source localization algorithm uses signals, called back-propagation signals, that are back-propagated from the signals measured at the microphone array to particular locations on sound propagation paths. The overview of our algorithm is shown in Fig. 2.

Our method represents the surrounding environment as a mesh map, which is reconstructed from the point cloud collected by a depth sensor. At runtime, audio streams are collected by a 32-channel microphone array. After localizing the incoming directions of sound using an MVDR (minimum variance distortionless response) based beamformer algorithm, we estimate the acoustic signals observed from the major incoming directions by applying beam patterns [11].

To simulate acoustic paths, we generate direct and reflection acoustic rays by applying ray tracing in a backward manner [2]. Specifically, we generate direct acoustic rays in the directions opposite to those of the incoming sounds. Once these direct acoustic rays intersect with the surrounding environment, we generate reflection acoustic rays to simulate the reflection effect in reverse.

Finally, we perform the Monte Carlo localization algorithm to identify a source position from the generated acoustic paths. If these acoustic rays actually come from the same sound source, their back-propagation signals at a candidate location should be similar to each other. We therefore use the back-propagation signals of acoustic rays at a candidate location as an important factor for identifying the sound source location.
The back-propagation signal of an acoustic path is computed by applying the impulse response of that path to the separation signal estimated for its direct acoustic ray.

IV. SOUND SOURCE LOCALIZATION USING BACK-PROPAGATED SIGNALS

In this section, we describe each module of our approach illustrated in Fig. 2.

A. Beamforming

In a real environment involving moving sound sources, obstacles, or noise, acoustic rays generated naively by our approach may converge to a position other than the actual location of the sound source. We found that this occurs in practice and reduces the accuracy of previous work [2]. To solve this problem, we generate back-propagation signals at a candidate 3D location along acoustic rays and use them. The back-propagation signal at a location can be computed by simulating the reverse process of sound propagation, i.e., by performing ray tracing in reverse.

The input signals measured at the microphones consist of many different signals that were propagated from a sound source through different paths. Ideally, we want to estimate the propagation paths of those signals using acoustic rays and restore the back-propagation signals at a particular position on those propagation paths.

To generate acoustic rays, we estimate the incoming sound directions at the microphone array using a beamforming algorithm [11]. Note that our input signals are measured at the discrete locations of the microphones, but each microphone signal is, in fact, a mixture of signals from different directions. We therefore use the beamforming algorithm to compute the signals arriving along each incoming direction.

Fig. 3 shows a beam energy function representing the magnitude distribution of sound signals on the unit sphere, computed by the beamforming method. We then extract the sound signals arriving from the directions of dominant magnitude by applying beam patterns [11] steered to those directions.
We use the minimum variance distortionless response (MVDR) based beamforming algorithm [11, 15] for computing the incoming directions of the sound. Other high-resolution beamforming techniques, such as MUSIC [8], can also be applied here, but MUSIC-based beamformers showed rather inconsistent results depending on the number of assumed sources. Fig. 3 also shows the local maxima of the beam energy function $y(\theta, \phi)$, computed by the MVDR, on the unit sphere; these maxima represent the incoming directions of the sound:

$$[\hat{d}_1, \hat{d}_2, \cdots, \hat{d}_N] = f_{\max}\{y(\theta, \phi)\}, \qquad (1)$$

where $\hat{d}_n$ denotes the directional vector of the $n$-th local maximum on the unit sphere among $N$ different local maxima in a frame, $\theta$ is the elevation angle, $\phi$ is the azimuth angle, and $f_{\max}\{\cdot\}$ is a function that finds the local maxima of the beam energy function. In practice, we identify 8 local maxima on average in our experiments. The computed directional vectors $[\hat{d}_1, \hat{d}_2, \cdots, \hat{d}_N]$ are used as the inverse directions of the incoming sounds, and we thus generate our acoustic rays in those estimated directions to simulate the back-propagation of sound paths.

[Figure 3: normalized beam energy function in dB (color scale from Min, −20 dB, to Max, 0 dB) over azimuth in degrees (horizontal) and elevation in degrees (vertical), with the separation signals of Directions 1 to 3 plotted as pressure (Pa) against time (milliseconds).]
Fig. 3: A beam energy function computed by a beamforming algorithm, where the horizontal axis is the azimuth angle and the vertical axis is the elevation angle of the unit sphere. Local maxima of the beam energy function are treated as the most significant incoming directions of sound. The separation signal of each incoming direction is extracted from the input signals measured by the microphones by using the beam pattern.
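The direction-finding step of Eq. 1 can be sketched in NumPy as below. This is a minimal illustration under assumptions that are not from the paper: the MVDR beam energy is written in its generic form $y = 1/(a^H R^{-1} a)$ for an arbitrary set of steering vectors (the spherical-harmonics formulation of [11, 15] is not reproduced), a small diagonal loading is added for numerical stability, and $f_{\max}$ is approximated by a strict 8-neighbour peak search on a regular elevation-azimuth grid with azimuth wrap-around and a −20 dB floor. All function names are hypothetical.

```python
import numpy as np


def mvdr_spectrum(R, steering, loading=1e-3):
    """MVDR beam energy y = 1 / (a^H R^-1 a) for every candidate steering vector a.

    R        : (Q, Q) Hermitian covariance of the Q input channels at one frequency bin.
    steering : (D, Q) complex array, one steering vector per look direction.
    loading  : relative diagonal loading (an assumption, for numerical stability).
    """
    Q = R.shape[0]
    R_loaded = R + loading * np.trace(R).real / Q * np.eye(Q)
    R_inv = np.linalg.inv(R_loaded)
    # a^H R^-1 a for all D directions at once; real-valued for Hermitian R.
    denom = np.einsum("dq,qp,dp->d", steering.conj(), R_inv, steering).real
    return 1.0 / denom


def local_maxima(y, floor_db=-20.0):
    """Strict local maxima of y on an (elevation x azimuth) grid: a sketch of f_max.

    Azimuth wraps around, elevation does not; peaks more than |floor_db| below
    the global maximum are ignored (the threshold is an assumption).
    """
    n_el, n_az = y.shape
    y_db = 10.0 * np.log10(y / y.max())
    peaks = []
    for i in range(n_el):
        for k in range(n_az):
            if y_db[i, k] < floor_db:
                continue
            neighbours = [
                y[i + di, (k + dk) % n_az]
                for di in (-1, 0, 1)
                for dk in (-1, 0, 1)
                if (di, dk) != (0, 0) and 0 <= i + di < n_el
            ]
            if all(y[i, k] > v for v in neighbours):
                peaks.append((i, k))
    return peaks


def to_unit_vector(theta, phi):
    """Direction vector for polar angle theta (from +z) and azimuth phi, in radians."""
    return np.array([np.sin(theta) * np.cos(phi),
                     np.sin(theta) * np.sin(phi),
                     np.cos(theta)])


# Toy check 1: one source plus white noise, so the MVDR spectrum peaks at the source.
rng = np.random.default_rng(0)
Q, D = 8, 10
steering = rng.standard_normal((D, Q)) + 1j * rng.standard_normal((D, Q))
steering /= np.linalg.norm(steering, axis=1, keepdims=True)
a0 = steering[3]
R = np.outer(a0, a0.conj()) + 0.1 * np.eye(Q)
best_direction = int(np.argmax(mvdr_spectrum(R, steering)))   # 3

# Toy check 2: two bumps on a 10-degree grid (elevation 0..180, azimuth 0..350).
el = np.arange(19)[:, None]
az = np.arange(36)[None, :]


def bump(i0, k0, amp):
    dk = np.minimum(np.abs(az - k0), 36 - np.abs(az - k0))  # wrap-around azimuth distance
    return amp * np.exp(-((el - i0) ** 2 + dk ** 2) / 4.0)


y_grid = 1e-3 + bump(6, 5, 1.0) + bump(12, 30, 0.5)
peaks = local_maxima(y_grid)                                   # [(6, 5), (12, 30)]
directions = [to_unit_vector(np.deg2rad(10 * i), np.deg2rad(10 * k)) for i, k in peaks]
```

On real data, $y(\theta, \phi)$ would be evaluated at every grid direction of a frame and each detected peak converted to $\hat{d}_n$ with `to_unit_vector`; the paper reports about 8 such maxima per frame on average.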
We then extract separation signals, which could be heard from those incoming directions. For the $n$-th direction $\hat{d}_n$, the separation signal $S_n[f]$ is computed by designing and using a beamforming weight $W_n[f]$, which is a beam pattern in the spherical harmonics domain [11]:

$$S_n[f] = M[f] \cdot \{W_n[f]\}^*, \qquad (2)$$

where $f$ is a frequency, $M$ denotes the spherical harmonic coefficients, i.e., the measured signals (32 channels) transformed by the spherical Fourier transform, and $\{\cdot\}^*$ is the complex conjugate. All of the variables $S_n[f]$, $M[f]$, and $W_n[f]$ contain data for $L$ frequency bins ranging from 0 to 24 kHz.

B. Acoustic ray tracing

We explain how to generate acoustic rays from the estimated directions $[\hat{d}_1, \hat{d}_2, \cdots, \hat{d}_N]$, which are the inverse directions of the incoming sounds. Using the acoustic rays, we want to estimate the propagation paths (e.g., direct and reflection paths) of the sound from its source location to the microphone array. We generate such acoustic rays, considering direct and reflection paths, based on the RA-SSL algorithm [2].

For the $n$-th acoustic ray path, denoted by $R_n$, its primary acoustic ray $r_n^0$ is created in the $n$-th direction $\hat{d}_n$, as shown in Fig. 4. If the acoustic ray collides with an obstacle, its secondary, reflection ray is generated by assuming specular reflection and is denoted by $r_n^1$, where the superscript represents the order of the ray within the path. When $R_n$ is traced up to its $K$-th ray, the acoustic ray path $R_n$ consists of $K$ acoustic rays, i.e., $R_n = [r_n^0, r_n^1, \cdots, r_n^{K-1}]$.

[Figure 4: labels include Triangle 1, Triangle k, the back-propagation signal, the separation signal, and the origin of the microphone array.]
Fig. 4: An example of generating an acoustic ray path $R_n$ and its back-propagation signal. The primary acoustic ray $r_n^0$ of the $n$-th acoustic ray path $R_n$ is generated in the direction $\hat{d}_n$, which is the inverse direction of the $n$-th incoming sound.
When the acoustic ray $r_n^0$ hits an obstacle represented by Triangle 1, its reflection acoustic ray $r_n^1$ is generated according to specular reflection based on the normal vector $\hat{n}_1$ of Triangle 1. The back-propagation signal $P_n$ is computed from the separation signal $S_n$ by using the impulse response of $R_n$ at a specific point $\Pi_n$ on the path.

C. Back-propagation signals

We now describe how to compute back-propagation signals based on the acoustic ray paths $[R_1, R_2, \cdots, R_N]$ and the separation signals $[S_1, S_2, \cdots, S_N]$; there is a tuple $(R_n, S_n)$ for each direction vector $\hat{d}_n$, which is the inverse direction of the $n$-th incoming sound. We compute the back-propagation signal $P_n$ from the separation signal $S_n$ by designing and using an impulse response of backward sound propagation based on the acoustic ray path $R_n$. An impulse response characterizes the output of a linear time-invariant system as a function of time; in our system, the input is the separation signal and the output is the back-propagation signal.

In this work, we use the impulse response of backward propagation to improve the accuracy of sound source localization. In forward sound propagation, the impulse response of an acoustic ray path is described by attenuations due to the travel distance of the ray path and to reflections. For example, the travel distance attenuation represents a decrease of sound pressure inversely proportional to the travel distance of the ray path, because sound propagates as a spherical wave in 3D environments; the reflection attenuation is analogous. For the backward propagation problem, on the other hand, the attenuations due to travel distance and reflection become amplifications of the sound pressure.

Suppose that we aim to compute the back-propagation signal from the starting point to a specific point $\Pi_n$ (Fig. 4) on an acoustic ray path using the backward impulse response, given the $n$-th tuple $(R_n, S_n)$, where the acoustic ray path $R_n$ consists of $K$ acoustic rays $[r_n^0, \cdots, r_n^{K-1}]$; $r_n^0$ is the primary ray and $r_n^k$ is the $k$-th reflection ray ($1 \le k \le K-1$). In the frequency domain, the backward impulse response $H_n^{\Pi_n}$ is described by the amplifications due to the travel distance $l$ and the reflections up to the $k$-th order reflection ray $r_n^k$:

$$H_n^{\Pi_n}[f] = \exp\left(j \frac{2\pi f l}{c}\right) \cdot A_D[l] \cdot A_R[R_n, k, f], \qquad (3)$$

where the exponential term shifts the back-propagation signal by the time delay of sound propagation to the specific point $\Pi_n$, and $c$ is the speed of sound. $A_D$ is the travel distance amplification coefficient, defined as a function of the travel distance $l$: $A_D[l] = 4\pi(1 + l)$. $A_R$ is the reflection amplification coefficient, defined by considering specular reflections up to the $k$-th order reflection ray:

$$A_R[R_n, k, f] = \prod_{i=1}^{k} \frac{1}{\Gamma_i[f]}, \qquad (4)$$

where $\Gamma_i$ denotes the reflectivity (reflection coefficient) of the triangle hit by the $(i-1)$-th order ray; the reflection coefficient is a function of frequency $f$, and we use the coefficient values reported in [13]. The back-propagation signal $P_n^{\Pi_n}$ at the specific point $\Pi_n$ on the acoustic ray path $R_n$ is finally computed as the product of the backward impulse response $H_n^{\Pi_n}$ and the separation signal $S_n$ in the frequency domain:

$$P_n^{\Pi_n}[f] = S_n[f] \cdot H_n^{\Pi_n}[f]. \qquad (5)$$

D. Estimating a source position

We now explain how to estimate the source position using the back-propagation signals of acoustic ray paths.

The Monte Carlo sound source localization algorithm that identifies the convergence region of acoustic ray paths was suggested in prior work (RA-SSL) [2]; the convergence region is the area where acoustic ray paths gather.
However, in real environments containing a moving source, obstacles, and noises, the accuracy of RA-SSL can decrease. For example, background noises or complex configurations with obstacles can generate many arbitrary or incoherent acoustic ray paths, which may cause many convergence regions. In some cases, those convergence regions may be considered stronger than the one at the ground truth, deteriorating the accuracy of the localization method. By considering back-propagation signals, we aim to identify those arbitrary and incoherent acoustic ray paths and cull away acoustic ray paths whose back-propagation signals differ, since this indicates that they come from different sound sources.

Intuitively speaking, if two acoustic ray paths are caused by the same source, their back-propagation signals should be similar near the location of their sound source. In other words, when the back-propagation signals of two acoustic ray paths differ at a location, that location is unlikely to be a candidate for the convergence region of the sound source. Based on this observation, we design and use a similarity between back-propagation signals to robustly identify the sound source's location even in these difficult settings.

Fig. 5: Examples of determining the point on the acoustic ray path for computing the back-propagation signal. For the particle $x_j^2$, the perpendicular feet $\pi_2^k$ on all $k$-th order acoustic rays of the $n$-th acoustic ray path are computed. We then choose the representative perpendicular foot $\Pi_n^2$ that gives the shortest distance from $x_j^2$ to $R_n$.

Our estimation process is based on the Monte Carlo localization algorithm, which consists of three parts: sampling, computing the weights of particles, and resampling.
The main difference between our approach and the prior technique is a novel module that computes the weights of particles based on our back-propagation signals, which improves the localization accuracy.

Suppose there are particles $x_j^i$, indexed by $i$, representing hypothetical locations of the sound source at the $j$-th frame. We would like to compute how close each particle is to the acoustic ray paths. For this, we define a specific point $\Pi_n^i$ as the point on the $n$-th acoustic ray path with the shortest distance to $x_j^i$, i.e., $\Pi_n^i = \arg\min_{\pi_i^k} \lVert x_j^i - \pi_i^k \rVert$, where $\pi_i^k$ is the perpendicular foot from $x_j^i$ on the $k$-th order ray of the $n$-th acoustic ray path (Fig. 5). We then compute our back-propagation signal according to Eqs. 3 to 5 at the closest point $\Pi_n^i$ on the $n$-th acoustic ray path. From the back-propagation signal $P_n^{\Pi_n^i}[f]$ in the frequency domain, we compute the back-propagation signal $p_n^{\Pi_n^i}[t]$ in the time domain.

We then calculate a particle weight $w_j^i$, representing the probability of being a convergence region of the sound source, based on two factors: a distance weight $w_d$, representing how far the particle is from the $n$-th acoustic ray path, and a similarity weight $w_s$, indicating how similar $p_n^{\Pi_n^i}[t]$ is to the back-propagation signals of the other acoustic ray paths:

$$w_j^i = P(O_j \mid x_j^i) = \frac{1}{n_c} \sum_{n=1}^{N_j} \left[ w_d(x_j^i, R_n) + \alpha \cdot w_s(x_j^i, R_n) \right], \qquad (6)$$

where $N_j$ is the number of acoustic ray paths at the $j$-th frame, $O_j$ is the observation containing $[P_1^{\Pi_1^i}, \cdots, P_{N_j}^{\Pi_{N_j}^i}]$ and $[R_1, \cdots, R_{N_j}]$, $n_c$ is a normalizing constant, and $\alpha$ is a parameter that balances the two weights.
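The chain from the perpendicular foot $\Pi_n^i$ through Eqs. 2 to 5 to the time-domain signal $p_n^{\Pi_n^i}[t]$ can be sketched in NumPy as follows. This is a minimal sketch under assumptions the paper does not state: a ray path is stored as a polyline whose vertices are the array origin followed by the reflection points (the last vertex is wherever tracing stopped), $c = 343$ m/s, reflectivities are given per frequency bin, and an inverse real FFT returns to the time domain. The helper names are hypothetical.

```python
import numpy as np

C_SOUND = 343.0  # speed of sound in m/s (assumed value)


def reflect(d, normal):
    """Specular reflection of a unit direction d about a surface with unit normal."""
    return d - 2.0 * np.dot(d, normal) * normal


def closest_point_on_path(x, vertices):
    """Return (Pi, k, l) for particle x and a polyline ray path.

    vertices[k] and vertices[k + 1] bound ray r^k; Pi is the nearest perpendicular
    foot (clamped to each segment), k the order of its ray, and l the travel
    distance from the array origin vertices[0] to Pi along the path.
    """
    best = None
    travelled = 0.0
    for k in range(len(vertices) - 1):
        a, b = vertices[k], vertices[k + 1]
        ab = b - a
        t = np.clip(np.dot(x - a, ab) / np.dot(ab, ab), 0.0, 1.0)
        foot = a + t * ab
        dist = np.linalg.norm(x - foot)
        if best is None or dist < best[0]:
            best = (dist, foot, k, travelled + t * np.linalg.norm(ab))
        travelled += np.linalg.norm(ab)
    _, foot, k, l = best
    return foot, k, l


def backward_impulse_response(freqs, l, gammas, k):
    """Eqs. 3 and 4: H[f] = exp(j 2 pi f l / c) * 4 pi (1 + l) * prod_{i<=k} 1 / Gamma_i[f].

    gammas has shape (K - 1, F); row i - 1 holds Gamma_i, the reflectivity of the
    triangle hit by ray r^(i-1). For k = 0 the empty product evaluates to 1.
    """
    A_D = 4.0 * np.pi * (1.0 + l)
    A_R = np.prod(1.0 / gammas[:k], axis=0)
    return np.exp(1j * 2.0 * np.pi * freqs * l / C_SOUND) * A_D * A_R


def back_propagation_signal(M, W_n, freqs, l, gammas, k, n_samples):
    """Eq. 2 (S_n = M . W_n^*), Eq. 5 (P_n = S_n H), then back to the time domain."""
    S_n = np.sum(M * W_n.conj(), axis=1)
    P_n = S_n * backward_impulse_response(freqs, l, gammas, k)
    return np.fft.irfft(P_n, n=n_samples)


# Geometry: r^0 leaves the array along (1, 1, 0) and reflects off the wall x = 3 at (3, 3, 0).
d0 = np.array([1.0, 1.0, 0.0]) / np.sqrt(2.0)
hit = np.array([3.0, 3.0, 0.0])
d1 = reflect(d0, np.array([-1.0, 0.0, 0.0]))          # (-1, 1, 0) / sqrt(2)
vertices = [np.zeros(3), hit, hit + 2.0 * np.sqrt(2.0) * d1]
Pi, k, l = closest_point_on_path(np.array([2.0, 5.0, 0.0]), vertices)
# Pi = (1.5, 4.5, 0) on r^1, so k = 1 and l = 4.5 * sqrt(2)

# Signal: one impulse, one channel, unit beam weight, one reflection with Gamma = 0.5.
fs, n = 48_000, 3840
freqs = np.fft.rfftfreq(n, d=1.0 / fs)
x_mic = np.zeros(n)
x_mic[300] = 1.0
M = np.fft.rfft(x_mic)[:, None]
W_n = np.ones_like(M)
gammas = np.full((1, freqs.size), 0.5)
l_test = C_SOUND * 100 / fs          # a travel distance worth exactly 100 samples
p = back_propagation_signal(M, W_n, freqs, l_test, gammas, k=1, n_samples=n)
# The impulse moves from sample 300 to 200 and is scaled by 4 pi (1 + l_test) * 2.
```

The positive exponent in Eq. 3 advances the signal in time (with NumPy's FFT sign convention), which is why the toy impulse moves earlier; the gain follows directly from Eqs. 3 and 4, since one reflection with $\Gamma = 0.5$ doubles the amplitude and the travel distance contributes $4\pi(1 + l)$.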
The distance weight $w_d$ is calculated from the Euclidean distance between the particle location $x_j^i$ and the point $\Pi_n^i$:

$$w_d(x_j^i, R_n) = G(\lVert x_j^i - \Pi_n^i \rVert \mid 0, \sigma_w), \qquad (7)$$

where $G$ is the Gaussian distribution function with zero mean and standard deviation $\sigma_w$. $w_d$ is maximized when the particle $x_j^i$ lies at the perpendicular foot $\Pi_n^i$, i.e., on the $n$-th acoustic ray path.

The similarity weight $w_s$ measures the similarity between the back-propagation signal $p_n^{\Pi_n^i}$ of the $n$-th acoustic ray path and those of the other acoustic ray paths:

$$w_s(x_j^i, R_n) = \frac{1}{n_s} \sum_{m=1,\, m \neq n}^{N_j} \begin{cases} \dfrac{L - l_{cc}(n,m)}{L}, & \text{if } a_{cc}(n,m) > a_{th}, \\ 0, & \text{otherwise,} \end{cases} \qquad (8)$$

where $n_s$ is a normalizing constant, $L$ is the length of the back-propagation signal, $a_{cc}(\cdot)$ is the peak coefficient in the normalized range of $-1$ to $1$, $l_{cc}(\cdot)$ is the peak coefficient delay, and $a_{th}$ is the threshold value of $a_{cc}(\cdot)$. Both $a_{cc}(\cdot)$ and $l_{cc}(\cdot)$ are computed by applying the cross-correlation operation to the $n$-th and $m$-th signals:

$$a_{cc}(n,m) = \max_{\tau} \left\{ (p_n^{\Pi_n^i} \star p_m^{\Pi_m^i})[\tau] \right\}, \quad l_{cc}(n,m) = \arg\max_{\tau} \left\{ (p_n^{\Pi_n^i} \star p_m^{\Pi_m^i})[\tau] \right\}, \qquad (9)$$

where $\star$ is the cross-correlation operator.

As shown in Fig. 6, $a_{cc}(\cdot)$ represents how strongly the two back-propagation signals are correlated, and $l_{cc}$ gives the time difference between their occurrences. If both back-propagation signals come from the same sound source, ideally $a_{cc}$ and $l_{cc}$ become one and zero, respectively. Returning to Eq. 8, we treat two back-propagation signals as similar when their peak coefficient exceeds the threshold, i.e., $a_{cc} > a_{th}$.
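Eqs. 6 to 9 can be sketched in NumPy as below. The sketch fixes several details the paper leaves open, all of them assumptions: the cross-correlation is divided by the product of the two signal norms so that $a_{cc}$ lies in $[-1, 1]$, $l_{cc}$ is taken as the absolute lag in samples, $n_s = N_j - 1$, $G$ is the 1D Gaussian density, and the $1/n_c$ factor of Eq. 6 is left to a later normalization over all particles. The `signals` argument must already hold the back-propagation signals evaluated at this particle's points $\Pi_n^i$. Defaults follow the parameter values reported in Sec. V ($\alpha = 1$, $\sigma_w = 0.5$, $a_{th} = 0.15$); function names are hypothetical.

```python
import numpy as np


def peak_coefficient(p_n, p_m):
    """Return (a_cc, l_cc) of Eq. 9 for two equal-length back-propagation signals.

    The cross-correlation is divided by the product of the signal norms so that
    a_cc lies in [-1, 1]; l_cc is the absolute lag in samples (both assumptions).
    """
    xcorr = np.correlate(p_n, p_m, mode="full")
    xcorr = xcorr / (np.linalg.norm(p_n) * np.linalg.norm(p_m) + 1e-12)
    lags = np.arange(-(len(p_m) - 1), len(p_n))  # lag of each entry of the "full" output
    idx = int(np.argmax(xcorr))
    return float(xcorr[idx]), int(abs(lags[idx]))


def similarity_weight(signals, n, a_th=0.15):
    """w_s of Eq. 8 for path n; n_s = N_j - 1 is an assumed normalization."""
    L = len(signals[n])
    total = 0.0
    for m, p_m in enumerate(signals):
        if m == n:
            continue
        a_cc, l_cc = peak_coefficient(signals[n], p_m)
        if a_cc > a_th:
            total += (L - l_cc) / L   # full credit only for a zero delay
    return total / max(len(signals) - 1, 1)


def distance_weight(x, foot, sigma_w=0.5):
    """w_d of Eq. 7: zero-mean 1D Gaussian density of the particle-to-path distance."""
    d = np.linalg.norm(x - foot)
    return np.exp(-0.5 * (d / sigma_w) ** 2) / (np.sqrt(2.0 * np.pi) * sigma_w)


def particle_weight(x, feet, signals, alpha=1.0, sigma_w=0.5, a_th=0.15):
    """Eq. 6 without the 1/n_c factor; feet[n] is Pi_n and signals[n] the signal there."""
    return sum(distance_weight(x, feet[n], sigma_w) + alpha * similarity_weight(signals, n, a_th)
               for n in range(len(signals)))


# Toy check: paths 0 and 1 carry the same noise burst 5 samples apart, path 2 is unrelated.
rng = np.random.default_rng(1)
base = rng.standard_normal(3840)
signals = [base, np.roll(base, 5), rng.standard_normal(3840)]
w_s0 = similarity_weight(signals, 0)   # only path 1 counts: (3840 - 5) / 3840 / 2
w_s2 = similarity_weight(signals, 2)   # no partner above a_th: 0.0
feet = [np.zeros(3), np.array([0.2, 0.0, 0.0]), np.array([2.0, 0.0, 0.0])]
w = particle_weight(np.zeros(3), feet, signals)
```

Because the similarity term only rewards pairs whose peak coefficient exceeds $a_{th}$, an incoherent path (path 2 in the toy check) can still contribute distance weight but adds no similarity weight, which is the culling effect described in Sec. IV-D.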
When $a_{cc} > a_{th}$, we assign a higher weight according to the delay relative to the length of the signal, $(L - l_{cc})/L$; i.e., we give the highest weight when the two signals match without any delay, under the assumption that those two signals originate from the same sound source.

V. RESULTS AND DISCUSSION

In this section, we show how accurately our approach estimates the sound source location by measuring the distance errors between the ground truth and the estimated position. The yellow disk in Fig. 1 represents a 95% confidence area for the estimated source. We also compare the distance errors of our approach with those of the prior work (RA-SSL) to demonstrate the effectiveness of considering the back-propagation signals; RA-SSL corresponds to our approach without the similarity of the back-propagation signals.

The hardware platform consists of an Eigenmike, the 32-channel microphone array from mh acoustics, and a computer with an i7 CPU. For reconstructing indoor environments, we first collect a point cloud using a Kinect v1 and then build a mesh map consisting of triangles from the point cloud; the reflection coefficients are assigned to the triangles by referring to the values reported in [13].

We report the parameter values used for our algorithm: $\alpha$, which controls the influence of each weight, is 1; the standard deviation $\sigma_w$ of the Gaussian distribution function used for computing the distance weight is 0.5, determined by considering the size of the indoor environment (about one tenth of the room width of 7 m); and the threshold value $a_{th}$ for checking the correlation between back-propagation signals is 0.15. We also show the results for different parameter values in Sec. V-C. We use 3840 samples for the separation signal at a sampling frequency of 48 kHz; 3840 audio samples (80 ms) are long enough to cover the direct and first reflection signals, as indicated in [4].

[Figure 6: two back-propagation signals, each plotted as pressure (Pa) against time (milliseconds), pass through the cross-correlation operation, which yields the coefficient as a function of the delay from 0.]
Fig. 6: An example of computing the peak coefficient $a_{cc}$ and the peak coefficient delay $l_{cc}$ using the cross-correlation operation. Given two back-propagation signals, $p_n^{\Pi_n^i}$ and $p_m^{\Pi_m^i}$ at $\Pi_n^i$ and $\Pi_m^i$, respectively, we perform the cross-correlation between the two signals. The maximum coefficient becomes the peak coefficient $a_{cc}$, and the time delay from the time origin, 0, to the time of the maximum coefficient becomes the peak coefficient delay $l_{cc}$.

A. Benchmarks

Experiments were conducted in two scenes: a moving sound without and with an obstacle. In both environments (Fig. 7a and Fig. 7b), a robot equipped with an omnidirectional speaker moved along the red trajectory, the 32-channel microphone array recorded the audio signals, and these data, together with the ground-truth sound source locations, were used for various tests. In Fig. 7b, we placed an obstacle made of paper boxes so that the robot becomes invisible to the microphone array along part of its trajectory; in the invisible area, the sound source becomes a non-line-of-sight (NLOS) source. Handling an NLOS source was reported to be quite difficult for RA-SSL, because the direct sound propagation paths are blocked by the obstacle and we have to rely on indirect sound paths that are incoherent and sensitive to noise. Furthermore, the number of indirect acoustic ray paths passing near the ground truth is usually small, and thus the accuracy of the localization algorithm tends to deteriorate.

[Figure 7(a): the environment without the obstacle; labels: sound source, microphone array, trajectory, starting point, goal.]
[Fig. 7(b): The environment with the obstacle, showing the sound source, obstacle, invisible area, microphone array, trajectory, starting point, and goal.]

Fig. 7: The test environments with and without an obstacle that can make the sound source a non-line-of-sight source.

We use a clapping sound as the sound source. In addition, since these scenes are not controlled, they are not free from the noise that naturally occurs in a typical environment, as shown in Fig. 9. Noise can trigger many incoherent acoustic ray paths, preventing them from converging to a single location.

B. A moving sound source

[Fig. 8(a): Accuracy for the moving sound without the obstacle (Fig. 7a); distance error (m) vs. time (s) for Reflection-Aware SSL and our approach.]

We first show the advantage of our approach over RA-SSL in a simple scene with a moving sound source. In Fig. 8a, the black and red graphs denote the distance errors of RA-SSL and our approach, respectively. The average distance errors over the whole test are 0.9231 m for RA-SSL and 0.5594 m for our approach; that is, our approach improves the localization accuracy by about 65%. The experimental environments in Fig. 7 contain many kinds of noise, caused by the experimenters and by sources outside, as shown in Fig. 9, and this noise generates acoustic rays that do not help to localize the sound source. In particular, when the moving sound source turns the corner between 60 s and 70 s, the accuracy of RA-SSL deteriorates significantly. In this case, RA-SSL incorrectly estimates source positions near the noisy area (Fig. 9), even though the signals from the noise source and from the ground-truth source are different. In contrast, the red graph shows that our method remains robust even in this case, thanks to considering the back-propagation signals at the estimated source locations; the similarity weight improves the robustness of the source localization algorithm.
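The improvement percentages appear to express how much larger RA-SSL's average error is than ours, i.e. $(e_{\text{RA-SSL}} / e_{\text{ours}} - 1) \times 100\%$, rather than the fractional reduction in error. This reading is our assumption; the text does not state a definition. The short Python check below shows that it reproduces the reported figures: about 65% here, and about 223% for the obstacle scene of Sec. V-C, which the text rounds to 220%. Under the fractional-reduction reading, the same errors would give about 39% and 69%. The function names are illustrative only.

```python
def ratio_improvement(err_prior, err_ours):
    """Improvement (%) read as how much larger the prior error is than ours (assumed reading)."""
    return (err_prior / err_ours - 1.0) * 100.0


def error_reduction(err_prior, err_ours):
    """Alternative reading (%): the fraction of the prior error that is removed."""
    return (err_prior - err_ours) / err_prior * 100.0


# Average distance errors (m) reported in Sec. V-B (no obstacle) and Sec. V-C (obstacle).
no_obstacle = (0.9231, 0.5594)
with_obstacle = (1.4919, 0.4623)

for name, (prior, ours) in [("no obstacle", no_obstacle), ("obstacle", with_obstacle)]:
    print(f"{name}: ratio {ratio_improvement(prior, ours):.1f}%, "
          f"reduction {error_reduction(prior, ours):.1f}%")
```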
[Fig. 8(b): Accuracy for the moving sound with the obstacle (Fig. 7b); distance error (m) vs. time (s).]

Fig. 8: The distance errors between the ground truth and the estimated source positions, where the black line is for the prior work (RA-SSL) and the red line is for our approach.

[Fig. 9: Layout of the test environment, showing the microphone array, the sound source, direct and reflection paths, the door, experimenter noise, and outside noise.]

Fig. 9: The test environment contains many noise sources, some of which come from outside and from the experimenters at the bottom. They generate incoherent acoustic rays in addition to those from the sound source.

C. A moving sound around an obstacle

We now show results in the more challenging environment, which includes an obstacle between the source trajectory and the microphone array, as shown in Fig. 7b. Fig. 8b shows the distance errors of RA-SSL and our approach. The average distance errors of RA-SSL and our approach are 1.4919 m and 0.4623 m, respectively. In particular, while the sound source is in the NLOS state from 40 s to 70 s, the accuracy of RA-SSL decreases drastically, because blocking the direct sound propagation paths weakens the convergence of acoustic rays near the ground truth. In contrast, even in this challenging case, we obtain a stable result, a 220% improvement over RA-SSL, by considering the similarity between the back-propagation signals of indirect acoustic paths.

To analyze the effects of varying $\alpha$, $\sigma_w$, and $a_{th}$, we measure the average distance errors over various parameter values.

TABLE I: The average distance errors over various parameter values.
Varying $\alpha$ (with $\sigma_w = 0.5$, $a_{th} = 0.15$): 0.5 → 0.55 m; 0.75 → 0.52 m; 1.0 → 0.46 m; 1.25 → 0.63 m; 1.5 → 0.60 m
Varying $\sigma_w$ (with $\alpha = 1.0$, $a_{th} = 0.15$): 0.3 → 0.44 m; 0.4 → 0.48 m; 0.5 → 0.46 m; 0.6 → 0.57 m; 0.7 → 0.65 m
Varying $a_{th}$ (with $\sigma_w = 0.5$, $\alpha = 1.0$): 0.1 → 0.52 m; 0.125 → 0.47 m; 0.15 → 0.46 m; 0.175 → 1.12 m; 0.2 → 1.30 m

Table I shows that our algorithm is robust to parameter changes when $\alpha$, $\sigma_w$, and $a_{th}$ vary from 0.5 to 1.5, from 0.3 to 0.7, and from 0.1 to 0.15, respectively. However, once $a_{th}$, the threshold for checking the peak coefficient, reaches about 0.17 (0.175 in Table I), the accuracy decreases dramatically. This is mainly because the peak coefficients of pairs of back-propagation signals originating from the same source lie between 0.17 and 0.23. As a result, when $a_{th}$ is too large (0.17 or more), even similar signals are filtered out, which forces our approach to fall back to behaving like the prior method, RA-SSL. Nonetheless, even in this case our approach outperforms the prior method, whose average error is 1.4919 m.

D. Analysis of back-propagation signals

Let us examine how back-propagation signals benefit 3D sound source localization. Fig. 10 shows four separation signals observed from different incoming directions of the same sound source. On the right side of the figure, we also show the four back-propagation signals generated from those separation signals at the ground-truth location. The width of the red rectangle (a) on the left side of the figure indicates the time difference among the separation signals, caused by the differences in the lengths of their sound propagation paths. After computing the back-propagation signals from those separation signals, we observe that the time difference among the back-propagation signals, denoted by the width of the red rectangle (b), is reduced. This reduction from (a) to (b) can be interpreted as the back-propagation signals being better restored, since they all originate from the same sound source.

We also measure the cross-correlation between signals. When every pair of separation signals in Fig. 10 is analyzed with the cross-correlation operation, the averages of the peak coefficients and peak coefficient delays are 0.2183 and 280 samples (about 5.8 ms at 48 kHz), respectively. For the back-propagation signals, the averages of the peak coefficients and peak coefficient delays are 0.2245 and 35 samples (about 0.73 ms). These values indicate that the computed back-propagation signals are restored such that they are similar to each other. Note that a higher peak coefficient indicates more strongly correlated signals, and a peak coefficient delay close to zero indicates that the signals are well aligned in time.

[Fig. 10: Sound pressure (Pa) vs. time (ms) for Directions 1 to 4; separation signals (left) and back-propagation signals (right), with red rectangles (a) and (b).]

Fig. 10: On the left, we show separation signals heard from different incoming directions of the same sound source, while the right side shows their corresponding back-propagation signals. The widths of the red rectangles, (a) and (b), represent the time differences of the separation and back-propagation signals, respectively. The time difference (b) of the back-propagation signals is smaller than that of the separation signals (a), indicating that the back-propagation signals are more similar to each other than the separation signals are.

VI. LIMITATIONS AND CONCLUSION

We have presented a novel sound source localization algorithm using back-propagation signals. After estimating the propagation paths of the sound by generating acoustic ray paths, we consider the back-propagation signal virtually computed at a specific point on each acoustic ray path.
We utilize those back-propagation signals of different acoustic paths to robustly identify the converging region of the source, even in environments with noise and an obstacle.

While we have demonstrated the benefits of our approach, it has several limitations that open up many interesting future directions. As shown in Fig. 8, our accuracy is in some cases lower than that of the prior work, because we crop an audio segment of fixed length from the signal measured at the microphone at every fixed cycle; the cropped segment may not capture enough of the signal at the beginning of the sound. We plan to address this problem by detecting and cropping meaningful audio segments from the measured signal. Currently, real-time computation of our method is not guaranteed, owing to the preliminary implementation of our proof-of-concept system: the beamforming module runs in Matlab, and the cross-correlation operations over pairs of available acoustic ray paths (e.g., 8 paths on average in our tests) run serially. We plan to address this issue by re-implementing the beamforming module in C++ and performing the cross-correlation operations in parallel. Other sound propagation phenomena that are frequently observed at low frequencies, such as scattering and diffraction, are not yet handled. Fortunately, a ray-tracing-based approach supporting the diffraction effect has recently been proposed [1] and can be adopted for our method. The acoustic material properties, such as the reflection coefficients of the triangles of objects, are not assigned automatically; deep learning approaches that show promising results could be employed to solve this problem [13].

REFERENCES

[1] Inkyu An, Doheon Lee, Jung-woo Choi, Dinesh Manocha, and Sung-eui Yoon. Diffraction-aware sound localization for a non-line-of-sight source. arXiv preprint arXiv:1809.07524, 2018.
[2] Inkyu An, Myungbae Son, Dinesh Manocha, and Sung-eui Yoon. Reflection-aware sound source localization. In ICRA, 2018.
[3] Chunxiao Cao, Zhong Ren, Carl Schissler, Dinesh Manocha, and Kun Zhou. Interactive sound propagation with bidirectional path tracing. ACM Transactions on Graphics (TOG), 35(6):180, 2016.
[4] Jingdong Chen and Jacob Benesty. A time-domain widely linear MVDR filter for binaural noise reduction. In Applications of Signal Processing to Audio and Acoustics (WASPAA), 2011 IEEE Workshop on, pages 105–108. IEEE, 2011.
[5] Dima Khaykin and Boaz Rafaely. Coherent signals direction-of-arrival estimation using a spherical microphone array: Frequency smoothing approach. In Applications of Signal Processing to Audio and Acoustics, 2009. WASPAA '09. IEEE Workshop on, pages 221–224. IEEE, 2009.
[6] C. Knapp and G. Carter. The generalized correlation method for estimation of time delay. IEEE Trans. Acoust., Speech, Signal Process., 24(4):320–327.
[7] Dingzeyu Li, Timothy R Langlois, and Changxi Zheng. Scene-aware audio for 360° videos. arXiv preprint arXiv:1805.04792, 2018.
[8] Xuan Li, Shefeng Yan, Xiaochuan Ma, and Chaohuan Hou. Spherical harmonics MUSIC versus conventional MUSIC. Applied Acoustics, 72(9):646–652, 2011.
[9] Boaz Rafaely. Analysis and design of spherical microphone arrays. IEEE Transactions on Speech and Audio Processing, 13(1):135–143, 2005.
[10] Boaz Rafaely. Phase-mode versus delay-and-sum spherical microphone array processing. IEEE Signal Processing Letters, 12(10):713–716, 2005.
[11] Boaz Rafaely. Fundamentals of Spherical Array Processing, volume 8. Springer, 2015.
[12] Carl Schissler, Ravish Mehra, and Dinesh Manocha. High-order diffraction and diffuse reflections for interactive sound propagation in large environments. ACM Transactions on Graphics (TOG), 33(4):39, 2014.
[13] Carl Schissler, Christian Loftin, and Dinesh Manocha. Acoustic classification and optimization for multi-modal rendering of real-world scenes. IEEE Transactions on Visualization and Computer Graphics, 24(3):1246–1259, 2018.
[14] J.-M. Valin, F. Michaud, and J. Rouat. Robust localization and tracking of simultaneous moving sound sources using beamforming and particle filtering. Robot. Auton. Syst., 55(3).
[15] Shefeng Yan, Haohai Sun, U Peter Svensson, Xiaochuan Ma, and Jens M Hovem. Optimal modal beamforming for spherical microphone arrays. IEEE Transactions on Audio, Speech, and Language Processing, 19(2):361–371, 2011.
[16] Hengchin Yeh, Ravish Mehra, Zhimin Ren, Lakulish Antani, Dinesh Manocha, and Ming Lin. Wave-ray coupling for interactive sound propagation in large complex scenes. ACM Transactions on Graphics (TOG), 32(6):165, 2013.
