Fully Supervised Speaker Diarization

In this paper, we propose a fully supervised speaker diarization approach, named unbounded interleaved-state recurrent neural networks (UIS-RNN). Given extracted speaker-discriminative embeddings (a.k.a. d-vectors) from input utterances, each individ…

Authors: Aonan Zhang, Quan Wang, Zhenyao Zhu

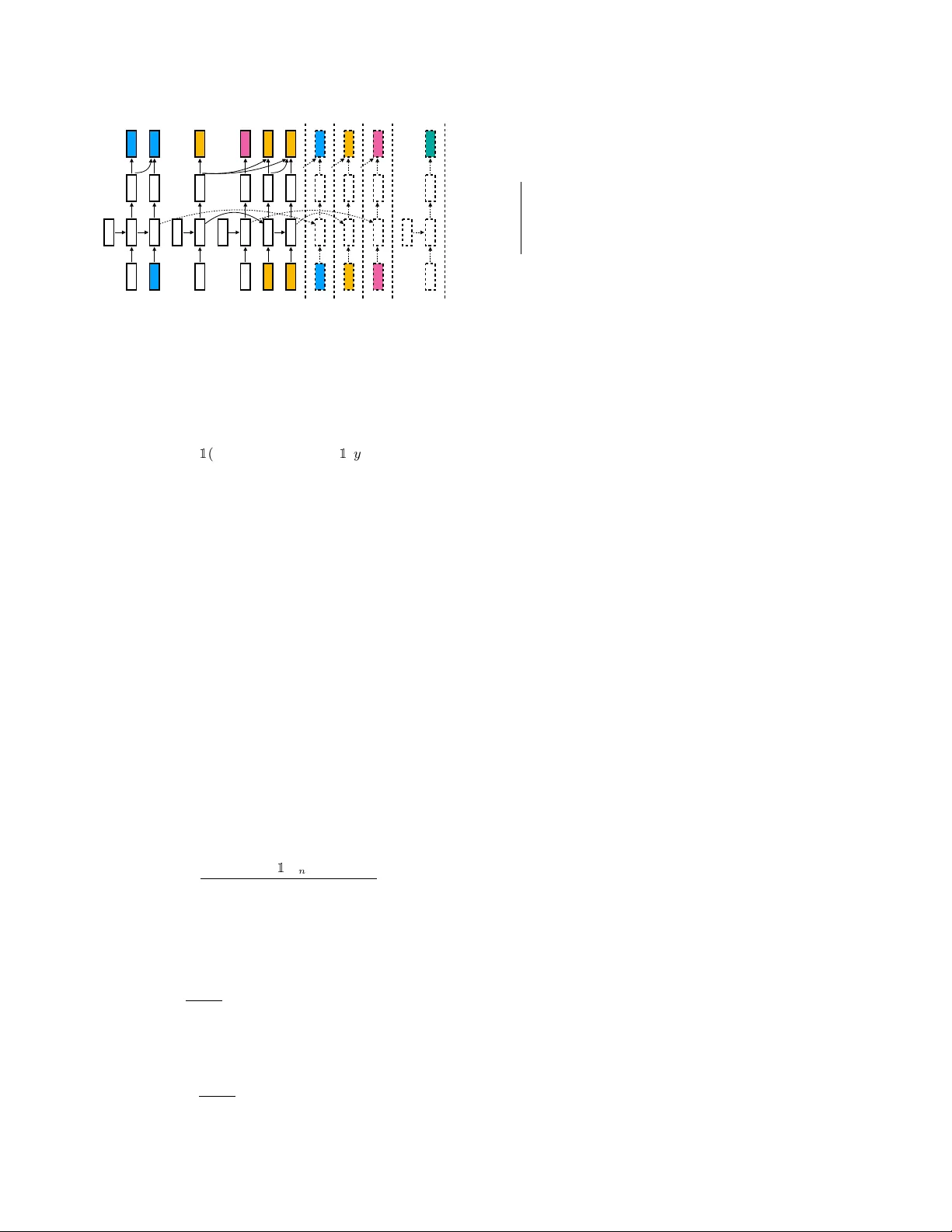

FULL Y SUPER VISED SPEAKER DIARIZA TION Aonan Zhang 1,2 Quan W ang 1 Zhenyao Zhu 1 J ohn P aisley 2 Chong W ang 1 1 Google Inc., USA 2 Columbia Uni versity , USA 1 { aonan , quanw , zyzhu , chongw } @google.com 2 { az2385 , jpaisley } @columbia.edu ABSTRA CT In this paper , we propose a fully supervised speaker diarization approach, named unbounded interleaved-state r ecurr ent neural networks (UIS-RNN). Giv en extracted speak er-discriminati ve em- beddings ( a.k.a. d-vectors) from input utterances, each individual speaker is modeled by a parameter-sharing RNN, while the RNN states for different speak ers interleav e in the time domain. This RNN is naturally integrated with a distance-dependent Chinese restaurant process (ddCRP) to accommodate an unknown number of speakers. Our system is fully supervised and is able to learn from examples where time-stamped speaker labels are annotated. W e achiev ed a 7.6% diarization error rate on NIST SRE 2000 CALLHOME, which is better than the state-of-the-art method using spectral clustering. Moreov er , our method decodes in an online f ashion while most state-of-the-art systems rely on offline clustering. Index T erms — Speaker diarization, d-v ector , clustering, recur- rent neural networks, Chinese restaurant process 1. INTR ODUCTION Aiming to solve the problem of “who spoke when”, most existing speaker diarization systems consist of multiple relativ ely indepen- dent components [ 1 , 2 , 3 ], including but not limited to: (1) A speech segmentation module, which removes the non-speech parts, and di- vides the input utterance into small segments; (2) An embedding ex- traction module, where speaker -discriminativ e embeddings such as speaker factors [ 4 ], i-vectors [ 5 ], or d-vectors [ 6 ] are extracted from the small segments; (3) A clustering module, which determines the number of speakers, and assigns speaker identities to each segment; (4) A resegmentation module, which further refines the diarization results by enforcing additional constraints [ 1 ]. For the embedding extraction module, recent work [ 2 , 3 , 7 ] has sho wn that the diarization performance can be significantly im- prov ed by replacing i-vectors [ 5 ] with neural network embeddings, a.k.a. d-vectors [ 6 , 8 ]. This is largely due to the fact that neu- ral networks can be trained with big datasets, such that the model is sufficiently robust against varying speaker accents and acoustic conditions in different use scenarios. Howe ver , there is still one component that is unsupervised in most modern speaker diarization systems — the clustering module. Examples of clustering algorithms that ha ve been used in diarization systems include Gaussian mixture models [ 7 , 9 ], mean shift [ 10 ], agglomerativ e hierarchical clustering [ 2 , 11 ], k-means [ 3 , 12 ], Links [ 3 , 13 ], and spectral clustering [ 3 , 14 ]. The first author performed this work as an intern at Google. The implementation of the algorithms in this paper is av ailable at: https://github.com/google/uis- rnn Since both the number of speak ers and the se gment-wise speaker labels are determined by the clustering module, the quality of the clustering algorithm is critically important to the final diarization performance. Howe ver , the fact that most clustering algorithms are unsupervised means that, we will not able to improve this module by learning from examples when the time-stamped speaker labels ground truth are a v ailable. In fact, in many domain-specific applica- tions, it is relativ ely easy to obtain such high quality annotated data. In this paper, we replace the unsupervised clustering module by an online generati ve process that naturally incorporates labelled data for training. W e call this method unbounded interleaved-state re- curr ent neural network (UIS-RNN), based on these facts: (1) Each speaker is modeled by an instance of RNN, and these instances share the same parameters; (2) An unbounded number of RNN instances can be generated; (3) The states of different RNN instances, cor- responding to dif ferent speakers, are interlea ved in the time domain. W ithin a fully supervised frame work, our method in addition handles complexities in speaker diarization: it automatically learns the num- ber of speak ers within each utterance via a Bayesian non-parametric process, and it carries information through time via the RNN. The contributions of our w ork are summarized as follows: 1. Unbounded interleaved-state RNN, a trainable model for the general problem of segmenting and clustering temporal data by learning from examples. 2. Frame work for a fully supervised speaker diarization system. 3. Ne w state-of-the-art performance on NIST SRE 2000 CALL- HOME benchmark. 4. Online diarization solution with of fline quality . 2. BASELINE SYSTEM USING CLUSTERING Our diarization system is built on top of the recent w ork by W ang et al. [ 3 ]. Specifically , we use exactly the same segmentation module and embedding extraction module as their system, while replacing their clustering module by an unbounded interleav ed-state RNN. As a brief re vie w , in the baseline system [ 3 ], a text-independent speaker recognition netw ork is used to e xtract embeddings from slid- ing windows of size 240ms and 50% overlap. A simple voice acti v- ity detector (V AD) with only two full-cov ariance Gaussians is used to remove non-speech parts, and partition the utterance into non- ov erlapping segments with max length of 400ms. Then we average window-le vel embeddings to se gment-le vel d-vectors, and feed them into the clustering algorithm to produce final diarization results. The workflo w of this baseline system is shown in Fig. 1 . The text-independent speaker recognition network for comput- ing embeddings has three LSTM layers and one linear layer . The network is trained with the state-of-the-art generalized end-to-end loss [ 6 ]. W e have been retraining this model for better performance, which will be later discussed in Section 4.1 . …… sliding windows window step window size …… d-vectors …… segments …… diarization results Run LSTM Aggregate Cluster Process Fig. 1 . The baseline system architecture [ 3 ]. 3. UNBOUNDED INTERLEA VED-ST A TE RNN 3.1. Overview of approach Giv en an utterance, from the embedding extraction module, we get an observation sequence of embeddings X = ( x 1 , x 2 , . . . , x T ) , where each x t ∈ R d . Each entry in this sequence is a real-valued d-vector corresponding to a segment in the original utterance. In the supervised speaker diarization scenario, we also have the ground truth speaker labels for each se gment Y = ( y 1 , y 2 , . . . , y T ) . With- out loss of generality , let Y be a sequence of positiv e inte gers by the order of appearance. For example, Y = (1 , 1 , 2 , 3 , 2 , 2) means this utterance has six segments, from three different speakers, where y t = k means seg- ment t belongs to speaker k . UIS-RNN is an online generati ve process of an entire utterance ( X , Y ) , where 1 p ( X , Y ) = p ( x 1 , y 1 ) · T Y t =2 p ( x t , y t | x [ t − 1] , y [ t − 1] ) . (1) T o model speaker changes, we use an augmented representation p ( X , Y , Z ) = p ( x 1 , y 1 ) · T Y t =2 p ( x t , y t , z t | x [ t − 1] , y [ t − 1] , z [ t − 1] ) , (2) where Z = ( z 2 , . . . , z T ) , and z t = 1 ( y t 6 = y t − 1 ) ∈ { 0 , 1 } is a binary indicator for speaker changes. F or example, if Y = (1 , 1 , 2 , 3 , 2 , 2) , then Z = (0 , 1 , 1 , 1 , 0) . Note that Z is uniquely determined by Y , b ut Y cannot be uniquely determined by a given Z , since we don’t know which speaker we are changing to. Here we leav e z 1 undefined, and factorize each product term in Eq. ( 2 ) as three parts that separately model sequence generation, speaker as- signment, and speaker change: p ( x t , y t , z t | x [ t − 1] , y [ t − 1] , z [ t − 1] ) = p ( x t | x [ t − 1] , y [ t ] ) | {z } sequence generation · p ( y t | z t , y [ t − 1] ) | {z } speaker assignment · p ( z t | z [ t − 1] ) | {z } speaker change . (3) For the first entry of the sequence, we let y 1 = 1 and there is no need to model speaker assignment and speaker change. In Section 3.2 , we introduce these components separately . 1 W e denote an ordered set (1 , 2 , . . . , t ) as [ t ] . 3.2. Details on model components 3.2.1. Speaker change W e assume the probability of z t ∈ { 0 , 1 } follo ws: p ( z t = 0 | z [ t − 1] , λ λ λ ) = g λ λ λ ( z [ t − 1] ) , (4) where g λ λ λ ( · ) is a function paramaterized by λ λ λ . Since z t indicates speaker change at time t , we ha ve p ( y t = y t − 1 | z t , y [ t − 1] ) = 1 − z t . (5) In general, g λ λ λ ( · ) could be any function, such as an RNN. But for simplicy , in this work, we make it a constant value g λ λ λ ( z [ t − 1] ) = p 0 ∈ [0 , 1] . This means { z t } t ∈ [2 ,T ] are independent binary variables parameterized by λ λ λ = { p 0 } : z t ∼ iid. Binary ( p 0 ) . (6) 3.2.2. Speaker assignment pr ocess One of the biggest challenges in speaker diarization is to deter- mine the total number of speakers for each utterance. T o model the speaker turn behavior in an utterance, we use a distance de- pendent Chinese restaurant process (ddCRP) [ 15 ], a Bayesian non- parametric model that can potentially model an unbounded number of speakers. Specifically , when z t = 0 , the speaker remains un- changed. When z t = 1 , we let p ( y t = k | z t = 1 , y [ t − 1] ) ∝ N k,t − 1 , p ( y t = K t − 1 + 1 | z t = 1 , y [ t − 1] ) ∝ α. (7) Here K t − 1 : = max y [ t − 1] is the total number of unique speakers up to the ( t − 1) -th entry . Since z t = 1 indicates a speaker change, we hav e k ∈ [ K t − 1 ] \ { y t − 1 } . In addition, we let N k,t − 1 be the number of blocks for speaker k in y [ t − 1] . A block is defined as a maximum-length subsequence of continuous segments that belongs to a single speaker . For example, if y [6] = (1 , 1 , 2 , 3 , 2 , 2) , then there are four blocks (1 , 1) | (2) | (3) | (2 , 2) separated by the vertical bar , with N 1 , 5 = 1 , N 2 , 5 = 2 , N 3 , 5 = 1 . The probability of switching back to a previously appeared speaker is proportional to the number of continuous speeches she/he has spoken. There is also a chance to switch to a ne w speaker , with a probability proportional to a constant α . The joint distribution of Y given Z is p ( Y | Z , α ) = α K T − 1 Q K T k =1 Γ( N k,T ) Q T t =2 ( P k ∈ [ K t − 1 ] \{ y t − 1 } N k,t − 1 + α ) 1 ( z t =1) . (8) 3.2.3. Sequence generation Our basic assumption is that, the observation sequence of speaker embeddings X is generated by distributions that are parameterized by the output of an RNN. This RNN has multiple instantiations, cor - responding to different speakers, and the y share the same set of RNN parameters θ θ θ . In our work, we use gated recurrent unit (GR U) [ 16 ] as our RNN model, to memorize long-term dependencies. At time t , we define h t as the state of the GR U corresponding to speaker y t , and m t = f ( h t | θ θ θ ) (9) as the output of the entire network, which may contain other layers. Let t 0 : = max { 0 , s < t : y s = y t } be the last time we saw speaker y t before t , then: h t = GR U ( x t 0 , h t 0 | θ θ θ ) , (10) x 1 x 0 x 0 x 3 x 0 h 2 h 0 h 3 h 0 h 4 h 5 h 1 h 0 m 2 m 3 m 4 m 5 m 1 x 1 x 2 x 5 x 2 h 6 h 7 m 6 m 7 x 3 x 4 x 5 x 6 x 7 x 6 h 7 m 7 x 7 x 4 h 7 m 7 x 7 h 0 x 0 h 7 m 7 x 7 current stage y 7 =1 y 7 =2 y 7 =3 y 7 =4 Fig. 2 . Generati ve process of UIS-RNN. Colors indicate labels for speaker segments. There are four options for y 7 giv en x [6] , y [6] . where we can assume x 0 = 0 and h 0 = 0 , meaning all GRU in- stances are initialized with the same zero state. Based on the GR U outputs, we assume the speaker embeddings are modeled by: x t | x [ t − 1] , y [ t ] ∼ N ( µ µ µ t , σ 2 I ) , (11) where µ µ µ t = ( P t s =1 1 ( y s = y t )) − 1 · ( P t s =1 1 ( y s = y t ) m s ) is the av eraged GR U output for speaker y t . 3.2.4. Summary of the model W e briefly summarize UIS-RNN in Fig. 2 , where Z and λ λ λ are omit- ted for a simple demonstration. At the current stage (shown in solid lines) y [6] = (1 , 1 , 2 , 3 , 2 , 2) . There are four options for y 7 : 1 , 2 , 3 (existing speakers), and 4 (a ne w speaker). The probability for gen- erating a new observ ation x 7 (shown in dashed lines) depends both on previous label assignment sequence y [6] , and previous observ a- tion sequence x [6] . 3.3. MLE Estimation Giv en a training set ( X 1 , X 2 , . . . , X N ) containing N utterances to- gether with their labels ( Y 1 , Y 2 , . . . , Y N ) , we maximize the fol- lowing log joint lik elihood: max θ θ θ,α,σ 2 , λ λ λ N X n =1 ln p ( X n , Y n , Z n | θ θ θ , α, σ 2 , λ λ λ ) . (12) Here we include all hyper -parameters, and each term in Eq. ( 12 ) can be factorized exactly as Eq. ( 2 ). The estimation of λ λ λ depends on how g λ λ λ ( · ) is defined. When we simply hav e g λ λ λ ( z [ t − 1] ) = p 0 , we hav e a closed-form solution: p ∗ 0 = P N n =1 P T n t =2 1 ( y n,t = y n,t − 1 ) P N n =1 T n − N , (13) where T n denotes the sequence length of the n th utterance. For θ θ θ and σ 2 , there is no closed-form update. W e use stochas- tic gradient ascent by randomly selecting a subset B ( τ ) ⊂ [ N ] of | B ( τ ) | = b utterances. For θ θ θ , we update: θ θ θ ( τ ) = θ θ θ ( τ − 1) + N ρ ( τ ) b X n ∈ B ( τ ) ∇ θ θ θ ln p ( X n | Y n , Z n , θ θ θ , − ) , (14) since θ θ θ is independent of ( Y n , Z n ) . Eq. ( 15 ) also applies to σ 2 by replacing θ θ θ with σ 2 . For α , we update α ( τ ) = α ( τ − 1) + N ρ ( τ ) b X n ∈ B ( τ ) ∇ α ln p ( Y n | Z n , α, − ) , (15) Data: X test = ( x test 1 , x test 2 , . . . , x test T ) Result: Y ∗ = ( y ∗ 1 , y ∗ 2 , . . . , y ∗ T ) initialize x 0 = 0 , h 0 = 0 ; for t = 1 , 2 , . . . , T do ( y ∗ t , z ∗ t ) = arg max ( y t ,z t ) ln p ( z t ) Eq. ( 6 ) + ln p ( y t | z t , y ∗ [ t − 1] ) Eq. ( 5 , 7 ) + ln p ( x t | x [ t − 1] , y ∗ [ t − 1] , y t ) Eq. ( 11 ) update N k,t − 1 and GR U hidden states; end Algorithm 1: Online greedy MAP decoding for UIS-RNN. where p ( Y n | Z n , α, − ) is giv en in Eq. ( 8 ). In our experiments, we run multiple iterations with a constant step size ρ ( τ ) = ρ until con ver gence. 3.4. MAP Decoding Since we can decode each testing utterance in parallel, here we as- sume we are given a testing utterance X test = ( x 1 , x 2 . . . , x T ) without labels. The ideal goal is to find Y ∗ = arg max Y ln p ( X test , Y ) . (16) Howe ver , this requires an exhaustiv e search over the entire combi- natorial label space with complexity O ( T !) , which is impractical. Instead, we use an online decoding approach which sequentially per- forms a greedy search, as sho wn in Alg. 1 . This will significantly re- duce computational complexity to O ( T 2 ) . W e observe that in most cases the maximum number of speakers per-utterance is bounded by a constant C . In that case, the complexity will further reduce to O ( T ) . In practice, we apply a beam search [ 17 ] on the decoding algorithm, and adjust the number of look-ahead entries to achiev e better decoding results. 4. EXPERIMENTS 4.1. Speaker recognition model W e hav e been retraining the speaker recognition network with more data and minor tricks (see next few paragraphs) to improve its per- formance. Let’ s call the te xt-independent speak er recognition model in [ 3 , 6 , 18 ] as “d-vector V1”. This model is trained with 36M ut- terances from 18K US English speakers, which are all mobile phone data based on anonymized v oice query logs. T o train a new version of the model, which we call “d-vector V2” [ 19 ], we added: (1) non-US English speakers; (2) data from far-field devices; (3) public datasets including LibriSpeech [ 20 ], V oxCeleb [ 21 ], and V oxCeleb2 [ 22 ]. The non-public part contains 34M ut- terances from 138K speakers, while the public part is added to the training process using the MultiReader approach [ 6 ]. Another minor b ut important trick is that, the speaker recognizer model used in [ 3 ] and [ 6 ] are trained on windows of size 1600ms, which causes performance degradation when we run inference on smaller windows. For example, in the diarization system, the win- dow size is only 240ms. Thus we have retrained a ne w model “d- vector V3” by using variable-length windows, where the window size is drawn from a uniform distribution within [240 ms , 1600 ms ] during training. The speaker verification Equal Error Rate (EER) of the three models on two testing sets are shown in T able 1 . On speaker veri- fication tasks, adding more training data has significantly improv ed T able 1 . Speaker verification EER of the three speaker recognition models. en-ALL represents all English locales. The EER=3.55% for d-vector V1 on en-US phone data is the same as the number reported in T able 3 of [ 6 ]. Model EER (%) on en-US EER (%) on en-ALL phone data phone + farfield data d-vector V1 3.55 6.14 d-vector V2 3.06 2.03 d-vector V3 3.03 1.91 the performance, while using variable-length windows for training also slightly further improv ed EER. 4.2. UIS-RNN setup For the speaker change, as we have stated in Section 3.2.1 , we as- sume { z t } t ∈ [2 ,T ] follow independent identical binary distributions for simplicity . Our sequence generation model is composed of one layer of 512 GR U cells with a tanh activ ation, follo wed by two fully-connected layers each with 512 nodes and a ReLU [ 23 ] activ ation. The two fully-connected layers corresponds to Eq. ( 9 ). For decoding, we use beam search of width 10. 4.3. Evaluation protocols Our ev aluation setup is exactly the same as [ 3 ], which is based on the pyannote.metrics library [ 24 ]. W e follo w these common conv entions of other works: • W e ev aluate on single channel audio. • W e exclude overlapped speech from e valuation. • W e tolerate errors less than 250ms in segment boundaries. • W e report the confusion error, which is usually directly re- ferred to as Diarization Error Rate (DER) in the literature. 4.4. Datasets For the ev aluation, we use 2000 NIST Speaker Recognition Evalua- tion (LDC2001S97), Disk-8, which is usually directly referred to as “CALLHOME” in literature. It contains 500 utterances distributed across six languages: Arabic, English, German, Japanese, Mandarin, and Spanish. Each utterance contains 2 to 7 speakers. Since our approach is supervised, we perform a 5-fold cross val- idation on this dataset. W e randomly partition the dataset into fi ve subsets, and each time leav e one subset for ev aluation, and train UIS- RNN on the other four subsets. Then we combine the e v aluation on fiv e subsets and report the av eraged DER. Besides, we also tried to use two off-domain datasets for training UIS-RNN: (1) 2000 NIST Speaker Recognition Evaluation, Disk- 6, which is often referred to as “Switchboard”; (2) ICSI Meeting Corpus [ 25 ]. W e first tried to train UIS-RNN purely on of f-domain datasets, and ev aluate on CALLHOME; we then tried to add the of f- domain datasets to the training partition of each of the 5-fold. 4.5. Results W e report the diarization performance results on 2000 NIST SRE Disk-8 in T able 2 . For each version of the speaker recognition model, we compare UIS-RNN with two baseline approaches: k-means and spectral offline clustering. For k-means and spectral clustering, the number of speakers is adaptiv ely determined as in [ 3 ]. For UIS- RNN, we show results for three types of ev aluation settings: (1) in- domain training (5-fold); (2) off-domain training (Disk-6 + ICSI); and (3) in-domain plus off-domain training. T able 2 . DER on NIST SRE 2000 CALLHOME, with comparison to other systems in literature. VB is short for V ariational Bayesian resegmentation [ 1 ]. The DER=12.0% for d-vector V1 and spectral clustering is the same as the number reported in T able 2 of [ 3 ]. d-vector Method T raining data DER (%) V1 k-means — 17.4 spectral — 12.0 UIS-RNN 5-fold 11.7 UIS-RNN Disk-6 + ICSI 11.7 UIS-RNN 5-fold + Disk-6 + ICSI 10.6 V2 k-means — 19.1 spectral — 11.6 UIS-RNN 5-fold 10.9 UIS-RNN Disk-6 + ICSI 10.8 UIS-RNN 5-fold + Disk-6 + ICSI 9.6 V3 k-means — 12.3 spectral — 8.8 UIS-RNN 5-fold 8.5 UIS-RNN Disk-6 + ICSI 8.2 UIS-RNN 5-fold + Disk-6 + ICSI 7.6 Castaldo et al. [ 4 ] 13.7 Shum et al. [ 9 ] 14.5 Senoussaoui et al. [ 10 ] 12.1 Sell et al. [ 1 ] (+VB) 13.7 (11.5) Garcia-Romero et al. [ 2 ] (+VB) 12.8 (9.9) From the table, we see that the biggest improvement in DER actually comes from upgrading the speaker recognition model from V2 to V3. This is because in V3, we ha ve the windo w size consistent between training time and diarization inference time, which was a big issue in V1 and V2. UIS-RNN performs noticeably better than spectral of fline clus- tering, when using the same speaker recognition model. It is also im- portant to note that UIS-RNN inference produces speaker labels in an online fashion. As discussed in [ 3 ], online unsupervised cluster- ing algorithms usually perform significantly worse than of fline clus- tering algorithms such as spectral clustering. Also, adding more data to train UIS-RNN also improved DER, which is consistent with our expectation – UIS-RNN benefits from learning from more examples. Specifically , while large scale off- domain training already produces great results in practice (Disk-6 + ICSI), the av ailability of in-domain data can further improve the performance (5-fold + Disk-6 + ICSI). 5. CONCLUSIONS In this paper, we presented a speaker diarization system where the commonly used clustering module is replaced by a trainable unbounded interlea ved-state RNN. Since all components of this system can be learned in a supervised manner , it is preferred over unsupervised systems in scenarios where training data with high quality time-stamped speaker labels are available. On the NIST SRE 2000 CALLHOME benchmark, using exactly the same speaker embeddings, this new approach, which is an online algorithm, out- performs the state-of-the-art spectral offline clustering algorithm. Besides, the proposed UIS-RNN is a generic solution to the se- quential clustering problem, with other potential applications such as face clustering in videos. One interesting future work direction is to directly use accoustic features instead of pre-trained embeddings as the observation sequence for UIS-RNN, such that the entire speaker diarization system becomes an end-to-end model. 6. REFERENCES [1] Gre gory Sell and Daniel Garcia-Romero, “Diarization re- segmentation in the factor analysis subspace, ” in Interna- tional Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2015, pp. 4794–4798. [2] Daniel Garcia-Romero, David Snyder , Gregory Sell, Daniel Pov ey , and Alan McCree, “Speaker diarization using deep neural network embeddings, ” in International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2017, pp. 4930–4934. [3] Quan W ang, Carlton Do wney , Li W an, Philip Andrew Mans- field, and Ignacio Lopz Moreno, “Speaker diarization with lstm, ” in International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2018, pp. 5239–5243. [4] F abio Castaldo, Daniele Colibro, Emanuele Dalmasso, Pietro Laface, and Claudio V air , “Stream-based speaker segmentation using speaker factors and eigenv oices, ” in International Con- fer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2008, pp. 4133–4136. [5] Najim Dehak, Patrick J Kenny , R ´ eda Dehak, Pierre Du- mouchel, and Pierre Ouellet, “Front-end factor analysis for speaker verification, ” IEEE T ransactions on Audio, Speech, and Language Pr ocessing , vol. 19, no. 4, pp. 788–798, 2011. [6] Li W an, Quan W ang, Alan Papir , and Ignacio Lopez Moreno, “Generalized end-to-end loss for speaker verification, ” in In- ternational Conference on Acoustics, Speech and Signal Pro- cessing (ICASSP) . IEEE, 2018, pp. 4879–4883. [7] Zbyn ˘ ek Zaj ´ ıc, Marek Hr ´ uz, and Lud ˘ ek M ¨ uller , “Speaker di- arization using conv olutional neural network for statistics ac- cumulation refinement, ” in INTERSPEECH , 2017. [8] Georg Heigold, Ignacio Moreno, Samy Bengio, and Noam Shazeer , “End-to-end text-dependent speaker verification, ” in International Conference on Acoustics, Speech and Signal Pro- cessing (ICASSP) . IEEE, 2016, pp. 5115–5119. [9] Stephen H Shum, Najim Dehak, R ´ eda Dehak, and James R Glass, “Unsupervised methods for speaker diarization: An in- tegrated and iterati ve approach, ” IEEE T ransactions on Audio, Speech, and Language Pr ocessing , vol. 21, no. 10, pp. 2015– 2028, 2013. [10] Mohammed Senoussaoui, Patrick Kenn y , Themos Stafylakis, and Pierre Dumouchel, “ A study of the cosine distance- based mean shift for telephone speech diarization, ” IEEE/ACM T ransactions on Audio, Speech and Language Processing (T ASLP) , vol. 22, no. 1, pp. 217–227, 2014. [11] Gregory Sell and Daniel Garcia-Romero, “Speaker diariza- tion with plda i-vector scoring and unsupervised calibration, ” in Spoken Languag e T echnology W orkshop (SLT), 2014 IEEE . IEEE, 2014, pp. 413–417. [12] Dimitrios Dimitriadis and Petr Fousek, “Developing on-line speaker diarization system, ” pp. 2739–2743, 2017. [13] Philip Andrew Mansfield, Quan W ang, Carlton Downey , Li W an, and Ignacio Lopez Moreno, “Links: A high- dimensional online clustering method, ” arXiv pr eprint arXiv:1801.10123 , 2018. [14] Huazhong Ning, Ming Liu, Hao T ang, and Thomas S Huang, “ A spectral clustering approach to speak er diarization., ” in IN- TERSPEECH , 2006. [15] David M Blei and Peter I Frazier , “Distance dependent chinese restaurant processes, ” Journal of Machine Learning Resear ch , vol. 12, no. Aug, pp. 2461–2488, 2011. [16] Kyunghyun Cho, Bart V an Merri ¨ enboer , Caglar Gulcehre, Dzmitry Bahdanau, Fethi Bougares, Holger Schwenk, and Y oshua Bengio, “Learning phrase representations using rnn encoder-decoder for statistical machine translation, ” arXiv pr eprint arXiv:1406.1078 , 2014. [17] Mark F . Medress, Franklin S Cooper , Jim W . Forgie, CC Green, Dennis H. Klatt, Michael H. O’Malley , Edward P Neubur g, Allen Newell, DR Reddy , B Ritea, et al., “Speech understanding systems: Report of a steering committee, ” Arti- ficial Intelligence , v ol. 9, no. 3, pp. 307–316, 1977. [18] Y e Jia, Y u Zhang, Ron J W eiss, Quan W ang, Jonathan Shen, Fei Ren, Zhifeng Chen, Patrick Nguyen, Ruoming P ang, Igna- cio Lopez Moreno, et al., “Transfer learning from speaker ver- ification to multispeaker text-to-speech synthesis, ” in Confer - ence on Neural Information Pr ocessing Systems (NIPS) , 2018. [19] Quan W ang, Hannah Muckenhirn, Ke vin Wilson, Prashant Sridhar , Zelin Wu, John Hershey , Rif A. Saurous, Ron J. W eiss, Y e Jia, and Ignacio Lopez Moreno, “V oicefilter: T argeted voice separation by speaker-conditioned spectrogram mask- ing, ” arXiv pr eprint arXiv:1810.04826 , 2018. [20] V assil Panayotov , Guoguo Chen, Daniel Pove y , and Sanjeev Khudanpur , “Librispeech: an asr corpus based on public do- main audio books, ” in International Confer ence on Acous- tics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2015, pp. 5206–5210. [21] Arsha Nagrani, Joon Son Chung, and Andrew Zisserman, “V oxceleb: a large-scale speaker identification dataset, ” arXiv pr eprint arXiv:1706.08612 , 2017. [22] Joon Son Chung, Arsha Nagrani, and Andrew Zisserman, “V oxceleb2: Deep speaker recognition, ” arXiv pr eprint arXiv:1806.05622 , 2018. [23] V inod Nair and Geoffre y E Hinton, “Rectified linear units im- prov e restricted boltzmann machines, ” in Pr oceedings of the 27th international conference on machine learning (ICML) , 2010, pp. 807–814. [24] Herv ´ e Bredin, “ pyannote.metrics : a toolkit for repro- ducible ev aluation, diagnostic, and error analysis of speaker diarization systems, ” hypothesis , v ol. 100, no. 60, pp. 90, 2017. [25] Adam Janin, Don Baron, Jane Edwards, Dan Ellis, Da vid Gel- bart, Nelson Morgan, Barbara Peskin, Thilo Pfau, Elizabeth Shriberg, Andreas Stolcke, et al., “The icsi meeting corpus, ” in International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2003, v ol. 1, pp. I–I.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment