Learning latent representations for style control and transfer in end-to-end speech synthesis

In this paper, we introduce the Variational Autoencoder (VAE) to an end-to-end speech synthesis model, to learn the latent representation of speaking styles in an unsupervised manner. The style representation learned through VAE shows good properties…

Authors: Ya-Jie Zhang, Shifeng Pan, Lei He, Zhen-Hua Ling

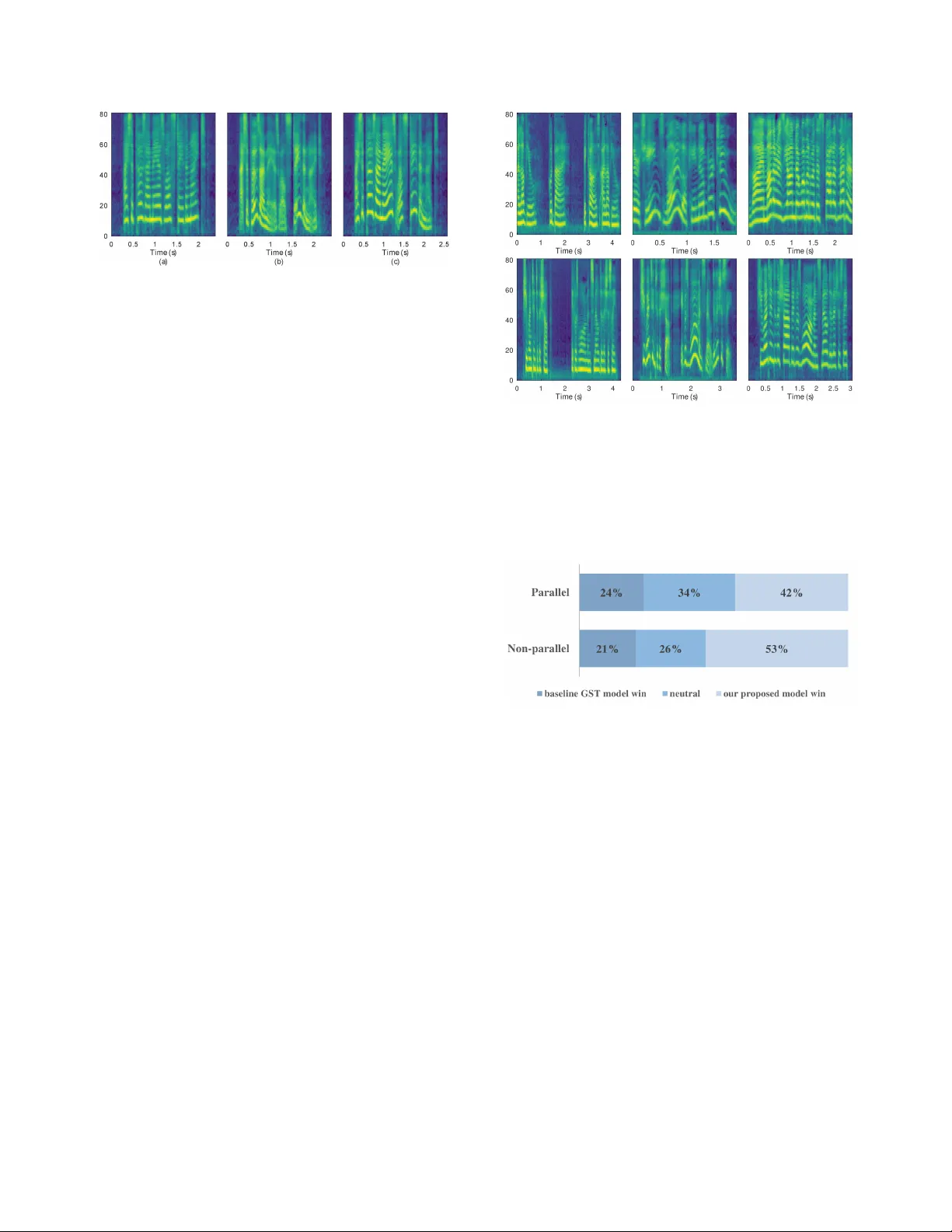

LEARNING LATENT REPRESENTATIONS FOR STYLE CONTROL AND TRANSFER IN END-TO-END SPEECH SYNTHESIS

Ya-Jie Zhang 1*, Shifeng Pan 2, Lei He 2, Zhen-Hua Ling 1

1 National Engineering Laboratory for Speech and Language Information Processing, University of Science and Technology of China, Hefei, P.R. China
2 Microsoft China

zyj008@mail.ustc.edu.cn, {peterpan, helei}@microsoft.com, zhling@ustc.edu.cn

ABSTRACT

In this paper, we introduce the Variational Autoencoder (VAE) to an end-to-end speech synthesis model to learn the latent representation of speaking styles in an unsupervised manner. The style representation learned through the VAE shows good properties such as disentangling, scaling, and combination, which makes style control easy. Style transfer can be achieved in this framework by first inferring the style representation through the recognition network of the VAE, then feeding it into the TTS network to guide the style of the synthesized speech. To avoid Kullback-Leibler (KL) divergence collapse in training, several techniques are adopted. Finally, the proposed model shows good performance in style control and outperforms the Global Style Token (GST) model in ABX preference tests on style transfer.

Index Terms: unsupervised learning, variational autoencoder, style transfer, speech synthesis

1. INTRODUCTION

End-to-end text-to-speech (TTS) models, which generate speech directly from characters, have made rapid progress in recent years and achieved very high voice quality [1–3]. While single-style TTS, usually in a neutral speaking style, is approaching the quality of human expert recordings [1, 3], interest in expressive speech synthesis also keeps rising. Recently, many promising works on this topic have been published, such as transferring prosody and speaking style within or across speakers based on end-to-end TTS models [4–6].
Deep generative models, such as the Variational Autoencoder (VAE) [7] and the Generative Adversarial Network (GAN) [8], are powerful architectures that can learn complicated distributions in an unsupervised manner. In particular, the VAE, which explicitly models latent variables, has become one of the most popular approaches and has achieved significant success on text generation [9], image generation [10, 11] and speech generation [12, 13] tasks. The VAE has many merits, such as learning disentangled factors and smoothly interpolating or continuously sampling between latent representations, which can yield interpretable homotopies [9].

Intuitively, in speech generation, the latent state of the speaker, such as affect and intent, contributes to the prosody, emotion, or speaking style. For simplicity, we hereafter use "speaking style" to represent these prosody-related expressions. The latent state plays a role very similar to that of the latent variable in a VAE. Therefore, in this paper we introduce a VAE to Tacotron 2 [1], a state-of-the-art end-to-end speech synthesis model, to learn the latent representation of the speaker state in a continuous space, and further to control the speaking style in speech synthesis. Specifically, direct manipulation can easily be imposed on the disentangled latent variable so as to control the speaking style. In addition, with variational inference the latent representation of speaking style can be inferred from a reference audio, which then controls the style of the synthesized speech. Style transfer from reference audio to synthesized speech is thus achieved. Finally, directly sampling from the prior of the latent distribution can generate a large amount of speech with various speaking styles, which is useful for data augmentation. Comprehensive evaluation shows the good performance of this method.

Paper accepted by IEEE ICASSP 2019.
* Work done during an internship at Microsoft STC Asia.
We have become aware of recent work by Akuzawa et al. [12], which combines an autoregressive speech synthesis model with a VAE for expressive speech synthesis. The proposed work differs from Akuzawa's as follows: 1) their goal is to synthesize expressive speech, which is achieved by directly sampling from the prior of the latent distribution at the inference stage, while our goal is to control the speaking style of synthesized speech by directly manipulating the latent variable or by variational inference from a reference audio; 2) the proposed work builds on an end-to-end TTS model, while Akuzawa's does not.

The rest of the paper is organized as follows: Section 2 introduces the VAE model, our proposed model architecture and the tricks for solving the KL-divergence collapse problem. Section 3 presents the experimental results. Finally, the paper is concluded in Section 4.

2. MODEL

In this section, we first review the Variational Autoencoder. We then show the details of our proposed style transfer model.

2.1. Variational Autoencoder

The Variational Autoencoder was first defined by Kingma et al. [7]; it constructs a relationship between unobserved continuous random latent variables $z$ and an observed dataset $x$. The true posterior density $p_\theta(z|x)$ is intractable, which results in an indifferentiable marginal likelihood $p_\theta(x)$. To address this, a recognition model $q_\phi(z|x)$ is introduced as an approximation to the intractable posterior. Following the variational principle, $\log p_\theta(x)$ can be rewritten as shown in equation (1), where $\mathcal{L}(\theta, \phi; x)$ is the variational lower bound to optimize.

$$
\begin{aligned}
\log p_\theta(x) &= KL[q_\phi(z|x) \,\|\, p_\theta(z|x)] + \mathcal{L}(\theta, \phi; x) \\
&\ge \mathcal{L}(\theta, \phi; x) = \mathbb{E}_{q_\phi(z|x)}[\log p_\theta(x|z)] - KL[q_\phi(z|x) \,\|\, p_\theta(z)]
\end{aligned}
\tag{1}
$$

Generally, the prior over latent variables $p_\theta(z)$ is assumed to be a centered isotropic multivariate Gaussian $\mathcal{N}(z; 0, I)$, where $I$ is the identity matrix.
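For reference, when the approximate posterior is taken to be a diagonal Gaussian with per-dimension mean $\mu_j$ and standard deviation $\sigma_j$ over $J$ latent dimensions (an assumption made here; the symbols $\mu_j$, $\sigma_j$ and $J$ are introduced only for this illustration), the KL term of equation (1) against the prior $\mathcal{N}(0, I)$ takes the standard closed form given in [7]:

$$
KL\left[\mathcal{N}(\mu, \mathrm{diag}(\sigma^2)) \,\|\, \mathcal{N}(0, I)\right]
= \frac{1}{2} \sum_{j=1}^{J} \left( \mu_j^2 + \sigma_j^2 - 1 - \log \sigma_j^2 \right)
$$

Each summand is non-negative and vanishes only when $\mu_j = 0$ and $\sigma_j = 1$, that is, when the posterior for that dimension equals the prior.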
The usual choice of $q_\phi(z|x)$ is $\mathcal{N}(z; \mu(x), \sigma^2(x) I)$, so that $KL[q_\phi(z|x) \,\|\, p_\theta(z)]$ can be calculated in closed form. In practice, $\mu(x)$ and $\sigma^2(x)$ are learned from the observed dataset via neural networks, which can be viewed as an encoder. The expectation term in equation (1) plays the role of a decoder, which decodes latent variables $z$ to reconstruct $x$. The decoder may produce the expected reconstruction if the output of the decoder is averaged over many samples of $x$ and $z$ [14]. In the rest of the paper, $-\mathbb{E}_{q_\phi(z|x)}[\log p_\theta(x|z)]$ is referred to as the reconstruction loss and $KL[q_\phi(z|x) \,\|\, p_\theta(z)]$ is referred to as the KL loss.

Stochastic inputs can be processed by stochastic gradient descent via backpropagation, but stochastic units within the network cannot be processed by backpropagation. Thus, in practice, the "reparameterization trick" is introduced to the VAE framework. Sampling $z$ from the distribution $\mathcal{N}(\mu, \sigma^2 I)$ is decomposed into first sampling $\epsilon \sim \mathcal{N}(0, I)$ and then computing $z = \mu + \sigma \odot \epsilon$, where $\odot$ denotes an element-wise product.

2.2. Proposed Model Architecture

In this work, we introduce a VAE into an end-to-end TTS model and propose a flexible model for style control and style transfer. The whole network consists of two components, as shown in Fig. 1: (1) a recognition model, or inference network, which encodes reference audio into a fixed-length short vector of latent representation (the latent variables $z$, which stand for the style representation), and (2) an end-to-end TTS model based on Tacotron 2, which converts the combined encoder states (including latent representations and text encoder states) into a generated target sentence with a specific style. The input texts are character sequences and the acoustic features are mel-frequency spectrograms.

Fig. 1. An architecture of the proposed style transfer TTS model. The dashed lines denote sampling $z$ from the parametric distribution.
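The sampling step marked by the dashed lines in Fig. 1 is the reparameterization trick of Section 2.1, and the KL loss against the standard normal prior can be evaluated in closed form. A minimal sketch in plain Python follows. The function names `reparameterize` and `kl_to_standard_normal` and all numeric values are hypothetical, chosen only for illustration; a real model would apply the same arithmetic to tensors produced by the recognition network.

```python
import math
import random


def reparameterize(mu, sigma, rng):
    """Draw z = mu + sigma * eps element-wise, with eps ~ N(0, I)."""
    eps = [rng.gauss(0.0, 1.0) for _ in mu]
    return [m + s * e for m, s, e in zip(mu, sigma, eps)]


def kl_to_standard_normal(mu, sigma):
    """Closed-form KL[N(mu, diag(sigma^2)) || N(0, I)]."""
    return 0.5 * sum(m * m + s * s - 1.0 - 2.0 * math.log(s)
                     for m, s in zip(mu, sigma))


rng = random.Random(0)           # fixed seed so the sketch is repeatable
mu = [0.5, -1.0, 0.0]            # illustrative values, not from the paper
sigma = [1.0, 0.5, 2.0]

z = reparameterize(mu, sigma, rng)                      # one sampled latent vector
kl = kl_to_standard_normal(mu, sigma)                   # KL loss for this posterior
kl_zero = kl_to_standard_normal([0.0, 0.0], [1.0, 1.0])  # posterior equal to prior
```

With `sigma` set to zero the sample equals `mu`, and the KL value is zero only when the posterior matches the prior, which is the collapsed state that Section 2.3 addresses.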
One may use various powerful and complex neural networks for the recognition model. Here, we only adopt a recurrent reference encoder followed by two fully connected layers. We use the same architecture and hyperparameters for the reference encoder as Wang et al. [4], which consists of six 2-D convolutional layers followed by a GRU layer. The output, which denotes some embedding of the reference audio, is then passed through two separate fully connected (FC) layers with linear activation functions to generate the mean and standard deviation of the latent variables $z$. The prior and approximate posterior are the Gaussian distributions mentioned in Section 2.1. Then $z$ is derived by the reparameterization trick.

The encoder that deals with character inputs consists of three 1-D convolutional layers with width 5 and 512 channels, followed by a bidirectional [15] LSTM [16] layer using zoneout [17] with probability 0.1. The output text encoder state is simply added to $z$ and then consumed by a location-sensitive attention network [18], which converts the encoded sequence to a fixed-length context vector for each decoder output step. Note that $z$ is first passed through an FC layer so that its dimension equals that of the text encoder state before the add operation. The attention module and decoder have the same architecture as Tacotron 2 [1]. Then, a WaveNet [19] vocoder is utilized to reconstruct the waveform.

The total loss of the proposed model is shown in equation (2).

$$
Loss = KL[q_\phi(z|x) \,\|\, p_\theta(z)] - \mathbb{E}_{q_\phi(z|x)}[\log p_\theta(x|z, t)] + l_{stop}
\tag{2}
$$

Compared with the lower bound in equation (1), the reconstruction loss term is conditioned on both the latent variable $z$ and the input text $t$, and a stop token loss $l_{stop}$ is added. It is worth mentioning that, after comparing the L2 loss with the negative log-likelihood of a Gaussian distribution, we finally chose the L2 loss of mel spectrograms as the reconstruction loss.

2.3. Resolving the KL collapse problem

During training, we observe that the KL loss $KL[q_\phi(z|x) \,\|\, p_\theta(z)]$ always collapses before the model learns a distinguishable representation, which is a common phenomenon but a crucial issue in training VAE models. In other words, the KL loss converges far faster than the reconstruction loss: it quickly drops to nearly zero and never rises again, which means the encoder does not work. Thus, KL annealing [9] is introduced to our task to solve this problem. That is, during training, a variable weight is added to the KL term. The weight is close to zero at the beginning of training and then gradually increases. In addition, the KL loss is taken into account only once every K steps. By combining these two tricks, the KL loss stays nonzero and avoids collapse.

3. EXPERIMENTS AND ANALYSIS

3.1. Experimental setup

A 105-hour dataset of audiobook recordings read in various storytelling styles by a single English speaker (Blizzard Challenge 2013) was used in our experiments. The dataset contains 58453 utterances for training and 200 for testing. 80-dimensional mel spectrograms were extracted with a frame shift of 12.5 ms and a frame length of 50 ms. The GST model [4] with character inputs was used as our baseline model. Its hyperparameters are set according to [4]. As for our proposed model, the dimension of the latent variables is 32. The parameter K mentioned in Section 2.3 is 100 before 15000 training steps and 400 after that threshold.

At the inference stage, for the evaluation of style control, we directly manipulate $z$ without going through the whole recognition model. For the evaluation of style transfer, we feed audio clips as references through the recognition model. Both parallel and non-parallel style transfer audios are generated and evaluated.¹ Parallel transfer means that the target text is the same as that of the reference audio; non-parallel transfer means that it is different.

¹ The audio samples can be found at http://home.ustc.edu.cn/~zyj008/ICASSP2019.

3.2. Style control

3.2.1. Interpolation of latent variables

As mentioned in [9], the VAE supports smooth interpolation and continuous sampling between latent representations, which yields interpretable homotopies. Thus, we interpolated between two $z$.² One generates speech with a high speaking rate and high pitch, the other with a low speaking rate and low pitch. The mel spectrograms of the generated speech are shown in Fig. 2. As we can see, both the pitch and the speaking rate of the generated speech gradually decrease along the interpolation. The result shows that the learned latent space is continuous in controlling the trend of the spectrograms, which is further reflected in the change of style.

² These two $z$ are derived by feeding two audios to the recognition model, one with a high speaking rate and high pitch, the other with a low speaking rate and low pitch.

Fig. 2. Spectrograms generated by interpolation between two $z$. The interpolation coefficients are: (a) $z_a$, (b) $\frac{1}{3} z_a + \frac{2}{3} z_d$, (c) $\frac{2}{3} z_a + \frac{1}{3} z_d$, (d) $z_d$.

3.2.2. Disentangled factors

A disentangled representation means that a latent variable completely controls a concept alone and is invariant to changes in other factors [10]. In experiments, we found that several dimensions of $z$ could independently control different style attributes, such as pitch height, local pitch variation and speaking rate. Fig. 3 shows the alteration of spectrograms by manipulating a single dimension while fixing the others. When one of these dimensions is adjusted, only one attribute of the generated speech changes. This shows that, in our model, the VAE is able to learn disentangled latent factors.

Fig. 3. Spectrograms generated to demonstrate disentangled factors. The first row exhibits the control of pitch height only, by adjusting latent dimension 6 to be -0.9, -0.1 and 0.7. The second row shows that the local pitch variation is gradually magnified by increasing the value of dimension 10, which is 0.1, 0.5 and 0.9, respectively.

Next, we combined two disentangled dimensions to verify the additivity of latent variables. Fig. 4 illustrates the combination results of the pitch height and local pitch variation attributes. It shows that the audio generated with the combined $z$ inherits the characteristics of both disentangled dimensions.

Fig. 4. Audio (a) and (b) are generated with $z$ in which a single dimension is set to be non-zero and the other dimensions are zero. The non-zero dimension in (a) controls pitch height, while that in (b) controls pitch variation. (c) is generated with the summation of $z$ from (a) and (b).

3.3. Style transfer

Fig. 5 shows mel spectrograms of the style-transferred synthetic speech aligned with their corresponding references. The reference audios are chosen from the test set with certain styles. The synthesized audios share the same input text. As we can see in Fig. 5, the mel spectrograms of the generated speech and their reference audio show similar patterns, such as in pitch height, pause time, speaking rate and pitch variation.

3.4. Subjective test

To subjectively evaluate the performance of style transfer, crowd-sourced ABX preference tests on parallel and non-parallel transfer were conducted. For parallel transfer, 60 audio clips with their texts were randomly selected from the test set. For non-parallel transfer, 60 sentences of text and 60 other reference audio clips were selected to generate speech. The baseline voice is generated from the best GST model we have built. Each case in the ABX test is judged by 25 native judges. The total number of judges is 56 for the parallel test and 57 for the non-parallel test. The rating criterion is "which one's speaking style is closer to the reference style" with three choices: (1) the 1st is better; (2) the 2nd is better; (3) neutral. Fig. 6 shows the ABX results.
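As a rough illustration of how votes from a three-choice ABX test like the one described above can be summarized, the sketch below computes vote shares and a two-sided sign test that ignores neutral votes. The choice of a sign test, the function names `preference_shares` and `sign_test_p_value`, and the vote counts are all assumptions made for this example: the source does not state which significance test was used, and the counts are not the paper's data.

```python
import math


def sign_test_p_value(prefer_a, prefer_b):
    """Two-sided exact binomial (sign) test of H0: A and B are equally preferred.

    Neutral votes must be dropped before calling this function.
    """
    n = prefer_a + prefer_b
    k = max(prefer_a, prefer_b)
    # Probability, under p = 0.5, of one side receiving at least k of n votes.
    tail = sum(math.comb(n, i) for i in range(k, n + 1)) / 2 ** n
    return min(1.0, 2.0 * tail)


def preference_shares(prefer_a, neutral, prefer_b):
    """Return the shares of A, neutral and B as fractions of all votes."""
    total = prefer_a + neutral + prefer_b
    return prefer_a / total, neutral / total, prefer_b / total


# Hypothetical vote counts for illustration only, NOT the paper's data.
votes_a, votes_neutral, votes_b = 10, 5, 0
shares = preference_shares(votes_a, votes_neutral, votes_b)
p_value = sign_test_p_value(votes_a, votes_b)
```

Dropping neutral votes keeps the test focused on listeners who expressed a preference; other analyses of the same votes are equally possible.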
As Fig. 6 shows, the proposed model outperforms the GST model on both parallel and non-parallel style transfer (at p-value $< 10^{-5}$). This shows that the VAE can better model the latent style representations, which results in better style transfer. Moreover, the performance of the proposed model on non-parallel transfer is much better than that on parallel transfer, which shows the better generalization capability of the proposed model.

Fig. 5. The first row exhibits the mel spectrograms of three recordings with different styles, while the second row exhibits the synthesized audios referenced on those recordings separately. The synthesized audios have the same text: "She went into the shop. It was warm and smelled deliciously."

Fig. 6. ABX test results for parallel and non-parallel transfers.

4. CONCLUSION

A VAE module is introduced to an end-to-end TTS model to learn the latent representation of speaking style in a continuous space in an unsupervised manner, which can then control the speaking style of synthesized speech. We have demonstrated that the latent space is continuous and explored the disentangled factors in the learned latent variables. The proposed model shows good performance in style transfer and outperforms the GST model in ABX tests, especially in non-parallel transfer.

Future work will keep focusing on obtaining better disentangled and interpretable latent representations. In addition, the scope of style transfer research will be extended to multiple speakers instead of a single speaker.

5. REFERENCES

[1] Jonathan Shen, Ruoming Pang, Ron J Weiss, Mike Schuster, Navdeep Jaitly, Zongheng Yang, Zhifeng Chen, Yu Zhang, Yuxuan Wang, RJ Skerry-Ryan, et al., "Natural TTS synthesis by conditioning WaveNet on mel spectrogram predictions," in 2018 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2018, pp. 4779–4783.
[2] Wei Ping, Kainan Peng, Andrew Gibiansky, Sercan O Arik, Ajay Kannan, Sharan Narang, Jonathan Raiman, and John Miller, "Deep voice 3: Scaling text-to-speech with convolutional sequence learning," 2018.
[3] Naihan Li, Shujie Liu, Yanqing Liu, Sheng Zhao, Ming Liu, and Ming Zhou, "Close to human quality TTS with transformer," arXiv preprint, 2018.
[4] Yuxuan Wang, Daisy Stanton, Yu Zhang, RJ Skerry-Ryan, Eric Battenberg, Joel Shor, Ying Xiao, Fei Ren, Ye Jia, and Rif A Saurous, "Style tokens: Unsupervised style modeling, control and transfer in end-to-end speech synthesis," in Proceedings of the 35th International Conference on Machine Learning, ICML 2018, 2018, pp. 5167–5176.
[5] RJ Skerry-Ryan, Eric Battenberg, Ying Xiao, Yuxuan Wang, Daisy Stanton, Joel Shor, Ron J Weiss, Rob Clark, and Rif A Saurous, "Towards end-to-end prosody transfer for expressive speech synthesis with Tacotron," in Proceedings of the 35th International Conference on Machine Learning, ICML 2018, 2018, pp. 4700–4709.
[6] Daisy Stanton, Yuxuan Wang, and RJ Skerry-Ryan, "Predicting expressive speaking style from text in end-to-end speech synthesis," arXiv preprint arXiv:1808.01410, 2018.
[7] Diederik P Kingma and Max Welling, "Auto-encoding variational bayes," in Proc. 2nd International Conference on Learning Representations, 2014.
[8] Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio, "Generative adversarial nets," in Advances in Neural Information Processing Systems, 2014, pp. 2672–2680.
[9] Samuel R Bowman, Luke Vilnis, Oriol Vinyals, Andrew M Dai, Rafal Jozefowicz, and Samy Bengio, "Generating sentences from a continuous space," in Proceedings of the 20th SIGNLL Conference on Computational Natural Language Learning, CoNLL 2016.
[10] Irina Higgins, Loic Matthey, Arka Pal, Christopher Burgess, Xavier Glorot, Matthew Botvinick, Shakir Mohamed, and Alexander Lerchner, "β-VAE: Learning basic visual concepts with a constrained variational framework," 2016.
[11] Christopher P Burgess, Irina Higgins, Arka Pal, Loic Matthey, Nick Watters, Guillaume Desjardins, and Alexander Lerchner, "Understanding disentangling in β-VAE," arXiv preprint, 2018.
[12] Kei Akuzawa, Yusuke Iwasawa, and Yutaka Matsuo, "Expressive speech synthesis via modeling expressions with variational autoencoder," in Proc. Interspeech 2018, 2018, pp. 3067–3071.
[13] Wei-Ning Hsu, Yu Zhang, and James Glass, "Learning latent representations for speech generation and transformation," in Proc. Interspeech 2017, 2017, pp. 1273–1277.
[14] Carl Doersch, "Tutorial on variational autoencoders," arXiv preprint arXiv:1606.05908, 2016.
[15] Mike Schuster and Kuldip K Paliwal, "Bidirectional recurrent neural networks," IEEE Transactions on Signal Processing, vol. 45, no. 11, pp. 2673–2681, 1997.
[16] Sepp Hochreiter and Jürgen Schmidhuber, "Long short-term memory," Neural Computation, vol. 9, no. 8, pp. 1735–1780, 1997.
[17] David Krueger, Tegan Maharaj, János Kramár, Mohammad Pezeshki, Nicolas Ballas, Nan Rosemary Ke, Anirudh Goyal, Yoshua Bengio, Aaron Courville, and Chris Pal, "Zoneout: Regularizing RNNs by randomly preserving hidden activations," in Proc. ICLR, 2017.
[18] Jan K Chorowski, Dzmitry Bahdanau, Dmitriy Serdyuk, Kyunghyun Cho, and Yoshua Bengio, "Attention-based models for speech recognition," in Advances in Neural Information Processing Systems, 2015, pp. 577–585.
[19] Aäron Van Den Oord, Sander Dieleman, Heiga Zen, Karen Simonyan, Oriol Vinyals, Alex Graves, Nal Kalchbrenner, Andrew W Senior, and Koray Kavukcuoglu, "WaveNet: A generative model for raw audio," in SSW, 2016, p. 125.
