To Reverse the Gradient or Not: An Empirical Comparison of Adversarial and Multi-task Learning in Speech Recognition

Transcribed datasets typically contain speaker identity for each instance in the data. We investigate two ways to incorporate this information during training: Multi-Task Learning and Adversarial Learning. In multi-task learning, the goal is speaker …

Authors: Yossi Adi, Neil Zeghidour, Ronan Collobert

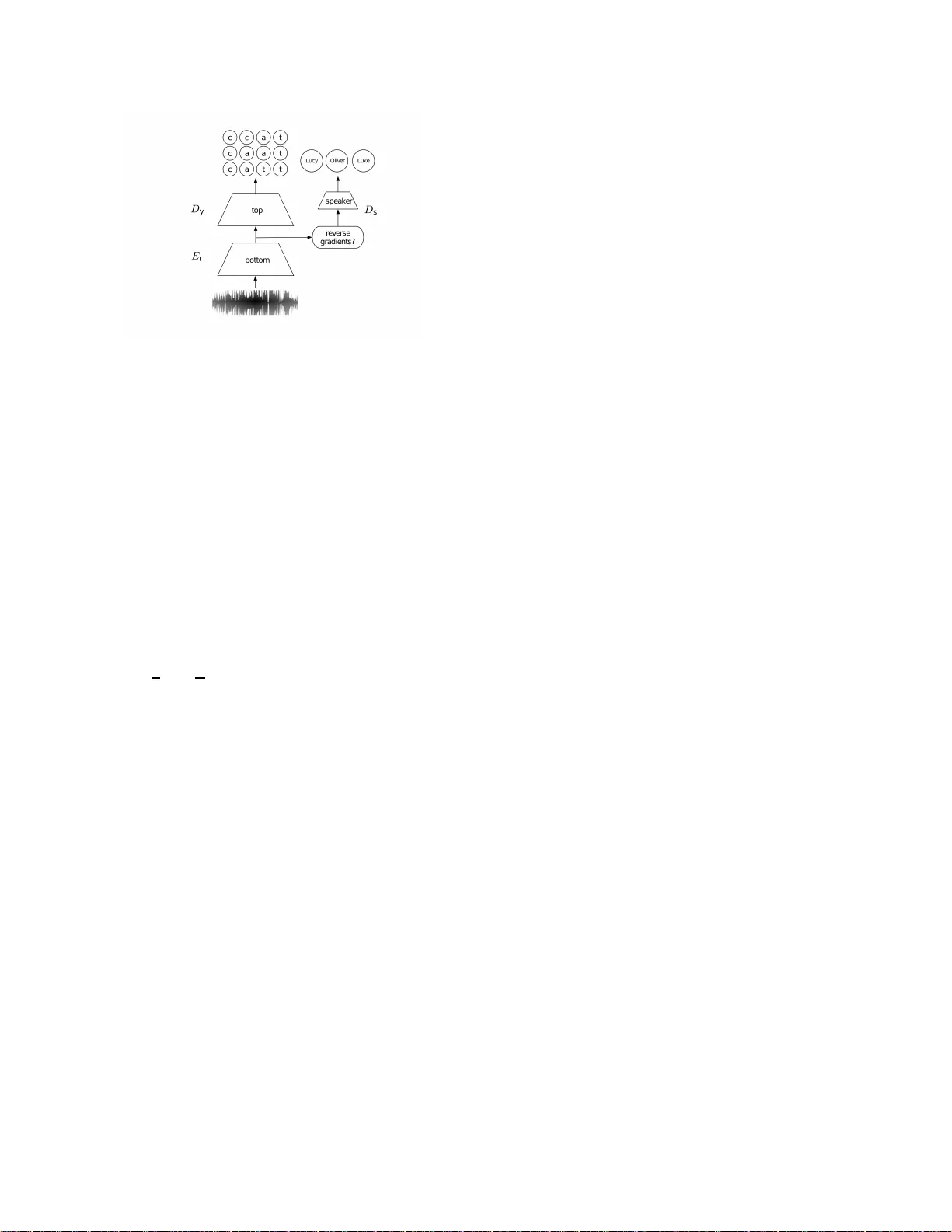

T O REVERSE THE GRADIENT OR NO T : AN EMPIRICAL COMP ARISON OF AD VERS ARIAL AND MUL TI-T ASK LEARNING IN SPEECH RECOGN ITION *Y oss i Adi 1 , 2 , *Neil Ze ghidour 2 , 3 , Ronan Collobert 2 , Nicolas Usunier 2 , V ital iy L iptchinsky 2 , Gabriel Synnaeve 2 1 Bar -Ilan Univ ersity . 2 Face book AI Researc h. 3 CoML, ENS/INRIA. ABSTRA CT T ranscribed datasets typically contain speaker identity for each instance in the data. W e in vestigate two ways to incor- porate this info rmation during training : Multi-T ask Learnin g and Adversarial Learnin g . In mu lti- ta sk learnin g, the goal is speaker pr e diction; we expect a perfo rmance im provement with this joint train ing if the two tasks of speec h recogn itio n and speaker recogn ition share a co m mon set of u nderly in g features. In contrast, ad versarial learning is a means to learn representatio ns in variant to the speaker . W e then expec t b et- ter performance if this learnt in variance helps generalizing to new speakers. While the two approaches seem natural in the context of speech r ecognitio n, they ar e incom patible because they correspo nd to opposite grad ients back- prop a gated to the model. In order to better understand th e effect of these ap- proach e s in terms of err or rates, we compare both strategies in controlled settings. Moreover , we explore the u se of ad- ditional un-transcribed d ata in a semi-supe r vised, ad versarial learning manner to improve error rates. Our results show that deep m odels trained on big datasets alread y develop in variant representatio ns to speakers without any auxiliary loss. When considerin g adversarial lear n ing a nd multi-task learn ing, th e impact on the aco ustic model seems mino r . However , mo dels trained in a semi-supervised manner can improve error-rates. Index T erms : automatic speech recog nition, ad versarial learning, multi-task learning, ne u ral networks 1. INTRODUCTION The minimal components o f a speech rec o gnition dataset are audio r ecordin g s and th eir correspon d ing tr anscription . T h ey also typically co ntain an add itio nal in forma tio n, which is the anonymou s identifier of the spe a ker correspon ding to eac h ut- terance. As speaker v ariations are one of th e most challen ging aspects o f speech processing, this in f ormation can b e lever- aged to improve the perfor mance and robustness of sp eech recogn itio n systems. W ork cond ucted while Y ossi Ad i was a n Inte rn at Fac ebook AI Rese arch. *Equal contri buti on T raditionally , the iden tity o f the speakers has b e en used to extract speaker repre sentations and use it as an add itio nal input to the acoustic model [1, 2] . This app roach r equires an ad ditional feature extraction p rocess o f th e input signal. Recently , two ap proach es have been proposed to lev erage speaker informatio n as another supervision to the acou stic model; Multi-T ask Learnin g (M T ) [3, 4] an d Adversarial Learning (AL ) [5]. In th e co ntext of speech reco gnition, a way o f perf orming MT or AL is to ad d a spea ker c la ssification branch in parallel of th e m ain b ranch trained for tran scr ip- tion. All laye r s below the fork of the two branc hes r eceive gradients fro m both transcrip tio n loss and spea ker loss. In MT our g oal is to jointly tran scribe the speech signal together with classifying the speaker identity . Pr evious work has exp lored using au xiliary tasks suc h a s gender or con text [6], as well as speaker classification [7, 8]. For example, the auth o rs in [ 7] pr opose to classify the speaker identity in addition to estimating the pho neme-state p osterior prob abili- ties used f o r ASR, th ey presented a non- negligible improve- ment in terms of Pho neme Err o r Rate following th e above approa c h . An oppo site ap proach is to assume th at good acoustic rep - resentations should be inv ariant to any task that is no t the speech recogn ition task, in par ticular the speaker character- istics. A method to learn suc h inv ariances is AL: the branc h of the speaker classification task is trained to red uce its classi- fication error, howe ver th e main b ranch is trained to max imize the loss o f this speaker classifier . By d oing so, it learns inv ari- ance to spe a ker charac teristics. This appr oach has been pr evi- ously used for sp e ech reco gnition to learn in variance to no ise condition s [9], spe aker identity [ 10, 11, 12] and acce nt [13]. The autho r s in [ 11], p r oposed to minim iz e the senone classifi- cation lo ss, and simultaneously m a x imize th e speaker classifi- cation lo ss. This approach ach iev es a significant imp rovement in terms o f W o r d Err or Ra te . Our study arises fro m two observations. First, both the MT and AL approac hes describ ed ab ove ha ve be e n u sed with the same purpo se despite being fundam entally opposed , and this raises the q uestion of which o ne is the most ap prop r i- ate choice to improve the perfor mance o f speech recognition systems. Secon d ly , they only differ by a simple computa- tional step which consists in reversing the gradien t at the f ork between the sp e a ker br a n ch and the main branch . This al- lows for controlled experiments wher e both approaches c an be compare d with eq uiv alent architec tu res and numb er of pa- rameters. In th is work we focus on letter based acoustic models. In this setting, we a re given audio re c o rding s and their tra n - scripts, an d train a neu ral n e twork to outp ut letters with a sequence-b ased cr iterion. During train in g, we also hav e ac- cess to the identity of the speaker of each utteran ce. W e per- form a systematic co mparison between MT an d AL for the task of large vocabulary speech reco gnition on the W a ll Street Journal dataset (WSJ) [14]. Knowing wheth er the speaker in- formation sho uld be used to im pact low level or hig h level representatio ns is also a v aluable inf o rmation , so for eac h ap- proach we experiment with three lev els to fork th e spea ker branch to: lower lay er , middle an d upp er lay er . Our Contribution: (i) W e observed that deep models trained on big d atasets, already develop in variant represen- tations to speakers with n either AL no r MT ; (ii) Both AL a nd MT do no t h ave a clea r im p act on the acoustic m odel with re- spect to erro r r ates; and (iii) Using additio n al, u n-tran scribed speaker labeled data, (i.e. the speaker is known, but without the transcription), seems pr omising. I t improves Letter-Error Rates (LER), e ven though these improvements did not trans- fer into W o rd-Err or Rates (WER) improvements. 2. ADVE RSARIAL VS. MUL TIT ASK Giv en a training set of n transcribed acoustic u tterances cou- pled with their sp eaker ide n tity , o ur goal is to utilize these speaker labels to improve transcription loss. Formally , let S = ( X i , Y i , s i ) n i =0 be the training set, in which ea ch examp le is composed of three elements: a se- quence of m acou stic features X i = { x 1 , · · · , x m } where each x j is a d -dimension a l vector, a sequence of k character s Y i = { y 1 , · · · , y k } which are not aligned with X i , and a speaker label s i . I n the following subsection s we pr esent two common appro aches to add the speaker iden tity as ano th er supervision to the network. 2.1. Adversarial Learning As speec h reco g nition tasks should not dep end on a specific set of speakers, in AL, we would like to lear n an acoustic rep - resentation which is speaker-in varian t an d at the sam e time characters -discriminative . For that purp o se, we consider the first k layers of the network as an enco der, denoted by E r with parameters θ r , which map s the acoustic f eatures X i to R i = { r 1 , · · · , r l } where l c a n be smaller than m . Then , the repre- sentations R i are fed into a deco der n etwork D y with param - eters θ y , to output the charac ters p osteriors p ( Y i | X i ; θ r , θ y ) . Furthermo re, we intro duce another decode r network to classify the sp e a ker , deno te D s . The ab ove decoder, maps R i to the spea ker posteriors p ( s i | X i ; θ r , θ y ) . In or der to make the representatio n R speaker -in variant , we tr ain E r and D s jointly u sing ad versarial loss, where we optimize θ r to maximize speaker classification loss, and at th e same time optimizing θ s to minimize the speaker classifica- tion loss. Recall, we would like to make th e representation R characters -discriminative , hen ce, we further op timize θ y and θ r to minimize th e tr anscription lo ss. Th erefor e , the total loss is con stru cted as follows, L ( θ r , θ y , θ s ) = L acoustic ( θ r , θ y ) − λ L spk ( θ r , θ s ) (1) where L acoustic ( θ r , θ y ) is the tra nscription loss, L spk ( θ r , θ s ) is th e spe a ker loss, and λ is a tr a de-off p arameter which con - trols the b alance between th e two. T his minima x g ame will enhance the discriminativ e p roperties of D s and D y while at the same time p ush E r tow ards gen erating speaker-in variant representatio n. As suggested in [ 5, 1 1], we optimize the p arameters using Stochastic Grad ient Descent where we rev erse the gradients of ∂ L spk /∂ θ r [5]. 2.2. Multi-T ask Learning Similarly to AL , in MT we consid e r an encode r E r with pa- rameters θ r and two decode r s D y and D s with parameter s θ y and θ s respectively . Ho wev er , in M T , our goal is to mini- mize both L acoustic ( θ r , θ y ) and L spk ( θ r , θ s ) . Therefore , the objective in MT c a se can be expr essed a s follows, L ( θ r , θ y , θ s ) = L acoustic ( θ r , θ y ) + λ · L spk ( θ r , θ s ) (2) Practically , o n f orward pr opagatio n, both AL and MT act the same way . Y et, o n back- p ropag ation th ey act exactly the op- posite. In AL, we rev erse th e grad ients f rom D s before bac k - propag ating into E r . In contrast, in MT we sum toge th er the gradients fro m D s and D y . Fig ure 1 depicts an illustration of the describ e d model. 3. MODELS Our acoustic models ar e based on Gated Conv olutional Neu- ral Networks (Gated Con v Nets) [15], fed with 40 lo g-mel fil- terbank en ergies extracted every 10 ms with a 25 ms sliding window . Gated Con vNets stack 1D conv o lutions with Gated Linear Un its (GLUs). 1D Con vNets were intro duced early in the sp eech community , and are also refer red as Ti me-Delay Neural Networks [16]. W e set Ł acoustic ( θ r , θ y ) to be th e AutoSeg Criterion (ASG) c r iterion [17, 18], wh ich is similar to the Connection- ist T empor al Classification (CTC) criterion [1 9]. Although speech utteran ces contain time dependen t tran - scriptions, the speaker labels shou ld be applied to the whole Fig. 1 . An illu stration o f o ur ar c hitecture. W e first feed the network a sequenc e of acoustic featur e s, then we take th e out- put of some intermediate re p resentation an use it also to clas- sify the spea ker . sequence. Hence, wh en optimizing for m ulti-task or adver - sarial lear n ing we add a sp e aker branc h which aggregates over all the time fram es to classify the speaker . This po ol- ing op eration agg regates th e time -depen dent r epresentation s into a single sequence-level representation s = g ( · ) . T wo straightfor ward ag gregation method s are to take the sum over all fram es: g ( · ) = P t r t where r t is th e rep resentation at time frame t , or the max g ( · ) = max t ( r t ) , where the max is taken over eac h dimension. W e p ropo se to use a tr ade-off so- lution between these two m ethods, which is the LogSumExp [20], s = 1 τ log 1 T P t e τ · r t , wh ere τ is a hyp e r-parameter controls the trade-off between the sum an d the max; with high values of τ th e Lo gSumExp is similar to th e ma x , while it tends to a sum as τ → 0 . In practice, we used τ = 1 . W e set L speaker ( θ r , θ s ) to be th e Negative Log Likeli- hood (NLL) lo ss function . T he overall n etwork, togeth e r with D y , is trained by back-prop agation. At infer ence time, D s , the speaker classification branch is discarded. 4. EXPERIMENT AL RESUL TS In the following section we describe our experim e ntal results. First, we provid e all the tech nical d e tails and setu ps in Sub- section 4.1. Th en, we describ e our baselines and analyze their ability to encode info rmation about the speaker in Sub sec- tion 4.2. Lastly , on Su b section 4.3 we present ou r results on the WSJ data set [14]. 4.1. Setups All mo dels were compo sed of 17 gated conv olutional laye r s followed by a weight normalization [21]. W e used a dropou t layer after ev ery GLU lay er with d ropou t rate of 0 .25. W e op - timized th e mo d els using SGD with two different learn ing rate values, we used learning ra te of 1.4 for E r and D y and learn - ing rate o f 0.1 fo r D s . W e did not use m omentum or weight decay . All the mod els were tra in ed using WSJ dataset which contains 283 speakers with 81.5 h ours o f speakin g time. W e com puted WER using ou r own one-p ass decoder, which perfo rms a simple beam-search with be am thresh old- ing, histogram prun in g and langu age mo d el smear ing [22]. W e did not implement any sort of model adaptation bef ore decodin g , nor any word graph r e sco ring. Our de c oder relies on KenLM [23] fo r the langu age modelin g part, u sing a 4- gram LM trained on the stand ard d ata of WSJ [14]. For LER, we used V iter bi deco ding as in [17]. The speaker branch , D s , was compo sed of o ne ga te d con- volutional lay er of width 5 and 20 0 feature maps with weig ht normalizatio n, f o llowed by a linear la y er . F or MT mo dels we u sed a static λ value of 0.5. For AL models, we slo wly increased the λ from 0 to 0. 2 u sing the following up date scheme, λ i = 2 / (1 + e − p i ) − 1 , where p i is a scaled version of the epoch number . The a b ove technique was successfully explored in previous studies [ 2 4, 5]. In practice, we observed that reversing the gradien ts f rom the beginn ing of tra in ing can cause the network to diver ge. T o avoid that, we first traine d the mod els with out back- p ropag ating the gradients from D s to E r , (i.e. λ = 0 ). Then, we tra in ed only D s , and finally , we trained both o f them join tly . W e found the above p rocedu re crucial fo r AL, h owe ver in MT the performance is equiv alent to standard training. Thus, fo r com parison, we fo llowed the above appro ach in both settings. 4.2. Representation Analysis W e would like to assess to wh at extent the network encodes the spea ker identity? The motiv ation for answering this ques- tion is two-fold (i) to analyz e th e networks’ b ehavior with respect to the speaker inform ation; and (ii) having a mea- surement of ho w much speaker in formatio n is encoded in the representatio n; this can provid e an intu iti ve insight into how much error r ates can be impr oved u sin g speaker-adversarial learning. T o tackle this question, we fo llow a similar app roach to the one pro posed in [25, 26]. W e first trained a baseline net- work with o ut the speaker classification branch, then we used it to extract representatio ns of the speech signal from differ- ent la y ers in the network. Finally , we use these representa- tions only , to train a mod el to classify the speaker identity . After training, we measure the m odel’ s accuracy to analyz e the presen ce of speaker id e ntity in the representations. The b asic premise of this appro ach is that if we canno t optimze a classifier to predict speaker identity based o n rep - resentation f rom the model, then this pr operty is not e n coded in the r epresentation , o r ra ther, no t enc o ded in an effecti ve way , co nsidered the way it will be used. Notice th a t we are not interested in improvin g speaker classification but rather to have a compar ison between the mod els based on the clas- In Mi d Ou t 0 2 0 4 0 6 0 8 0 Sp e a k e r Cl a ssi f ic a t i on A c c u r a cy Fig. 2 . Speaker classification acc uracy using the rep resenta- tion from d ifferent layers of th e network. Results are re p orted for Baseline, MT and AL mo dels u sing th ree d ifferent set- tings, after the second, eighth , an d fifteenth lay ers. sifier’ s classification accuracy . For that purpo se, we defined three settings, where we add the sp e a ker br anch af ter: ( i) two conv o lutional layers, denote as I N , (ii) eig ht con volutional layers, deno te as M I D , an d (iii) 15 convolutional layers, denote as O U T . For each setting we trained a classification model for 10 epochs, using th e same architecture as th e speaker c lassification bran ch. Figure 2 presents the speaker classification accuracy for the baselin e togeth e r with M T and AL using representatio ns from I N , M I D and O U T . Notice , we observed a similar be- havior in AL, MT , and the b aseline, wher e the classification gets harder when e xtracting representation s from deepe r lay - ers in the n etwork. This implies th at the network alre a dy de- velops speaker inv ariant repre sentations dur ing trainin g. Even though , adding a d ditional speaker loss can pu sh it fu rther and improve speaker inv ar iance. 4.3. WSJ Experiments Follo wing our experiments in subsection 4.2, we tr ained MT and AL in th r ee versions, I N , M ID and O U T . T able 1 sum- marizes the results of our m odels, th e baseline , an d state-of- the-art systems. Using both AL and MT seem s to have a mino r effect on transcription mod eling when training on WSJ dataset. Both approa c h es a chieve equal or som ewhat worse LE R than the baseline o n the d evelopment set, and imp rove over the test set, with AL performs sligh tly better than MT . When considering WER, both AL and MT improve r e sults over the baseline on the test set, howe ver non e of th em im prove over th e develop- ment set. T hese resu lts are som ewhat coun ter-intuitive since both approach es attain similar results, howe ver can b e con- sidered a s opposite paradigm s. On e explanation is that both methods act as another regulariza tio n term. In other words, although the two appr oaches pu sh th e gradients towards o p - posite dir ections, when treated ca refully , they both impose a n- T able 1 . Letter Erro r Rates an d W o rd Er ror Rates on th e WSJ dataset. Model Nov93 dev Nov92 eval T y pe Layer LER WER LER W ER Human [27] - 8.1 - 5.0 BLSTMs [28] - 6.6 - 3.5 convnet BLSTM w/ addi- tional data [27] - 4.4 - 3.6 Our Baseline 7.2 9.7 4.9 5.9 Mult. I N 7.2 9.9 4.8 5.8 M I D 7.3 9.8 4.9 5.9 O U T 7.3 10.1 4.8 5.7 Adv . I N 7.2 9.9 4.7 5.7 M I D 7.3 10.0 4.8 5.8 O U T 7.2 9.8 4.7 5.6 Semi. I N 6.8 9.8 4.6 6.2 M I D 6.8 9.7 4.7 6.0 O U T 6.9 10.0 4.6 6.3 other constrain t o n the m odels’ p arameters [29, 3 0, 31]. 4.4. Adversarial Semi-Supervised Learning Lastly , we wanted to inv estigate whether the ob served im- provements were limited by the relatively small amount o f speakers ( 283) in the train set of WSJ. For that pur pose, we ran a semi- supervised exp e riment were we control the am o unt of tran scribed d ata by using o nly speaker labeled examples. W e add ≈ 35 hours of speech using an other 1 32 speakers, from the L ib rispeech corp us [32], and train the network in an adversarial fashion. For the ne w training e xamples we do not use the transcription s, but o nly the sp eaker iden tity , hence op- timizing the speaker loss only . Results ar e summarized on T a- ble 1. Using ad d itional speaker labeled d ata, seems beneficial in terms of LER: it impr oves over the baseline and previous AL results in all settings. On th e o ther h and, when con sidering WER, none of the settings impr ove over the baseline . T his misalignm ent be- tween th e LER and WER r esults can be either because w e constrained the mo del’ s outputs by L M at deco ding tim e , hence WER re su lts ar e greatly affected by the type o f LM used, or because th e LER improvemen ts are o n mean ingless parts in th e sequence. The analysis of this observations as well as jointly optimizin g acou stic mo del and LM are left fo r future research . 5. CONCLUSION AND FUTURE WORK In this pa per, we stu d ied AL and MT in th e context of speech recogn itio n. W e showed th at deep mod els a lready learn speaker-in variant r epresentation s, but can still ben efit from semi-superv ised speaker ad versarial learn ing. For futu re work, we would like to examine the effect o f semi-superv ised learn in g for low-resource langu ages. 6. REFE RENCES [1] A. Senior and I. Lopez-Moreno, “Improving dnn speaker in- dependen ce wi th i-vec tor inputs, ” in A coustics, Speech and Signal P r ocessing (ICASSP), 2014 IEEE International Confer- ence on . IEEE, 2014, pp. 225–229 . [2] V . Peddinti, G. Chen, D. Povey , and S. Khudanpur , “Re verber - ation robust acoustic modeling using i-vectors with time delay neural networks, ” i n INTERSPEE CH , 2015 . [3] R. Caruana, “Multitask learning, ” in Learning to learn , pp. 95–133 . Springer , 1998. [4] R. Collobert, J. W eston, L. Bottou, M. Karlen, K. Kavukcuoglu , and P . Kuksa, “Natural language pro- cessing (almost) from scratch, ” Journ al of Mac hine Learning Resear ch , vol. 12, pp. 2493–253 7, 2011. [5] Y . Ganin et al., “Domain-adversarial training of neural net- works, ” T he J ournal of Mac hine Learning Resear ch , vol. 17, no. 1, pp. 2096–2030 , 2016 . [6] G. P ironko v , S. Dupon t, and T . Dutoit, “Mu lti-task learning for speech recognition: an ov ervie w , ” in Pr oc. of ESANN , 2016. [7] G. P ironko v , S. Dupont, and T . Dutoit, “Speaker -awa re l ong short-term memory multi-task learning f or speech recogni- tion, ” in Pr oc. of EUSIPCO , 2016. [8] Z. T ang, L. Li, and D. W ang, “Multi-task recurrent model for speech and speak er recognition, ” i n Proc. of APSIP A , 2016. [9] Dmitriy S . et al. , “Inv ariant representations for noisy speech recognition, ” CoRR , vol. abs/1612.01928, 2016. [10] T aira Tsuchiya, Naohiro T awara, T estuji Ogaw a, and T etsunori K obayash i, “Speaker in variant feature e xtraction for zero- resource languag es with adversarial learning, ” in Pr oc. of ICASSP , 2018. [11] Z. Meng, J. Li, Z. Chen, Y . Zhao, V . Mazalov , Y . Gong, et al., “Speaker -in v ariant training via adversarial learning, ” arXiv:1804.007 32 , 2018. [12] G. Saon et al., “English con versational telephone speech recog- nition by humans and machines, ” , 2017. [13] Sining Sun, Ching-Feng Y eh, Mei-Y uh Hwan g, Mari Osten- dorf, and Lei Xie, “Domain adversarial training for accented speech recognition, ” P r oc. of ICASSP , 2018. [14] D. B Paul and J. M Baker , “The design for the wall street journal-based csr corpus, ” in Pr oc. of the workshop on Speech and Natur al Languag e , 1992. [15] Y . N Dauphin, Aa Fan, M. Auli, and D. Grangier , “Language modeling wi th gated con vo lutional networks, ” arXiv:1612.080 83 , 2016. [16] V . Peddinti, D . P ov ey , and S. Khudanpur , “ A time delay neural network architecture for ef ficient mode ling of long temporal contex ts, ” in INTERSPE ECH , 2015. [17] R. Collobert, C. Puhrsch, and G. Synnae ve, “W av2letter: an end-to-end con vnet-based speec h recog nition system, ” arXiv:1609.031 93 , 2016. [18] V . Liptchinsk y , G. Synnae ve , and R. C ollobert, “Letter-based speech recognition with gated conv nets, ” , 2017. [19] A. Graves, S . Fern ´ andez, F . Gomez, and J. Schmidhuber , “Connectionist temporal classification: labelling unsegmented sequence data with r ecurrent neural networks, ” in Proc . of ICML , 2006. [20] D. Palaz, G. Synnae ve, and R. Collobert, “Jointly learning to locate and classify w ords using con volutional networks., ” in Pr oc. of INTERSPEECH , 2016. [21] T . Salimans and D. Kingma, “W eight normalization: A sim- ple reparameterization to accelerate training of deep neural net- works, ” in Proc . of NIPS , 2016. [22] V . Steinbiss, B. Tran, and H. Ne y , “Impro vemen ts in beam search, ” in Pr oc. of ICSLP , 1994. [23] K. Heafi el d, I. Pouzyre vsky , J. H Clark, and P . K oehn, “Scal- able modified kneser-ne y l anguage mode l estimation, ” in Pr oc. of ACL , 2013 . [24] G. Lample, N. Ze ghidour , N. Usunier, A. Bordes, L . Denoy er , et al., “F ader networks: Manipulating images by sli ding at- tributes, ” in Pr oc. of NIPS , 2017. [25] Y . Adi, E. K erman y , Y . Belinko v , O. Lavi, and Y . Goldber g, “Fine-grained analysis of sentence embeddin gs using auxiliary prediction tasks, ” Pr oc. of ICLR , 2016. [26] Y Adi, E Kermany , Y Belinkov , O La vi, and Y Goldberg, “ Analysis of sentence embedding models using prediction tasks in natural language processing, ” IBM Jo urnal of Resear ch and Developmen t , vol. 61, no. 4, pp. 3–1, 2017. [27] Dario Amodei et al., “Deep speech 2 : E nd-to-end speech recognition in english and mandarin, ” i n Pr oc. of ICML , 2016. [28] W . Chan and I. Lane, “Deep recurrent neural networks for acoustic modelling, ” arXiv:1504.0 1482 , 2015. [29] S. Ruder , “ An ov ervie w of multi-task learning in deep neural networks, ” arXiv:1706 .05098 , 2017. [30] M. Nski, L. Si mon, and F . Jurie, “ An adversa rial regularisa- tion for semi-supervised training of structured output neural networks, ” in Pro c. of NIPS , 2017. [31] D. Cohen, B. Mitra, K. Hofmann, and W B. Croft, “Cross domain regu larization for neural ranking mode ls using adver - sarial learning, ” arXiv pr eprint arXiv:1805.03403 , 2018. [32] V . Panayotov , G. Chen, D. Pov ey , and S. Khudanpur , “Lib- rispeech: an asr corpus based on public doma in audio book s, ” in Proc. o f ICASSP , 2015.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment