End-to-end Anchored Speech Recognition

Voice-controlled house-hold devices, like Amazon Echo or Google Home, face the problem of performing speech recognition of device-directed speech in the presence of interfering background speech, i.e., background noise and interfering speech from ano…

Authors: Yiming Wang, Xing Fan, I-Fan Chen

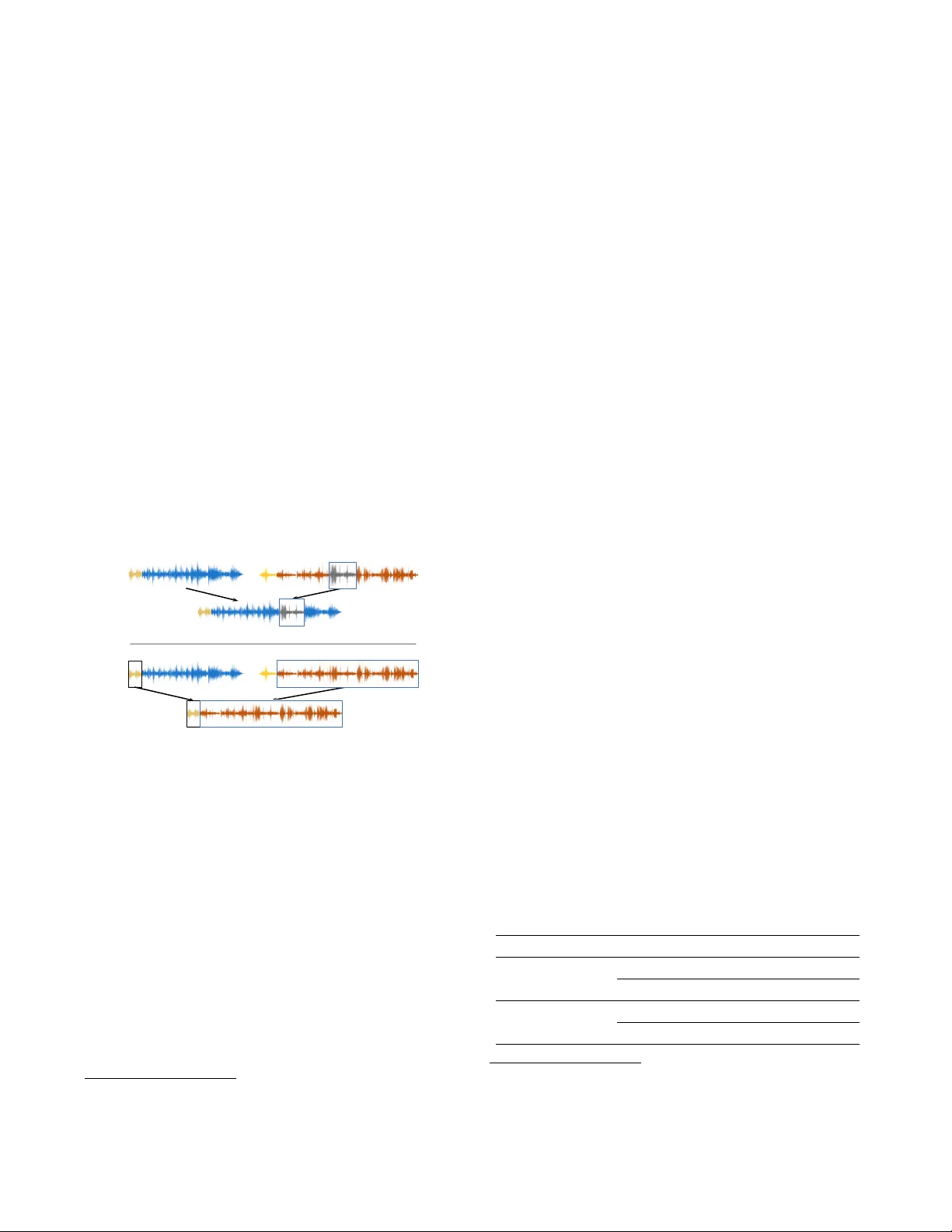

END-TO-END ANCHORED SPEECH RECOGNITION Y iming W ang 1 ∗ , Xing F an 2 , I-F an Chen 2 , Y uzong Liu 2 , T ongfei Chen 1 ∗ , Bj ¨ orn Hoffmeister 2 1 Center for Language and Speech Processing, Johns Hopkins Uni versity , Baltimore, MD, USA 2 Amazon.com, Inc., USA { yiming.wang,tongfei } @jhu.edu , { fanxing,ifanchen,liuyuzon,bjornh } @amazon.com ABSTRA CT V oice-controlled house-hold de vices, like Amazon Echo or Google Home, face the problem of performing speech recognition of device- directed speech in the presence of interfering background speech, i.e., background noise and interfering speech from another person or media device in proximity need to be ignored. W e propose two end-to-end models to tackle this problem with information extracted from the anchor ed se gment . The anchored segment refers to the wake-up word part of an audio stream, which contains valuable speaker information that can be used to suppress interfering speech and background noise. The first method is called Multi-source Attention where the attention mechanism takes both the speaker in- formation and decoder state into consideration. The second method directly learns a frame-level mask on top of the encoder output. W e also explore a multi-task learning setup where we use the ground truth of the mask to guide the learner . Giv en that audio data with interfering speech is rare in our training data set, we also propose a way to synthesize “noisy” speech from “clean” speech to mitigate the mismatch between training and test data. Our proposed meth- ods show up to 15% relative reduction in WER for Amazon Alexa liv e data with interfering background speech without significantly degrading on clean speech. Index T erms — End-to-End ASR, anchored speech recognition, attention-based encoder -decoder netw ork, rob ust speech recognition 1. INTR ODUCTION W e tackle the ASR problem in the scenario where a foreground speaker first wakes up a voice-controlled de vice with an “anchor word”, and the speech after the anchor word is possibly interfered with background speech from other people or media. Consider the following e xample: S P E A KE R 1 : Alexa, play r oc k music. S P E A KE R 2 : I need to go gr ocery shopping . Here the wake-up word “ Alexa” is the anchor word, and thus the utterance by speaker 1 is considered as device-directed speech, while the utterance by speaker 2 is the interfering speech. Our goal is to extract information from the anchor word in order to recog- nize the device-directed speech and ignore the interfering speech. W e name this task anchor ed speech reco gnition . The challenge of this task is to learn a speaker representation from a short seg- ment corresponding to the anchor word. A couple of techniques hav e been proposed for learning speaker representations, e.g., i- vector [1, 2], mean-v ariance normalization [3], maximum likelihood linear regression (MLLR) [4]. W ith the recent progress in deep learning, neural networks are used to learn speaker embeddings ∗ This work was done while the authors were interns at Amazon. for speaker verification/recognition [5, 6, 7, 8]. More relev ant to our task, two methods—anchor mean subtraction (AMS) and an encoder-decoder network—are proposed to detect desired speech by extracting speaker characteristics from the anchor word [9]. This work is further extended for acoustic modeling in hybrid ASR sys- tems [10]. Speaker-dependent mask estimation is also explored for target speak er extraction [11, 12]. Recently , much work has been done towards end-to-end ap- proaches for speech recognition [13, 14, 15, 16, 17, 18]. These approaches typically have a single neural network model to replace previous independently-trained components, namely , acoustic, lan- guage, and pronunciation models from hybrid HMM systems. End- to-end models greatly alleviate the complexity of building an ASR system. Compared with the others, the attention-based encoder- decoder models [19, 16] do not assume conditional independence for output labels as CTC based models [20, 14] do. W e propose two end-to-end models for anchored speech recog- nition, focusing on the case where each frame is either completely from device-directed speech or completely from interfering speech, but not a mixture of both [11, 21]. They are both based on the attention-based encoder-decoder models. The attention mechanism provides an explicit way of aligning each output symbol with dif- ferent input frames, enabling selective decoding from an audio, i.e., only decode desired speech that is taking place in part of the entire audio stream. In the first method, we incorporate the speaker in- formation when calculating the attention ener gy , which leads to an anchor-a ware soft alignment between the decoder state and encoder output. The second method learns a frame-level mask on top of the encoder , where the mask can optionally be learned in the multi-task framew ork if the gold mask is given. This method will pre-select the encoder output before the attention energy is calculated. Fur - thermore, since the training data is relati vely clean, in the sense that it contains de vice-directed speech only , we propose a method to synthesize “noisy” speech from “clean” speech, mitigating the mismatch between training and test data. W e conduct e xperiments on a training corpus consisting of 1200 hours live data in English from Amazon Echo. The results demon- strate a significant WER relati ve gain of 12-15% in test sets with in- terfering background speech. For a test set that contains only device- directed speech, we see a small relativ e WER degradation from the proposed method, ranging from 1.5% to 3%. This paper is or ganized as follows. Section 2 first gi ves an ov erview of the attention-based encoder-decoder model and then presents our two end-to-end anchored ASR models. Section 3 de- scribes ho w we synthesize our training data and train our second proposed model in a multi-task fashion. Section 4 shows our exper - iments and results. Section 5 includes conclusions and future work. T o appear in Proc. ICASSP2019, May 12-17, 2019, Brighton, UK c IEEE 2019 h 1 h 2 h 3 h T Attention c n − 1 q n − 1 y n − 1 Decoder q n y n Encoder x 1: L y n − 2 y n − 1 Fig. 1 . Attention-based Encoder-Decoder Model. It is an illustration in the case of a one-layer decoder . If there are more layers, as in our experiments, an updated context vector c n will also be fed into each of the upper layers in the decoder at the time step n . 2. MODEL O VER VIEW 2.1. Attention-based Encoder -Decoder Model The basic attention-based encoder-decoder model typically consists of 3 modules as depicted in Fig 1: 1) an encoder transforming a se- quence of input features x 1: L into a high-lev el representation of the features h 1: T through a stack of con volution/recurrent layers, where T ≤ L due to possible frame down-sampling; 2) an attention mod- ule summarizing the output of the encoder h 1: T into a fixed length context vector c n at each output step for n ∈ [1 , . . . , N ] , which de- termines parts of the sequence h 1: T to be attended in order to predict the output symbol y n ; 3) a decoder module taking the context vec- tor c n as input and predicting the next symbol y n giv en the history of previous symbols y 1: n − 1 . The entire model can be formulated as follows: h 1: T = Enco der( x 1: L ) (1) α n,t = A ttention( q n , h t ) (2) c n = X t α n,t h t (3) q n = Deco der( q n − 1 , [ y n − 1 ; c n − 1 ]) (4) y n = arg max v ( W f q n + b f ) (5) Although our proposed methods do not limit itself in an y partic- ular attention mechanism, we choose the Bahdanau Attention [19] as the attention function for our experiments. So Eqn. (2) takes the form of: ω n,t = v > tanh( W q q n + W h h t + b ) (6) α n,t = softmax( ω n,t ) (7) 2.2. Multi-source Attention Model Our first approach is based on the intuition that the attention mech- anism should consider both the speaker information and the decoder state – when computing the attention weights, in addition to condi- tioning on the decoder state, the speaker information extracted from the input frames is also utilized. In our scenario, the device-directed speech and the anchor word are uttered by the same speaker , while the interfering background speech is from a different speaker . There- fore, the attention mechanism can be augmented by placing more attention probability mass on frames that are more similar to the an- chor word in terms of speaker characteristics. Formally speaking, besides our previous notations, the an- chor word se gment is denoted as w 1: L 0 . W e add another encoder S - Enco der to be applied on both w 1: L 0 and x 1: L to generate a fixed-length vector ˜ w and a variable length sequence u 1: T respec- tiv ely: ˜ w = Pooling (S - Encoder( w 1: L 0 )) (8) u 1: T = S - Enco der( x 1: L ) (9) As sho wn abov e, S - Enco der e xtracts speaker characteristics from the acoustic features. In our experiments, the pooling function is im- plemented as Max-pooling across all output frames if S - Enco der is a con volutional network, or picking the hidden state of the last frame if S - Encoder is a recurrent network. Rather than being appended to acoustic feature vector and fed into the decoder 1 as proposed in [10], ˜ w is directly in volv ed in computing the attention weights. Specifi- cally , Eqn. (7) and Eqn. (3) are replaced by: φ t = Similarit y( u t , ˜ w ) (10) α anchor aware n,t = softmax( ω n,t + g · φ t ) (11) c n = X t α anchor aware n,t h t (12) where g is a trainable scalar used to automatically adjust the relativ e contribution from the speaker acoustic information. Similarit y ( · , · ) is implemented as dot-product in our experiments. As a result, the at- tention weights are essentially computed from two dif ferent sources: the ASR decoding state, and the confidence of decision on whether each frame belongs to the de vice-directed speech. W e call this model Multi-sour ce Attention to reflect the way the attention weights are computed. 2.3. Mask-based Model The Multi-source Attention model jointly considers speaker char- acteristic and ASR decoder state when calculating the attention weights. Howe ver , since the attention weights are normalized with a softmax function, whether each frame needs to be ignored is not independently decided, which reduces the modeling flexibility in frame selection. As the second approach we propose the Mask-based model, where a frame-wise mask on top of the encoder 2 is estimated by lev eraging the speaker acoustic information contained in the anchor word and the actual recognition utterance. The attention mechanism is then performed on the masked feature representation. Compared with the Multi-source Attention model, attention in the Mask-based model only focuses on remaining frames after masking, and for each frame it is independently decided whether to be masked out based on their acoustic similarity . Formally , Eqn. (6) and Eqn. (3) are modified as: φ t = sigmoid( g · Similarity( u t , ˜ w )) (13) h masked t = φ t h t (14) ω n,t = v > tanh( W q q n + W h h masked t + b ) (15) c n = X t α n,t h masked t (16) where Similarit y( · , · ) in Eqn. (13) is dot-product as well. 1 W e tried that in our preliminary experiments b ut it did not perform well. 2 Here “frame-wise” actually means frame-wise after down-sampling , in accordance with the frame down-sampling in the encoder network (see Sec- tion 4.1 for details). 2 3. SYNTHETIC D A T A AND MUL TI-T ASK TRAINING 3.1. Synthetic Data A problem we encountered in our task is: there is very little training data that has the same condition as the test case. Some utterances in the test set contain speech from two or more speakers (denoted as the “speaker change” case), and some of the other utterances only con- tain background speech (denoted as the “no desired speaker” case). In contrast, most of the training data does not hav e interfering or background speech, making the model unable to learn to ignore. In order to simulate the condition of the test case, we generate two types of synthetic data for training: • Synthetic Method 1: for an utterance, a random segment 3 from another utterance in the dataset is inserted at a random position after the wake-up word part within this utterance, while its transcript is unchanged. • Synthetic Method 2: the entire utterance, excluding the wake- up word part, is replaced by another utterance, and its tran- script is considered as empty . Fig. 2 illustrates the synthesizing process. These two types of syn- thetic data simulate the “speaker change” case and the “no desired speaker” case respecti vely . The synthetic and device-directed data are mixed together to form our training data. The mixing proportion is determined from experiments. what’s the weather play a song from frozen what’s the weather what’s the weather play a song from frozen < SPACE > Fig. 2 . T wo types of synthetic data: Synthetic Method 1 (top) and 2 (bottom). The symbol h W i represents the wake-up word, and h S PAC E i represents empty transcripts. 3.2. Multi-task T raining for Mask-based Model For the generated synthetic data, we know which frames come from the original utterance and which are not, i.e., we hav e the gold mask for each synthetic utterance, where the frames from the original ut- terance are labeled with “1”, and the other frames are labeled with “0”. Using this gold mask as an auxiliary target, we train the Mask- based model in a multi-task w ay , where the o verall loss is defined as a linear interpolation of the normal ASR cross-entropy loss and the cross-entropy-based mask loss: (1 − λ ) L ASR + λ L mask . The gold mask provides a supervision signal to explicitly guide S - Enco der to extract acoustic features that can better distinguish the inserted frames from those in the original utterance. As will be shown in our e xperiments, with the multi-task training the predicted mask is more accurate in selecting desired frames for the decoder . 3 The frame length of a segment is uniformly sampled within the range [50,150] in our experiments. It is possible that the randomly selected segment is purely non-speech or ev en silence. 4. EXPERIMENTS 4.1. Experimental Settings W e conduct our experiments on training data of 1200-hour live data in English collected from the Amazon Echo. Each utterance is hand- transcribed and begins with the same wake-up word whose align- ment with time is provided by end-point detection [22, 23, 24, 25]. As we have mentioned, while the training data is relatively clean and usually only contains de vice-directed speech, the test data is more challenging and under mismatched conditions with training data: it may be noisy , may contain background speech 4 , or may e ven con- tain no device-directed speech at all. In order to ev aluate the per- formance on both the matched and mismatched cases, tw o test sets are formed: a “normal set” (25k words in transcripts) where utter - ances have a similar condition as those in the training set, and a “hard set” (5.4k w ords in transcripts) containing the challenging utterances with interfering background speech. Note that both of the two test sets are real data without any synthesis. W e also prepare a dev el- opment set (“normal”+“hard”) with a similar size as the test sets for hyper-parameter tuning. For all the experiments, 64-dimensional log filterbank energy (LFBE) features are extracted every 10ms with a window size of 25ms. The end-to-end systems are grapheme-based and the vocabulary size is 36, which is determined by thresholding on the minimum number of character counts from the training tran- scripts. Our implementation is based on the open-sourced toolkit O P E N S E Q 2 S E Q [26]. Our baseline end-to-end model does not consider anchor words. Its encoder consists of three conv olution layers resulting in 2x frame down-sampling and 8x frequency do wn-sampling, followed by 3 Bi- directional LSTM [27] layers with 320 hidden units. Its decoder con- sists of 3 unidirectional-LSTM layers with 320 hidden units. The at- tention function is Bahdanau Attention [19]. The cross-entropy loss on characters is optimized using Adam [28], with an initial learning rate 0.0008 which is then adjusted by exponential decay . A beam search with beam size 15 is adopted for decoding. The abov e setting is also used in our proposed models. 4.2. Multi-source Attention Model vs. Baseline S - Enco der consists of three conv olution layers with the same archi- tecture as that in the baseline’ s encoder . First of all, we compare Multi-source Attention Model and the baseline trained on the de vice-directed-only data, i.e., without an y synthetic data. The results are shown in T able 1. T able 1 . Multi-source Attention Model vs. Baseline with de vice- directed-only training data. The WER, substitution, insertion and deletion v alues are all normalized by t he baseline WER on the “nor - mal” set 6 . The normalization applies to all the tables throughout this paper in the same way . Model T raining Set T est Set WER sub ins del WERR(%) Baseline Device- directed-only normal 1.000 0.715 0.108 0.177 — hard 3.354 1.762 1.123 0.469 — Mul-src. Attn. Device- directed-only normal 1.015 0.731 0.115 0.169 -1.5 hard 3.262 1.746 1.062 0.454 +2.8 4 background speech includes: 1) interfering speech from an actual non- device-directed speaker; and 2) multi-media speech, meaning that a televi- sion, radio, or other media device is playing back speech in the background. 6 For example, if WER for the baseline is 5 . 0% for the “normal” set, and 3 The relative WER reduction (WERR) of Multi-source Attention on the “hard” set is 2.8% and it is mostly due to a reduction in insertion errors. W e also observe a slight WER degradation of 1.5% relative on the “normal” set. It implies that the proposed model is more robust to interfering background speech. Next, we further v alidate the effecti veness of the Multi-source Attention model by showing how synthetic training data has dif ferent impact on it and the baseline model respectively . Synthetic training data is prepared such that 50% of the utterances in the training set are kept unchanged, 44% are processed with Synthetic Method 1, and 6% are processed with Synthetic Method 2. The ratio is tuned on the dev elopment set. This new training data is referred as “augmented” in all result tables. T able 2 exhibits the results. For the baseline model, the performance degrades drastically when trained on aug- mented data: the deletion errors on both of the “normal” and “hard” test sets get much higher . This is expected since without the anchor word the model has no extra acoustic information of which part of the utterance is desired, so that it tends to ignore frames re gardless of whether they are actually from device-directed speech. On the con- trary , for the Multi-source Attention model the WERR (augmented vs. device-directly-only) on the “hard” set is 12 . 5% , and WER on the “normal” set does not get worse. Moreov er , the insertion errors on both test sets get reduced while the deletion errors increase much less than those in the case of the baseline model, indicating that by incorporating the anchor word information the proposed model ef- fectiv ely improves the ability of focusing on device-directed speech and ignoring others. This series of experiments also reveals signifi- cant benefits from using the synthetic data with the proposed model. In total, the combination of the Multi-source Attention model and augmented training data achiev es 14 . 9% WERR on the “hard” set, with only 1 . 5% degradation on the “normal” set. T able 2 . Augmented vs. Device-directed-only training data. Results with “De vice-directed-only” training set are from T able 1 for clearer comparisons. Model T raining Set T est Set WER sub ins del WERR(%) Baseline Device- directed-only normal 1.000 0.715 0.108 0.177 — hard 3.354 1.762 1.123 0.469 — Augmented normal 3.215 1.223 0.038 1.954 -221.5 hard 4.208 1.777 0.246 2.185 -30.9 Mul-src. Attn. Device- directed-only normal 1.015 0.731 0.115 0.169 -1.5 hard 3.262 1.746 1.062 0.454 +2.8 Augmented normal 1.015 0.700 0.108 0.207 -1.5 hard 2.854 1.569 0.723 0.562 +14.9 4.3. Mask-based Model In the Mask-based model experiments, 3 conv olution and 1 Bi- directional LSTM layers are used as S - Encoder , as we observed that it empirically performs better than conv olution-only layers. Due to the importance of using the augmented data for training our previous model, the same synthetic approach is directly applied to train the Mask-based model. Also, as we mentioned in Sec 3.2, multi-task training can be conducted since we know the gold mask for each synthesized utterance. Giv en the imbalanced mask labels, 25 . 0% for the “hard” set, then the normalized values would be 1 . 000 and 5 . 000 respectiv ely . i.e., frames with label “1” (corresponding to those from the original utterance) constitute the majority compared with frames with label “0” (corresponding to those from another random utterance), we use weighted cross entropy loss for the auxiliary mask learning task, where the weight on frames with label “1” is 0 . 6 and on those with label “0” is 1 . 0 , to counteract the label imbalance. W e first set the multi-task loss weighting factor λ = 1 . 0 so that only the mask learning is performed. It turns out that around 70% of frames with label “0” and 98% with label “1” are recalled on a held-out set synthesized the same way as the training data, which demonstrates the effecti veness of estimating masks from the syn- thetic data. Then we perform ASR using the Mask-based model with and without mask supervision respectiv ely , and the results are presented in T able 3. WERRs are all relative to the baseline model trained on device-directed-only data. For the Mask-based model without mask supervision, it achieves 3 . 9% WERR on the “hard” set while has a degradation of 34 . 8% on the “normal” set. On the other hand, with mask supervision ( λ = 0 . 1 ) corresponding to the multi-task training, it yields 12 . 6% WERR on the “hard” set while only 3 . 0% worse on the “normal” set. The performance gap between them can be attributed to the ability of mask prediction: while with mask su- pervision the recall is still around 70% (for frames labeled as “0”) and 98% (for frames labeled as “1”) on the held-out set, it is only 48% and 50% respectiv ely without mask supervision. Note that ev en with multi-task training, the WER performance of the Mask-based model is still slightly behind the Multi-source Attention model, mainly due to the insertion error . Our conjecture is, the mask prediction is only done within the encoder, which may lose semantic information from the decoder that is potentially useful for discriminating device-directed speech from others. T able 3 . Mask-based Model: with and without mask supervision. Model Training Set T est Set WER sub ins del WERR(%) w/o Supervision Augmented normal 1.348 0.725 0.096 0.527 -34.8 hard 3.223 1.508 0.628 1.087 +3.9 w/ Supervision Augmented normal 1.030 0.715 0.115 0.200 -3.0 hard 2.931 1.586 0.809 0.536 +12.6 5. CONCLUSIONS AND FUTURE WORK In this paper we propose two approaches for end-to-end anchored speech recognition, namely Multi-source Attention and the Mask- based model. W e also propose two ways to generate synthetic data for end-to-end model training to improve the performance. Given the synthetic training data, a multi-task training scheme for the Mask- based model is also proposed. W ith the information extracted from the anchor w ord, both of these methods show their ability in picking up device-directed part of speech and ignore other parts. This results in large WER impro vement of 15% relative on the test set with inter- fering background speech, with only a minor degradation of 1.5% on clean speech. Obviously the mismatch still exists between the train- ing and test data. Future work would include finding a better way to generate synthetic data with more similar condition to the “hard” test set, and taking decoder state into consideration when estimating the mask. The other direction is to utilize anchor word information in contextual speech recognition [29]. 6. A CKNO WLEDGEMENTS The authors would like to thank Hainan Xu for proofreading. 4 7. REFERENCES [1] Najim Dehak, Patrick J K enny , R ´ eda Dehak, Pierre Dumouchel, and Pierre Ouellet, “Front-end factor analysis for speaker veri- fication, ” IEEE T ransactions on Audio, Speech, and Language Pr ocessing , vol. 19, no. 4, pp. 788–798, 2011. [2] George Saon, Hagen Soltau, David Nahamoo, and Michael Picheny , “Speaker adaptation of neural network acoustic models using i-vectors., ” in ASR U , 2013, pp. 55–59. [3] Fu-Hua Liu, Richard M Stern, Xuedong Huang, and Alejandro Acero, “Efficient cepstral normalization for robust speech recog- nition, ” in Pr oceedings of the workshop on Human Language T echnology . Association for Computational Linguistics, 1993, pp. 69–74. [4] Christopher J Leggetter and Philip C W oodland, “Maximum likelihood linear regression for speak er adaptation of continuous density hidden markov models, ” Computer speech & language , vol. 9, no. 2, pp. 171–185, 1995. [5] Ehsan V ariani, Xin Lei, Erik McDermott, and Javier Gonzalez- Dominguez, “Deep neural networks for small footprint text- dependent speaker verification., ” in Acoustics, Speech and Sig- nal Pr ocessing (ICASSP), 2014 IEEE International Conference on . IEEE, 2014, pp. 4080–4084. [6] Mitchell McLaren, Y un Lei, and Luciana Ferrer , “ Advances in deep neural network approaches to speaker recognition, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2015 IEEE International Conference on . IEEE, 2015, pp. 4814–4818. [7] David Snyder , Pe gah Ghahremani, Daniel Po ve y , Daniel Garcia- Romero, Y ishay Carmiel, and Sanjeev Khudanpur, “Deep neu- ral network-based speaker embeddings for end-to-end speaker verification, ” in Spoken Language T echnology W orkshop (SLT), 2016 IEEE . IEEE, 2016, pp. 165–170. [8] Georg Heigold, Ignacio Moreno, Samy Bengio, and Noam Shazeer , “End-to-end text-dependent speaker verification, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2016 IEEE International Conference on . IEEE, 2016, pp. 5115–5119. [9] Roland Maas, Sree Hari Krishnan Parthasarathi, Brian King, Ruitong Huang, and Bj ¨ orn Hoffmeister , “ Anchored speech de- tection, ” in INTERSPEECH , 2016, pp. 2963–2967. [10] Brian King, I-Fan Chen, Y onatan V aizman, Y uzong Liu, Roland Maas, Sree Hari Krishnan Parthasarathi, and Bj ¨ orn Hoffmeister , “Robust speech recognition via anchor w ord representations, ” in INTERSPEECH , 2017, pp. 2471–2475. [11] Marc Delcroix, Katerina Zmolikov a, Keisuke Kinoshita, At- sunori Ogaw a, and T omohiro Nakatani, “Single channel tar- get speaker extraction and recognition with speaker beam, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2018 IEEE International Conference on . IEEE, 2018, pp. 5554–5558. [12] Jun W ang, Jie Chen, Dan Su, Lianwu Chen, Meng Y u, Y an- min Qian, and Dong Y u, “Deep extractor network for target speaker recovery from single channel speech mixtures, ” in IN- TERSPEECH , 2018, pp. 307–311. [13] Alex Graves, “Sequence transduction with recurrent neural net- works, ” CoRR , vol. abs/1211.3711, 2012. [14] Alex Graves and Navdeep Jaitly , “T owards end-to-end speech recognition with recurrent neural networks, ” in International Confer ence on Machine Learning , 2014, pp. 1764–1772. [15] Jan K Chorowski, Dzmitry Bahdanau, Dmitriy Serdyuk, Kyungh yun Cho, and Y oshua Bengio, “ Attention-based mod- els for speech recognition, ” in Advances in neural information pr ocessing systems , 2015, pp. 577–585. [16] William Chan, Navdeep Jaitly , Quoc Le, and Oriol V inyals, “Listen, attend and spell: A neural network for large v ocabulary con versational speech recognition, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2016 IEEE International Confer - ence on . IEEE, 2016, pp. 4960–4964. [17] Chung-Cheng Chiu, T ara N Sainath, Y onghui Wu, Rohit Prab- hav alkar, Patrick Nguyen, Zhifeng Chen, Anjuli Kannan, Ron J W eiss, Kanishka Rao, Ekaterina Gonina, et al., “State-of-the-art speech recognition with sequence-to-sequence models, ” in 2018 IEEE International Confer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) . IEEE, 2018, pp. 4774–4778. [18] Zhehuai Chen, Qi Liu, Hao Li, and Kai Y u, “On modular train- ing of neural acoustics-to-word model for lvcsr, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2018 IEEE Interna- tional Conference on . IEEE, 2018, pp. 4754–4758. [19] Dzmitry Bahdanau, Kyunghyun Cho, and Y oshua Bengio, “Neu- ral machine translation by jointly learning to align and translate, ” arXiv preprint arXiv:1409.0473 , 2014. [20] Alex Grav es, Santiago Fern ´ andez, Faustino Gomez, and J ¨ urgen Schmidhuber , “Connectionist temporal classification: labelling unsegmented sequence data with recurrent neural networks, ” in Pr oceedings of the 23rd international conference on Machine learning . A CM, 2006, pp. 369–376. [21] Zhehuai Chen, Jasha Droppo, Jinyu Li, and W ayne Xiong, “Pro- gressiv e joint modeling in unsupervised single-channel over - lapped speech recognition, ” IEEE/ACM T ransactions on Audio, Speech and Language Pr ocessing (T ASLP) , vol. 26, no. 1, pp. 184–196, 2018. [22] W on-Ho Shin, Byoung-Soo Lee, Y un-Keun Lee, and Jong-Seok Lee, “Speech/non-speech classification using multiple features for robust endpoint detection, ” in Acoustics, Speech, and Signal Pr ocessing (ICASSP), 2000 IEEE International Conference on . IEEE, 2000, vol. 3, pp. 1399–1402. [23] Xin Li, Huaping Liu, Y u Zheng, and Bolin Xu, “Rob ust speech endpoint detection based on improved adaptiv e band- partitioning spectral entropy , ” in International Confer ence on Life System Modeling and Simulation . Springer, 2007, pp. 36– 45. [24] Matt Shannon, Gabor Simko, and Carolina Parada, “Improved end-of-query detection for streaming speech recognition, ” in IN- TERSPEECH , 2017, pp. 1909–1913. [25] Roland Maas, Ariya Rastro w , Chengyuan Ma, Guitang Lan, Kyle Goehner , Gautam T iwari, Shaun Joseph, and Bj ¨ orn Hoffmeister , “Combining acoustic embeddings and decod- ing features for end-of-utterance detection in real-time far-field speech recognition systems, ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2018 IEEE International Conference on . IEEE, 2018, pp. 5544–5548. [26] Oleksii Kuchaie v , Boris Ginsbur g, Igor Gitman, V italy Lavrukhin, Jason Li, Huyen Nguyen, Carl Case, and Paulius Mi- cike vicius, “Mixed-precision training for nlp and speech recog- nition with openseq2seq, ” 2018. [27] Sepp Hochreiter and J ¨ urgen Schmidhuber, “Long short-term memory , ” Neural computation , vol. 9, no. 8, pp. 1735–1780, 1997. [28] Diederik P Kingma and Jimmy Ba, “ Adam: A method for stochastic optimization, ” arXiv preprint , 2014. [29] Zhehuai Chen, Mahav eer Jain, Y ongqiang W ang, Michael Seltzer , and Christian Fuegen, “End-to-end contextual spebech recognition using class language models and a token passing de- coder , ” in Acoustics, Speech and Signal Pr ocessing (ICASSP), 2019 IEEE International Confer ence on . IEEE, 2019 (to appear). 5

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment