Convolutional Neural Networks to Enhance Coded Speech

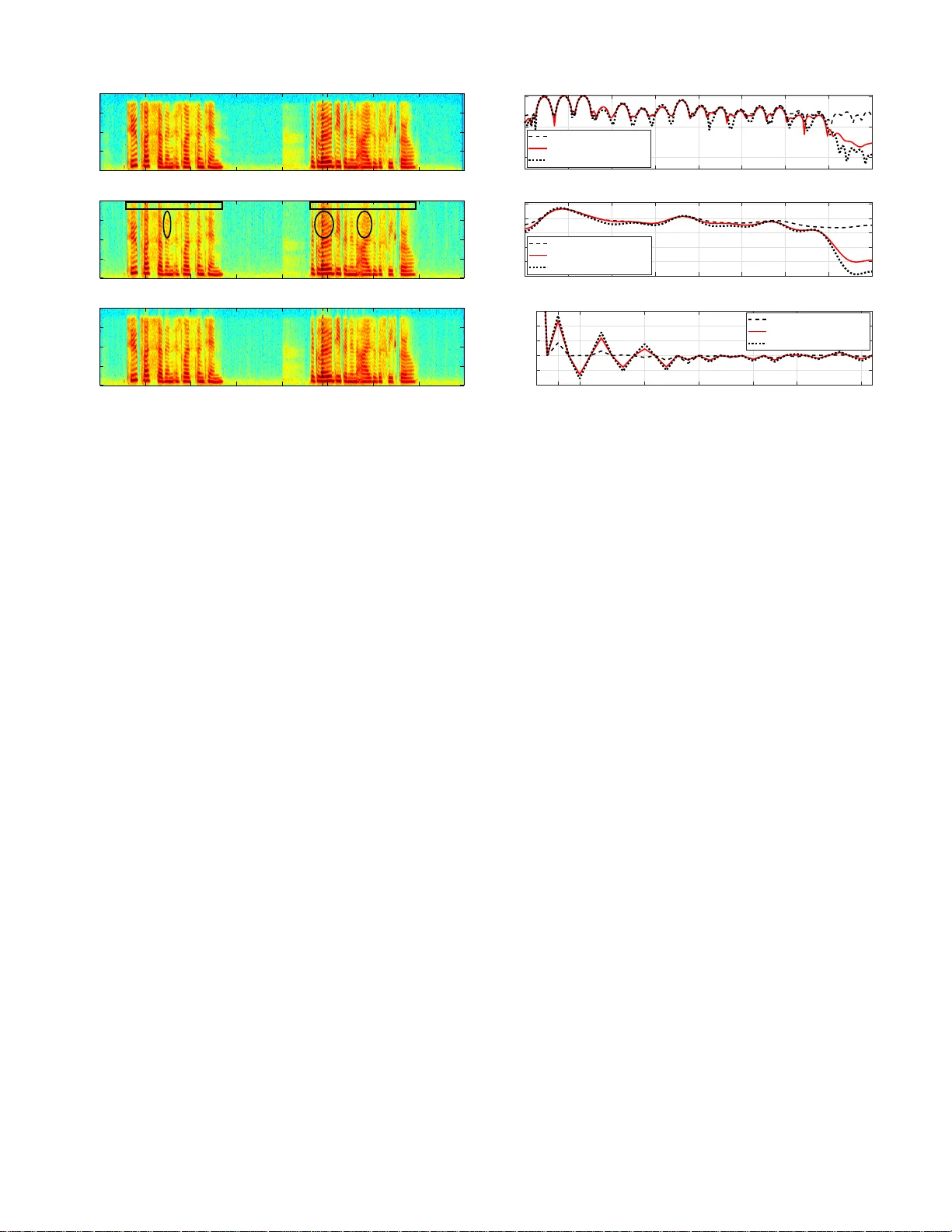

Enhancing coded speech that suffers from far-end acoustic background noise, quantization noise, and potentially transmission errors is a challenging task. In this work, we propose two postprocessing approaches applying convolutional neural networks (CNN…

Authors: Ziyue Zhao, Huijun Liu, Tim Fingscheidt