Adaptive and optimal online linear regression on $\ell^1$-balls

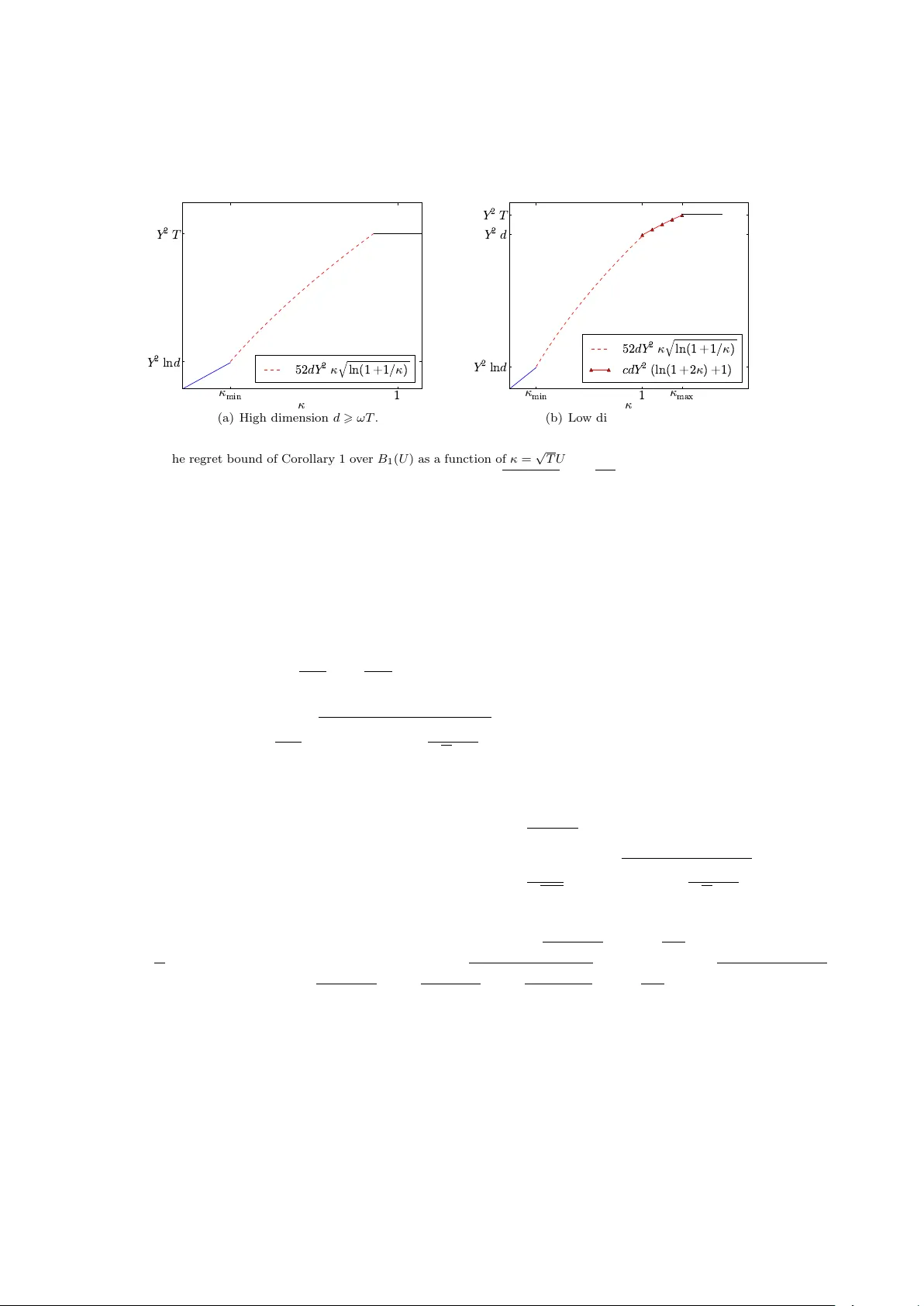

We consider the problem of online linear regression on individual sequences. The goal in this paper is for the forecaster to output sequential predictions which are, after $T$ time rounds, almost as good as the ones output by the best linear predicto…

Authors: Sebastien Gerchinovitz (DMA, CLASSIC), Jia Yuan Yu