Decision-Making Under Uncertainty in Research Synthesis: Designing for the Garden of Forking Paths

To make evidence-based recommendations to decision-makers, researchers conducting systematic reviews and meta-analyses must navigate a garden of forking paths: a series of analytical decision-points, each of which has the potential to influence findings. To identify challenges and opportunities related to designing systems to help researchers manage uncertainty around which of multiple analyses is best, we interviewed 11 professional researchers who conduct research synthesis to inform decision-making within three organizations. We conducted a qualitative analysis identifying 480 analytical decisions made by researchers throughout the scientific process. We present descriptions of current practices in applied research synthesis and corresponding design challenges: making it more feasible for researchers to try and compare analyses, shifting researchers’ attention from rationales for decisions to impacts on results, and supporting communication techniques that acknowledge decision-makers’ aversions to uncertainty. We identify opportunities to design systems which help researchers explore, reason about, and communicate uncertainty in decision-making about possible analyses in research synthesis.

💡 Research Summary

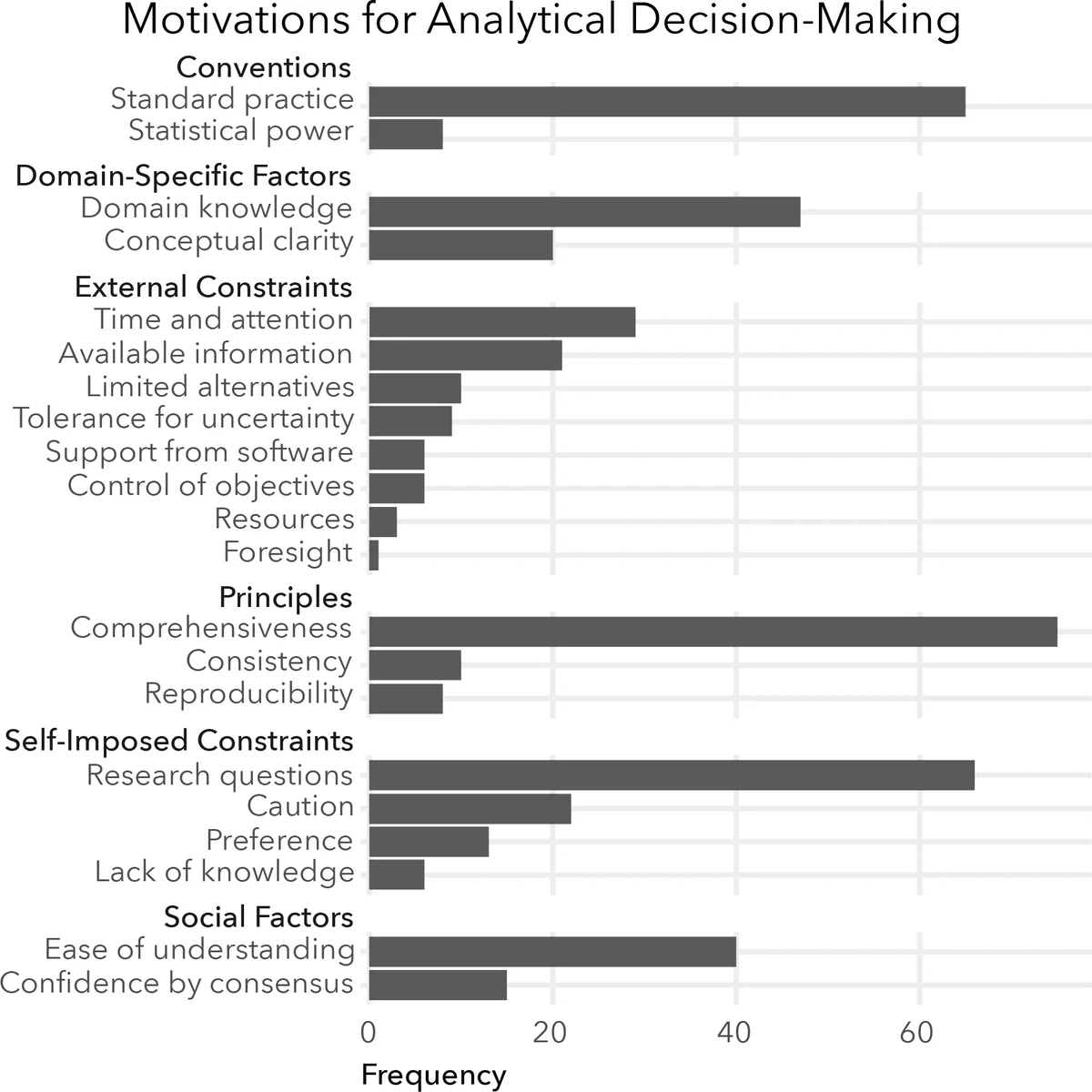

The paper investigates how professional researchers who conduct systematic reviews and meta‑analyses navigate the “garden of forking paths”—the myriad analytical decisions that can shape study outcomes. To uncover the practical challenges and design opportunities, the authors interviewed eleven researchers from three distinct organizations: the U.S. Navy, a large public‑university medical center, and a Veterans’ Affairs medical center. Across the interviews, they identified and coded 480 concrete analytical decisions made throughout the research synthesis workflow.

The authors adopt a decision‑theoretic lens and categorize each decision according to three strategies derived from Lipshitz and Strauss’s framework for handling uncertainty: Acknowledge, Reduce, and Suppress.

- Acknowledge decisions involve recognizing multiple possible analysis paths, broadening the scope, or explicitly documenting caveats to pre‑empt misinterpretation. Examples include searching gray literature, framing research questions broadly, and noting potential biases.

- Reduce decisions impose rules, conventions, or assumptions to narrow the choice set and increase reproducibility. Typical actions are applying predefined inclusion/exclusion criteria, selecting a specific statistical model, or following a standard data‑extraction protocol.

- Suppress decisions rely on intuition or are made under time pressure, effectively discarding alternatives without systematic justification. Participants described this as “guessing” or “going with my gut” when resources were limited.

Key findings reveal three major pain points. First, researchers rarely explore multiple analytical pathways; they usually commit to a single route because of time constraints, limited tooling, or institutional pressure. Second, there is a disconnect between the rationale for a decision (why it was made) and the impact of that decision on the final meta‑analytic results. While participants can articulate their reasoning, they seldom quantify how a particular choice shifts effect‑size estimates or heterogeneity statistics. Third, decision‑makers who consume the synthesized evidence (policy makers, clinicians, military leaders) display a strong aversion to uncertainty, prompting researchers to downplay or hide methodological ambiguities rather than openly discuss them.

The paper critiques existing research‑synthesis software (RevMan, EPPI‑Reviewer, Rayyan) for being modular and stage‑specific. These tools support routine tasks—screening, risk‑of‑bias assessment, forest‑plot generation—but they do not facilitate simultaneous exploration of alternative analyses, systematic capture of decision rationales linked to outcome changes, or communication of uncertainty to non‑technical audiences.

From these observations, the authors propose three design directions for next‑generation synthesis platforms:

- Exploratory Analysis Path Interface – a workspace where users can instantiate multiple “what‑if” scenarios (different inclusion criteria, statistical models, sensitivity analyses) in parallel, run them automatically, and compare results side‑by‑side through interactive dashboards.

- Decision‑Impact Visualization – a visual trace that connects each analytical choice to its downstream effect on key meta‑analytic metrics (e.g., pooled effect, confidence interval width, I²). This helps researchers see which decisions are truly consequential and which are benign.

- Uncertainty Communication Toolkit – templated narrative and visual elements (e.g., uncertainty ribbons, sensitivity‑analysis summaries) that can be inserted into final reports, making the degree of methodological uncertainty explicit for policy audiences.
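To make the first two directions concrete, the following is a minimal sketch of multi-path exploration, not the authors' system. It assumes a standard inverse-variance fixed-effect model with Cochran's Q and I², and every name and number in it (`STUDIES`, `DECISIONS`, `include_gray`, `min_n`, the effect sizes) is hypothetical and chosen only for illustration. It enumerates each combination of two analytical decisions, pools the selected studies, and then reports how far the pooled estimate moves when a single decision changes.

```python
from itertools import product
from math import sqrt

# Hypothetical study records (illustrative values, not real data):
# effect size, its variance, and attributes that inclusion criteria may use.
STUDIES = [
    {"id": "A", "effect": 0.30, "var": 0.04, "peer_reviewed": True, "n": 120},
    {"id": "B", "effect": 0.55, "var": 0.09, "peer_reviewed": True, "n": 40},
    {"id": "C", "effect": 0.10, "var": 0.02, "peer_reviewed": False, "n": 200},
    {"id": "D", "effect": 0.25, "var": 0.05, "peer_reviewed": True, "n": 80},
    {"id": "E", "effect": 0.70, "var": 0.12, "peer_reviewed": False, "n": 30},
]

# Hypothetical decision points and the options considered for each.
DECISIONS = {
    "include_gray": [True, False],  # include non-peer-reviewed studies?
    "min_n": [0, 50],               # minimum sample size for inclusion
}


def pool_fixed(studies):
    """Inverse-variance fixed-effect pooling with Cochran's Q and I^2 (%)."""
    weights = [1.0 / s["var"] for s in studies]
    total_w = sum(weights)
    pooled = sum(w * s["effect"] for w, s in zip(weights, studies)) / total_w
    se = sqrt(1.0 / total_w)
    q = sum(w * (s["effect"] - pooled) ** 2 for w, s in zip(weights, studies))
    df = len(studies) - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return {
        "k": len(studies),
        "pooled": pooled,
        "ci_low": pooled - 1.96 * se,
        "ci_high": pooled + 1.96 * se,
        "i2": i2,
    }


def select(studies, include_gray, min_n):
    """Apply one combination of inclusion criteria."""
    return [
        s for s in studies
        if (include_gray or s["peer_reviewed"]) and s["n"] >= min_n
    ]


def run_all_paths(studies, decisions):
    """Run the analysis once per combination of decision options."""
    names = list(decisions)
    results = []
    for values in product(*(decisions[name] for name in names)):
        path = dict(zip(names, values))
        subset = select(studies, **path)
        if subset:  # skip paths that exclude every study
            results.append({**path, **pool_fixed(subset)})
    return results


def decision_impact(results, name):
    """Spread of the mean pooled effect across the options of one decision."""
    by_option = {}
    for r in results:
        by_option.setdefault(r[name], []).append(r["pooled"])
    means = [sum(v) / len(v) for v in by_option.values()]
    return max(means) - min(means)


if __name__ == "__main__":
    results = run_all_paths(STUDIES, DECISIONS)
    for r in results:
        print(r)
    for name in DECISIONS:
        print(name, "shifts the pooled effect by", round(decision_impact(results, name), 3))
```

Comparing `decision_impact` across decisions is one simple way to rank choices by consequence. A real tool would likely also track interval width and heterogeneity, and would support random-effects models.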

The authors conclude that acknowledging uncertainty early (surveying the space of possible analyses) is essential for later reduction or suppression steps to be well‑grounded. By embedding support for multi‑path exploration, rationale‑impact linkage, and transparent uncertainty reporting, future systems can reduce hidden researcher degrees of freedom, improve reproducibility, and ultimately deliver more trustworthy evidence to decision‑makers. The paper calls for prototyping these ideas and evaluating them with both synthesis practitioners and the stakeholders who rely on their findings.
