L0TV: A Sparse Optimization Method for Impulse Noise Image Restoration

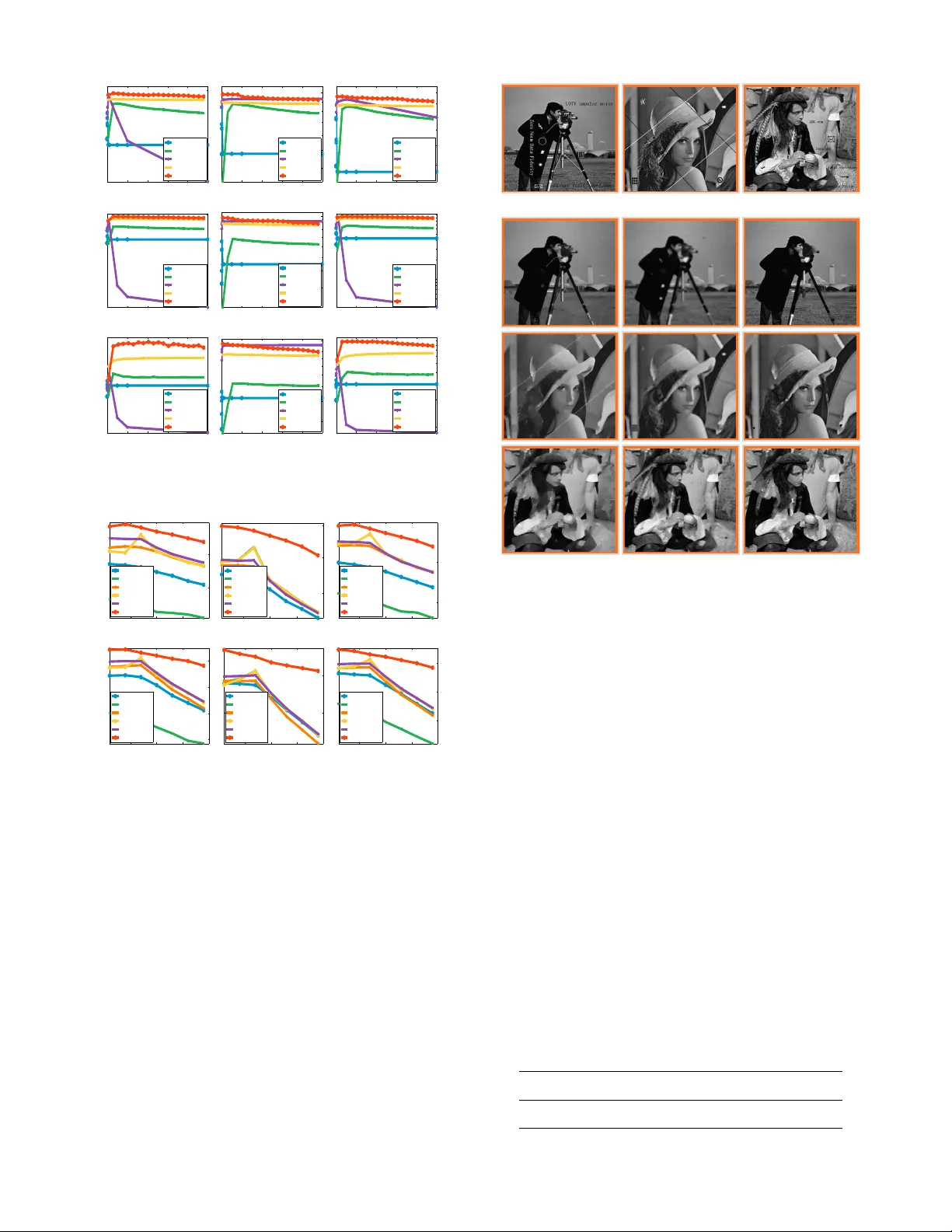

Total variation (TV) is an effective and popular prior model in regularization-based image processing. This paper focuses on TV for removing impulse noise in image restoration. This type of noise frequently arises in data ac…

Authors: Ganzhao Yuan, Bernard Ghanem