Autoencoder Based Architecture For Fast & Real Time Audio Style Transfer


Authors: Dhruv Ramani, Samarjit Karmakar, Anirban P

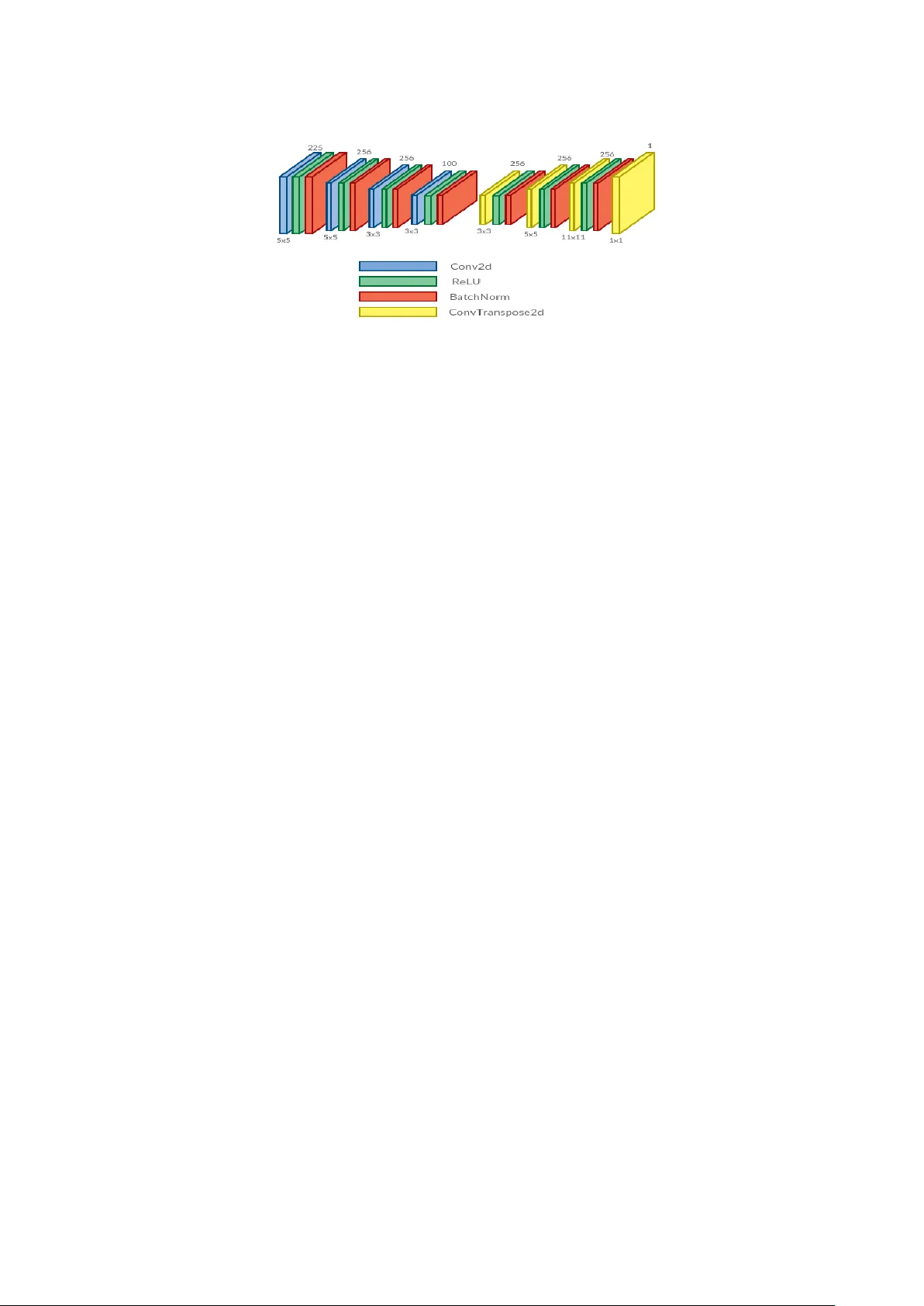

Dhruv Ramani, Samarjit Karmakar, Anirban Panda, Asad Ahmed, Pratham Tangri
Nevronas, National Institute of Technology, Warangal, India
{dhruvramani98, karmakar.samarjit, ahmed.asad19, prathamtangri2015}@gmail.com, anirban.panda@ieee.org

Abstract

Recently, there has been great interest in the field of audio style transfer, where a stylized audio is generated by imposing the style of a reference audio on the content of a target audio. We improve on the current approaches, which use neural networks to extract the content and the style of the audio signal, and propose a new autoencoder-based architecture for the task. This network generates a stylized audio for a content audio in a single forward pass. The proposed architecture proves advantageous in terms of both the quality of the audio produced and the time taken to train the network. The network is evaluated on speech signals to confirm the validity of our proposal.

1 Introduction

The task of artistic style transfer has been widely studied and implemented for generating stylized images. It provides the insight that the content and style representations of visual imagery are separable. Style transfer in images can be explained as imposing the style extracted from a reference image onto the content of a target image. The seminal works of Gatys et al. (2016) and Johnson et al. (2016) show the use of convolutional neural networks (CNNs) for the task. CNNs prove advantageous because of the representations they learn in their deeper layers. These deep features can be used to represent the content and the style of an image separately. This has led to a great increase in research on style transfer that uses neural networks to transfer the "style" of one image (e.g., a painting) to another (e.g., a photograph).
The task of audio style transfer has recently been gaining popularity as a research area because of its wide applications in audio editing and sound generation. The meaning of style and content for an audio signal differs from that for an image. The current consensus is that style refers to the speaker's identity, accent, and intonation, while content refers to the linguistic information encoded in the signal, such as phonemes and words. Over time, various methods have been proposed that apply models designed for image style transfer to audio. These methods convert the raw audio into a spectrogram and use neural networks to extract the required features. They then generate waveforms that match the high-level network activations of a content signal while simultaneously matching low-level statistics computed from lower-level activations of a style signal. In this paper, we propose a novel architecture that uses similar approaches to stylize audio. Unlike previously proposed methods, however, our architecture stylizes an audio in a single network pass, which makes it well suited to real-time audio style transfer. The network architecture is designed to ensure faster training and low computational usage. In this paper, we review previous methods proposed for artistic style transfer in images and audio, propose a new architecture, and analyze its performance.

2 Related Work

The work of Gatys et al. (2016) shows the advantageous use of convolutional neural networks (CNNs) for stylizing the target image. In it, the content of an image is conceptualized as the high-level representation obtained from the deeper layers of a CNN trained for image classification. The style representation of an image is taken as a linear combination of the Gram matrices of the feature maps of different layers of the same network.
Let the filter response tensor of the $l$-th layer of the network be $F^{l}(S)$, where $S$ is the style image, with each filter's response flattened into one row. The Gram matrix representation of this layer is then given by

$$\mathrm{Gram}\big(F^{l}(S)\big) = F^{l}(S)\, F^{l}(S)^{\top} \tag{1}$$

Figure 1: A framework for audio style transfer using a single convolutional autoencoder, trained on spectrograms of speech signals; a single style signal is used to generate stylized audio. The signal is pre-processed by applying the Short-Time Fourier Transform (STFT) to the raw input audio to generate an audio spectrogram. This spectrogram is passed through the transformation network to generate the stylized spectrogram. To retrieve the audio from the spectrogram, the Griffin-Lim algorithm is used to convert the stylized spectrogram into the required stylized audio.

The above method is slow and has to be iteratively optimized for each content-style pair to obtain a single stylized output. Johnson et al. (2016) proposed an architecture based on a transformation network. They train a transformation network on content images, imposing the style of a single style image to generate the stylized output. The network learns a mapping from content images to stylized images that is biased towards a single style image. The stylized outputs are generated in a single forward pass of the network; hence, this method has aptly been named fast neural style transfer, and it is extremely useful for real-time style transfer applications. The loss for the network takes into account measures of content and style similar to those defined by Gatys et al. (2016). A VGG network pre-trained for image classification is used to extract content and style from the respective images, and the content and style representations are obtained in the same way as described above. The loss function thus captures both high-level and low-level information of the image.
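As an illustration of Eq. (1), the minimal sketch below (plain Python, no deep learning framework assumed) flattens a filter response tensor of shape C × H × W into a C × HW matrix and forms its Gram matrix. The helper names `flatten_channels` and `gram_matrix` are hypothetical, not taken from the authors' code, and the normalization constants that some implementations apply are omitted.

```python
def flatten_channels(tensor):
    """Reshape a C x H x W nested list into C rows of H*W activations."""
    return [[value for row in channel for value in row] for channel in tensor]


def gram_matrix(features):
    """Return F F^T for features of shape (C, N): entry (i, j) is the inner
    product of the responses of filters i and j over all N positions."""
    return [[sum(a * b for a, b in zip(f_i, f_j)) for f_j in features]
            for f_i in features]


# Two 2x2 filter responses (toy values).
response = [[[1.0, 2.0], [0.0, 0.0]],
            [[0.0, 1.0], [3.0, 0.0]]]
print(gram_matrix(flatten_channels(response)))  # [[5.0, 2.0], [2.0, 10.0]]
```

Because every entry sums over positions, the Gram matrix records which filters respond together but not where they respond; permuting the positions leaves it unchanged, which is why it works as a texture (style) descriptor.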
The method also includes a total variation loss, which improves spatial smoothness in the output image. The total loss is given by

$$l_{\text{total}} = \alpha\, l_{\text{content}}(Y, C) + \beta\, l_{\text{style}}(Y, S) + \gamma\, l_{\text{tv}} \tag{2}$$

where $Y$ is the output of the transformation network, $C$ is the content image, and $S$ is the style image. This loss is minimized by backpropagation using a stochastic gradient-based optimizer.

The work of Ulyanov (2016) on audio style transfer used an optimization framework similar to that of Gatys et al. (2016) but, unlike Gatys et al., did not use a deep pre-trained neural network, opting instead for a shallow network (a single layer with 4096 random filters). The output spectrogram is initialized as random noise and then optimized until the loss between the features of the content and style audio and those of the output audio is minimized. However, the results of this approach were limited, and content and style were not preserved to a high degree. Grinstein et al. (2018) followed a similar approach; however, since the original audio itself was being modified, they considered only the style loss and omitted the content loss.

Audio style transfer has so far been explored with iterative optimization-based approaches using neural networks, which opens up scope for research into real-time approaches that generate the stylized audio in a single forward pass of a feed-forward neural network.

3 Problem Definition and Formulation

We aim to solve the problem of neural audio style transfer using a transformation network and a loss network. Given a content audio $A$ and a style audio $B$, our task is to find the audio $\hat{Z}$ that satisfies

$$\hat{Z} = \arg\min_{Z} \left( \alpha \lVert C(Z) - C(A) \rVert^{2} + \beta \lVert S(Z) - S(B) \rVert^{2} \right) \tag{3}$$

Figure 2: An autoencoder-based architecture for the transformation network and the loss network.
The number of filters and the kernel size for each layer appear above and below the layer blocks, respectively, in the diagram.

Here, $C$ represents the content of an audio and $S$ represents its style. $\alpha$ and $\beta$ are parameters that control the amount of content and style, respectively, required in the output audio $\hat{Z}$. A higher value of $\alpha$ relative to $\beta$ results in a predominance of content in $\hat{Z}$.

4 Our Proposed Architecture

The general framework we adopt for real-time audio style transfer is illustrated in Figure 1.

The raw speech signal contains all of its information in the time domain. The signal is pre-processed so that it can later be reconstructed using the Griffin-Lim algorithm (Griffin and Lim, 1984). First, the Short-Time Fourier Transform (STFT) is applied to bring the raw audio from the time domain to the frequency domain. This reveals which frequency ranges the signal emphasizes, and this relative emphasis shapes high-level features such as phonemes or emotion. The frequency-domain signal is then converted to the magnitude-spectral domain by taking the magnitude of the STFT. The magnitude is chosen over the phase because it carries richer information about high-level features and makes reconstruction of the signal easier. The spectrum is obtained by taking the logarithm of the magnitude, with time on the horizontal axis and frequency on the vertical axis. The logarithmic scale is used to bring out features related to the human perception of natural sound. The resulting spectrum of the speech signal is known as an audio spectrogram. It can be thought of as an image representation of the audio signal, except that a translation along the frequency axis can change high-level features such as emotion while leaving features such as the spoken words unchanged.

Mathematically, let $x(t)$ be the input raw audio signal.
The spectrogram of the signal $x(t)$ for a window function $w$ is given by

$$\mathrm{Spec}\{x(t), w\} = \log_{e}\big( \lvert \mathrm{STFT}\{x(t), w\} \rvert \big) \tag{4}$$

where the STFT, a function of the window position $\tau$ and the angular frequency $\omega$, is given by

$$\mathrm{STFT}\{x(t), w\} = \int_{-\infty}^{+\infty} x(t)\, w(t - \tau)\, e^{-j\omega t}\, dt \tag{5}$$

A network architecture similar to that proposed by Johnson et al. (2016) is adopted. A transformation network parameterized by $\lambda_{\text{trans}}$ is used to find a mapping from an input space of content audio spectrograms to an output space of stylized audio spectrograms. To calculate the loss, a loss network parameterized by $\lambda_{\text{loss}}$ is used to extract the content from the respective spectrograms. Subsequently, the same loss network is used to extract the style from the spectrograms. The loss network is pre-trained to extract a hierarchy of representations from the audio spectrogram, incorporating both low-level and high-level features.

Figure 3: A framework for training the spectrogram transformation network (STN) and calculating the loss used for backpropagation through STN.

4.1 Loss Network

We adopt an encoder-decoder architecture (Perez et al., 2017), illustrated in Figure 2, for the loss network. It consists of 4 convolutional layers and 4 transposed convolutional layers. A ReLU non-linearity followed by Batch Normalization is applied to all layers except the last. The network is treated as an autoencoder: it compresses the input spectrogram into a lower-dimensional latent space and then tries to reconstruct the same input. As a consequence, the encoder learns to capture high-level features of the input spectrogram and to represent them in lower dimensions, as what is called the latent embedding. The decoder reconstructs the spectrogram from the latent embedding. The network is optimized with backpropagation so that the reconstructed spectrogram is similar to the input.
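Equations (4) and (5) are continuous; in practice the STFT is computed on discrete, windowed frames. The sketch below is a minimal, dependency-free illustration of that pre-processing and of the Griffin-Lim reconstruction (Griffin and Lim, 1984) used to turn a (stylized) log-magnitude spectrogram back into audio. The Hann window, the frame length `n_fft`, the hop size, the `eps` offset inside the log, the zero-phase initialization, and the iteration count are all assumptions made for the example, since the paper does not report them; a real pipeline would use an FFT library rather than this O(n²) DFT.

```python
import cmath
import math


def hann(n_fft):
    """Periodic Hann window (assumed; the paper does not name its window)."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * n / n_fft) for n in range(n_fft)]


def dft(seg, inverse=False):
    """Naive O(n^2) DFT; inverse=True returns the inverse DFT (scaled by 1/n)."""
    n = len(seg)
    sign = 1 if inverse else -1
    twiddle = [cmath.exp(sign * 2j * math.pi * i / n) for i in range(n)]
    out = [sum(seg[t] * twiddle[(k * t) % n] for t in range(n)) for k in range(n)]
    return [v / n for v in out] if inverse else out


def stft(x, n_fft, hop):
    """Discrete counterpart of Eq. (5): DFT of each Hann-windowed frame."""
    w = hann(n_fft)
    return [dft([x[start + t] * w[t] for t in range(n_fft)])
            for start in range(0, len(x) - n_fft + 1, hop)]


def log_spectrogram(x, n_fft=32, hop=8, eps=1e-8):
    """Discrete counterpart of Eq. (4): natural log of |STFT|, keeping the
    n_fft // 2 + 1 non-negative-frequency bins (x is real)."""
    return [[math.log(abs(v) + eps) for v in frame[: n_fft // 2 + 1]]
            for frame in stft(x, n_fft, hop)]


def istft(frames, n_fft, hop, length):
    """Windowed overlap-add: the least-squares signal estimate of Griffin-Lim."""
    w = hann(n_fft)
    num, den = [0.0] * length, [0.0] * length
    for m, spectrum in enumerate(frames):
        seg = dft(spectrum, inverse=True)
        for t in range(n_fft):
            num[m * hop + t] += w[t] * seg[t].real
            den[m * hop + t] += w[t] ** 2
    return [a / d if d > 1e-12 else 0.0 for a, d in zip(num, den)]


def griffin_lim(log_spec, length, n_fft=32, hop=8, n_iter=30):
    """Estimate a waveform whose STFT magnitude matches exp(log_spec)."""
    # Undo the log and mirror the bins to get the full spectrum of a real signal.
    mags = []
    for row in log_spec:
        half = [math.exp(v) for v in row]
        mags.append(half + half[-2:0:-1])
    x = istft(mags, n_fft, hop, length)  # zero-phase initial estimate
    for _ in range(n_iter):
        # Keep the target magnitude, borrow the phase of the current estimate.
        frames = [[mag * cmath.exp(1j * cmath.phase(v)) for mag, v in zip(m_row, e_row)]
                  for m_row, e_row in zip(mags, stft(x, n_fft, hop))]
        x = istft(frames, n_fft, hop, length)
    return x


sr = 16000  # the experiments downsample VCTK to 16 kHz
x = [math.sin(2 * math.pi * 1000 * t / sr) for t in range(256)]
spec = log_spectrogram(x)       # 29 frames x 17 bins
y = griffin_lim(spec, len(x))   # waveform whose STFT magnitude approximates spec
```

The loop can simply run for a fixed number of iterations because each Griffin-Lim step does not increase the squared distance between the estimate's STFT magnitude and the target magnitude; only the phase is re-estimated, so the result is a waveform with approximately the requested spectrogram rather than an exact inverse.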
The activation map of the latent embedding is used to model the content of a spectrogram, and a linear combination of the Gram matrices of the feature activation maps of the first, second, and third convolutional layers is used to model its style.

4.2 Transformation Network

We use the same encoder-decoder architecture, illustrated in Figure 2, for the transformation network. Instead of training the transformation network from scratch, we initialize it with the pre-trained weights of the loss network. This approach is advantageous because it reuses the weights of a network that has already learned the distribution of audio spectrograms, so the content representation does not have to be re-learned. The network is only optimized to accommodate the low-level features of a single spectrogram of a given style, which need not be related to the samples used to train the network. This makes training comparatively faster while producing proper results in under one epoch of training. Using the same architecture also ensures that the input and output spectrograms have matching dimensions. A framework for training this network is illustrated in Figure 3.

An input spectrogram ($I$) is passed through the spectrogram transformation network (STN) to generate an output spectrogram ($Y$). The weights and biases of the loss network (LN) are frozen. LN is used to calculate the content of $Y$ ($\mathrm{Con}(Y)$), the style of $Y$ ($\mathrm{Sty}(Y)$), the content of $C$ ($\mathrm{Con}(C)$), and the style of $S$ ($\mathrm{Sty}(S)$). Since we want to preserve the content of the input spectrogram, $I$ and $C$ are the same spectrogram. The loss is calculated as

$$\mathrm{loss} = \alpha \times \mathrm{MSE}\big(\mathrm{Con}(Y), \mathrm{Con}(C)\big) + \beta \times \mathrm{MSE}\big(\mathrm{Sty}(Y), \mathrm{Sty}(S)\big) \tag{6}$$

This loss is minimized by optimizing the weights and biases of STN using backpropagation.

5 Experiments

To train the proposed architecture on speech signals, we use the publicly available CSTR VCTK Corpus (Yamagishi, 2012).
The VCTK corpus provides text labels for the speech and is widely used in text-to-speech synthesis. However, since we employ an autoencoder-based architecture, the text labels are not used. The corpus contains clean speech from 109 speakers, each of whom reads out about 400 sentences; most of the speakers have British accents. We downsampled the audio to 16 kHz.

To convert the raw audio signal into a spectrogram, we apply the Short-Time Fourier Transform (STFT) to bring the speech utterance into the frequency domain. We then take the log of the magnitude of the signal to convert it into the magnitude-spectral domain. The signal is now in the form of an audio spectrogram.

Figure 4: Audio spectrograms in the log magnitude-spectral domain: (a) the original content audio spectrogram; (b) the style audio spectrogram; (c) the stylized output audio spectrogram.

The loss network is trained on the spectrograms of audio from the VCTK corpus. We optimize its weights and biases using backpropagation to minimize the mean squared error between the reconstructed spectrogram and the input. The loss is minimized using the Adam optimizer (Kingma and Ba, 2015) with a learning rate of $10^{-3}$, a weight decay of $10^{-4}$, and a batch size of 24. The activations of this network are used to represent the content and the style of a signal separately.

While training the transformation network, the weights and biases of the loss network are kept frozen. We use the pre-trained weights of the loss network to initialize the transformation network for faster learning. The pre-processed signals from the corpus are used as content samples, and a single style sample is used for training the transformation network. As a result, the network is biased towards generating stylized output spectrograms of a single style for any content spectrogram.
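A minimal sketch of the loss in Eq. (6) is given below in plain Python, using the values of $\alpha$ and $\beta$ reported in this section as defaults. The arguments stand in for activations that the frozen loss network LN would produce, and the function names are hypothetical. Because Gram matrices of layers with different numbers of filters have different sizes and cannot be summed directly, the sketch assumes that the "linear combination" of Section 4.1 is taken over the per-layer Gram MSE terms, with equal layer weights; both choices are assumptions, not details confirmed by the paper.

```python
def flatten(x):
    """Flatten an arbitrarily nested list of numbers into a flat list."""
    if isinstance(x, (int, float)):
        return [x]
    return [v for item in x for v in flatten(item)]


def mse(a, b):
    """Mean squared error between two nested lists of the same shape."""
    fa, fb = flatten(a), flatten(b)
    if len(fa) != len(fb):
        raise ValueError("inputs must have the same number of elements")
    return sum((p - q) ** 2 for p, q in zip(fa, fb)) / len(fa)


def gram(features):
    """F F^T for one layer's activations flattened to (channels, positions)."""
    return [[sum(p * q for p, q in zip(f_i, f_j)) for f_j in features]
            for f_i in features]


def style_transfer_loss(y_latent, c_latent, y_layers, s_layers,
                        alpha=100.0, beta=1e4, layer_weights=(1.0, 1.0, 1.0)):
    """Eq. (6): alpha * MSE(Con(Y), Con(C)) + beta * MSE(Sty(Y), Sty(S)).

    y_latent, c_latent: latent-embedding activations of Y and C (content).
    y_layers, s_layers: activations of the first three conv layers for Y and S,
    each flattened to (channels, positions) (style).
    """
    content = mse(y_latent, c_latent)
    style = sum(w * mse(gram(fy), gram(fs))
                for w, fy, fs in zip(layer_weights, y_layers, s_layers))
    return alpha * content + beta * style


layers = [[[1.0, 0.0]], [[0.5, 0.5]], [[2.0]]]  # toy one-channel activations
print(style_transfer_loss([[1.0, 2.0]], [[1.0, 3.0]], layers, layers))  # 50.0
```

The content term compares latent activations position by position, whereas the style term compares only filter co-activation statistics, so it is insensitive to where in the spectrogram a given texture occurs.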
The loss in Equation 6 is minimized using the Adam optimizer (Kingma and Ba, 2015) with a learning rate of $10^{-3}$, $\beta_1 = 0.999$, and $\beta_2 = 0.99$. The value of $\alpha$ in the loss function is set to 100 and $\beta$ to $10^{4}$. These values were chosen after extensive experimentation. The models have been implemented using the PyTorch deep learning framework and trained on a single Nvidia GTX 1070 Ti GPU.

6 Results

The key finding is that the low-level statistical information of a style audio spectrogram, which is kept fixed while the spectrogram transformation network is trained, can be transferred at test time to a target spectrogram in a single forward pass of the network while the content of the target is preserved. Qualitative results, in the form of spectrograms of the content utterance, the style utterance, and the stylized output generated using this architecture, are shown in Figures 4a, 4b, and 4c. From the spectrograms, it can be observed that the content is retained, whereas the output exhibits very different properties such as pitch and accent. The texture of the style audio spectrogram is present in the output audio spectrogram, while the content, defined by the lightly shaded regions within the dark background of the original content audio spectrogram, is retained. To support this claim through further auditory analysis, the stylized output spectrogram can be converted into a raw audio signal by post-processing with the Griffin-Lim algorithm.

7 Conclusion

In this work, we propose a new architecture for real-time audio style transfer. We evaluated the proposed model on several speech utterances and found that it shows promising results. Since a single transformation network is trained to stylize content audio with a specific style audio, the style audio is not needed during testing.
In future work, further research and experimentation on the meaning and representation of "style" in audio should lead to the discovery of features that help generate various forms of stylized audio. Moreover, since the loss network is separate from the transformation network, other features, such as accent or music, could be incorporated into the loss calculation so that only specific features are transferred to the generated audio while others are preserved.

Acknowledgments

We would like to thank Innovation Garage, NIT Warangal, for their invaluable help in providing us with the computing capabilities that made our research possible.

References

[Gatys et al.2016] Leon A. Gatys, Alexander S. Ecker, Matthias Bethge. 2016. Image Style Transfer Using Convolutional Neural Networks. Proceedings of IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2016.
[Johnson et al.2016] Justin Johnson, Alexandre Alahi, Li Fei-Fei. 2016. Perceptual Losses for Real-Time Style Transfer and Super-Resolution. Proceedings of European Conference on Computer Vision (ECCV) 2016.
[Grinstein et al.2018] Eric Grinstein, Ngoc Duong, Alexey Ozerov, Patrick Pérez. 2018. Audio Style Transfer. Proceedings of IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP) 2018.
[Ulyanov et al.2016] Dmitry Ulyanov. 2016. Audio Texture Synthesis and Style Transfer. https://dmitryulyanov.github.io/audio-texture-synthesis-and-style-transfer/.
[Simonyan and Zisserman2015] Karen Simonyan, Andrew Zisserman. 2015. Very Deep Convolutional Networks for Large-Scale Image Recognition. Proceedings of International Conference on Learning Representations (ICLR) 2015.
[Deng et al.2009] Jia Deng, Wei Dong, Richard Socher, Li-Jia Li, Kai Li, Li Fei-Fei. 2009. ImageNet: A Large-Scale Hierarchical Image Database. Proceedings of IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2009.
[Kingma and Ba2015] Diederik P. Kingma, Jimmy Ba. 2015. Adam: A Method for Stochastic Optimization. Proceedings of International Conference on Learning Representations (ICLR) 2015.
[Yamagishi2012] Junichi Yamagishi. 2012. English Multi-Speaker Corpus for CSTR Voice Cloning Toolkit. http://homepages.inf.ed.ac.uk/jyamagis/page3/page58/page58.html.
[Perez et al.2017] Anthony Perez, Chris Proctor, Archa Jain. 2017. Style Transfer for Prosodic Speech. http://web.stanford.edu/class/cs224s/reports/Anthony Perez.pdf.
[Griffin and Lim1984] D. Griffin, Jae Lim. 1984. Signal Estimation from Modified Short-Time Fourier Transform. IEEE Transactions on Acoustics, Speech, and Signal Processing 1984.
