Characterizing the 2016 Russian IRA Influence Campaign

Until recently, social media were seen to promote democratic discourse on social and political issues. However, this powerful communication ecosystem has come under scrutiny for allowing hostile actors to exploit online discussions in an attempt to manipulate public opinion. A case in point is the ongoing U.S. Congress investigation of Russian interference in the 2016 U.S. election campaign, with Russia accused of, among other things, using trolls (malicious accounts created for the purpose of manipulation) and bots (automated accounts) to spread propaganda and politically biased information. In this study, we explore the effects of this manipulation campaign, taking a closer look at users who re-shared the posts produced on Twitter by the Russian troll accounts publicly disclosed by the U.S. Congress investigation. We collected a dataset of 13 million election-related posts shared on Twitter in 2016 by over a million distinct users. This dataset includes accounts associated with the identified Russian trolls as well as users sharing posts in the same time period on a variety of topics around the 2016 elections. We use label propagation to infer the users’ ideology based on the news sources they share. We are able to classify a large number of users as liberal or conservative with precision and recall above 84%. Conservative users who retweet Russian trolls produced significantly more tweets than liberal ones, about 8 times as many. Additionally, the trolls’ position in the retweet network is stable over time, unlike the users who retweet them, who form the core of the election-related retweet network by the end of 2016. Using state-of-the-art bot detection techniques, we estimate that about 5% and 11% of liberal and conservative users are bots, respectively.

💡 Research Summary

The paper provides a comprehensive quantitative investigation of the Russian Internet Research Agency (IRA) influence operation on Twitter during the 2016 U.S. presidential election. Using the list of 2,752 IRA‑identified troll accounts released by the U.S. Congress, the authors harvested a massive dataset through Crimson Hexagon, comprising 13,631,266 tweets and retweets posted in 2016 by over 1.08 million distinct users. Of the listed trolls, 1,148 accounts were present in the data, generating 1,032 original tweets and a total of 538,166 original messages.

To assess the political orientation of the broader user base, the study adopts a media‑source‑based labeling scheme. URLs embedded in tweets are expanded, then matched against partisan media lists compiled by AllSides and Media Bias/Fact Check. The authors construct two lists: 641 liberal‑leaning outlets and 398 conservative‑leaning outlets. Users are initially labeled liberal or conservative according to which set of outlets they link to more often; users with equal counts are discarded. This yields a seed set of 10,074 labeled users. A label‑propagation algorithm is then run on the directed retweet network (1,407,190 nodes, 4,874,786 edges), achieving precision and recall above 0.84 when checked against the seed labels and extending ideological labels to the majority of active participants.
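The two-stage labeling described above can be illustrated with a minimal sketch. It is not the authors' implementation: the domain sets are hypothetical placeholders for the AllSides and Media Bias/Fact Check lists, the function names `seed_label` and `propagate` are invented for illustration, and the propagation step assumes an undirected view of the retweet graph with a simple majority vote, which the summary does not specify.

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse

# Hypothetical stand-ins for the partisan outlet lists (not real outlets).
LIBERAL_DOMAINS = {"liberal-news.example"}
CONSERVATIVE_DOMAINS = {"conservative-news.example"}


def seed_label(urls):
    """Return 'L' or 'C' for a user's expanded URLs, or None on a tie."""
    counts = Counter()
    for url in urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host in LIBERAL_DOMAINS:
            counts["L"] += 1
        elif host in CONSERVATIVE_DOMAINS:
            counts["C"] += 1
    if counts["L"] == counts["C"]:
        return None  # equal counts (including zero): user is discarded
    return "L" if counts["L"] > counts["C"] else "C"


def propagate(edges, seeds, max_iters=20):
    """Spread seed labels by neighbor majority vote; seed labels stay fixed.

    Assumption: retweet edges are treated as undirected.
    """
    neighbors = defaultdict(set)
    for a, b in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)
    labels = dict(seeds)
    for _ in range(max_iters):
        updates = {}
        for node in sorted(neighbors):
            if node in seeds:
                continue
            votes = Counter(labels[n] for n in neighbors[node] if n in labels)
            ranked = votes.most_common(2)
            if not ranked:
                continue  # no labeled neighbor yet
            if len(ranked) == 2 and ranked[0][1] == ranked[1][1]:
                continue  # tied vote: keep the current state
            if labels.get(node) != ranked[0][0]:
                updates[node] = ranked[0][0]
        if not updates:
            break  # converged
        labels.update(updates)
    return labels


lib = "https://www.liberal-news.example/story"
con = "https://conservative-news.example/story"
shared = {"u1": [lib, lib, con], "u2": [con], "u3": [lib, con]}
seeds = {}
for user, urls in shared.items():
    label = seed_label(urls)
    if label is not None:
        seeds[user] = label
retweets = [("a", "u1"), ("b", "a"), ("c", "u2"), ("d", "c")]
inferred = propagate(retweets, seeds)
```

In this toy run, `u3` is dropped from the seed set because of its tie, and labels reach `b` and `d` only in the second pass, once their neighbors `a` and `c` have been labeled.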

Network analysis reveals that troll accounts occupy a stable, high‑degree position throughout the year; their in‑degree and out‑degree remain consistently large, indicating they are persistent sources of content. However, the users who retweet trolls evolve over time, coalescing into a dense core by the end of 2016. This suggests that while trolls act as content generators, the amplification of their messages is driven primarily by ordinary users who become the central diffusion agents.
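One common way to make the notion of a network "core" concrete is k-core decomposition. The summary does not say which method the authors used, so the sketch below is only an assumption about how such an analysis could be set up. The function name `core_numbers` is hypothetical, and the graph is treated as undirected and simple.

```python
from collections import defaultdict


def core_numbers(edges):
    """Return each node's core number by repeatedly peeling the lowest-degree node."""
    adj = defaultdict(set)
    for a, b in edges:
        if a != b:  # ignore self-loops
            adj[a].add(b)
            adj[b].add(a)
    degree = {node: len(nbrs) for node, nbrs in adj.items()}
    remaining = set(adj)
    core = {}
    k = 0
    while remaining:
        # Pick the remaining node with the smallest current degree
        # (ties broken by node name, so names must be comparable).
        node = min(remaining, key=lambda n: (degree[n], n))
        k = max(k, degree[node])
        core[node] = k
        remaining.remove(node)
        for nbr in adj[node]:
            if nbr in remaining:
                degree[nbr] -= 1
    return core


# Toy graph: a triangle (a, b, c) with one peripheral node d attached to a.
toy_edges = [("a", "b"), ("b", "c"), ("a", "c"), ("a", "d")]
cores = core_numbers(toy_edges)
```

Running this on cumulative monthly snapshots of the retweet graph, and comparing the core numbers of trolls with those of the users who retweet them, would be one way to trace how each group's position changes over the year. The linear scan for the minimum keeps the sketch short; a bucket-based implementation would be needed at the scale of the full network.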

Bot detection is performed with state‑of‑the‑art classifiers (e.g., Botometer). Approximately 11% of conservative users and 5% of liberal users are identified as bots, indicating a higher prevalence of automated accounts on the conservative side. The authors also find that conservative users who retweet trolls produced about eight times as many tweets as liberal users who do so, amplifying the partisan imbalance.
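Given per-user bot scores and ideology labels, the per-group bot share is a thresholded proportion. The sketch below is illustrative only: the scores are made-up toy values, the function name `bot_share` is hypothetical, and the 0.5 cutoff is an assumption, since the summary does not report the threshold the authors applied.

```python
def bot_share(scores, labels, threshold=0.5):
    """Fraction of users in each ideology group whose bot score meets the threshold."""
    totals = {}
    bots = {}
    for user, score in scores.items():
        group = labels.get(user)
        if group is None:
            continue  # skip users without an inferred ideology
        totals[group] = totals.get(group, 0) + 1
        if score >= threshold:
            bots[group] = bots.get(group, 0) + 1
    return {group: bots.get(group, 0) / totals[group] for group in totals}


# Toy inputs (not real data): bot scores in [0, 1] and ideology labels.
toy_scores = {"u1": 0.9, "u2": 0.1, "u3": 0.2, "u4": 0.7, "u5": 0.3, "u6": 0.4}
toy_labels = {"u1": "C", "u2": "C", "u3": "L", "u4": "L", "u5": "L", "u6": "L"}
shares = bot_share(toy_scores, toy_labels)
```

The resulting shares are sensitive to the chosen cutoff, so any comparison between groups should use the same threshold for both.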

Content analysis employs topic modeling and keyword frequency counts. Conservative trolls focus on themes such as refugees, terrorism, and Islam, and frequently mention prominent political figures (Trump, Clinton, Obama). Liberal trolls concentrate on school shootings, police, and related social‑justice topics, while still mentioning the same political figures. This thematic split points to targeted framing aligned with each side’s salient issues.
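The keyword-frequency part of such an analysis can be sketched in a few lines. This is a simplified illustration, not the authors' pipeline: the stopword list is a tiny hypothetical one, the tokenizer is a bare regular expression, and the function name `top_terms` and the example tweets are invented.

```python
import re
from collections import Counter

# Tiny illustrative stopword list; a real analysis would use a fuller one.
STOPWORDS = {"the", "and", "for", "with", "that", "this", "are", "was"}


def top_terms(tweets, n=10):
    """Return the n most frequent non-stopword terms across a group's tweets."""
    counts = Counter()
    for text in tweets:
        text = re.sub(r"https?://\S+", " ", text.lower())  # drop URLs
        tokens = re.findall(r"[a-z']+", text)
        counts.update(t for t in tokens if len(t) > 2 and t not in STOPWORDS)
    return counts.most_common(n)


# Invented example tweets, one list per troll group in practice.
example_tweets = [
    "Refugees and terrorism https://t.co/x",
    "terrorism again",
    "the refugees",
]
top = top_terms(example_tweets, n=2)
```

Calling `top_terms` separately on the tweets of each troll group and comparing the resulting lists gives a rough view of the thematic split described above.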

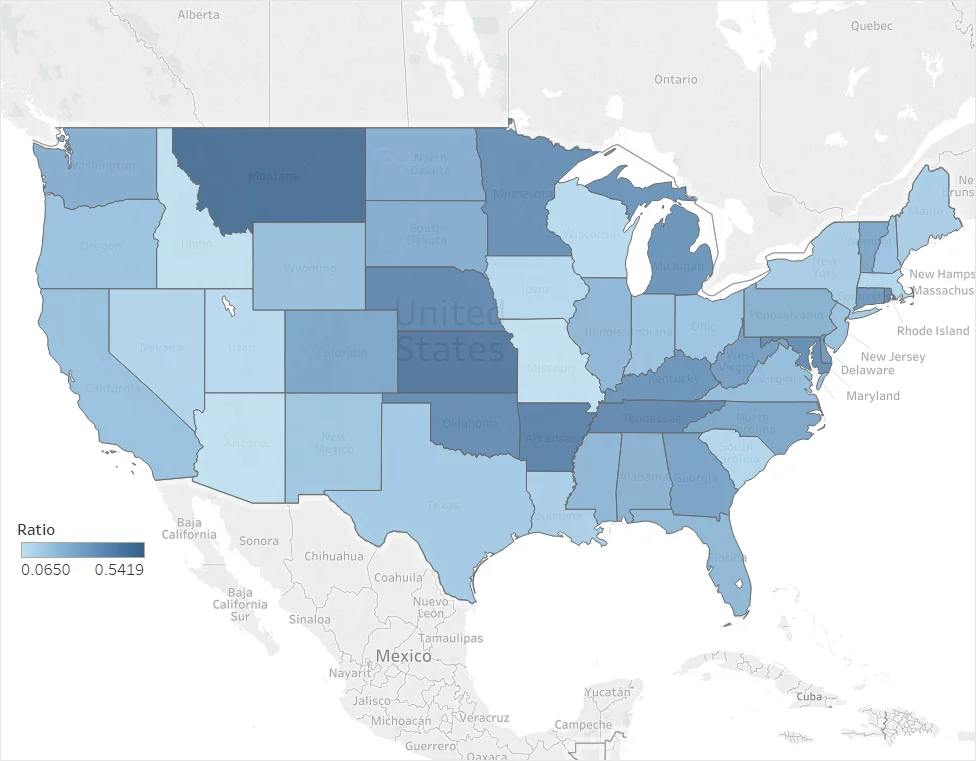

Geospatial analysis maps tweet origins to U.S. states. Overall tweet volume correlates with state population, but certain states (e.g., Florida, Texas, California) show disproportionate engagement with troll content, hinting at localized amplification that could have affected swing‑state outcomes.
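The comparison between tweet volume and state population can be framed as a correlation plus a per-capita rate. The sketch below uses placeholder states and invented numbers, not figures from the paper, and the function names `pearson` and `per_capita` are hypothetical.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def per_capita(counts, population, per=100_000):
    """Counts per `per` residents for each state."""
    return {state: counts[state] / population[state] * per for state in counts}


# Invented toy values for three placeholder states.
toy_population = {"A": 1_000_000, "B": 2_000_000, "C": 4_000_000}
toy_troll_retweets = {"A": 50, "B": 100, "C": 400}

states = sorted(toy_population)
r = pearson(
    [toy_population[s] for s in states],
    [toy_troll_retweets[s] for s in states],
)
rates = per_capita(toy_troll_retweets, toy_population)
```

A high correlation indicates that volume mostly tracks population, while a per-capita rate well above the others (state `C` in this toy data) flags engagement that population alone does not explain.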

The paper’s contributions are fourfold: (1) a novel measurement of manipulated‑content consumption via retweet dynamics; (2) a high‑accuracy, network‑based method for inferring user ideology; (3) evidence that bots and partisan users jointly shape the diffusion of troll messages, with conservatives playing a dominant role; and (4) a multi‑dimensional (temporal, textual, geographic) portrait of the IRA campaign’s reach. The findings underscore the necessity for platform‑level interventions, improved bot detection, and policy frameworks aimed at mitigating foreign influence operations in democratic elections.
