Lightweight and Optimized Sound Source Localization and Tracking Methods for Open and Closed Microphone Array Configurations

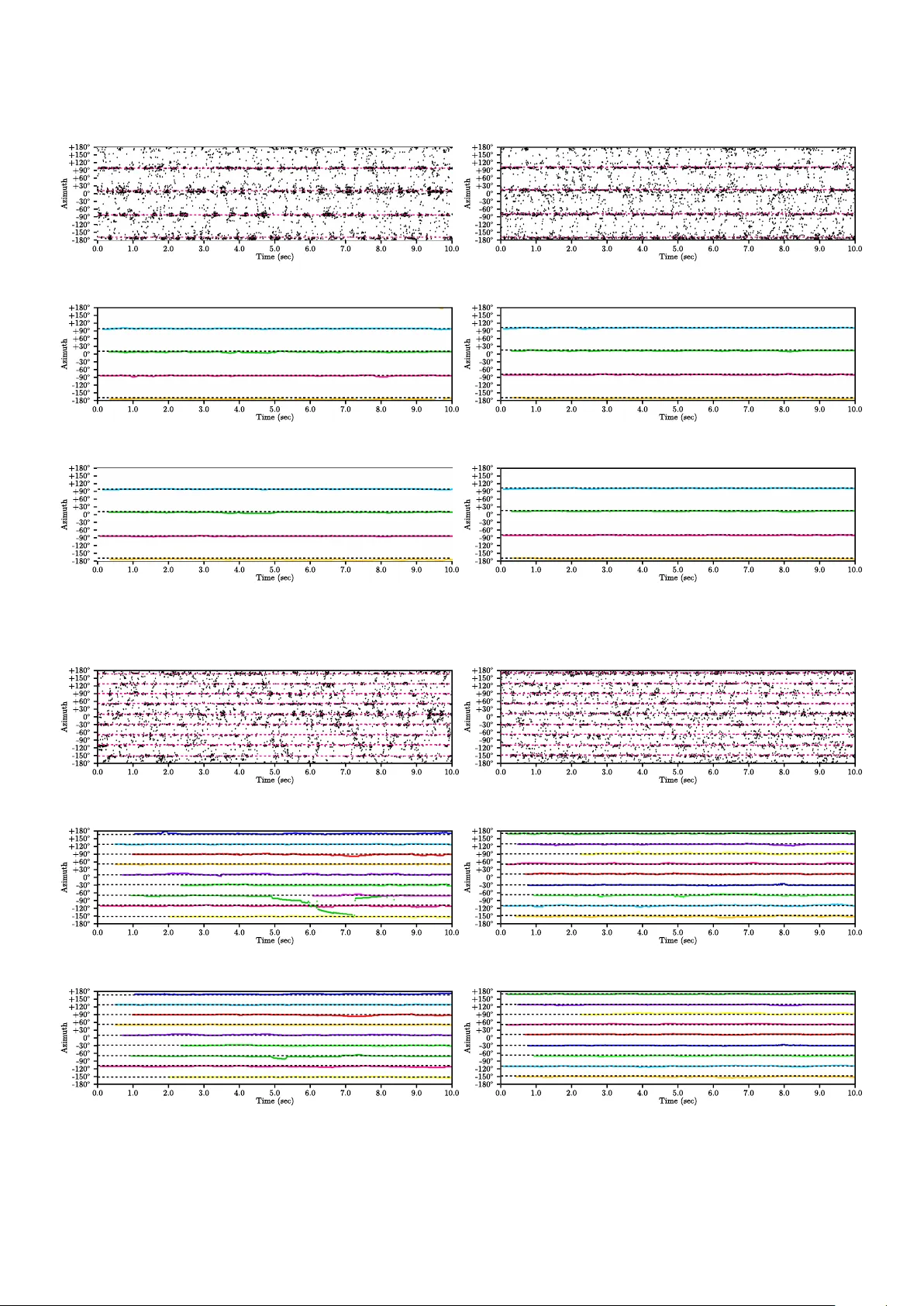

Human-robot interaction in natural settings requires filtering out the different sound sources present in the environment. This ability usually relies on microphone arrays to localize, track and separate sound sources online. Multi-microphone…

Authors: Francois Grondin, Francois Michaud