Enabling Multiple Access for Non-Line-of-Sight Light-to-Camera Communications

Light-to-Camera Communications (LCC) have emerged as a new wireless communication technology with great potential to benefit a broad range of applications. However, the existing LCC systems either require cameras directly facing to the lights or can …

Authors: Fan Yang, Shining Li, Zhe Yang

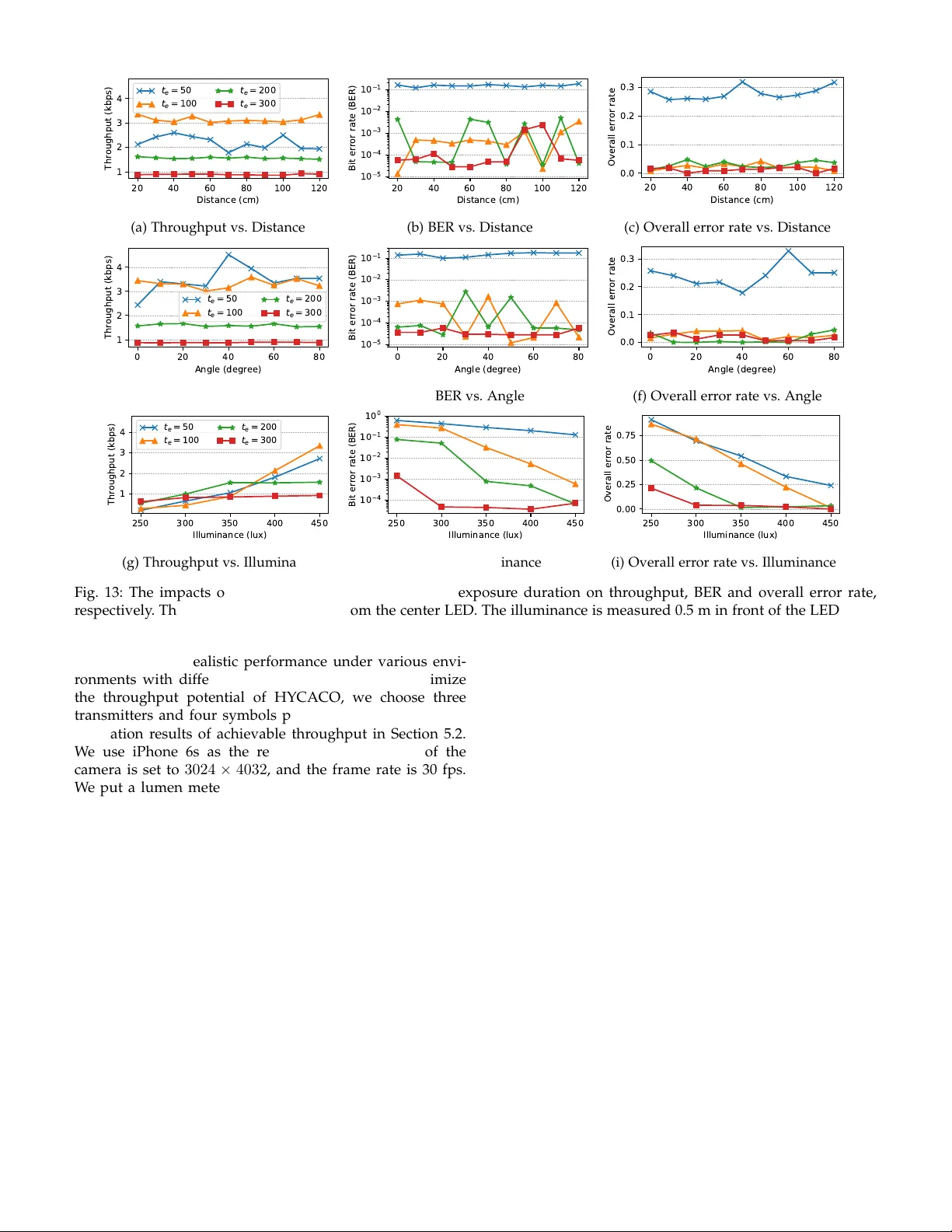

Fan Yang, Shining Li, Zhe Yang, Member, IEEE, Tao Gu, Senior Member, IEEE, and Cheng Qian

Abstract: Light-to-Camera Communications (LCC) have emerged as a new wireless communication technology with great potential to benefit a broad range of applications. However, existing LCC systems either require the camera to directly face the lights or can only communicate over a single link, resulting in low throughput and fragility to ambient illuminant interference. We present HYCACO, a novel LCC system that enables multiple light-emitting diodes (LEDs) to communicate with an unaltered camera via non-line-of-sight (NLoS) links. Unlike other NLoS LCC systems, the proposed scheme is resilient to complex indoor luminous environments. HYCACO decodes the messages by exploring the mixed reflected optical signals transmitted from multiple LEDs. By further exploiting the rolling shutter mechanism, we present a strategy for selecting the optimal optical frequencies and camera exposure duration to achieve the best performance. We built a hardware prototype to demonstrate the efficiency of the proposed scheme under different application scenarios. The experimental results show that the system throughput reaches 4.5 kbps on an iPhone 6s with three transmitters. With its robustness, improved system throughput, and ease of use, HYCACO has great potential for a wide range of applications such as advertising, object tagging, and device certification.

Index Terms: Visible Light Communication, Camera Communication, Rolling Shutter, LED, NLoS, Smartphone.

1 INTRODUCTION

In recent years, rolling-shutter-based visible light communication (VLC) [1] has become a promising technique, which uses the Complementary Metal-Oxide-Semiconductor (CMOS) sensor within a digital camera for data reception.
It enables the camera to sample optical signals at a much faster rate than the frame rate, and it can use pervasively deployed commercial off-the-shelf (COTS) LEDs as transmitters. Since most COTS smartphones have built-in CMOS cameras, LCC has shown great potential in short-range wireless communications. Furthermore, LCC has some unique features, e.g., it provides a natural way to visually associate the received information with the transmitter's identity, which can be used in indoor localization, augmented reality, etc.

• F. Yang, C. Qian, S. Li and Z. Yang are with the School of Computer Science and Engineering, Northwestern Polytechnical University, Xi'an, China, 710129. E-mail: {craftsman, infinite}@mail.nwpu.edu.cn, {lishining, zyang}@nwpu.edu.cn.

• T. Gu is with the School of Computer Science and IT, RMIT University, Australia. E-mail: tao.gu@rmit.edu.au

LCC can be classified into two categories. One is line-of-sight (LoS), i.e., the camera directly faces the LED [2]. The other is non-line-of-sight (NLoS), i.e., the camera observes the reflected optical signals [3], as illustrated in Fig. 1. However, each kind has hit its throughput bottleneck. The throughput depends on several factors, including the signal frequency and the camera features, and most importantly on the region of interest (RoI) [4]. NLoS LCC typically has higher throughput because the optical signals occupy the whole image, while over a LoS link the optical signals occupy only part of the image unless the camera is very close to the LED. However, the received light strength of an NLoS link is attenuated significantly by diffuse reflection, which increases the demodulation error. Moreover, optical signals from other illuminants are mixed by the reflector, which causes significant interference.
Therefore, it is easy to enable multiple access for LoS LCC at the cost of lower throughput, while NLoS LCC offers higher throughput but is hard to extend to multiple access.

Enabling multiple access for NLoS LCC is challenging for four main reasons: (i) The information transmitted from each LED is hard to extract because the entire image is filled with the mixed reflected optical signals. (ii) The captured optical signals are mixed with the environment background and with image noise introduced by the camera hardware. In LCC, the images are usually captured with high ISOs and short exposure durations, which causes substantial random noise, including chroma noise and luminance noise. (iii) Paramount to the practical implementation of LCC is ensuring high-quality lighting that is satisfactory to humans, which limits the design space of the optical waveform. (iv) LCC is a one-way communication link, over which the transmitter cannot get any feedback from the receiver. This brings intrinsic difficulties to implementing unsynchronized communication in real time.

Fig. 1: Comparison between (a) LoS and (b) NLoS light-to-camera communications.

In this paper, we propose a HYbrid light-to-CAmera COmmunication (HYCACO) system. Different from many existing approaches, HYCACO works in more realistic indoor luminous environments (i.e., multiple light sources and natural illumination), where multiple LEDs are coordinated to emit square wave signals simultaneously. We embed the information in the phase of the square waves, which is called phase-shift keying (PSK). Different from conventional PSK, we propose a new scheme, hybrid PSK (HPSK), where the waveforms emitted from the LEDs adopt different orders of PSK. Therefore, the received signals can be considered as a combination of square waves with different properties, e.g., phase and/or frequency. In particular, when a CMOS camera obtains an image with
respect to the reflector illuminated by the LEDs, it contains bright and dark bands corresponding to the mixed optical signals. To extract information from the mixed signals, we propose a SUperimposed Rect-wave Division (SURD) algorithm. We further exploit the rolling shutter effect and work out the relationship between the camera settings and the features of the optical signals. We propose a solution to recover the optical signal by capturing two images with different exposure durations but the same exposure value (EV). We use a simple preamble to help sample hundreds of judging points from the millions of pixels in the image. Thus, HYCACO has high computational efficiency and can provide real-time responses on COTS smartphones. In addition, we address the symbol loss problem, which is caused by the unsynchronized communication channel, with Luby transform codes (LT codes) [5].

We have implemented a prototype system to evaluate the performance of HYCACO. We employ an Arduino UNO board as the modulator to control up to 7 LEDs as the transmitters. At the receiver end, we develop an iOS application and an Android application to verify the performance on an iPhone 6s and a Nexus 5, respectively. We conduct extensive experiments under various camera settings and environments to evaluate the system performance comprehensively. The experimental results demonstrate the efficacy of the proposed scheme.

In summary, this paper makes the following contributions:

• To our knowledge, HYCACO is the first NLoS LCC system which enables multiple access. We have implemented a prototype system and tested the performance of HYCACO in different scenarios. The extensive experimental results demonstrate that HYCACO can achieve a throughput of 4.5 kbps. This is a significant improvement compared with other state-of-the-art LCC systems.

• We propose a new modulation scheme, HPSK, to transmit messages from multiple LEDs, and also propose the corresponding algorithm, SURD, to demodulate the signals.

• We present, for the first time, the relationship between the exposure settings and the features of optical signals, and derive a strategy for selecting the optimal optical frequency and camera exposure duration.

• We propose a simple solution to separate the captured optical signals from the complex image background, which improves the robustness of LCC systems.

Fig. 2: The optical signal is a square wave. $t_e$ is the exposure duration of the captured image and $t_r$ is the readout duration of the camera.

2 PRELIMINARY

Before presenting the proposed HYCACO scheme, we introduce the rolling shutter mechanism and the image capture model of mainstream CMOS cameras. Then we describe the characteristics of unsynchronized communication in LCC.

2.1 Image Capture Model

Electronic rolling shutters are employed by most smartphones with built-in CMOS cameras. When a CMOS camera captures photos or videos, it does not expose every pixel of the entire image at once. Instead, the pixel array of the sensor is triggered row by row (shown in Fig. 2). The exposure of each pixel row is shifted by a fixed amount, the readout duration. This means that a pulsing light illuminates only some rows of pixels at a time, resulting in alternating dark and bright bands in the image. We can detect the frequency of these bands in the image and thus infer the frequency of the pulsing light. Therefore, the rolling shutter acts as a sampling process on the optical signal, with a much higher sampling rate than the frame rate of the camera.
As a result, this effect allows us to record the flicker pattern by taking spatio-temporal images with an unaltered digital camera, where different patterns can be used to represent different symbols.

Conceptually, the image captured by the camera can be thought of as having two layers: the texture layer and the signal layer. HYCACO consists of several ON-OFF keying (OOK) modulated LEDs as the transmitters and a CMOS sensor as the receiver. The luminance emitted from an LED is $L$, and we use the input signal $s_i(t)$ to control the state of the LED, where $i$ denotes the serial number of the LED. If $s_i(t) = 1$, the LED is ON; if $s_i(t) = 0$, the LED is OFF. Thus, the illuminance of light falling on the camera sensor is

$$e(t) = E + \sum_i H_i(0)\, s_i(t)\, L, \tag{1}$$

where $H_i(0)$ is the channel DC gain [6] and $E$ is the contribution of the non-flickering lights (such as sunlight).

Fig. 3: Mixed symbol and symbol loss due to unsynchronization.

Let $r(x, y, t)$ be the radiance incident at sensor pixel $(x, y)$ at time $t$. The radiance $r(x, y, t)$ can be factorized into spatial and temporal components:

$$r(x, y, t) = l(x, y)\, e(t), \tag{2}$$

where $l(x, y)$ is the amplitude of the temporal radiance profile at pixel $(x, y)$ and is determined by the image background. The measured brightness value of a pixel at $(x, y)$ in the image is [7]

$$i(x, y) = k\, l(x, y) \int_{-\infty}^{\infty} f(t_y - t)\, e(t)\, dt + n(x, y) = k\, \underbrace{l(x, y)}_{\text{texture layer}} \times \underbrace{(f * e)(t_y)}_{\text{signal layer}} + n(x, y), \tag{3}$$

where $k$ is the sensor gain, $t_y$ is the temporal shift for a pixel in row $y$ with $t_y = y\, t_r$ (as illustrated in Fig. 2), and $t_r$ is the readout duration. $f(t)$ is the shutter function: if the pixels in row $y$ capture light at time $t$, $f(t) = 1$; otherwise, $f(t) = 0$. $n(x, y)$ is the image noise.
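The signal layer of Equation (3) can be illustrated with a small simulation. The following is a minimal sketch, not the authors' implementation: it assumes a box-shaped shutter function, a single LED with a 50% duty cycle, and hypothetical function names (`signal_layer`, `estimate_frequency`). The readout duration of 6.45 µs and the 3024 rows are the iPhone 6s values reported later in Table 1; the ambient level 0.2 is an arbitrary choice.

```python
import math

def signal_layer(freq_hz, t_r, t_e, rows, ambient=0.2, steps=32):
    """Box-shutter model of (f * e)(t_y): mean illuminance during each row's exposure."""
    layer = []
    for y in range(rows):
        t_y = y * t_r                          # temporal shift of row y
        acc = 0.0
        for j in range(steps):                 # midpoint rule over the exposure window
            t = t_y + (j + 0.5) * t_e / steps
            led_on = math.floor(2.0 * freq_hz * t) % 2 == 0   # 50% duty square wave
            acc += ambient + (1.0 if led_on else 0.0)
        layer.append(acc / steps)
    return layer

def estimate_frequency(layer, t_r):
    """Infer the flicker frequency from the row spacing of dark-to-bright transitions."""
    mid = (max(layer) + min(layer)) / 2.0
    rising = [y for y in range(1, len(layer)) if layer[y - 1] < mid <= layer[y]]
    period_rows = (rising[-1] - rising[0]) / (len(rising) - 1)
    return 1.0 / (period_rows * t_r)

layer = signal_layer(freq_hz=1000.0, t_r=6.45e-6, t_e=100e-6, rows=3024)
print(round(estimate_frequency(layer, 6.45e-6)))   # close to 1000
```

Each row acts as one sample taken every $t_r$, so the band spacing in rows directly encodes the period of the pulsing light. The texture $l(y)$, the gain $k$, and the noise term are left out of this sketch to keep the band pattern easy to inspect.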
Since the signal layer is unidimensional, we perform the analysis on vertical sum images, that is, $i(y) = \sum_x i(x, y)$, $l(y) = \sum_x l(x, y)$ and $n(y) = \sum_x n(x, y)$. Then, Equation (3) can be written as

$$i(y) = k\, l(y) \times (f * e)(t_y) + n(y). \tag{4}$$

2.2 Unsynchronized LCC Channel

The discontinuous reception of a camera and the diversity of cameras lead to an unsynchronized LCC channel. Such an unsynchronized communication channel is very likely to experience the mixed symbol frame and symbol loss problems. RollingLight [2] demonstrates these problems under different unsynchronized scenarios. The optical signals emitted from the LEDs are continuous, but the camera receives the signals frame by frame. A camera does not expose during the whole frame duration. There exists a time gap between the end of the exposure of the last row and the start of the next frame, as illustrated in Fig. 2. For example, as shown in Fig. 3, there exists a time gap between the end of exposure in frame $F_1$ and the start of exposure in frame $F_2$. Frame $F_1$ receives a mixture of symbols $S_1$, $S_2$, $S_3$, and part of $S_4$. When the gap is longer than the symbol duration, some symbols may be completely lost, e.g., symbol $S_5$. In this case, symbols $S_4$, $S_5$, and $S_6$ will not be extracted by the receiver. Different frame rates cause different levels of unsynchronization, which lead to different symbol loss ratios [8].

Fig. 4: The brightnesses of the bands change gradually due to the rolling shutter effect. (a) Captured images and (b) simulated signal layers (row $y$ vs. amplitude, with sampling points) for $t_i > t_e$, $t_i = t_e$, and $t_i < t_e$. $t_i$ is the minimum pulse duration, and $t_e$ is the exposure duration.

3 CARRIER FREQUENCY SELECTION

In this section, we study the lower and upper frequency limits of the transmissions, and how the exposure duration impacts the performance of LCC systems.
This allows us to choose the optimal optical frequencies and exposure duration for HYCACO.

3.1 Lighting Requirements

Without loss of generality, we assume that LCC is used for both lighting and communication. Hence, the LEDs used in the LCC system should meet the lighting requirements of humans. When the carrier frequency is lower than the temporal resolution of the eye, called the critical flicker frequency (CFF), flicker is perceived [9]. Typically, human eyes can resolve luminance flicker up to 50 Hz and chromatic flicker up to 25 Hz [10]. Although the human eye has a cutoff frequency in the vicinity of 50 Hz, some studies have shown that long-term exposure to higher-frequency (unintentional) flickering (in the 70 to 160 Hz range) can also cause malaise, headaches, and visual impairment [11]. Besides, the perceived brightness of an LED varies proportionally to the average duty cycle of its flicker pattern. Therefore, the duty cycle within one CFF cycle should remain unchanged as well. We set the lower frequency limit of the optical signals to 200 Hz and modulate the waveforms with a duty cycle of 50%.

3.2 Pulse Duration vs. Exposure Duration

The duration of the optical signal recorded in one frame is proportional to the width of the RoI and the readout duration $t_r$. One advantage of NLoS over LoS is that the RoI can easily be enlarged to the full width of the receiver. As illustrated in Fig. 2, the camera captures an image with an exposure duration $t_e$. Let $Y$ denote the width (number of pixel rows) of the image. The duration for which the imager is open and allows photons to enter is $(Y - 1)\, t_r + t_e$. However, the signal layer of the image is the convolution of the shutter function and the optical signal. Hence the duration of the signal recorded in the image is $(Y - 1)\, t_r$. Although the exposure duration does not affect the signal duration recorded in the image, it affects the gradient pattern of the bands in the image.
For most smartphone cameras, the shutter function can be considered a window function whose length is the exposure duration. The square waves are convolved with the shutter function, which causes the gradient effect of the bands, though this may not be obvious to the naked eye. The capturing can be classified into three cases according to the relationship between the minimum pulse duration $t_i$ and the exposure duration $t_e$, i.e., $t_i > t_e$, $t_i = t_e$, and $t_i < t_e$, as shown in Fig. 4a. We can see that, for the same pulsing LED, the length of the gradient increases with the exposure duration in the captured images. The simulated signal layers of the images in Fig. 4a are illustrated in Fig. 4b. We can see that the shutter function deforms the square wave by convolution but does not change the frequency of the original waveform, and when $t_i \geq t_e$, the amplitude of the signal layer is also proportional to that of the original waveform. Only when $t_i < t_e$ does the signal layer lose the spatial details of the original waveform. Therefore, as long as $t_i \geq t_e$, there exists a set of sample points in the signal layer that can fully describe the original waveform, as illustrated in Fig. 4b.

Furthermore, the camera places some physical constraints on the reception of the optical signal. Cameras on the market usually have different $t_r$. We propose a simple method to calibrate $t_r$ by sending a known preamble. We measured the $t_r$ and the supported range of exposure durations of several phones. We find that $t_r$ is smaller than the minimum exposure duration that the OS allows the user to set; $t_r$ usually ranges from several microseconds to a dozen microseconds. Theoretically, the upper frequency limit is $1/(2 t_r)$ Hz. However, when $t_i < t_e$, we cannot infer the accurate spatial detail of the original waveform, and when $t_i \ll t_e$, the signal layer is approximately constant.
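The three cases can be checked numerically. The sketch below is an illustration under simplifying assumptions (an ideal box shutter, a noiseless unit-amplitude square wave, and a hypothetical helper name `band_contrast`); it measures the peak-to-peak swing that survives the convolution with the shutter window.

```python
def band_contrast(t_i, t_e, dt=0.01, cycles=6):
    """Peak-to-peak swing of a unit square wave (pulse duration t_i)
    after averaging over a box shutter of length t_e."""
    n_i, n_e = round(t_i / dt), round(t_e / dt)
    wave = [1.0 if (k // n_i) % 2 == 0 else 0.0 for k in range(2 * n_i * cycles)]
    window = sum(wave[:n_e])                 # light collected by the first window
    lo = hi = window
    for k in range(n_e, len(wave)):          # slide the exposure window one step
        window += wave[k] - wave[k - n_e]
        lo, hi = min(lo, window), max(hi, window)
    return (hi - lo) / n_e

for t_e in (0.5, 1.0, 1.5, 2.0):
    print(t_e, band_contrast(t_i=1.0, t_e=t_e))
```

With $t_i = 1$, the swing stays at its full value for $t_e = 0.5$ and $t_e = 1$, drops to one third for $t_e = 1.5$, and vanishes at $t_e = 2$, where every exposure window covers exactly one ON pulse and one OFF pulse. This agrees with the observation that the bands keep their full amplitude only while $t_i \geq t_e$.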
In our proposal, HYCACO needs both the spatial and temporal details of the waveforms to perform the demodulation. Thus, we set $t_i = t_e$ to obtain the maximum achievable throughput.

4 HYCACO DESIGN

The architecture of HYCACO is shown in Fig. 5. Several COTS LEDs, each connected to a transistor switch circuit, are employed as the transmitters. A microcontroller encodes the input data into ON-OFF symbols and dispatches the symbols to the circuits. Thus, the data waveforms are modulated onto the instantaneous power of the optical carriers. A smartphone with a built-in CMOS camera is employed as the receiver. First, the smartphone continuously takes images of the reflector (a rough surface) and calculates the signal layer of each image. Second, the signal layers are demodulated into several sequences of N-ary symbols, one per transmitter. Third, we decode the symbol sequences into binary data packets. Finally, we combine the data packets to retrieve the full input message.

Fig. 5: The architecture of HYCACO. The transmitters are several temporally modulated LEDs, and the receiver is a rolling shutter camera. Transmitter: encoding (microcontroller), modulation (transistor switch circuits). Receiver: taking pictures, computing the signal layer, demodulation, decoding, combining.

4.1 Modulation and Encoding Scheme with Multiple Access

The inspiration for our multiple access scheme comes from Orthogonal Frequency Division Multiplexing (OFDM). The waveform emitted from each LED can be considered a subcarrier. Thus, the optical carrier is

$$s(t) = \sum_i s_i(t), \tag{5}$$

where $i$ denotes the serial number of the subcarrier/LED. In our prototypes, the transmitters share the same microcontroller and are thus inherently synchronized. By decoding the messages modulated on each subcarrier, the receiver can communicate with each transmitter individually.
For the convenience of evaluation, we let each transmitter send a piece of the input data, and the receiver combines the pieces to retrieve the full input data.

Our multiplexing technique is much simpler than OFDM. There are three differences: (i) The carrier waveform is a sine wave in OFDM, while it is a square wave in HYCACO. (ii) The subcarrier itself does not convey information in OFDM, while it conveys information by changing its phase in HYCACO. (iii) Unlike OFDM, HYCACO has no intersymbol interference (ISI) problem, because light suffers less from the multipath effect than RF and the modulation frequency is below $1/(2 t_r)$ Hz (usually less than 100 kHz).

In this paper, a Hybrid PSK (HPSK) scheme is proposed for multi-carrier modulation. As the amplitude of each subcarrier is invariant, to increase the number of distinct symbol changes, we let each subcarrier adopt the highest PSK order it allows. The highest allowed order is decided by the period and the time granularity. At the end of Section 3.2, we let the minimum pulse duration $t_i$ equal the exposure duration $t_e$ to obtain the maximum achievable throughput. Thus, $t_e$ is the time unit, denoted by 1. Let $T$ denote the symbol duration in units of $t_e$, and let the subcarrier serial number $i$ represent the number of cycles in one symbol. The period of subcarrier $i$ is $T/i$, and it adopts PSK of order $T/i$. Fig. 6 illustrates an example of HPSK with two LEDs. Thus, subcarrier $i$ can be expressed as follows:

$$s_i(t) = \begin{cases} 1, & 0 \leq (t + S_i) \bmod \dfrac{T}{i} < \dfrac{T}{2i}, \\[4pt] 0, & \dfrac{T}{2i} \leq (t + S_i) \bmod \dfrac{T}{i} < \dfrac{T}{i}, \end{cases} \tag{6}$$

Fig. 6: An example of HPSK modulation with two LEDs (traces $s_1(t)$, $s_2(t)$, and $s(t)$; horizontal axis: time in units of $t_e$; vertical axis: amplitude). $\theta$ is the phase of the waveform.
*symbol* is an N-ary number, where N is the order of PSK. The numbers on the Y-axis represent the states of the LEDs, e.g., 2 means two LEDs are ON, 1 means one LED is ON, and 0 means all LEDs are OFF.

In Equation (6), $S_i$ denotes the symbol that subcarrier $i$ represents.

First of all, we need to choose the minimum symbol duration $T$ according to the number of transmitters. Subcarrier $i$ has the following properties:

• The available frequency range is from 200 Hz to $1/(2 t_e)$ Hz.

• $i \in \mathbb{N}$, where $\mathbb{N}$ denotes the natural numbers.

• $i \leq T/2$.

• $T/i \in \mathbb{N}$.

Let $\mathcal{I}$ denote the set of subcarrier subscripts and $\mathcal{D}$ denote the set of divisors of $T$. We can derive that $\mathcal{I} \subseteq \mathcal{D} - \{T\}$. Let $I$ denote the number of transmitters, so that $I = |\mathcal{I}|$. The proper $T$ is

$$\min T \quad \text{s.t.} \quad |\mathcal{D} - \{T\}| \geq I. \tag{7}$$

Secondly, we encode the input data into N-ary symbols. The encoding scheme is as follows:

1) Convert the input data to binary.

2) Divide the binary sequence into $I$ sequences, each with a length that is a multiple of $\log_2(T/i)$.

3) Convert the divided sequences to the corresponding N-ary sequences, where $N = T/i$.

Lastly, we distribute these N-ary sequences to the corresponding transmitters. Let us use an example to demonstrate the encoding and modulation process. As illustrated in Fig. 7a, we use two transmitters to send the message "Hello!". The modulated optical signal is shown in Fig. 7b.

4.2 Signal Recovery

An NLoS link typically has a low signal-to-noise ratio (SNR) because of the complex image background and the diffuse reflection. We tackle these challenges by taking a long exposure image which has the same EV as the short exposure images. EV is a number that represents a combination of a camera's exposure duration, ISO, and f-number. The relationship is given by the exposure equation
prescribed by ISO 2720:1974¹. As the f-number is fixed in smartphones, the short exposure is captured with a high ISO and the long exposure is captured with a low ISO. The two images are captured with the same image background and are free of motion blur. The two images are given as

$$i_{short}(y) = k_{short}\, l(y) \times (f_{short} * e)(t_y) + n_{short}(y), \tag{8}$$

$$i_{long}(y) = k_{long}\, l(y) \times (f_{long} * e')(t_y) + n_{long}(y), \tag{9}$$

where $k$ is the sensor gain, which can be adjusted through the ISO, $l(y)$ is the image background, $f(t)$ is the shutter function, and $e(t)$ is the illuminance of light falling on the sensor, which is the sum of the received optical signals.

Fig. 7: A HYCACO encoding and modulation example which uses two transmitters to send the message "Hello!". (a) Encoding and modulation process: the message is converted to binary and split into two sequences; subcarrier 1 (QPSK) carries the quaternary sequence 1020121112301230, converted from the binary sequence 01001000011001010110110001101100, and subcarrier 2 (BPSK) carries the binary sequence 0110111100100001. (b) Input signal $s(t)$, preceded by a preamble of length 4 (horizontal axis: time in units of 200 µs; vertical axis: amplitude).

The long exposure can be approximated as the texture layer of the short exposure. $f_{long}$ is chosen so that it is significantly longer than the period of the temporal signal, thus $(f_{long} * e')(t_y) \approx K$, where $K$ is a constant. Images captured with the same EV present the same scene luminance [12], thus $k_{short} = K k_{long}$. Because the long exposure is captured with a low ISO, its image noise is much smaller than that of the short exposure. After summing the intensities along each image row, $n_{long}(y)$ becomes negligible. Let $g(y)$ denote the signal layer of the short exposure. Thus,

$$g(y) \approx \frac{i_{short}(y)}{i_{long}(y)}. \tag{10}$$

As illustrated in Fig. 8, the texture layer may not look the same in the two images. This is because the short exposure is captured with a high ISO and thus contains more image noise.
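The division in Equation (10) can be tried on synthetic row sums. The following is a minimal sketch rather than the authors' code: the texture, the band pattern, and the helper names `synthetic_pair` and `recover_signal_layer` are hypothetical, noise is omitted, and subtracting the mean stands in for the DC filter.

```python
import math

def synthetic_pair(rows=400, k_long=2.0):
    """Build noiseless row sums (i_short, i_long) and the true signal layer."""
    texture = [1.0 + 0.5 * math.sin(0.05 * y) for y in range(rows)]         # l(y) > 0
    layer = [0.2 + (1.0 if (y // 20) % 2 == 0 else 0.0) for y in range(rows)]
    K = sum(layer) / rows              # long exposure averages the flicker away
    k_short = K * k_long               # same-EV relation stated in the text
    i_short = [k_short * l * s for l, s in zip(texture, layer)]   # Equation (8)
    i_long = [k_long * l * K for l in texture]                    # Equation (9)
    return i_short, i_long, layer

def recover_signal_layer(i_short, i_long):
    """Equation (10), followed by mean removal as a simple DC filter."""
    g = [s / l for s, l in zip(i_short, i_long)]
    mean = sum(g) / len(g)
    return [v - mean for v in g]

i_short, i_long, layer = synthetic_pair()
g = recover_signal_layer(i_short, i_long)
print(round(max(g) - min(g), 6))   # swing of the recovered bands
```

In this noiseless setting the texture $l(y)$ cancels exactly, so the recovered sequence equals the true signal layer up to its mean. With real images, $n_{short}(y)$ remains in the numerator, which is why the row sums are taken before the division.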
The long exposure only needs to be captured once at the beginning of the reception. As long as the communication time is long enough, the extra cost of capturing the long exposure is negligible.

Fig. 8: The process of signal recovery: the short and long exposure images are summed along each row, the signal layer is computed, the DC component is filtered out, and the result is sampled and normalized. The long exposure image has the same EV as the short one. The result is a sequence which represents the illumination levels, here [1, -1, 0, 0, 0, 1, -1, 0, -1, 0, 0, 1, 1, 0, 0, -1, 0, 0, -1, 1, -1, 0, 0, 1, 0, 0, -1, 1, 0, 0, -1, 1, 1, -1, 0, 0, 0, -1, 1, 0, -1, 1, 0, 0, 1, 0, 0, -1, 1, -1, 0, 0, 0, -1, 1, 0, 0, 0, 1, -1, 0, 1, -1, 0].

The LEDs are placed at different locations and thus have different distances and irradiance/incidence angles to the reflector. The channel gain of each LED-reflector link is therefore different. The channel attenuations can be compensated by detecting the frequencies of the subcarriers. The compensation algorithm will be addressed in our future work. Here, we simply assume that the channel gains of the links are approximately equal. Thus, Equation (1) can be written as

$$e(t) = I L H(0)\, s(t) + E, \tag{11}$$

where $I L H(0)$ is a constant. The non-flickering component $E$ can be filtered out by a DC filter, as illustrated in Fig. 8.

We extract the packets by detecting the preambles in the signal layer. The transmitter sends a known preamble at the beginning of a packet, as shown in Fig. 7b. In the signal layer, the preamble is convolved with the shutter function to form one and a half cycles of a triangle wave, which starts at a peak. The extracted signal layer of the example in Section 4.1 is illustrated in Fig. 8.

¹ $N^2 / t = L S / K$, where $N$ is the relative aperture (f-number), $t$ is the exposure time ("shutter speed") in seconds, $L$ is the average scene luminance, $S$ is the ISO arithmetic speed, and $K$ is the reflected-light meter calibration constant.
The preamble also gives us the sampling period. Let $n_p$ denote the width of the preamble in the signal layer. The width corresponding to one time unit in the signal layer is $n_p / 3$, denoted by $n_i$. $n_i$ can be used to estimate the readout duration $t_r$, i.e., $t_r = t_i / n_i$. We use $n_i$ as the sampling period. Then, we normalize the sampling result to get an illumination level sequence, denoted by $g[k]$, as illustrated in Fig. 8. According to the Nyquist–Shannon sampling theorem, $g[k]$ can fully describe $s(t)$.

4.3 Demodulation and Decoding

We propose a superimposed rect-wave division algorithm to divide the optical signal into a set of square waves. The Fourier series of Equation (6) is

$$s_i(t) = \frac{1}{2} + \frac{2}{\pi} \sum_{n=1}^{\infty} \frac{\sin\big(i(2n-1)t + \theta\big)}{2n-1}, \tag{12}$$

where $t \in [0, T)$. The first sinusoidal component ($n = 1$) is the fundamental, which has the same frequency and phase as the square wave. When the continuous signal $s_i(t)$ is sampled once per time unit, we get the discrete form of $s_i(t)$,

$$x_i[k] = \frac{1}{2} + \frac{2}{\pi} \sum_{n=1}^{T/2i} \frac{\sin\left(i(2n-1)\, 2\pi\, \dfrac{k + S_i}{T}\right)}{2n-1}, \tag{13}$$

where $k = 0, 1, 2, \cdots, T-1$. Thus, the discrete form of the optical carrier is $x[k] = \sum_{i \in \mathcal{I}} x_i[k]$. By taking a real discrete Fourier transform (DFT) of both sides, we get the frequency-domain representation,

$$X[\omega] = \sum_{i \in \mathcal{I}} X_i[\omega], \tag{14}$$

where $\omega = 0, 1, 2, \cdots, T/2$. $\angle X_i[i]$ is the phase of the $i$th subcarrier. The frequency bins $X_i[\omega]$ represent the harmonics which construct subcarrier $x_i$, where $\omega$ is the cycle number of the harmonic. We can see that $X_i[\omega] = 0$ when $\omega \neq i(2n-1)$ and $\omega \neq 0$, hence $X_1[1] = X[1]$ and $X_i[i] = X[i] - \sum_{j=1,\, j \in \mathcal{I}}^{i-1} X_j[i]$. The demodulation and decoding process is expressed in Algorithm 1.

4.4 Dealing with Unsynchronized Communications

We address the symbol loss problem with LT codes.
LT codes employ a particularly simple algorithm based on XOR to encode and decode the message. We encode the input data with LT codes before the HYCACO encoding process. The process of generating an encoding packet is easy to describe:

1) Divide the input data into $n$ blocks of roughly equal length.

2) Randomly choose $d$ blocks, where $1 \leq d \leq n$ and the degree $d$ is a pseudorandom number.

3) The value of the encoding symbols is the XOR of the $d$ blocks, i.e., $M_{i_1} \oplus M_{i_2} \oplus \cdots \oplus M_{i_d}$, where $M_i$ is the $i$th block and $\{i_1, i_2, \ldots, i_d\}$ are the randomly chosen indices of the $d$ blocks.

4) A prefix is appended to the symbols, defining the list of indices and the total number of blocks $n$ in the input data.

We perform the HYCACO encoding and modulation process on these encoded packets. The receiver keeps extracting packets from the captured images. If a packet is of degree $d > 1$, it is first XORed against all the decoded packets in a message queuing area, then stored in a buffer area if its reduced degree is greater than 1. When a new packet of degree $d = 1$ is received or obtained by reduction, it is moved to the message queuing area and matched against all the packets in the buffer. When all $n$ packets have been moved to the queuing area, the received data has been successfully decoded.

Fig. 9: Experimental equipment of the transmitters (DC power supply, transistor switch circuits, Arduino UNO, LED luminaires).

Algorithm 1 SUperimposed Rect-wave Division (SURD)

    Input: g[k], 𝓘
    Output: S[i]
    𝐗 ← short-time Fourier transform (STFT) of g[k]
    for each X ∈ 𝐗 do
        for each i ∈ 𝓘 do
            if i = 1 then
                X_i[i] ← X[i]
            else
                X_i[i] ← X[i] − Σ_{j=1, j∈𝓘}^{i−1} X_j[i]
            end if
            S[i] ← ∠X_i[i] × T / (2iπ)
            Calculate x_i via Equation (13)
            X_i ← Real DFT of x_i
        end for
    end for
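The successive subtraction in Algorithm 1 (SURD) can be sketched for a single, noiseless symbol window. The code below is an illustration under stated assumptions, not the authors' implementation: the square waves of Equation (6) are sampled once per time unit with unit amplitude, the window is already aligned to the symbol boundary (so one plain DFT replaces the STFT), and the phase is measured relative to the unshifted template of each subcarrier, which is a choice of this sketch. The names `hpsk_wave`, `dft_bin`, and `surd_symbol` are hypothetical.

```python
import cmath
import math

def hpsk_wave(i, symbol, T):
    """One symbol of subcarrier i, sampled once per time unit (Equation (6))."""
    period = T // i                                  # T/i is an integer by design
    return [1.0 if (k + symbol) % period < period / 2 else 0.0 for k in range(T)]

def dft_bin(x, w):
    """Single DFT coefficient X[w] of the real sequence x."""
    n = len(x)
    return sum(v * cmath.exp(-2j * math.pi * w * k / n) for k, v in enumerate(x))

def surd_symbol(g, subcarriers, T):
    """Demodulate one length-T window g[k] into {i: S_i}, lowest subcarrier first."""
    decoded, waves = {}, {}
    for i in sorted(subcarriers):
        # Remove the harmonics that already decoded lower subcarriers put into bin i.
        x_i = dft_bin(g, i) - sum(dft_bin(waves[j], i) for j in waves)
        ref = dft_bin(hpsk_wave(i, 0, T), i)         # bin of the unshifted template
        order = T // i                               # PSK order of subcarrier i
        phase = cmath.phase(x_i / ref)
        decoded[i] = round(phase * order / (2 * math.pi)) % order
        waves[i] = hpsk_wave(i, decoded[i], T)       # rebuild x_i for later bins
    return decoded

# Two-LED example in the style of Fig. 6: T = 4, QPSK on subcarrier 1, BPSK on 2.
g = [a + b for a, b in zip(hpsk_wave(1, 3, 4), hpsk_wave(2, 1, 4))]
print(surd_symbol(g, [1, 2], 4))
```

The ordering matters: a subcarrier $j$ only contributes to bins that are multiples of $j$, so bin $i$ is clean once all lower subcarriers have been reconstructed and subtracted. Noise, unequal channel gains, and the shutter convolution are ignored here.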
5 EVALUATION

In this section, we first evaluate the achievable throughput of HYCACO with different prototype settings, such as smartphone model, transmitter number, packet duration, and frame rate. Then we choose the proper settings according to the achievable throughput results to evaluate the realistic performance under different capturing geometries, illuminance levels, and exposure durations.

5.1 Experiment Setup

The transmitters of our hardware prototype consist of a DC power supply, an Arduino UNO, and several COTS LEDs, each connected to a transistor switch circuit board (shown in Fig. 9). The transistor switch circuit boards are used to amplify the signal to a proper voltage level for the LEDs. The circuit boards are connected to the Arduino UNO, the microcontroller, which accepts the input data and generates ON-OFF symbols. The maximum forward voltage and current of the LEDs are 40 V and 350 mA, respectively. These LEDs illuminate a painting with a rough surface, which serves as the reflector. The reflector is 2.1 m away from the nearest LED. The measured illuminance in the room (with all LEDs off) is about 50 lux.

Fig. 10: GUI of the receiver app. (a) Capturing. (b) Settings.

TABLE 1: Parameters of the Receiver

| Device | Exposure Duration (µs) | ISO | Image Resolution (X × Y) | Frame Rate (fps) | Readout Duration (µs) |
| --- | --- | --- | --- | --- | --- |
| iPhone 6s | 13-333333 | 23-1840 | 640 × 480 to 4032 × 3024 | 3-240 | 6.45 |
| Nexus 5 | 13-866975 | 100-10000 | 640 × 480 to 3264 × 2448 | 7-30 | 12.5 |

On the receiver side, we test two devices, an iPhone 6s and a Nexus 5, and build two apps for iOS and Android. As illustrated in Fig. 10b, users can set the exposure duration $t_e$ equal to the time unit $t_i$. After the Save button is pressed, the app automatically sets the ISO of the short exposure to the maximum and computes the corresponding exposure duration and ISO of the long exposure. We make the exposure duration of the long exposure one hundred times that of the short exposure.
Therefore, the long exposure is captured with a small ISO and a long exposure duration, which makes it nearly flicker-free and noise-free. The setting ranges and the calibrated readout durations of the receivers are listed in Table 1. The app has two working modes: (a) process and demodulate the image within the phone; (b) process the image within the phone and upload the sampling result $g[k]$ to a server, which demodulates $g[k]$ and sends the retrieved message back to the phone, as illustrated in Fig. 10a. Mode (b) is designed for single-frame communication such as indoor positioning.

5.2 Achievable Throughput

We evaluate the achievable throughput by choosing the frames without reception error. Since the frame rates of different cameras differ, we define the “frame throughput”, measured in bits per frame (symbol: “bit/f”). We set the time unit to 100 µs and set the resolution to the maximum.

5.2.1 Throughput vs. Transmitter Number

According to Equation (7), the more transmitters there are, the longer the transmitter needs to send a symbol. Therefore, the number of transmitters determines the symbol duration, the symbol duration determines the granularity of a packet, and different levels of granularity cause different symbol loss ratios. If no symbol is lost (no signal in the image is discarded), given the image resolution and readout duration, we can simulate the frame throughputs with different numbers of transmitters. The results are illustrated in Fig. 11a. HYCACO reaches the highest frame throughput when the number of transmitters is 5, the symbol duration $T$ is 12 units of time, and the subcarrier numbers $I$ are $\{1, 2, 3, 4, 6\}$. The frame throughput on Nexus 5 is higher than on iPhone 6s, although images captured by iPhone 6s have a higher resolution. This is because the readout duration of iPhone 6s is much smaller than that of Nexus 5. According to our experiments, the readout durations of iPhone 6s and Nexus 5 are 6.45 µs and 12.5 µs, respectively. Although Nexus 5 can theoretically achieve a higher throughput, its images contain more noise, and iPhone 6s has a broader range of frame rates. Therefore, we use iPhone 6s as the receiver for the rest of the evaluation.

Fig. 11: The achievable throughput. Frame throughput is the amount of data decoded per frame. (a) Throughput vs. transmitter number: frame throughput (bit/f) and symbol duration (in units of 100 µs) for iPhone 6s (3024 × 4032) and Nexus 5 (2448 × 3264). (b) Throughput vs. packet duration: frame throughput (bit/f) vs. symbols per packet for the subcarrier sets $\{1, 2, 3\}$, $\{1, 2, 3, 4, 6\}$ and $\{1, 2, 3, 4, 6, 8, 12\}$. (c) Throughput vs. frame rate: image width and maximum throughput vs. frame rate (fps).

Fig. 12: Throughput, BER and overall error rate with a varying transmitter spacing. (a) Throughput. (b) BER and overall error rate.

5.2.2 Throughput vs. Packet Duration

Even if we assume that there is no reception error, the throughput still varies because the transmitter and the receiver are not synchronized. We need a preamble preceding each packet to extract the packets from the signal layer. The preamble costs the transmitter an extra 4 units of time per packet. The packet duration equals the sum of the preamble duration and the product of the symbol duration and the number of symbols per packet.
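As a quick check of the packet-duration relation above, the following sketch computes the packet duration in time units and the share of a packet spent on the preamble. The 4-unit preamble, the 12-unit symbol duration (five transmitters) and the 100 µs time unit come from the text; the function names are hypothetical.

```python
def packet_duration_units(symbol_duration, symbols_per_packet, preamble=4):
    """Packet duration = preamble + symbol duration x symbols per packet (time units)."""
    return preamble + symbol_duration * symbols_per_packet


def preamble_share(symbol_duration, symbols_per_packet, preamble=4):
    """Fraction of a packet that is occupied by the preamble."""
    total = packet_duration_units(symbol_duration, symbols_per_packet, preamble)
    return preamble / total


TIME_UNIT_US = 100  # time unit used in Section 5.2

units = packet_duration_units(symbol_duration=12, symbols_per_packet=4)
print(units, units * TIME_UNIT_US)   # 52 units, i.e. 5200 microseconds
print(preamble_share(12, 1))         # 0.25: one symbol per packet
print(preamble_share(12, 5))         # 0.0625: five symbols per packet
```

A larger preamble share leaves less of each frame for data symbols, while a longer packet is more likely to be cut off at the frame boundary.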
Signals before the first detected preamble and after the last detected preamble are discarded, which causes symbol losses. Fig. 11b shows how the packet duration affects the frame throughput. The variance increases as the packet duration increases. When the packet duration is small, the preambles take up too large a share of the signal layer, which reduces the throughput; when the packet duration is large, the discarded signals may be long, which increases the variance.

In real deployment environments, the LEDs are installed at different locations, which leads to channel gain differences among the LED-reflector links. Without channel compensation, more transmitters would cause more reception errors. We choose four symbols per packet and the subcarrier numbers $\{1, 2, 3\}$ for the rest of the evaluation, because this combination achieves nearly the highest average throughput with fewer transmitters.

5.2.3 Throughput vs. Frame Rate

We examine the impact of the camera frame rate on the throughput. iPhone 6s supports capturing images at a wide range of frame rates from 3 to 240 fps, but higher frame rates are only available at lower resolutions. We measure the achievable throughput by choosing the frame that achieves the highest frame throughput and multiplying it by the frame rate. The results are illustrated in Fig. 11c. The throughput reaches the maximum when the frames are captured in the 3024p/30 format. In CMOS cameras, the frame duration is limited by the readout duration $t_r$ and the resolution. As illustrated in Fig. 2, the frame duration is $t_f = (Y - 1) t_r + \mathrm{GAP}$. Thus, the corresponding maximum frame rate is $1 / t_f$. Hereafter, for the realistic performance evaluation, the capture format is set to 3024p/30.

5.3 Realistic Performance

In this section, we conduct our evaluations based on the following metrics:

• Throughput: the average amount of data successfully decoded per second in the received frames.
• Bit error rate (BER): the percentage of wrongly decoded data in the total amount of data.
• Overall error rate: the percentage of overall reception errors, including the frames that failed to be extracted, the packets that failed to be demodulated, and the wrongly decoded bits. This can be expressed as $p_e = 1 - p_f \times p_p \times p_b$, where $p_f$, $p_p$ and $p_b$ are the percentages of successfully decoded frames, packets and bits, respectively.

Fig. 13: The impacts of distance, angle, illuminance and exposure duration on throughput, BER and overall error rate, respectively. The reflector is 2.1 m away from the center LED. The illuminance is measured 0.5 m in front of the LED. Panels: (a) Throughput vs. distance; (b) BER vs. distance; (c) Overall error rate vs. distance; (d) Throughput vs. angle; (e) BER vs. angle; (f) Overall error rate vs. angle; (g) Throughput vs. illuminance; (h) BER vs. illuminance; (i) Overall error rate vs. illuminance. Each panel plots the exposure durations $t_e$ = 50, 100, 200 and 300.

We examine the realistic performance under various environments with different exposure durations. To maximize the throughput potential of HYCACO, we choose three transmitters and four symbols per packet according to the evaluation results of achievable throughput in Section 5.2.
We use iPhone 6s as the receiver. The resolution of the camera is set to 3024 × 4032, and the frame rate is 30 fps. We put a light meter 0.5 m in front of the center LED to measure the light level of the LED. When the LEDs are off, the meter reading is 50 lux.

5.3.1 Effect of Transmitter Spacing

Since the channel gain differences among the LED-reflector links are crucial to the quality of the recovered signals, we study how the spacing between adjacent transmitters affects the performance of HYCACO. The received light strength depends on its irradiance, incidence, and attenuation, which follows an inverse-square law. We place the LEDs in a line with their surfaces parallel to the reflector, and the distance between the reflector and the LED surfaces is 2.1 m. We vary the spacing between adjacent transmitters from 15 cm to 115 cm, while the receiver is fixed at a distance of 50 cm from the reflector. The time unit and the exposure duration are both 100 µs. The measured illuminance is 450 lux. The experimental results are illustrated in Fig. 12. As the transmitter spacing increases, the throughput decreases, while the BER and the overall error rate increase. The more precisely the illuminance is proportional to the number of ON-state LEDs, the higher the reception quality. We hereafter fix the spacing to 30 cm.

5.3.2 Impact of Reflector-Receiver Distance

We evaluate the performance of HYCACO under a varying distance between the reflector and the receiver and report the results in Fig. 13a, 13b, and 13c. The measured illuminance is 450 lux. The camera is parallel to the reflector. The results show that the throughput and reception error are independent of the distance. This is very different from LoS LCC approaches, which are highly affected by the distance because the distance affects the RoI in the captured image.
The capturing distance is limited to 120 cm because the reflector no longer fills the field of view (FoV) of the camera if the distance increases further. Images captured with short exposure durations have low luminance and contain more noise, so the signal layers have low SNRs. The shadow of the phone or the user on the reflector further decreases the SNR, which causes the fluctuation of the evaluation results. Therefore, the shorter the exposure duration, the larger the fluctuation.

5.3.3 Impact of Viewing Angle

We then report in Fig. 13d, 13e, and 13f the evaluation results under a varying viewing angle. The viewing angle is the angle between the camera and the reflector. Since the distance does not affect the performance, we adjust the distance while varying the angle to make sure the reflector fills the FoV. The measured illuminance is 450 lux. Similar to what we observed with varying distances, the throughput and reception error are also independent of the angle. In fact, the channel gain differs for each pixel sensor. That is why the frame has a brightest center and a gradual reduction of brightness on both sides (shown in Fig. 8), which makes it impossible to directly set thresholds for detecting symbols. We address this challenge by capturing an extra long-exposure image.

TABLE 2: Measured Illuminance, Voltage, and Current

| Illuminance (lux) | Voltage (V) | Current (A) |
| --- | --- | --- |
| 450 | 40 | 0.31 |
| 400 | 37.5 | 0.25 |
| 350 | 35.5 | 0.15 |
| 300 | 34 | 0.08 |
| 250 | 33 | 0.05 |

In summary, the reflector-receiver distance and the viewing angle have nearly no impact on the performance when the signal layer has sufficient SNR. When the SNR is low, however, the shadow of the phone or the user, which is inevitable, may cause the throughput to fluctuate. Furthermore, the viewing angle affects the position of the brightest center in a frame.
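The long-exposure normalization mentioned in this section can be sketched as follows: the pixels of each row are summed, the short-exposure row sums are divided by the long-exposure row sums to cancel the uneven brightness across the frame, and the mean is removed. This is a simplified sketch under assumptions, not the authors' pipeline: images are plain lists of grayscale rows, mean subtraction stands in for the DC filter, and `row_sums` and `signal_layer` are hypothetical names.

```python
def row_sums(image):
    """Sum the pixels of each row; with a rolling shutter, each row is one time sample."""
    return [sum(row) for row in image]


def signal_layer(short_img, long_img, eps=1e-9):
    """Divide short-exposure row sums by long-exposure row sums and remove the mean."""
    short = row_sums(short_img)
    long_ = row_sums(long_img)
    ratio = [s / (l + eps) for s, l in zip(short, long_)]
    mean = sum(ratio) / len(ratio)
    return [r - mean for r in ratio]


if __name__ == "__main__":
    # Synthetic frame: bright center, darker edges, ON/OFF pattern on the rows.
    brightness = [1.0, 2.0, 4.0, 4.0, 2.0, 1.0]
    bits = [1, 0, 1, 0, 1, 0]
    short_img = [[v * (1 + 0.5 * b)] * 4 for v, b in zip(brightness, bits)]
    long_img = [[v * 100.0] * 4 for v in brightness]
    layer = signal_layer(short_img, long_img)
    print([1 if x > 0 else 0 for x in layer])
```

After the division, a single threshold at zero separates ON rows from OFF rows in this synthetic frame, even though the raw row sums of the edge rows and the center rows differ by a factor of four.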
5.3.4 Impact of Illuminance

Since the signal layer contains substantial noise because of the high ISO, the performance is sensitive to the illuminance of the reflector. We evaluate the performance under a varying illuminance measured 0.5 m in front of the center LED. The receiver is fixed at a distance of 0.5 m from, and parallel to, the reflector. We vary the light output of the LEDs by adjusting the input currents, as shown in Table 2. The experimental results are reported in Fig. 13g, 13h, and 13i. The results for exposure durations of 100 µs and below vary linearly with the illuminance; the results for exposure durations above 100 µs vary linearly with the illuminance only while the illuminance is below a threshold.

A long exposure duration helps the camera improve the quality of the captured image when the environment luminance is low, e.g., by increasing the image luminance and reducing the image noise. Therefore, if the reflector does not have sufficient brightness, we can enhance the SNR by extending the exposure duration. However, the SNR is enhanced at the cost of a lower theoretical throughput.

5.3.5 Impact of Exposure Duration

In the design of HYCACO, the exposure duration is the time unit of the symbol duration, which limits the theoretical throughput. We vary the exposure duration from 50 µs to 300 µs, and the reported results show that the throughput increases as the exposure duration decreases. However, when the exposure duration and/or the illuminance falls below a threshold, the reception error increases, which causes the throughput to decrease. When the illuminance is higher than 450 lux and the exposure duration is longer than 100 µs, the frames are decoded with a BER lower than 1% and an overall error rate lower than 5%.
TABLE 3: Latency of Each Processing Step

| Processing Step | Latency (ms) |
| --- | --- |
| Sum pixels in each row and compute signal layer | 40.61 |
| Filter out DC component | 0.24 |
| Extract packets in a frame, sample, and normalize | 30.8 |
| Demodulate and decode a packet | 1.12 |

The supported range of exposure duration depends on the hardware of the experiment devices. Taking iPhone 6s as an example, the duration of the signal recorded in an image is $(3024 - 1) \times 6.45 = 19498.35$ µs, which is the upper limit of the packet duration. If the transmitter number is three and a packet has four symbols, then to make sure a frame contains at least one complete packet, the maximum exposure duration is $19498.35 / [(6 \times 4 + 4) \times 2] = 348.18$ µs. Although iPhone 6s allows us to set the exposure duration as short as 13 µs, doing so would result in a very low SNR. In the actual implementation of HYCACO, four main causes reduce the SNR:

• The latency of the operational amplifier in the switch circuit and the ON-OFF latency of the LED result in sloped signal edges.
• Because smartphone cameras employ electronic shutters rather than mechanical shutters, the pixel sensor still accumulates photons during the readout phase, which interferes with the imaging information.
• The brightness of a pixel is proportional to the number of photons it receives. A short exposure duration results in low brightness.
• Summing up all the pixels in each row reduces the image noise but cannot eliminate it.

5.4 Power Consumption and Latency

We finally measure the power consumption and the latency of processing 4032 × 3024 frames in our iOS receiver app. LCC consumes a lot of power because CMOS sensors require a lot of power. We measure the energy impact with Xcode Instrument (see footnote 2).
When the HYCACO app is capturing, processing and decoding simultaneously, the energy usage is 18 ± 1 (on a scale from 0 to 20, where 20 indicates that the device is using power at a very high rate and 0 indicates that very little power is being used). With this level of energy usage, the phone could drain its 1715 mAh battery within one or two hours. Furthermore, after about ten minutes of continuous reception, the phone becomes noticeably hot.

The measured time consumed by each processing step is listed in Table 3. The processing steps are illustrated in Fig. 8. If the exposure duration (time unit) is 100 µs, the packet duration is 2800 µs, and five or six packets can be extracted from one frame. We assume that the user does not significantly move the phone, so the long exposure only needs to be captured once at the beginning of the reception. Thus, the total processing time of each frame is about 78 ms. The latency is mainly due to the high resolution: each frame contains twelve million pixels. If we lower the resolution by half, the processing can be done in real time. Alternatively, we can reduce the latency by summing only part of the pixels in each row into a sample, but this lowers the SNR.

Footnote 2: The Energy Usage in Xcode Instrument indicates a level from 0 to 20, indicating how much energy the app is using at any given time. These numbers are subjective.

6 DISCUSSION

Cameras on the market usually have different frame rates, resolutions, and readout durations. The throughputs of LCC systems are highly related to these features. Moreover, LCC is unsuitable for continuous reception due to its high power consumption. Therefore, the first aim of an LCC system should be ease of use rather than high throughput. The application scenarios of LoS LCC are restricted by the small RoI and the FoV of the camera. Light suffers less from multipath effects than WiFi signals, and the radiant intensities follow an attenuation pattern.
NLoS LCC can be used for indoor positioning and/or orientation techniques by estimating the channel gain of the transmitter-reflector links. We leave this for future work.

Our prototype works well under the condition that the illuminance perceived by the camera is proportional to the number of ON-state LEDs. Employing more LED luminaires for transmission may violate this assumption. The relative position of the camera and the reflector has nearly no impact on the performance, because the channel gain of the reflector-receiver link is the same for all transmitters. The perceived illuminance differences are therefore dominated by the transmitter-reflector links. Once HYCACO can work with channel compensation, it will support a wider variety of application scenarios.

7 RELATED WORK

NLoS LCC. Most of the existing NLoS LCC approaches only utilize one LED for transmission. Danakis et al. [13] first proposed that a CMOS camera can be used as a receiver to capture the continuous changes of the status (ON-OFF) of the light. MILC [14] improves the throughput with multi-level illuminations. Martian [3] encodes bits by varying the duty cycle of a pulse waveform. Rajagopal et al. [15] propose a hybrid VLC system, which simultaneously transmits low-speed data to cameras and high-speed data to photodiode receivers. ReflexCode [4] adopts reflected light emitted from multiple LEDs as its communication medium, which looks very similar to our work, but it is actually another way to implement multi-level illuminations. Using a single LED to provide multi-level illuminations requires additional hardware such as a DAC, which increases the cost. Varying the luminous intensity or the duty cycle may break the overall brightness energy balance. The LEDs in HYCACO flicker with a constant duty cycle (50%) and thus are naturally flicker-free to human eyes.

LoS LCC. If an LED has sufficient brightness, the LoS link has a sufficient SNR and thus is more resilient to the ambient noise.
RollingLight [2] employs a frequency shift keying scheme and delivers a throughput of 11.32 Bps. However, this throughput is achieved only when the camera is very close to the LED. CamCom [16] uses undersampled frequency shift OOK to encode bits, and it achieves a throughput of 400 bps using 100 LEDs. Luo et al. [17] propose undersampled phase shift OOK, and their system reaches 150 bps with a dual LED lamp. ColorBars [18] utilizes Color Shift Keying (CSK) to modulate data using different colors transmitted by the LED. The major bottleneck of throughput is the small RoI. Besides, LoS LCC provides a natural way to enable visual association, which creates an opportunity for indoor positioning. Luxapose [19] explores the indoor positioning problem by detecting the presence of the luminaires in the captured image. LiTell [20] proposes a robust localization scheme that employs unmodified fluorescent lights (FLs) as location landmarks. However, these positioning schemes need LoS links and sufficient spatial resolution to separate transmissions from different transmitters.

Other Recent VLC Works. Several recent works investigate other specific types of VLC. Screen-to-camera communications [8], [21], [22] employ screens as the transmitters, which have a larger resolution and more changing states. Disco [7] uses a modified display as the transmitter to send sine wave signals. It recovers the signals with a simultaneous dual exposure (SDE) sensor. DarkLight [23] and DarkVLC [24] allow light-based communication to be sustained even when the LED lights appear dark or OFF. Kaleido [9] utilizes the rolling shutter effect to prevent unauthorized users from recording a video played on a screen. LCC is just a special type of VLC. At the receiver, the rolling shutter deforms the signal frequency spatial detail by convolution. Therefore, with the proposed signal recovery approach, many modulation schemes employed in other kinds of VLC systems can also be employed in LCC.
8 CONCLUSION

In this paper, we design and implement HYCACO, which enables multiple access for NLoS LCC. We demonstrate the efficacy of our design using a hardware prototype, which achieves a throughput of 4.5 kbps. HYCACO works well when the measured illuminance is 450 lux, which is the recommended illuminance for an indoor environment. Unlike the width-driven demodulation [2], [3], which is computationally complex, we only sample hundreds of judging points from the received signal for the demodulation. Therefore, HYCACO is computationally efficient and can provide real-time responses on hand-held devices.

The major concern of NLoS links is the signal power attenuation. We extract the signal layer from the short-exposure image by dividing it by a long-exposure image. Thus, not only is the image background eliminated, but the SNR is also enhanced. If we use the existing LED infrastructures for transmissions, the channel gains of the transmitter-reflector links will be different. We can add a compensation algorithm to make HYCACO functional or use the channel properties for indoor positioning. We plan to address these challenges in future work.

REFERENCES

[1] A. Jovicic, J. Li, and T. Richardson, “Visible light communication: opportunities, challenges and the path to market,” IEEE Communications Magazine, vol. 51, no. 12, pp. 26–32, 2013.
[2] H.-Y. Lee, H.-M. Lin, Y.-L. Wei, H.-I. Wu, H.-M. Tsai, and K. C.-J. Lin, “Rollinglight: Enabling line-of-sight light-to-camera communications,” in Proceedings of the 13th Annual International Conference on Mobile Systems, Applications, and Services. ACM, 2015, pp. 167–180.
[3] H. Du, J. Han, Q. Huang, X. Jian, C. Bo, Y. Wang, H. Xu, and X. Li, “Martian–message broadcast via led lights to heterogeneous smartphones: poster,” in Proceedings of the 22nd Annual International Conference on Mobile Computing and Networking. ACM, 2016, pp. 417–418.
[4] Y. Yang, J. Nie, and J. Luo, “Reflexcode: Coding with superposed reflection light for led-camera communication,” Proc. of the 23rd ACM MobiCom (to appear), 2017.
[5] M. Luby, “Lt codes,” in Foundations of Computer Science, 2002. Proceedings. The 43rd Annual IEEE Symposium on. IEEE, 2002.
[6] J. M. Kahn and J. R. Barry, “Wireless infrared communications,” Proceedings of the IEEE, vol. 85, no. 2, pp. 265–298, 1997.
[7] K. Jo, M. Gupta, and S. K. Nayar, “Disco: Display-camera communication using rolling shutter sensors,” ACM Transactions on Graphics (TOG), vol. 35, no. 5, p. 150, 2016.
[8] W. Hu, H. Gu, and Q. Pu, “Lightsync: unsynchronized visual communication over screen-camera links,” in Proceedings of the 19th annual international conference on Mobile computing & networking. ACM, 2013, pp. 15–26.
[9] L. Zhang, C. Bo, J. Hou, X.-Y. Li, Y. Wang, K. Liu, and Y. Liu, “Kaleido: You can watch it but cannot record it,” in Proceedings of the 21st Annual International Conference on Mobile Computing and Networking. ACM, 2015, pp. 372–385.
[10] J. Gancarz, H. Elgala, and T. D. Little, “Impact of lighting requirements on vlc systems,” IEEE Communications Magazine, vol. 51, no. 12, pp. 34–41, 2013.
[11] S. Keeping, “Characterizing and minimizing led flicker in lighting applications,” Electronic Products magazine, 2012.
[12] D. Wüller and H. Gabele, “The usage of digital cameras as luminance meters,” in Digital Photography, 2007, p. 65020U.
[13] C. Danakis, M. Afgani, G. Povey, I. Underwood, and H. Haas, “Using a cmos camera sensor for visible light communication,” in 2012 IEEE Globecom Workshops. IEEE, 2012, pp. 1244–1248.
[14] Z. Yang, H. Zhao, Y. Pan, C. Xu, and S. Li, “Magnitude matters: A new light-to-camera communication system with multilevel illumination,” in Computer Communications Workshops (INFOCOM WKSHPS), 2016 IEEE Conference on. IEEE, 2016, pp. 1075–1076.
[15] N. Rajagopal, P. Lazik, and A. Rowe, “Hybrid visible light communication for cameras and low-power embedded devices,” in Proceedings of the 1st ACM MobiCom workshop on Visible light communication systems. ACM, 2014, pp. 33–38.
[16] R. D. Roberts, “Space-time forward error correction for dimmable undersampled frequency shift on-off keying camera communications (camcom),” in Ubiquitous and Future Networks (ICUFN), 2013 Fifth International Conference on. IEEE, 2013, pp. 459–464.
[17] P. Luo, Z. Ghassemlooy, H. Le Minh, X. Tang, and H.-M. Tsai, “Undersampled phase shift on-off keying for camera communication,” in Wireless Communications and Signal Processing (WCSP), 2014 Sixth International Conference on. IEEE, 2014, pp. 1–6.
[18] P. Hu, P. H. Pathak, X. Feng, H. Fu, and P. Mohapatra, “Colorbars: Increasing data rate of led-to-camera communication using color shift keying,” in Proceedings of the 11th ACM Conference on Emerging Networking Experiments and Technologies. ACM, 2015, p. 12.
[19] Y.-S. Kuo, P. Pannuto, K.-J. Hsiao, and P. Dutta, “Luxapose: Indoor positioning with mobile phones and visible light,” in Proceedings of the 20th annual international conference on Mobile computing and networking. ACM, 2014, pp. 447–458.
[20] C. Zhang and X. Zhang, “Litell: robust indoor localization using unmodified light fixtures,” in Proceedings of the 22nd Annual International Conference on Mobile Computing and Networking. ACM, 2016, pp. 230–242.
[21] W. Du, J. C. Liando, and M. Li, “Softlight: Adaptive visible light communication over screen-camera links,” in Computer Communications, IEEE INFOCOM 2016-The 35th Annual IEEE International Conference on. IEEE, 2016, pp. 1–9.
[22] B. Zhang, K. Ren, G. Xing, X. Fu, and C. Wang, “Sbvlc: Secure barcode-based visible light communication for smartphones,” IEEE Transactions on Mobile Computing, vol. 15, no. 2, pp. 432–446, 2016.
[23] Z. Tian, K. Wright, and X. Zhou, “The darklight rises: Visible light communication in the dark,” in Proceedings of the 22nd Annual International Conference on Mobile Computing and Networking. ACM, 2016, pp. 2–15.
[24] Z. Tian, K. Wright, and X. Zhou, “Lighting up the internet of things with darkvlc,” in Proceedings of the 17th International Workshop on Mobile Computing Systems and Applications. ACM, 2016, pp. 33–38.

Fan Yang received the BS degree in computer science from Qingdao University, Qingdao, China, in 2009. He received the MS degree in software engineering from Xidian University, Xi’an, China, in 2014. He is currently working toward the PhD degree with the School of Computer Science at Northwestern Polytechnical University. His research interests include indoor positioning and visible light communications.

Shining Li received the BS and MS degrees in computer science from Northwestern Polytechnical University, Xi’an, China, in 1989 and 1992, respectively. He received the PhD degree in computer science from Xi’an Jiaotong University, Xi’an, China, in 2005. He is currently a professor at the School of Computer Science, Northwestern Polytechnical University. His research interests include mobile computing and wireless sensor networks. He is a member of the IEEE.

Zhe Yang received his B.S. degree in information engineering in 2005 and the M.S. degree in control theory and engineering in 2008, both from Xi’an Jiaotong University, Xi’an, China. He received the Ph.D. degree in electrical and computer engineering from the University of Victoria, Victoria, British Columbia, Canada, in 2013. He then joined the Department of Computer Science at Northwestern Polytechnical University, Xi’an, China, with the exceptional promotion to associate professor. His research areas include protocol design, optimization, and resource management of wireless communication networks.

Tao Gu is currently an associate professor in computer science with RMIT University, Australia.
His current research interests include mobile computing, ubiquitous/pervasive computing, wireless sensor networks, distributed network systems, sensor data analytics, cyber physical system, Internet of Things, and online social networks. He is a senior member of the IEEE and a member of the ACM.

Cheng Qian received the BE degree in Internet of Things Engineering from Northwestern Polytechnical University, China in 2017. Since October 2017, he has been working toward the MS degree in Computer Science and Technology from Northwestern Polytechnical University.
