A Multimodal Approach towards Emotion Recognition of Music using Audio and Lyrical Content

We propose MoodNet - A Deep Convolutional Neural Network based architecture to effectively predict the emotion associated with a piece of music given its audio and lyrical content.We evaluate different architectures consisting of varying number of tw…

Authors: Aniruddha Bhattacharya, K.V. Kadambari

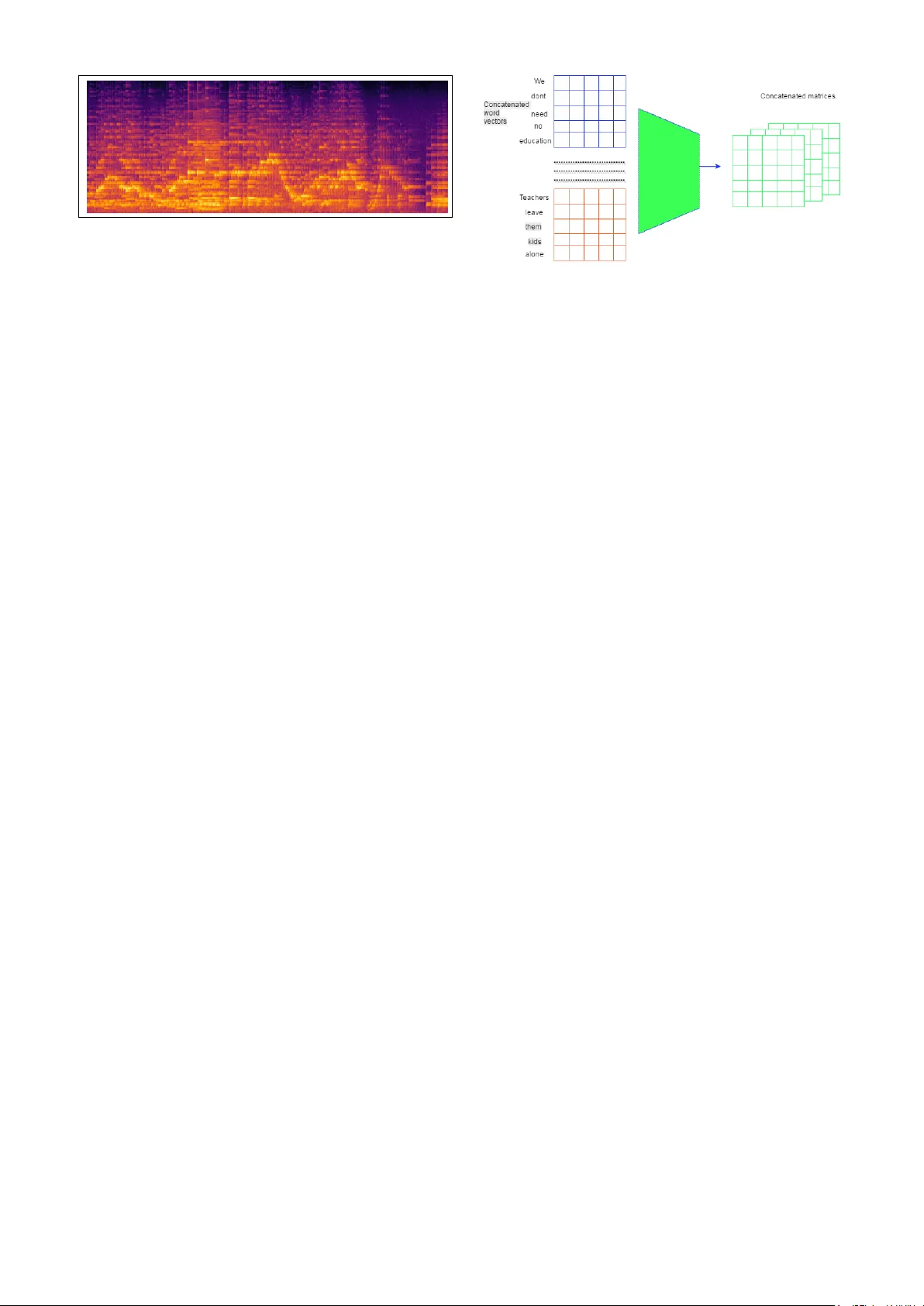

A MUL TIMOD AL APPR O A CH T O W ARDS EMO TION RECOGNITION OF MUSIC USING A UDIO AND L YRICAL CONTENT Aniruddha Bhattacharya Senior Undergraduate,NIT W arangal baniruddha2@student.nitw.ac.in Kadambari K.V Assistant Professor ,NIT W arangal kadambari@faculty.nitw.ac.in ABSTRA CT W e propose MoodNet -A Deep Con volutional Neural Net- work based architecture to effecti vely predict the emo- tion associated with a piece of music gi ven its audio and lyrical content.W e e valuate different architectures consist- ing of varying number of two-dimensional con volutional and subsampling layers,followed by dense layers.W e use Mel-Spectrograms to represent the audio content and word embeddings-specifically 100 dimensional word vectors, to represent the textual content represented by the lyrics.W e feed input data from both modalities to our MoodNet archi- tecture.The output from both the modalities are then fused as a fully connected layer and softmax classfier is used to predict the category of emotion.Using F1-score as our metric,our results sho w excellent performance of Mood- Net ov er the two datasets we experimented on-The MIREX Multimodal dataset and the Million Song Dataset.Our ex- periments reflect the hypothesis that more complex mod- els perform better with more training data.W e also observe that lyrics outperform audio as a better expressed modal- ity and conclude that combining and using features from multiple modalities for prediction tasks result in superior performance in comparison to using a single modality as input. 1. INTR ODUCTION W ith the e ver-increasing amount of digital music on- line,there arises a need of ef fectiv e organisation and re- triev al of such amount of data.Although traditionally ,the most common search and retrie val categories like artist and genre have recei ved greater attention in music informa- tion retriev al(MIR) research,emotion based retriev al tech- niques too have been prov en to be an effecti ve criterion for MIR [8] receiving greater attention [7, 26]. Generally ,Music Emotion Recognition(MER) has re- lied on audio features like Mel-frequency cepstral co- efficients (MFCCs) or mid-level features like chord [22],rhythmic patterns [22] etc for emotion recognition. L yrical features being semantically rich have also been widely used for emotion recognition,as their meanings con vey emotions more clearly and are composed in accor- dance to the music signals. For lyrical content analysis,mostly statistical natural language processing(NLP) techniques lik e bag of words [24] and probabilistic latent semantic models [9] hav e been used to extract te xtual features. W ith recent e volution of po werful computational hard- ware like GPUs,Deep Neural Networks have been used successfully in audio content analysis and retrie val,with exceptional accuracy in tasks like speech recognition [23] and computer vision [12] In computer vision, deep con volutional neural networks (Deep CNNs) simulate the behaviour of the human vision system and learn hierarchical features, allo wing object lo- cal in variance and robustness to translation and distortion in the model [14]. They hav e sho wn state-of-the-art perfor- mance in speech recognition [23] and music related tasks like music segmentation [25]. Like wise,vector space representations have also prov ed to be am effecti ve method of representing words [4, 20, 27].Using such representations along with Deep CNNs hav e shown great results in various NLP tasks like sen- tence classification [28] and sentiment analysis [6, 29]. In this paper ,we propose we propose MoodNet –a deep con volutional neural network based architecture,that com- bines the features obtained from both audio and lyrical modalities for classification of music based on mood.W e use mel-spectrograms as input for the audio modality .For the corresponding lyrical content,we use the vector repre- sentation of w ords present in the lyrical content as input to the network.Both of these representations are used as in- puts to the Deep CNNs which output a single feature v ec- tor(for each modality).The two vectors are combined and are classified using a softmax classifier . W e disuss CNNs for audio content and textual content analysis in Section 2 and Section 3 respectively .The Mood- Net architecture is introduced in Section 4 follo wed by ex- periments and results in Section 5. W e end our paper with a conclusion and the scope for future work in Section 6. 2. CNNS IN A UDIO CONTENT ANAL YSIS 2.1 Motivation CNNs are moti vated by our perception of vision where neurons capture local information and higher lev el infor- mation is obtained [14].CNNs are therefore designed to provide a way of learning robust features that respond to certain visual objects with translational and distortion in- variance.These advantages often work well with audio sig- nals too.Deep CNNs learn features hierarchically ,learning lower le vel features at the shallow end and hierarchically Figure 1 . Mel-spectrogram representation of audio.T ime and frequency are represented by Y and X-ax es respec- tiv ely . learning complex and higher -level features at the deeper ends [14]. Audio analysis tasks uses CNNs with the underly- ing assumption that auditory ev ents are detectable by ob- serving their time-frequenc y representation.As emotion of a song represents a high-le vel feature as compared to beat,chords,tempo as mid-le vel features,this hierarchical nature aligns with the motiv ations behind the architecture of Deep CNNs. 2.2 Representation Mel-spectrograms hav e been one of the most widespread features used for various audio analysis tasks like music auto-tagging and latent feature learning . The use of the mel-scale is supported by domain kno wledge about the hu- man auditory system [19] and has been empirically proven by impressiv e performance gains in various tasks [5]. The visual representation of audio mel-spectrogram is used as input to the MoodNet architecture(Figure 1). 2.3 Con volutions A conv olution layer of size lbd learns d features of lb, where l refers to the height and b refers to the width of the learned kernel. The kernel size also represents the maxi- mum span of a component it can capture. If the kernel size is too small, the corresponding con volutional layer would fail to learn a proper representation of the distribution of the data. For this reason, relati vely lar ger dimensional ker - nels are often preferred [10]. The con volution axes are an important aspect of con- volution layers. 2D con volutions generally perform better than 1D con volutions as the former can learn both tempo- ral and spectral structures and have been used in tasks like boundary detection [25] and chord recognition [10] 2.4 Pooling The pooling operation results in reduction of the fea- ture map size with an operation, usually a max func- tion. It is widely used in most of the modern CNN ar- chitectures.Pooling applies subsampling to reduce the size of feature map.While doing so ,instead of preserving in- formation about the whole input,it only tries to preserve the information of an activ ation in the region. The non- linear behaviour of subsampling also provides distortion Figure 2 . The construction of the text input from lyrical content as a 3 dimensional matrix. and translation inv ariances. For smaller pooling sizes ,the network cannot have enough distortion in variance.On the other hand,if it is too lar ge, many feature locations may be left out when needed. Normally , the pooling axes should match the con volution axes. 3. CNNS IN L YRICAL CONTENT ANAL YSIS 3.1 Motivation 3.1.1 W or d Embeddings Semantic representation of words is a challenging task in natural language processing.W ith the recent development of neural word representations models [13, 17, 18],word embeddings ha ve pro vided a broad scope for distrib utional semantic models. For the first time, distributed represen- tations of words makes it possible to capture semantics of words; including e ven the shift in meaning of words ov er time [16]. Such capability explains the recent suc- cessful switch in the field of natural language process- ing from linear models ov er sparse inputs, e.g. support vectors machines(SVMs) and logistic regression, to non- linear neural-network models ov er dense inputs. As a re- sult,models that rely on word embedding have been very successful in recent years, across a lar ge spectrum of lan- guage processing tasks [15]. W ord embeddings based on neural networks are prediction-based models.For a net- work to learn distributed representations for words,it learns its parameters by predicting the correct word (or its con- text) in a suitable text window over the training corpus. While the main objecti ve of training the entire netw ork is to learn superior parameters, word vector representations are based upon the idea that similar words are closer to- gether . In linguistics, this is known as Distributional Hy- pothesis. This very idea is beneficial for extracting features from text represented in a ’natural’ way; especially for un- derstanding the context of word use in mood prediction. Since this notion is viable for any natural language, we take adv antage of that and apply it to musical lyrics. 3.2 Representation Each word in the lyrics of a song is a v ector .So all the words in a sentence are v ectors which ,when concatenated represent a two-dimensional matrix.Similarly ,multiple lines of sentences when concatenated represent a three- dimensional matrix. The resultant input is similar to that of an image with multiple channels and thus serves as input to our Deep CNN architecture.The representation is sho wn in Figure 2. 4. MOODNET ARCHITECTURE Figure 2 sho ws one of the proposed architectures, a 4-layer MoodNet architecture which consists of 4 con volutional layers and 4 max-pooling layers.F or the audio content , this netw ork takes a log-amplitude mel-spectrogram sized 961366 as input. MoodNet-3 *MoodNet-4 **MoodNet-5 Mel-spectrogram (input: 96 × 1366 × 1) Con v 3 × 3 × 128 MP ( 2 , 4 ) (output: 48 × 341 × 128) Con v 3 × 3 × 256 MP ( 2 , 4 ) (output: 24 × 85 × 256) Con v 3 × 3 × 512 MP ( 2 , 4 ) (output: 12 × 21 × 512) *Con v 3 × 3 × 1024 *MP ( 3 , 5 ) (output: 4 × 4 × 1024) **Con v 3 × 3 × 2048 **MP ( 4 , 4 ) (output: 1 × 1 × 2048) Flatten 2048 × 1 T able 1 . The configurations of 3, 4, and 5-layer architec- tures for the audio modality .The darker layers sho w the additional layers for 4 and 5-layer architectures MoodNet-3 *MoodNet-4 **MoodNet-5 Mel-spectrogram (input: 100 × 10 × 20) Con v 3 × 3 × 6 MP ( 2 , 2 ) (output: 49 × 5 × 120) Con v 3 × 3 × 256 MP ( 2 , 2 ) (output: 24 × 4 × 256) Con v 3 × 3 × 512 MP ( 2 , 2 ) (output: 12 × 2 × 512) *Con v 3 × 3 × 1024 *MP ( 3 , 2 ) (output: 4 × 1 × 1024) **Con v 3 × 3 × 2048 **MP ( 4 , 2 ) (output: 1 × 1 × 2048) Flatten 2048 × 1 T able 2 . The configurations of 3, 4, and 5-layer archi- tectures for the text modality .The darker layers show the additional layers for 4 and 5-layer architectures.Note that zero padding has been used whenever required to a void di- mensionality reaching zero Similarly ,for the lyrical content,the entire corpus is first searched for the line with maximum length,This shall be the width of the three-dimensional matrix input.For all Figure 3 . Overall architecture of MoodNet.Here A –The Con volutional Layers; B –The te xt input as sentence; C –The Mel-Spectrogram input for audio; D –The Fully connected layer with successive dense layers and dropout; E –Softmax output; F –Modalities combined other sentence,the edges are padded with zeroes to match the maximum-width,resulting in a uniform width three- dimensional matrix. Each word is represented as a vector of length 100.W e use the publicly available GloV e [21] vectors, which were trained on a corpus of 6 Billion tokens from a 2014 W ikipedia dump. The vectors are of dimensionality 100, trained on the non-zero entries of a global word-w ord co- occurrence matrix, which tabulates ho w frequently words co-occur with one another in a gi ven corpus.Thus,each word represents a vector ,each sentence a matrix,hence each instance of multiple lined sentence i.e the lyrics of an entire song is represented as a three-dimensional matrix. Both the lyrical and audio components are fed into our Deep CNN architecture,which results in two fea- ture vectors for each modality .As referred in T able 1 and T able 2,we obtain 2048 units from each modality after flat- tening the final layer from the Deep CNN architecture.W e now combine the modalities,resulting in a 1D vector of 5096 units.W e now progressiv ely add Dense layers and a 20 % dropout rate and successi vely reduce the size of the layer from 5096 to 2048 units,1024 units,512 units and fi- nally 256 units,using ReLU activ ation.Finally ,we obtain a fully connected layer of size 5.This layer is now classified using a softmax classifier .The overall architecture is repre- sented in Figure 3. The architecture is extended to deeper ones with 4 and 5 layers.The number of feature maps and subsam- pling sizes for the audio modality are summarised in T able 1.Similarly ,the deep architecture for te xt modality is summarized in T able 2. 5. EXPERIMENTS AND RESUL TS 5.1 Overview W e used two datasets to ev aluate our MoodNet architec- ture, the MIREX Multimodal dataset [21](Dataset I) and the Million Song Dataset [2](Dataset II). W e test three architectures (MoodNet-3,4,5) in both Dataset I and Dataset II. In both datasets, the audio was trimmed as 29.0 clips (the shortest signal in the dataset) and downsampled to 12 kHz. The hop size was set to 256 samples (21 ms) during time-frequency transformation, re- sulting in an output of 1,366 frames in total. For the lyrics, Dataset I already contains the lyrics.For Dataset II ,we selected a subset of the song names and scraped their corresponding lyrics from lyrics.wikia.com if they were a vailable,else remov ed them from the dataset. As each word represents a 100 dimensional vec- tor ,each sentence in the lyrics represented a matrix(by concatenating the vectors vertically).Again,a number of such sentences,when concatenated,would represent a 3- dimensional matrix.Of course,we take necessary steps to handle problems like v ariable length of sentences for a song,or variable number of lines for dif ferent songs. The audio and textual components are used as inputs to the MoodNet architecture. W e used AD AM adaptive optimisation [11] on Keras [3] and Theano [1] frame work during the experi- ments.Categorical cross-entropy function has been used since as it results in faster con ver gence than distance-based functions such as mean squared error and mean absolute error . 5.2 Dataset I:MIREX Multimodal The MIREX Multimodal dataset has a total of 903 30- second clips, each of which belongs to one of the fiv e clus- ters (as shown in T able 3). Each cluster contains dif ferent numbers of clips, say , 170 clips in cluster I, 164 clips in cluster II, 215 clips in cluster III, 191 clips in cluster IV , and 163 clips in cluster V .The distrib ution has been repre- sented in Figure 4. Figure 4 . The distrib ution of samples among the five clus- ters in the MIREX Multimodal Dataset that we obtained. W e used F1 score as the accuracy metric for our exper - iment,as it considers both the precision ’p’ and the recall Cluster Mood I passionate, rousing, confident, boisterous II cheerful, fun, sweet,amiable III poignant, wistful, bittersweet, autumnal IV humorous, silly , campy , quirky , witty V aggressiv e, fiery , intense, volatile T able 3 . Emotion Categories and their defined clusters used in MIREX Multimodal Dataset ’ r’ v alue to compute the score. The results obtained by our architecture are summarized in T able 4. Architecture F-measure MoodNet-3 72.3 MoodNet-4 76.34 MoodNet-5 75.68 T able 4 . F-score obtained by v arious MoodNet architec- tures on the MIREX Multimodal dataset 5.3 Dataset II:Million Song Dataset W e also ev aluated our MoodNet architecture using the Million Song Dataset (MSD) with last.fm tags. W e se- lect the top 50 tags and extracted the mood based tags only .Among the variety of tags present,we clustered the tags according to T able 3.W e selected subset of 50,000 samples from MSD and searched for the corresponding lyrics from lyrics.wikia . W e removed the songs whose Figure 5 . The distrib ution of samples among the five clus- ters in the subset of the Million Song Dataset that we ob- tained. lyrics were not found.Our labels for each song was made by clustering the obtained tags and grouping them into one of the fi ve clusters as described in T able 3.Thus,the total dataset was reduced to 48,476 (40,476 for training and 8000 for validation).The distributionof samples across the datset has been represented by Figure 5. W e followed the same process of representing audio as mel-spectrograms and lyrics as word embeddings as in Dataset I. W e used F-1 score as the accurac y metric in our exper - iment with MSD.The results obtained are summarized in T able 5. Architecture F-measure MoodNet-3 66.28 MoodNet-4 69.73 MoodNet-5 71.29 T able 5 . F-score obtained by v arious MoodNet architec- tures on the Million Song Dataset 5.4 Modalities and Perf ormance W e also experimented with different modalities as in- puts.W e supplied only audio as input ,only text(lyrical con- tent) and both audio and lyrical modalities. From the results we obtained,as shown in T able 6,it is clear that when considering single modality as in- put,lyrics outperform audio.This result is expected as lyrics con vey meaning and emotion more e xplicitly ,than Mel- spectrograms of audio .It is also observed that when both audio and text modalities are combined ,they outperform the results obtained from a single modality input.This leads us to conclude that combining audio and te xt modalities leads to an increase in accuracy in emotion detection. Dataset Audio L yrics Audio+L yrics MIREX Multimodal 56.46 62.39 66.28 MSD 58.34 64.79 69.73 T able 6 . F-score obtained by MoodNet-4 architecture for audio and text modalities as inputs,both individually and combined 6. CONCLUSION AND FUTURE WORK W e presented MoodNet -an emotion detection model based on Deep Con volutional Neural Networks. It w as sho wn that our MoodNet architecture ,based on Deep Con volu- tional Neural Networks with 2D con volutions can be effec- tiv ely used for emotion detection.W e tested our hypothesis on two datasets. In both datasets,we tested our architec- tures with both audio and text input ,individually and com- bined.For audio,we used mel-spectrograms as input.For the text modality ,we used word embeddings as input. Our results sho w that lyrics as a single modality in- put outperforms audio.Also,combining both the modalities gav e a better performance in both our datasets.Thus,we conclude that a combination of v arious modalities as input results in a better representation of a song as a whole. It is to be noted that we didn’t use Long Short- T em Memory(LSTM) based Recurrent Neural Net- works(RNNs) as our task in volved detecting the emotion of an entire piece of music as a whole.In the future,we also plan to build an architecture capable of showing dynamic temporal behaviour .RNNs may be useful in that regard. As future work,we also plan to explore video as an addi- tional input modality .Also,mood based song recommender systems based on our architecture,should ef fectively help users discover new music and tackle the cold-start problem associated with collaborati ve filtering,used in m ost current recommender systems. 7. REFERENCES [1] Frederic Bastien, P ascal Lamblin, Razv an P ascanu and James Bergstra, Ian Goodfellow , Arnaud Bergeron, Nicolas Bouchard, David W arde-Farle y , and Y oshua Bengio. Theano: new features and speed improve- ments. , 2012. [2] Thierry Bertin-Mahieux, Daniel PW Ellis, Brian Whit- man, and Paul Lamere. The million song dataset. Pr oc. of the 12th International Society for Music Information Retrieval Confer ence,(ISMIR) , pages 591–596, 2011. [3] Francois Chollet. K eras:Deep learning library for theano and tensorflow . 2015. [4] R. Collobert and J. W eston. A unified architecture for natural language processing: Deep neural networks with multitask learning. Pr oc. of the 25th international confer ence on Machine learning , pages 160–167. [5] Sander Dieleman and Benjamin Schrauwen. End-to- end learning for music audio. IEEE International Con- fer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP), 2014 , pages 6964–6968, 2014. [6] C. N. dos Santos and Gatti.M. Deep conv olutional neural networks for sentiment analysis of short texts. COLING-2014 , pages 69–78. [7] Lu.L et al. Automatic mood detection and tracking of music audio signals. IEEE T rans. Audio,Speec h and Language Pr ocessing , 14(1):5–18, 2006. [8] A Friber g. In Digital A udio Emotions An Overview of Computer Analysis and Synthesis of Emotional Expr es- sion in Music , pages 1–6, 2008. [9] T Hofmann et.al. Probabilistic latent semantic index- ing. Pr oc. ACM SIGIR , pages 50–57, 1999. [10] Eric J Humphre y and Juan P Bello. Rethinking au- tomatic chord recognition with con volutional neural networks. 11th International Confer ence on Machine Learning and Applications , 2:357–362, 2012. [11] Diederik P . Kingma and Jimmy Ba. Adam: A method for stochastic optimization. CoRR , 2014. [12] A. Krizhevsk y , I. Sutske ver , and G. Hinton. Imagenet classification with deep con volutional neural netw orks. NIPS , 2012. [13] Q. V . Le and T . Mikolov . Distrib uted representations of sentences and documents. Pr oc. of The 31st Interna- tional Confer ence on Mac hine Learning , pages 1188– 1196, 2014. [14] Y ann LeCun and Y oshua Bengio. Conv olutional net- works for images, speech, and time series. The hand- book of br ain theory and neural networks , page 3361(10), 1995. [15] T . Luong, R. Socher , and C. D. Manning. Better word representations with recursive neural networks for morphology . CoNLL , pages 104–113, 2013. [16] C. D. Manning. Computational linguistics and deep learning. COLING , 41(4):701–707, 2015. [17] T . Mik olov , K. Chen, G. Corrado, and J. Dean. Dis- tributed representations of w ords and phrases and their compositionality . Pr oc. Advances in Neural Informa- tion Pr ocessing Systems , 26:3111–3119, 2013. [18] T . Mik olov , K. Chen, G. Corrado, and J. Dean. Ef- ficient estimation of word representations in vector space. ICLR , 2013. [19] Brian CJ Moore. An intr oduction to the psychology of hearing . Brill, 2012. [20] F . Morin and Y . Bengio. Hierarchical probabilistic neu- ral network language model. Pr oc. of the international workshop on artificial intelligence and statistics , pages 246–252, 2005. [21] R. P anda, R. Malheiro, B. Rocha, A. Oliveira, and R. P . Pai va. Multi-modal music emotion recognition: A ne w dataset methodology and comparative analysis. Proc. CMMR , pages 570–582, 2013. [22] Lu Qi, Xiaoou Chen, Deshun Y ang, and Jun W ang. Boosting for multi-modal music emotion. 11th Inter- national Society for Music Information and Retrieval Confer ence , 2010. [23] T ara N Sainath, Abdel rahman Mohamed, Brian Kings- bury , and Bhuv ana Ramabhadran. Deep con volutional neural networks for lvcsr . IEEE International Con- fer ence on Acoustics, Speech and Signal Pr ocessing (ICASSP) , pages 8614–8618, 2013. [24] F Sebastiani. Machine learning in automated te xt cate- gorization. ACM CSUR , 34(1):1–47, 2002. [25] Karen Ullrich, Jan Schluter, and Thomas Grill. Bound- ary detection in music structure analysis using con volu- tional neural networks. Pr oc. of the 15th International Society for Music Information Retrieval Confer ence, ISMIR , 2014. [26] Y .H. Y ang et al. In T owar d multi-modal music emotion classification , pages 70–79, 2008. [27] Y .Bengio, R. Ducharme, P . V incent, and C. Jan vin. A neural probabilistic language model. The J ournal of Machine Learning Resear ch , 3:1137–1155, 2003. [28] Kim Y oon. Conv olutional neural networks for sen- tence classification. Pr oc. of the 2014 Conference on Empirical Methods in Natural Language Pr ocessing (EMNLP) , pages 1764–1751, 2014. [29] Xiang Zhang, Junbo Zhao, and Y ann LeCun. Character-le vel con volutional networks for text clas- sification. Advances in Neural Information Pr ocessing Systems , pages 649–657, 2015.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment