On The Inductive Bias of Words in Acoustics-to-Word Models

Acoustics-to-word models are end-to-end speech recognizers that use words as targets without relying on pronunciation dictionaries or graphemes. These models are notoriously difficult to train due to the lack of linguistic knowledge. It is also uncle…

Authors: Hao Tang, James Glass

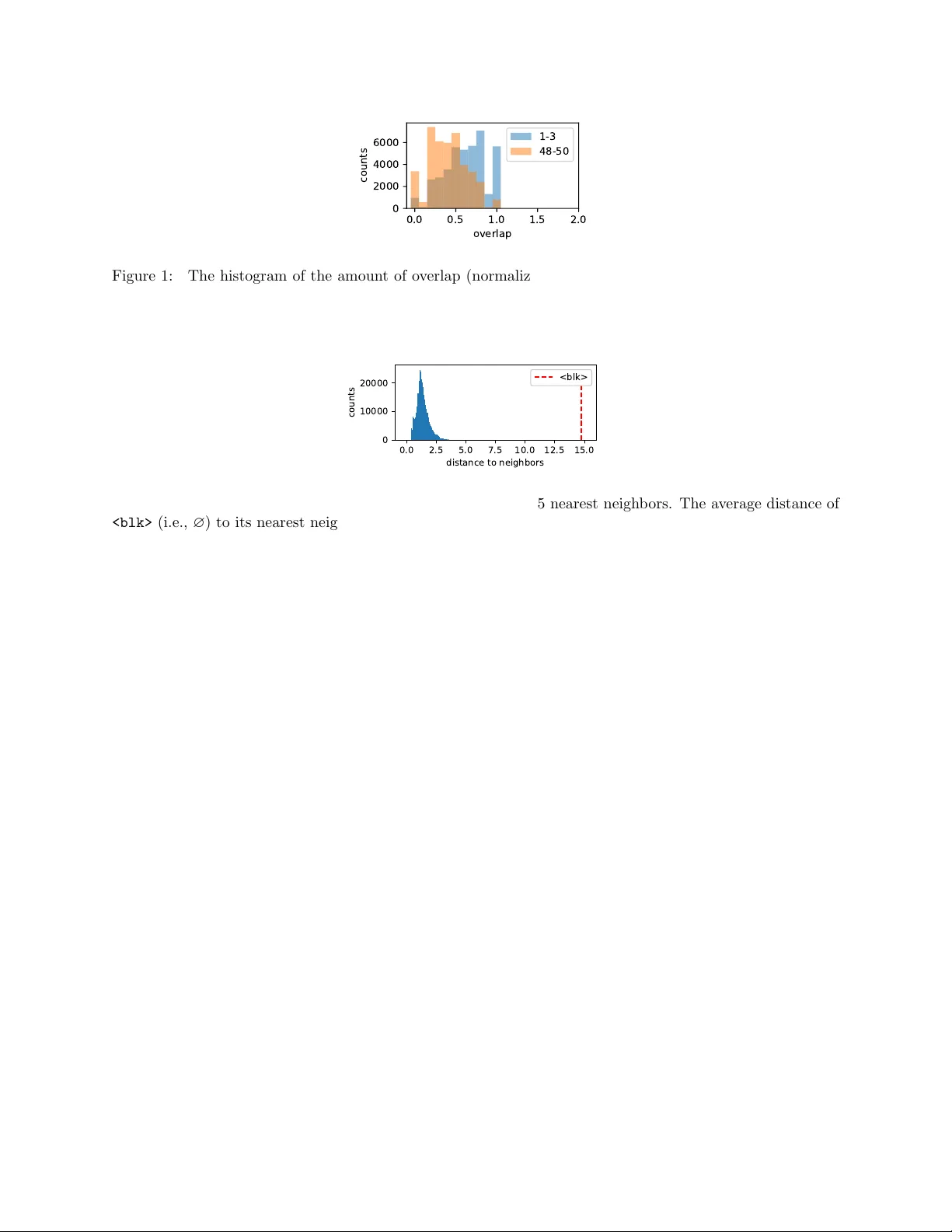

On The Inductiv e Bias of W ords in Acoustics-to-W ord Mo dels Hao T ang, James Glass Massac husetts Institute of T echnology Computer Science and Artificial In telligence Lab oratory Cam bridge MA, USA { haotang, glass } @mit.edu Abstract Acoustics-to-w ord mo dels are end-to-end sp eec h recognizers that use words as targets without relying on pron unciation dictionaries or graphemes. These models are notoriously difficult to train due to the lack of linguistic kno wledge. It is also unclear ho w the amount of training data impacts the optimization and generalization of suc h mo dels. In this work, w e study the optimization and generalization of acoustics-to- w ord models under different amoun ts of training data. In addition, w e study three t yp es of inductiv e bias, lev eraging a pron unciation dictionary , w ord b oundary annotations, and constraints on word durations. W e find that constraining w ord durations leads to the most improv emen t. Finally , w e analyze the w ord embedding space learned b y the model, and find that the space has a structure dominated by the pron unciation of words. This suggests that the contexts of words, instead of their phonetic structure, should b e the future focus of inductive bias in acoustics-to-w ord models. 1 In tro duction Acoustics-to-w ord models are a sp ecial class of end-to-end models for automatic sp eec h recognition (ASR) where the output targets are w ords [12, 2, 6, 5, 1, 17, 19, 9]. In contrast to other end-to-end mo dels where the output targets are phonemes or graphemes [7, 3], acoustics-to-word models directly predict words without relying on an y intermediate lexical units. A parallel set of transcriptions (in words) and acoustic recordings is sufficien t to train these mo dels. This prop ert y offers a significan t edge ov er con ven tional ASR systems, b ecause acoustics-to-word models require minimal domain expertise to train and use, and might potentially b e cheaper to build than con ven tional speech recognizers, dep ending on the cost of experts and obtaining the necessary resources. When not given enough data, acoustics-to-word mo dels are notoriously difficult to train [2]. The reason b ehind the difficult y is often attributed to the lac k of inductive bias, e.g., linguistic knowledge about phonemes and the pronunciation of w ords. T o impro ve the performance of acoustics-to-word mo dels, muc h of the previous w ork has focused on injecting inductiv e bias to the models, such as initializing acoustics-to-word mo dels with a pre-trained phone recognizer [2, 1, 19]. This approac h has been shown to b e critical in training acoustics-to-w ord mo dels. How ever, it defeats the purpose of acoustics-to-w ord models by requiring a lexicon during training. The central question is whether a lexicon is really necessary in training acoustics-to-word models. The result in [2] suggests that the optimization of acoustics-to-w ord mo dels is inheren tly difficult, and they further argue that fitting the training set itself is difficult b ecause there is not enough data. Additional inductiv e bias can provide a b etter initialization for optimizing the ob jective. Ho wev er, it is unclear and rather counter-in tuitiv e that fitting a small training set is more difficult than fitting a large one. In this work, we first study the optimization of acoustics-to-word mo dels. W e sho w that, in contrast to previous studies, acoustics-to-word mo dels are able fit a training set of v arious sizes without any inductive bias. W e then study the sample complexit y of acoustics-to-w ord mo dels, and sho w that, instead of having 1 an all-or-none learning effect as seen in [2], the generalization error decreases as w e increase the num b er of samples. In addition, we study the acoustics-to-w ord models under differen t inductive biases, including the pronunciation of words, word b oundaries, and word durations. W e also v ary the amoun t of additional data needed for these inductive biases. These results characterize the optimization and generalization of acoustics-to-w ord mo dels. Giv en the ability to train an acoustics-to-word mo del end to end, it is natural to ask to what exten t the common resources, such as the phoneme in ven tory , pronunciation dictionary , and language mo del, are learned by the model. W e analyze the weigh ts in the last lay er for predicting words and find sp ecial structures in the word em b edding space. These results sho w the limitation of acoustics-to-word mo dels and shed light on future direction for impro vemen t. 2 Acoustics-to-W ord Mo dels The class of acoustics-to-word mo dels can b e either implemen ted with connectionist temp oral classification (CTC) [4] or sequence-to-sequence mo dels [9]. In this w ork, w e fo cus on the case of CTC acoustics-to-word mo dels. Let x = ( x 1 , . . . , x T ) be an input sequence of T acoustic feature vectors, or frames, where x t ∈ R d for t = 1 , . . . , T . Let y = ( y 1 , . . . , y K ) b e an output sequence of K labels, where y k ∈ L for k = 1 , . . . , K and a v o cabulary set L . F or example, in our case, x t is a log-Mel feature vector, and y k is w ord. W e use the | · | to denote the length of a sequence. F or example, in this case, | x | = T and | y | = K . The goal of the mo del is to map an input sequence to an output sequence. In ASR, it is typical to ha ve an output sequence shorter than the input sequence, i.e., K < T . In order to predict K lab els given T frames, a CTC mo del predicts T lab els, allowing the prediction to ha ve rep eating lab els and ∅ ’s, where ∅ is the blank symbol for predicting nothing. T o get the final output sequence, there is a post-pro cessing step B that remo ves the rep eating lab els and the blank sym b ols, in that order. T o train a CTC mo del, we maximize the conditional likelihoo d p ( y | x ) = X z ∈ B − 1 ( y ) T Y t =1 p ( z t | x t ) (1) for a sample pair ( x, y ), where B − 1 maps a lab el sequence of length | y | to the set of all p ossible sequences of length | x | by rep eating lab els and inserting ∅ ’s. In other words, the function B − 1 the pre-image of B , and note that z t ∈ L ∪ { ∅ } for t = 1 , . . . , | x | . The conditional likelihoo d and its gradient with respect to each individual p ( z t | x t ) can b e efficiently computed with dynamic programming [4]. The individual conditional probabilit y p ( z t | x t ) is t ypically mo deled by a neural net w ork. The in- put sequence x 1 , . . . , x T is first transformed into a sequence of hidden vectors h 1 , . . . , h T , and p ( z t | x t ) = log softmax( W h t ) for t = 1 , . . . , T . Different studies ha ve used different net work architectures [7]. In this w ork, we use long short-term memory netw orks (LSTMs) to transform the input sequence. 3 Inductiv e Bias of W ords Giv en how little domain knowledge is used in acoustics-to-word models, muc h effort has b een put into injecting inductiv e bias in to acoustics-to-word models. In this section, w e describ e three approac hes to ac hieve this. 3.1 Lexicon A lexicon provides a mapping from a word to its canonical pron unciations. In conv en tional sp eec h recognizers, hidden Marko v mo dels are constructed for each phoneme (under different contexts). Once the mo dels are 2 trained, it is straigh tforward to generalize to unseen words by adding the pronunciations of those w ords to the lexicon. In end-to-end sp eec h recognizers, the need for a lexicon is sidestepp ed by using graphemes instead phonemes as targets. Some end-to-end sp eec h recognizers are able to generalize to unseen words this wa y , but it is still v ery difficult to add or remo ve a word from the v o cabulary of an end-to-end speech recognizer. In terms of acoustics-to-word mo dels, it is easy to remov e a w ord from the vocabulary , but hard for the mo dels to generalize to unseen words [5]. T o mak e use of a lexicon, past studies train a phoneme-based CTC mo del on the phoneme sequences con verted from word sequences in the training set [2, 1, 19]. Acoustics-to-word mo dels are then initialized with the pre-trained phoneme-based CTC mo del with the hop e that the acoustics-to-w ord mo dels are able to utilize the phonetic kno wledge enco ded in the phoneme-based CTC mo del. 3.2 W ord Boundary Another type of inductive bias is word boundaries. Sp eec h recognizers in general tend to p erform worse on long utterances than short ones, and rely on insertion p enalties to correct such bias. The training errors of long utterances are also typically higher than those of short ones. One hypothesis is that word boundaries are more difficult to pinp oin t during training for long utterances than for short ones. In similar spirit, if w e ha ve access to word b oundaries, we can train a frame classifier to enco de the kno wledge, and transfer it to acoustics-to-w ord mo dels through initialization. 3.3 W ord Duration Another factor that mak es pinp oin ting w ord b oundaries difficult is the high v ariance of word durations. In the WSJ training set ( si284 ), the a v erage duration of a w ord (measured from the forced alignments) is 339.5 ms with a standard deviation of 198.0 ms, while the a verage duration of a phoneme is 81.6 ms with a standard deviation of 46.7 ms. These statistics sho w that it is more difficult to estimate the n umber of w ords in an utterances than the n umber of phonemes. T o introduce word duration bias in acoustics-to-word mo dels, we down-sample the hidden vectors after eac h LSTM lay er. Sp ecifically , supp ose h n 1 , . . . , h n T is the output vectors pro duced by the ( n − 1)-th LSTM la yer after taking h n − 1 1 , . . . , h n − 1 T as input. Instead of the entire T v ectors, w e feed h n 1 , h n 3 , . . . , h n 2 b T / 2 c− 1 to the n -th LSTM lay er. Every down-sampling reduces the frame rate by half. Down-sampling has b een in tro duced in the past for sp eeding up inference [18, 3, 8, 15], but it can also act as imp osing a constrain t on the minimum w ord duration [14]. 4 Exp erimen ts W e choose the W all Stree Journal data set ( WSJ0 and WSJ1 ) for our exp eriments for the wide v ariety of w ords and ric h word usage in the data set. It consists of 80 hours of read sp eec h and 13,635 unique words in the training set ( si284 ). W e follo w the standard proto col using 90% of the si284 for training, 10% of si284 for developmen t, and dev93 and eval92 for testing. W e obtain forced alignmen ts of words and phonemes with a sp eak er-adaptive Kaldi system, follo wing the standard recip e [10]. W e extract 80-dimensional log-Mel features, and use them as input without concatenating i-vectors. W e use a 4-lay er unidirectional LSTM with 500 units per lay er. The softmax targets at each time step are the 13,635 words plus the blank symbol ( ∅ ). The LSTMs are trained b y minimizing the CTC loss with v anilla sto chastic gradien t descen t (SGD) for 20 ep ochs, a step size of 0.05, and gradien t clipping of norm 5. The mini-batc h size is one utterance. The b est mo del within the 20 ep o c hs is selected and trained for another 20 ep o c hs with learning rate 0.0375 deca yed by 0.75 after each ep o c h. The b est mo del out of the 40 ep o c hs is used for ev aluation. No additional regularization is used. T o see how the amoun t of data impacts optimization and generalization, w e train the LSTMs on different amoun t of training data, one-half of si84 (around 5 hours), si84 (around 10 hours), one-half of si284 3 T able 1: WERs (%) of acoustics-to-word mo dels trained on differen t sizes of the training set. The last column is the training p erplexit y of the last ep o c h. train dev dev93 eval92 si284 si84 -half 63.0 64.8 58.3 1.27 si84 51.9 55.1 45.1 0.54 si284 -half 32.9 36.2 33.5 0.40 si284 21.4 29.4 26.3 0.34 T able 2: PERs (%) of phoneme-based CTC mo dels trained on different sizes of the training set. train dev dev93 eval92 si84 -half 38.0 40.0 26.1 si84 27.7 29.1 16.1 si284 -half 16.1 15.0 12.4 si284 12.3 11.9 9.4 (around 35 hours) and the entire si284 (around 70 hours). Results are shown in T able 1. W e measure the training perplexity of the last ep och, i.e., the sum of cross en tropy at eac h frame divided b y the n umber labels (as opp osed to the num b er of frames). Except for the one with half of si84 , the other three mo dels are able to fit the training set without trouble. Note that the mo dels receive different n umbers of gradient up dates, so it is not surprising that lo wer training error is observed when using more data. The generalization error is b etter when using more data as exp ected. T o in tro duce the inductiv e bias given by the lexicon, w e train a 3-la yer LSTM phoneme-based CTC mo del using the same training pro cedure. The quality of the phoneme recognizer is shown in T able 2, and the p erformance is on par with the state of the art of a similar arc hitecture [7]. Similarly , to introduce the inductiv e bias of word b oundaries, w e train a 4-lay er LSTM w ord frame classifier with a lo ok ahead of one frame and online decoding [13]. The qualit y of the word frame classifier is sho wn in T able 3. This arc hitecture with additional lo ok ahead is able to ac hieve state-of-the-art frame error rates [13]. How ever, to limit the confounding factors, w e fix the lo ok ahead to one. T o see the impact of the amoun t of training data, w e initialize the acoustics-to-word models with phoneme- based CTC mo dels and w ord frame classifiers trained on differen t amounts of training data. The bottom three la yers of LSTMs are initialized with the v arious pre-trained mo dels. In other words, the last lay er of the frame classifier is discarded. The last la yer and the softmax lay er of the initialized acoustics-to- w ord model is randomly initialized the same w ay as the baseline mo dels w ere. This num b er of la yers for initialization is motiv ated b y recent success in multitask CTC [16, 11]. As a comparison, we also ha ve a set of acoustics-to-w ord models initialized with the b ottom three la y ers of the trained acoustics-to-word mo dels in T able 1. Results are sho wn in T able 4. Not only do we see no improv ement in initializing with phoneme- based CTC mo dels and frame classifiers, it actually hurts p erformance in many cases. Small improv ements from initializing with acoustics-to-word models themselves has b een observ ed, with the b est mo del ac hieving 27.5% WER on dev93 and 24.4% on eval92 . Finally , we exp erimen t with the amoun t of down-sampling in LSTM lay ers. W e ha ve four LSTMs with increasing amount of down-sampling after the input. Results are shown in T able 5. W e see significant impro vemen t, with the b est having a down-sampling factor of four. This is consisten t with the commonly used frame rate [18, 8, 15, 14]. 4 T able 3: FERs (%) of word frame classifiers trained on differen t sizes of the training set. train dev dev93 eval92 si84 -half 67.4 62.4 61.7 si84 56.0 55.3 53.2 si284 -half 40.6 46.3 47.2 si284 28.3 44.3 45.7 T able 4: WERs (%) on the dev elopment set for acoustics-to-word models initialized from different models. The first column indicates the amount of data on which the initial mo dels are trained. phoneme CTC w ord frame w ord CTC si84 -half 27.4 25.9 22.3 si84 28.9 24.8 21.9 si284 -half 25.0 31.1 20.6 si284 21.1 22.0 19.4 T able 5: WERs (%) of acoustics-to-w ord models for different amoun t of down-sampling in the LSTM lay ers. do wn-sampling dev dev93 eval92 2 0 21.4 29.4 26.3 2 1 21.8 2 2 17.7 26.8 24.6 2 3 18.6 2 4 18.8 5 0.0 0.5 1.0 1.5 2.0 overlap 0 2000 4000 6000 counts 1-3 48-50 Figure 1: The histogram of the amount of o verlap (normalized by the n umber of phonemes in the shorter w ord) in canonical pronunciations of a w ord to its close neighbors (the first to the third nearest neigh b or) and far neighbors (the 48th to the 50th nearest neighbor). 0 . 0 2 . 5 5 . 0 7 . 5 1 0 . 0 1 2 . 5 1 5 . 0 d i st a n c e t o n e i g h b o r s 0 1 0 0 0 0 2 0 0 0 0 c o u n t s < b l k > Figure 2: The histogram of distances from a word to its top 25 nearest neigh b ors. The av erage distance of (i.e., ∅ ) to its nearest neighbors is sho wn in dashed red. 5 Analysis The gap b et ween the results in T able 1 and the state-of-the-art end-to-end system [20], i.e., 14.1% absolute on eval92 , is large, and it shows that a v anilla acoustics-to-word mo del do es not p erform well out of the b o x. A phoneme-based CTC mo del with a lexicon and without a language mo del can achiev e 26.9% WER on eval92 [7]. Based on these results and the best down-sampling factor, it is likely that the mo del only learns to map acoustic features to words without utilizing the dep endencies b et ween w ords muc h. T o confirm this hypothesis and to understand how words are arranged in the em b edding space, we analyze the weigh ts of the softmax la yer. Given a word, we take its corresp onding weigh t vector in the softmax lay er and compute its nearest neighbors. W e first notice that similar pronouncing words tend to b e nearest neighbors of each other. T o see this, we define the ov erlap (in canonical pron unciation) as the n umber of phoneme tok ens app eared in b oth w ords divided by the length of the shorter word. W e compare the ov erlaps of words from tw o groups, the word to its close neighbors (the first to the third neigh b or) and the word to its far neighbors (the 48th to the 50th neigh b or). As shown in Fig. 1, the close neighbors hav e more similar pronunciations than the far neighbors. This confirms the h yp othesis that the model relies more on the acoustics rather than the language to predict words. W e then notice that the blank symbol is significantly further a wa y (in L2 distance) from other words, and this can b e seen from the histogram of distances in Fig. 2. W e refer to the distance b etw een a w ord and its first nearest neigh b or as the margin. In other words, the margin of the blank sym b ol is significan tly larger than that of other words. W e then notice that the margin is related to the o ccurrence of words in the training set, as is sho wn in Fig. 3. This might be the consequence of conditional indep endence assumed in the CTC loss. If this is indeed the case, improv ed p erformance can only b e achiev ed through a different ob jective or an inductive bias on w ord dep endency . 6 1 0 0 1 0 1 1 0 2 1 0 3 1 0 4 1 0 5 c o u n t s 0 2 4 6 8 d i st t o t h e 1 st n e i g h b o r Figure 3: The num b er of times a word app ears in the training set against the distance to its first nearest neigh b or. The p oin t in the upp er right corner is SIL (the silence). 6 Conclusion In this work, w e study the optimization and generalization of acoustics-to-word mo dels. W e find that the mo dels are able to fit data sets of v arious sizes without trouble. The generalization error decreases as exp ected, as w e increase the amount of training data. In contrast to other studies, we find no impro vemen t in initializing the mo dels with a pre-trained phoneme-based CTC mo del or a word frame classifier. Down- sampling the hidden vectors after the LSTM lay er pro vides significan t improv ement. T o understand what hinders the p erformance, we analyze the w ord embeddings learned by the mo del. The mo del discov ers similar sounding w ords and places them in the corresp onding neighborho od. How ever, the model might not b e utilizing the lab el dep endency m uc h during deco ding. This suggests that lab el dep endency should b e the future fo cus of inductive bias in acoustics-to-w ord mo dels. References [1] Kartik Audhkhasi, Brian Kingsbury , Bh uv ana Ramabhadran, George Saon, and Mic hael Pic heny . Build- ing comp etitiv e direct acoustics-to-word mo dels for English con versational sp eec h recognition. In IEEE International Confer enc e on A c oustics, Sp e e ch and Signal Pr o c essing (ICASSP) , 2018. [2] Kartik Audhkhasi, Bhuv ana Ramabhadran, George Saon, Michael Pichen y , and Da vid Nahamo o. Direct acoustics-to-w ord mo dels for English con versational speech recognition. In Intersp e e ch , 2017. [3] William Chan, Na vdeep Jaitly , Quoc Le, and Oriol Vin yals. Listen, attend and spell: A neural netw ork for large vocabulary conv ersational sp eech recognition. In IEEE International Confer enc e on A c oustics, Sp e e ch and Signal Pr o c essing (ICASSP) , 2016. [4] Alex Grav es, Santiago F ern´ andez, F austino Gomez, and J ¨ urgen Schmidh ub er. Connectionist temp oral classification: lab elling unsegmented sequence data with recurrent neural netw orks. In International Confer enc e on Machine L e arning (ICML) , 2006. [5] Jin yu Li, Guoli Y e, Amit Das, Rui Zhao, and Yifan Gong. Adv ancing acoustic-to-word CTC mo del. In IEEE International Confer enc e on A c oustics, Sp e e ch and Signal Pr o c essing (ICASSP) , 2018. [6] Jin yu Li, Guoli Y e, Rui Zhao, Jasha Dropp o, and Yigan Gong. Acoustic-to-word mo del without OO V. In IEEE Workshop on Automatic Sp e e ch R e c o gnition and Understanding (ASRU) , 2017. [7] Y a jie Miao, Mohammad Gow ayy ed, and Florian Metze. EESEN: End-to-end sp eech recognition using deep RNN mo dels and WFST-based deco ding. In IEEE Workshop on Automatic Sp e e ch R e c o gnition and Understanding (ASRU) , 2015. 7 [8] Y a jie Miao, Jinyu Li, Y ongqiang W ang, Shi-Xiong Xhang, and Yifan Gong. Simplifying long short-term memory acoustic mo dels for fast training and deco ding. In IEEE International Confer enc e on A c oustics, Sp e e ch and Signal Pr o c essing (ICASSP) , 2016. [9] Shruti Palask ar and Florian Metze. Acoustic-to-w ord recognition with sequence-to-sequence mo dels. arXiv:1807.09597 , 2018. [10] Daniel P ov ey , Arnab Ghoshal, Gilles Boulianne, Luk as Burget, Ondrej Glem b ek, Nagendra Go el, Mirko Hannemann, P etr Motlicek, Y anmin Qian, Petr Sch warz, et al. The k aldi sp eec h recognition toolkit. In IEEE 2011 Workshop on Automatic Sp e e ch R e c o gnition and Understanding (ASRU) , 2011. [11] Ramon Sanabria and Florian Metze. Hierarc hical multi task learning with CTC. , 2018. [12] Hagen Soltau, Hank Liao, and Hasim Sak. Neural sp eec h recognizer: Acoustic-to-w ord LSTM mo del for large vocabulary sp eech recognition. In Intersp e e ch , 2017. [13] Hao T ang and James Glass. On training recurrent netw orks with truncated backpropagation through time in sp eec h recognition. In IEEE Workshop on Sp oken L anguage T e chnolo gy (SL T) , 2018. [14] Hao T ang, Liang Lu, Lingp eng Kong, Kevin Gimpel, Karen Livescu, Chris Dyer, Noah A. Smith, and Stev e Renals. End-to-end neural segmental models for sp eec h recognition. IEEE Journal of Sele cte d T opics in Signal Pr o c essing , 11, 2017. [15] Hao T ang, W eiran W ang, Kevin Gimp el, and Karen Livescu. Efficient segmen tal cascades for sp eec h recognition. In Intersp e e ch , 2016. [16] Sh ubham T oshniwal, Hao T ang, Liang Lu, and Karen Liv escu. Multitask learning with low-lev el auxil- iary tasks for enco der-deco der based sp eec h recognition. In Intersp e e ch , 2017. [17] Sei Ueno, Hirofumi Inaguma, Masato Mimura, and T atsuya Kaw ahara. Acoustic-to-word attention- based mo del complemented with character-lev el CTC-based mo del. In IEEE International Confer enc e on A c oustics, Sp e e ch and Signal Pr o c essing (ICASSP) , 2018. [18] Vincen t V anhouck e, Matthieu Devin, and Georg Heigold. Multiframe deep neural net works for acoustic mo deling. In IEEE International Confer enc e on A c oustics, Sp e e ch and Signal Pr o c essing (ICASSP) , 2013. [19] Chengzh u Y u, Ch unlei Zhang, Chao W ang, Jia Cui, and Dong Y u. A multistage training framew ork for acoustic-to-w ord mo del. In Intersp e e ch , 2018. [20] Y u Zhang, William Chan, and Navdeep Jaitly . V ery deep conv olutional net works for end-to-end speech recognition. In IEEE International Confer enc e on A c oustics, Sp e e ch and Signal Pr o c essing (ICASSP) , 2017. 8

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment