Detecting Backdoor Attacks on Deep Neural Networks by Activation Clustering

While machine learning (ML) models are increasingly trusted to make decisions in a wide range of areas, the safety of systems using such models has become a growing concern. In particular, ML models are often trained on data from potentially untrustworthy sources, providing adversaries with the opportunity to manipulate them by inserting carefully crafted samples into the training set. Recent work has shown that this type of attack, called a poisoning attack, allows adversaries to insert backdoors or trojans into the model, enabling malicious behavior with simple external backdoor triggers at inference time and only a blackbox perspective of the model itself. Detecting this type of attack is challenging because the unexpected behavior occurs only when a backdoor trigger, which is known only to the adversary, is present. Model users, either direct users of training data or users of a pre-trained model from a catalog, may not be able to guarantee the safe operation of their ML-based system. In this paper, we propose a novel approach to backdoor detection and removal for neural networks. Through extensive experimental results, we demonstrate its effectiveness for neural networks classifying text and images. To the best of our knowledge, this is the first methodology capable of detecting poisonous data crafted to insert backdoors and repairing the model that does not require a verified and trusted dataset.

💡 Research Summary

The paper addresses backdoor (trojan) attacks on deep neural networks (DNNs), which arise when an adversary injects maliciously crafted samples into a training set drawn from untrusted sources. Unlike evasion attacks, backdoor attacks remain dormant on clean inputs and activate only when a specific trigger, known only to the attacker, is present, which makes detection extremely difficult without a trusted clean dataset. Existing defenses either require a large verified dataset for outlier detection or involve costly retraining, which is impractical for modern DNNs.

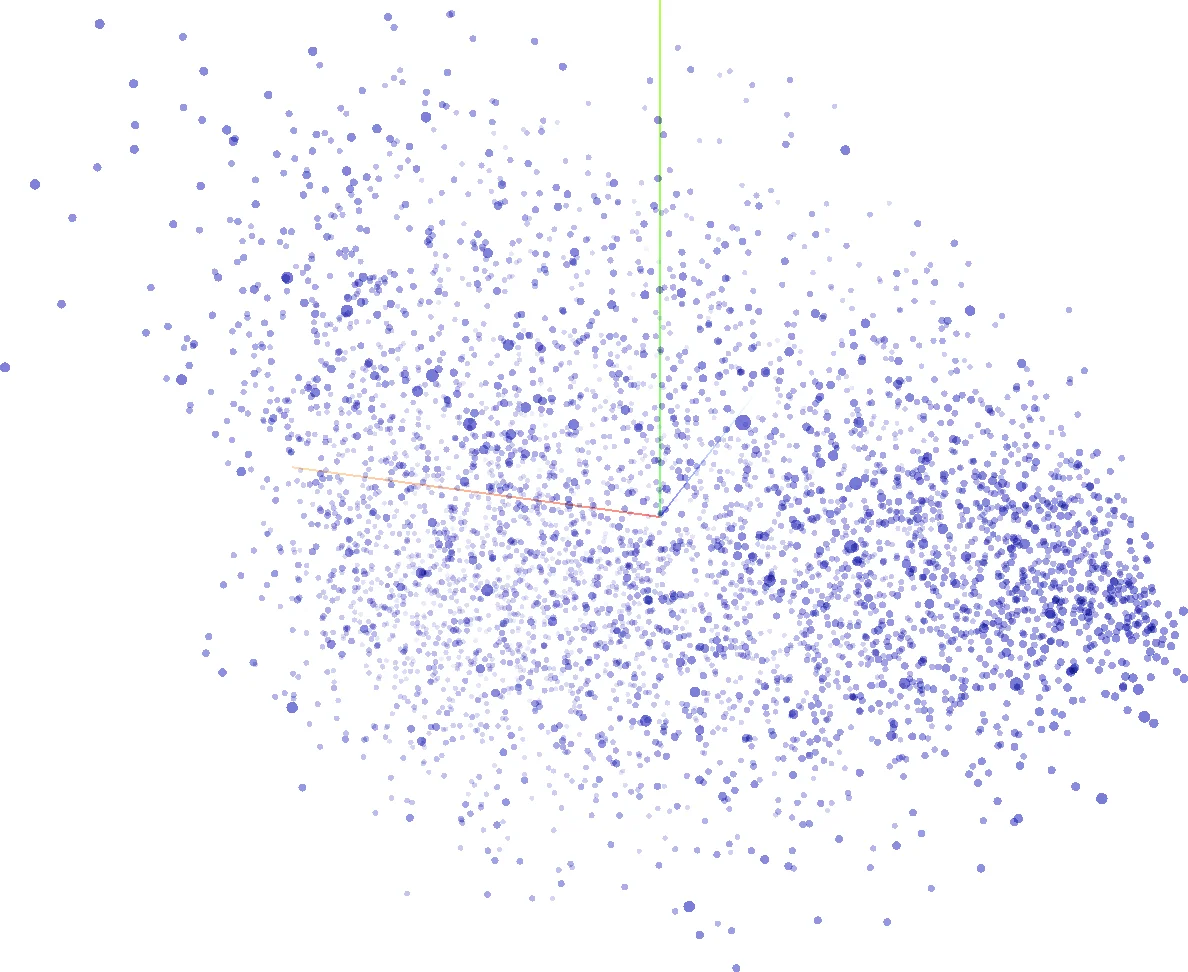

To overcome these limitations, the authors propose Activation Clustering (AC), a novel methodology that leverages the internal representations of a trained network to identify poisoned samples. The core insight is that, while both legitimate target‑class samples and backdoor‑triggered samples receive the same final prediction, they do so for fundamentally different reasons. Legitimate samples activate features that genuinely belong to the target class, whereas backdoor samples activate features of the source class together with the trigger. Consequently, the activation patterns in the last hidden layer of the network should form two distinct clusters when backdoor samples are present.

The AC pipeline proceeds as follows: (1) Train the DNN on the potentially poisoned training set. (2) Feed every training example through the trained model and record the activation vector of the final hidden layer, flattening it to a one‑dimensional representation. (3) Reduce dimensionality using Independent Component Analysis (ICA), which the authors found superior to Principal Component Analysis for preserving discriminative structure. (4) Apply k‑means clustering with k = 2 to the reduced activations for each predicted class separately. (5) Determine which cluster, if any, corresponds to poisoned data. Two complementary strategies are introduced:

- Exclusionary Reclassification (ExRe): Remove the candidate cluster, retrain the model on the remaining data, and then classify the removed samples with the new model. If the removed samples are mostly classified as their original label, the cluster is deemed legitimate; if they are predominantly classified as another class (the source class), the cluster is flagged as poisoned. An ExRe score l/p (where l is the number of correctly classified samples and p the number classified as the most frequent alternative class) is compared against a threshold T (default = 1).

- Relative Size Comparison: In the absence of a clear ExRe signal, the size of the cluster relative to the total number of samples for that class is examined; unusually small clusters are likely to be poison.
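The clustering and analysis steps can be illustrated with a minimal, standard-library sketch. It is not the authors' implementation: it assumes the activations of one class have already been extracted and reduced (for example by ICA), uses a plain 2-means with a deterministic initialisation chosen for this example, and the function names and the `size_threshold` value of 0.35 are hypothetical.

```python
from collections import Counter
from math import dist


def two_means(points, max_iters=100):
    """k-means with k = 2 on a list of equal-length float tuples.

    Initialisation (an assumption of this sketch): the first point and the
    point farthest from it, which keeps the example deterministic.
    """
    centers = [points[0], max(points, key=lambda p: dist(p, points[0]))]
    labels = [0] * len(points)
    for _ in range(max_iters):
        # Assignment step: each point joins its nearest center.
        labels = [0 if dist(p, centers[0]) <= dist(p, centers[1]) else 1
                  for p in points]
        # Update step: move each center to the mean of its members.
        new_centers = []
        for k in (0, 1):
            members = [p for p, lab in zip(points, labels) if lab == k]
            if members:
                new_centers.append(
                    tuple(sum(axis) / len(members) for axis in zip(*members)))
            else:
                new_centers.append(centers[k])  # empty cluster: keep old center
        if new_centers == centers:
            break
        centers = new_centers
    return labels


def flag_small_cluster(labels, size_threshold=0.35):
    """Relative size comparison: return the id of the smaller cluster if its
    share of the class is below size_threshold (hypothetical value), else None."""
    counts = Counter(labels)
    small = min((0, 1), key=lambda k: counts[k])
    return small if counts[small] / len(labels) < size_threshold else None


def exre_score(retrained_predictions, original_label):
    """ExRe score l/p for one removed cluster.

    l = removed samples the retrained model assigns to their original label,
    p = removed samples assigned to the most frequent other class.
    """
    l = sum(1 for pred in retrained_predictions if pred == original_label)
    others = Counter(pred for pred in retrained_predictions
                     if pred != original_label)
    p = others.most_common(1)[0][1] if others else 0
    return float("inf") if p == 0 else l / p


# Toy reduced activations for one class: 90 tightly grouped points and
# 10 points far away, standing in for legitimate and poisoned samples.
activations = ([(0.01 * i, 0.0) for i in range(90)]
               + [(10 + 0.01 * i, 10.0) for i in range(10)])
labels = two_means(activations)
suspect = flag_small_cluster(labels)
```

On this toy data the ten distant points form the smaller cluster, so `suspect` identifies it. A cluster whose `exre_score` is above the threshold T would be treated as legitimate, and one below it as poisoned, following the description above.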

The authors evaluate AC on three domains: (i) MNIST digit classification, where a 3 × 3 inverted‑pixel pattern in the bottom‑right corner serves as the trigger; (ii) LISA traffic‑sign dataset, where a post‑it‑like sticker on stop signs is the trigger; and (iii) Rotten Tomatoes sentiment analysis, where a unique string “‑travelerthehorse” appended to positive reviews creates the backdoor. In each case, poisoned samples are labeled as the target class (e.g., digit l → (l+1) mod 10, stop sign → speed‑limit, positive review → negative).
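To make the poisoning setup concrete, the following sketch shows how such samples could be constructed. It is an illustration under stated assumptions, not the authors' code: the image is taken to be a list of rows with pixel values in 0..255, "inverted" is interpreted as `max_value - pixel`, the text trigger is appended after a single space, and both function names are hypothetical.

```python
TRIGGER_SIZE = 3  # 3 x 3 patch, as described for the MNIST experiment


def poison_digit(image, label, max_value=255):
    """Return a poisoned copy of (image, label).

    The bottom-right TRIGGER_SIZE x TRIGGER_SIZE pixels are inverted and the
    label is shifted to (label + 1) mod 10. The input image is not modified.
    """
    poisoned = [row[:] for row in image]  # copy each row
    height, width = len(poisoned), len(poisoned[0])
    for r in range(height - TRIGGER_SIZE, height):
        for c in range(width - TRIGGER_SIZE, width):
            poisoned[r][c] = max_value - poisoned[r][c]
    return poisoned, (label + 1) % 10


def poison_review(text, trigger="-travelerthehorse"):
    """Append the trigger string to a positive review and relabel it negative."""
    return text + " " + trigger, "negative"


# Example: an all-black 28 x 28 image labelled 9 becomes a sample labelled 0
# with a bright 3 x 3 patch in the bottom-right corner.
blank = [[0] * 28 for _ in range(28)]
poisoned_image, poisoned_label = poison_digit(blank, 9)
poisoned_text, poisoned_sentiment = poison_review("A warm, funny film.")
```

Only the trigger region and the label change, which is why the poisoned samples still activate source-class features in the trained network.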

Experimental results demonstrate that AC achieves detection rates exceeding 95 % across all datasets, with near‑perfect performance (≈99 %) on MNIST. The method remains robust when multiple backdoors are inserted simultaneously or when classes are multimodal (contain sub‑populations). After identifying and removing poisoned samples, the model is retrained; the clean model’s standard accuracy drops by less than 0.1 %, while the backdoor‑induced misclassifications disappear entirely.

Key contributions of the work are:

- The first backdoor detection technique that does not rely on any trusted clean data, making it applicable in realistic settings where data provenance is uncertain.

- A simple yet effective use of activation‑space clustering, requiring only standard training and a lightweight post‑processing step.

- Open‑source release as part of IBM’s Adversarial Robustness Toolbox, facilitating adoption by practitioners.

Limitations include the computational cost of ICA on very large activation matrices, sensitivity to the choice of dimensionality‑reduction method, and a potential decline in detection efficacy when the proportion of poisoned data exceeds roughly 30 %, at which point clusters may merge. Future work suggested by the authors involves automatic threshold selection, exploring alternative reduction techniques (e.g., LDA, t‑SNE), extending the approach to multiple hidden layers, and adapting AC for streaming or online learning scenarios.

In summary, Activation Clustering provides a practical, data‑agnostic defense against backdoor poisoning attacks, bridging a critical gap between theoretical vulnerability analyses and deployable security mechanisms for modern deep learning systems.
