Hardware-Efficient Structure of the Accelerating Module for Implementation of Convolutional Neural Network Basic Operation

This paper presents a structural design of the hardware-efficient module for implementation of convolution neural network (CNN) basic operation with reduced implementation complexity. For this purpose we utilize some modification of the Winograd mini…

Authors: Aleks, r Cariow, Galina Cariowa

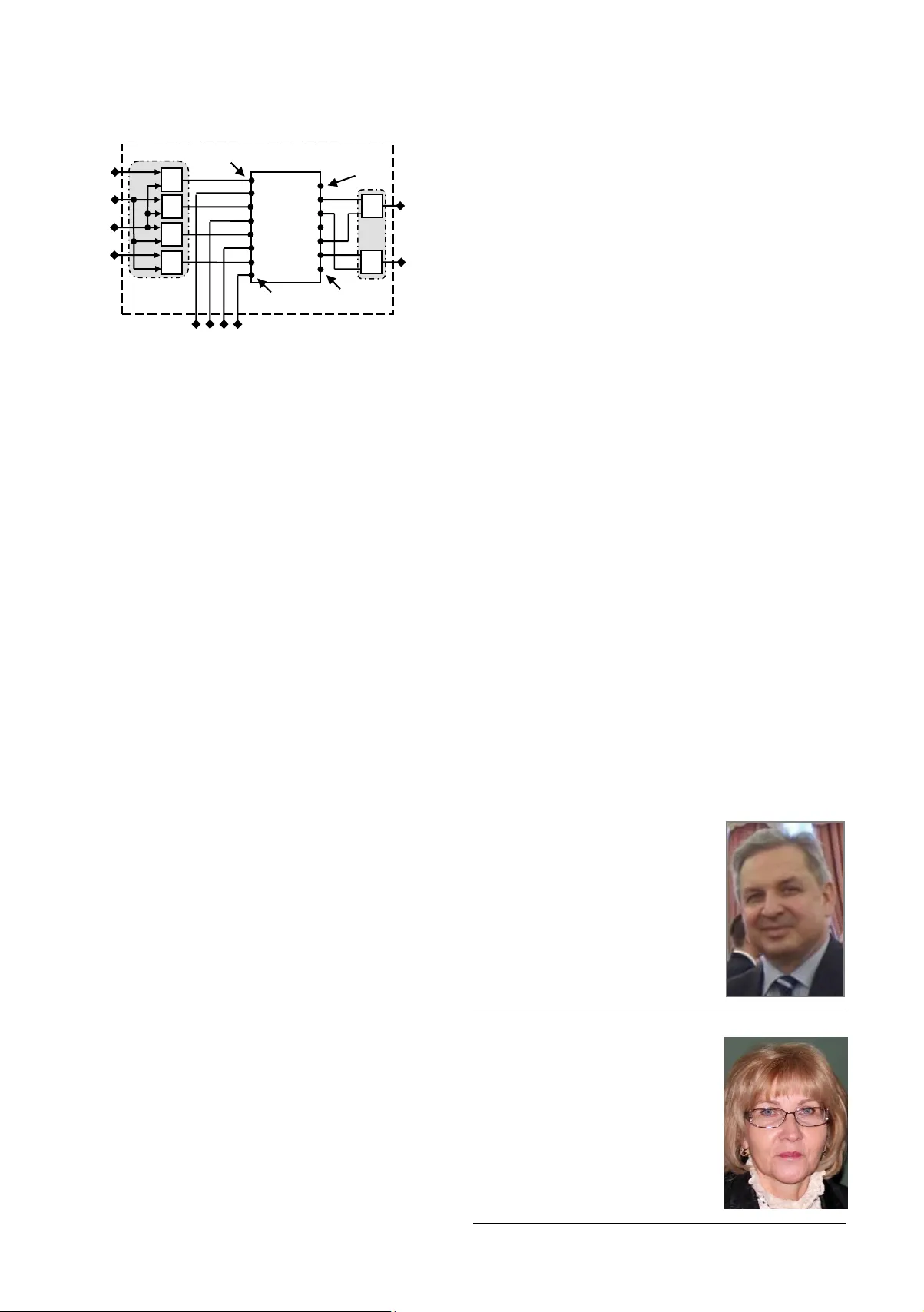

Measurement A utomation Mon itoring, Jan. 2 017, vol. 61, no. xx 1 A leksandr CARIOW 1 , Galina C ARIOWA 1 1 W EST POMIERANIAN UN IVERSITY OF TECHNOLOG Y, SZCZECIN, Żołniersk a St. 49, 71 -21 0, Szczecin Pol and Hardw are-Efficient Structure of the A ccelerating Modu le for Implementation of C onvolution al Neural Netw ork Bas ic Operation Abstract This paper presents a structural design of the hardware-ef ficient module f or implementatio n of convolution neural network (CNN) basic operation with reduced impleme ntation complexity . For this purpose we utilize some modification of the Winograd ’s minimal filtering method as well as computation vectorization principle s. This module calculate inner products of two consecutive segme nts of the original data sequence , formed by a sliding window of length 3, with t he elements of a filter i mpulse response. The fully parallel structure of th e module for calculating these two inner products, based on the i mpleme ntation of a naïve method of calculation, requires 6 binary multipliers and 4 binary ad ders. T he use of t he Winog rad ’s minimal filter ing met hod allow s to cons truct a mo du le str ucture t hat requires only 4 bi nary multipliers and 8 binary adders. Since a high- performance convolutional n eural networ k c an contain t ens or even hundreds of such mo dules, such a reduction can have a significant effect. Keywords : convol ution neural network, Winogra d ’s minimal filtering algorithm, imple mentation comple xity reduction, FPGA implementation 1. Introduction Artificial intelligence, deep learning, and neural networks represent powe rful and in credibly effective machine learning -based techniques u sed to solve many scientific and practical problems. Applications of d eep neural n etworks to machine learning are diverse and promptly develop ing, reachin g th e various fields o f fundamental sciences, technologies and real-world. Am ong th e various types of deep neural networks, convolutional neural networks (CNN) are the most widely used [1]. The basic and most time-consuming operation in CNN is the operation of a two- dimensional convolution. Several me thods have been proposed to accelerate the calculation o f convolu tion, in cluding th e reduction of arithmetic op erations via Fast Fourier transform ( FFT ) and the use of hardware accelerators based on FPGA, G PU and ASIC [2- 16 ] . FFT based method of com puting convolution is traditionally used for large filters, but state of the art CNN use small filters. In this situation one of the most effective algorithms used in the computation o f a small-length two-dimensional convolution is th e Winograd's minimal filtering algorithm, that is most intensively used in rece nt tim e [ 17 ]. The a lgorithm compute linear c onvolution over small tiles with minimal complexity, which makes it more effective with small filters and small batch sizes. In fac t, this algorithm ca lculates two inner p roducts of neighboring vec tors formed by a sliding tim e window from the c urrent data stream w ith an impulse response of the 3-tap finite impulse response (FIR) filter. Many publications hav e been devoted to th e impleme ntation of computations in networks base d on the Winograd's minimal filtering me thod [ 17 -20]. How ever, the principles of organizing the structure o f the m odule that im plements the filtering algorithm have not been considered in d etail by anyone. Our publication is intended to fill this gap. 2. Preliminaries As alread y no ted, the b asic o peration of convolutional neu ral networks is a sliding inn er product of vectors, for med by a moving time windo w from the current data stream with an impulse response of the M -tap FIR filter. It can be described by the following f ormula: i M i l i l h x y 1 0 , 1 ,..., 1 , 0 M i , 1 ,. .. , 1 , 0 M N l , where i x are the elements of the curren t data stream, i h are the elements of the im pulse response of FIR f ilter, which are constants. Direct calculation of the inner product of two vectors of length M requires M multiplication and M -1 additions. Direct a pplication of two c onsecutive steps of a 3-tap FIR f ilter with coefficients } , , { 2 1 0 h h h to a set of 4 elements } , , , { 3 2 1 0 x x x x requires 6 additions and 6 multiplications: 2 2 1 1 0 0 0 h x h x h x y , 2 3 1 2 0 1 1 h x h x h x y (1) Fig. 1 explains the essence of the reasoning. Fig. 1. Demonstration of t he essence of co mputat ion executio n in accorda nce w ith the Winograd's mini mal filtering method The idea of Winograd's m inimal filtering method is t o compute these two filter outputs in following way [ 17 ]: 0 2 0 1 ) ( h x x , 2 ) ( 2 1 0 2 1 2 h h h x x , 2 ) ( 2 1 0 1 2 3 h h h x x , 2 2 1 4 ) ( h x x , 3 2 1 0 y , 4 3 2 1 y . The values 2 ) ( 2 1 0 h h h and 2 ) ( 2 1 0 h h h can be calculated in advance, then this method requires 4 multiplications and 8 additions, which is equal to number of arith metical operation s in the direct me thod. But since multiplica tion is a m uch more complicated operation than addition, the Winograd's minimal filtering method is more efficient than the direct method of com putation. The abo ve expressions exhaustively d escribe the entire set of mathematical operations needed to compute, but th ey d o not disclose the way and sequence of the com putation organization, n or the stru cture o f the pro cessor module that im plements these operations. 3. Structural sy nthesis of Wi nograd's min imal filtering module Let ] , , , [ 3 2 1 0 1 4 x x x x X - be a colu mn vector, that represent the input tile , ] , , [ 2 1 0 1 3 h h h H - be a c olumn v ector, that contains the all coefficients of i mpulse respo nse of 3 -tap FIR filter (filter tile), and ] , [ 1 0 1 4 y y Y - be a column v ector contain ing result of computing two outputs the 3-tap FIR filter. Then, a fully parallel 0 x 1 x 2 x 3 x 2 0 0 i i i h x y 2 0 1 1 i i i h x y 2 Measure ment Automation Monitoring, Jan. 2 017, vol. 61, n o. xx algorithm f or com putation 1 4 Y using Winograd's minimal filtering m ethod can be w ritten w ith t he help of following matrix - vector calculating procedure: 1 4 ) 1 ( 4 1 4 ) 2 ( 4 2 1 4 ) ( X A S A Y diag (2 ) where 1 1 1 1 1 1 1 1 ) 1 ( 4 A , 1 1 1 0 0 1 1 1 ) 2 ( 4 2 A , and ] , , , [ 3 2 1 0 1 4 s s s s S , 0 0 h s , 2 ) ( 2 1 0 1 h h h s , 2 ) ( 2 1 0 2 h h h s , 2 3 h s . Entries o f the matrix ) ( 1 4 S d i a g can be compu ted with the help of the following procedure: 1 3 ) 1 ( 3 5 ) 2 ( 5 4 4 1 4 H A A D S (3 ) where ) 1 , 2 1 , 2 1 , 1 ( 4 di ag D , 1 1 1 1 1 1 ) 1 ( 3 5 A , 1 1 1 1 1 1 ) 2 ( 5 4 A , Fig. 2 show s a data flow diagram of the proposed algorit hm f or the implementation of Winogra d's mini mal filtering basic operation. In this paper, data flow diagr ams are oriented from left to right and Fig. 3 shows a data flow diagram of the process for calculating the v ector 1 4 S entries. Straight lines in the fig ures denote the op erations of data transfer. The circles in these figures show the operation of multiplication by a real number in scribed inside a circle. Points where lines converge denote summation a dotted lines ind icate the sign-change operations. We use th e usual lines without arrows on purpose, so as not to clutter the picture. Fig. 2 . The data flow diagram o f proposed algorith m for implementat ion of Winograd's mini mal filtering bas ic operation Fig. 3 . The data flow diagra m describing t he process of calculating entr ies of the vector 1 4 S in accordance w ith the procedure (3 ). In low po wer appli cation specific integrated circuits (ASIC) design, optimization must be primarily do ne at the level of transistor amount. From this point of view a multiplication requires much more intensive hardware resources th an an addition. Mo reover, a binary multiplier occupies much more area and co nsumes much more power th an bin ary adder. This is because the implementation co mplexity of a fully parallel multip lier grows qu adratically with operand length, while the implementation complexity of an adder increases lin early with operand length. Therefore, the algorithm containing as little as possible of multiplications is p referable from the point of view of ASIC design. Fig. 4 shows a structure of processing module for ASIC- oriented implementation of Wi nograd's minimal filtering basic operation. The module contains four two-input and two three-input algebraic adders, four m ultipliers and a register memory f or storing the va lues i s . It is assumed that these elem ents can be pre computed and written to th e register memory before the calculations begin. Depending o n th e requireme nts for the speed of calculations, the modules can be cascaded and combined into clusters. Fig. 4 . The structure of the processor module for implement ing the Winograd’ s minimum filtering ope ration (ASIC point of view). Today a better alternative than A SIC are FPGAs (field - programmable gate arrays) - the in tegrated circuits designed to be configured by a customer o r a d esigner. If th e earl y FPGAs contained only small embedd ed multipliers, then more recent FPGAs co ntain DSP blocks, th at in clude not only multipliers, but also internal adders designed in such a w ay that part of the additions in (1) can also be computed inside th e DSP blocks. However even if the DSP blo ck contains embedded multipliers, their number is always limited. This mea ns that if the implemented scheme has a large nu mber of multiplications, the projected processor may not always fit into the ch ip and the problem of minimizing the number of multipliers remains relevant. This applies fully to FPGAs Stratix II that contain DSP blocks, each of which includes just 4 multipliers, as well as three adders at the block input and three adders at the block o utput. Such a b lock structure allows using the hardware resources o f the chip with a maximum degree of efficiency. It is easy to see that a full y parallel implementation of direct calculations does not fit into the boundaries of one Stratix II DSP block. Fig. 5 shows a structu re of processing module for implementation of Winograd's minima l filtering basic operation on the base of Altera Stratix II high-s peed FPGA chip. The b ulk of t he computation is p erform ed inside the DSP block, but the adders outlined by the dash-dotted line on a gray background are implemented using external logic gates. By way of background information , it is necessary to em phasize , th at not all outputs of the DS P block are used in the proposed solution (see F ig . 5). Depending o n th e performance that is needed in the neural network, the number of processor modules implementing the Winograd’s filtering basic operation can be quite large. 0 s 1 s 2 s 3 s 0 x 1 x 0 y 1 y 2 x 3 x 0 s 1 s 2 s 3 s 2 1 2 1 0 h 1 h 2 h 0 y 1 y 0 x 1 x 2 x 3 x 0 s 1 s 2 s 3 s Measurement A utomation Mon itoring, Jan. 2 017, vol. 61, no. xx 3 Fig. 5 . The structure of the acce lerating module for imple menting the W inograd’s minimum filtering bas ic operation (FPGA point of view ). 4. Conclusion This work lo oks into some issues of structural design of the hardware-efficient module for implementation of CNN basic operation using W inograd’s minimal filtering method. This method reduces the number of multipliers at the expense o f increased number o f adders . Taking in to account a relative hardware complexity of m ultiplier and add er, reducing the number of multipliers at th e expense of th e increased n umber o f adders is desirable. The calculations demonstrate the effectiv eness o f proposed solutions and their universal impact o n the different types of CNN layers as well as on the principles of network operation. 5. References [1] Kriz hevsky A. Sutsk ever I. and Hi nton G. E. : Imagene t classificatio n with deep convolutional neural networ ks, in Procee dings of the 26th Annual Conference on Neural Information Processing Sy stems (NIPS ’12), pp. 1097– 110 5, Lake T ahoe, Nev, US A, Decembe r 2012. [2] Farabet C. , Martini B. , Akselr od P. , Talay S. , L eCun Y. , and Culurciell o E. , : Hardw are accelerated convolutional neural networks for sy nthetic visio n syste ms, in Proceedings of 2 010 I EEE I nternational Symposium on Cir cuits and Sy stems. 2010, p p. 257 – 260. [3] Zhao R., Song W., Zhang W., Xing T., Lin J. -H, Srivastava M., Gupta R., and Zhang Z.: Accele rating binarized c onvolutional neural netw orks with sof tware-pro grammable fpgas, in Proce edings of the 2017 ACM/SIG DA I nternational Symposium o n Fiel d -Progr ammable Gate Array s, 2017, pp. 15 – 24. [4] Zhang C, Li P, Sun G, Guan Y, Xiao B, Cong J.: Optimiz ing FPGA- based accelerator design for dee p c onvolutional neural netw orks. Procee dings of the 2015 ACM/SIG DA International Symposium on Field-Prog rammable Gate A r rays: ACM, USA , 2015; 161 – 170. [5] Škoda P., L ipić T., Srp Á . , Rogina B. M., Skala K., and Vajda F.: Impleme ntation framework for arti ficial neural networks on fpga, in 2011 Proce edings of the 3 4th I nt ernational C onvention MI PRO, May 2011, pp. 274 – 278. [6] Cadambi S. , Majumdar A. , Becchi M., Chakradhar S. , and. Graf H. P: A programmable parallel accelerator for learning and classification , in Procee dings of the 19th i nternational conference on P aralle l architectures and comp ilation techni ques, ser. PA CT ’10. New York, NY, USA: A CM, 2010, pp. 273 – 28 4. [7] Qadeer W. , Hamee d R. , S hacham O. , Venkat esan P. , Kozy raki s C. , a nd Horow itz M. A. : Convolution engine : ba lancing efficiency & flexibility in sp ecialize d computing, in ACM SIGA RCH Comp uter Architecture News, vo l. 41, no. 3. ACM, 2 013, pp. 24 – 35. [8] Chakradhar S. , Sankarad as M. , Jakkula V. , and Cadambi S. : A dynamically configurable coprocessor for convolutional neural networ ks. S IGA RCH Comput. A rchit. News, Jun e 2010, 38(3) , pp. 247 – 257. [9] Farabet C ., Poulet C., Han J.Y., L eCun Y.: CN P: an FPGA-base d processor for convolutional networks. F PL 2009.International Conference on Fiel d Progr ammable L ogic and A pplications, 2009: IEEE, Prague, Cze ch Republic, 2009, pp. 32 – 37. [10] Farabet C. , Martini B. , Akselr od P. , Talay S. , LeCun Y. , and. Culurciell o E.: Hardware accelerated convol utional neural n etwor ks for synthetic vision systems, in Circuits and Systems (ISCAS) , Proceedings of 20 10 I EEE International Sy mp osium, 2010, pp. 257 – 260. [11] Ovtcharov K, Ruwase O, Kim JY, Fower s J, Strauss K, Chung ES. Accele rating deep convolutional neural netw orks using specialized hardware . Microsoft Research Wh itepaper : Microsof t Research, 2 015. [12] Li Y., L iu Z ., Xu K., Yu H., an d Ren F ., “A 7.663 - tops 8.2- w energy efficient fpga accelerator for binary convolutional neural networks,” arXiv:1702.06 392, Fe b 2017. [13] Chen Y. H., Krishna T. , Emer J. S. , and Sze V.: Eyer iss: A n En ergy- Efficient Reconfigurable Accel erator for Deep Convolutional Neural Networks, I EEE Jour nal o f Solid-State Circuits, vol. 52, no. 1, pp. 127 – 138, 2017. [14] Qiu J., W a ng J., Yao S ., G uo K ., L i B., Zhou E., Y u J., Tang T ., X u N., Song S., Wang Y., Yang H. : Go ing Deeper with Embedded FPGA Platform for Convolutional Neural Netw ork,” FPGA ’16, ACM, 2016 , pp. 26 – 35. [15] Zhang C. , Li P. , Sun G. , Guan Y. , Xiao B. , and Co ng J. : Optimizi ng fpga-based accelerator design for deep convolutional neural networks, ACM, 2015, pp. 1 61 – 170. [16] Cong J. and Xiao B. : Minimizing computatio n in c onvolutional neural networ ks, Lecture N otes in Compute r Science (including s ubseries Lecture Notes in Artificial Intel ligence and Le cture Notes in Bioinformatics), 2 014 , vol. 8681, pp. 281 – 290. [17] Lavin A. and Gray S.: Fast a lgorithms for convolutional n eural networ ks, I n Proceedings of the I EEE Confer ence on Comp uter Vision and Pattern Recog nition. 2016, pp. 4013 – 4021. [18] Li H. , Fan X. , Jiao L. , Cao W. , Zhou X. , and W ang L. : A high performance FPGA-based ac celer ator f or large-scale convolutional neural netwo rks, in 26th Inter national Confer ence on F ield Programmable Logic and Applications (FPL) 2 9 Aug. -2 Sept. 2016, Lausanne, Sw itzerland, pp. 1 – 9, DOI : 10.1109/FPL .2016.7577308 [19] Qiang L an, Zelong Wang, Me i Wen, Chunyuan Z hang, and Yijie Wang: High Pe rformance I mplementation of 3D Convolut ional Neural Networks on a GPU. Computational Intelligence and Neurosc ience. Hindawi, v ol . 2017, pp. 1- 8. [20] Lu L. , Liang Y. , Xiao Q.,Yan S.: Eval uating F ast Alg orithms for Convolutional Neural Networks o n FPGAs , 25th IEEE Annual International Symposium on F ield-Programmable Cu stom Computing Machines , 2017 , pp. 101- 108 , DOI : 10.110 9/FCCM.2017. 64 _____________________________________ Received: 00.00.2014 Paper reviewed Accepted: 05. 01.2015 Prof., DSc ., PhD Aleksandr CARIO W He rece ived the Candidate of Sciences (PhD) and Doctor of Sciences degree (DSc or Habilitation) in Computer Sciences from LITMO o f St. Petersburg, Russia in 1984 and 2001, respectively. In Septe m ber 1999, he joined the f aculty o f Co mputer Sciences and Information Technology at the West Po meranian University of Technology, Szczec in , Poland, where he is currently a pro fessor in the Depar tment of Co mputer Architectures and Teleinfor matics. His research interests include digita l s ignal proces sing algorithms, VLSI architectures, and data processing pa rallelizat ion. e-mail: acariow@ wi.zut.edu.pl PhD Galina CARIO WA She received the MSc de gree in Mathematics from Moldavian State University, Chişină u in 1978 and PhD degree in co mputer scie nce from Wes t Po meranian University of Technology, Szczec in, Poland in 2007. She i s currently working as an assistant professor of the Department of Computer Arc hitectures and Teleinformatics . She is also a n Assoc iate-Editor o f World Research Journal of Transactions on Algorithms. Her sc ientific interests inc lude numerica l linear algebra and digital signa l process ing algorith ms, VLSI architectures, and data processing pa rallelizat ion. e-mail: gcariowa@ wi.zut.edu.pl 0 s 1 s 2 s 3 s 0 x 1 x 3 x 4 x 0 y 1 y 8 _ inp u t DS P b lock II S tratix 1 _ output 7 _ o u tput 1 _ inpu t

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment