High-quality speech coding with SampleRNN

We provide a speech coding scheme employing a generative model based on SampleRNN that, while operating at significantly lower bitrates, matches or surpasses the perceptual quality of state-of-the-art classic wide-band codecs. Moreover, it is demonst…

Authors: Janusz Klejsa, Per Hedelin, Cong Zhou

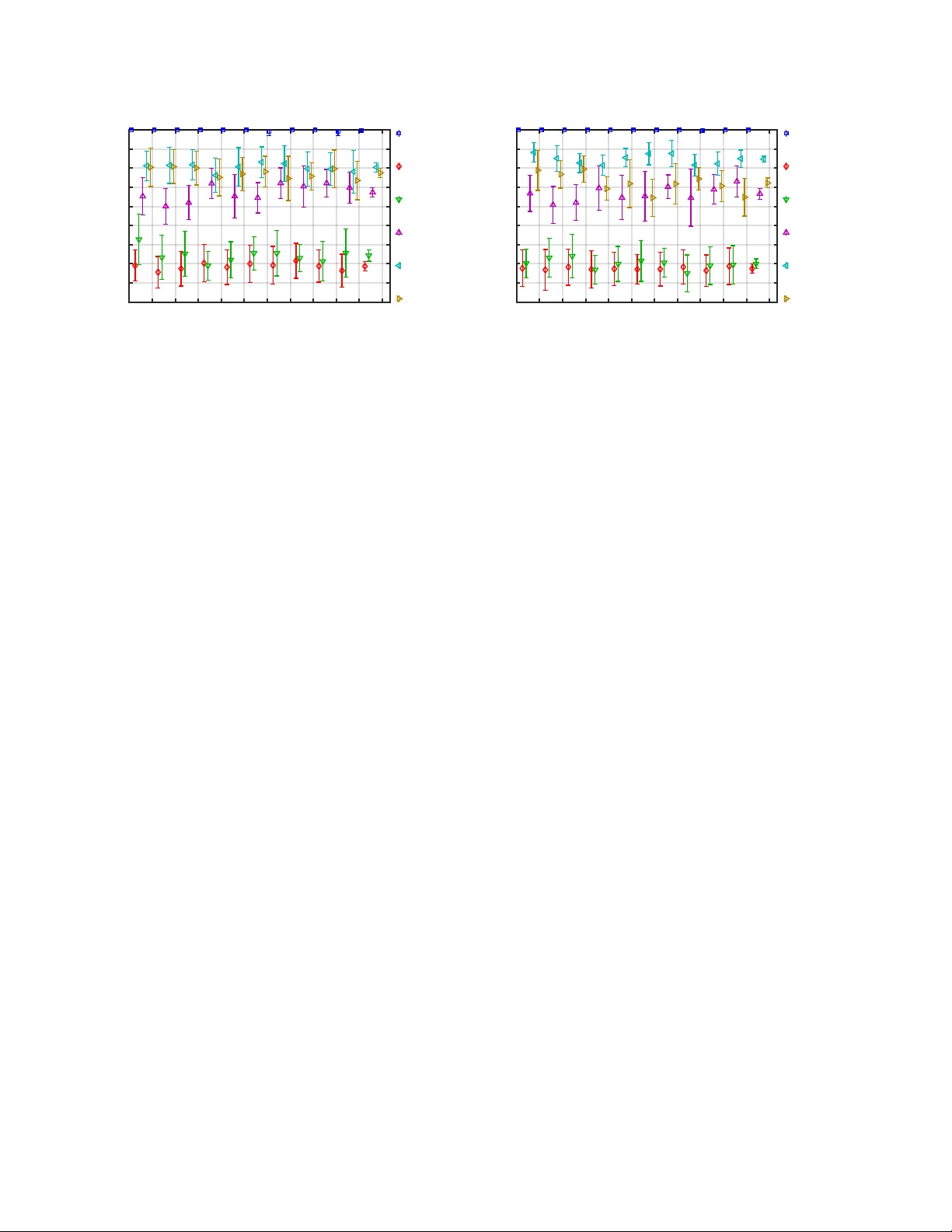

Janusz Klejsa⋆, Per Hedelin⋆, Cong Zhou†, Roy Fejgin†, Lars Villemoes⋆

⋆ Dolby Sweden AB, Stockholm, Sweden
† Dolby Laboratories, San Francisco, CA, USA

ABSTRACT

We provide a speech coding scheme employing a generative model based on SampleRNN that, while operating at significantly lower bitrates, matches or surpasses the perceptual quality of state-of-the-art classic wide-band codecs. Moreover, it is demonstrated that the proposed scheme can provide a meaningful rate-distortion trade-off without retraining. We evaluate the proposed scheme in a series of listening tests and discuss limitations of the approach.

Index Terms: speech coding, deep neural networks, vocoder, SampleRNN

1. INTRODUCTION

Generative modeling for audio based on deep neural networks, such as WaveNet [1] and SampleRNN [2], has provided significant advances in natural-sounding speech synthesis. The main application has been in the field of text-to-speech [3, 4, 5], where the models replace the vocoding component. Moreover, generative models can be conditioned by global and local latent representations [1]. In the context of voice conversion, this facilitates natural separation of the conditioning into a static speaker identifier and dynamic linguistic information [6].

In this paper we develop wide-band conditioning from the vocoder [7], with parameters quantized to 5.6, 6.4 and 8 kb/s, and use it to condition a SampleRNN model. We benchmark our resulting speech coding scheme against AMR-WB [8] at 23.05 kb/s and SILK [9] at 16 kb/s, which, at this bitrate, is a state-of-the-art classic speech codec providing good to excellent quality [10].

While it was shown in [11] that the subjective quality of AMR-WB at 23.05 kb/s can be approached at only 2.4 kb/s by using WaveNet synthesis conditioned on narrow-band vocoder parameters, the quality gap to classic speech coding schemes was not closed.
Furthermore, the reconstructed waveforms occasionally suffered from typical blind bandwidth extension artifacts (the WaveNet-based decoder used narrow-band conditioning but synthesized wide-band speech).

The purpose of this paper is two-fold. First, we demonstrate that the approach of [11] is reproducible with another generative network architecture. Second, we investigate the scalability of such a scheme in terms of rate-quality trade-off. In particular, we demonstrate that higher reconstruction quality can be achieved by allowing a higher bitrate for the conditioning. The subjective quality is evaluated with a methodology based on MUSHRA [12].

The speech coder structure considered in this paper is shown in Fig. 1 at a high level. It includes an encoder based on a vocoding structure governed by a parametric signal model that facilitates quantization constrained by bitrate. The decoder scheme uses a four-tier SampleRNN architecture. The conditioning parameters are designed so that lower quality conditioning can be embedded into higher quality conditioning, which allows for a fixed dimensionality of the conditioning irrespective of the operating bitrate. The signal sample modeling and synthesis are based on a discretized logistic mixture [13] instead of the 8-bit µ-law domain used in [1, 11].

Perhaps one of the most interesting questions related to the proposed scheme is whether its performance generalizes to unseen speech signals. While it is relatively straightforward to avoid model overfitting within the selected dataset by using a state-of-the-art training approach (e.g., [14, 15]), the issue of robustness of the coding algorithm remains unclear. In order to facilitate comparison to [11], we carried out our experiments with the same multi-speaker dataset, namely the Wall Street Journal set WSJ0 [16]. However, it comprises only American English, and seems to have been created with capture devices of limited quality.
Hence, we cross-validated the performance of the proposed approach on another publicly available dataset (clean speech from the VCTK corpus [17]). We demonstrate how the performance of the coding scheme degrades on signals from the new dataset and then improves again with retraining on an extended dataset.

This paper is structured as follows. First, the vocoder and its quantization scheme are described in Section 2 along with the embedding approach. Next, we describe our SampleRNN model and provide the details of its vocoder conditioning in Section 3. The experimental setup and the listening test results are presented in Section 4. Finally, conclusions are presented in Section 5.

2. VOCODER WITH QUANTIZED PARAMETERS

The encoder scheme is based on a wide-band version of a linear prediction coding (LPC) vocoder [7]. Signal analysis is performed on a per-frame basis, and it results in the following parameters: i) an $M$-th order LPC filter, ii) an LPC residual RMS level $s$, iii) pitch $f_0$, and iv) a $k$-band voicing vector $v$. A voicing component $v(i)$, $i = 1, \ldots, k$, gives the fraction of periodic energy within a band. All these parameters are used for conditioning of SampleRNN, as described in Section 3. We note that the signal model used by the encoder aims at describing only clean speech (without background or simultaneously active talkers).

Fig. 1. Block diagrams of the vocoder-based encoder and the SampleRNN decoder. (Encoder: samples → vocoder analysis → entropy encoding → bitstream. Decoder: bitstream → entropy decoding → SampleRNN → samples.)

Table 1. Operating points of the encoder ($k = 6$).

| $r_{\text{nominal}}$ [kb/s] | $M$ | Spectral dist. [dB] | $n_{\text{bits}}$ for $s$ | $n_{\text{bits}}$ for $v_w$ |
|---|---|---|---|---|
| 8.0 | 22 | 0.754 | 1 + 9 | 9 |
| 6.4 | 16 | 0.782 | 1 + 8 | 9 |
| 5.6 | 16 | 1.33 | 1 + 8 | 9 |

The analysis scheme operates on 10 ms frames of a signal sampled at 16 kHz. In the proposed encoder design, the order of the LPC model, $M$, depends on the operating bitrate.
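To make the framing concrete, the following Python sketch works out what 10 ms frames at 16 kHz imply for each nominal bitrate in Table 1. The function names and the `LPC_ORDER` dictionary are illustrative and do not come from the paper. The per-frame figures are averages implied by the nominal rates; since entropy coding is used, the actual bit count of an individual frame presumably varies, which is an assumption here rather than something the text states.

```python
FRAME_MS = 10            # frame length given in the text
SAMPLE_RATE_HZ = 16_000  # sampling rate given in the text

# LPC order M per nominal bitrate in bit/s, copied from Table 1.
LPC_ORDER = {8000: 22, 6400: 16, 5600: 16}


def samples_per_frame(sample_rate_hz=SAMPLE_RATE_HZ, frame_ms=FRAME_MS):
    """Number of waveform samples covered by one analysis frame."""
    return sample_rate_hz * frame_ms // 1000


def frames_per_second(frame_ms=FRAME_MS):
    """Number of parameter frames transmitted per second."""
    return 1000 // frame_ms


def nominal_bits_per_frame(rate_bps, frame_ms=FRAME_MS):
    """Average bit budget per frame implied by a nominal bitrate in bit/s."""
    return rate_bps * frame_ms // 1000


if __name__ == "__main__":
    print("samples per frame:", samples_per_frame())
    for rate_bps, order in LPC_ORDER.items():
        print(rate_bps / 1000, "kb/s, M =", order,
              "->", nominal_bits_per_frame(rate_bps), "bits/frame on average")
```

Each frame of conditioning parameters therefore has to steer the synthesis of 160 output samples.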
Standard combinations of source coding techniques are utilized to achieve encoding efficiency with appropriate perceptual consideration, including vector quantization (VQ), predictive coding and entropy coding [18]. In this paper, for all experiments, we define the operating points of the encoder as in Table 1. We used standard tuning practices. For example, the spectral distortion for the reconstructed LPC coefficients is kept close to 1 dB [19].

The LPC model is coded in the line spectral pairs (LSP) domain utilizing prediction and entropy coding. For each LPC order, $M$, a Gaussian mixture model (GMM) was trained on the WSJ0 train set, providing probabilities for the quantization cells. Each GMM component has a Z-lattice according to the principle of union of Z-lattices [20, 21]. The final choice of quantization cell is made according to a rate-distortion weighted criterion.

The residual level $s$ is quantized in the dB domain using a hybrid approach similar to that in [22]. Small inter-frame level variations are detected, signalled by one bit, and coded by a predictive scheme using fine uniform quantization. In other cases the coding is memoryless with a larger, yet uniform, step-size covering a wide range of levels.

Similar to level, pitch is quantized using a hybrid approach of predictive and memoryless coding. Uniform quantization is employed but executed in a warped pitch domain. Pitch is warped by

$$f_w = \frac{c\, f_0}{c + f_0},$$

where $c = 500$ Hz, and $f_w$ is quantized and coded using 10 bit/frame.

Voicing is coded by memoryless VQ in a warped domain. Each voicing component is warped by

$$v_w(i) = \log\left(\frac{1 - v(i)}{1 + v(i)}\right).$$

A 9 bit VQ was trained in the warped domain on the WSJ0 train set.

A feature vector $h_f$ for conditioning SampleRNN is constructed as follows. The quantized LPC coefficients are converted to reflection coefficients. The vector of reflection coefficients is concatenated with the other quantized parameters, i.e. $f_0$, $s$, and $v$. In the remainder of the paper we use either of two constructions of the conditioning vector. The first construction is the straightforward concatenation described above. For example, for $M = 16$, the total dimension of the vector $h_f$ is 24 (16 reflection coefficients, $f_0$, $s$, and the $k = 6$ voicing components); for $M = 22$ it is 30. The second construction is an embedding of lower-rate conditioning into a higher-rate format. For example, for $M = 16$, a 22-dimensional vector of reflection coefficients is constructed by padding the 16 coefficients with 6 zeros. The remaining parameters are replaced with their coarsely quantized (low bitrate) versions, which is possible since their locations within $h_f$ are now fixed.

3. CONDITIONAL SAMPLERNN

SampleRNN is a deep neural generative model proposed in [2] for generating raw audio signals. It consists of a series of multi-rate recurrent layers, which are capable of modeling the dynamics of a sequence at different time scales. SampleRNN models the proba-

[Figure: four-tier conditional SampleRNN. Tiers 2, 3 and 4 each comprise a GRU, learned upsampling and 1 × 1 convolutions; Tier 1 comprises a 1 × 1 convolution and an MLP. Input: $h_f$; output: p(x_i | x…]
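Looking back at Section 2, the parameter warps and the two constructions of $h_f$ can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation. The function names, the order of the entries within $h_f$, the use of the natural logarithm, and the inverse pitch warp (derived algebraically from the forward formula) are all assumptions. Quantization itself is omitted, and the paper does not say how a fully voiced band, $v(i) = 1$, is handled, so the sketch leaves that case undefined.

```python
import math

C_HZ = 500.0  # warping constant c given in the text


def warp_pitch(f0_hz, c=C_HZ):
    """Pitch warp f_w = c * f0 / (c + f0)."""
    return c * f0_hz / (c + f0_hz)


def unwarp_pitch(fw, c=C_HZ):
    """Algebraic inverse of warp_pitch; requires fw < c."""
    return c * fw / (c - fw)


def warp_voicing(v):
    """Voicing warp log((1 - v) / (1 + v)); requires 0 <= v < 1 in this sketch."""
    return math.log((1.0 - v) / (1.0 + v))


def build_conditioning(reflection, f0, s, v, embed_order=None):
    """Concatenate reflection coefficients with f0, s and the voicing vector.

    With embed_order set (e.g. 22), the reflection coefficients are padded
    with zeros up to that order, so that lower-rate conditioning occupies
    the same layout as higher-rate conditioning.
    The entry order [reflection..., f0, s, v...] is an assumption.
    """
    coeffs = list(reflection)
    if embed_order is not None:
        if len(coeffs) > embed_order:
            raise ValueError("more coefficients than the embedding order")
        coeffs += [0.0] * (embed_order - len(coeffs))
    return coeffs + [f0, s] + list(v)


if __name__ == "__main__":
    voicing = [0.5] * 6  # k = 6 bands, placeholder values
    plain = build_conditioning([0.1] * 16, 120.0, -30.0, voicing)
    embedded = build_conditioning([0.1] * 16, 120.0, -30.0, voicing,
                                  embed_order=22)
    print(len(plain), len(embedded))
```

With $k = 6$, the plain construction for $M = 16$ has 24 entries, while the embedded construction has 30, the same as the native $M = 22$ format.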
