Speaker Selective Beamformer with Keyword Mask Estimation


Authors: Yusuke Kida, Dung Tran, Motoi Omachi, Toru Taniguchi, Yuya Fujita

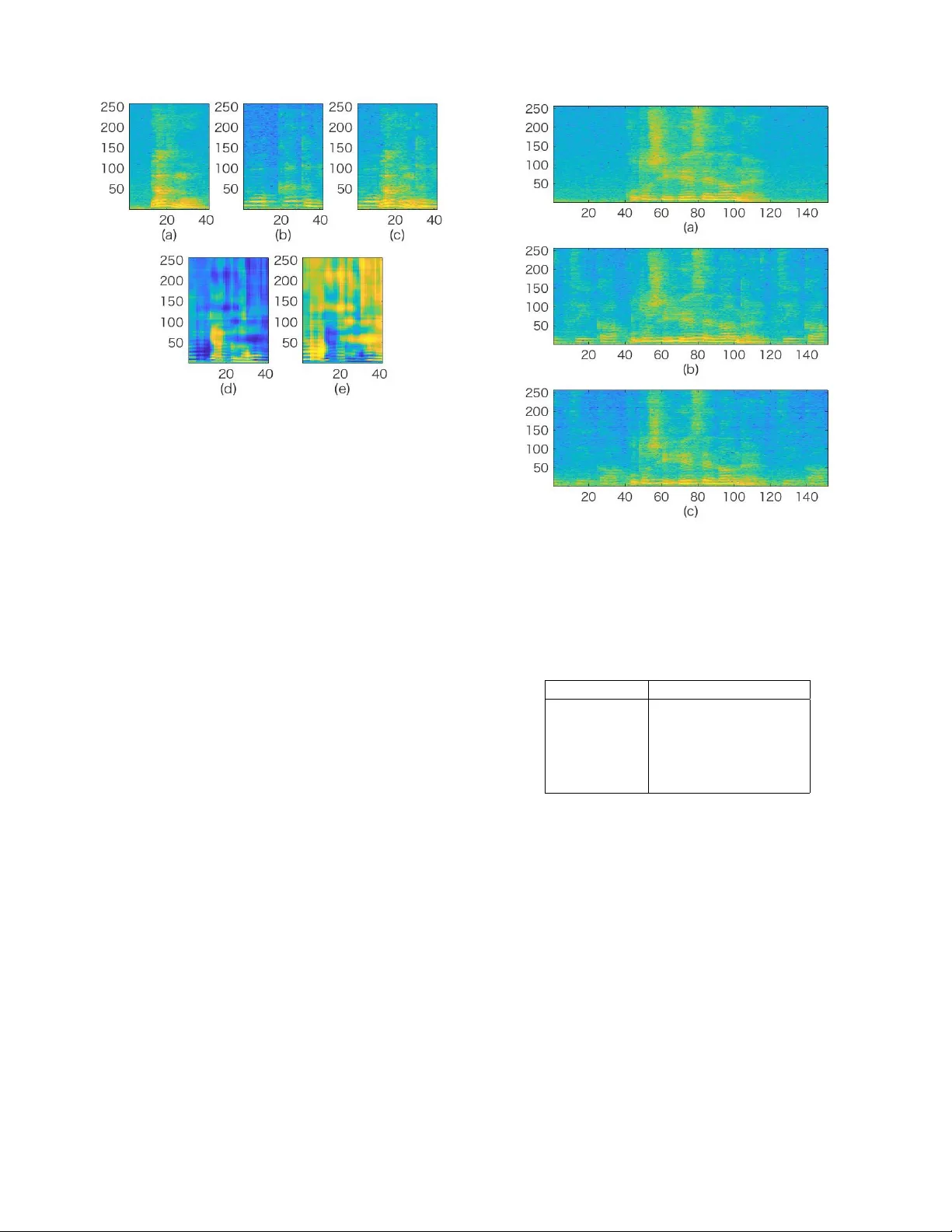

Yusuke Kida, Dung Tran, Motoi Omachi, Toru Taniguchi, Yuya Fujita
Yahoo Japan Corporation

ABSTRACT

This paper addresses the problem of automatic speech recognition (ASR) of a target speaker in background speech. The novelty of our approach is that we focus on a wakeup keyword, which is usually used for activating ASR systems like smart speakers. The proposed method first uses a DNN-based mask estimator to separate the mixture signal into the keyword signal uttered by the target speaker and the remaining background speech. The separated signals are then used to calculate a beamforming filter that enhances the subsequent utterances from the target speaker. Experimental evaluations show that the trained DNN-based mask can selectively separate the keyword and background speech from the mixture signal. The effectiveness of the proposed method is also verified with Japanese ASR experiments, and we confirm that the character error rates are significantly improved by the proposed method for both simulated and real recorded test sets.

Index Terms: Wakeup keyword, speech enhancement, robust automatic speech recognition, background speech

1. INTRODUCTION

Robustness against background speech is one of the key factors for automatic speech recognition (ASR). Even if multiple talkers surround us and speak simultaneously, we can focus on a specific target speaker. This is called the cocktail-party effect [1], and it is realized by our auditory system. Current ASR systems, on the other hand, cannot handle such a situation, so background speech usually causes serious performance degradation in ASR. One practical example of this scenario is a smart speaker located in a living room, where background speech from surrounding people, television, and radio is expected to overlap the target speech.
A simple way to make ASR robust against background speech is to introduce blind speech separation before recognition. Blind speech separation has been studied for decades, and it can be divided into single-channel and multi-channel approaches. The single-channel approach is based on the spectral characteristics of each source signal; non-negative matrix factorization (NMF) [2] and time-frequency masking [3] are well-known methods. In addition, recent deep learning-based techniques such as permutation invariant training (PIT) [4] and deep clustering [5] have attracted a great deal of attention. The multi-channel approach is based on spatial information. Independent component analysis (ICA) [6][7] and full-rank spatial covariance model-based methods [8][9] are typical solutions, and deep learning-based techniques have also been studied recently [10].

The mixture of a target speech signal and background speech can be separated into individual source signals by the above blind speech separation algorithms. However, these algorithms cannot identify which output signal corresponds to the target speech to be recognized. This is regarded as a form of the permutation problem [11], and some work has tried to solve it by imposing constraints on speaker gender [12] or signal intensity [13]. However, such constraints are not necessarily satisfied. Žmolíková et al. proposed a method named SpeakerBeam, which extracts target speech directly without any constraints on signal characteristics [14][11]. Their method instead assumes that the target speaker is known in advance, and it requires a pre-recorded clean utterance from the target speaker. In this work, we propose an alternative approach to realize robust ASR of a target speaker in background speech while avoiding the permutation problem.

© 2018 IEEE
The novelty of our method is that we focus on a wakeup keyword such as “okay Google” or “alexa”, which is used for activating ASR systems such as smart speakers. These systems usually assume that the target speaker says a specific keyword followed by a command to be recognized. It is therefore natural to expect that the keyword utterance provides important cues about the target speaker, which are beneficial for recognizing the subsequent command utterance. Motivated by this, the proposed method uses the keyword utterance to estimate spatial information about the target speaker. From the same point of view, King et al. proposed using the keyword to calculate the mean value for the feature normalization used in acoustic modeling [15]. Our proposed method first separates the mixture signal into the keyword and the remaining background speech using a specially designed DNN-based mask estimator. The separated signals are then used to calculate a beamforming filter that enhances the subsequent utterances from the target speaker. An advantage of the proposed method over SpeakerBeam is that it can enhance the utterances of any speaker who says the keyword, and it does not require a pre-recording procedure.

Signal-level and ASR evaluations are performed to verify the effectiveness of our proposed method. We use two Japanese test sets for the evaluation. The first test set is composed of simulated two-speaker mixtures, and the second consists of realistic utterances recorded with television sound in the background.

The rest of the paper is structured as follows. Sections 2 and 3 describe the details of our proposed method. The training and test sets are described in Section 4. The experimental evaluations and results are presented in Sections 5 and 6. Section 7 presents our conclusions and future work.

2. DNN-BASED KEYWORD MASK ESTIMATION

2.1. Network configuration

Our DNN structure is shown in Fig. 1. Given a mixture of a keyword with background speech, this DNN outputs two kinds of mask. The first is a keyword mask, which works as an extractor of the keyword. The other is a non-keyword mask, which works as a remover of the keyword and accordingly extracts the remaining background speech. The signal of interest for extraction or removal is always the specific keyword. This DNN can therefore be trained efficiently, because the acoustic variation to be considered is far smaller than in standard speech separation problems.

The mixed signal is first converted to feature vectors. In this work, magnitude spectra are used as the features. The conditions for speech analysis are shown in Table 1. A subsequent context-splicing block extends the 256-dimensional magnitude spectra with the 20 neighboring context frames (10 frames to the left and 10 to the right), resulting in 21 × 256 = 5,376 dimensions. Mean and variance normalization is then performed using global statistics calculated from the entire training data. Finally, the normalized feature vectors are fed into the DNN.

Our DNN has 3 fully connected (FC) hidden layers. Each hidden layer has 1,024 nodes, and the output layer has 256 nodes for each of the two masks. The activation function in the hidden layers is the rectified linear unit (ReLU), while the sigmoid function is used in the output layer to limit the DNN output to the range between 0 and 1.

2.2. Parameter optimization

The network parameters are trained to minimize the error between the two output masks and a given reference. In this work, we use an ideal binary mask (IBM) as the reference, as in [16], and the cross-entropy function is adopted as the error criterion. The mini-batch size for the stochastic gradient descent (SGD) algorithm is set to 128.
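The front end of Section 2.1 and the training objective of Section 2.2 can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: framing uses a 512-sample Hann window with a 256-sample shift (32 ms and 16 ms at 16 kHz, per Table 1), while keeping the first 256 of the 257 `rfft` bins, edge-padding at utterance boundaries, the random weight initialization, the dominance rule used to build the IBM, and all function names are our assumptions. SGD updates and dropout are omitted; only the forward pass and the IBM/cross-entropy objective are shown.

```python
import numpy as np

FS = 16000
FRAME_LEN = 512      # 32 ms at 16 kHz (Table 1)
FRAME_SHIFT = 256    # 16 ms at 16 kHz (Table 1)
N_BINS = 256         # assumption: keep the first 256 of the 257 rfft bins
CONTEXT = 10         # 10 frames to the left + 10 frames to the right


def magnitude_spectra(x):
    """Hann-windowed STFT magnitudes, shape (frames, N_BINS)."""
    window = np.hanning(FRAME_LEN)
    n_frames = 1 + (len(x) - FRAME_LEN) // FRAME_SHIFT
    frames = np.stack([x[t * FRAME_SHIFT: t * FRAME_SHIFT + FRAME_LEN] * window
                       for t in range(n_frames)])
    return np.abs(np.fft.rfft(frames, axis=1))[:, :N_BINS]


def splice(feats, context=CONTEXT):
    """Stack each frame with its +/- context neighbours: (T, D) -> (T, (2*context+1)*D)."""
    T = feats.shape[0]
    padded = np.pad(feats, ((context, context), (0, 0)), mode="edge")  # assumption: edge padding
    return np.concatenate([padded[i:i + T] for i in range(2 * context + 1)], axis=1)


def normalize(feats, mean, std):
    """Global mean/variance normalization with training-set statistics."""
    return (feats - mean) / std


def init_params(rng, in_dim=(2 * CONTEXT + 1) * N_BINS, hidden=1024, n_hidden=3):
    """Random weights for 3 hidden layers and one output layer (256 units per mask)."""
    dims = [in_dim] + [hidden] * n_hidden
    layers = [(rng.standard_normal((a, b)) * np.sqrt(2.0 / a), np.zeros(b))
              for a, b in zip(dims[:-1], dims[1:])]
    out = (rng.standard_normal((hidden, 2 * N_BINS)) * np.sqrt(1.0 / hidden),
           np.zeros(2 * N_BINS))
    return layers, out


def forward(params, x):
    """ReLU hidden layers, sigmoid output split into keyword / non-keyword masks."""
    layers, (W_out, b_out) = params
    h = x
    for W, b in layers:
        h = np.maximum(h @ W + b, 0.0)
    masks = 1.0 / (1.0 + np.exp(-(h @ W_out + b_out)))
    return masks[:, :N_BINS], masks[:, N_BINS:]


def ideal_binary_masks(keyword_mag, background_mag):
    """IBM references (assumed rule): 1 where the keyword dominates, and its complement."""
    ibm_k = (keyword_mag > background_mag).astype(float)
    return ibm_k, 1.0 - ibm_k


def cross_entropy(pred, ref, eps=1e-7):
    """Mean binary cross-entropy between predicted masks and IBM references."""
    pred = np.clip(pred, eps, 1.0 - eps)
    return -np.mean(ref * np.log(pred) + (1.0 - ref) * np.log(1.0 - pred))
```

The loss would be the sum of `cross_entropy` over the two heads; using a single 512-unit output layer split into halves is one way (assumed here) to realize "256 nodes for each of the two masks".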
Dropout is also used, with a dropout rate of 0.2 for the input layer and 0.5 for each hidden layer. The learning rate is set to 0.01, and the number of training epochs is 50.

Fig. 1. Our DNN structure for keyword mask estimation. The DNN outputs two types of mask, corresponding to the keyword and the remaining non-keyword component. Colored blocks include trainable neural network parameters.

Table 1. Conditions for speech analysis.

| Parameter | Value |
|---|---|
| Sampling frequency | 16 kHz |
| Window type | Hanning |
| Frame length | 32 ms |
| Frame shift | 16 ms |

3. PROPOSED SYSTEM

3.1. Overview

A schematic diagram of the proposed system is presented in Fig. 2. The proposed system is activated when a keyword detection method, which is external to the system, reports the presence of a keyword utterance. Given a detected keyword and its estimated time region, the observed signal within the keyword region is fed into the trained DNN shown in Fig. 1. The keyword and the remaining background speech are then separated by applying the obtained keyword mask and non-keyword mask, respectively, to the original mixture signal. This process is repeated for each microphone channel, and the separated multi-channel signals are then used to calculate a beamforming filter. The well-known minimum variance distortionless response (MVDR) beamformer is employed in this work. The beamformer is then applied to the subsequent signal to enhance the target speaker's utterances while reducing background speech. Note that the beamforming filter is calculated once after the keyword and is not updated during the subsequent command. Finally, the enhanced target speaker utterance is input to the ASR system.

3.2. MVDR filter estimation

MVDR filter estimation can be explained as follows.
Given a background (non-keyword) speech covariance matrix $R_{nn}$ and a steering vector $v$ of the keyword speech, the MVDR filter $\gamma$ is calculated as

$$\gamma = [\gamma(1), \gamma(2), \cdots, \gamma(c)]^{\mathsf{T}} = \frac{R_{nn}^{-1} v}{v^{\mathsf{H}} R_{nn}^{-1} v}, \tag{1}$$

where $c$ denotes the number of microphones, and $(\cdot)^{\mathsf{T}}$ and $(\cdot)^{\mathsf{H}}$ denote the transpose and conjugate transpose of a matrix, respectively. The frequency index is omitted from the above equation and the following discussion where it is not needed.

In this work, $R_{nn}$ is estimated using the observed multi-channel magnitude spectra $Y_\tau = [y_\tau(1), y_\tau(2), \cdots, y_\tau(c)]^{\mathsf{T}}$ and the non-keyword mask $\bar{m}^{(n)}_\tau$, with $\tau$ denoting the time frame index. $\bar{m}^{(n)}_\tau$ is defined as the median of $M^{(n)}_\tau = \{m^{(n)}_\tau(1), m^{(n)}_\tau(2), \cdots, m^{(n)}_\tau(c)\}$, where $m^{(n)}_\tau(\cdot)$ denotes the estimated non-keyword mask of each channel. Based on these, $R_{nn}$ is calculated as in [10]:

$$R_{nn} = \sum_{\tau \in \mathcal{T}} \bar{m}^{(n)}_\tau Y_\tau \left(\bar{m}^{(n)}_\tau Y_\tau\right)^{\mathsf{H}}, \tag{2}$$

where $\mathcal{T}$ is the set of time frame indices within the keyword region.

The steering vector $v = [v(1), v(2), \cdots, v(c)]^{\mathsf{T}}$ can be estimated from a covariance matrix $R_{kk}$, which is calculated in the same way as $R_{nn}$ but with the keyword mask $\bar{m}^{(k)}_\tau$:

$$R_{kk} = \sum_{\tau \in \mathcal{T}} \bar{m}^{(k)}_\tau Y_\tau \left(\bar{m}^{(k)}_\tau Y_\tau\right)^{\mathsf{H}}. \tag{3}$$

An eigenvalue decomposition of $R_{kk}$ is calculated, and $v$ is estimated as the eigenvector with the maximum eigenvalue. The estimated beamforming filter $\gamma$ is then applied to the subsequent mixture signal as $x_\tau = \gamma^{\mathsf{H}} Y_\tau$, which yields the enhanced target signal $x_\tau$.

4. DATA

4.1. Training data for keyword mask estimator

In this work, we used our in-house Japanese data for the training and test sets. Keyword and background speech were recorded independently in the same quiet room using a 4-channel microphone array. For the training data, each recorded 4-channel signal was treated as four single-channel signals.
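Returning briefly to Section 3.2, the following NumPy sketch strings together the channel-median masks, the masked covariances of Eqs. (2) and (3), the principal-eigenvector steering vector, the MVDR filter of Eq. (1), and its application $x_\tau = \gamma^{\mathsf{H}} Y_\tau$, all per frequency bin. It is an illustration, not the authors' code. It assumes complex-valued STFT coefficients for $Y_\tau$ (the conjugate transposes in Eqs. (1) to (3) need phase) and arrays laid out as (channels, frames, bins); the small diagonal loading `eps` and every function name are our additions.

```python
import numpy as np


def median_mask(channel_masks):
    """Median over the c per-channel masks: (C, T, F) -> (T, F)."""
    return np.median(channel_masks, axis=0)


def masked_covariance(Y, mask):
    """Eqs. (2)/(3): R_f = sum_tau (m Y_tau)(m Y_tau)^H for every bin f.
    Y: (C, T, F) complex STFT, mask: (T, F) -> (F, C, C)."""
    Ym = Y * mask[None, :, :]
    return np.einsum("ctf,dtf->fcd", Ym, Ym.conj())


def steering_vector(R_kk):
    """Principal eigenvector of each R_kk[f]; eigh returns eigenvalues in ascending order."""
    _, vecs = np.linalg.eigh(R_kk)
    return vecs[:, :, -1]                        # (F, C)


def mvdr_filter(R_nn, v, eps=1e-6):
    """Eq. (1): gamma = R_nn^{-1} v / (v^H R_nn^{-1} v), per bin -> (F, C)."""
    C = R_nn.shape[-1]
    R = R_nn + eps * np.eye(C)                   # assumption: diagonal loading for stability
    num = np.linalg.solve(R, v[:, :, None])[:, :, 0]
    den = np.einsum("fc,fc->f", v.conj(), num)
    return num / den[:, None]


def apply_filter(gamma, Y):
    """x_tau = gamma^H Y_tau for every frame and bin -> (T, F)."""
    return np.einsum("fc,ctf->tf", gamma.conj(), Y)


def enhance_command(Y_keyword, keyword_masks, nonkeyword_masks, Y_command):
    """Estimate gamma on the keyword region only, then keep it fixed for the command."""
    R_kk = masked_covariance(Y_keyword, median_mask(keyword_masks))
    R_nn = masked_covariance(Y_keyword, median_mask(nonkeyword_masks))
    gamma = mvdr_filter(R_nn, steering_vector(R_kk))
    return apply_filter(gamma, Y_command)
```

`enhance_command` mirrors the overview in Section 3.1: the filter is computed once from the keyword frames and then held fixed while the command frames are filtered. By construction $\gamma^{\mathsf{H}} v = 1$ in every bin, which is the distortionless constraint.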
Speakers were located at several points in the room, at distances of 1 to 3 meters from the microphone array.

Fig. 2. A schematic diagram of the proposed system. Note that the MVDR filter is estimated only during the short keyword region and is then fixed.

Fig. 3. Recording setting for the real-set. One speaker was located at A or B and spoke toward the microphone array.

In total, 1,660 keyword utterances were recorded from 35 speakers, and 1,400 background speech utterances from 25 speakers. Various pairings of keyword and background speech yielded a total of 116,200 mixed utterances, which were used as the training set of our DNN-based mask estimator. Note that the keyword and background speech in each mixture were selected from different speakers, whose genders may be the same. The average signal-to-noise ratio (SNR) of the mixtures was 3.2 dB, with a standard deviation of 3.4 dB. The keyword used in this work was a single Japanese word composed of 3 syllables, with an average duration of 0.7 seconds.

4.2. Evaluation data

Two test sets were used to verify the effectiveness of the proposed method. They were recorded in the same room as the training data, with the same microphone array. The first set was recorded in the same manner as the training set but with different speakers. In this test set, the target speaker spoke a keyword followed by a command, and the command utterances were designed assuming a personal assistant system. 120 target utterances from 4 speakers and 120 interfering utterances from another 4 speakers were randomly mixed, and 10 different combination patterns resulted in a test set of 1,200 utterances. The two speakers were located at different angles from the microphone array. We call this test set the simu-set.

Another test set was also recorded in the same room, but in a more realistic situation. The recording setting is presented in Fig. 3.
In this test set, a single target speaker spoke with television sound in the background. The distance from the microphone array to the television was 1.2 meters, and the distance to the target speaker was also 1.2 meters. Recordings were made while changing the speaker location (A and B in the figure) and the television volume. The test set contains 4,396 utterances from 67 speakers. We call this test set the real-set.

5. SIGNAL-LEVEL EVALUATION

5.1. Evaluation metrics

First, a signal-level evaluation was performed to verify that the keyword mask extracts only the keyword signal from the mixture, while the non-keyword mask extracts the remaining signal. The signal-to-distortion ratio improvement (SDRi) [17] was used as the evaluation measure. The SDRi reflects both how much the undesired signal is reduced and how well the desired signal is extracted; a positive value indicates that the estimated mask works properly. Given the magnitude spectra $X_{\tau,f}$ of the desired signal and $N_{\tau,f}$ of the undesired signal, and the mask $m_{\tau,f}$, the SDRi is calculated as

$$\mathrm{SDRi} = \frac{1}{F} \sum_{f \in \mathcal{F}} 10 \log_{10} \frac{\sum_{\tau \in \mathcal{T}} m_{\tau,f} X_{\tau,f} X^{*}_{\tau,f}}{\sum_{\tau \in \mathcal{T}} m_{\tau,f} N_{\tau,f} N^{*}_{\tau,f}} - \xi, \tag{4}$$

where $f$, $F$, and $\mathcal{F}$ denote the frequency bin index, the total number of frequency bins, and the set of all frequency indices, respectively. $\xi$ is the SDR before masking, defined as

$$\xi = \frac{1}{F} \sum_{f \in \mathcal{F}} 10 \log_{10} \frac{\sum_{\tau \in \mathcal{T}} X_{\tau,f} X^{*}_{\tau,f}}{\sum_{\tau \in \mathcal{T}} N_{\tau,f} N^{*}_{\tau,f}}. \tag{5}$$

5.2. Results

The simu-set was used for this evaluation. Here, the evaluation was performed using manually annotated keyword regions, so that the accuracy of keyword detection is separated from that of the proposed method. We compared the following four kinds of mask: the estimated keyword mask $m^{(k)}$, the estimated non-keyword mask $m^{(n)}$, the IBM corresponding to the keyword $\mathrm{IBM}^{(k)}$, and the IBM corresponding to the non-keyword component $\mathrm{IBM}^{(n)}$.
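Eqs. (4) and (5) translate into a few lines of NumPy. The sketch below is illustrative and the function name is ours; it follows Eq. (4) as written, with the mask weighting the per-bin power once, and it assumes every bin has nonzero energy so the logarithms are finite. When the non-keyword mask is evaluated, the keyword signal takes the role of `N` and the background that of `X`.

```python
import numpy as np


def sdri(X, N, mask):
    """SDR improvement of Eq. (4), in dB.
    X, N: (T, F) magnitude spectra of the desired / undesired signal over the
    evaluated frames; mask: (T, F) mask values for the same bins."""
    px, pn = np.abs(X) ** 2, np.abs(N) ** 2                     # X X*, N N*
    after = 10 * np.log10(np.sum(mask * px, axis=0) / np.sum(mask * pn, axis=0))
    xi = np.mean(10 * np.log10(np.sum(px, axis=0) / np.sum(pn, axis=0)))  # Eq. (5)
    return np.mean(after) - xi
```

A uniform mask leaves every per-bin ratio unchanged and therefore gives 0 dB, so a positive SDRi means the mask favors the desired signal.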
Note that the keyword signal was regarded as $X_{\tau,f}$ when evaluating the keyword mask, but as $N_{\tau,f}$ when evaluating the non-keyword mask. Table 2 shows the average and standard deviation of the SDRi calculated over all utterances of the simu-set. The table shows that the trained DNN masks worked as intended, since the SDRi values of both estimated masks were positive.

Table 2. Comparison of SDRi between the estimated DNN-based masks and the IBM. Values are given as average (µ) ± standard deviation (σ) in decibels (dB).

| | $m^{(k)}$ | $\mathrm{IBM}^{(k)}$ | $m^{(n)}$ | $\mathrm{IBM}^{(n)}$ |
|---|---|---|---|---|
| SDRi (dB) | 4.9 ± 2.6 | 11.1 ± 3.4 | 3.1 ± 1.6 | 10.8 ± 4.0 |

5.3. Example of the processing result

An example of the DNN-based mask estimation is presented in Fig. 4, which shows the spectrograms of the original keyword speech, the background speech, and their mixture, together with the estimated keyword mask and non-keyword mask. The figure shows that the two estimated masks selectively extracted the keyword and the background speech, respectively, indicating that the trained DNN was able to model the desired behavior. However, Fig. 4 (d) also shows that the high-frequency part of the keyword signal was erroneously missing from the keyword mask (around frame 20). We speculate that this is the reason for the SDRi gap between $m^{(k)}$ and $\mathrm{IBM}^{(k)}$ seen in Table 2.

Furthermore, the beamforming result obtained with the estimated keyword mask and non-keyword mask shown in Fig. 4 (d) and (e) is also presented. Fig. 5 (a) and (b) show the spectrograms of a command utterance from the target speaker and of its mixture with background speech; these signals follow those shown in Fig. 4 (a) and (c). Each spectrogram shows one of the four recorded channels. The beamforming result is shown in Fig. 5 (c). As the figure shows, background speech was significantly reduced by the beamforming.
Our proposed method therefore works well for this example.

6. ASR EVALUATION

6.1. ASR system

Next, the effectiveness of the beamforming using the trained DNN-based masks was verified with ASR experiments. Our ASR system used a DNN-HMM (deep neural network–hidden Markov model) acoustic model. The DNN had 5 fully connected hidden layers with 1,024 nodes each, and its parameters were trained with the cross-entropy criterion. The acoustic model was trained on 1,800 hours of speech data collected through our voice services, including search, dialogue, and car navigation. The training utterances were split into three subsets: 20% were used directly, 40% were mixed with various kinds of daily-life noise, and reverberation filters generated by a room simulator were applied to the remaining 40%.

Fig. 4. An example of the DNN-based mask estimation result. (a) keyword speech, (b) background speech, (c) mixed speech, (d) estimated keyword mask, (e) estimated non-keyword mask.

The language model was a trigram model trained on text queries to the Yahoo! JAPAN search engine and transcriptions of mobile voice search queries. The vocabulary size was about 1.6 million words. Our decoder was an internally developed single-pass WFST decoder [18]. Before recognition, DNN-based voice activity detection (VAD) similar to [19] was performed to minimize insertion errors.

6.2. Results for simu-set

We first present the results for the simu-set. We compared the proposed method with several reference conditions, and Table 3 summarizes the results. The table shows the character error rates (CER) and the relative error reduction rates (RERR) with respect to the result obtained when the mixed signal is input directly to the ASR system, denoted ‘Mixed’. ‘Clean’ denotes the result for the target signal before mixing, and ‘Proposed’ denotes the proposed method.
‘Oracle (IBM)’ denotes an oracle experiment that runs the proposed method with IBMs calculated over the keyword region instead of the estimated masks; its result can therefore be regarded as an upper limit for the proposed method. In addition, ‘BeamformIt’ shows the result of BeamformIt, a well-known beamforming method [20].

We start our discussion by comparing ‘Clean’ and ‘Mixed’. The table shows that the error rate increased drastically when background speech was mixed in. ‘BeamformIt’ improved the error rate over ‘Mixed’, but the improvement was not significant. This was expected, because BeamformIt simply estimates the beamforming filter from the observed signal, which makes it difficult to selectively extract target speech from the mixture. In contrast, ‘Proposed’ improved the CER significantly, even though the beamforming filter was estimated only during the short keyword utterance. This result confirms the effectiveness of the proposed method for ASR under background speech. However, the CER of ‘Oracle (IBM)’ was lower than that of ‘Proposed’, and the gap was not trivial, which means that there is still room to improve our mask estimator.

Fig. 5. An example of the proposed method. (a) command signal from a target speaker, (b) mixed speech, (c) mixed speech processed by the proposed method.

Table 3. ASR results for the simu-set. The proposed method shows a significant CER improvement.

| System | CER (%) | RERR (%) |
|---|---|---|
| Mixed | 30.0 | - |
| BeamformIt | 28.6 | 5.3 |
| Proposed | 22.0 | 26.7 |
| Oracle (IBM) | 18.6 | 38.0 |
| Clean | 10.1 | 66.3 |

6.3. Results for real-set

Table 4 shows the results for the real-set. As described in Section 4.2, the evaluation was performed under four acoustic conditions, combining two speaker locations with two television volume levels (medium and large).
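The RERR values in Tables 3 and 4 are relative CER reductions with respect to ‘Mixed’, i.e. $\mathrm{RERR} = 100\,(\mathrm{CER}_{\text{Mixed}} - \mathrm{CER})/\mathrm{CER}_{\text{Mixed}}$; for example, speaker position A at medium volume gives 100 × (34.3 − 26.2)/34.3 ≈ 23.6%. The short Python check below (the function and table names are ours) recomputes the parenthesized real-set entries of Table 4 from its CER values.

```python
def rerr(cer_mixed, cer_system):
    """Relative error reduction (%) with respect to the 'Mixed' baseline."""
    return 100.0 * (cer_mixed - cer_system) / cer_mixed


# (speaker position, TV volume): (Mixed, BeamformIt, Proposed) CERs from Table 4
TABLE4 = {
    ("A", "Medium"): (34.3, 30.8, 26.2),
    ("A", "Large"): (48.9, 48.2, 37.9),
    ("B", "Medium"): (26.7, 29.4, 24.1),
    ("B", "Large"): (38.1, 43.4, 32.5),
}

for (pos, vol), (mixed, bfit, prop) in TABLE4.items():
    print(pos, vol, round(rerr(mixed, bfit), 1), round(rerr(mixed, prop), 1))
```

A negative RERR, as for BeamformIt at position B, means the system performed worse than feeding the mixture directly to the recognizer.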
Note that IBM results are not presented in Table 4, because the IBM cannot be calculated from real recorded data.

Table 4. ASR results for the real-set. Figures are CER (%), and those in parentheses are RERR (%) with respect to ‘Mixed’. Unlike BeamformIt, the proposed method shows an improvement in every condition.

| Speaker position | TV volume | Mixed | BeamformIt | Proposed |
|---|---|---|---|---|
| A | Medium | 34.3 | 30.8 (10.2) | 26.2 (23.6) |
| A | Large | 48.9 | 48.2 (1.4) | 37.9 (22.5) |
| B | Medium | 26.7 | 29.4 (-10.1) | 24.1 (9.7) |
| B | Large | 38.1 | 43.4 (-13.9) | 32.5 (14.7) |

The table shows that our proposed method significantly decreased the CER in all acoustic conditions. Thus, the effectiveness of the proposed method was also confirmed in a realistic situation.

7. CONCLUSION

This paper described a solution for robust ASR under background speech. The novelty of our approach is that it uses the wakeup keyword utterance to estimate the spatial characteristics of the target speaker. The proposed method first separates the mixture signal into the keyword and the remaining background speech using a DNN-based mask estimator, and then uses the separated signals to calculate a beamforming filter that enhances the subsequent utterances from the target speaker. The signal-level evaluation showed that our DNN-based mask estimator could selectively separate these signals, and the effectiveness of the proposed method was also confirmed with ASR experiments.

Our future work includes improving the mask estimator with a more sophisticated neural network architecture and more training data. We also plan to verify the proposed method under various noise conditions.

8. REFERENCES

[1] Neville Moray, “Attention in dichotic listening: Affective cues and the influence of instructions,” Quarterly Journal of Experimental Psychology, vol. 11, no. 1, pp. 56–60, 1959.
[2] Paris Smaragdis, “Convolutive speech bases and their application to supervised speech separation,” IEEE Trans. Audio, Speech, and Language Processing, vol. 15, no. 1, pp. 1–12, 2007.

[3] Ozgur Yilmaz and Scott Rickard, “Blind separation of speech mixtures via time-frequency masking,” IEEE Trans. Signal Processing, vol. 52, no. 7, pp. 1830–1847, 2004.

[4] Dong Yu, Morten Kolbæk, Zheng-Hua Tan, and Jesper Jensen, “Permutation invariant training of deep models for speaker-independent multi-talker speech separation,” in Proc. ICASSP, 2017, pp. 241–245.

[5] John R Hershey, Zhuo Chen, Jonathan Le Roux, and Shinji Watanabe, “Deep clustering: Discriminative embeddings for segmentation and separation,” in Proc. ICASSP, 2016, pp. 31–35.

[6] Paris Smaragdis, “Blind separation of convolved mixtures in the frequency domain,” Neurocomputing, vol. 22, no. 1-3, pp. 21–34, 1998.

[7] Taesu Kim, Torbjørn Eltoft, and Te-Won Lee, “Independent vector analysis: An extension of ICA to multivariate components,” in Proc. ICA, 2006, pp. 165–172.

[8] Ngoc QK Duong, Emmanuel Vincent, and Rémi Gribonval, “Under-determined reverberant audio source separation using a full-rank spatial covariance model,” IEEE Trans. Audio, Speech, and Language Processing, vol. 18, no. 7, pp. 1830–1840, 2010.

[9] Hiroshi Sawada, Hirokazu Kameoka, Shoko Araki, and Naonori Ueda, “Multichannel extensions of non-negative matrix factorization with complex-valued data,” IEEE Trans. Audio, Speech, and Language Processing, vol. 21, no. 5, pp. 971–982, 2013.

[10] Takuya Yoshioka, Hakan Erdogan, Zhuo Chen, and Fil Alleva, “Multi-microphone neural speech separation for far-field multi-talker speech recognition,” in Proc. ICASSP, 2018, pp. 5739–5743.

[11] Marc Delcroix, Kateřina Žmolíková, Keisuke Kinoshita, Atsunori Ogawa, and Tomohiro Nakatani, “Single channel target speaker extraction and recognition with speaker beam,” in Proc. ICASSP, 2018, pp. 5554–5558.

[12] Chao Weng, Dong Yu, Michael L Seltzer, and Jasha Droppo, “Deep neural networks for single-channel multi-talker speech recognition,” IEEE Trans. Audio, Speech, and Language Processing, vol. 23, no. 10, pp. 1670–1679, 2015.

[13] Yannan Wang, Jun Du, Li-Rong Dai, and Chin-Hui Lee, “Unsupervised single-channel speech separation via deep neural network for different gender mixtures,” in Proc. APSIPA, 2016, pp. 1–4.

[14] Kateřina Žmolíková, Marc Delcroix, Keisuke Kinoshita, Takuya Higuchi, Atsunori Ogawa, and Tomohiro Nakatani, “Speaker-aware neural network based beamformer for speaker extraction in speech mixtures,” in Proc. Interspeech, 2017, pp. 2655–2659.

[15] Brian King, I-Fan Chen, Yonatan Vaizman, Yuzong Liu, Roland Maas, Sree Hari Krishnan Parthasarathi, and Björn Hoffmeister, “Robust speech recognition via anchor word representations,” in Proc. Interspeech, 2017, pp. 2471–2475.

[16] Jahn Heymann, Lukas Drude, and Reinhold Haeb-Umbach, “Neural network based spectral mask estimation for acoustic beamforming,” in Proc. ICASSP, 2016, pp. 196–200.

[17] Emmanuel Vincent, Rémi Gribonval, and Cédric Févotte, “Performance measurement in blind audio source separation,” IEEE Trans. Audio, Speech, and Language Processing, vol. 14, no. 4, pp. 1462–1469, 2006.

[18] Ken-ichi Iso, Edward Whittaker, Tadashi Emori, and Junpei Miyake, “Improvements in Japanese voice search,” in Proc. Interspeech, 2012, pp. 2109–2112.

[19] Xiao-Lei Zhang and Ji Wu, “Deep belief networks based voice activity detection,” IEEE Trans. Audio, Speech, and Language Processing, vol. 21, no. 4, pp. 697–710, 2013.

[20] Xavier Anguera, Chuck Wooters, and Javier Hernando, “Acoustic beamforming for speaker diarization of meetings,” IEEE Trans. Audio, Speech, and Language Processing, vol. 15, no. 7, pp. 2011–2022, 2007.
