Neural Style Transfer: A Review

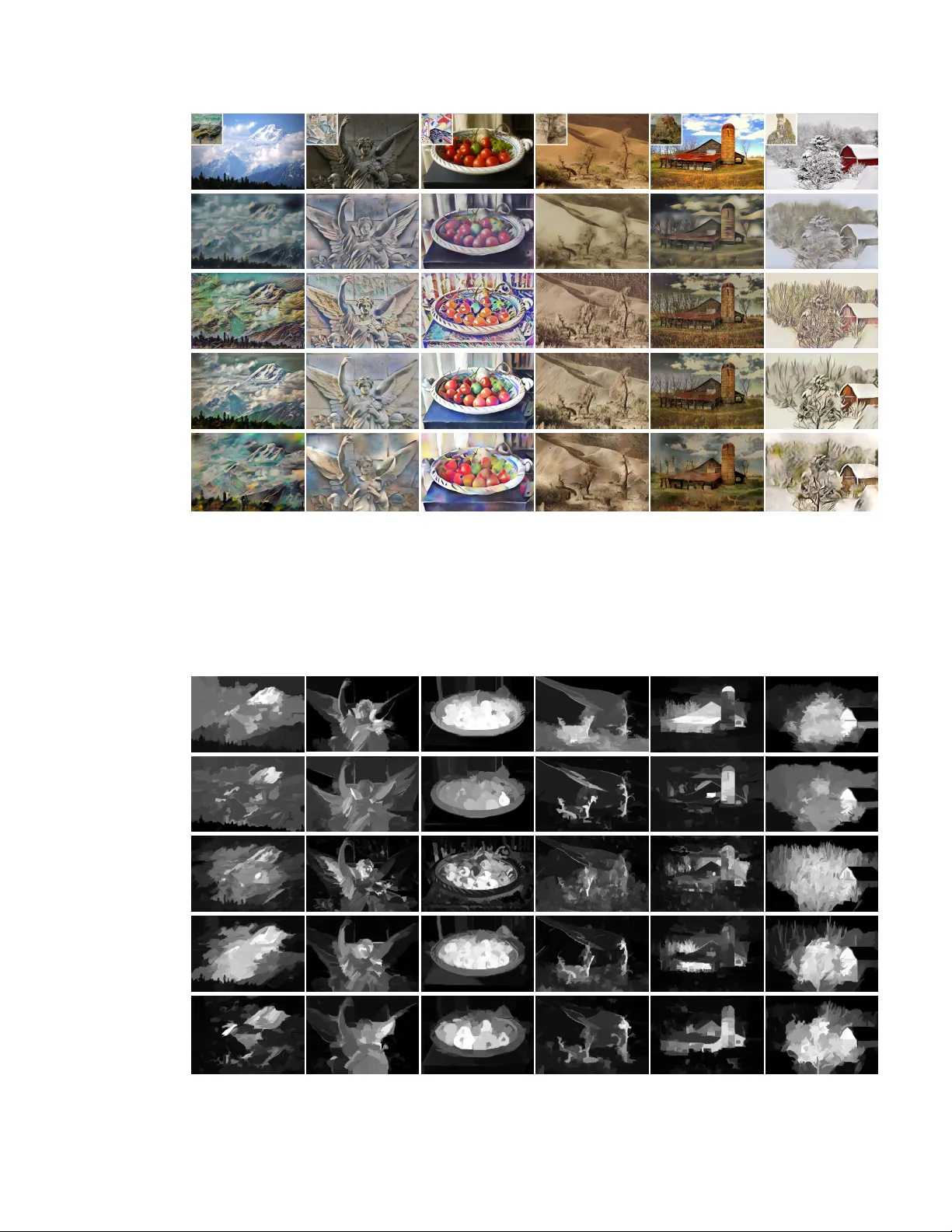

The seminal work of Gatys et al. demonstrated the power of Convolutional Neural Networks (CNNs) in creating artistic imagery by separating and recombining image content and style. This process of using CNNs to render a content image in different styl…

Authors: Yongcheng Jing, Yezhou Yang, Zunlei Feng