Multi-Channel Auto-Encoder for Speech Emotion Recognition

Inferring emotion status from users' queries plays an important role to enhance the capacity in voice dialogues applications. Even though several related works obtained satisfactory results, the performance can still be further improved. In this pape…

Authors: Zefang Zong, Hao Li, Qi Wang

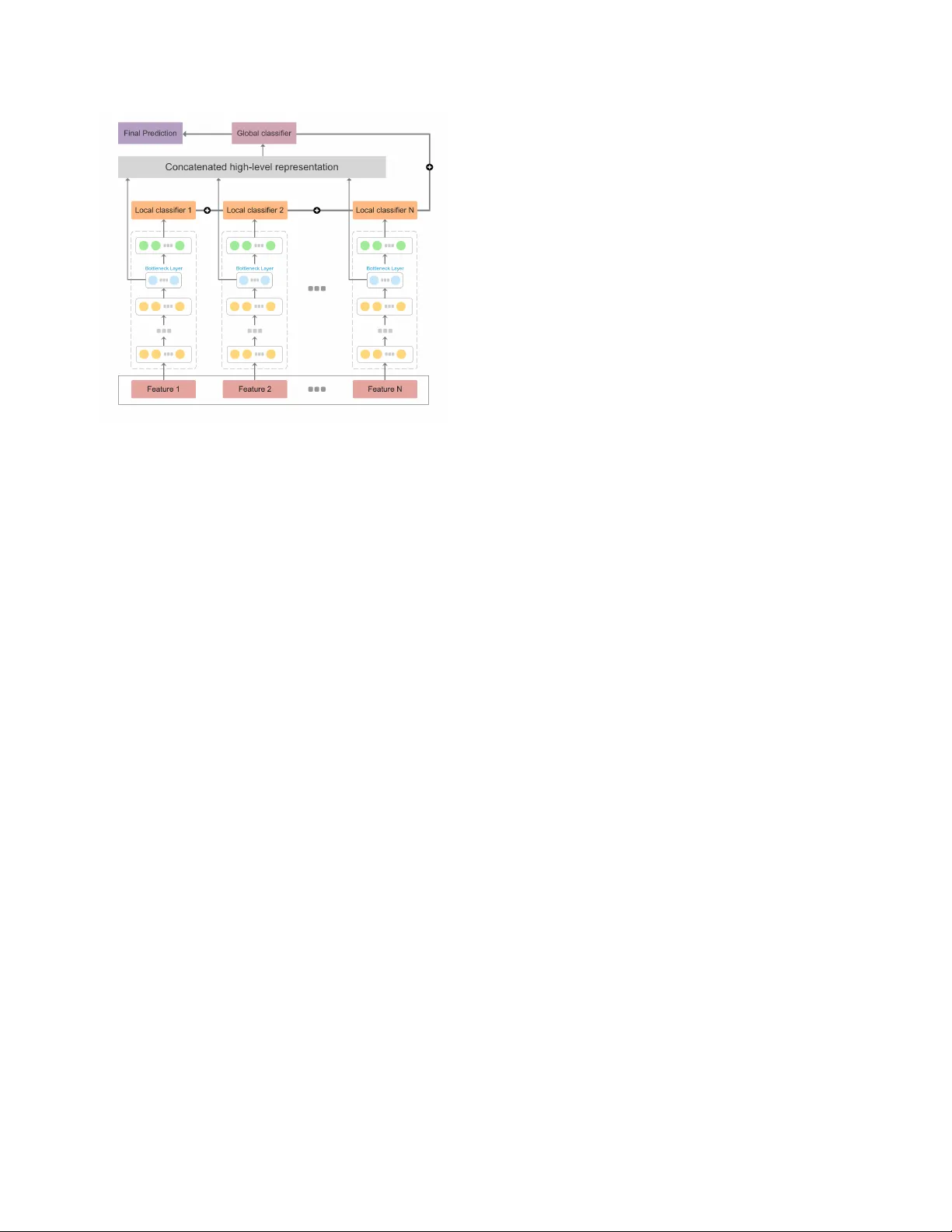

MULTI-CHANNEL AUTO-ENCODER FOR SPEECH EMOTION RECOGNITION

Zefang Zong, Hao Li, Qi Wang

Department of Electronic Engineering, Tsinghua University, Beijing, China

ABSTRACT

Inferring users' emotional state from their queries plays an important role in enhancing voice dialogue applications. Although several related works have obtained satisfactory results, performance can still be improved. In this paper, we propose a novel framework named multi-channel auto-encoder (MTC-AE) for emotion recognition from acoustic information. MTC-AE contains multiple local DNNs based on different low-level descriptors with different statistics functions that are partly concatenated together, which enables the structure to consider both local and global features simultaneously. Experiments on the benchmark dataset IEMOCAP show that our method significantly outperforms the existing state-of-the-art results, achieving 64.8% leave-one-speaker-out unweighted accuracy, which is 2.4% higher than the best previous result on this dataset.

Index Terms: Categorical emotion recognition, deep neural networks, auto-encoders, bottleneck features

1. INTRODUCTION

As voice-controlled intelligent applications develop rapidly, emotion recognition and analysis are becoming more and more important. Obtaining information from literal expressions alone no longer suffices, because a large part of the information is conveyed by human emotions. Some utterances, for example ironic phrases, may have a meaning completely opposite to their literal content. Voice-controlled virtual assistants like Siri¹ may also work much better with emotion information. Inferring emotion from voice data can therefore help to understand what users actually mean and to provide more humanized responses.

Traditionally, two major frameworks were explored for speech emotion recognition.
One is the HMM-GMM framework based on dynamic features [1], and the other is classification by support vector machines (SVM) based on high-level representations generated by applying many functions to low-level descriptors (LLDs) [2].

¹ http://www.apple.com/ios/siri/

Recently, more and more attention has been paid to deep learning methods for speech emotion recognition, which bring better performance than the traditional frameworks [3]. Some studies focus on utterance-level features, usually extracting high-level representations from LLDs and then using a deep neural network (DNN) for classification [4]. Meanwhile, instead of high-level statistical representations, other researchers use frame-level representations or the raw signal as input to a neural network for end-to-end training [5, 6]. In general, deep learning approaches have made a great contribution to the field of speech emotion recognition.

However, current deep learning methods have some apparent limitations in the training procedure. In frameworks using utterance-level features, the features fed into the neural network are always generated by concatenating LLDs directly. This not only ignores the independent nature of each feature but also produces an input of very high dimension, which makes a satisfying result hard to reach because the limited amount of training data leads to overfitting. Although the dimension can be reduced by other methods, the reduction may lose important information, which is inevitable to some extent.

In this paper, we put forward the multi-channel auto-encoder (MTC-AE) as a new scheme to avoid the limitations listed above.
Instead of training the network on all features concatenated directly, we take features from several local classifiers, each a separate DNN, which keeps the information that comes from the independence of each classifier and adds a strong regularization to the whole system, helping to relieve overfitting. Features are taken from the bottleneck layer of each classifier and then concatenated into a higher-dimensional one. The final concatenated feature is the input of a global classifier. Because of the regularization from the local classifiers, the concatenated feature is more discriminative for classification. Finally, we fuse the outputs of all classifiers to obtain the final prediction. Importantly, the global classifier and the local ones are trained simultaneously through a single objective function, which guarantees that the final results consider both independence and relevance among different features.

In addition, inspired by bottleneck features [7], we initialize each local DNN with a stacked denoising auto-encoder (SDAE) to form the complete MTC-AE scheme, which lowers the classification error caused by corrupted inputs and reaches even better performance. The structure of MTC-AE is shown in Figure 1.

Experiments on the benchmark dataset IEMOCAP show that our method outperforms the existing state-of-the-art methods, achieving 64.8% unweighted accuracy with leave-one-speaker-out (LOSO) 10-fold cross-validation.

Fig. 1. The structure of the multi-channel auto-encoder (MTC-AE). The yellow layers in each local classifier are pre-trained by SDAEs. The blue layers are bottleneck layers, which are concatenated for the global classifier. And the blue layers are fully connected layers with random initialization for each local classifier. All the predictions are fused for the final prediction.

2. MULTI-CHANNEL AUTO-ENCODER

In this section, we describe the complete MTC-AE scheme in detail.
In each local DNN, we use SDAEs for initialization before supervised training, to denoise corrupted versions of the inputs. Local classifiers are obtained by training a local DNN on each LLD. Meanwhile, we take the bottleneck layers of each local DNN and concatenate them as the total representation to train a global classifier. Training the global classifier and the local ones simultaneously not only takes both the relevance and the independence of different LLDs into consideration, but also relieves the overfitting caused by high-dimensional input and limited training data. Figure 1 shows the detailed structure.

2.1. Local Initialization

Because of the large number of local classifiers, our network structure is relatively complex, and the initialization of the network parameters is a key factor affecting performance. Voice data is often corrupted, and such corruption noticeably affects training. Inspired by DBNF [7], we use SDAEs for initialization. As shown in Figure 1, the lowest two layers of each local DNN are pre-trained with SDAEs in an unsupervised manner. A denoising auto-encoder operates mostly like a traditional auto-encoder, except that it takes as input a corrupted version $\tilde{x}$, generated by a corruption process $q(\tilde{x} \mid x)$ applied to the clean data $x$, and is meant to reconstruct the original data $x$. Generally, a random fraction of the elements of $x$ is set to zero to obtain the corrupted version $\tilde{x}$. The reconstruction process can be written as

$$\hat{x} = \sigma_2\left(W_2 \cdot \sigma_1\left(W_1 \cdot \tilde{x} + b_1\right) + b_2\right) \tag{1}$$

where $\tilde{x}$ is the input, $\hat{x}$ is the output, $W_1, W_2$ are the linear transforms, $b_1, b_2$ are the biases, and $\sigma_1, \sigma_2$ are the non-linear activation functions. In our formulation, we use the ELU function [8] for both $\sigma_1$ and $\sigma_2$:

$$\sigma_1(x) = \sigma_2(x) = \begin{cases} x, & x \ge 0 \\ \alpha \cdot (e^{x} - 1), & x < 0 \end{cases} \tag{2}$$

where $\alpha$ is set to 1 in this paper.
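As a rough illustration of Eqs. 1 and 2, the following NumPy sketch corrupts an input with masking noise and runs one denoising auto-encoder forward pass. The 400 hidden units and the 20% masking rate follow the network settings reported in Section 3.2. The input size of 21, the random weights, and the helper names `elu`, `mask_corrupt` and `dae_forward` are assumptions made for the example, not the authors' implementation.

```python
import numpy as np

def elu(x, alpha=1.0):
    """ELU activation of Eq. 2 (alpha = 1 in the paper)."""
    # Clip to non-positive values before expm1 so large positive x cannot overflow.
    return np.where(x >= 0, x, alpha * np.expm1(np.minimum(x, 0.0)))

def mask_corrupt(x, fraction, rng):
    """Masking noise: zero each element independently with probability `fraction`."""
    keep = rng.random(x.shape) >= fraction
    return x * keep

def dae_forward(x_tilde, W1, b1, W2, b2):
    """Eq. 1: x_hat = sigma2(W2 . sigma1(W1 . x_tilde + b1) + b2), batched over rows."""
    hidden = elu(x_tilde @ W1.T + b1)
    return elu(hidden @ W2.T + b2)

rng = np.random.default_rng(0)
n_in, n_hidden = 21, 400                      # n_in is a hypothetical input size
x = rng.standard_normal((4, n_in))            # 4 toy samples standing in for one channel
W1 = 0.1 * rng.standard_normal((n_hidden, n_in))
b1 = np.zeros(n_hidden)
W2 = 0.1 * rng.standard_normal((n_in, n_hidden))
b2 = np.zeros(n_in)

x_tilde = mask_corrupt(x, 0.2, rng)           # corrupted input
x_hat = dae_forward(x_tilde, W1, b1, W2, b2)  # reconstruction with the clean input's shape
```

Training would then adjust the weights so that `x_hat` approaches the clean `x` rather than the corrupted `x_tilde`.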
We use the back-propagation algorithm to train the auto-encoder by minimizing the following cost function:

$$\min_{W_1, b_1, W_2, b_2} \sum_{x} \lVert x - \hat{x} \rVert^2 + \beta \cdot \left( \lVert W_1 \rVert^2 + \lVert W_2 \rVert^2 \right) \tag{3}$$

where $\beta$ is the hyperparameter that limits the influence of the regularization term. In our experiments, $\beta$ is set to $10^{-4}$.

After one auto-encoder is trained, its hidden representation is treated as the input data for training the next auto-encoder. In total, for each local classifier, two auto-encoders are trained to initialize the lowest two layers.

2.2. Joint Fine-tuning

After training the SDAEs, a bottleneck layer, a hidden layer and a classification layer are connected on top to form a feed-forward neural network for each local classifier. The three layers attached on top are initialized randomly, while the lower layers are initialized with the auto-encoder weights. Then, as shown in Figure 1, the feed-forward neural network is used as a block that forms a local classifier in our framework.

It is important to note that relying on the local classifiers alone accounts for the independence of the features sufficiently but ignores the relevance among different features. Therefore, we concatenate the bottleneck layers of all local classifiers into a global representation to train the global classifier, which is initialized randomly. The global classifier takes the relevance of the local representations into consideration and measures it effectively.

Moreover, in order to optimize the whole system with respect to both relevance and independence, we use a single objective function to train the local classifiers and the global one simultaneously:

$$\min_{\phi_g, \phi_{l,i}} \; \lambda \cdot H\left(p(x), q_{\phi_g}(x)\right) + (1 - \lambda) \cdot \sum_{i=1}^{N} H\left(p(x), q_{\phi_{l,i}}(x)\right) \tag{4}$$

where $p(\cdot)$ is the true distribution given by the one-hot label and $q(\cdot)$ is the approximating distribution.
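The value of the objective in Eq. 4 can be sketched in a few lines of NumPy. The sketch follows Eq. 4 as printed, with the weight λ (0.1 in the paper's experiments) multiplying the global term. Averaging the cross-entropy over a batch, the small `eps` inside the logarithm, and the names `cross_entropy` and `joint_loss` are assumptions of this example. It only evaluates the loss and does not perform the minimization.

```python
import numpy as np

def cross_entropy(p, q, eps=1e-12):
    """Cross-entropy -sum_k p_k log q_k per sample, averaged over the batch (assumption)."""
    return float(-np.mean(np.sum(p * np.log(q + eps), axis=1)))

def joint_loss(p, q_global, q_locals, lam=0.1):
    """Eq. 4 as printed: lam * H(p, q_global) + (1 - lam) * sum_i H(p, q_local_i)."""
    local_term = sum(cross_entropy(p, q) for q in q_locals)
    return lam * cross_entropy(p, q_global) + (1.0 - lam) * local_term

# Toy batch: 2 utterances, 4 emotion classes, 3 local classifiers (illustrative sizes).
p = np.eye(4)[[0, 2]]                  # one-hot labels for classes 0 and 2
q_uniform = np.full((2, 4), 0.25)      # every classifier outputs a uniform distribution
loss = joint_loss(p, q_uniform, [q_uniform] * 3, lam=0.1)
```

With uniform outputs every cross-entropy term equals log 4, so the toy loss is (0.1 + 0.9 · 3) · log 4. In an actual training setup the same weighted sum would be handed to an automatic-differentiation framework so that all classifiers are updated together.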
In Eq. 4, $\phi_g$ denotes the parameters of the global classifier and $\phi_{l,i}$ the parameters of the $i$-th local classifier. $N$ is the number of local classifiers and $\lambda$ is a weight coefficient between 0 and 1; in our experiments, we set $\lambda$ to 0.1. Specifically, for $\lambda = 0$, only the global classifiers are included in the framework, and for $\lambda = 1$, local classifiers are included instead of the "global classifiers". $H(\cdot)$ returns the cross-entropy between an approximating distribution and a true distribution, which can be written as

$$H\left(p(x), q(x)\right) = -\sum_{x} p(x) \cdot \log\left(q(x)\right) \tag{5}$$

The objective function in Eq. 4 can be optimized with the back-propagation algorithm.

Since our model contains many classifiers, we fuse the outputs of all classifiers by a simple weighted summation, with a weight parameter $\gamma$ between the global classifier and the local classifiers, which is set to 0.95 in this paper:

$$F(x) = \gamma \cdot q_{\phi_g}(x) + (1 - \gamma) \cdot \sum_{i=1}^{N} q_{\phi_{l,i}}(x) \tag{6}$$

The position of the maximum output of $F(x)$ is taken as the final prediction.

3. RESULTS AND DISCUSSION

3.1. Dataset

The IEMOCAP [9] database contains approximately 12 hours of audio-visual conversations in English by 10 speakers, manually segmented into utterances. The database contains the following categorical labels: anger, happiness, sadness, neutral, excitement, frustration, fear, surprise, and others. In our experiments, we form a four-class emotion classification dataset containing {happy, angry, sad, neutral}, merging the happiness and excitement categories into the single happy category, in order to compare with the earlier state-of-the-art methods mentioned in Section 3.2. Table 1 presents the number of utterances and the corresponding percentage of each category.

| Category | Happy | Anger | Sad | Neutral | Total |
| --- | --- | --- | --- | --- | --- |
| Utterances | 1636 | 1103 | 1084 | 1708 | 5531 |
| Percentage (%) | 29.6 | 19.9 | 19.6 | 30.9 | 100 |

Table 1. The number of utterances and their corresponding percentage for each emotion category.

3.2. Experimental Details

Evaluation. We performed all evaluations using 10-fold LOSO cross-validation, in the same manner as most approaches, so that there is no speaker overlap between the training and test data. To evaluate performance, we use the unweighted accuracy (UA), which has been used in several previous emotion challenges. UA is a good measure in this case because the class distribution is imbalanced.

Feature Extraction. We use the openSMILE toolkit [10] to extract the statistical features that were used in the INTERSPEECH 2010 Paralinguistic Challenge [11], as discussed in [4]. In total, 1582-dimensional features are generated by extracting the 38 kinds of LLDs shown in Table 2 and applying the 21 statistics functions shown in Table 3. Details of these features can be found in [11].

| Low-level descriptors (LLDs) |
| --- |
| PCM loudness |
| MFCC [0-14] |
| log Mel frequency band [0-7] |
| Line spectral pairs (LSP) frequency [0-7] |
| F0 by sub-harmonic summation |
| F0 envelope |
| Voicing probability |
| Jitter local |
| Jitter difference of difference of periods (DDP) |
| Shimmer local |

Table 2. 38-dimensional frame-level acoustic features.

| Statistics functions |
| --- |
| Position maximum/minimum |
| Arithmetic mean, standard deviation |
| Linear regression coefficients 1/2 |
| Linear regression error quadratic/absolute |
| Quartile 1/2/3 |
| Quartile range 2-1/3-2/3-1 |
| Percentile 1/99 |
| Percentile range 99-1 |
| Up-level time 75/99 |

Table 3. 21 kinds of statistics functions applied on LLDs.

Network Setting. In our experiments, the framework consists of 38 local classifiers, corresponding to the 38-dimensional frame-level acoustic features mentioned above.

For pre-training the SDAEs, Adam [12] is used for optimization with a learning rate of 0.0003 for 200 iterations and a batch size of 64. Input vectors are corrupted by applying masking noise that sets a random 20% of their elements to zero. Each auto-encoder contains 400 hidden units, and the next one is trained on top of it once its training finishes. For the fine-tuning process, a bottleneck layer with 30 units is added, followed by a new hidden layer with 100 units. For the global classifier, the new hidden layer has 1000 units. Similarly, Adam is used for optimization with a learning rate of 0.0003 for 1000 iterations and a batch size of 64. After each epoch, the current model is evaluated on the validation set, and the model performing best on this set is used for testing. All of these processes run on GPUs using the Keras toolkit.

| Method | Reference | Validation Setting | UA (%) |
| --- | --- | --- | --- |
| Ensemble of SVM Trees | [APSIPA ASC, 2012] | 10-fold LOSO | 60.9 |
| Replicated Softmax Models + SVM | [ISCAS, 2014] | 10-fold LOSO | 57.4 |
| CNN Feature + MKL Classifier | [ICDM, 2016] | 10-fold | 61.3 |
| Contextual LSTM | [ACL, 2017] | First 8 speakers for training | 57.1 |
| Attention-based RNN | [ICASSP, 2017] | 4 Sessions for training | 58.8 |
| Deep Multi-layered Neural Network | [Neural Networks, 2017] | 8-fold LOSO | 60.9 |
| Multi-task DBN Feature + SVM | [Trans-AC, 2017] | 10-fold LOSO | 62.4 |
| MTC-DNN | Our Method | 10-fold LOSO | 62.7 |
| MTC-AE | Our Method | 10-fold LOSO | 64.8 |

Table 4. Performance on the IEMOCAP dataset with different models and comparison with the state of the art, based on unweighted accuracy.

State-of-the-art Methods. To evaluate the effectiveness of the proposed framework, we compare its emotion classification performance with the following state-of-the-art methods on IEMOCAP:
[APSIPA ASC, 2012] [13] proposed an ensemble of trees of binary SVM classifiers to address the sentence-level multimodal emotion recognition problem.
[ISCAS, 2014] [14] proposed a multi-modal framework for emotion recognition using bag-of-words features and undirected, replicated softmax topic models.
[ICDM, 2016] [15] feeds features extracted by deep convolutional neural networks (CNN) into a multiple kernel learning classifier for multimodal emotion recognition.
[ACL, 2017] [16] proposes an LSTM-based model to capture contextual information between utterance-level features in the same video.
[ICASSP, 2017] [17] studies automatically discovering emotionally relevant speech features using a deep recurrent neural network (RNN) and a local attention-based feature pooling strategy.
[Neural Networks, 2017] [18] proposes a speech emotion recognition system to empirically explore feed-forward and recurrent neural network architectures and their variants.
[Trans-AC, 2017] [4] proposes a framework for acoustic emotion recognition based on the deep belief network (DBN) framework.

3.3. Experimental Results and Analysis

Table 4 compares the classification performance of different models from the state-of-the-art literature on the IEMOCAP dataset. Our experiments were run with 10-fold LOSO, as in most previous work, and were carried out separately with and without SDAE pre-training of the network. We call the network without SDAEs MTC-DNN.

Before our work, the highest unweighted accuracy was 62.4%, obtained by using a multi-task deep belief network for feature representation and an SVM as the classifier [4]. Compared with this, the two methods we propose, MTC-DNN and MTC-AE, improve the UA by 0.3% and 2.4%, respectively. Both methods outperform the existing state-of-the-art methods. This supports the claim that taking the independence of different features into consideration helps to improve the performance of the system. It also adds a strong regularization to the whole system and makes the system more discriminative for classification. Notably, using SDAEs to pre-train the network helps to further improve the classification accuracy.
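For readers who want to reproduce the kind of numbers compared above, the following NumPy sketch shows how fused predictions (Eq. 6, with γ = 0.95 as in the paper) could be scored with unweighted accuracy, taken here in its usual sense as the mean of the per-class recalls. The toy probabilities and labels and the function names `fuse` and `unweighted_accuracy` are illustrative assumptions, not data or code from the paper.

```python
import numpy as np

def fuse(q_global, q_locals, gamma=0.95):
    """Eq. 6: gamma * global output + (1 - gamma) * sum of the local outputs."""
    return gamma * q_global + (1.0 - gamma) * np.sum(q_locals, axis=0)

def unweighted_accuracy(y_true, y_pred, n_classes):
    """Mean of per-class recalls; classes absent from y_true are skipped."""
    recalls = []
    for c in range(n_classes):
        mask = y_true == c
        if mask.any():
            recalls.append(np.mean(y_pred[mask] == c))
    return float(np.mean(recalls))

# Fusion on a toy case: 2 utterances, 2 classes, 2 local classifiers.
q_global = np.array([[0.6, 0.4], [0.3, 0.7]])
q_locals = np.array([[[0.5, 0.5], [0.5, 0.5]],
                     [[0.1, 0.9], [0.9, 0.1]]])
pred = np.argmax(fuse(q_global, q_locals), axis=1)   # position of the maximum of F(x)

# UA on a toy imbalanced label set with 4 classes.
y_true = np.array([0, 0, 0, 0, 1, 1, 2, 3])
y_pred = np.array([0, 0, 0, 1, 1, 0, 2, 3])
ua = unweighted_accuracy(y_true, y_pred, 4)          # recalls: 0.75, 0.5, 1.0, 1.0
```

In the toy label set the plain accuracy is 6/8 = 0.75 while the UA is 0.8125, which illustrates why the two measures differ when classes are imbalanced.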
SDAEs explicitly reduce the noise present in the dataset and help to accelerate the convergence of the network.

4. CONCLUSION

In this paper, we proposed a novel architecture named MTC-AE for speech emotion recognition. Multiple local DNNs not only keep the information that comes from the independence of each feature but also add a regularization to the whole scheme, helping to relieve overfitting. Moreover, the bottleneck layers of the local DNNs are concatenated to form a strong classifier. SDAEs are used to initialize the complex networks. Experiments show that our method significantly outperforms the existing state-of-the-art methods in UA on the IEMOCAP dataset.

5. REFERENCES

[1] B Schuller, G Rigoll, and M Lang, "Hidden markov model-based speech emotion recognition," in International Conference on Multimedia and Expo, 2003. ICME '03. Proceedings, 2003, pp. I–401–4 vol. 1.
[2] Björn Schuller, Bogdan Vlasenko, Florian Eyben, Gerhard Rigoll, and Andreas Wendemuth, "Acoustic emotion recognition: A benchmark comparison of performances," in Automatic Speech Recognition & Understanding, 2009. ASRU 2009. IEEE Workshop on. IEEE, 2009, pp. 552–557.
[3] Yelin Kim, Honglak Lee, and Emily Mower Provost, "Deep learning for robust feature generation in audio-visual emotion recognition," in Acoustics, Speech and Signal Processing (ICASSP), 2013 IEEE International Conference on. IEEE, 2013, pp. 3687–3691.
[4] Rui Xia and Yang Liu, "A multi-task learning framework for emotion recognition using 2d continuous space," IEEE Transactions on Affective Computing, vol. 8, no. 1, pp. 3–14, 2017.
[5] Shiqing Zhang, Shiliang Zhang, Tiejun Huang, and Wen Gao, "Multimodal deep convolutional neural network for audio-visual emotion recognition," in Proceedings of the 2016 ACM on International Conference on Multimedia Retrieval. ACM, 2016, pp. 281–284.
[6] George Trigeorgis, Fabien Ringeval, Raymond Brueckner, Erik Marchi, Mihalis A Nicolaou, Björn Schuller, and Stefanos Zafeiriou, "Adieu features? end-to-end speech emotion recognition using a deep convolutional recurrent network," in Acoustics, Speech and Signal Processing (ICASSP), 2016 IEEE International Conference on. IEEE, 2016, pp. 5200–5204.
[7] Jonas Gehring, Yajie Miao, Florian Metze, and Alex Waibel, "Extracting deep bottleneck features using stacked auto-encoders," in IEEE International Conference on Acoustics, Speech and Signal Processing, 2013, pp. 3377–3381.
[8] Djork-Arné Clevert, Thomas Unterthiner, and Sepp Hochreiter, "Fast and accurate deep network learning by exponential linear units (elus)," Computer Science, 2015.
[9] Carlos Busso, Murtaza Bulut, Chi-Chun Lee, Abe Kazemzadeh, Emily Mower, Samuel Kim, Jeannette N Chang, Sungbok Lee, and Shrikanth S Narayanan, "Iemocap: Interactive emotional dyadic motion capture database," Language resources and evaluation, vol. 42, no. 4, pp. 335, 2008.
[10] Florian Eyben, "Opensmile: the munich versatile and fast open-source audio feature extractor," in ACM International Conference on Multimedia, 2010, pp. 1459–1462.
[11] Björn Schuller, Stefan Steidl, Anton Batliner, Felix Burkhardt, Laurence Devillers, Christian A. Müller, and Shrikanth S. Narayanan, "The interspeech 2010 paralinguistic challenge," in INTERSPEECH 2010, Conference of the International Speech Communication Association, Makuhari, Chiba, Japan, September, 2010, pp. 2794–2797.
[12] Diederik P Kingma and Jimmy Ba, "Adam: A method for stochastic optimization," Computer Science, 2014.
[13] Viktor Rozgic, Sankaranarayanan Ananthakrishnan, Shirin Saleem, Rohit Kumar, and Rohit Prasad, "Ensemble of svm trees for multimodal emotion recognition," in Signal & Information Processing Association Annual Summit and Conference (APSIPA ASC), 2012 Asia-Pacific. IEEE, 2012, pp. 1–4.
[14] Mohit Shah, Chaitali Chakrabarti, and Andreas Spanias, "A multi-modal approach to emotion recognition using undirected topic models," in Circuits and Systems (ISCAS), 2014 IEEE International Symposium on. IEEE, 2014, pp. 754–757.
[15] Soujanya Poria, Iti Chaturvedi, Erik Cambria, and Amir Hussain, "Convolutional mkl based multimodal emotion recognition and sentiment analysis," in Data Mining (ICDM), 2016 IEEE 16th International Conference on. IEEE, 2016, pp. 439–448.
[16] Soujanya Poria, Erik Cambria, Devamanyu Hazarika, Navonil Majumder, Amir Zadeh, and Louis-Philippe Morency, "Context-dependent sentiment analysis in user-generated videos," in Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2017, vol. 1, pp. 873–883.
[17] Seyedmahdad Mirsamadi, Emad Barsoum, and Cha Zhang, "Automatic speech emotion recognition using recurrent neural networks with local attention," in Acoustics, Speech and Signal Processing (ICASSP), 2017 IEEE International Conference on. IEEE, 2017, pp. 2227–2231.
[18] H. M. Fayek, M Lech, and L Cavedon, "Evaluating deep learning architectures for speech emotion recognition," Neural Networks, 2017.
