Tensors, Learning, and Kolmogorov Extension for Finite-alphabet Random Vectors

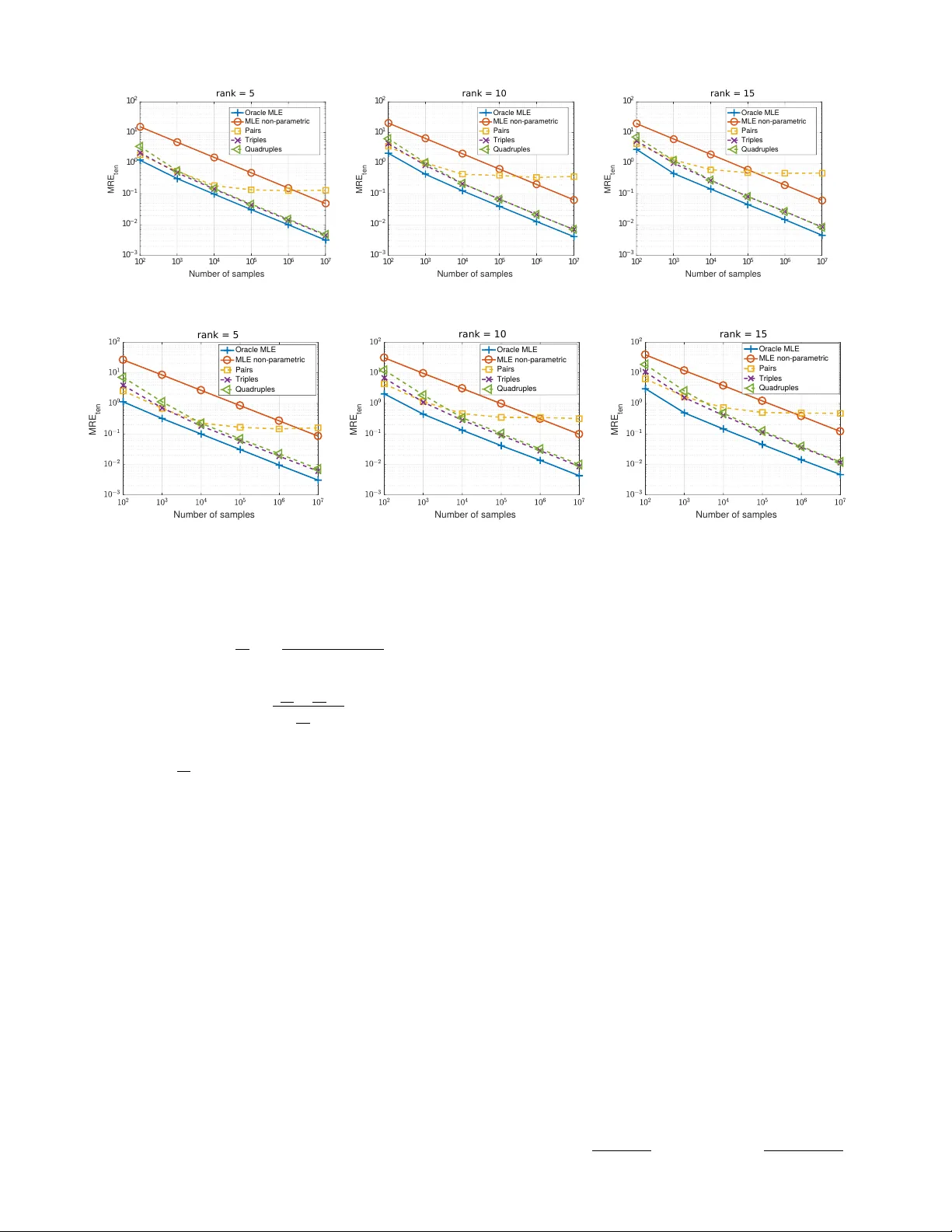

Estimating the joint probability mass function (PMF) of a set of random variables lies at the heart of statistical learning and signal processing. Without structural assumptions, such as modeling the variables as a Markov chain, tree, or other graphi…

Authors: Nikos Kargas, Nicholas D. Sidiropoulos, Xiao Fu