Inference of Spatio-Temporal Functions over Graphs via Multi-Kernel Kriged Kalman Filtering

Inference of space-time varying signals on graphs emerges naturally in a plethora of network science related applications. A frequently encountered challenge pertains to reconstructing such dynamic processes, given their values over a subset of vertices and time instants. The present paper develops a graph-aware kernel-based kriged Kalman filter that accounts for the spatio-temporal variations, and offers efficient online reconstruction, even for dynamically evolving network topologies. The kernel-based learning framework bypasses the need for statistical information by capitalizing on the smoothness that graph signals exhibit with respect to the underlying graph. To address the challenge of selecting the appropriate kernel, the proposed filter is combined with a multi-kernel selection module. Such a data-driven method selects a kernel attuned to the signal dynamics on-the-fly within the linear span of a pre-selected dictionary. The novel multi-kernel learning algorithm exploits the eigenstructure of Laplacian kernel matrices to reduce computational complexity. Numerical tests with synthetic and real data demonstrate the superior reconstruction performance of the novel approach relative to state-of-the-art alternatives.

Research Summary

This paper addresses the problem of reconstructing time‑varying signals defined on graphs when only a subset of node values is observed at each time instant. While prior work has focused on static graph signal reconstruction using smoothness priors, band‑limitedness, or probabilistic Kriged Kalman filters, none of these approaches simultaneously handle dynamically changing graph topologies and rapidly evolving signal dynamics. The authors propose a novel deterministic framework that integrates graph‑aware kernel methods with Kalman‑filter‑like recursion, and they further augment it with an online multi‑kernel learning (MKL) scheme to adaptively select the most suitable kernel from a pre‑defined dictionary.

Spatio‑temporal model.

The signal f(vₙ,t), observed at vertex vₙ and time t, is decomposed into the sum of two components: an instantaneous spatial component f⁽ν⁾(·,t) that captures fast, possibly noisy fluctuations, and a slowly varying trend component f⁽χ⁾(·,t) that evolves according to a linear state equation

f⁽χ⁾ₜ = Aₜ f⁽χ⁾ₜ₋₁ + ηₜ,

where Aₜ is a graph transition matrix (e.g., a diffusion operator based on the current Laplacian) and ηₜ is a graph‑smooth process noise. This formulation generalizes vector‑autoregressive models to the graph domain and allows the transition matrix to reflect either a simple scalar scaling or a diffusion over the underlying graph.
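A minimal NumPy sketch of the state equation, assuming a ring graph, the explicit diffusion step Aₜ = I − αL as the transition matrix, and graph‑smooth noise ηₜ drawn with a diffusion‑kernel covariance. The topology, α, the kernel choice, and the helper names (ring_laplacian, diffusion_transition, smooth_noise, simulate_state) are illustrative assumptions, not the paper's code.

```python
import numpy as np

def ring_laplacian(n: int) -> np.ndarray:
    """Combinatorial Laplacian L = D - W of an unweighted ring (illustrative topology)."""
    W = np.zeros((n, n))
    for i in range(n):
        W[i, (i + 1) % n] = W[(i + 1) % n, i] = 1.0
    return np.diag(W.sum(axis=1)) - W

def diffusion_transition(L: np.ndarray, alpha: float) -> np.ndarray:
    """One explicit diffusion step A = I - alpha * L (hypothetical choice of A_t)."""
    return np.eye(L.shape[0]) - alpha * L

def smooth_noise(L: np.ndarray, sigma2: float, rng: np.random.Generator) -> np.ndarray:
    """Draw eta ~ N(0, K) with diffusion kernel K = U exp(-sigma2 * Lambda / 2) U^T.

    High graph frequencies get small variance, so the draw is smooth over the graph.
    """
    lam, U = np.linalg.eigh(L)
    K = U @ np.diag(np.exp(-sigma2 * lam / 2.0)) @ U.T
    return rng.multivariate_normal(np.zeros(L.shape[0]), K)

def simulate_state(L, alpha, sigma2, T, rng):
    """Iterate f_chi[t] = A f_chi[t-1] + eta[t] for t = 1..T, starting from a noise draw."""
    A = diffusion_transition(L, alpha)
    f = smooth_noise(L, sigma2, rng)
    traj = [f]
    for _ in range(T):
        f = A @ f + smooth_noise(L, sigma2, rng)
        traj.append(f)
    return np.stack(traj)  # shape (T + 1, N)
```

For example, `simulate_state(ring_laplacian(8), 0.2, 1.0, 5, np.random.default_rng(0))` returns a 6 × 8 trajectory. Since a ring Laplacian has λ_max ≤ 4, any α ≤ 0.5 keeps every eigenvalue of I − αL inside [−1, 1], so the diffusion step does not amplify the state.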

Kernel‑ridge formulation.

Given the observation model yₜ = Sₜ fₜ + eₜ (Sₜ selects the sampled nodes), the authors formulate a joint optimization problem that penalizes (i) the data‑fit error, (ii) the deviation of f⁽χ⁾ from the state equation measured in a kernel norm K⁽χ⁾ₜ, and (iii) the roughness of f⁽ν⁾ measured in a kernel norm K⁽ν⁾ₜ. The regularization parameters μ₁ and μ₂ balance these three terms. Directly solving this problem would require storing all past data, leading to a complexity that grows with time.
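The observation model and the per‑time‑step cost can be written out directly. This sketch assumes the kernel norm ‖x‖²_K = xᵀK⁻¹x with positive‑definite kernels, and assigns μ₁ to the roughness term of f⁽ν⁾ and μ₂ to the state‑equation term; that assignment and the function names are hypothetical. It evaluates one time step only, whereas the full objective sums such terms over all past instants.

```python
import numpy as np

def sampling_matrix(idx, n):
    """S_t: the rows of the N x N identity indexed by the sampled vertices."""
    return np.eye(n)[np.asarray(idx)]

def joint_cost(y, S, f_chi, f_nu, f_chi_prev, A, K_chi, K_nu, mu1, mu2):
    """Three-term cost at one time step (hypothetical weighting of mu1 and mu2).

    ||y - S (f_chi + f_nu)||^2                  data fit on sampled vertices
    + mu1 * f_nu^T K_nu^{-1} f_nu               roughness of the instantaneous part
    + mu2 * d^T K_chi^{-1} d, d = f_chi - A f_chi_prev   deviation from the state equation
    """
    r = y - S @ (f_chi + f_nu)
    d = f_chi - A @ f_chi_prev
    return (r @ r
            + mu1 * (f_nu @ np.linalg.solve(K_nu, f_nu))
            + mu2 * (d @ np.linalg.solve(K_chi, d)))
```

Multiplying by Sₜ simply picks out the sampled entries, so in practice the matrix is rarely formed explicitly; it is kept here to mirror yₜ = Sₜ fₜ + eₜ.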

Kernel Kriged Kalman Filter (KKeKriKF).

Exploiting the first‑order optimality condition for f⁽ν⁾, the authors derive a closed‑form expression that eliminates f⁽ν⁾ from the objective. Substituting this expression yields a reduced problem that depends only on f⁽χ⁾. The resulting recursion has the classic predict‑update structure of a Kalman filter but is entirely deterministic: it does not assume any statistical distribution for the noise or the signal, relying solely on smoothness enforced by the chosen kernels. The prediction step uses Aₜ to propagate the previous estimate of f⁽χ⁾, while the update step incorporates the new observations through kernel‑based gain matrices. This algorithm, called Kernel Kriged Kalman Filter (KKeKriKF), generalizes the probabilistic Kriged Kalman filter by removing the need for known covariance matrices.
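The elimination step and the resulting predict/update recursion can be sketched as follows, assuming μ₁ weights the roughness of f⁽ν⁾ and μ₂ the state‑equation term, with ‖x‖²_K = xᵀK⁻¹x. Minimizing over f⁽ν⁾ is a kernel ridge regression whose minimum value is μ₁ rᵀ(SK⁽ν⁾Sᵀ + μ₁I)⁻¹r, where r = y − Sf⁽χ⁾. The remaining quadratic problem in f⁽χ⁾ then has the same form as a Kalman filter with "observation covariance" R = SK⁽ν⁾Sᵀ/μ₁ + I and "process covariance" Q = K⁽χ⁾/μ₂. This mapping and the names eliminate_nu and kkf_step are our own illustration of the structure, not a transcription of the paper's KKeKriKF.

```python
import numpy as np

def eliminate_nu(r, S, K_nu, mu1):
    """Minimise ||r - S f||^2 + mu1 * f^T K_nu^{-1} f over f (kernel ridge regression).

    Returns (f_hat, min_value) with C = S K_nu S^T + mu1 * I,
    f_hat = K_nu S^T C^{-1} r and min_value = mu1 * r^T C^{-1} r.
    """
    C = S @ K_nu @ S.T + mu1 * np.eye(S.shape[0])
    w = np.linalg.solve(C, r)
    return K_nu @ S.T @ w, mu1 * (r @ w)


def kkf_step(f_prev, M_prev, y, S, A, K_chi, K_nu, mu1, mu2):
    """One predict/update step of a kernel-weighted Kalman-style filter (illustrative sketch).

    Assumed mapping after eliminating f_nu: R = S K_nu S^T / mu1 + I, Q = K_chi / mu2.
    Returns the trend estimate, its weighting matrix M, and the full estimate f_chi + f_nu.
    """
    n = f_prev.size
    # Predict: propagate the previous trend estimate through the transition matrix.
    f_pred = A @ f_prev
    M_pred = A @ M_prev @ A.T + K_chi / mu2
    # Update: the innovation matrix is only S x S (S = number of sampled vertices).
    R = S @ K_nu @ S.T / mu1 + np.eye(S.shape[0])
    innov = S @ M_pred @ S.T + R
    G = np.linalg.solve(innov, S @ M_pred).T  # G = M_pred S^T innov^{-1} (both symmetric)
    f_chi = f_pred + G @ (y - S @ f_pred)
    M = (np.eye(n) - G @ S) @ M_pred
    # Instantaneous component: kernel ridge fit to what the trend leaves unexplained.
    f_nu, _ = eliminate_nu(y - S @ f_chi, S, K_nu, mu1)
    return f_chi, M, f_chi + f_nu
```

No probability distribution enters the sketch: R and Q are built purely from the kernels and regularization weights, which is the sense in which the recursion is deterministic.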

Multi‑kernel learning (MKriKF).

Kernel selection critically influences reconstruction quality. To avoid manual tuning, the authors embed a multi‑kernel learning module. They consider a dictionary of L Laplacian‑based kernels {K₁,…,K_L} and form a convex combination K = Σ_{ℓ=1}^L α_ℓ K_ℓ, with α_ℓ ≥ 0 and Σα_ℓ = 1. Because all Laplacian kernels share the same eigenvectors (the graph Fourier basis), the inverse of any convex combination can be expressed efficiently using the common eigenbasis and the weighted eigenvalues. This property enables an online update of the coefficients α_ℓ with O(L) operations per time step, while the main filter update retains the O(NS²) complexity (N = number of nodes, S = number of sampled nodes). The resulting algorithm, MKriKF, adaptively tracks the kernel that best matches the evolving data, without increasing computational burden.
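The eigenstructure shortcut is easy to check numerically. Assuming every dictionary kernel has the form K_ℓ = U diag(k_ℓ) Uᵀ with the same Laplacian eigenvectors U and positive spectra k_ℓ, the combination Σ α_ℓ K_ℓ has spectrum Σ α_ℓ k_ℓ, so its inverse needs only N scalar reciprocals once U is known. The diffusion and regularized‑Laplacian spectra below are standard examples chosen for illustration, the function names are hypothetical, and the weights α are held fixed; the paper's online rule for updating them is not reproduced.

```python
import numpy as np

def diffusion_spectrum(lam, sigma2):
    """Diffusion kernel eigenvalues 1 / r(lambda), with r(lambda) = exp(sigma2 * lambda / 2)."""
    return np.exp(-sigma2 * lam / 2.0)

def regularized_laplacian_spectrum(lam, sigma2):
    """Regularized Laplacian kernel eigenvalues 1 / r(lambda), with r(lambda) = 1 + sigma2 * lambda."""
    return 1.0 / (1.0 + sigma2 * lam)

def combined_kernel_inverse(U, spectra, alpha):
    """Inverse of K = sum_l alpha_l K_l when every K_l = U diag(spectra[l]) U^T.

    Only the N combined eigenvalues are inverted; no N x N matrix inversion is needed.
    """
    k = np.asarray(alpha) @ np.asarray(spectra)  # (L,) @ (L, N) -> combined spectrum (N,)
    return (U / k) @ U.T                         # U diag(1 / k) U^T
```

Because U is shared by the whole dictionary, changing α only changes the length‑N vector k, which is what makes re‑weighting the kernels cheap.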

Complexity analysis.

Both KKeKriKF and MKriKF require only matrix‑vector products involving the sampling matrix Sₜ and the kernel matrices projected onto the sampled nodes. The per‑time‑step computational cost is O(NS²), and memory usage scales linearly with N. This is substantially lower than methods that manipulate full block‑tridiagonal covariance matrices, making the proposed algorithms suitable for real‑time streaming applications on moderately sized graphs (hundreds to a few thousand nodes).
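The O(NS²) term can be seen in the gain computation, which only touches sampled rows and columns. A sketch of that step, assuming a Kalman‑style gain G = MSᵀ(SMSᵀ + R)⁻¹ with R equal to K⁽ν⁾ restricted to the sampled nodes, divided by μ₁, plus the identity (a hypothetical mapping, not taken from the paper). Indexing replaces every product with the sampling matrix Sₜ, and the only linear solve is S × S. The sketch takes the predicted matrix M as given and covers only the gain.

```python
import numpy as np

def gain_sampled(M_pred, K_nu, idx, mu1):
    """Kalman-style gain built from sampled rows/columns only (hypothetical helper).

    Cost: O(N S) to slice, O(S^3) to factor the S x S system, O(N S^2) to form the N x S gain.
    """
    idx = np.asarray(idx)
    P = M_pred[:, idx]                                   # M_pred S^T, shape (N, S)
    C = (M_pred[np.ix_(idx, idx)]                        # S M_pred S^T
         + K_nu[np.ix_(idx, idx)] / mu1                  # S K_nu S^T / mu1
         + np.eye(idx.size))
    return np.linalg.solve(C, P.T).T                     # G = P C^{-1}, valid since C is symmetric
```

For a fixed number of sampled vertices S, the gain cost therefore grows only linearly with the number of nodes N.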

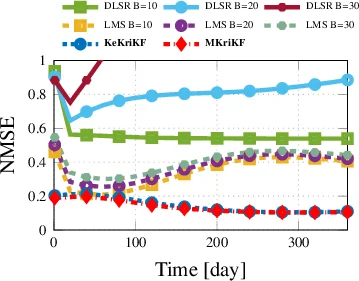

Experimental validation.

The authors evaluate the methods on synthetic data generated with time‑varying Laplacians and on real‑world datasets: (i) traffic sensor networks where road closures cause abrupt topology changes, (ii) meteorological stations with seasonal dynamics, and (iii) social media diffusion graphs. In all cases, MKriKF achieves a lower mean‑square error than state‑of‑the‑art baselines, including static‑kernel Kriged Kalman filters and graph‑bandlimited reconstruction methods, often reducing the error by 25‑35%. Moreover, the runtime per iteration remains well below 50 ms for graphs with up to 2000 nodes, confirming that the method is suitable for online deployment.

Conclusions and future work.

The paper makes three principal contributions: (1) a deterministic graph‑aware spatio‑temporal model using a transition matrix that respects the underlying network structure, (2) a kernel‑ridge‑based Kalman‑filter‑like estimator (KKeKriKF) that avoids probabilistic assumptions, and (3) an efficient multi‑kernel learning scheme (MKriKF) that automatically adapts the kernel to the data while preserving the O(NS²) per‑step complexity. Future directions suggested include extending the state model to nonlinear dynamics (e.g., graph neural networks), learning the kernel dictionary itself, and scaling the approach to massive graphs via distributed implementations.
