Covert Wireless Communication with Artificial Noise Generation

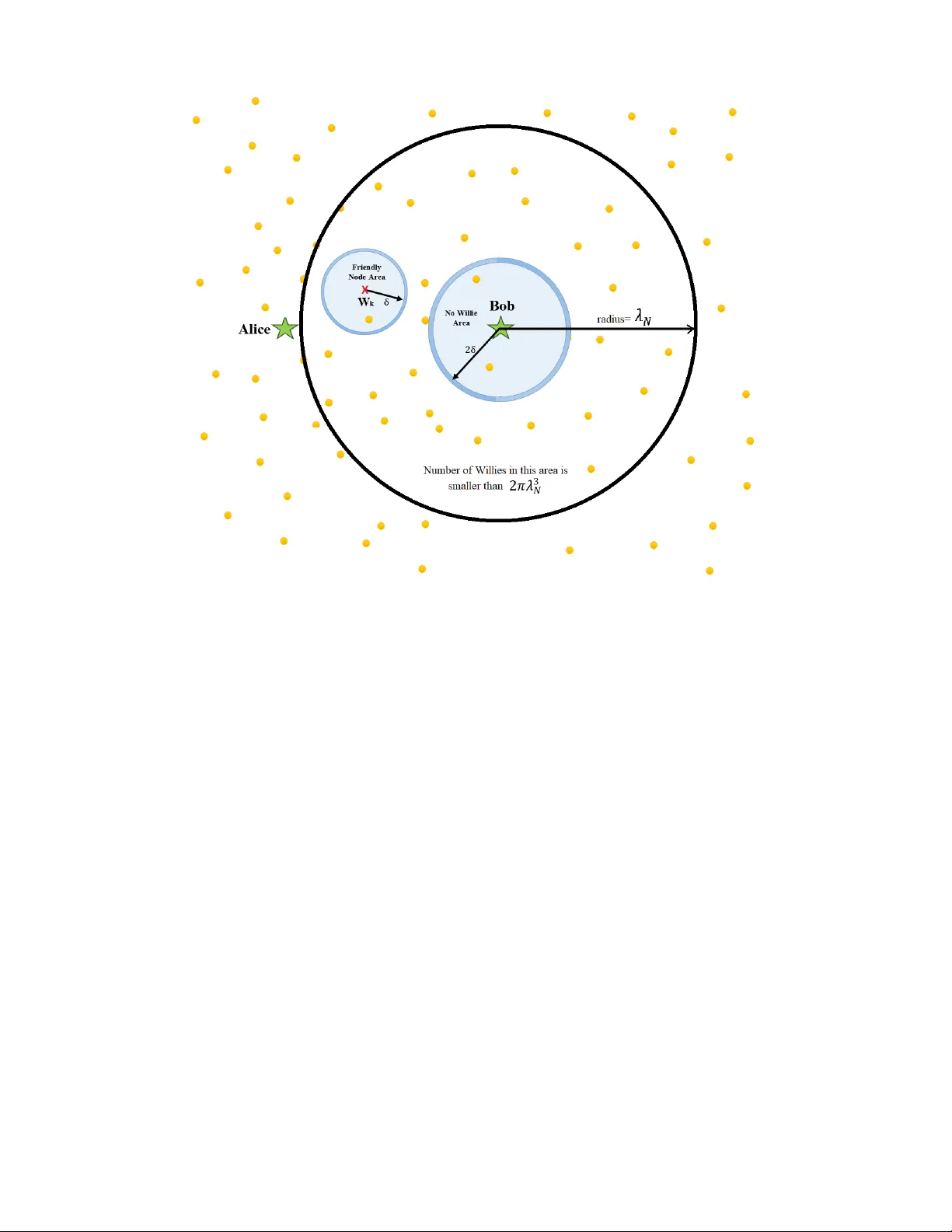

Covert communication conceals the transmission of a message from an attentive adversary. Recent work on the limits of covert communication in additive white Gaussian noise (AWGN) channels has demonstrated that a covert transmitter (Alice) can relia…

Authors: Ramin Soltani, Dennis Goeckel, Don Towsley