Abstraction Learning

There has long been a gap between artificial intelligence and human intelligence. In this paper, we identify three key elements forming human intelligence, and suggest that abstraction learning combines these elements and is thus a way to bridge the gap. Prior research in artificial intelligence either relies on human experts to specify abstractions, or treats abstraction as a qualitative explanation of the model. This paper aims to learn abstraction directly. We tackle three main challenges: representation, objective function, and learning algorithm. Specifically, we propose a partition structure that contains pre-allocated abstraction neurons; we formulate abstraction learning as a constrained optimization problem that integrates abstraction properties; and we develop a network evolution algorithm to solve this problem. The complete framework is named ONE (Optimization via Network Evolution). In our experiments on MNIST, ONE shows elementary human-like intelligence, including low energy consumption, knowledge sharing, and lifelong learning.

💡 Research Summary

The paper proposes a novel framework called ONE (Optimization via Network Evolution) to address the long‑standing gap between artificial intelligence and human intelligence. The authors argue that human intelligence is built on three essential elements: intrinsic motivation to understand the world, a unified network (the brain) that accumulates and shares knowledge across tasks, and limited complexity (time, data, space, and energy) that forces efficient solutions. They claim that “abstraction learning” can simultaneously satisfy these three elements and thus serve as a pathway toward human‑like intelligence.

To make abstraction a learnable objective, the authors identify three major challenges: (1) how to represent abstractions within a neural network, (2) how to define an objective function that can distinguish good from bad abstractions, and (3) how to let the objective influence the network’s structure, given that structural choices are typically non‑differentiable. Their solution consists of three technical innovations.

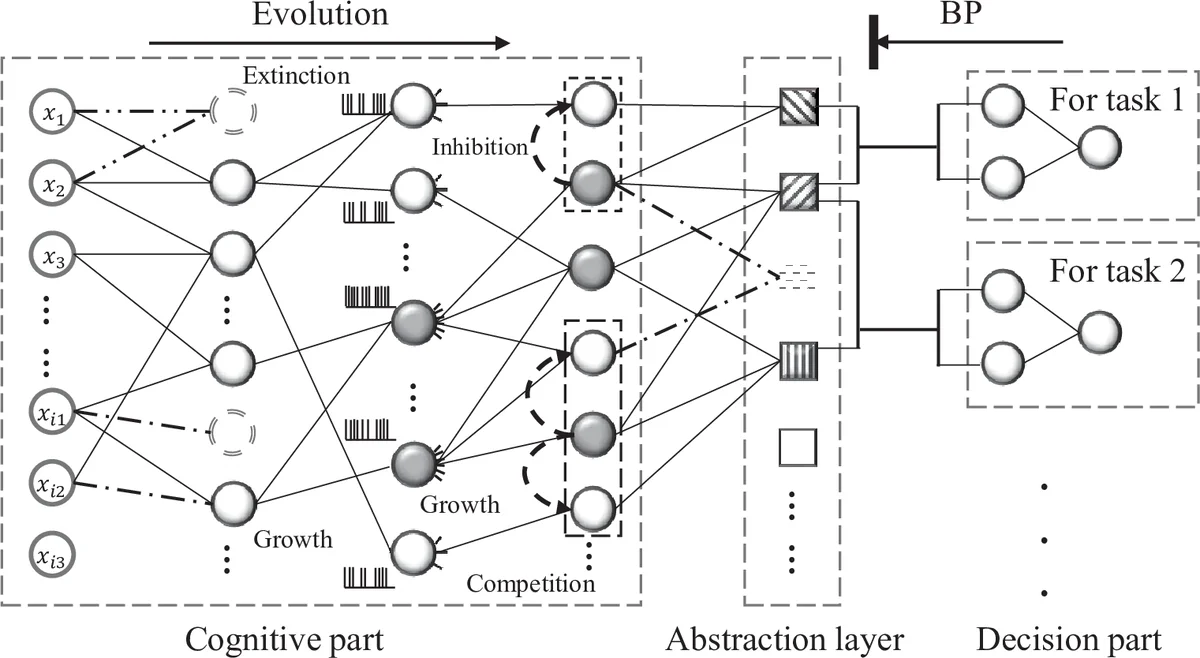

Partition Structure Prior – The network is divided into a task‑agnostic “cognition” part and multiple task‑specific “decision” parts, separated by an “abstraction‑locked layer”. Neurons in this layer are pre‑allocated abstraction neurons. Each abstraction neuron corresponds to a sub‑network that can be dynamically activated for a given input. The cognition part generates abstractions, while the decision part selects a small subset of them for each task. Because task losses never modify the abstraction neurons, knowledge is retained and can be reused across tasks.
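The partition described above can be illustrated with a small NumPy sketch. It is not the authors' implementation: the layer sizes, the single-matrix cognition part, the binary threshold, and the names `cognition`, `DecisionHead`, and `sgd_step` are all assumptions made for illustration. The point it shows is only that a task head reads a fixed subset of abstraction neurons and that a task update never touches the shared cognition weights.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes only; none of these values come from the paper.
N_INPUT, N_ABSTRACT, N_CLASSES = 16, 32, 2

# Task-agnostic cognition part, reduced here to one weight matrix (assumption).
W_cog = rng.normal(size=(N_INPUT, N_ABSTRACT))

def cognition(x):
    """Binary activations of the abstraction neurons for a batch x."""
    return (x @ W_cog > 0).astype(float)

class DecisionHead:
    """Task-specific part: reads only a selected subset of abstraction neurons."""

    def __init__(self, selected):
        self.mask = np.zeros(N_ABSTRACT)
        self.mask[selected] = 1.0
        # Weights from unselected abstraction neurons start at zero.
        self.W = rng.normal(size=(N_ABSTRACT, N_CLASSES)) * self.mask[:, None]

    def forward(self, a):
        return (a * self.mask) @ self.W

    def sgd_step(self, a, grad_logits, lr=0.1):
        # The task gradient updates head weights only; W_cog is never
        # modified here, which is what keeps the abstractions reusable.
        grad_W = (a * self.mask).T @ grad_logits
        self.W -= lr * grad_W * self.mask[:, None]
```

A head built as `DecisionHead([0, 3, 5, 7])` would see only those four abstraction neurons; adding a second task means adding a second head, not retraining `W_cog`.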

Constrained Optimization Formulation – The learning problem is cast as a constrained optimization that enforces three desired properties of abstractions:

- Variety – Constraints A1 and A2 limit the number of active neurons per layer and the out‑degree of each neuron, ensuring a diverse set of sparse subnetworks.

- Simplicity – Constraints A3 and A4 bound the total number of active abstraction neurons for any input and for all inputs belonging to the same class, encouraging concise explanations.

- Effectiveness – An entropy‑based objective selects, for each task, a fixed number E of abstraction neurons whose activation patterns have the lowest entropy across classes, i.e., neurons that already separate categories. Constraints A5 and A6 enforce the selection and zero out weights of unselected neurons in the task‑specific heads.

The overall loss combines the standard task loss (e.g., cross‑entropy) with the entropy term, while all constraints are hard‑coded.
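To make the Effectiveness criterion concrete, here is a minimal sketch of entropy-based selection. It assumes one plausible reading of "lowest entropy across classes": for each abstraction neuron, take the class distribution of the inputs that activate it and compute its Shannon entropy. The summary does not give the paper's exact formula, so the function names and this definition are assumptions.

```python
import numpy as np

def class_entropy(activations, labels, n_classes):
    """Entropy (in nats) of the class distribution among the inputs that
    activate each neuron. Assumed definition, not taken from the paper.

    activations: (n_samples, n_neurons) array of 0/1 values
    labels:      (n_samples,) integer class labels
    """
    one_hot = np.eye(n_classes)[labels]                       # (n_samples, n_classes)
    counts = np.asarray(activations, dtype=float).T @ one_hot  # (n_neurons, n_classes)
    totals = counts.sum(axis=1, keepdims=True)
    p = np.divide(counts, totals, out=np.zeros_like(counts), where=totals > 0)
    logp = np.log(p, out=np.zeros_like(p), where=p > 0)
    ent = -(p * logp).sum(axis=1)
    # A neuron that never fires says nothing about the classes; rank it last.
    ent[totals[:, 0] == 0] = np.inf
    return ent

def select_neurons(activations, labels, n_classes, E):
    """Indices of the E abstraction neurons with the lowest class entropy."""
    ent = class_entropy(activations, labels, n_classes)
    return np.argsort(ent, kind="stable")[:E]
```

A neuron that fires only for one class has entropy 0 and is selected first; a neuron that fires equally often for every class has the maximum entropy and is selected last among the active ones.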

Network Evolution Algorithm – Because the structure itself must be optimized, gradient‑based methods are insufficient. The authors introduce biologically inspired mechanisms:

- Growth adds random connections to explore new structures.

- Competition lets only the most promising subnetworks survive, based on the constraints and the entropy objective.

- Extinction removes poorly performing or redundant subnetworks.

This evolutionary process iteratively refines the set of abstractions while simultaneously training the task‑specific heads.
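The three mechanisms can be sketched on a binary connectivity mask between two layers. This is a toy illustration under stated assumptions, not the authors' algorithm: the edge and neuron scores are placeholders for whatever the constraints and entropy objective would supply, and the names `grow`, `compete`, `extinguish`, and `evolve_step` and all parameters are hypothetical.

```python
import numpy as np

def grow(mask, n_new, rng):
    """Growth: switch on up to n_new randomly chosen absent connections."""
    mask = mask.copy()
    absent = np.flatnonzero(mask == 0)
    if absent.size:
        chosen = rng.choice(absent, size=min(n_new, absent.size), replace=False)
        mask.flat[chosen] = 1
    return mask

def compete(mask, edge_score, max_out_degree):
    """Competition: each source neuron keeps only its best-scoring outgoing
    edges, which also enforces an out-degree limit."""
    kept = np.zeros_like(mask)
    for i in range(mask.shape[0]):
        edges = np.flatnonzero(mask[i])
        order = np.argsort(-edge_score[i, edges], kind="stable")
        kept[i, edges[order[:max_out_degree]]] = 1
    return kept

def extinguish(mask, neuron_score, threshold):
    """Extinction: cut every connection into target neurons scoring below threshold."""
    mask = mask.copy()
    mask[:, neuron_score < threshold] = 0
    return mask

def evolve_step(mask, edge_score, neuron_score, rng,
                n_new=4, max_out_degree=2, threshold=0.0):
    """One growth -> competition -> extinction round on the mask."""
    mask = grow(mask, n_new, rng)
    mask = compete(mask, edge_score, max_out_degree)
    return extinguish(mask, neuron_score, threshold)
```

Because every step operates on a discrete mask rather than on weights, no gradient through the structure is needed, which is the motivation the summary gives for using evolution instead of gradient descent.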

The authors evaluate ONE on the MNIST dataset, treating each digit classification as a separate task learned sequentially. Three “human‑like” properties emerge:

- Low Energy Consumption – For any task, only a small fraction (<10 %) of the total neurons fire, reducing computational cost.

- Knowledge Sharing – As more tasks are added, the proportion of newly created abstractions declines while reuse of existing abstractions rises, demonstrating effective transfer.

- Lifelong Learning – Because abstraction neurons are frozen after they are created, the network exhibits negligible catastrophic forgetting.

These results suggest that, at least on MNIST, the proposed abstraction‑centric, evolution‑based approach can support multi‑task learning, transfer, and continual learning within a single unified network.
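The energy and knowledge-sharing properties can be quantified with simple bookkeeping. The sketch below is one hypothetical way to do so; the metric definitions and the names `active_fraction` and `reuse_per_task` are assumptions, not the measurements used in the paper.

```python
def active_fraction(activations):
    """Mean fraction of neurons that fire per input.

    activations: list of equal-length rows of 0/1 values, one row per input.
    """
    return sum(sum(row) / len(row) for row in activations) / len(activations)

def reuse_per_task(selected_per_task):
    """For each task, in learning order, the share of its selected abstraction
    neurons that an earlier task had already selected."""
    seen = set()
    ratios = []
    for selected in selected_per_task:
        selected = set(selected)
        ratios.append(len(selected & seen) / len(selected) if selected else 0.0)
        seen |= selected
    return ratios
```

Under these definitions, a rising sequence from `reuse_per_task` would correspond to the reported trend of fewer new abstractions and more reuse as tasks accumulate.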

While promising, the work has limitations. Experiments are confined to a simple image classification benchmark, so it remains unclear how the method scales to more complex domains such as language, reinforcement learning, or large‑scale vision. Performance depends heavily on manually set hyper‑parameters for the constraints (V1, V2, S1, S2, E, etc.), suggesting a need for automated tuning. Moreover, the evolutionary search may become computationally expensive for deeper networks.

In summary, the paper introduces a fresh perspective by treating abstraction as a first‑class learnable entity, integrating structural constraints and evolutionary search into a unified framework. If extended to richer tasks and equipped with adaptive constraint handling, ONE could become a significant step toward AI systems that accumulate, share, and reuse knowledge in a manner reminiscent of human cognition.
