XNORBIN: A 95 TOp/s/W Hardware Accelerator for Binary Convolutional Neural Networks

Deploying state-of-the-art CNNs requires power-hungry processors and off-chip memory. This precludes the implementation of CNNs in low-power embedded systems. Recent research shows CNNs sustain extreme quantization, binarizing their weights and inter…

Authors: Andrawes Al Bahou, Geethan Karunaratne, Renzo Andri

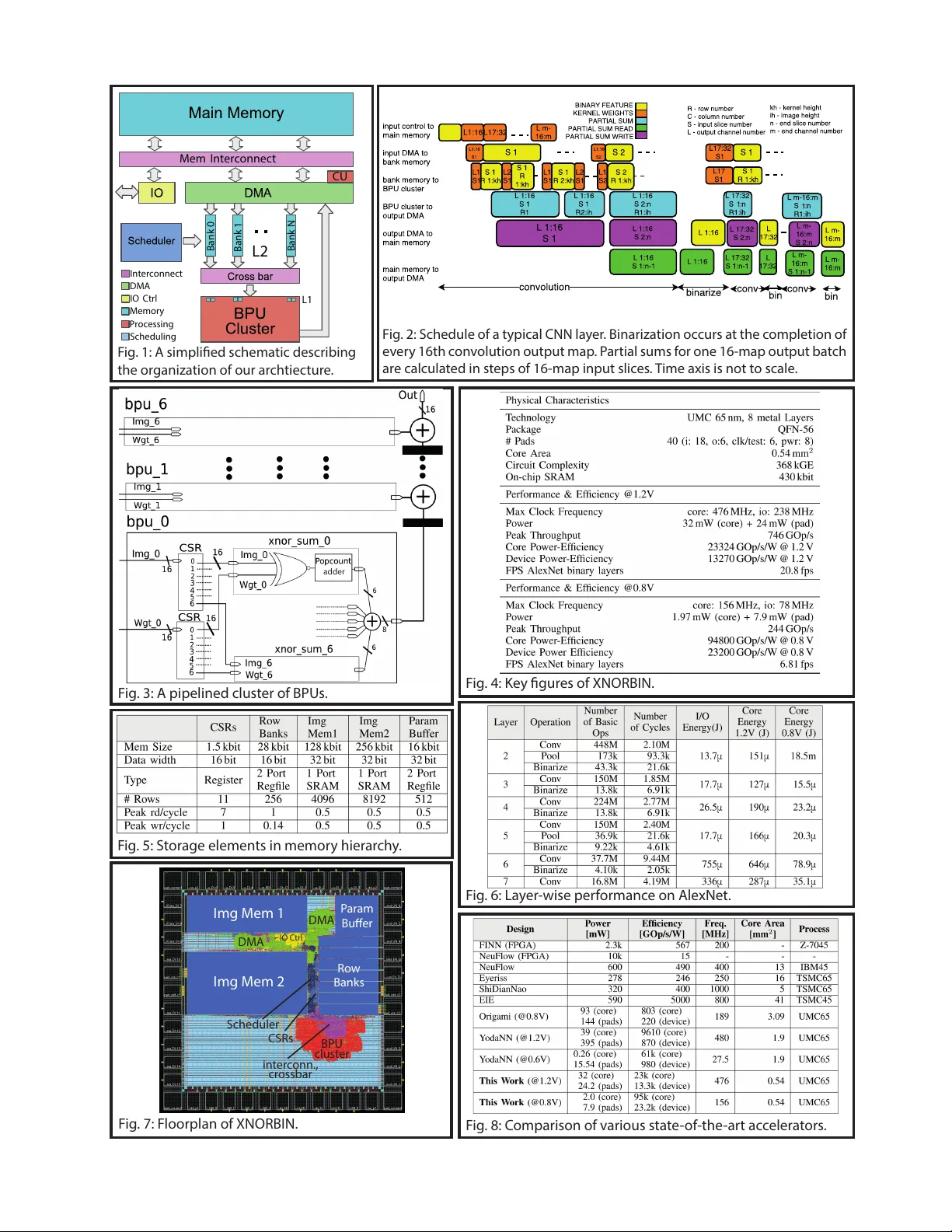

XNORBIN: A 95 T Op/s/W Hardware Accelerator for Binary Con v olutional Neural Netw orks Andrawes Al Bahou ∗ , Geethan Karunaratne ∗ , Renzo Andri, Lukas Cavigelli, Luca Benini Integrated Systems Laboratory , ETH Zurich, Zurich, Switzerland ∗ These authors contributed equally to this w ork and are listed in alphabetical order . Abstract Deploying state-of-the-art CNNs requires power-hungry processors and off-chip memory . This precludes the im- plementation of CNNs in low-po wer embedded systems. Recent research shows CNNs sustain extreme quantization, binarizing their weights and intermediate feature maps, thereby saving 8-32 × memory and collapsing energy-intensiv e sum-of-products into XNOR -and- popcount operations. W e present XNORBIN, an accelerator for binary CNNs with computation tightly coupled to memory for aggressive data reuse. Implemented in UMC 65nm technology XNORBIN achieves an energy efficiency of 95 T Op/s/W and an area ef ficiency of 2.0 T Op/s/MGE at 0.8 V . I . I N T RO D U C T I O N & R E L ATE D W O R K The recent success of conv olutional neural networks (CNNs) hav e turned them into the go-to approach for many complex machine learning tasks. Computing the forward pass of a state-of-the-art image classification CNN requires ≈ 20 GOp/frame (1 multiply-accumulate corresponds to 2 Op) and access to 20M-100M weights [1]. Such networks are out of reach for low-po wer (mW -le vel) embedded systems and are typically run on W -le vel embedded platforms or workstations with po werful GPUs. Hardware accelerators are essential to push CNNs to mW -range low-po wer platforms, where state-of-the-art energy efficienc y at negligible accuracy loss is achieved using binary weight networks [2]. For maximum energy efficienc y , it is imperative to store repeatedly accessed data on-chip and limit any off-chip communication. This puts stringent constraints on the CNNs (weights and intermediate feature map size) that can fit the de vice, limiting applications and crippling accuracy . Binary neural networks (BNNs) use bipolar binarization (+1/-1) for both the model weights and feature maps, reducing ov erall memory size and bandwidth constraints by ≈ 32 × , and simplifying the costly sum-of-products computation to mere XNOR-and-popcount operations while keeping an acceptable accuracy penalty relati ve to full-precision networks [3], also thanks to iterative improv ement through multiple BNN con volutions [4]. In this work, we present XNORBIN, a hardware accelerator targeting fully-binary CNNs to address both the memory and computation energy challenges. The key operations of BNNs are 2D-conv olutions of multiple binary (+1/-1) input feature maps and binary (+1/-1) filter kernel sets, resulting in multiple integer -valued feature maps. This conv olutions can be formulated as many parallel XNOR-and-popcount operations with the potential for intensi ve data reuse. The activ ation function and the optional batch normalization can then be collapsed to a re-binarization on a pre-computed per-output feature map threshold v alue and can be applied on-the-fly . An optional pooling operation can be enabled after the re-binarization. XNORBIN is complete in the sense that it implements all common BNN operations (e.g. those of the binary AlexNet described in [3]) and supports a wide range of hyperparameters. Furthermore, we provide a tool for automatic mapping of trained BNNs from the PyT orch deep learning framew ork to a control stream for XNORBIN. I I . A R C H I T E C T U R E A top-le vel ov erview of the chip architecture is provided in Fig. 1. The XNORBIN accelerator sequentially processes each layer of the BNN. Data flow: T o support up to 7 × 7 kernel sizes, the processing core of XNORBIN is composed of a cluster (Fig. 3) of 7 BPUs (Basic Processing Units), where ev ery BPU includes a set of 7 xnor_sum units. These units calculate the XNOR-and-popcount result on 16 bit vectors, containing values of 16 feature maps at a specific pixel. The outputs of all 7 xnor_sum units in a BPU are added-up, computing one output v alue of a 1D con volution on an image row each cycle. On the next higher level of hierarchy , the results of the BPUs are added up to produce one output value of a 2D con volution (cf. Fig. 3). Cycle-by-cycle, a con volution window slides horizontally over the image. The resulting integer value is forwarded to the DMA controller, which includes a near-memory compute unit (CU). The CU accumulates the partial results by means of a read-add-write operation, since the feature maps are processed in tiles of 16. After the final accumulation of partial results, the unit also performs the thresholding/re-binarization operation (i.e. activ ation and batch normalization). When binary results hav e to be written back to memory , the DMA also handles packing them into 16 bit words. Data re-use/buffering: XNORBIN comes with three levels of memory and data buf fering hierarchy . 1) At the highest lev el, the main memory stores the feature maps and the partial sums of the con volutions. This memory is divided into two SRAM blocks, where one serves as the data source (i.e. contains the current layer’ s input feature maps) while the other serves as data sink (i.e. contains the current output feature maps/partial results), and switching their roles as layer changes. These memories are sized such that they can store the worst-case layer of a non-trivial network (implemented: 128 kbit and 256 kbit to accommodate binary AlexNet), thus av oid the tremendous energy cost of pushing intermediate results off-chip. Additionally , a parameter b uffer stores the weights, binarization threshold, and configuration parameters and is sized to fit the target network, but may be used as a cache for for an external flash memory storing these parameters. Integrating this accelerator with a camera sensor would allow to completely eliminate off-chip communication. 2) On the next lo wer level of hierarchy , the row banks are used to buffer rows of the input feature maps to reliev e pressure on the SRAM. Since these row banks need to be rotated when shifting the con volution window down, they are connected to the BPU cluster through a crossbar . 3) The crossbar connects to the working memory inside the BPUs, the controlled shift re gisters (CSRs, cf. Fig. 3), for the input feature maps and the filter weights. These are shifted when moving the con volution window forward. All the data words in the CSRs are accessible in parallel and steered to the xnor_sum units. Scalability: XNORBIN is not limited to 7 × 7 conv olutions. It can be configured to handle any smaller filter sizes down to 1 × 1 . Howe ver , the size of BNN’ s largest pair of input and output feature maps (pixels × number of maps for both) has to fit into the main memory (i.e. 250 kbit for the actual implementation of XNORBIN). Furthermore, any con volution layer with a filter size larger than 7 × 7 would need to be split into smaller con volutions due to the number of parallel working BPUs, xnor_sum units per BPU, the number of row banks, and the size of the CSRs, thereby introducing a large overhead. There are no limitations to the depth of the network when streaming the network parameters from an external flash memory . I I I . I M P L E M E N T A T I O N R E S U L T S W e have tested our design running the binary AlexNet model from [3], which comes pre-trained on the ImageNet dataset. The throughput and energy consumption per layer are sho wn in Fig. 6. The results are implicitly bit-true—there is no implementation loss such as going from fp32 to a fixed-point representation, since all intermediate results are integer-v alued or binary . The design has been implemented in UMC 65nm technology . Evaluations for the typical-case corner at 25 ◦ C for 1.2V and 0.8V , hav e yielded a throughput of 746 GOp/s and 244 GOp/s and an energy efficiency of 23.3 TOp/s/W and 95 TOp/s/W , respectiv ely . The system consumes 1.8 mW@0.8V from which 69% are in the memory , 14.6% in the DMA and crossbar and 13% in the BPUs. The key performance and physical characteristics are presented in Fig. 4. The implementation parameters such as memory sizes hav e been chosen to support BNN models up to the size of binary AlexNet. W e compare energy efficiency of XNORBIN to state-of-the-art CNN accelerators in Fig. 8. T o the best of our knowledge, this is the first hardware accelerator for binary neural networks. The closest comparison point are the FPGA-based FINN results [5] with a 168 × higher energy consumption when running BNNs. The strongest competitor is [2], which is a binary-weight CNN accelerator strongly limited by I/O energy , requiring 25 × more energy per operation than XNORBIN. I V . C O N C L U S I O N Thanks to the binarization of the neural networks, the memory footprint of the intermediate results as well as the filter weights could be reduced by 8-32 × , making XNORBIN capable to fit all intermediate results of a simple, but realistic BNN, such as binary AlexNet, into on-chip memory with a mere total accelerator size of 0.54 mm 2 . Furthermore, the computational complexity decreases significantly as full-precision multiplier-accumulate units are replaced by XNOR and pop-count operations. Due to these benefits—smaller compute logic, keeping intermediate results on-chip, reduced model size—XNORBIN outperforms the ov erall energy efficienc y of existing accelerators by more than 25 × . R E F E R E N C E S [1] A. Canziani, A. Paszke, and E. Culurciello, “ An analysis of deep neural network models for practical applications, ” CoRR, vol. abs/1605.07678, 2016. [2] R. Andri, L. Cavigelli, D. Rossi, and L. Benini, “Y odaNN: An architecture for ultralow power binary-weight cnn acceleration, ” IEEE Transactions on Computer-Aided Design of Inte grated Circuits and Systems, vol. 37, no. 1, pp. 48–60, 2018. [3] M. Rastegari, V . Ordonez, J. Redmon, and A. Farhadi, “Xnor-net: Imagenet classification using binary con volutional neural networks, ” in European Conference on Computer V ision. Springer , 2016, pp. 525–542. [4] X. Lin, C. Zhao, and W . Pan, “T owards accurate binary conv olutional neural network, ” in Advances in Neural Information Processing Systems 30, I. Guyon, U. V . Luxburg, S. Bengio, H. W allach, R. Fergus, S. V ishwanathan, and R. Garnett, Eds. Curran Associates, Inc., 2017, pp. 345–353. [5] Y . Umuroglu, N. J. Fraser, G. Gambardella, M. Blott, P . Leong, M. Jahre, and K. V issers, “Finn: A framework for fast, scalable binarized neural network inference, ” in Proceedings of the 2017 ACM/SIGD A International Symposium on Field-Programmable Gate Arrays. A CM, 2017, pp. 65–74. Mem Interco nnect CU Bank N L2 L1 Interc onnect DMA IO Ctrl Memory Proces si ng Scheduli ng Bank 1 Bank 0 F ig . 1: A simplied schema tic descr ibing the or ganiza tion of our ar ch tiec tur e . F ig . 5: S t or age elemen ts in memor y hier ar ch y . F ig . 8: C ompar ison of v ar ious sta t e - of-the -ar t ac c eler a t ors . F ig . 4: Key gur es of XNORBIN. F ig . 2: S chedule of a t ypical CNN la y er . Binar iza tion oc curs a t the c ompletion of ev er y 16th c on v olution output map . P ar tial sums f or one 16-map output ba t ch ar e calcula t ed in st eps of 16-map input slic es . T ime axis is not t o scale . F ig . 7: F loor plan of XNORBIN. F ig . 3: A pipelined clust er of BPU s . F ig . 6: La y er - wise per f or manc e on A le xNet . I mg M em 1 I mg M em 2 P ar am Bu er R ow Banks BPU clust er in t er c onn., cr ossbar CSRs S cheduler D MA D MA IO Ctr l adder

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment