Characterizing Co-located Datacenter Workloads: An Alibaba Case Study

Warehouse-scale cloud datacenters co-locate workloads with different and often complementary characteristics to improve resource utilization. To better understand the challenges of managing such intricate, heterogeneous workloads while providing quality-assured resource orchestration and user experience, we analyze Alibaba’s co-located workload trace, the first publicly available dataset with precise information about the category of each job. Two types of workload share a cluster of 1313 machines: long-running, user-facing, containerized production jobs, and transient, highly dynamic, non-containerized, non-production batch jobs. Our multifaceted analysis reveals insights that we believe are useful for system designers and IT practitioners working on cluster management systems.

💡 Research Summary

This paper presents a comprehensive characterization of Alibaba’s publicly released co‑located workload trace, the first dataset that provides precise job‑type information for a large‑scale production cluster. The trace captures 24 hours of activity on a homogeneous cluster of 1,313 machines, each equipped with 64 CPU cores, and records both long‑running, user‑facing containerized services (managed by the Sigma scheduler) and transient, non‑containerized batch jobs (managed by the Fuxi scheduler). By analyzing resource reservation, actual usage, dynamicity, and interaction patterns, the authors uncover several key insights that are relevant to designers of cluster management systems and to practitioners operating large‑scale data centers.

Overall cluster utilization shows moderate CPU usage (average 40–50 % during the first four hours, then declining) and relatively high memory consumption (above 50 % for most of the time). Compared with Google’s trace, Alibaba’s environment is more memory‑constrained, reflecting the memory‑intensive nature of many online services.
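
A cluster-wide utilization curve like the one described above is typically built by averaging per-machine samples at each timestamp and then averaging over a window (for example, the first four hours). The sketch below assumes a hypothetical sample layout `(timestamp_s, machine_id, cpu_util_pct, mem_util_pct)`; the field names and the helper functions `cluster_utilization` and `window_mean` are illustrative, not the trace's actual schema or the authors' code.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-machine sample: (timestamp_s, machine_id, cpu_util_pct, mem_util_pct).
# Field names and order are illustrative assumptions, not the trace's actual schema.
Sample = tuple[int, str, float, float]


def cluster_utilization(samples: list[Sample]) -> dict[int, tuple[float, float]]:
    """Return {timestamp: (mean CPU %, mean memory %)} across all machines.

    The cluster is homogeneous, so an unweighted mean over machines equals the
    capacity-weighted utilization of the whole cluster.
    """
    by_ts: dict[int, list[Sample]] = defaultdict(list)
    for sample in samples:
        by_ts[sample[0]].append(sample)
    return {
        ts: (mean(s[2] for s in group), mean(s[3] for s in group))
        for ts, group in sorted(by_ts.items())
    }


def window_mean(series: dict[int, tuple[float, float]], start: int, end: int) -> tuple[float, float]:
    """Mean cluster CPU and memory utilization over timestamps in [start, end)."""
    points = [value for ts, value in series.items() if start <= ts < end]
    return mean(p[0] for p in points), mean(p[1] for p in points)
```

Because every machine has the same capacity, the simple mean is sufficient here; a heterogeneous cluster would need capacity-weighted averaging instead.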

For the long‑running container workloads, the study finds that CPU over‑booking is rare: on most machines, the total CPU reserved by containers stays within the physical limit of 64 cores. Memory reservation, however, is substantial: about 74 % of machines have 60–80 % of their memory reserved for containers. Actual CPU utilization is extremely low, with approximately 60 % of containers using less than 1 % of a core on average, while memory usage is modest but consistently above 2 % per container. This pattern indicates pervasive CPU over‑provisioning and memory over‑commitment, a design choice intended to absorb load spikes and meet the strict latency requirements of user‑facing services. The authors also note that container placement is uneven across machines because of constraints such as affinity, anti‑affinity, and priority, which leads to resource imbalance.
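
The reservation and idleness statistics above reduce to a few aggregations: sum the reservations of the containers on each machine, compare the CPU total with the 64 physical cores, bucket the memory-reservation fraction, and count containers whose average CPU use falls below 1 % of a core. The sketch below is a minimal illustration assuming a hypothetical `Container` record; its fields and the helper names are not taken from the trace or the paper.

```python
from dataclasses import dataclass

CORES_PER_MACHINE = 64  # every machine in the traced cluster has 64 cores


@dataclass
class Container:
    # Hypothetical fields for illustration; not the trace's actual column names.
    machine_id: str
    cpu_reserved_cores: float
    mem_reserved_frac: float  # reserved memory as a fraction of the host's memory
    cpu_used_cores: float     # average CPU actually consumed, in cores


def machine_reservations(containers: list[Container]) -> dict[str, tuple[float, float]]:
    """Sum the CPU cores and memory fraction reserved by containers on each machine."""
    totals: dict[str, tuple[float, float]] = {}
    for c in containers:
        cpu, mem = totals.get(c.machine_id, (0.0, 0.0))
        totals[c.machine_id] = (cpu + c.cpu_reserved_cores, mem + c.mem_reserved_frac)
    return totals


def cpu_overbooked(totals: dict[str, tuple[float, float]]) -> list[str]:
    """Machines whose summed container CPU reservation exceeds the physical cores."""
    return sorted(m for m, (cpu, _) in totals.items() if cpu > CORES_PER_MACHINE)


def share_mem_reserved_between(totals: dict[str, tuple[float, float]],
                               lo: float = 0.6, hi: float = 0.8) -> float:
    """Fraction of machines whose reserved memory fraction lies in [lo, hi]."""
    fracs = [mem for _, mem in totals.values()]
    return sum(lo <= f <= hi for f in fracs) / len(fracs)


def share_idle(containers: list[Container], threshold_cores: float = 0.01) -> float:
    """Fraction of containers averaging less than `threshold_cores` (1% of a core by default)."""
    return sum(c.cpu_used_cores < threshold_cores for c in containers) / len(containers)
```

Over-booking is defined here as a machine's summed CPU reservation exceeding its physical cores; the paper's exact definition may differ.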

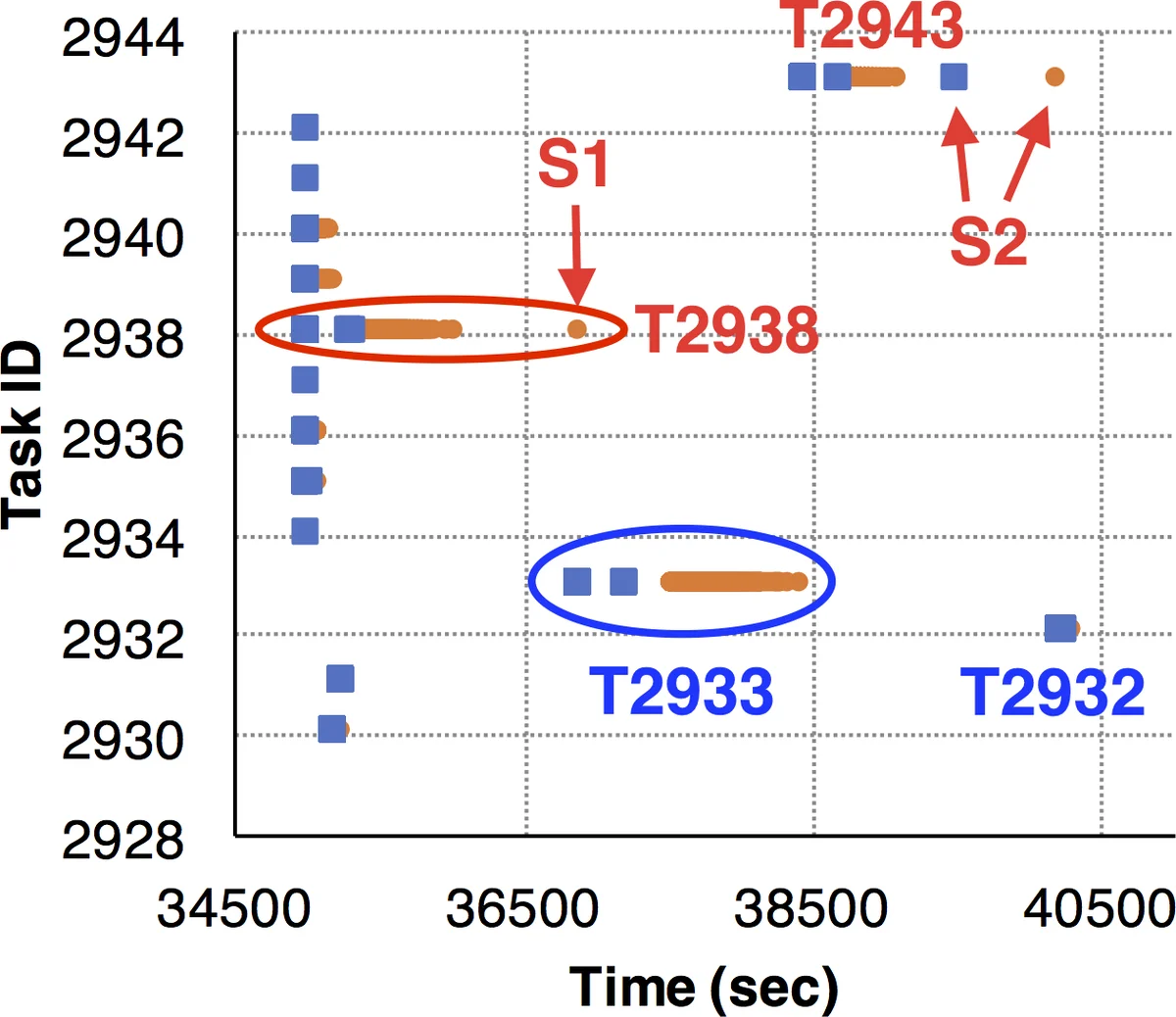

Batch job analysis reveals a markedly different behavior. By subtracting container usage from total cluster usage, the authors isolate the batch workload’s contribution. Batch tasks appear as “waves” in the utilization timeline, reflecting their short‑lived, highly dynamic nature. On average, batch jobs consume about 70–80 % of the resources they request, but a non‑trivial fraction exhibit severe under‑utilization or over‑utilization, manifesting as classic “straggler” problems. Cumulative distribution functions show that many task instances use less than half of their requested CPU or memory, confirming that resource requests are often over‑estimated.
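
The two steps described above, isolating batch usage by subtraction and summarizing used-to-requested ratios with an empirical CDF, can be sketched as follows. This assumes usage series keyed by timestamp and per-instance `(used, requested)` pairs; these shapes and the function names are hypothetical, chosen only for illustration.

```python
from bisect import bisect_right


def batch_usage(total: dict[int, float], container: dict[int, float]) -> dict[int, float]:
    """Estimate batch usage per timestamp as total usage minus container usage.

    Only timestamps present in both series are kept; small negative
    differences (e.g. from sampling skew) are clamped to zero.
    """
    common = sorted(total.keys() & container.keys())
    return {ts: max(total[ts] - container[ts], 0.0) for ts in common}


def usage_ratios(instances: list[tuple[float, float]]) -> list[float]:
    """Used/requested ratio for each (used, requested) instance; zero requests are skipped."""
    return [used / requested for used, requested in instances if requested > 0]


def ecdf(values: list[float]):
    """Return F with F(x) = fraction of values <= x (the empirical CDF)."""
    xs = sorted(values)
    n = len(xs)

    def F(x: float) -> float:
        return bisect_right(xs, x) / n

    return F
```

Evaluating `F(0.5)` on the ratios gives the share of instances that use at most half of what they requested, which is the kind of quantity the CDFs in the study summarize.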

A critical observation concerns the interaction between the two schedulers. Although a global Level‑0 controller mediates resource matching, the study finds limited coordination between Sigma and Fuxi. Over‑provisioned memory reservations by containers can starve batch jobs, and CPU over‑booking, even if sparse, can reduce the scheduling flexibility for batch workloads. These contentions lead to performance interference, suggesting that the current two‑level architecture does not fully exploit the potential of co‑location.
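
To make the memory-starvation effect concrete, the sketch below compares a batch admission check that charges containers for what they *reserve* with one that charges them only for what they *use*. It is a hypothetical illustration: `MachineMemory`, `batch_headroom`, and `can_admit` are invented names, and neither Sigma nor Fuxi is claimed to work this way.

```python
from dataclasses import dataclass


@dataclass
class MachineMemory:
    # Hypothetical per-machine memory view, in GB; not a Sigma or Fuxi data structure.
    capacity_gb: float
    container_reserved_gb: float
    container_used_gb: float
    batch_allocated_gb: float = 0.0


def batch_headroom(m: MachineMemory, by_reservation: bool = True) -> float:
    """Memory still available to batch tasks on this machine.

    by_reservation=True charges containers for what they reserved;
    False charges them only for what they currently use.
    """
    charged = m.container_reserved_gb if by_reservation else m.container_used_gb
    return max(m.capacity_gb - charged - m.batch_allocated_gb, 0.0)


def can_admit(m: MachineMemory, request_gb: float, by_reservation: bool = True) -> bool:
    """Whether a batch task requesting `request_gb` fits in the remaining headroom."""
    return request_gb <= batch_headroom(m, by_reservation)
```

For a 128 GB machine whose containers reserve 96 GB but use 40 GB, with 16 GB already given to batch, a 32 GB batch request is rejected under reservation-based accounting but admitted under usage-based accounting. The usage-based variant recovers stranded memory at the cost of interference risk if containers later spike toward their reservations.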

The paper also highlights that several long‑standing issues persist in modern data centers. Poorly predicted resource usage and straggler tasks, first reported in earlier studies of Google’s trace, remain prevalent in Alibaba’s environment. However, because batch jobs are non‑production and can tolerate over‑commitment, inaccurate CPU predictions matter less for them than for latency‑sensitive services. Stragglers, by contrast, still require attention from both administrators (e.g., speculative execution) and developers (e.g., workload balancing).
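
Speculative execution, mentioned above as an administrator-side mitigation, needs a rule for deciding which running tasks look like stragglers. One common heuristic, shown here as an assumption rather than the rule used in the paper or by Fuxi, flags a running task once its elapsed time exceeds a multiple of the median duration of already-completed tasks in the same job. The `factor` and `min_completed` thresholds are hypothetical.

```python
from statistics import median


def find_stragglers(completed: list[float], running: dict[str, float],
                    factor: float = 1.5, min_completed: int = 3) -> list[str]:
    """Return IDs of running tasks that are candidates for speculative re-execution.

    completed: durations (s) of tasks of the same job that already finished.
    running:   mapping task_id -> elapsed time (s) for tasks still running.
    A running task is flagged when its elapsed time exceeds `factor` times the
    median completed duration. The heuristic and its thresholds are assumptions.
    """
    if len(completed) < min_completed:
        return []  # not enough history to estimate a typical task duration
    threshold = factor * median(completed)
    return sorted(t for t, elapsed in running.items() if elapsed > threshold)
```

Using the median rather than the mean keeps a few already-slow tasks from inflating the threshold; a scheduler would then launch backup copies of the flagged tasks and keep whichever copy finishes first.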

In summary, the authors provide a multi‑dimensional view of co‑located workloads: (1) CPU resources are heavily over‑provisioned for long‑running services, while memory is frequently over‑committed; (2) a large proportion of containers remain idle, indicating opportunities for elastic scaling; (3) batch jobs suffer from stragglers and imprecise resource requests; (4) the existing two‑level scheduling framework lacks tight integration, leading to avoidable resource contention. The findings suggest that future cluster management systems should incorporate more accurate usage prediction, support container migration/re‑scheduling, and adopt a unified, co‑location‑aware scheduler to improve overall utilization and performance.
