Rhythm-Flexible Voice Conversion without Parallel Data Using Cycle-GAN over Phoneme Posteriorgram Sequences


Authors: Cheng-chieh Yeh, Po-chun Hsu, Ju-chieh Chou

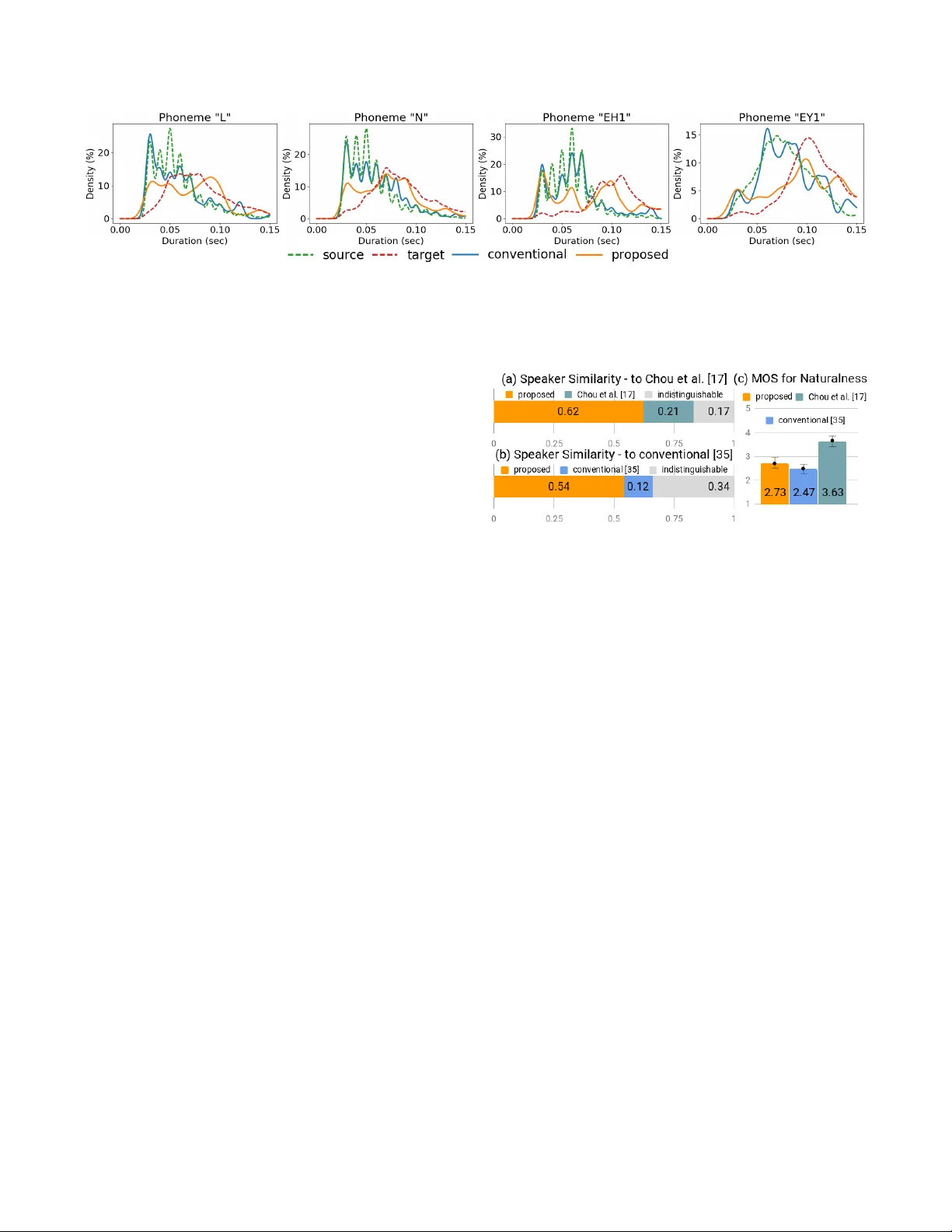

Cheng-chieh Yeh¹, Po-chun Hsu¹, Ju-chieh Chou¹, Hung-yi Lee¹, Lin-shan Lee¹
¹College of Electrical Engineering and Computer Science, National Taiwan University
{r06942067, b03901071, r06922020, hungyilee}@ntu.edu.tw, lslee@gate.sinica.edu.tw

ABSTRACT

Speaking rate refers to the average number of phonemes within some unit time, while rhythmic patterns refer to the duration distributions of the realizations of different phonemes within different phonetic structures. Both are key components of prosody in speech, and both differ from speaker to speaker. Models such as the cycle-consistent adversarial network (Cycle-GAN) and the variational auto-encoder (VAE) have been successfully applied to voice conversion without parallel data. However, because of the neural network architectures and feature vectors chosen in these approaches, the length of the predicted utterance has to equal that of the input utterance, which limits the flexibility in mimicking the speaking rate and rhythmic patterns of the target speaker. On the other hand, sequence-to-sequence learning models have been used to remove this length constraint, but they require parallel training data. In this paper, we propose an approach that uses a sequence-to-sequence model trained with an unsupervised Cycle-GAN to perform the transformation between the phoneme posteriorgram sequences of different speakers. In this way, the length constraint mentioned above is removed, offering rhythm-flexible voice conversion without requiring parallel data. Preliminary evaluation on two datasets showed very encouraging results.

Index Terms: voice conversion, sequence-to-sequence learning, unsupervised learning, cycle-gan

1. INTRODUCTION

Voice conversion (VC) aims to convert speech signals from one acoustic domain to another while keeping the linguistic content unchanged. The acoustic domains considered include not only speaker identity but also many other factors orthogonal to the linguistic content, such as speaking style, speaking rate [1], noise condition, emotion [2, 3] and accent [4]. Potential applications range from speech enhancement [5, 6], computer-assisted pronunciation training for non-native language learners [4] and speaking assistance [7] to speaker identity conversion [8, 9, 10, 11], to name a few.

When speaker identity conversion is considered, in addition to the fact that the same phoneme sounds different when produced by different speakers, it is well known that prosody can also differ greatly between speakers. The prosody of speech includes not only the pitch range but also, at least, the speaking rate and the rhythmic patterns. While the speaking rate refers primarily to the average number of phonemes produced per unit time, the rhythmic pattern refers to the duration distributions of the realizations of different phonemes within different phonetic structures. Speaking rates and rhythmic patterns clearly differ considerably across speakers. When the goal is to mimic the voice characteristics of a specific speaker, it is important to reproduce the target speaker's prosody, including the speaking rate and the rhythmic patterns. This is why flexible speaking rates and rhythmic patterns are highly desirable for voice conversion.

The many approaches proposed for VC can in most cases be classified into two types: text-independent and text-dependent. Text-independent VC directly predicts the target speech signals from the source speech signals without considering the linguistic content or text.
This is usually achieved with acoustic models such as Gaussian mixture models (GMMs) [8, 12] or deep neural networks (DNNs) [13]. Text-dependent VC, on the other hand, converts speech signals through textual information: a speech recognizer estimates the textual information from the source speech, and a speech synthesizer predicts the target speech from that textual information. The conversion units for text-dependent VC are usually coarser (e.g., phonemes, characters or words) than those used in text-independent VC (e.g., frames). Recently proposed approaches that use phoneme posteriorgram vectors as the conversion unit [14, 15] may be considered a compromise between the two, because the posterior probabilities of all possible phonemes in the source speech signals are estimated, converted and used to generate the target speech signals frame by frame.

Typically, text-independent VC requires parallel data; that is, pairs of utterances of the same sentences produced by the source and target speakers are needed to train the conversion model. Recently, however, deep-learning-based methods using only non-parallel data have been proposed [16, 17, 18]. In these approaches, because of limitations of the conversion models or the acoustic features used, the utterance length must be kept the same before and after conversion, so reproducing the speaking rate and rhythmic patterns of the target speaker is simply impossible. Sequence-to-sequence learning performed on phoneme posteriorgram sequences is a possible way to achieve this goal [19], but all such approaches reported so far require parallel data. In this paper, we propose a rhythm-flexible VC approach that produces target speech signals of variable length but is trained with non-parallel data only.
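To make the two prosodic quantities defined above concrete, the sketch below computes a speaking rate and per-phoneme duration lists from a forced alignment, and smooths one duration list into a density curve. It assumes alignments arrive as hypothetical `(phoneme, start_sec, end_sec)` tuples with silences already removed, and it groups durations by phoneme label only, ignoring the surrounding phonetic structure. All function names are illustrative, not taken from the paper.

```python
from collections import defaultdict

import numpy as np


def speaking_rate(segments):
    """Average number of phonemes per second over one utterance.

    `segments` is a time-ordered list of (phoneme, start_sec, end_sec) tuples,
    e.g. from a forced aligner, with silence segments already removed.
    """
    if not segments:
        return 0.0
    total_sec = segments[-1][2] - segments[0][1]
    if total_sec <= 0:
        raise ValueError("utterance must have positive duration")
    return len(segments) / total_sec


def phoneme_durations(segments):
    """Collect the duration of every realization, grouped by phoneme label."""
    durations = defaultdict(list)
    for phone, start, end in segments:
        durations[phone].append(end - start)
    return dict(durations)


def duration_density(durations, grid, bandwidth):
    """Gaussian kernel density estimate of one phoneme's duration distribution.

    `bandwidth` is expressed in the same unit as `durations` (seconds here).
    """
    d = np.asarray(durations, dtype=float)[:, None]  # (N, 1)
    g = np.asarray(grid, dtype=float)[None, :]       # (1, M)
    z = (g - d) / bandwidth
    kernels = np.exp(-0.5 * z**2) / (bandwidth * np.sqrt(2.0 * np.pi))
    return kernels.mean(axis=0)                      # (M,), integrates to ~1


# Usage with a made-up alignment (not data from the paper).
segs = [("HH", 0.00, 0.08), ("AH0", 0.08, 0.14), ("L", 0.14, 0.22),
        ("OW1", 0.22, 0.40), ("L", 0.40, 0.50)]
rate = speaking_rate(segs)              # 5 phonemes / 0.5 s
pattern = phoneme_durations(segs)       # e.g. pattern["L"] holds two durations
grid = np.linspace(0.0, 0.3, 301)
density_L = duration_density(pattern["L"], grid, bandwidth=0.02)
```

Figure 5 later reports a kernel bandwidth of 0.125, but its unit is not stated, so the sketch keeps `bandwidth` as an explicit parameter instead of hard-coding that value.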
Below, in subsection 2.1, we first introduce related work on text-independent VC using deep learning trained with non-parallel data, together with the associated length constraint. In subsection 2.2, we then describe a primarily text-dependent approach that uses a sequence-to-sequence model to transform between source and target speakers over phoneme posteriorgram sequences, which overcame the length constraint but required parallel data. In section 3, we present the approach proposed in this paper, which uses non-parallel data yet overcomes the length constraint to offer rhythm-flexible VC. We describe the model architectures and implementation of the proposed approach in section 4 and show experimental results with evaluations in section 5. Finally, we give some discussion and concluding remarks in section 6.

2. RELATED WORK

2.1. Non-parallel VC using Deep Learning

Recently, deep generative models such as Variational Autoencoders (VAEs) [20] and Generative Adversarial Networks (GANs) [21], including Conditional GANs (CGANs) [22], have been broadly studied because they can be applied to unsupervised learning problems. This is especially attractive for VC because it implies that parallel data may not be needed. With VAEs, the encoder first extracts a latent feature representing the speaker-independent linguistic content, and the decoder is then trained to generate the voice of the target speaker conditioned on the latent feature and some extra information about the target speaker [16, 17, 23]. With CGANs, guided by the discriminator, the conditional generator tries to generate acoustic features that sound as if produced by the target speaker, conditioned on the acoustic features produced by the source speaker.
Among the many extensions of CGANs, the cycle-consistent adversarial network (Cycle-GAN) [24] and Star-GAN [25] have been used very successfully as domain translators between source and target domains, and have been applied to VC [18, 26, 27, 28].

Although the above approaches can perform voice conversion without parallel data, the length of the generated signal is locked to that of the input signal because of the neural network architectures or acoustic features used. For example, some of them used combinations of recurrent neural networks (RNNs) and convolutional neural networks (CNNs) [16, 17, 23] rather than a sequence-to-sequence encoder-decoder architecture. These methods took a single frame or a segment of frames (e.g., 128 frames) as input and generated a single frame or a segment of frames of the same length as output. Other approaches chose Mel-cepstral coefficients (MCEPs), logarithmic fundamental frequency (log F0) and aperiodicities (APs) as features, but performed the conversion on MCEPs only [18, 28]. The converted MCEPs must then have the same length as the original ones so that they stay aligned with the log F0 and AP sequences when the waveform is synthesized. This fixed-length constraint prevents these very attractive deep learning approaches, which do not require parallel data, from being rhythm-flexible and thus from better capturing the prosody of the target speaker.

2.2. Sequence-to-sequence Conversion over Posteriorgram Sequences Trained with Parallel Data

An approach that overcomes the length constraint mentioned above was proposed in [19]: it uses a Recurrent Neural Network (RNN) encoder-decoder for sequence-to-sequence learning [29] to transform phoneme posteriorgram sequences between different speakers.
In this approach, in addition to a speech recognizer that produces the phoneme posteriorgram sequences and a speech synthesizer that reconstructs the signals, a module that transforms the phoneme posteriorgram sequences of the source speaker into those of the target speaker was added in between to perform VC. This transformation module consists of an RNN encoder and an RNN decoder operating frame by frame. Because the RNN decoder can emit an end-of-sequence token at any time step, the length constraint mentioned above is removed and the output speech can have a more flexible rhythm. However, supervised training for sequence-to-sequence learning requires parallel data. This motivates the new approach proposed in this paper, presented below, which offers rhythm-flexible VC with variable length but does not require parallel data.

3. PROPOSED APPROACH

The proposed approach overcomes the length constraint mentioned in subsection 2.1 and removes the need for parallel data mentioned in subsection 2.2 by adopting Cycle-GAN, an unsupervised style-transfer model, to transform phoneme posteriorgram sequences between speakers. The three components of the approach are presented in subsections 3.1, 3.2 and 3.3 and Figure 1 (a)(b)(c), respectively, the Cycle-GAN in subsection 3.4 and Figure 2, and an overview of the whole VC process in Figure 3.
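The length argument of subsections 2.1 and 2.2 can be illustrated with a toy contrast between a frame-by-frame mapping, whose output necessarily has as many frames as its input, and an autoregressive decoding loop that feeds each output back as the next input and stops when the decoder signals end-of-sequence. The `step` callable and `toy_step` below are hypothetical stand-ins, not the networks of [19] or of this paper; the toy decoder simply emits 1.5 times as many frames as the input to show that the stop signal, not the input length, decides the output length.

```python
import numpy as np


def framewise_convert(src, weight):
    """Frame-by-frame mapping: output frame t depends only on input frame t,
    so the output always has exactly T_x frames."""
    return src @ weight


def seq2seq_decode(initial_state, step, max_len=1000):
    """Autoregressive decoding loop.

    `step(prev_frame, state)` returns (frame, new_state, is_eos). The previous
    output is fed back as the next input, and decoding stops at end-of-sequence,
    so the output length is chosen by the decoder rather than fixed to T_x.
    """
    frames, state, prev = [], initial_state, None
    for _ in range(max_len):
        frame, state, is_eos = step(prev, state)
        if is_eos:
            break
        frames.append(frame)
        prev = frame
    return np.array(frames)


def toy_step(prev, state):
    """Hypothetical decoder (ignores `prev`): emits the mean source frame
    until `target_len` frames have been produced, then signals end-of-sequence."""
    t, target_len, mean_frame = state
    if t >= target_len:
        return None, state, True
    return mean_frame, (t + 1, target_len, mean_frame), False


rng = np.random.default_rng(0)
src = rng.random((120, 70))  # T_x = 120 frames of 70-dim posteriorgram-like vectors
same_len = framewise_convert(src, np.eye(70))                  # shape (120, 70)
longer = seq2seq_decode((0, 180, src.mean(axis=0)), toy_step)  # shape (180, 70)
```

Here `initial_state` plays the role of the encoder's final state being handed to the decoder; in a trained model the end-of-sequence decision would come from the network itself.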
[Figure 1 panels: (a) PPR(·), Mel-scale spectrogram, phoneme posteriorgram sequence; (b) PPTS(·), phoneme posteriorgram sequence, log-magnitude spectrogram, Griffin-Lim, speech waveform; (c) UPPT encoder, UPPT decoder, source domain phoneme posteriorgram sequence, target domain phoneme posteriorgram sequence.]

Fig. 1. The three components of the proposed approach. PPR(·) in (a) stands for Phoneme Posteriorgram Recognizer and PPTS(·) in (b) for Phoneme-Posteriorgram-to-Speech Synthesizer. UPPT in (c) stands for Unsupervised Phoneme Posteriorgram Transformer, which includes an encoder and a decoder. Dotted red arrows around the UPPT decoder indicate that the output at the previous time index is used as the input at the next time index. The green arrow indicates that the final state of the encoder is fed to the initial state of the decoder.

3.1. Phoneme Posteriorgram Recognizer

As in Figure 1 (a), let $x = [x_1, x_2, \dots, x_{T_x}]$ and $y = [y_1, y_2, \dots, y_{T_y}]$ be the acoustic feature vector sequences from the source and target speaker domains, $x_t$ and $y_t$ the feature vectors at time index $t$, and $T_x$ and $T_y$ the lengths of $x$ and $y$. In Figure 1 (a), $x$ and $y$ are Mel-scale spectrograms. Also, $l^x = [l^x_1, l^x_2, \dots, l^x_{T_x}]$ and $l^y = [l^y_1, l^y_2, \dots, l^y_{T_y}]$ are the ground-truth phoneme label sequences corresponding to $x$ and $y$, respectively.
The Phoneme Posteriorgram Recognizer PPR(·) is a speaker-independent neural network that estimates the phoneme posterior probabilities frame by frame given an acoustic feature vector sequence. PPR(·) is trained to minimize $\mathcal{L}_{xent}(l^x, \mathrm{PPR}(x))$ and $\mathcal{L}_{xent}(l^y, \mathrm{PPR}(y))$, the cross-entropy between the ground-truth label sequences (a one-hot vector for each time $t$) and the estimated phoneme posteriorgram sequences, for data in both the source and target speaker domains.

3.2. Phoneme-Posteriorgram-to-Speech Synthesizer

As in Figure 1 (b), the Phoneme-Posteriorgram-to-Speech Synthesizers $\mathrm{PPTS}_x(\cdot)$ and $\mathrm{PPTS}_y(\cdot)$ perform the reverse process of PPR(·) for data in the source and target speaker domains, respectively: they are two neural networks that predict the speech feature vectors $\bar{x}$ and $\bar{y}$ frame by frame given the phoneme posteriorgram sequences $\hat{p}_x = \mathrm{PPR}(x)$ and $\hat{p}_y = \mathrm{PPR}(y)$. In Figure 1 (b), $\bar{x}$ and $\bar{y}$ are the log-magnitude versions of $x$ and $y$. The Griffin-Lim algorithm [30] synthesizes the speech waveform from the predicted log-magnitude spectrograms. $\mathrm{PPTS}_x(\cdot)$ and $\mathrm{PPTS}_y(\cdot)$ are trained to minimize the mean squared error between the ground-truth speech feature vectors and their reconstructions, $\mathcal{L}_{mse}(\bar{x}, \mathrm{PPTS}_x(\hat{p}_x))$ and $\mathcal{L}_{mse}(\bar{y}, \mathrm{PPTS}_y(\hat{p}_y))$, respectively.

3.3. Unsupervised Phoneme Posteriorgram Transformer

As shown in Figure 1 (c), the Unsupervised Phoneme Posteriorgram Transformer (UPPT) is an attention-based sequence-to-sequence model consisting of a UPPT encoder and a UPPT decoder. It transforms a source-domain posteriorgram sequence $\hat{p}_x = \mathrm{PPR}(x)$ into another posteriorgram sequence that is very close to those of signals in the target domain, $\hat{p}_y = \mathrm{PPR}(y)$.
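The UPPT is described as attention-based, but the scoring function is not given in this section. The sketch below assumes additive (Bahdanau-style) attention as in [33], which lets every decoder step weigh all encoder frames, however many output frames have already been produced. The shapes and parameter names (`w_dec`, `w_enc`, `v`) are illustrative assumptions.

```python
import numpy as np


def softmax(z):
    z = z - np.max(z)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum()


def additive_attention(dec_state, enc_states, w_dec, w_enc, v):
    """One additive-attention step.

    dec_state:    (H,)     current decoder hidden state
    enc_states:   (T_x, H) encoder outputs for the source posteriorgram sequence
    w_dec, w_enc: (H, A)   projection matrices
    v:            (A,)     scoring vector
    Returns the context vector (H,) and the attention weights (T_x,).
    """
    scores = np.tanh(enc_states @ w_enc + dec_state @ w_dec) @ v  # (T_x,)
    weights = softmax(scores)
    context = weights @ enc_states  # attention-weighted sum of encoder frames
    return context, weights


rng = np.random.default_rng(0)
H, A, T_x = 8, 4, 5
enc = rng.standard_normal((T_x, H))
ctx, attn = additive_attention(rng.standard_normal(H), enc,
                               rng.standard_normal((H, A)),
                               rng.standard_normal((H, A)),
                               rng.standard_normal(A))
```

With all parameters set to zero the scores are equal, so the weights become uniform and the context reduces to the mean encoder frame, which is a convenient sanity check.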
The green arrow indicates that the final state of the encoder is fed to the initial state of the decoder, and the dotted red arrows around the UPPT decoder indicate that the output at the previous time index is used as the input at the next time index. This sequence-to-sequence model is what removes the length constraint and achieves the rhythm-flexible VC mentioned previously.

3.4. Cycle-GAN

Let $X$ and $Y$ be the sets containing all estimated phoneme posteriorgram sequences $\hat{p}_x$ and $\hat{p}_y$ from the source and target speaker domains, respectively. We adopt the cycle-consistent generative adversarial network (Cycle-GAN) to learn the mapping between $X$ and $Y$ without paired data. As shown in Figure 2, the whole training procedure involves two generative adversarial networks (GANs), each with a generator and a discriminator. After Cycle-GAN training, two generators, $G_{X\to Y}$ and $G_{Y\to X}$, are obtained. These two generators are the transformers (UPPTs in subsection 3.3) that transform $\hat{p}_x$ into $\hat{p}_{x\to y} = G_{X\to Y}(\hat{p}_x)$ and $\hat{p}_y$ into $\hat{p}_{y\to x} = G_{Y\to X}(\hat{p}_y)$, respectively, where $\hat{p}_{x\to y}$ is a phoneme posteriorgram sequence mapped from the source domain to the target domain, and $\hat{p}_{y\to x}$ vice versa. Two discriminators, $D_X$ and $D_Y$, are also trained to decide whether a phoneme posteriorgram sequence is a real one obtained from a signal in the corresponding domain or a fake one transformed from the other domain.

3.4.1. Training Goal of Generators (or UPPTs)

The generators $G_{X\to Y}$ and $G_{Y\to X}$ take the phoneme posteriorgram sequences from one speaker domain as input and produce phoneme posteriorgram sequences close to those of the other speaker domain.
Both are built with attention-based sequence-to-sequence models to learn the mapping between $\hat{p}_x \in X$ and $\hat{p}_y \in Y$ such that the distribution of $G_{X\to Y}(\hat{p}_x)$ is as indistinguishable from that of $\hat{p}_y$ as possible, and that of $G_{Y\to X}(\hat{p}_y)$ is as indistinguishable from that of $\hat{p}_x$ as possible. The training of the generators is guided by the discriminators described below to achieve this.

[Figure 2 diagram: $G_{X\to Y}$ and $G_{Y\to X}$ applied in both cycles, with the reconstructions labelled "as close as possible" to the inputs; $D_X$ outputs a scalar (belongs to X or not) and $D_Y$ a scalar (belongs to Y or not).]

Fig. 2. Cycle-GAN. $G_{X\to Y}$ and $G_{Y\to X}$ refer to generators; $D_X$ and $D_Y$ refer to discriminators. Blocks with the same color share the same set of neural network parameters. Each generator is built with a pair of UPPT encoder and UPPT decoder.

3.4.2. Training Goal of Discriminators

The discriminators $D_X$ and $D_Y$ take a phoneme posteriorgram sequence as input and produce a scalar indicating how "real" the input is, i.e., whether it comes from the set $X$ or $Y$ of the domain considered or is actually fake, i.e., transformed from the other domain. These discriminators guide the generators. The training objective of the discriminators is therefore to distinguish between real sequences such as $\hat{p}_x$ and $\hat{p}_y$ and fake sequences such as $G_{Y\to X}(\hat{p}_y)$ and $G_{X\to Y}(\hat{p}_x)$ produced by the generators, giving higher scores to real ones and lower scores to fake ones.

3.4.3. Objective functions

Several objective functions are defined as follows.

1. Adversarial Loss: Adversarial losses [21] are applied to both mapping functions, $G_{X\to Y}$ and $G_{Y\to X}$.
For the mapping function $G_{X\to Y}$ and its discriminator $D_Y$, we express the objective as in (1):

$$\mathcal{L}_{GAN}(G_{X\to Y}, D_Y) = \mathbb{E}_{y\sim Y}\left[\log D_Y(y)\right] + \mathbb{E}_{x\sim X}\left[\log\left(1 - D_Y(G_{X\to Y}(x))\right)\right]. \tag{1}$$

$\mathcal{L}_{GAN}(G_{Y\to X}, D_X)$ is defined in exactly the same way as (1), except that the roles of $X$ and $Y$ are reversed.

2. Cycle Consistency Loss: Cycle consistency losses [24] are applied when training the two generators. The transformation cycle should bring $x$ back to the original phoneme posteriorgram sequence, i.e., $G_{Y\to X}(G_{X\to Y}(x)) \approx x$ and $G_{X\to Y}(G_{Y\to X}(y)) \approx y$. We express this objective as in (2), where $\mathcal{L}_{xent}$ denotes cross-entropy:

$$\mathcal{L}_{cycle}(G_{X\to Y}, G_{Y\to X}) = \mathbb{E}_{x\sim X}\left[\mathcal{L}_{xent}\left(x, G_{Y\to X}(G_{X\to Y}(x))\right)\right] + \mathbb{E}_{y\sim Y}\left[\mathcal{L}_{xent}\left(y, G_{X\to Y}(G_{Y\to X}(y))\right)\right]. \tag{2}$$

3. Identity Mapping Loss: The identity mapping loss proposed in the original Cycle-GAN work [24] is also used here. When real samples of the target domain are provided as input to a generator, the transformed result should be as close to the input as possible. Adding this objective as an extra regularization term for the generators was found to improve the transformed results. We express this objective as in (3):

$$\mathcal{L}_{identity}(G_{X\to Y}, G_{Y\to X}) = \mathbb{E}_{x\sim X}\left[\mathcal{L}_{xent}\left(x, G_{Y\to X}(x)\right)\right] + \mathbb{E}_{y\sim Y}\left[\mathcal{L}_{xent}\left(y, G_{X\to Y}(y)\right)\right]. \tag{3}$$

[Figure 3 diagram: Domain X speaker → PPR(·) → $G_{X\to Y}$ → $\mathrm{PPTS}_Y(\cdot)$ → Domain Y speaker; Domain Y speaker → PPR(·) → $G_{Y\to X}$ → $\mathrm{PPTS}_X(\cdot)$ → Domain X speaker.]

Fig. 3. The complete voice conversion process for the proposed approach. Blocks with the same color share the same set of neural network parameters.

The full objective for Cycle-GAN training is the weighted sum of (1), (2) and (3):

$$\mathcal{L}_{cyclegan}(G_{X\to Y}, G_{Y\to X}, D_X, D_Y) = \mathcal{L}_{GAN}(G_{X\to Y}, D_Y) + \mathcal{L}_{GAN}(G_{Y\to X}, D_X) + \lambda_1 \mathcal{L}_{cycle}(G_{X\to Y}, G_{Y\to X}) + \lambda_2 \mathcal{L}_{identity}(G_{X\to Y}, G_{Y\to X}), \tag{4}$$

where $\lambda_1$ and $\lambda_2$ are balancing parameters. Overall, we aim to solve

$$G^*_{X\to Y}, G^*_{Y\to X} = \arg\min_{G_{X\to Y}, G_{Y\to X}} \max_{D_X, D_Y} \mathcal{L}_{cyclegan}. \tag{5}$$

3.5. Overall Voice Conversion

As shown in Figure 3, the overall voice conversion is achieved by first passing the source speaker's speech signal through PPR(·) (subsection 3.1) to obtain its phoneme posteriorgram sequence, then using the UPPT (subsection 3.3) to transform it into the target domain, and finally using PPTS(·) (subsection 3.2) trained on the target domain to synthesize the target speech signal from the transformed phoneme posteriorgram sequence.

4. MODEL IMPLEMENTATION AND TRAINING

We primarily adopted the architecture of the CBHG module [31] for all three parts of the proposed approach: the phoneme posteriorgram recognizer PPR(·), the phoneme-posteriorgram-to-speech synthesizer PPTS(·) and the unsupervised phoneme posteriorgram transformer UPPT (i.e., the generators). The convolution bank in the CBHG module has been found to better capture local information over time, reduce overfitting and generalize well to long and complex inputs such as acoustic feature sequences [32]. As previously suggested [18], for the discriminators we treated the input phoneme posteriorgram sequences as images with a single channel and applied several 1D-convolution layers with strides larger than one, to better capture local properties such as how many frames a speaker usually needs to produce a specific phoneme. The attention mechanism in UPPT has been shown to effectively improve the decoder's prediction [33].
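To make objectives (1) to (4) of subsection 3.4.3 concrete, the sketch below evaluates $\mathcal{L}_{cyclegan}$ for a single source sequence and a single target sequence, with each expectation replaced by that one sample. Generators and discriminators are passed in as plain callables. Their implementation, the λ values in the usage line, and the choice to compare only overlapping frames when a reconstruction's length differs from its input are all assumptions of this sketch.

```python
import numpy as np

EPS = 1e-8  # keeps log() finite


def xent(target, pred):
    """Frame-averaged cross-entropy between posteriorgram sequences of shape (T, K).

    Assumption: if the two lengths differ, only the overlapping frames are compared.
    """
    t = min(len(target), len(pred))
    return float(-np.mean(np.sum(target[:t] * np.log(pred[:t] + EPS), axis=-1)))


def gan_term(d_real, d_fake):
    """Single-sample version of Eq. (1): log D(real) + log(1 - D(fake))."""
    return float(np.log(d_real + EPS) + np.log(1.0 - d_fake + EPS))


def cyclegan_objective(p_x, p_y, g_xy, g_yx, d_x, d_y, lam1, lam2):
    """Value of Eq. (4): discriminators maximise it, generators minimise it (Eq. (5))."""
    adversarial = (gan_term(d_y(p_y), d_y(g_xy(p_x)))    # L_GAN(G_XY, D_Y)
                   + gan_term(d_x(p_x), d_x(g_yx(p_y))))  # L_GAN(G_YX, D_X)
    cycle = xent(p_x, g_yx(g_xy(p_x))) + xent(p_y, g_xy(g_yx(p_y)))  # Eq. (2)
    identity = xent(p_x, g_yx(p_x)) + xent(p_y, g_xy(p_y))           # Eq. (3)
    return adversarial + lam1 * cycle + lam2 * identity


def identity_gen(p):
    """Hypothetical generator that returns its input unchanged."""
    return p


def undecided_disc(p):
    """Hypothetical discriminator that always outputs probability 0.5."""
    return 0.5


p_x = np.eye(4)[[0, 1, 1, 2, 3]]     # 5 one-hot frames over 4 phoneme classes
p_y = np.eye(4)[[0, 0, 1, 2, 2, 3]]  # 6 frames: the two lengths may differ
value = cyclegan_objective(p_x, p_y, identity_gen, identity_gen,
                           undecided_disc, undecided_disc, lam1=10.0, lam2=5.0)
```

With identity generators and one-hot inputs the cycle and identity terms vanish, and with undecided discriminators each adversarial term equals $2\log 0.5$, so `value` is close to $4\log 0.5$.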
The overall model architecture and training details are available at https://github.com/acetylSv/rhythmic-flexible-vc-arch but are omitted here due to space limitations. We treated PPR(·) as a pseudo-labeler. We first trained PPR(·) with the objective described in subsection 3.1 and then trained PPTS(·) with the objective described in subsection 3.2. With these done, we collected the outputs of PPR(·) to train the UPPT. For PPR(·), we used Mel-scale spectrograms as the input acoustic features, and the phoneme set defined in the Carnegie Mellon Pronouncing Dictionary [34] as the labels for the posteriorgram sequences. The inputs to UPPT were thus sequences of 70-dimensional vectors (39 phoneme types with stress combinations, each treated as a mono-phoneme). For PPTS(·), we used log-magnitude spectrograms as the output acoustic features, to which the Griffin-Lim algorithm [30] was applied to synthesize the waveform. All other settings followed previous work [32].

5. EXPERIMENTS AND RESULTS

5.1. Experimental setup

We used two datasets under a fully non-parallel setting. One is Librispeech [36], a corpus of audiobooks read by multiple speakers. The other is VCTK [37], a multi-speaker dataset in which speakers mainly read newspaper sentences and elicitation paragraphs intended to identify the speaker's accent. Both datasets were randomly split into training, validation and testing sets of 80%, 10% and 10%, respectively. Phone boundaries were available in neither dataset, so we used a force-aligner pretrained on the Librispeech dataset [38] to obtain the phone boundaries and corresponding phone classes for training PPR(·).

[Figure 4 panels: speakers A and C with conversions A → C and C → A; speakers B and D with conversions B → D and D → B; each conversion shown for (i) and (ii); legend: fast, slow, conventional, proposed.]

Fig. 4. Average speaking rates (number of syllables/sec) for utterances in the testing set before and after conversion. The dots and bars indicate the averages and standard deviations. Speakers A and B belonged to the fastest speaking group and C and D to the slowest speaking group; (i) was achieved by the conventional method [35] and (ii) by the proposed approach. The numbers shown are the averages.

5.1.1. Librispeech Dataset

Using the Praat script Syllable Nuclei [39] to measure the speaking rate, we picked the 20 fastest and the 20 slowest speakers to form a subset with a total length of 15.8 hours (4609 utterances) for evaluating conversion across different speaking rates. When training the three components in Figure 1, we used all 40 speakers to train the speaker-independent PPR(·), used the 20 fastest and the 20 slowest speakers as two domains to initialize the Cycle-GAN training of the UPPT (followed by individual training for each conversion pair), and individually trained a speaker-dependent PPTS(·) for each speaker.

5.1.2. VCTK Dataset

We chose 18 speakers, some native and some non-native, with a total of 7.3 hours (7132 utterances), as a different scenario for rhythmic patterns. We used all 18 speakers to train the speaker-independent PPR(·), but trained the UPPT and PPTS(·) individually for each conversion pair.

5.2. Objective Evaluation

To show that the proposed approach is able to learn the speaking rates of the target speakers, we chose two speakers A and B (IDs 6925 and 460) from the fastest speaking group of Librispeech and two speakers C and D (IDs 163 and 1363) from the slowest speaking group, and performed the conversions A ↔ C and B ↔ D on the utterances in their testing sets using a conventional method [35] and the proposed approach. This serves as an ablation study, since the only difference between the two is whether the UPPT proposed here was used. The results are plotted in Figure 4, where the averages and standard deviations of the speaking rates are shown for the two approaches. Figure 4 shows that the proposed approach mimicked the speaking rates of the target speakers much better.

Fig. 5. Example rhythmic patterns (duration distributions) for phonemes "L", "N", "EH1" and "EY1". The histograms were normalized by Gaussian kernel density estimation (bandwidth = 0.125). Different colors represent the rhythmic patterns of different speakers (green and red) and of converted voice (blue and brown).

To show that the proposed approach is capable of learning the rhythmic patterns (phoneme duration distributions) of the target speaker, we chose a pair of speakers E and F (IDs p231 and p265) from VCTK and performed the conversion E → F on their testing utterances. We used the pretrained force-aligner to obtain the phoneme durations and normalized the histograms by Gaussian kernel density estimation. Example rhythmic patterns for two vowels and two consonants are plotted in Figure 5, in which the colors represent the source and target speakers (green and red) and the voice converted by the conventional [35] (blue) and proposed (brown) approaches. Figure 5 shows that different speakers did exhibit very different rhythmic patterns, and that the proposed approach mimicked these patterns of the target speaker much better.

5.3. Subjective Evaluation

Subjective evaluation was performed on converted voice (including both intra-gender and inter-gender conversions) from the VCTK dataset. In the binary preference test for speaker similarity, 20 subjects were given pairs of converted utterances in random order and asked to choose the one sounding more similar to a reference utterance produced by the target speaker, comparing the proposed approach with a recently proposed non-parallel VC method by Chou et al. [17] and with the conventional method [35]. The results are in Figure 6 (a)(b).
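A binary preference result is usually summarized as the fraction of trials in which listeners chose one system. The sketch below adds a normal-approximation (Wald) 95% confidence interval. The interval method and the vote counts in the usage line are illustrative assumptions, not the procedure or numbers behind Figure 6.

```python
import math


def preference_score(votes_a, votes_b, z=1.96):
    """Preference rate for system A over B with a Wald confidence interval.

    votes_a, votes_b: number of trials in which A (resp. B) was chosen.
    z: standard-normal quantile; 1.96 gives an approximate 95% interval.
    """
    n = votes_a + votes_b
    if n == 0:
        raise ValueError("no votes")
    p = votes_a / n
    half_width = z * math.sqrt(p * (1.0 - p) / n)
    return p, (max(0.0, p - half_width), min(1.0, p + half_width))


# Hypothetical counts: system A chosen in 130 of 200 trials.
rate, (low, high) = preference_score(130, 70)
```

If the interval lies entirely above 0.5, the preference for system A is unlikely to be due to chance under this approximation; for small trial counts an exact binomial interval would be more appropriate.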
The results show that the proposed approach clearly outperformed the two previous approaches in terms of speaker similarity. The naturalness MOS in Figure 6 (c) shows that the proposed approach is better than the conventional method [35], although not as good as the recently proposed non-parallel VC [17], most likely because of the mean squared error (MSE) objective used in training PPTS(·) in subsection 3.2. Models trained with an MSE objective have been found to output averaged predictions [40], which may lead to over-smoothed log-magnitude spectrograms and blurred sounds after the Griffin-Lim algorithm. Investigations into replacing the Griffin-Lim vocoder with a neural vocoder [41] or applying post-filters to enhance the output log-magnitude spectrograms are in progress. Another possible direction is to apply the sequence-to-sequence Cycle-GAN directly to log-magnitude spectrograms rather than to phoneme posteriorgram sequences, at the cost of a higher feature dimension and a more complex model structure.

Fig. 6. Subjective evaluation results: binary preference test for speaker similarity compared to (a) the recently proposed non-parallel VC with length constraint (Chou et al. [17]) and (b) the conventional method [35] (ablation study); and (c) 5-scale naturalness MOS scores compared similarly.

6. CONCLUSION

Objective and subjective evaluations on two different datasets showed that the proposed approach is able to mimic the voice characteristics of a target speaker, including the speaking rate and rhythmic patterns, without parallel data, by using sequence-to-sequence learning trained with Cycle-GAN to remove the length constraint. Although phoneme boundaries are needed for the training data, an easily obtained pretrained force-aligner can provide them.

7. REFERENCES

[1] Dimitrios Rentzos, S Vaseghi, E Turajlic, Qin Yan, and Ching-Hsiang Ho, "Transformation of speaker characteristics for voice conversion," in Automatic Speech Recognition and Understanding, 2003. ASRU'03. 2003 IEEE Workshop on. IEEE, 2003, pp. 706–711.
[2] Ryo Aihara, Ryoichi Takashima, Tetsuya Takiguchi, and Yasuo Ariki, "GMM-based emotional voice conversion using spectrum and prosody features," American Journal of Signal Processing, vol. 2, no. 5, pp. 134–138, 2012.
[3] Hiromichi Kawanami, Yohei Iwami, Tomoki Toda, Hiroshi Saruwatari, and Kiyohiro Shikano, "GMM-based voice conversion applied to emotional speech synthesis," in Eighth European Conference on Speech Communication and Technology, 2003.
[4] Keisuke Oyamada, Hirokazu Kameoka, Takuhiro Kaneko, Hiroyasu Ando, Kaoru Hiramatsu, and Kunio Kashino, "Non-native speech conversion with consistency-aware recursive network and generative adversarial network," in Proceedings of APSIPA Annual Summit and Conference, 2017, vol. 2017, pp. 12–15.
[5] Alexander B Kain, John-Paul Hosom, Xiaochuan Niu, Jan PH van Santen, Melanie Fried-Oken, and Janice Staehely, "Improving the intelligibility of dysarthric speech," Speech Communication, vol. 49, no. 9, pp. 743–759, 2007.
[6] Frank Rudzicz, "Acoustic transformations to improve the intelligibility of dysarthric speech," in Proceedings of the Second Workshop on Speech and Language Processing for Assistive Technologies. Association for Computational Linguistics, 2011, pp. 11–21.
[7] Keigo Nakamura, Tomoki Toda, Hiroshi Saruwatari, and Kiyohiro Shikano, "Speaking-aid systems using GMM-based voice conversion for electrolaryngeal speech," Speech Communication, vol. 54, no. 1, pp. 134–146, 2012.
[8] Yannis Stylianou, Olivier Cappé, and Eric Moulines, "Continuous probabilistic transform for voice conversion," IEEE Transactions on Speech and Audio Processing, vol. 6, no. 2, pp. 131–142, 1998.
[9] Alexander Kain and Michael W Macon, "Spectral voice conversion for text-to-speech synthesis," in Acoustics, Speech and Signal Processing, 1998. Proceedings of the 1998 IEEE International Conference on. IEEE, 1998, vol. 1, pp. 285–288.
[10] Daisuke Saito, Keisuke Yamamoto, Nobuaki Minematsu, and Keikichi Hirose, "One-to-many voice conversion based on tensor representation of speaker space," in Twelfth Annual Conference of the International Speech Communication Association, 2011.
[11] Tomi Kinnunen, Lauri Juvela, Paavo Alku, and Junichi Yamagishi, "Non-parallel voice conversion using i-vector PLDA: Towards unifying speaker verification and transformation," in Acoustics, Speech and Signal Processing (ICASSP), 2017 IEEE International Conference on. IEEE, 2017, pp. 5535–5539.
[12] Tomoki Toda, Alan W Black, and Keiichi Tokuda, "Voice conversion based on maximum-likelihood estimation of spectral parameter trajectory," IEEE Transactions on Audio, Speech, and Language Processing, vol. 15, no. 8, pp. 2222–2235, 2007.
[13] Srinivas Desai, E Veera Raghavendra, B Yegnanarayana, Alan W Black, and Kishore Prahallad, "Voice conversion using artificial neural networks," in Acoustics, Speech and Signal Processing, 2009. ICASSP 2009. IEEE International Conference on. IEEE, 2009, pp. 3893–3896.
[14] Lifa Sun, Hao Wang, Shiyin Kang, Kun Li, and Helen M Meng, "Personalized, cross-lingual TTS using phonetic posteriorgrams," in INTERSPEECH, 2016, pp. 322–326.
[15] Feng-Long Xie, Frank K Soong, and Haifeng Li, "A KL divergence and DNN-based approach to voice conversion without parallel training sentences," in INTERSPEECH, 2016, pp. 287–291.
[16] Chin-Cheng Hsu, Hsin-Te Hwang, Yi-Chiao Wu, Yu Tsao, and Hsin-Min Wang, "Voice conversion from unaligned corpora using variational autoencoding Wasserstein generative adversarial networks," arXiv preprint arXiv:1704.00849, 2017.
[17] Ju-chieh Chou, Cheng-chieh Yeh, Hung-yi Lee, and Lin-shan Lee, “Multi-target voice conversion without parallel data by adversarially learning disentangled audio representations,” arXiv preprint arXiv:1804.02812, 2018.
[18] Takuhiro Kaneko and Hirokazu Kameoka, “Parallel-data-free voice conversion using cycle-consistent adversarial networks,” arXiv preprint, 2017.
[19] Hiroyuki Miyoshi, Yuki Saito, Shinnosuke Takamichi, and Hiroshi Saruwatari, “Voice conversion using sequence-to-sequence learning of context posterior probabilities,” arXiv preprint arXiv:1704.02360, 2017.
[20] Diederik P Kingma and Max Welling, “Auto-encoding variational Bayes,” arXiv preprint, 2013.
[21] Ian Goodfellow, Jean Pouget-Abadie, Mehdi Mirza, Bing Xu, David Warde-Farley, Sherjil Ozair, Aaron Courville, and Yoshua Bengio, “Generative adversarial nets,” in Advances in Neural Information Processing Systems, 2014, pp. 2672–2680.
[22] Mehdi Mirza and Simon Osindero, “Conditional generative adversarial nets,” arXiv preprint arXiv:1411.1784, 2014.
[23] Chin-Cheng Hsu, Hsin-Te Hwang, Yi-Chiao Wu, Yu Tsao, and Hsin-Min Wang, “Voice conversion from non-parallel corpora using variational auto-encoder,” in Signal and Information Processing Association Annual Summit and Conference (APSIPA), 2016 Asia-Pacific. IEEE, 2016, pp. 1–6.
[24] Jun-Yan Zhu, Taesung Park, Phillip Isola, and Alexei A Efros, “Unpaired image-to-image translation using cycle-consistent adversarial networks,” arXiv preprint arXiv:1703.10593, 2017.
[25] Yunjey Choi, Minje Choi, Munyoung Kim, Jung-Woo Ha, Sunghun Kim, and Jaegul Choo, “StarGAN: Unified generative adversarial networks for multi-domain image-to-image translation,” arXiv preprint arXiv:1711.09020, 2017.
[26] Yang Gao, Rita Singh, and Bhiksha Raj, “Voice impersonation using generative adversarial networks,” arXiv preprint arXiv:1802.06840, 2018.
[27] Fuming Fang, Junichi Yamagishi, Isao Echizen, and Jaime Lorenzo-Trueba, “High-quality nonparallel voice conversion based on cycle-consistent adversarial network,” arXiv preprint arXiv:1804.00425, 2018.
[28] Hirokazu Kameoka, Takuhiro Kaneko, Kou Tanaka, and Nobukatsu Hojo, “StarGAN-VC: Non-parallel many-to-many voice conversion with star generative adversarial networks,” arXiv preprint arXiv:1806.02169, 2018.
[29] Ilya Sutskever, Oriol Vinyals, and Quoc V Le, “Sequence to sequence learning with neural networks,” in Advances in Neural Information Processing Systems, 2014, pp. 3104–3112.
[30] Daniel Griffin and Jae Lim, “Signal estimation from modified short-time Fourier transform,” IEEE Transactions on Acoustics, Speech, and Signal Processing, vol. 32, no. 2, pp. 236–243, 1984.
[31] Jason Lee, Kyunghyun Cho, and Thomas Hofmann, “Fully character-level neural machine translation without explicit segmentation,” arXiv preprint arXiv:1610.03017, 2016.
[32] Yuxuan Wang, RJ Skerry-Ryan, Daisy Stanton, Yonghui Wu, Ron J Weiss, Navdeep Jaitly, Zongheng Yang, Ying Xiao, Zhifeng Chen, Samy Bengio, et al., “Tacotron: Towards end-to-end speech synthesis,” arXiv preprint arXiv:1703.10135, 2017.
[33] Dzmitry Bahdanau, Kyunghyun Cho, and Yoshua Bengio, “Neural machine translation by jointly learning to align and translate,” arXiv preprint arXiv:1409.0473, 2014.
[34] Robert Weide, “The Carnegie Mellon pronouncing dictionary [cmudict. 0.6],” 2005.
[35] Lifa Sun, Kun Li, Hao Wang, Shiyin Kang, and Helen Meng, “Phonetic posteriorgrams for many-to-one voice conversion without parallel data training,” in Multimedia and Expo (ICME), 2016 IEEE International Conference on. IEEE, 2016, pp. 1–6.
[36] Vassil Panayotov, Guoguo Chen, Daniel Povey, and Sanjeev Khudanpur, “LibriSpeech: An ASR corpus based on public domain audio books,” in Acoustics, Speech and Signal Processing (ICASSP), 2015 IEEE International Conference on. IEEE, 2015, pp. 5206–5210.
[37] Christophe Veaux, Junichi Yamagishi, Kirsten MacDonald, et al., “CSTR VCTK corpus: English multi-speaker corpus for CSTR voice cloning toolkit,” 2017.
[38] Michael McAuliffe, Michaela Socolof, Sarah Mihuc, Michael Wagner, and Morgan Sonderegger, “Montreal Forced Aligner: Trainable text-speech alignment using Kaldi,” in Proceedings of Interspeech, 2017.
[39] Nivja H De Jong and Ton Wempe, “Praat script to detect syllable nuclei and measure speech rate automatically,” Behavior Research Methods, vol. 41, no. 2, pp. 385–390, 2009.
[40] Takuhiro Kaneko, Hirokazu Kameoka, Nobukatsu Hojo, Yusuke Ijima, Kaoru Hiramatsu, and Kunio Kashino, “Generative adversarial network-based postfilter for statistical parametric speech synthesis,” in Acoustics, Speech and Signal Processing (ICASSP), 2017 IEEE International Conference on. IEEE, 2017, pp. 4910–4914.
[41] Aäron Van Den Oord, Sander Dieleman, Heiga Zen, Karen Simonyan, Oriol Vinyals, Alex Graves, Nal Kalchbrenner, Andrew Senior, and Koray Kavukcuoglu, “WaveNet: A generative model for raw audio,” CoRR abs/1609.03499, 2016.