Wasserstein GAN and Waveform Loss-based Acoustic Model Training for Multi-speaker Text-to-Speech Synthesis Systems Using a WaveNet Vocoder

Recent neural networks such as WaveNet and sampleRNN that learn directly from speech waveform samples have achieved very high-quality synthetic speech in terms of both naturalness and speaker similarity even in multi-speaker text-to-speech synthesis …

Authors: Yi Zhao, Shinji Takaki, Hieu-Thi Luong

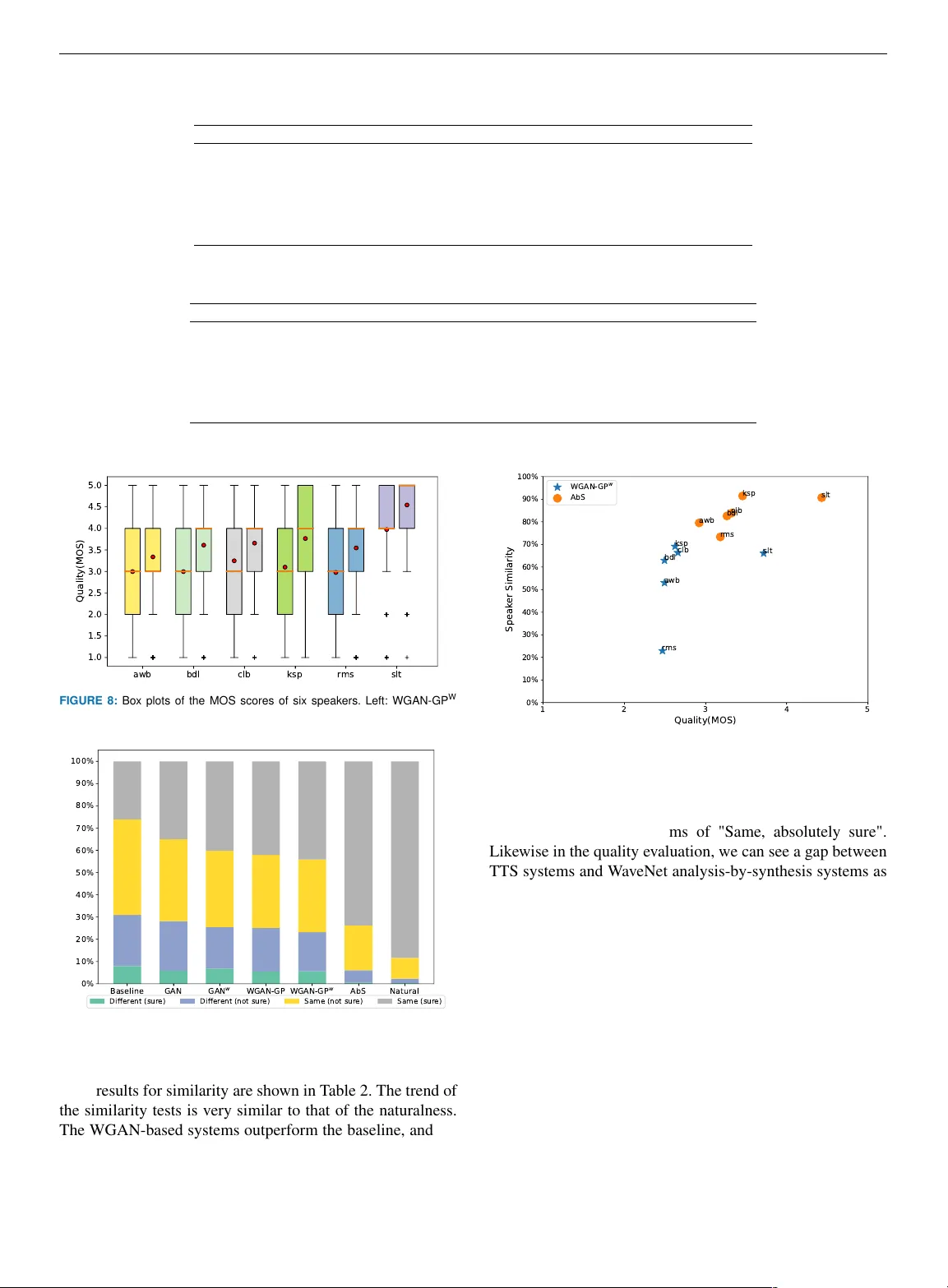

PREPRINT . WORK IN PR OGRESS. Digital Object Identifier 10.1109/DOI W asser stein GAN and W avef orm Loss-based Acoustic Model T raining f or Multi-speaker T e xt-to-Speec h Synthesis Systems Using a W a veNet V ocoder YI ZHA O 1 , (Student Member , IEEE), SHINJI T AKAKI 2 , (Member , IEEE), HIEU-THI LUONG 2 ,(Student Member , IEEE), JUNICHI Y AMA GISHI 2,3 ,(Senior Member , IEEE), D AISUKE SAIT O 1 ,(Member , IEEE), NOBU AKI MINEMA TSU 1 ,(Member , IEEE) 1 Department of Electrical Engineering and Information Systems, Graduate School of Engineering, The Univ ersity of T ok yo, 7-3-1 Hongo, Bunkyo-ku, T okyo 113-8656, Japan (e-mail: zhaoyi@gav o.t.u-tokyo.ac.jp, dsk_saito@ga vo.t.u-tok yo.ac.jp, mine@gav o.t.u-tokyo.ac.jp) 2 Digital Content and Media Sciences Research Division, National Institute of Informatics, 2-1-2 Hitotsubashi, Chiyoda-ku, T okyo 101-8430, Japan (e-mail: takaki@nii.ac.jp, luonghieuthi@nii.ac.jp, jyamagis@nii.ac.jp) 3 The Centre for Speech T echnology Research, Uni versity of Edinb urgh, 10 Crichton Street, EDINBURGH, EH89AB, United Kingdom Corresponding author: Y i Zhao(e-mail: zhaoyi@g av o.t.u-tokyo.ac.jp). This work was partially supported by MEXT KAKENHI Grant Numbers 16H06302, 17H04687, 18H04120, and 18H04112. The authors thank Mr . Lauri Juv ela from Aalto Univ ersity , Finland for his suggestions on WGAN-GP . ABSTRA CT Recent neural networks such as W av eNet and sampleRNN that learn directly from speech wa veform samples have achie ved very high-quality synthetic speech in terms of both naturalness and speaker similarity even in multi-speaker text-to-speech synthesis systems. Such neural networks are being used as an alternati ve to vocoders and hence they are often called neural v ocoders. The neural v ocoder uses acoustic features as local condition parameters, and these parameters need to be accurately predicted by another acoustic model. Howe ver , it is not yet clear how to train this acoustic model, which is problematic because the final quality of synthetic speech is significantly affected by the performance of the acoustic model. Significant degradation happens, especially when predicted acoustic features ha ve mismatched characteristics compared to natural ones. In order to reduce the mismatched characteristics between natural and generated acoustic features, we propose frameworks that incorporate either a conditional generati ve adversarial network (GAN) or its v ariant, W asserstein GAN with gradient penalty (WGAN-GP), into multi- speaker speech synthesis that uses the W av eNet vocoder . W e also extend the GAN frame works and use the discretized mixture logistic loss of a well-trained W aveNet in addition to mean squared error and adversarial losses as parts of objectiv e functions. Experimental results sho w that acoustic models trained using the WGAN-GP frame work using back-propagated discretized-mixture-of-logistics (DML) loss achiev es the highest subjectiv e e v aluation scores in terms of both quality and speaker similarity . INDEX TERMS generativ e adv ersarial network, multi-speaker modeling, speech synthesis, W aveNet I. INTR ODUCTION In recent years, text-to-speech (TTS) synthesis has gained popularity as an artificial intelligence technique and is widely used in many applications with speech interfaces. There are currently two major categories in the machine learning- based speech synthesis field: a) an end-to-end approach that learns the relationship between text and speech directly and b) the con ventional pipeline processing approach that divides text-to-speech con version into sub tasks such as linguistic feature extraction and acoustic feature extraction. In the latter approach, an acoustic model is trained to learn the relationship between separately extracted linguistic and acoustic features [1]. Previously in vestigated acoustic models include the hidden Markov model (HMM) [2], the deep neural network (DNN) [3], and the recurrent neural net- work (RNN) [4] [5]. These are normally trained with the minimum mean squared error (MSE) criterion, and hence, the generated acoustic parameters tend to be ov er-smoothed VOLUME 7, 2018 1 Yi Zhao et al. : W asserstein GAN and Wa vef or m Loss-based Acoustic Model T raining f or Multi-speaker TTS Systems regardless of the architectures. Finally , speech wav eforms hav e been reconstructed using a deterministic vocoder based on the acoustic parameters [6] [7] [8]. Ho wev er , the generated signals ha ve artifacts and typically sound buzzy . Due to these two major issues, the resultant quality of generated speech sounds obviously worse compared with natural speech. V ery recently , we see emerging solutions for the two issues. T o alleviate the over -smoothing problem, Saito et al. hav e incorporated adversarial training into acoustic mod- eling [9] [10]. The generative adversarial network (GAN) contains a generator as well as a discriminator [11], where the generator aims at decei ving the discriminator and the discriminator is trained to distinguish the natural and gen- erated feature samples. In the frame work proposed by [10], the generator acts as an acoustic model and is optimized by not only the conv entional MSE but also an adversarial loss computed using the discriminator . Experimental results sho w that GAN can effecti vely alleviate the ov er-smoothing effect of the generated speech parameters. T o av oid the artif acts and deterioration caused by deter- ministic vocoders, W aveNet, which directly models the raw wa veform of the audio signal in a non-linear auto-regressi ve way , has been proposed and dramatically improv es the qual- ity of synthetic speech [12] [13]. The original W av eNet model [12] used linguistic features as well as the fundamental frequency (F0) as local conditions. Later , the W av eNet model was used as an alternati ve to the deterministic vocoders in many studies [14] [15] by conditioning it on acoustic features such as cepstrum, F0, or spectrograms only [14], and results hav e shown that the sound quality of the W av eNet vocoder outperformed deterministic vocoders and phase recovery al- gorithms [16]. Howe ver , it is also reported that the samples generated from W aveNet occasionally become unstable and generate collapsed speech, especially when less accurately predicted acoustic features are used as the local condition parame- ters [17]. This would be more critical for the case of multi- speaker acoustic modeling where the same network is used for modeling multiple speak ers at the same time, as the prediction accuracy of the multi-speaker model would be worse than well-trained speaker -dependent models. In this paper, we propose frame works that incorporate either the conditional GAN [18] or its v ariant, W asserstein GAN with gradient penalty (WGAN-GP) [19], into RNN- based speech synthesis systems using the W av eNet vocoder for the purpose of reducing the mismatched characteristics between natural and generated acoustic features and for making the outputs of the W aveNet vocoder better and more stable. W e ev aluate the proposed framew orks using a multi- speaker modeling task. The generator of GAN is conditioned on both linguistic features and speaker code, and the discrim- inator aiming at distinguishing the real and predicted mel- spectrograms is also conditioned on speaker information. The W av eNet vocoder is conditioned on both mel-spectrogram and speaker codes, as well. In addition, we extend the GAN frame works and define a ne w objectiv e function using the weighted sum of three kinds of losses: con ventional MSE loss, adversarial loss, and discretized mixture logistic loss [20] obtained through the well-trained W aveNet vocoder . Since the third loss will let neural networks consider losses not only in the acoustic feature domain (such as mel-spectrogram) but also in the final wa veform, we hypothesize that it will improv e the quality of synthetic speech. In our experiment, simple recurrent units (SR Us) [21] are utilized as basic components since the y can be trained faster than the LSTM-based RNN architecture while maintaining a performance as good as or e ven better than LSTM-RNN. In Section 2 of this paper, we briefly revie w pre viously proposed DNN-based multi-speaker speech synthesis, as we ev aluate our proposed method in a popular multi-speaker modeling task. In Section III, we present the proposed framew ork for multi-speaker speech synthesis. Section IV describes the basic elements of the structure of the proposed model including SR U, GAN, and W av eNet, and the details of the training algorithms are giv en in Section V. Section VI describes experimental conditions and Section VII discusses the results. W e conclude in Section VIII with a brief summary and mention of future work. II. DNN-B ASED MUL TI-SPEAKER SPEECH SYNTHESIS Although deep learning-based methods have significantly advanced the performance of statistical parametric speech synthesis (SPSS), it still suffers from the necessity of a large amount of speech recordings of one speaker to train a high- quality acoustic model. Ideally , a speech synthesis system should be able to generate an arbitrary speaker’ s v oice with a minimum of training data. Multi-speaker speech synthesis is one of the most effecti ve approaches to train such a high- quality acoustic model with a limited amount of speech data of each speak er . Using multiple speakers’ data at the same time, we can improv e the quality of synthesized speech and can also change the speaker characteristics of synthetic speech flexibly . Using DNN-based acoustic models as a basis, Fan et al. [22] proposed multi-speaker speech synthesis using shared speaker-independent layers as well as a speaker- dependent output layer . They showed that the speaker - dependent output layer can be estimated from a target speaker’ s data only and that the shared hidden layers can improv e the quality of synthesized speech of individual speakers. W u et al. [23] suggested using i-vectors for mod- eling multiple speakers and controlling the speaker identity of synthetic speech. Hojo et al. [24] proposed using speaker codes based on a one-hot vector for modeling multiple speakers and extending the code and associated weights at an input layer for adapting it to unseen speakers. Luong et al. [25] proposed estimating code vectors for new speak- ers via back-propagation and experimented with manually manipulating input code vectors to alter the gender and/or age characteristics of the synthesized speech. Similar work has been extended to LSTM-based acoustic models. Zhao 2 VOLUME 7, 2018 Yi Zhao et al. : W asserstein GAN and Wa vef or m Loss-based Acoustic Model T raining f or Multi-speaker TTS Systems Linguistic features Speaker codes Generator Discriminator WaveNet vocoder Waveform samples Time alignment Embedding layers Predicted mel spectrogram Natural mel spectrogram 1/0 FIGURE 1: Proposed GAN-trained multi-speaker speech synthesis framework using a W av eNet vocoder . et al. [26] examined various speaker identity representations for multi-speaker synthesis and showed that multi-speaker systems trained with less of the target speaker’ s data can ev en outperform single speaker speech synthesis, which uses a larger amount of the target speaker’ s data. Li et al. [27] in vestigated multi-speaker modeling with speech data in dif- ferent languages. Multi-speaker speech synthesis has also been inv estigated in the recent W av eNet-based approaches and in end-to-end approaches. Hayashi [28] attempted W av eNet vocoder -based multi-speaker synthesis using four speakers from the CMU arctic corpus [29]. V oiceLoop [30] in volves the data of 109 speakers for acoustic model training, and Deep V oice 3 [31] trained a multi-speaker model using over 2,000 speakers. W ang et al. [32] proposed a bank of style embedding vectors and used it for modeling multiple TED speakers. As we can see, v ery acti ve research on multi-speaker modeling has been carried out. III. MUL TI-SPEAKER SPEECH SYNTHESIS INCORPORA TING GAN AND W A VENET V OCODER In this section, we introduce the proposed speech synthesis framew ork for multi-speaker modeling. In the con ventional SPSS structure, acoustic models and vocoders usually work independently: the acoustic models are trained without any consideration of the speech v ocoding process, and vice versa. It was the same in the first v ersions of end-to-end structures such as Deep V oice [33], where vocoders were usually designed or trained on natural acous- tic parameters without considering the div ergence between predicted and natural acoustic parameters. This may lead to obvious and unpredictable distortion of the synthesized speech. T o alleviate this problem, T acotron 2 [15] utilized predicted mel-spectrograms to train the W aveNet vocoder instead of natural mel-spectrograms. Experimental results showed that such a strategy may outperform those that use natural parameters and may achiev e a higher ev aluation. In the present work, we try to minimize the acoustic mismatch of predicted and natural parameters by conducting acoustic model training based on GAN, which also considers vocoder loss. The proposed multi-speaker speech synthesis framew ork is shown in Fig. 1. In this frame work, a generator part of GAN is adopted to predict acoustic features from linguistic features, and both the generator and discriminator are conditioned on speaker codes and trained with multiple speakers’ data. Similar to T acotron 2, the mel-spectrogram, a low-dimensional representation of the linear-frequency spec- trogram, which contains both spectral env elop and harmonics information, is selected as the output of the generator and used to bridge the acoustic model and the W aveNet vocoder . Mel-scale acoustic features have overwhelming advantages in terms of emphasizing the details of audio, especially for lower frequencies, since they are more critical to phonetic information and hence to speech intelligibility in general. The input of the discriminator is either natural or generated acoustic feature samples. The discriminator is trained to dis- tinguish natural samples from generated ones. Speaker codes are also attached to both the input and hidden layers of the discriminator in order to make a better distinction between different speakers. The discriminator is used to compute the adversarial (ADV) loss, which is e xpected to alleviate the ov er-smoothing problem. In addition to the adversarial (AD V) loss from the discriminator , the average discretized-mixture-of-logistics (DML) loss of a well-trained W av eNet model is also back- propagated to the generator of GAN. This loss corresponds to distortion between natural and generated wav eform samples. W e hypothesize that this increases the consistency of acoustic features predicted by the acoustic model and utilized in the vocoder since the acoustic model is updated on the basis of gradients directly computed by the pre-trained W aveNet vocoder . In brief, it is expected that the weighted sum of the con- ventional MSE loss, the adv ersarial loss of the discriminator , and the DML from the W av eNet vocoder will improve the ac- curacy of the predicted acoustic parameters and thus enhance synthesized speech quality . What sets this work apart from other related works is that W av eNet is inv olved in the pro- cess of acoustic modeling training. After e xtracting acoustic features from a training corpus, the W aveNet v ocoder is first trained by utilizing natural mel-spectrograms, and then the trained W av eNet model is fixed and referenced for acoustic model optimization. IV . COMPONENTS OF PROPOSED MODEL STRUCTURE In this section, we describe the three major components of the proposed framew ork, namely , the SRU architecture and the GAN and W av eNet models. A. SR U For the sake of modeling accurac y as well as time efficiency , we choose SRU [21] as the basic architecture of the acoustic modeling. The SRU architecture was originally designed to speed up the training process of RNN. By utilizing both skip and highway connections, SR U is capable of outperforming RNN, especially on very deep networks. Compared with other recurrent architectures (e.g., LSTM and GR U), the VOLUME 7, 2018 3 Yi Zhao et al. : W asserstein GAN and Wa vef or m Loss-based Acoustic Model T raining f or Multi-speaker TTS Systems σ σ c t − 1 f t x t r t ! x t c t h t g ! x t = W * x t f t = σ ( W f x t + b f ) r t = σ ( W r x t + b r ) c t = f t ⊙ c t − 1 + ( 1 − f t ) ⊙ ! x t h t = r t ⊙ g ( c t ) + ( 1 − r t ) ⊙ x t W * 1 − 1 − W f W r FIGURE 2: Details of the SR U cell. σ ( · ) and g ( · ) represent sigmoid and ReLU activation functions , respectively . Linguistic ! Feats. Generated ! Speech Params. Generator Natural ! Speech Params. Discriminator 1/0 L MSE L ADV x ˆ y y FIGURE 3: GAN-based training of TTS acoustic model. L ADV indicates adversarial loss and L M S E indicates L2 loss. basic form of SR U includes only a single forget gate f t to alleviate vanishing and exploding gradient problems instead of using many dif ferent gates to control the information flow . In SRU, the forget gate is used to modulate the internal state c t , which is then used to compute the output state h t . Unlike existing RNN architectures that use the previous output state in the recurrence computation, SR U completely drops the connection between the gating computations and the previous states, and this makes SR U computationally efficient and allows us to use parallelization. The complete architecture of SR U is shown in Fig. 2. The reset gate r t is computed similar to the forget gate f t and is used to compute the output state h t , which performs as a combination of the internal state g ( c t ) and the input x t . g ( · ) represents a ReLU acti vation function and σ ( · ) is a sigmoid function. B. GENERA TIVE ADVERSARIAL NETWORK GANs have achiev ed great success in modeling the distri- butions of complex data and the predictions of realistic data in many applications. They hav e also proven beneficial for speaker -dependent speech synthesis [10]. Fig. 3 shows the GAN-based training of acoustic models for TTS systems. The GAN training in volves a pair of net- works: a generator G aims to produce vivid feature samples that decei ve a discriminator D , and the discriminator aims to estimate the probability that a sample y came from the real data set distribution P r rather than a generator distri- bution P g . For speech synthesis from text, the generator is conditioned on linguistic vectors x ∼ P x . The generator and discriminator are trained like a two-player min-max game objectiv e function, as min G max D E y ∼ P r [log D ( y )] + E x ∼ P x [log(1 − D ( G ( x )))] (1) This objective function is not easy to optimize. T o improv e the stability of model training, W asserstein GAN (WGAN), which minimizes a dif ferent distribution di vergence called E ar th − M ov er (EM) or W asserstein-1 distance, has been proposed and achie ved a better performance than original GAN in terms of con ver gence, especially in image process- ing [34]. The optimization criteria for WGAN is equal to min G max D E y ∼ P r [ D ( y )] − E x ∼ P x [ D ( G ( x ))] (2) During the training of WGAN, the updated model parame- ters of discriminator are clipped into a compact space [ − c, c ] to enforce a Lipschitz constraint on D . Howe ver , the weight clipping may lead to either vanishing or exploding gradients if the clipping threshold c is not carefully tuned, and the resulting discriminator may have a pathological value surface ev en when optimization performs smoothly [19]. T o address this problem, Gulrajani et al. [19] proposed penalizing the norm of the gradient deduced from a discriminator with respect to its input. The new objective for WGAN with gradient penalty (WGAN-GP) is shown as follo ws: min G max D E y ∼ P r [ D ( y )] − E x ∼ P x [ D ( G ( x ))] + λ E ˜ y ∼ P ˜ y [( k∇ ˜ y D ( ˜ y ) k 2 − 1) 2 ] (3) where ˜ y represents samples that are linearly interpolated by the real data y and the fake data generated from the generator G ( x ) : ˜ y = y + (1 − ) G ( x ) (4) where is a random number that obeys distrib ution U [0 , 1] . The loss function of the generator is also expanded on the basis of the least square errors of y as: L G ( y , ˆ y ) = L M S E ( y , ˆ y ) + γ D L ADV ( ˆ y ) (5) where L ADV ( ˆ y ) is the adversarial loss and γ D controls the weight of the adversarial loss. When γ D = 0 , the loss function is equiv alent to the con ventional MSE criteria. In original GAN, L ADV ( ˆ y ) equals E [log (1 − D ( G ( x )))] . In WGAN-GP , L ADV ( ˆ y ) can be regarded as − E [ D ( G ( x ))] . C. W A VENET W av eNet is a deep auto-regressiv e and generativ e model that models a joint distribution of sequential data as a product of conditional distributions, as p ( s ) = Y t p ( s t | s

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment