Lead Sheet Generation and Arrangement by Conditional Generative Adversarial Network

Research on automatic music generation has seen great progress due to the development of deep neural networks. However, the generation of multi-instrument music of arbitrary genres still remains a challenge. Existing research either works on lead she…

Authors: Hao-Min Liu, Yi-Hsuan Yang

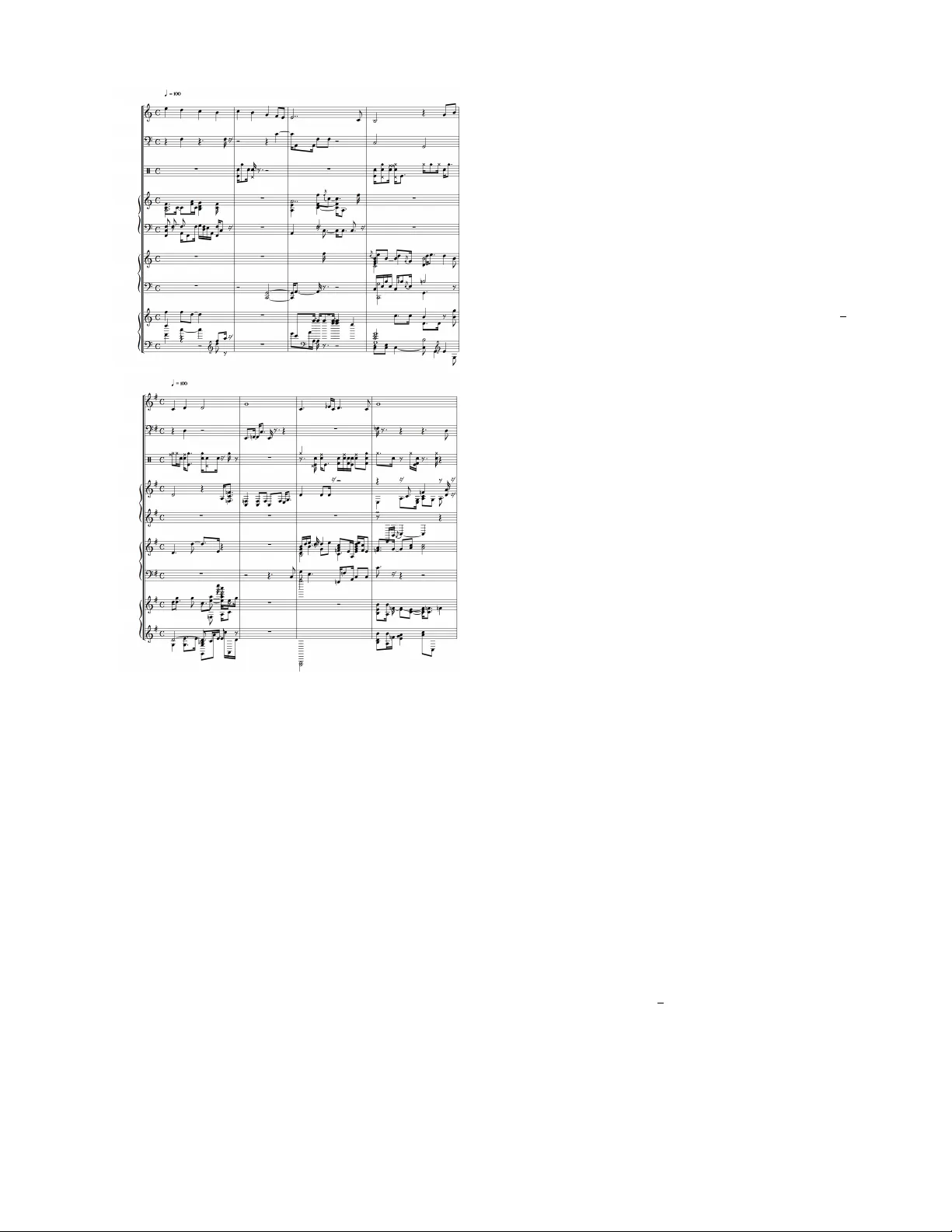

Lead Sheet Generation and Arrangement by Conditional Generati v e Adversarial Netw ork Hao-Min Liu Resear ch Center for IT Inno vation Academia Sinica T aipei, T aiw an paul115236@citi.sinica.edu.tw Y i-Hsuan Y ang Resear ch Center for IT Innovation Academia Sinica T aipei, T aiw an yang@citi.sinica.edu.tw Abstract —Research on automatic music generation has seen great pr ogress due to the de velopment of deep neural networks. Howev er , the generation of multi-instrument music of arbitrary genres still remains a challenge. Existing resear ch either works on lead sheets or multi-track piano-rolls found in MIDIs, but both musical notations hav e their limits. In this work, we propose a new task called lead sheet arrangement to avoid such limits. A new recurrent con volutional generative model for the task is proposed, along with thr ee new symbolic-domain harmonic featur es to facilitate lear ning from unpair ed lead sheets and MIDIs. Our model can generate lead sheets and their arrangements of eight-bar long. A udio samples of the generated result can be f ound at https://drive.google.com/open? id=1c0FfODTpudmLvuKBbc23VBCgQizY6- Rk Index T erms —Lead sheet arrangement, multi-track polyph onic music generation, conditional generative adversarial network I . I N T RO D U C T I O N Automatic music generation by machine (or A.I.) has re- gained great academic and public attention in recent years, due largely to the dev elopment of deep neural networks [1]. Although this is not alw ays made explicitly , researchers often assume that machine can learn the rules people use to compose music, or ev en create new rules, giv en a sufficient amount of training data (i.e. existing music) and a proper neural network architecture. Thanks to cumulati ve ef forts in the research community , deep neural networks hav e been sho wn successful in generating monophonic melodies [2], [3] or polyphonic music of certain musical genres such as the Bach Chorales [4], [5] and piano solo [6], [7]. One of the major remaining challenges is that of generating polyphonic music of arbitrary genres. Existing work can be divided into two groups, depending on the target form of musical notation. The first group of research aims at creating the lead sheet [8]–[11], which is composed of a melody line and a sequence of accompanying chord labels (marked above the staff, see Fig. 1(a) for an example). A lead sheet can be viewed as a middle product of the music. In this form, the melody is clearly specified, but the accompaniment part is made up of only the chord labels. It does not describe the chord voicings, voice leading, bass line or other aspects of the accompaniment [12]. The way to play the chords (e.g. to play all the notes of a chord at the same time, or one at a time) Am a zin g G r a c e C o m pin g = 7 13 Amazing Grace Lead Sheet = G G 7 C G Em C D 9 D 7 G G 7 C G Em C D G (a) (b) Amazing Grace Compin g = 7 13 Am a zin g G r a c e L e a d S h e e t = G G 7 C G Em C D 9 D 7 G G 7 C G Em C D G (b) Fig. 1. (a) A lead sheet of Amazing Grace and (b) its arrangement version. is left to the interpretation of the performer . Accordingly , the rhythmic aspect of chords is missing. The second group of research aims at creating music of the MIDI format [13]–[15], which indicates all the voicing and accompaniment of different instruments. A MIDI can be represented by a number of piano-rolls (which can be seen as matrices) indicating the acti ve notes per time step per instrument [13], [16]. For example, the MIDI for a song of a four-instrument rock band would ha ve four piano-rolls, one for each instrument: lead guitar , rhythm guitar, bass, and drums. A MIDI contains detailed information regarding what to be played by each instrument. But, the major problem is that a MIDI typically does not specify which instrument plays the melody and which plays the chord. An interesting and yet rarely-studied topic is the generation of something in the middle of the aforementioned two forms. Fig. 2. Architecture of the proposed recurrent con volutional generativ e adversarial network model for lead sheet generation and arrangement. W e call it lead sheet arrang ement . Arranging is the art of giving an e xisting melody musical variety [17]. It can be understood as the process to accompan y a melody line or solo with other instruments based on the chord labels on the lead sheet [12]. For e xample, Fig. 1(b) shows an arrangement of the lead sheet of the popular gospel song, Amazing Grace . W e see that it sho ws ho w the chords are to be played. Arrangement can be seen across dif ferent genres. In classical music, arrangement is used in basic type such as sonata, string quartet and also more complex type as symphony . In Jazz, arrangement is often used to accompany the solo instrument. Pop music nowadays also have lots of arrangement setting to increase the diversity and richness of the music. Computationally , we define lead sheet arrangement as the process that takes as input a lead sheet and generates as output piano-rolls of a number of instruments to accompany the melody of the gi ven lead sheet. In other words, the lead sheet is treated as a “condition” in generating the piano- rolls. For example, we aim to generate the piano-rolls of the following instruments in our implementation: strings, piano, guitar , drum and bass. Compared to MIDIs, the result of lead sheet arrangement is musically more informative, for the melody line is made explicit. T o our kno wledge, there is no prior work on lead sheet arrangement, possibly because of the lack of a dataset that contains both the lead sheet and MIDI versions of the same songs. Our work presents three technical contributions to circumvent this issue: • First, we de velop a ne w conditional generati ve model (based on generative adversarial network, or GAN [18]) to learn from unpaired lead sheet and MIDI datasets for lead sheet arrangement. As Fig. 2 shows, the proposed model contains three stages: lead sheet generation , fea- tur e extr action , and arrangement generation (see Section III for details). The middle one plays a piv otal role by extracting symbolic-domain harmonic features from the giv en lead sheet to condition the generation of the ar- rangement. For the conditional generation to be possible, we use features that can be computed from both the lead sheets and the MIDIs. • Second, we propose and empirically compare the perfor- mance of three such harmonic features to connect lead sheets and MIDIs for lead sheet arrangement. They are chr oma piano-r oll features, chr oma beats features, and chor d piano-r oll features (see Section III-D). • W e employ the conv olutional GAN model proposed by Dong et al. for multi-track piano-roll generation [13] for both lead sheet generation and arrangement generation, since the former can be viewed as two-track piano-roll generation and the later can be vie wed as conditional fi ve- track piano-roll generation. As a minor contribution, we replace the con volutional layers in the temporal genera- tors (marked as G temp and G temp,i in Fig. 2) by recurrent layers, to better capture the repetiti ve patterns seen in lead sheets. As a result, we can generate realistic lead sheets (and their arrangement) of eight bars long. In our experiment, we e valuate the effecti veness of the lead sheet generation component and the ov erall lead sheet arrangement model through objectiv e metrics and subjective ev aluation, respectively . Upon publication, we will set up a github repo to demonstrate the generated music and to share the code for reproducibility . I I . R E L A T E D W O R K Many deep learning models have been proposed for lead sheet generation, possibly because lead sheets are commonly used in pop music. Lead sheet generation can be done in three ways: given chords, generate melody (e.g., [8]); given melody , generate chords (a.k.a. melody harmonization, e.g., [9], [10]); or generating both melody and chords from scratch (e.g., [11]). Some recent models generate not only the melody and chords but also the drums [19]. But, as the target form of musical notation is still the lead sheet, only a sequence of chord labels (usually one chord label per bar or per half-bar) is generated, not the chord voicing and comping [20]. T o generate music that uses more instruments/tracks, Dong et al. proposed MuseGAN, a con volutional GAN model that learns from MIDIs for multi-track piano-roll generation [13]. MuseGAN creates music of fiv e tracks (strings, piano, guitar, drum and bass), whereas a later variant of it that uses binary neurons considers eight tracks (replacing strings by ensemble, reed, synth lead and synth pad) [14]. Recently , Simon et al. [15] used a variational auto-encoder (V AE) model to obtain a bar embedding space with instrumentation disentanglement and a chord conditioned structure. Their model demonstrates the ability of generating polyphonic measures conditioned on predefined chord progression. The instrumentation in this model can be vie wed as part of arrangement. Another work that is concerned with the instrumentation of music is the MIDI-V AE model proposed by Brunner et al. [21]. Learning from MIDIs, the model creates a bar embedding space with instrument, pitch, velocity disentanglement and style label condition. Although all these models can arrange the instru- ments in multi-track music, they cannot specify which track plays the melody . There are some other models for multi-track music gener- ation. DeepBach [5] aims at generating four-track chorales in the style of Bach. Jambot [22] generates a chord sequence first and then uses that as a condition to generate polyphonic music of a single track (e.g., piano solo). MusicV AE [23], an improv ed version of Google Magenta’ s MelodyRNN model [2], uses a hierarchical autoencoder to create melody , bass and drums (i.e. three tracks) of 32 bars long. Howe ver , all these models are based on recurrent neural networks (RNNs), which may work well in learning the long-term temporal structure in music but less so for local musical textures (e.g. arpeggios and broken chords), as compared with con volutional neural networks (CNNs) [13]. I I I . P RO P O S E D M O D E L The proposed model is composed of three stages: lead sheet generation, feature extraction, and arrangement generation, as illustrated in Figure 2. W e introduce them below . A. Data Repr esentation In order to model multi-track music, we adopt the piano-roll representation as our data format. A piano-roll is represented as a binary-valued score-like matrix [13]. Its x-axis and y-axis denote the time steps and note pitches, respectiv ely . An N - track piano-roll of one bar long is represented as a tensor X ∈ { 0 , 1 } T × P × N , where T denotes the number of time steps in a bar and P stands for number of note pitches. Both lead sheets and MIDIs can be con verted to piano-rolls. For example, a lead sheet can be seen as two-track (melody , chord) piano-rolls, as illustrated in the upper part of Fig. 3. B. Lead Sheet Generation The goal of our lead sheet generation model is to generate lead sheets of eight bars long from scratch . As the left hand side of Figure 2 shows, it contains two sets of generati ve models: the temporal generators G temp and the bar generators G bar . The temporal generators are in charge of the temporal dependency across bars and their output becomes part of the input of the bar generator . The bar generators generate music one bar at a time. Since the piano-rolls for each bar of a lead LeadsheetGen (8 bars pianoroll) ArrangementGen-chord-roll ArrangementGen-chroma-roll ArrangementGen-chroma-beats Chroma-beats 4 12 Chroma-roll 12 48 Chord-roll 48 84 8 b ars Fig. 3. Lead sheet arrangement system flow . sheet are two image-like matrices (one for melody , one for chords), we can use CNN-based models for G bar . In order to generate realistic music, we train the generators in an “adversarial” way , following the principal of GAN [18]. Specifically , in the training time, we train a CNN-based discriminator D (not shown in Figure 2) to distinguish between real lead sheets (from existing songs) and the output of G bar . While training D , both G temp and G bar are held fixed, and the learning target is to minimize the classification loss of D . In contrast, while training G temp and G bar , we fix D and the learning target is to maximize the classification loss of D . As a result of this iterative mini-max training process, G temp and G bar may learn to generate realistic lead sheets. Follo wing the design of MuseGAN [13], we use four types of input random noises z for our generators, to capture time dependent/independent and track dependent/independent characteristics. Ho wev er, unlike MuseGAN, we use a two- layer RNN model instead of CNN for G temp . Empirically we find that RNNs can better capture the repetitive patterns seen in lead sheets. Although such a hybrid recurrent-con volutional design is not ne w (e.g. used in [24]), to our knowledge it is the first recurrent CNN model (RCNN) for lead sheet generation. C. Arr angement Generation The goal of our arrangement generation model, denoted as the conditional bar generators G (c) bar on the right hand side of Figure 2, is to generate fiv e-track piano-rolls of one bar long conditioning on the features extracted from the lead sheets . This process is also illustrated in Fig. 3. W e generate the arrangement one bar at a time, until all the eight bars hav e been generated (and then concatenated). W e also use the principal of GAN to train G (c) bar along with a discriminator D (c) . Both G (c) bar and D (c) are implemented as CNNs. As the bottom-right corner of Fig. 2 sho ws, we train a CNN-based encoder E along with G (c) bar to embed the harmonic features extracted by the middle feature extractor to the same space as the output of the intermediate hidden layers of G (c) bar and D (c) . Conditioning both G (c) bar and D (c) empirically performs better than conditioning G (c) bar only . D. F eature Extraction Giv en a lead sheet, one may think that we can directly use the melody line or the chord sequence in the lead sheet to condition the arrangement generation. This is, howe ver , not feasible in practice, because few MIDIs in the training data hav e the melody or chord tracks specified. What we hav e from MIDIs are usually the multi-track piano-rolls. W e need to project the lead sheets and MIDIs to the same feature space to make the conditioning possible. W e propose to achieve this by extracting harmonic features from lead sheets and MIDIs. In this way , arrangement gener- ation is conditioned on the harmonic part of the lead sheet. W e propose the follo wing three symbolic-domain harmonic features. See the middle of Fig. 3 for an illustration. • Chroma piano-roll representation (chroma-r oll) : The idea is to neglect pitch octav es and compress the pitch range of a piano-roll into chroma (twelve pitch classes) [25], leading to a 12 × 48 matrix per bar . Such a chroma representation has been widely used in audio-domain music information retriev al (MIR) tasks such as audio- domain chord recognition and cover song identification [26]. For a lead sheet, we compute the chroma from both the melody and chord piano-rolls and then take the union. For a MIDI, we do the same across the N piano-rolls. • Chroma beats representation (chroma-beats) : From chroma-roll, we further reduce the temporal resolution by taking the av erage per beat (i.e. per 12 time steps), leading to a 12 × 4 matrix per bar . The lead sheets and MIDIs may overlap more in this feature space, but the downside is the loss of detailed temporal information. • Chord piano-r oll representation (chord-roll) : Instead of using chroma features, we estimate chord labels from both lead sheets and MIDIs to increase the information of harmony . This is done by first synthesizing the audio file of a lead sheet (or a MIDI), and then applying an audio- domain chord recognition model for chord estimation. W e use the DeepChroma model implemented in the Madmom library [27] for recognizing 12 major chords and 12 minor chords for each beat and use piano-roll without compressing to chroma, yielding a 84 × 48 matrix per bar . W e do not use the (ground truth) chord labels provided in the lead sheets, as we want the lead sheets and MIDIs to undergo the same feature extraction process. I V . I M P L E M E N TA T I O N This section presents the datasets used in our implementa- tion and some technical details. A. Dataset W e use the TheoryT ab dataset [28] for the lead sheets and the Lakh piano-roll dataset [13] for the multi-track piano-rolls. T ABLE I C O MPA R IS O N O F T HE T W O DAT A S E T S E MP L OY E D I N O U R W O R K . T H EY A R E R ES P E C TI V E L Y F RO M H T T P S : / / W W W . H O OK T H E ORY . C O M / TH E O RYTA B , A N D H T TP S : / / S A L U 13 3 4 4 5. G I T HU B . I O / L A K H - P I A NO RO L L - DA TAS E T / . TheoryT ab [28] Lakh Pianoroll [13] Song length Segment Full song Symbolic data Lead sheet Multi-track piano-rolls Musical key C key only V arious keys Genre tag Y es Y es Number of songs 16K 21K W e summarize the two datasets in T able I and present below how we preprocess them for the sake of model training. 1) TheoryT ab Dataset (TTD) contains 16K segments of lead sheets stored with XML format. Since it uses scale degree to represent the chord labels, we could think of all the songs as C key songs without further key transposition. W e parse each XML file and turn the melody and chords into two piano-rolls. For each bar, we set the height to 84 (note pitch range from C1 to B7 ) and the width (time resolution) to 48 for modeling temporal patterns such as triplets and 16th notes. As we want to generate eight-bar lead sheets, the size of the target output tensor for lead sheet generation is 8 (bars) × 48 (time steps) × 84 (notes) × 2 (tracks). W e use all the songs in TTD, without filtering the songs by genre. For segments that are longer than eight bars, we take the maximum multiples of eight bars. 2) Lakh Piano-roll Dataset (LPD) is derived from the Lakh MIDI dataset [29]. W e use the lpd-5-cleansed subset [13], which contains 21,425 fiv e-track piano-rolls that are tagged as Rock songs and are in 4/4 time signature. These fi ve tracks are, again, strings, piano, guitar , drum and bass. Since the songs are in various keys, we transpose all the songs into C key by using the pretty_midi library [30]. Since arrangement generation aims at creating arrangement of one bar at a time, the size of the target output tensor for arrangement generation is 1 (bar) × 48 (time steps) × 84 (notes) × 5 (tracks). B. Model P arameter Settings The G temp in the lead sheet generation model is implemented by two-layer RNN with 4 outputs and 32 hidden units. G bar , G (c) bar , D and D (c) are all implemented as CNNs. The total size of the input random v ectors z for G bar and G (c) bar are both set to 128. For the encoder E in arrangement generation, we adopt skip connections (as done in [13]) and use slightly different topology for encoding the three features described in Section III-D. More details of the network topology will be presented in an online appendix. W e use WGAN-gp [31] for model training. Each model is trained with a T esla K80m GPU in less than 24 hours with batch size being 64. V . E X P E R I M E N T A. Lead Sheet Generation Evaluation W e adopt the objectiv e metrics proposed in [13] to ev aluate lead sheet generation, using the code they shared. Empty bars (EB) reflects the ratio of empty bars; used pitch classes (UPC) T ABLE II R E SU LT O F O B J E CT I V E E V A L UA T I ON F O R L E AD S H EE T G EN E R A T I O N , I N F OU R M E TR I C S . T H E V A L UE S A RE B E TT E R W H E N T H E Y A R E C LO S E R T O T H A T C O MP U T E D F RO M T H E T R A IN I N G DAT A ( I . E ., T H E T H EO RY T A B D A TA SE T ) , S H OW N I N T H E FI R S T RO W . empty bars (EB) used pitch classes (UPC) qualified notes (QN) tonal distance (TD) Melody Chord Melody Chord Melody Chord Melody-Chord T raining data 0.02 0.01 4.18 6.12 1.00 1.00 1.50 1st iteration 0.00 0.00 16.5 83.7 0.16 0.42 0.45 1,000th iteration 0.00 0.00 6.99 7.59 0.55 0.71 1.34 2,000th iteration 0.00 0.00 6.47 7.85 0.71 0.80 1.42 3,000th iteration 0.00 0.00 4.96 6.75 0.69 0.84 1.46 4,000th iteration 0.00 0.00 4.48 6.66 0.82 0.87 1.49 5,000th iteration 0.00 0.00 4.10 6.69 0.83 0.84 1.53 represents the av erage number of pitch classes used per bar , qualified note (QN) denotes the ratio of notes that are longer than or equal to the 16th note (i.e. low QN suggests overly fragmented music); tonal distance (TD) [32] represents the harmonicity between two giv en tracks. T able II shows that the UPC, QN and TD of the generated lead sheets get closer to those of the training data (i.e., the TheoryT ab dataset) as the learning unfolds, suggesting that the model is learning the data distribution of real lead sheets. The values gradually saturate after around 5,000 training iterations (one batch per iteration). W e find no empty bars in our generation result. B. Lead Sheet Arrang ement User Study W e conduct an online user study to ev aluate the result of arrangement generation. In the study , we ask respondents to listen to four groups of eight-bar music phrases arranged by our models. Each group contains one lead sheet and its three kinds of arrangements based on chroma-roll, chroma-beats and chord-roll features, respectiv ely . The lead sheet is put at the top of each group and can be viewed as the reference. W e use “bell sound” to play the melody . W e play the melody and chord along with the fi ve tracks generated by the arrangement model to show the compatibility of these two models. After listening to the music, respondents are asked to compare the three arranged versions. They are asked to vote for the best model among the three, in terms of harmonicity , rhythmicity and overall feeling , respectively . Moreov er , they are asked to rate each sample according to the same three aspects. T o focus on the result of arrangement generation, we use existing lead sheets from TheoryT ab for this ev aluation. Figure 4 shows the av erage result of 25 respondents, 88% of which play some instruments and 16% are studying in music- related departments or working in music-related industries. The following observations can be made: • In terms of harmonicity , chord-roll outperforms chroma- roll and chroma-beats by a great margin, suggesting that chords carry more harmonic information than chroma. • In rhythmicity , we see from the votes that chroma-beats are clearly inferior to chord-roll and chroma-roll. W e attribute this to the loss of temporal resolution in the chroma-beats representation. • In o verall feeling, chord-roll performs significantly better than the other two (which can be seen from the standard deviation shown in Fig. 4(b)) and attains a mean opinion (a) (b) Fig. 4. Result of subjective ev aluation for three arrangement generation models using dif ferent features, in terms of three metrics—(a) The vote result and (b) the MOS rating scores in a Likert scale from 1 to 5. score (MOS) of 3.5675 in a fiv e-point Likert scale. The result seems to suggest that harmonicity has stronger impact on the ov erall feeling, compared to rhythmicity . In summary , as there are no existing work on lead sheet arrangement, we hav e to compare three variants of our own model. The result shows that using chord-roll to connect the lead sheets and MIDIs performs the best. The MOS in ov erall feeling suggests that the model is promising, but there is still room for improvement. As illustrations, we show in Fig. 5 the scoresheet of the arranged result (based on chord-roll) of a four-bar lead sheet from TTD and another one generated by our model. Audio examples can be found in the link described in the abstract. V I . C O N C L U S I O N In this paper , we have presented a conditional GAN model for generating eight-bar phrases of lead sheets and their arrangement. T o our knowledge, this represents the first model (a) Theorytab Lead Sheet Arrangement Melody Bass Drum Guitar Piano String (b) GAN Lead Sheet Arrangement Melody Bass Drum Guitar Piano String Fig. 5. Result of lead sheet arrangement (based on chord-roll) for (a) an existing lead sheet from TTD and (b) a lead sheet generated by our model. for lead sheet arrangement. W e experimented with three new harmonic features to condition the arrangement generation and found through a listening test that the chord piano- roll representation performs the best. The best model attains 3.4350, 3.4325, and 3.5675 MOS in harmonicity , rhythmicity , and overall feeling, respecti vely , in a Likert scale from 1 to 5. R E F E R E N C E S [1] J.-P . Briot, G. Hadjeres, and F . Pachet, “Deep learning techniques for music generation: A survey , ” arXiv pr eprint arXiv:1709.01620 , 2017. [2] E. W aite, D. Eck, A. Roberts, and D. Abolafia, “Project magenta: Generating long-term structure in songs and stories, ” 2016, https:// magenta.tensorflow .org/blog/2016/07/15/lookback- rnn- attention- rnn/. [3] B. L. Sturm, J. F . Santos, O. Ben-T al, and I. Korshuno va, “Music transcription modelling and composition using deep learning, ” in Proc. Conf. Computer Simulation of Musical Creativity , 2016. [4] F . Liang, “BachBot: Automatic composition in the style of bach chorales, ” Master’ s thesis, Univ ersity of Cambridge, 2016. [5] G. Hadjeres, F . Pachet, and F . Nielsen, “DeepBach: A steerable model for Bach chorales generation, ” in Proc. Int. Conf. Machine Learning , 2017. [6] I. Simon and S. Oore, “Performance RNN: Generating music with expressi ve timing and dynamics, ” 2017, https://magenta.tensorflow .org/ performance- rnn. [7] A. Huang, A. V aswani, J. Uszkoreit, N. Shazeer , A. Dai, M. Hoffman, C. Hawthorne, and D. Eck, “Generating structured music through self- attention, ” in Pr oc. Joint W orkshop on Machine Learning for Music , 2018. [8] L.-C. Y ang, S.-Y . Chou, and Y .-H. Y ang, “MidiNet: A conv olutional generativ e adversarial network for symbolic-domain music generation, ” in Proc. Int. Soc. Music Information Retrieval Conf. , 2017, pp. 324–331. [9] H. Lim, S. Rhyu, and K. Lee, “Chord generation from symbolic melody using BLSTM networks, ” in Pr oc. Int. Soc. Music Information Retrieval Conf. , 2017. [10] H. Tsushima, E. Nakamura, K. Itoyama, and K. Y oshii, “Function- and rhythm-aware melody harmonization based on tree-structured parsing and split-merge sampling of chord sequences, ” in Pr oc. Int. Soc. Music Information Retrieval Conf. , 2017. [11] P . Roy , A. Papadopoulos, and F . Pachet, “Sampling variations of lead sheets, ” arXiv preprint , 2017. [12] “Lead sheet, ” from Wikipedia, https://en.wikipedia.org/wiki/Lead sheet. [13] H.-W . Dong, W .-Y . Hsiao, L.-C. Y ang, and Y .-H. Y ang, “MuseGAN: Multi-track sequential generative adversarial networks for symbolic music generation and accompaniment, ” in Proc. AAAI Conf. Artificial Intelligence , 2018, https://salu133445.github .io/lakh-pianoroll-dataset/. [14] H.-W . Dong and Y .-H. Y ang, “Conv olutional generative adversarial networks with binary neurons for polyphonic music generation, ” in Pr oc. Int. Soc. Music Information Retrieval Conf. , 2018. [15] I. Simon, A. Roberts, C. Raffel, J. Engel, C. Hawthorne, and D. Eck, “Learning a latent space of multitrack measures, ” arXiv preprint arXiv:1806.00195 , 2018. [16] C. Raffel and D. P . W . Ellis, “Extracting ground truth information from MIDI files: A MIDIfesto, ” in Pr oc. Int. Soc. Music Information Retrieval Conf. , 2016, pp. 796–802. [17] V . Corozine, Arranging Music for the Real W orld: Classical and Commer cial Aspects . Pacific, MO: Mel Bay . ISBN 0-7866-4961-5. OCLC 50470629, 2002. [18] I. J. Goodfellow , J. Pouget-Abadie, M. Mirza, B. Xu, D. W arde-Farley , S. Ozair, A. Courville, and Y . Bengio, “Generativ e adversarial nets, ” in NIPS , 2014. [19] H. Chu, R. Urtasun, and S. Fidler, “Song from PI: A musically plausible network for pop music generation, ” in Pr oc. ICLR W orkshop , 2016. [20] B. Saunders, “Jazz guitar lesson– chord voicing and comping, ” Berklee Online, on Y outube, https://www .youtube.com/watch?v=olksbLorpIY. [21] G. Brunner, A. Konrad, Y . W ang, and R. W attenhofer, “MIDI-V AE: Modeling dynamics and instrumentation of music with applications to style transfer, ” in Pr oc. Int. Society for Music Information Retrieval Conf. , 2018. [22] G. Brunner , Y . W ang, R. W attenhofer, and J. W iesendanger, “Jambot: Music theory aware chord based generation of polyphomic music with LSTMs, ” arXiv preprint , 2016. [23] A. Roberts, J. Engel, C. Raffel, C. Hawthorne, and D. Eck, “ A hierar- chical latent vector model for learning long-term structure in music, ” in Pr oc. NIPS W orks. Machine Learning for Creativity and Design , 2017. [24] M. Akbari and J. Liang, “Semi-recurrent CNN-based V AE-GAN for sequential data generation, ” arXiv pr eprint arXiv:1806.00509 , 2018. [25] T . Fujishima, “Realtime chord recognition of musical sound: a system using common Lisp music, ” in Proc. Int. Computer Music Conf. , 1999. [26] D. P . W . Ellis and G. E. Poliner , “Identifying ‘cover songs’ with chroma features and dynamic programming beat tracking, ” in Proc. IEEE Int. Conf. Acoustics, Speech and Signal Pr ocessing , 2007. [27] F . K orzeniowski and G. W idmer, “Feature learning for chord recogni- tion the deep chroma extractor , ” in Pr oc. Int. Soc. Music Information Retrieval Conf. , 2016, pp. 37–43. [28] TheoryT ab, https://www .hooktheory .com/theorytab. [29] C. Raffel, “Learning-based methods for comparing sequences, with ap- plications to audio-to-midi alignment and matching, ” Ph.D. dissertation, Columbia University , 2016, http://colinraffel.com/projects/lmd/. [30] C. Raffel and D. P . W . Ellis, “Intuitive analysis, creation and manipula- tion of MIDI data with pretty midi, ” in Pr oc. Int. Soc. Music Information Retrieval Conf. Late Breaking and Demo P apers , 2014, pp. 84–93, https://github .com/craffel/pretty-midi. [31] I. Gulrajani, F . Ahmed, M. Arjovsky , V . Dumoulin, and A. Courville, “Improved training of W asserstein GANs, ” arXiv pr eprint arXiv:1704.00028 , 2017. [32] C. Harte, M. Sandler , and M. Gasser, “Detecting harmonic change in musical audio, ” in Pr oc. ACM MM W orkshop on Audio and Music Computing Multimedia , 2006. A P P E N D I X T ABLE III C O ND I T I ON E D G E N E RATO R S Input: z ∈ < 128 Reshaped to (1,1) × 128 channels (1, 1, 128) transcon v 1024 1 × 1 (1,1) BN ReLU (1, 1, 1024) Reshaped to (2,1) × 512 channels (2, 1, 512) chord-roll[5] (2, 1, 528) transcon v 512 2 × 1 (2,1) BN ReLU (4, 1, 512) chord-roll[4] (4, 1, 528) transcon v 256 2 × 1 (2,1) BN ReLU (8, 1, 256) chord-roll[3] (8, 1, 272) transcon v 256 2 × 1 (2,1) BN ReLU (16, 1, 256) chord-roll[2] (16, 1, 272) transcon v 128 3 × 1 (3,1) BN ReLU (48, 1, 128) chord-roll[1] (48, 1, 144) transcon v 64 1 × 7 (1,1) BN ReLU (48, 7, 64) chord-roll[0] (48, 7, 80) transcon v 1 1 × 12 (1,12) BN tanh (48, 84, 1) Output: G chord − r oll ( z ) ∈ < 48 × 84 (a) Chord-roll conditioned generator Input: z ∈ < 128 Reshaped to (1,1) × 128 channels (1, 1, 128) transcon v 1024 1 × 1 (1,1) BN ReLU (1, 1, 1024) transcon v 512 1 × 12 (1,12) BN ReLU (1, 12, 512) chroma-roll[5] (1, 12, 528) transcon v 256 2 × 1 (2,1) BN ReLU (2, 12, 256) chroma-roll[4] (2, 12, 272) transcon v 256 2 × 1 (2,1) BN ReLU (4, 12, 256) chroma-roll[3] (4, 12, 272) transcon v 128 2 × 1 (2,1) BN ReLU (8, 12, 128) chroma-roll[2] (8, 12, 144) transcon v 128 2 × 1 (2,1) BN ReLU (16, 12, 128) chroma-roll[1] (16, 12, 144) transcon v 64 3 × 1 (3,1) BN ReLU (48, 12, 64) chroma-roll[0] (48, 12, 80) transcon v 1 1 × 7 (1,7) BN tanh (48, 84, 1) Output: G chroma − r oll ( z ) ∈ < 48 × 84 (b) Chroma-roll conditioned generator Input: z ∈ < 128 Reshaped to (1,1) × 128 channels (1, 1, 128) transcon v 1024 1 × 1 (1,1) BN ReLU (1, 1, 1024) transcon v 512 1 × 12 (1,12) BN ReLU (1, 12, 512) transcon v 256 2 × 1 (2,1) BN ReLU (2, 12, 256) transcon v 256 2 × 1 (2,1) BN ReLU (4, 12, 256) chroma-beats[0] (4, 12, 272) transcon v 128 2 × 1 (2,1) BN ReLU (8, 12, 128) transcon v 128 2 × 1 (2,1) BN ReLU (16, 12, 128) transcon v 64 3 × 1 (3,1) BN ReLU (48, 12, 64) transcon v 1 1 × 7 (1,7) BN tanh (48, 84, 1) Output: G chroma − beats ( z ) ∈ < 48 × 84 (c) Chroma-beats conditioned generator T able III sho ws three conditioned generator networks on (a) chord-roll, (b) chroma-roll and (c) chroma-beats features, respectiv ely . T able IV presents the discriminators (a)-(c) and encoders (d)-(f) designed for the same three features, respec- tiv ely . The values showned in ro ws of transcon v and con v (from left to right) represent: number of channels, filter size, strides, batch normalization (BN) and activ ation function. For fully-connected layers, the v alues represent (from left to right): number of hidden nodes and activ ation functions. LReLU stands for leaky ReLU. The column after activ ation function denotes the dimension of each hidden layer . The names (chord- roll, chroma-roll, chroma-beats) in generator and discriminator networks shows the skip connection on the information with respect to those feature names in the corresponding encoders. T ABLE IV C O ND I T I ON E D D I S C RI M I NA T O RS A N D E N CO D E RS . Input: ˜ x ∈ < 1 × 48 × 84 × 5 Reshaped to (48,84) × 5 channels (48, 84, 5) chord-roll[6] (48, 84, 6) con v 128 1 × 12 (1,12) LReLU (48, 7, 128 con v 128 1 × 7 (1,7) LReLU (48, 1, 128) con v 128 2 × 1 (2,1) LReLU (24, 1, 128) con v 128 2 × 1 (2,1) LReLU (12, 1, 128) con v 256 4 × 1 (2,1) LReLU (5, 1, 256) con v 512 3 × 1 (2,1) LReLU (2, 1, 512) fully-connected 1024 LReLU 1024 fully-connected 1 1 Output: D chord − r oll ( ˜ x ) ∈ < (a) Chord-roll conditioned discriminator Input: ˜ x ∈ < 1 × 48 × 84 × 5 Reshaped to (48,84) × 5 channels (48, 84, 5) con v 128 1 × 7 (1,7) LReLU (48, 12, 128) chroma-roll[0] (48, 12, 144) con v 128 3 × 1 (3,1) LReLU (16, 12, 128 chroma-roll[1] (16, 12, 144) con v 128 2 × 1 (2,1) LReLU (8, 12, 128) chroma-roll[2] (8, 12, 144) con v 128 2 × 1 (2,1) LReLU (4, 12, 128) chroma-roll[3] (4, 12, 144) con v 256 2 × 1 (2,1) LReLU (2, 12, 256) chroma-roll[4] (2, 12, 272) con v 512 2 × 1 (2,1) LReLU (1, 12, 512) chroma-roll[5] (1, 12, 528) fully-connected 1024 LReLU 1024 fully-connected 1 1 Output: D chroma − r oll ( ˜ x ) ∈ < (b) Chroma-roll conditioned discriminator Input: ˜ x ∈ < 1 × 48 × 84 × 5 Reshaped to (48,84) × 5 channels (48, 84, 5) con v 128 1 × 7 (1,7) LReLU (48, 12, 128) con v 128 3 × 1 (3,1) LReLU (16, 12, 128 con v 128 2 × 1 (2,1) LReLU (8, 12, 128) con v 128 2 × 1 (2,1) LReLU (4, 12, 128) chroma-beats[0] (4, 12, 144) con v 256 2 × 1 (2,1) LReLU (2, 12, 256) con v 512 2 × 1 (2,1) LReLU (1, 12, 512) fully-connected 1024 LReLU 1024 fully-connected 1 1 Output: D chroma − beats ( ˜ x ) ∈ < (c) Chroma-beats conditioned discriminator Input: y ∈ < 48 × 84 feature name Reshaped to (48,84) × 1 channel (48, 84, 1) con v 16 1 × 12 (1,12) BN LReLU (48, 7, 16) chord-roll[0] con v 16 1 × 7 (1,7) BN LReLU (48, 1, 16) chord-roll[1] con v 16 3 × 1 (3,1) BN LReLU (16, 1, 16 chord-roll[2] con v 16 2 × 1 (2,1) BN LReLU (8, 1, 16) chord-roll[3] con v 16 2 × 1 (2,1) BN LReLU (4, 1, 16) chord-roll[4] con v 16 2 × 1 (2,1) BN LReLU (2, 1,16) chord-roll[5] Output: chord-roll[:] (d) Chord-roll encoder Input: y ∈ < 48 × 12 feature name Reshaped to (48,12) × 1 channel (48, 12, 1) chroma-roll[0] con v 16 3 × 1 (3,1) BN LReLU (16, 12, 16) chroma-roll[1] con v 16 2 × 1 (2,1) BN LReLU (8, 12, 16) chroma-roll[2] con v 16 2 × 1 (2,1) BN LReLU (4, 12, 16) chroma-roll[3] con v 16 2 × 1 (2,1) BN LReLU (2, 12,16) chroma-roll[4] con v 16 2 × 1 (2,1) BN LReLU (1, 12, 16) chroma-roll[5] Output: chroma-roll[:] (e) Chroma-roll encoder Input: y ∈ < 4 × 12 feature name Replicated to (4,12) × 16 channels (4, 12, 16) chroma-beats[0] Output: chroma-beats[:] (f) Chroma-beats encoder

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment