Learning to Separate Object Sounds by Watching Unlabeled Video

Perceiving a scene most fully requires all the senses. Yet modeling how objects look and sound is challenging: most natural scenes and events contain multiple objects, and the audio track mixes all the sound sources together. We propose to learn audi…

Authors: Ruohan Gao, Rogerio Feris, Kristen Grauman

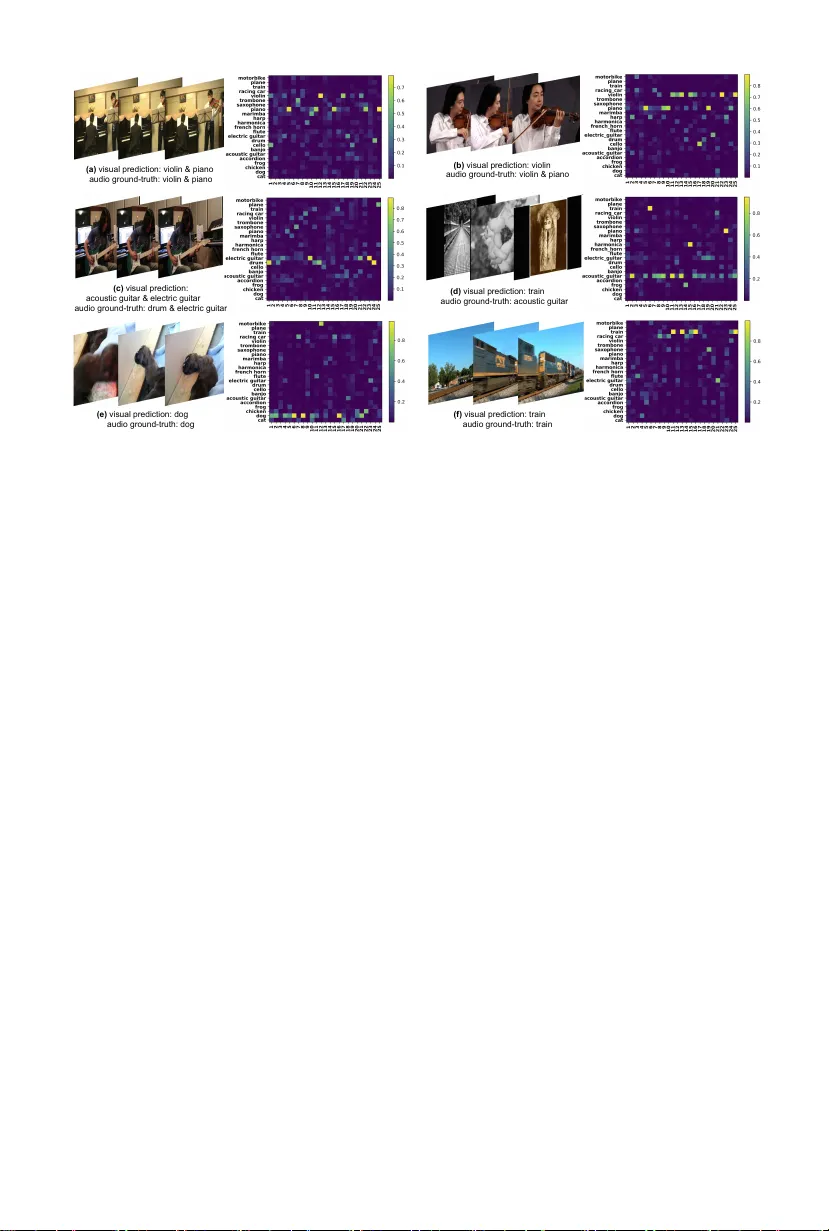

Learning to Separate Ob ject Sounds b y W atc hing Unlab eled Video Ruohan Gao 1 , Rogerio F eris 2 , Kristen Grauman 3 1 The Un iversit y of T exas at Austin, 2 IBM Research, 3 F aceb ook AI Researc h rhgao@cs.utexas.edu , rsferis@us.ibm.com , grauman@fb.com ?? Abstract. P erceiving a scene most fully requires all the senses. Y et mo deling ho w ob jects lo ok and sound is c hallenging: most natural scenes and ev ents con tain multiple ob jects, and the audio track mixes all the sound sources together. W e prop ose to learn audio-visual ob ject mo dels from unlab eled video, then exploit the visual context to perform au- dio source separation in nov el videos. Our approach relies on a deep m ulti-instance multi-label learning framework to disen tangle the audio frequency bases that map to individual visual ob jects, ev en without ob- serving/hearing those ob jects in isolation. W e show ho w the recov ered disen tangled bases can b e used to guide audio source separation to obtain b etter-separated, ob ject-level sounds. Our work is the first to learn audio source separation from large-scale “in the wild” videos containing multi- ple audio sources per video. W e obtain state-of-the-art results on visually- aided audio source separation and audio denoising. Our video results: http://vision.cs.utexas.edu/projects/separating_object_sounds/ audio visual sound of guitar sound of saxophone separation Fig. 1. Goal: Learn from unlab eled video to separate ob ject sounds 1 In tro duction Understanding scenes and even ts is inherently a multi-modal exp erience. W e p erceiv e the w orld by b oth lo oking and listening (and touching, smelling, and tasting). Ob jects generate unique sounds due to their physical prop erties and in teractions with other ob jects and the environmen t. F or example, p erception of a coffee shop scene ma y include seeing cups, saucers, p eople, and tables, but also hearing the dishes clatter, the espresso machine grind, and the barista shouting ?? On le ave fr om The University of T exas at Austin ( grauman@cs.utexas.edu ). 2 Ruohan Gao, Rogerio F eris, Kristen Grauman an order. Human developmen tal learning is also inherently multi-modal, with y oung children quickly amassing a rep ertoire of ob jects and their sounds: dogs bark, cats mew, phones ring. Ho wev er, while recognition has made significant progress by “looking”— detecting ob jects, actions, or p eople based on their app earance—it often do es not listen. Despite a long history of audio-visual video indexing [45, 57, 70, 71, 79], obje cts in video are often analyzed as if they w ere silent en tities in silent envi- ronmen ts. A key c hallenge is that in a realistic video, ob ject sounds are observed not as separate entities, but as a single audio channel that mixes all their fre- quencies together. Audio source separation, though studied extensively in the signal pro cessing literature [25, 40, 76, 86], remains a difficult problem with nat- ural data outside of lab settings. Existing metho ds p erform b est by capturing the input with multiple microphones, or else assume a clean set of single source audio examples is a v ailable for supervision (e.g., a recording of only a violin, another recording containing only a drum, etc.), b oth of which are very limiting prerequisites. The blind audio separation task ev ok es challenges similar to image segmen tation—and p erhaps more, since all sounds o verlap in the input signal. Our goal is to learn ho w differen t ob jects sound by b oth lo oking at and lis- tening to unlab eled video containing m ultiple sounding ob jects. W e prop ose an unsup ervised approach to disentangle mixed audio into its comp onen t sound sources. The key insight is that observing sounds in a v ariet y of visual con- texts reveals the cues needed to isolate individual audio sources; the differen t visual contexts lend w eak sup ervision for discov ering the asso ciations. F or ex- ample, having exp erienced v arious instruments pla ying in v arious com binations b efore, then given a video with a guitar and a saxophone (Fig. 1), one can naturally anticipate what sounds could b e presen t in the accompanying audio, and therefore better separate them. Indeed, neuroscientists rep ort that the mis- matc h negativity of ev ent-related brain potentials, whic h is generated bilaterally within auditory cortices, is elicited only when the visual pattern promotes the segregation of the sounds [63]. This suggests that synchronous presentation of visual stim uli should help to resolv e sound ambiguit y due to multiple sources, and promote either an in tegrated or segregated p erception of the sounds. W e introduce a no vel audio-visual source separation approac h that realizes this in tuition. Our metho d first lev erages a large collection of unannotated videos to discov er a laten t sound representation for eac h ob ject. Specifically , we use state-of-the-art image recognition to ols to infer the ob jects present in eac h video clip, and w e p erform non-negativ e matrix factorization (NMF) on each video’s audio c hannel to recov er its set of frequency basis vectors. At this point it is unkno wn which audio bases go with which visible ob ject(s). T o recov er the as- so ciation, we construct a neural netw ork for multi-instance m ulti-lab el learning (MIML) that maps audio bases to the distribution of detected visual ob jects. F rom this audio basis-ob ject asso ciation net work, w e extract the audio bases link ed to each visual ob ject, yielding its prototypical spectral patterns. Finally , giv en a no vel video, w e use the learned p er-ob ject audio bases to steer audio source separation. Learning to Separate Ob ject Sounds b y W atc hing Unlab eled Video 3 Prior attempts at visually-aided audio source separation tackle the problem b y detecting low-lev el correlations b et ween the tw o data streams for the input video [8, 12, 15, 27, 52, 61, 62, 64], and they experiment with somewhat controlled domains of musical instruments in concert or hu man sp eak ers facing the cam- era. In contrast, we prop ose to le arn obje ct-level sound mo dels from hundreds of thousands of unlab eled videos, and generalize to separate new audio-visual instances. W e demonstrate results for a broad set of “in the wild” videos. While a resurgence of researc h on cross-modal learning from images and audio also cap- italizes on synchronized audio-visual data for v arious tasks [3, 4, 5, 47, 49, 59, 60], they treat the audio as a single monolithic input, and thus cannot asso ciate differen t sounds to different ob jects in the same video. The main contributions in this pap er are as follows. Firstly , w e prop ose to enhance audio source separation in videos b y “sup ervising” it with visual infor- mation from image recognition results 1 . Secondly , w e propose a no vel deep m ulti- instance m ulti-lab el learning framework to learn prototypical sp ectral patterns of different acoustic ob jects, and inject the learned prior into an NMF source separation framework. Thirdly , to our knowledge, w e are the first to study audio source separation learned from large scale online videos. W e demonstrate state- of-the-art results on visually-aided audio source separation and audio denoising. 2 Related W ork L o c alizing sounds in vide o fr ames The sound localization problem entails iden- tifying which pixels or regions in a video are responsible for the recorded sound. Early work on lo calization explored correlating pixels with sounds using m utual information [27, 37] or m ulti-mo dal embeddings like canonical correlation anal- ysis [47], often with assumptions that a sounding ob ject is in motion. Beyond iden tifying correlations for a single input video’s audio and visual streams, re- cen t work inv estigates learning asso ciations from man y such videos in order to lo calize sounding ob jects [4]. Such metho ds typically assume that there is one sound source, and the task is to lo calize the p ortion(s) of the visual con tent re- sp onsible for it. In con trast, our goal is to sep ar ate multiple audio sources from a monoaural signal b y leveraging learned audio-visual associations. A udio-visual r epr esentation le arning Recent w ork sho ws that image and audio classification tasks can benefit from representation learning with b oth modal- ities. Given unlab eled training videos, the audio channel can b e used as free self-sup ervision, allowing a conv olutional netw ork to learn features that tend to gra vitate to ob jects and scenes, resulting in improv ed image classification [3, 60]. W orking in the opp osite direction, the SoundNet approac h uses image classifier predictions on unlab eled video frames to guid e a learned audio represen tation for impro ved audio scene classification [5]. F or applications in cross-mo dal retriev al or zero-shot classification, other metho ds aim to learn aligned representations 1 Our task can hence be seen as “weakly supervised”, though the weak “labels” them- selv es are inferred from the video, not manually annotated. 4 Ruohan Gao, Rogerio F eris, Kristen Grauman across mo dalities, e.g., audio, text, and visual [6]. Related to these approaches, w e share the goal of learning from unlab eled video with synchronized audio and visual channels. How ev er, whereas they aim to improv e audio or image classifi- cation, our metho d disco vers asso ciations in order to isolate sounds p er ob ject, with the ultimate task of audio-visual source separation. A udio sour c e sep ar ation Audio source separation (from purely audio input) has b een studied for decades in the signal processing literature. Some methods as- sume access to multiple microphones, whic h facilitates separation [20, 56, 82]. Others accept a single monoaural input [39, 69, 72, 76, 77] to p erform “blind” sepa- ration. Popular approaches include Independent Comp onen t Analysis (ICA) [40], sparse decomposition [86], Computational Auditory Scene Analysis (CASA) [22], non-negativ e matrix factorization (NMF) [25, 26, 51, 76], probabilistic laten t v ari- able mo dels [38, 68], and deep learning [36, 39, 66]. NMF is a traditional metho d that is still widely used for unsup ervised source separation [31, 41, 44, 72, 75]. How- ev er, existing methods typically require sup ervision to get go od results. Strong sup ervision in the form of isolated recordings of individual sound sources [69, 77] is effectiv e but difficult to secure for arbitrary sources in the wild. Alterna- tiv ely , “informed” audio source separation uses special-purp ose auxiliary cues to guide the pro cess, suc h as a music score [35], text [50], or man ual user guid- ance [11, 19, 77]. Our approach employs an existing NMF optimization [26], cho- sen for its efficiency , but unlike any of the ab o ve we tac kle audio separation informed b y automatically detected visual ob jects. A udio-visual sour c e sep ar ation The idea of guiding audio source separation us- ing visual information can b e traced back to [15, 27], where mutual informa- tion is used to learn the join t distribution of the visual and auditory signals, then applied to isolate human sp eak ers. Subsequent work explores audio-visual subspace analysis [62, 67], NMF informed by visual motion [61, 65], statistical con volutiv e mixture mo dels [64], and correlating temp oral onset even ts [8, 52]. Recen t w ork [62] attempts both localization and separation sim ultaneously; ho w- ev er, it assumes a moving ob ject is present and only aims to decomp ose a video in to background (assumed low-rank) and foreground sounds/pixels. Prior meth- o ds nearly alwa ys tackle videos of p eople sp eaking or pla ying musical instru- men ts [8, 12, 15, 27, 52, 61, 62, 64]—domains where salien t motion signals accom- pan y audio even ts (e.g., a mouth or a violin b o w starts moving, a guitar string suddenly accelerates). Some studies further assume side cues from a written mu- sical score [52], require that eac h sound source has a p eriod when it alone is activ e [12], or use ground-truth motion captured by MoCap [61]. Whereas prior work correlates lo w-level visual patterns—particularly motion and onset ev ents—with the audio channel, we propose to learn from video how differen t obje cts lo ok and sound, whether or not an ob ject mov es with ob vious correlation to the sounds. Our metho d assumes access to visual detectors, but assumes no side information about a no vel test video. F urthermore, whereas existing metho ds analyze a single input video in isolation and are largely con- strained to human sp eak ers and instruments, our approach learns a v aluable prior for audio separation from a large library of unlab ele d videos. Learning to Separate Ob ject Sounds b y W atc hing Unlab eled Video 5 Concurren t with our w ork, other new metho ds for audio-visual source sepa- ration are b eing explored sp ecifically for sp eec h [1, 23, 28, 58] or musical instru- men ts [84]. In con trast, w e study a broader set of ob ject-level sounds including instrumen ts, animals, and vehicles. Moreov er, our metho d’s training data re- quiremen ts are distinctly more flexible. W e are the first to learn from uncurated “in the wild” videos that con tain multiple ob jects and multiple audio sources. Gener ating sounds fr om vide o More distan t from our work are metho ds that aim to gener ate sounds from a silent visual input, using recurren t netw orks [59, 85], conditional generative adv ersarial netw orks (C-GANs) [13], or sim ulators in tegrating physics, audio, and graphics engines [83]. Unlike any of the ab o v e, our approac h learns the asso ciation betw een ho w ob jects lo ok and sound in order to disen tangle real audio sources; our metho d does not aim to syn thesize sounds. We akly sup ervise d visual le arning Given unlabeled video, our approach learns to disen tangle whic h sounds within a mixed audio signal go with whic h recognizable ob jects. This can b e seen as a weakly sup ervised visual learning problem, where the “sup ervision” in our case consists of automatically detected visual ob jects. The proposed setting of w eakly supervised audio-visual learning is en tirely no vel, but at a high lev el it follo ws the spirit of prior w ork leveraging w eak annotations, including early “words and pictures” w ork [7, 21], internet vision methods [9, 73], training w eakly supervised ob ject (activit y) detectors [2, 10, 14, 16, 78], image cap- tioning metho ds [18, 46], or grounding acoustic units of sp ok en language to image regions [32, 33]. In con trast to an y of these metho ds, our idea is to learn sound asso ciations for ob jects from unlab eled video, and to exploit those asso ciations for audio source separation on new videos. 3 Approac h Our approach learns what ob jects sound like from a batch of unlab eled, multi- sound-source videos. Giv en a new video, our method returns the separated audio c hannels and the visual ob jects resp onsible for them. W e first formalize the audio separation task and o verview audio basis ex- traction with NMF (Sec. 3.1). Then we introduce our framework for learning audio-visual ob jects from unlab eled video (Sec. 3.2) and our accompanying deep m ulti-instance multi-label netw ork (Sec. 3.3). Next we present an approach to use that netw ork to associate audio bases with visual ob jects (Sec. 3.4). Finally , w e p ose audio source separation for nov el videos in terms of a semi-sup ervised NMF approac h (Sec. 3.5). 3.1 Audio Basis Extraction Single-c hannel audio source separation is the problem of obtaining an estimate for each of the J sources s j from the observed linear mixture x ( t ): x ( t ) = P J j =1 s j ( t ), where s j ( t ) are time-discrete signals. The mixture signal can be transformed into a magnitude or p ow er sp ectrogram V ∈ R F × N + consisting of F frequency bins and N short-time F ourier transform (STFT) [30] frames, which 6 Ruohan Gao, Rogerio F eris, Kristen Grauman Non-negative Matrix Factorization Visual Predictions from ResNet-152 M Basis Vectors Multi-Instance Multi-Label Learning Unlabeled Video Audio Visual STFT Spectrogram Guitar Saxophone Top Labels Fig. 2. Unsupervised training pip eline. F or each video, we p erform NMF on its au- dio magnitude sp ectrogram to get M basis vectors. An ImageNet-trained ResNet-152 net work is used to make visual predictions to find the p oten tial ob jects presen t in the video. Finally , w e p erform multi-instance multi-label learning to disen tangle whic h extracted audio basis v ectors go with which detected visible ob ject(s). enco de the change of a signal’s frequency and phase conten t ov er time. W e op er- ate on the frequency domain, and use the inv erse short-time F ourier transform (ISTFT) [30] to reconstruct the sources. Non-negativ e matrix factorization (NMF) is often employ ed [25, 26, 51, 76] to appro ximate the (non-negative real-v alued) sp ectrogram matrix V as a pro duct of t wo matrices W and H : V ≈ ˜ V = WH , (1) where W ∈ R F × M + and H ∈ R M × N + . The num ber of bases M is a user-defined parameter. W can b e interpreted as the non-negative audio sp ectral patterns, and H can b e seen as the activ ation matrix. Sp ecifically , each column of W is referred to as a b asis ve ctor , and eac h ro w in H represents the gain of the corresp onding basis vector. The factorization is usually obtained b y solving the follo wing minimization problem: min W , H D ( V | WH ) sub ject to W ≥ 0 , H ≥ 0 , (2) where D is a measure of div ergence, e.g., w e employ the Kullback-Leibler (KL) div ergence. F or each unlabeled training video, we perform NMF indep enden tly on its audio magnitude spectrogram to obtain its sp ectral patterns W , and throw a wa y the activ ation matrix H . M audio basis v ectors are therefore extracted from eac h video. 3.2 W eakly-Supervised Audio-Visual Ob ject Learning F ramew ork Multiple ob jects can app ear in an unlab eled video at the same time, and sim- ilarly in the asso ciated audio track. At this p oint, it is unknown whic h of the audio bases extracted (columns of W ) go with which visible ob ject(s) in the visual frames. T o disco ver the association, we devise a m ulti-instance multi-label learning (MIML) framew ork that matc hes audio bases with the detected ob jects. As shown in Fig. 2, given an unlab eled video, we extract its visual frames and the corresp onding audio trac k. As defined abov e, w e perform NMF inde- p enden tly on the magnitude sp etrogram of each audio track and obtain M basis Learning to Separate Ob ject Sounds b y W atc hing Unlab eled Video 7 basis vector ... FC + BN + ReLU … shared weights ... i th basis 1024 x M depth = M K x L Max-Pooling over sub-concepts M x L Max-Pooling over bases L 1x1 Convolution Reshape depth = M K x L x M BN + ReLU (K x L) x M Audio Basis-Object Relation Map basis vector FC + BN + ReLU ... basis vector FC + BN + ReLU basis vector FC + BN + ReLU 1024 F Fig. 3. Our deep multi-instance multi-label net work tak es a bag of M audio basis v ectors for each video as input, and giv es a bag-lev el prediction of the ob jects presen t in the audio. The visual predictions from an ImageNet-trained CNN are used as weak “lab els” to train the net work with unlab eled video. v ectors from each video. F or the visual frames, we use an ImageNet pre-trained ResNet-152 net work [34] to make ob ject category predictions, and we max-p o ol o ver predictions of all frames to obtain a video-lev el prediction. The top lab els (with class probability larger than a threshold) are used as weak “labels” for the unlab eled video. The extracted basis vectors and the visual predictions are then fed into our MIML learning framew ork to discov er asso ciations, as defined next. 3.3 Deep Multi-Instance Multi-Lab el Net work W e cast the audio basis-ob ject disentangling task as a m ulti-instance multi-label (MIML) learning problem. In single-lab el MIL [17], one has bags of instances, and a bag lab el indicates only that some num b er of the instances within it ha ve that lab el. In MIML, the bag can hav e m ultiple lab els, and there is ambiguit y ab out which labels go with which instances in the bag. W e design a deep MIML netw ork for our task. A bag of basis v ectors { B } is the input to the netw ork, and within each bag there are M basis vectors B i with i ∈ [1 , M ] extracted from one video. The “lab els” are only av ailable at the bag level, and come from noisy visual pr e dictions of the ResNet-152 netw ork trained for ImageNet recognition. The labels for each instance (basis vector) are unkno wn. W e incorp orate MIL in to the deep net w ork b y modeling that there m ust b e at le ast one audio basis vector from a certain ob ject that constitutes a p ositiv e bag, so that the net work can output a correct bag-lev el prediction that agrees with the visual prediction. Fig. 3 shows the detailed netw ork arc hitecture. M basis vectors are fed through a Siamese Net work of M branches with shared w eights. The Siamese net work is designed to reduce the dimension of the audio frequency bases and learns the audio sp ectral patterns through a fully-connected lay er (FC) follow ed b y batch norm (BN) [42] and a rectified linear unit (ReLU). The output of all branc hes are stack ed to form a 1024 × M dimension feature map. Each slice of the feature map represents a basis vector with reduced dimension. Inspired b y [24], each lab el is decomp osed to K sub-concepts to capture latent seman- tic meanings. F or example, for drum, the latent sub-concepts could b e differen t t yp es of drums, suc h as b ongo drum, tabla, and so on. The stack ed output from the Siamese net work is forwarded through a 1 × 1 Conv olution-BN-ReLU mod- 8 Ruohan Gao, Rogerio F eris, Kristen Grauman ule, and then reshap ed into a feature cub e of dimension K × L × M , where K is the num b er of sub-concepts, L is the num b er of ob ject categories, and M is the num b er of audio basis vectors. The depth of the tensor equals the num b er of input basis vectors, with each K × L slice corresp onding to one particular basis. The activ ation score of the ( k , l , m ) th no de in the cub e represen ts the matching score of the k th sub-concept of the l th lab el for the m th basis v ector. T o get a bag-lev el prediction, w e conduct t wo max-po oling op erations. Max p ooling in deep MIL [24, 80, 81] is t ypically used to iden tify the positive instances within an aggregated bag. Our first p ooling is ov er the sub-concept dimension ( K ) to generate an audio basis-ob ject relation map. The second max-p o oling op erates ov er the basis dimension ( M ) to produce a video-level prediction. W e use the follo wing multi-label hinge loss to train the netw ork: L ( A, V ) = 1 L L X i =1 ,i 6 = V j |V | X j =1 max[0 , 1 − ( A V j − A i )] , (3) where A ∈ R L is the output of the MIML netw ork, and represents the ob ject predictions base d on audio bases; V is the set of visual ob jects, namely the indices of the |V | ob jects predicted by the ImageNet-trained mo del. The loss function encourages the prediction scores of the correct classes to be larger than incorrect ones by a margin of 1. W e find these po oling steps in our MIML form ulation are v aluable to learn accurately from the ambiguously “lab eled” bags (i.e., the videos and their ob ject predictions); see Supp. 3.4 Disen tangling P er-Ob ject Bases The MIML netw ork ab o ve learns from audio-visual associations, but does not itself disentangle them. The sounds in the audio track and ob jects present in the visual frames of unlab eled video are diverse and noisy (see Sec. 4.1 for details ab out the data w e use). The audio basis vectors extracted from each video could b e a component shared b y multiple ob jects, a feature comp osed of them, or ev en completely unrelated to the predicted visual ob jects. The visual predictions from ResNet-152 netw ork give appro ximate predictions ab out the ob jects that could b e present, but are certainly not alw ays reliable (see Fig. 5 for examples). Therefore, to collect high quality represen tative bases for each ob ject cate- gory , we use our trained deep MIML net work as a to ol. The audio basis-ob ject relation map after the first p o oling lay er of the MIML netw ork pro duces match- ing scores across all basis vectors for all ob ject lab els. W e p erform a dimension- wise softmax o ver the basis dimension ( M ) to normalize ob ject matching scores to probabilities along eac h basis dimension. By examining the normalized map, w e can disco ver links from bases to ob jects. W e only collect the k ey bases that trigger the prediction of the correct ob jects (namely , the visually detected ob- jects). F urther, we only collect bases from an unlab eled video if multiple basis v ectors strongly activ ate the correct ob ject(s). See Supp. for details, and see Fig. 5 for examples of t ypical basis-ob ject relation maps. In short, at the end of this phase, we hav e a set of audio bases for each visual ob ject, discov ered purely from unlab eled video and mixed single-channel audio. Learning to Separate Ob ject Sounds b y W atc hing Unlab eled Video 9 Novel Test Video Supervised Source Separation Audio Visual Retrieve Violin Bases Retrieve Piano Bases Audio Spectrogram STFT Violin Detected Piano Detected Violin Sound Piano Sound Fig. 4. T esting pipeline. Given a no vel test video, we detect the ob jects present in the visual frames, and retrieve their learnt audio bases. The bases are collected to form a fixed basis dictionary W with whic h to guide NMF factorization of the test video’s audio channel. The basis vectors and the learned activ ation scores from NMF are finally used to separate the sound for eac h detected ob ject, resp ectiv ely . 3.5 Ob ject Sound Separation for a Nov el Video Finally , we present our pro cedure to separate audio sources in new videos. As sho wn in Fig. 4, given a no vel test video q , we obtain its audio magnitude sp ectrogram V ( q ) through STFT and detect ob jects using the same ImageNet- trained ResNet-152 netw ork as b efore. Then, we retrieve the learn t audio basis v ectors for each detected ob ject, and use them to “guide” NMF-based audio source separation. Sp ecifically , V ( q ) ≈ ˜ V ( q ) = W ( q ) H ( q ) = h W ( q ) 1 · · · W ( q ) j · · · W ( q ) J i h H ( q ) 1 · · · H ( q ) j · · · H ( q ) J i T , (4) where J is the num b er of detected ob jects ( J potential sound sources), and W ( q ) j con tains the retrieved bases corresponding to ob ject j in input video q . In other words, we concatenate the basis vectors learnt for each detected ob ject to construct the basis dictionary W ( q ) . Next, in the NMF algorithm, we hold W ( q ) fixed, and only estimate activ ations H ( q ) with multiplicativ e up date rules. Then w e obtain the sp ectrogram corresp onding to each detected ob ject b y V ( q ) j = W ( q ) j H ( q ) j . W e reconstruct the individual (compressed) audio source signals by soft masking the mixture sp ectrogram: V j = V ( q ) j P J i =1 V ( q ) i V , (5) where V contains b oth magnitude and phase. Finally , we p erform ISTFT on V j to reconstruct the audio signals for each detected ob ject. If a detected ob ject do es not mak e sound, then its estimated activ ation scores will be lo w. This phase can b e seen as a self-sup ervised form of NMF, where the detected visual ob jects rev eal which bases (previously discov ered from unlab eled videos) are relev ant to guide audio separation. 10 Ruohan Gao, Rogerio F eris, Kristen Grauman 4 Exp erimen ts W e no w v alidate our approach and compare to existing metho ds. 4.1 Datasets W e consider tw o public video datasets: AudioSet [29] and the b enc hmark videos from [43, 53, 62], whic h we refer to as A V-Bench. A udioSet-Unlab ele d: W e use AudioSet [29] as the source of unlabeled training videos 2 . The dataset consists of short 10 second video clips that often concen trate on one ev ent. How ev er, our metho d mak es no particular assumptions about using short or trimmed videos, as it learns bases in the frequency domain and p ools b oth visual predictions and audio bases from all frames. The videos are c hallenging: many are of p oor quality and unrelated to ob ject sounds, such as silence, sine w av e, ec ho, infrasound, etc. As is t ypical for related experimentation in the literature [4, 85], w e filter the dataset to those likely to displa y audio-visual ev ents. In particular, w e extract musical instruments, animals, and v ehicles, whic h span a broad set of unique sound-making ob jects. See Supp. for a complete list of the ob ject categories. Using the dataset’s provided split, we randomly reserv e some videos from the “unbalanced” split as v alidation data, and the rest as the training data. W e use videos from the “balanced” split as test data. The final AudioSet-Unlab eled data contains 104k, 2.9k, 1k / 22k, 1.2k, 0.5k / 58k, 2.4k, 0.6k video clips in the train, v al, test splits, for the instrumen ts, animals, and v ehicles, resp ectiv ely . A udioSet-SingleSour c e: T o facilitate quantitativ e ev aluation (cf. Sec. 4.4), w e construct a dataset of AudioSet videos containing only a single sounding ob ject. W e manually examine videos in the v al/test set, and obtain 23 suc h videos. There are 15 m usical instrumen ts (accordion, acoustic guitar, banjo, cello, drum, electric guitar, flute, french horn, harmonica, harp, marimba, piano, saxophone, trom b one, violin), 4 animals (cat, dog, c hick en, frog), and 4 v ehicles (car, train, plane, motorbike). Note that our metho d never uses these samples for training. A V-Bench: This dataset contains the benchmark videos (Violin Y anni, W o oden Horse, and Guitar Solo) used in previous studies [43, 53, 62]. 4.2 Implemen tation Details W e extract a 10 second audio clip and 10 frames (every 1s) from each video. F ol- lo wing common settings [3], the audio clip is resampled at 48 kHz, and con v erted in to a magnitude spectrogram of size 2401 × 202 through STFT of windo w length 0.1s and half window ov erlap. W e use the NMF implementation of [26] with KL div ergence and the multiplicativ e update solver. W e extract M = 25 basis v ec- tors from each audio. All video frames are resized to 256 × 256, and 224 × 224 cen ter crops are used to make visual predictions. W e use all relev ant ImageNet categories and group them into 23 classes by me rging the p osteriors of similar categories to roughly align with the AudioSet categories; see Supp. A softmax 2 AudioSet offers noisy video-level audio class annotations. How ever, w e do not use an y of its lab el information. Learning to Separate Ob ject Sounds b y W atc hing Unlab eled Video 11 is finally p erformed on the video-lev el ob ject prediction scores, and classes with probabilit y greater than 0.3 are k ept as w eak labels for MIML training. The deep MIML netw ork is implemented in PyT orch with F = 2 , 401, K = 4, L = 25, and M = 25. W e rep ort all results with these settings and did not try other v alues. The netw ork is trained using Adam [48] with weigh t decay 10 − 5 and batch size 256. The starting learning rate is set to 0.001, and decreased by 6% ev ery 5 ep ochs and trained for 300 ep o c hs. 4.3 Baselines W e compare to sev eral existing metho ds [47, 55, 62, 72] and multiple baselines: MF CC Unsup ervise d Sep ar ation [72]: This is an off-the-shelf unsupervised audio source separation metho d. The separated channels are first conv erted into Mel frequency cepstrum coefficients (MFCC), and then K-means clustering is used to group separated c hannels. This is an established pip eline in the litera- ture [31, 41, 44, 75], making it a go od representativ e for comparison. W e use the publicly a v ailable co de 3 . A V-L o c [62], JIVE [55], Sp arse CCA [47]: W e refer to results rep orted in [62] for the A V-Benc h dataset to compare to these metho ds. A udioSet Sup ervise d Upp er-Bound: This baseline uses AudioSet ground- truth lab els to train our deep MIML net work. AudioSet lab els are organized in an on tology and each video is lab eled by man y categories. W e use the 23 lab els aligned with our subset (15 instrumen ts, 4 animals, and 4 v ehicles). This baseline serv es as an upp er-b ound. K-me ans Clustering Unsup ervise d Sep ar ation: W e use the same num b er of basis vectors as our metho d to initialize the W matrix, and p erform unsup er- vised NMF. K-means clustering is then used to group separated channels, with K equal to the n umber of ground-truth sources. The sound sources are separated b y aggregating the channel spectrograms b elonging to eac h cluster. Visual Exemplar for Sup ervise d Sep ar ation: W e recognize ob jects in the frames, and retrieve bases from an exemplar video for each detected ob ject class to supervise its NMF audio source separation. An exemplar video is the one that has the largest confidence score for a class among all unlab eled training videos. Unmatche d Bases for Sup ervise d Sep ar ation: This baseline is the same as our metho d except that it retriev es bases of the wrong class (at random from classes absen t in the visual prediction) to guide NMF audio source separation. Gaussian Bases for Sup ervise d Sep ar ation: W e initialize the weigh t ma- trix W randomly using a Gaussian distribution, and then p erform sup ervised audio source separation (with W fixed) as in Sec. 3.5. 4.4 Quan titative Results Visual ly-aide d audio sour c e sep ar ation F or “in the wild” unlab eled videos, the ground-truth of separated audio sources nev er exists. Therefore, to allow quan ti- tativ e ev aluation, w e create a test set consisting of com bined single-source videos, 3 https://github.com/interactiveaudiolab/nussl 12 Ruohan Gao, Rogerio F eris, Kristen Grauman Instrumen t P air Animal Pair V ehicle P air Cross-Domain Pair Upp er-Bound 2.05 0.35 0.60 2.79 K-means Clustering -2.85 -3.76 -2.71 -3.32 MF CC Unsupervised [72] 0.47 -0.21 -0.05 1.49 Visual Exemplar -2.41 -4.75 -2.21 -2.28 Unmatc hed Bases -2.12 -2.46 -1.99 -1.93 Gaussian Bases -8.74 -9.12 -7.39 -8.21 Ours 1.83 0.23 0.49 2.53 T able 1. W e pairwise mix the sounds of tw o single source AudioSet videos and p erform audio source separation. Mean Signal to Distortion Ratio (SDR in dB, higher is b etter) is rep orted to represent the ov erall separation performance. follo wing [8]. In particular, we take pairwise video combinations from AudioSet- SingleSource (cf. Sec. 4.1) and 1) comp ound their audio trac ks by normalizing and mixing them and 2) comp ound their visual channels b y max-p ooling their resp ectiv e ob ject predictions. Each comp ound video is a test video; its reserv ed source audio trac ks are the ground truth for ev aluation of separation results. T o ev aluate source separation quality , w e use the widely used BSS-EV AL to olbox [74] and rep ort the Signal to Distortion Ratio (SDR). W e p erform four sets of exp erimen ts: pairwise comp ound tw o videos of musical instrumen ts (In- strumen t Pair), t wo of animals (Animal P air), t wo of v ehicles (V ehicle Pair), and t wo cross-domain videos (Cross-Domain Pair). F or unsup ervised clustering sep- aration baselines, we ev aluate b oth p ossible matchings and take the b est results (to the baselines’ adv an tage). T able 1 sho ws the results. Our metho d significan tly outp erforms the Vi- sual Exemplar, Unmatched, and Gaussian baselines, demonstrating the p o wer of our learned bases. Compared with the unsup ervised clustering baselines, in- cluding [72], our metho d achiev es large gains. It also has the capability to matc h the separated source to acoustic ob jects in the video, whereas the baselines can only return ungrounded audio signals. W e stress that both our method as w ell as the baselines use no audio-based sup ervision. In contrast, other state-of-the-art audio source separation methods sup ervise the separation process with lab eled training data containing clean ground-truth sources and/or tailor separation to m usic/sp eec h (e.g., [36, 39, 54]). Such methods are not applicable here. Our MIML solution is fairly tolerant to imp erfect visual detection. Using w eak lab els from the ImageNet pre-trained ResNet-152 netw ork p erforms sim- ilarly to using the AudioSet ground-truth lab els with ab out 30% of the lab els corrupted. Using the true lab els (Upp er-Bound in T able 1) reveals the exten t to whic h b etter visual mo dels would impro ve results. Visual ly-aide d audio denoising T o facilitate comparison to prior audio-visual metho ds (none of which rep ort results on AudioSet), next we p erform the same exp erimen t as in [62] on visually-assisted audio denoising on A V-Bench. F ol- lo wing the same setup as [62], the audio signals in all videos are corrupted with white noise with the signal to noise ratio set to 0 dB. T o perform audio denoising, our method retrieves bases of detected ob ject(s) and appends the same n umber of randomly initialized bases as the weigh t matrix W to sup ervise NMF. The Learning to Separate Ob ject Sounds b y W atc hing Unlab eled Video 13 W o oden Horse Violin Y anni Guitar Solo Average Sparse CCA (Kidron et al. [47]) 4.36 5.30 5.71 5.12 JIVE (Lo c k et al. [55]) 4.54 4.43 2.64 3.87 Audio-Visual (Pu et al. [62]) 8.82 5.90 14.1 9.61 Ours 12.3 7.88 11.4 10.5 T able 2. Visually-assisted audio denoising results on three benchmark videos, in terms of NSDR (in dB, higher is better). randomly initialized bases are in tended to capture the noise signal. As in [62], w e rep ort Normalized SDR (NSDR), which measures the impro vemen t of the SDR b et w een the mixed noisy signal and the denoised sound. T able 2 sho ws the results. Note that the method of [62] is tailored to separate noise from the foreground sound b y exploiting the low-rank nature of background sounds. Still, our metho d outp erforms [62] on 2 out of the 3 videos, and per- forms muc h b etter than the other t wo prior audio-visual metho ds [47, 55]. Pu et al. [62] also exploit motion in man ually segmented regions. On Guitar Solo, the hand’s motion may strongly correlate with the sound, leading to their b etter p erformance. 4.5 Qualitativ e Results Next we pro vide qualitative results to illustrate the effectiveness of MIML train- ing and the success of audio source separation. Here we run our method on the real multi-source videos from AudioSet. They lack ground truth, but results can b e manually inspected for quality (see our video 4 ). Fig. 5 shows example unlab eled videos and their discov ered audio basis asso- ciations. F or each example, w e show sample video frames, ImageNet CNN visual ob ject predictions, as well as the corresponding audio basis-ob ject relation map predicted by our MIML netw ork. W e also rep ort the AudioSet audio ground truth lab els, but note that they are nev er seen by our method. The first example (Fig. 5-a) has b oth piano and violin in the visual frames, which are correctly de- tected b y the CNN. The audio also con tains the sounds of b oth instrumen ts, and our metho d appropriately activ ates bases for b oth the violin and piano. Fig. 5-b sho ws a man pla ying the violin in the visual frames, but b oth piano and violin are strongly activ ated. Listening to the audio, w e can hear that an out-of-view pla yer is indeed playing the piano. This example accentuates the adv antage of learning ob ject sounds from thousands of unlab eled videos; our metho d has learned the correct audio bases for piano, and “hears” it even though it is off-camera in this test video. Fig. 5-c/d show t wo examples with inaccurate visual predictions, and our model correctly activ ates the label of the ob ject in the audio. Fig. 5-e /f sho w t wo more examples of an animal and a v ehicle, and the results are similar. These examples suggest that our MIML netw ork has successfully learned the protot ypical sp ectral patterns of different sounds, and is capable of asso ciating audio bases with ob ject categories. Please see our video 4 for more results, where we use our system to detect and separate ob ject sounds for nov el “in the wild” videos. 4 http://vision.cs.utexas.edu/projects/separating_object_sounds/ 14 Ruohan Gao, Rogerio F eris, Kristen Grauman (a) visual prediction: violin & piano audio ground-truth: violin & piano (c) visual prediction: acoustic guitar & electric guitar audio ground-truth: drum & electric guitar (e) visual prediction: dog audio ground-truth: dog (f) visual prediction: train audio ground-truth: train (b) visual prediction: violin audio ground-truth: violin & piano (d) visual prediction: train audio ground-truth: acoustic guitar Fig. 5. In each example, we show the video frames, visual predictions, and the cor- resp onding basis-lab el relation maps predicted by our MIML netw ork. Please see our video 4 for more examples and the corresp onding audio tracks. Ov erall, the results are promising and constitute a noticeable step tow ards visually guided audio source separation for more realistic videos. Of course, our system is far from p erfect. The most common failure modes by our metho d are when the audio c haracteristics of detected ob jects are to o similar or ob jects are incorrectly detected (see Supp.). Though ImageNet-trained CNNs c an recognize a wide array of ob jects, we are nonetheless constrained b y its breadth. F urther- more, not all ob jects make sounds and not all sounds are within the camera’s view. Our results ab o ve suggest that learning can b e robust to suc h factors, y et it will b e imp ortant future work to explicitly mo del them. 5 Conclusion W e presen ted a framework to learn ob ject sounds from thousands of unlab eled videos. Our deep multi-instance multi-label net w ork automatically links audio bases to ob ject categories. Using the disentangled bases to sup ervise non-negative matrix factorization, our approach successfully separates ob ject-lev el sounds. W e demonstrate its effectiveness on div erse data and ob ject c ategories. Audio source separation will contin ue to b enefit many app ealing applications, e.g., au- dio even ts indexing/remixing, audio denoising for closed captioning, or instru- men t equalization. In future w ork, w e aim to explore w ays to lev erage scenes and am bient sounds, as w ell as integrate lo calized ob ject detections and motion. Ac knowledgemen ts: This research was supp orted in part b y an IBM F aculty Aw ard, IBM Op en Collab oration Research Aw ard, and DARP A Lifelong Learn- ing Machines. W e thank members of the UT Austin vision group and W enguang Mao, Y uzhong W u, Dongguang Y ou, Xingyi Zhou and Xinying Hao for helpful input. W e also gratefully ac knowledge a GPU donation from F aceb o ok. Learning to Separate Ob ject Sounds b y W atc hing Unlab eled Video 15 References 1. Afouras, T., Ch ung, J.S., Zisserman, A.: The conv ersation: Deep audio-visual sp eec h enhancement. arXiv preprint arXiv:1804.04121 (2018) 5 2. Ali, S., Shah, M.: Human action recognition in videos using kinematic features and m ultiple instance learning. P AMI (2010) 5 3. Arandjelo vic, R., Zisserman, A.: Look, listen and learn. In: ICCV (2017) 3, 10 4. Arandjelo vi´ c, R., Zisserman, A.: Ob jects that sound. arXiv preprint arXiv:1712.06651 (2017) 3, 10 5. Aytar, Y., V ondric k, C., T orralba, A.: Soundnet: Learning sound representations from unlab eled video. In: NIPS (2016) 3 6. Aytar, Y., V ondrick, C., T orralba, A.: See, hear, and read: Deep aligned represen- tations. arXiv preprint arXiv:1706.00932 (2017) 4 7. Barnard, K., Duygulu, P ., de F reitas, N., Blei, D., Jordan, M.: Matching words and pictures. JMLR (2003) 5 8. Barzela y , Z., Schec hner, Y.Y.: Harmon y in motion. In: CVPR (2007) 3, 4, 12 9. Berg, T., Berg, A., Edwards, J., Maire, M., White, R., T eh, Y., Learned-Miller, E., F orsyth, D.: Names and faces in the news. In: CVPR (2004) 5 10. Bilen, H., V edaldi, A.: W eakly sup ervised deep detection netw orks. In: CVPR (2016) 5 11. Bry an, N.: Interactiv e Sound Source Separation. Ph.D. thesis, Stanford Universit y (2014) 4 12. Casano v as, A.L., Monaci, G., V andergheynst, P ., Grib on v al, R.: Blind audio visual source separation based on sparse redundan t representations. IEEE T ransactions on Multimedia (2010) 3, 4 13. Chen, L., Sriv astav a, S., Duan, Z., Xu, C.: Deep cross-mo dal audio-visual genera- tion. In: on Thematic W orkshops of ACM Multimedia (2017) 5 14. Cin bis, R., V erbeek, J., Sc hmid, C.: W eakly supervised ob ject lo calization with m ulti-fold m ultiple instance learning. P AMI (2017) 5 15. Darrell, T., Fisher, J., Viola, P ., F reeman, W.: Audio-visual segmentation and the co c ktail party effect. In: ICMI (2000) 3, 4 16. Deselaers, T., Alexe, B., F errari, V.: W eakly supervised localization and learning with generic knowledge. IJCV (2012) 5 17. Dietteric h, T.G., Lathrop, R.H., Lozano-P´ erez, T.: Solving the multiple instance problem with axis-parallel rectangles. Artificial intelligence (1997) 7 18. Donah ue, J., Hendric ks, L., Guadarrama, S., Rohrbac h, M., V enugopalan, S., Saenk o, K., Darrell, T.: Long-term recurrent conv olutional netw orks for visual recognition and description. In: CVPR (2015) 5 19. Duong, N.Q., Ozero v, A., Chev allier, L., Sirot, J.: An in teractive audio source sep- aration framework based on non-negative matrix factorization. In: ICASSP (2014) 4 20. Duong, N.Q., Vincent, E., Grib on v al, R.: Under-determined reverberant audio source separation using a full-rank spatial cov ariance mo del. IEEE T ransactions on Audio, Sp eech, and Language Pro cessing (2010) 4 21. Duygulu, P ., Barnard, K., de F reitas, N., F orsyth, D.: Ob ject recognition as ma- c hine translation: learning a lexicon for a fixed image vocabulary . In: eccv (2002) 5 22. Ellis, D.P .W.: Prediction-driven computational auditory scene analysis. Ph.D. the- sis, Massach usetts Institute of T echnology (1996) 4 16 Ruohan Gao, Rogerio F eris, Kristen Grauman 23. Ephrat, A., Mosseri, I., Lang, O., Dekel, T., Wilson, K., Hassidim, A., F reeman, W.T., Rubinstein, M.: Looking to listen at the co c ktail party: A sp eak er-indep enden t audio-visual mo del for sp eech separation. arXiv preprint arXiv:1804.03619 (2018) 5 24. F eng, J., Zhou, Z.H.: Deep miml net work. In: AAAI (2017) 7, 8 25. F ´ ev otte, C., Bertin, N., Durrieu, J.L.: Nonnegative matrix factorization with the itakura-saito divergence: With application to music analysis. Neural computation (2009) 2, 4, 6 26. F ´ ev otte, C., Idier, J.: Algorithms for nonnegative matrix factorization with the β -divergence. Neural computation (2011) 4, 6, 10 27. Fisher I II, J.W., Darrell, T., F reeman, W.T., Viola, P .A.: Learning joint statistical mo dels for audio-visual fusion and segregation. In: NIPS (2001) 3, 4 28. Gabba y , A., Shamir, A., Peleg, S.: Visual speech enhancemen t using noise-inv ariant training. arXiv preprint arXiv:1711.08789 (2017) 5 29. Gemmek e, J.F., Ellis, D.P ., F reedman, D., Jansen, A., Lawrence, W., Mo ore, R.C., Plak al, M., Ritter, M.: Audio set: An ontology and human-labeled dataset for audio ev ents. In: ICASSP (2017) 10 30. Griffin, D., Lim, J.: Signal estimation from modified short-time fourier transform. IEEE T ransactions on Acoustics, Sp eec h, and Signal Pro cessing (1984) 5, 6 31. Guo, X., Uhlich, S., Mitsufuji, Y.: Nmf-based blind source separation using a linear predictiv e coding error clustering criterion. In: ICASSP (2015) 4, 11 32. Harw ath, D., Glass, J.: Learning word-lik e units from joint audio-visual analysis. In: ACL (2017) 5 33. Harw ath, D., Recasens, A., Sur ´ ıs, D., Ch uang, G., T orralba, A., Glass, J.: Join tly disco vering visual ob jects and spoken words from raw sensory input. arXiv preprint arXiv:1804.01452 (2018) 5 34. He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: CVPR (2016) 7 35. Hennequin, R., Da vid, B., Badeau, R.: Score informed audio source separation using a parametric model of non-negative spectrogram. In: ICASSP (2011) 4 36. Hershey , J.R., Chen, Z., Le Roux, J., W atanab e, S.: Deep clustering: Discriminative em b eddings for segmentation and separation. In: ICASSP (2016) 4, 12 37. Hershey , J.R., Mov ellan, J.R.: Audio vision: Using audio-visual sync hron y to locate sounds. In: NIPS (2000) 3 38. Hofmann, T.: Probabilistic laten t seman tic indexing. In: In ternational A CM SIGIR Conference on Research and Developmen t in Information Retriev al (1999) 4 39. Huang, P .S., Kim, M., Hasegaw a-Johnson, M., Smaragdis, P .: Deep learning for monaural sp eec h separation. In: ICASSP (2014) 4, 12 40. Hyv¨ arinen, A., Oja, E.: Independent comp onen t analysis: algorithms and applica- tions. Neural netw orks (2000) 2, 4 41. Innami, S., Kasai, H.: Nmf-based en vironmental sound source separation using time-v arian t gain features. Computers & Mathematics with Applications (2012) 4, 11 42. Ioffe, S., Szegedy , C.: Batch normalization: Accelerating deep net work training b y reducing internal co v ariate shift. In: ICML (2015) 7 43. Izadinia, H., Saleemi, I., Shah, M.: Multimo dal analysis for iden tification and seg- men tation of mo ving-sounding ob jects. IEEE T ransactions on Multimedia (2013) 10 44. Jaisw al, R., FitzGerald, D., Barry , D., Co yle, E., Rick ard, S.: Clustering nmf basis functions using shifted nmf for monaural sound source separation. In: ICASSP (2011) 4, 11 Learning to Separate Ob ject Sounds b y W atc hing Unlab eled Video 17 45. Jh uo, I.H., Y e, G., Gao, S., Liu, D., Jiang, Y.G., Lee, D., Chang, S.F.: Discov- ering joint audio–visual co dew ords for video ev ent detection. Machine vision and applications (2014) 2 46. Karpath y , A., F ei-F ei, L.: Deep visual-semantic alignments for generating image descriptions. In: CVPR (2015) 5 47. Kidron, E., Sc hechner, Y.Y., Elad, M.: Pixels that sound. In: CVPR (2005) 3, 11, 13 48. Kingma, D., Ba, J.: Adam: A metho d for sto c hastic optimization. In: ICLR (2015) 11 49. Korbar, B., T ran, D., T orresani, L.: Co-training of audio and video representations from self-supervised temp oral synchronization. arXiv preprint (2018) 3 50. Le Magoarou, L., Ozerov, A., Duong, N.Q.: T ext-informed audio source separa- tion. example-based approach using non-negativ e matrix partial co-factorization. Journal of Signal Processing Systems (2015) 4 51. Lee, D.D., Seung, H.S.: Algorithms for non-negativ e matrix factorization. In: Ad- v ances in neural information pro cessing systems (2001) 4, 6 52. Li, B., Dinesh, K., Duan, Z., Sharma, G.: See and listen: Score-informed association of sound tracks to play ers in cham b er music p erformance videos. In: ICASSP (2017) 3, 4 53. Li, K., Y e, J., Hua, K.A.: What’s making that sound? In: ACMMM (2014) 10 54. Liutkus, A., Fitzgerald, D., Rafii, Z., P ardo, B., Daudet, L.: Kernel additiv e models for source separation. IEEE T ransactions on Signal Pro cessing (2014) 12 55. Lo c k, E.F., Hoadley , K.A., Marron, J.S., Nob el, A.B.: Joint and individual v ariation explained (jive) for in tegrated analysis of multiple data types. The annals of applied statistics (2013) 11, 13 56. Nak adai, K., Hidai, K.i., Okuno, H.G., Kitano, H.: Real-time sp eak er lo calization and sp eec h separation b y audio-visual integration. In: IEEE In ternational Confer- ence on Rob otics and Automation (2002) 4 57. Naphade, M., Smith, J.R., T esic, J., Chang, S.F., Hsu, W., Kennedy , L., Haupt- mann, A., Curtis, J.: Large-scale concept on tology for multimedia. IEEE multime- dia (2006) 2 58. Ow ens, A., Efros, A.A.: Audio-visual scene analysis with self-supervised multisen- sory features. arXiv preprin t arXiv:1804.03641 (2018) 5 59. Ow ens, A., Isola, P ., McDermott, J., T orralba, A., Adelson, E.H., F reeman, W.T.: Visually indicated sounds. In: CVPR (2016) 3, 5 60. Ow ens, A., W u, J., McDermott, J.H., F reeman, W.T., T orralba, A.: Ambien t sound pro vides supervision for visual learning. In: ECCV (2016) 3 61. P arekh, S., Essid, S., Ozerov, A., Duong, N.Q., P´ erez, P ., Ric hard, G.: Motion informed audio source separation. In: ICASSP (2017) 3, 4 62. Pu, J., P anagakis, Y., Petridis, S., P antic, M.: Audio-visual ob ject localization and separation using low-rank and sparsity . In: ICASSP (2017) 3, 4, 10, 11, 12, 13 63. Rahne, T., B¨ oc kmann, M., von Sp ec h t, H., Sussman, E.S.: Visual cues can mo du- late in tegration and segregation of ob jects in auditory scene analysis. Brain research (2007) 2 64. Riv et, B., Girin, L., Jutten, C.: Mixing audiovisual sp eec h pro cessing and blind source separation for the extraction of sp eec h signals from conv olutive mixtures. IEEE transactions on audio, sp eec h, and language pro cessing (2007) 3, 4 65. Sedighin, F., Babaie-Zadeh, M., Riv et, B., Jutten, C.: Tw o multimodal approaches for single microphone source separation. In: 24th European Signal Pro cessing Con- ference (2016) 4 18 Ruohan Gao, Rogerio F eris, Kristen Grauman 66. Simpson, A.J., Roma, G., Plumbley , M.D.: Deep k araoke: Extracting vocals from m usical mixtures using a con volutional deep neural net work. In: In ternational Con- ference on Laten t V ariable Analysis and Signal Separation. pp. 429–436. Springer (2015) 4 67. Smaragdis, P ., Casey , M.: Audio/visual independent components. In: In ternational Conference on Indep endent Component Analysis and Signal Separation (2003) 4 68. Smaragdis, P ., Ra j, B., Shashank a, M.: A probabilistic laten t v ariable mo del for acoustic mo deling. In: NIPS (2006) 4 69. Smaragdis, P ., Ra j, B., Shashank a, M.: Sup ervised and semi-sup ervised separation of sounds from single-channel mixtures. In: International Conference on Indepen- den t Component Analysis and Signal Separation (2007) 4 70. Smeaton, A.F., Over, P ., Kraaij, W.: Ev aluation campaigns and trecvid. In: Pro- ceedings of the 8th A CM international workshop on Multimedia information re- triev al (2006) 2 71. Sno ek, C.G., W orring, M.: Multimo dal video indexing: A review of the state-of- the-art. Multimedia to ols and applications (2005) 2 72. SPIER TZ, M.: Source-filter based clustering for monaural blind source separation. In: 12th International Conference on Digital Audio Effects (2009) 4, 11, 12 73. Vija yanarasimhan, S., Grauman, K.: Keyw ords to visual categories: Multiple- instance learning for w eakly sup ervised ob ject categorization. In: CVPR (2008) 5 74. Vincen t, E., Grib on v al, R., F´ ev otte, C.: P erformance measuremen t in blind audio source separation. IEEE transactions on audio, sp eec h, and language pro cessing (2006) 12 75. Virtanen, T.: Sound source separation using sparse co ding with temporal contin uity ob jective. In: International Computer Music Conference (2003) 4, 11 76. Virtanen, T.: Monaural sound source separation by nonnegativ e matrix factoriza- tion with temp oral con tinuit y and sparseness criteria. IEEE transactions on audio, sp eec h, and language processing (2007) 2, 4, 6 77. W ANG, B.: In vestigating single-c hannel audio source separation metho ds based on non-negativ e matrix factorization. In: ICA Researc h Netw ork International W ork- shop (2006) 4 78. W ang, L., Xiong, Y., Lin, D., Go ol, L.V.: Un trimmednets for weakly sup ervised action recognition and detection. In: CVPR (2017) 5 79. W ang, Z., Kuan, K., Ra v aut, M., Manek, G., Song, S., Y uan, F., Seokhw an, K., Chen, N., Enriquez, L.F.D., T uan, L.A., et al.: T ruly multi-modal y outub e-8m video classification with video, audio, and text. arXiv preprin t (2017) 2 80. W u, J., Y u, Y., Huang, C., Y u, K.: Deep multiple instance learning for image classification and auto-annotation. In: CVPR (2015) 8 81. Y ang, H., Zhou, J.T., Cai, J., Ong, Y.S.: Miml-fcn+: Multi-instance multi-label learning via fully conv olutional netw orks with privileged information. In: CVPR (2017) 8 82. Yilmaz, O., Rick ard, S.: Blind separation of sp eec h mixtures via time-frequency masking. IEEE T ransactions on signal pro cessing (2004) 4 83. Zhang, Z., W u, J., Li, Q., Huang, Z., T raer, J., McDermott, J.H., T enenbaum, J.B., F reeman, W.T.: Generative mo deling of audible shap es for ob ject p erception. In: ICCV (2017) 5 84. Zhao, H., Gan, C., Rouditchenk o, A., V ondrick, C., McDermott, J., T orralba, A.: The sound of pixels. arXiv preprint arXiv:1804.03160 (2018) 5 Learning to Separate Ob ject Sounds b y W atc hing Unlab eled Video 19 85. Zhou, Y., W ang, Z., F ang, C., Bui, T., Berg, T.L.: Visual to sound: Generating natural sound for videos in the wild. arXiv preprint arXiv:1712.01393 (2017) 5, 10 86. Zibulevsky , M., Pearlm utter, B.A.: Blind source separation by sparse decomp osi- tion in a signal dictionary . Neural computation (2001) 2, 4

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment