A GPU-enabled finite volume solver for large shallow water simulations

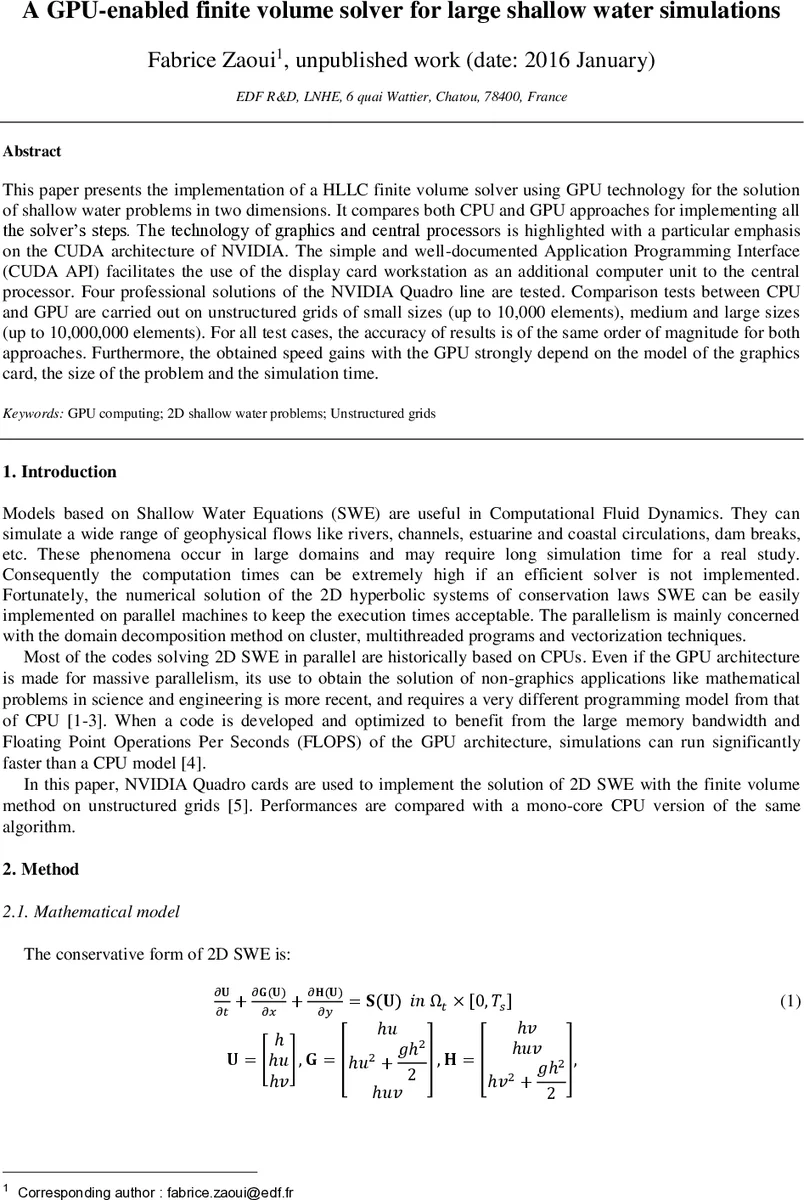

This paper presents the implementation of an HLLC finite volume solver using GPU technology for the solution of shallow water problems in two dimensions. It compares CPU and GPU approaches for implementing each of the solver’s steps. The technology of graphics and central processors is highlighted, with particular emphasis on NVIDIA’s CUDA architecture. The simple and well-documented Application Programming Interface (CUDA API) makes it straightforward to use a workstation’s graphics card as an additional compute unit alongside the central processor. Four professional cards from the NVIDIA Quadro line are tested. Comparison tests between CPU and GPU are carried out on unstructured grids of small size (up to 10,000 elements) and of medium and large size (up to 10,000,000 elements). For all test cases, the accuracy of the results is of the same order of magnitude for both approaches. Furthermore, the speed gains obtained with the GPU depend strongly on the model of the graphics card, the size of the problem and the simulation time.

💡 Research Summary

This paper presents a GPU‑accelerated finite‑volume solver for the two‑dimensional shallow‑water equations (SWE), built on the HLLC approximate Riemann solver. The authors implement the entire algorithm in both standard C (run on a single core of an Intel Xeon CPU) and CUDA C (for NVIDIA GPUs), and compare performance across four professional Quadro graphics cards: K600, K2000, K5200, and K6000.

The governing equations are written in conservative form and discretized on an unstructured triangular mesh using a cell‑centered finite‑volume approach. Spatial fluxes at each edge are evaluated with the HLLC solver, while a simple explicit Euler scheme advances the solution in time under a Courant‑Friedrichs‑Lewy (CFL) condition with a Courant number of 0.5. Source terms for topography and Manning friction are treated with a hydrostatic reconstruction and a semi‑implicit scheme, respectively.

In the GPU implementation, data are transferred from host to device only once, at the start of the run (mesh connectivity, initial water depth, bathymetry, etc.), and copied back only after the final time step. The two computational kernels, flux computation over edges and state update over cells, are launched as massively parallel loops, with threads organized into blocks and grids to exploit the thousands of CUDA cores. The authors emphasize minimizing PCI‑Express traffic, because host‑device transfers dominate runtime for small problems.
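The block‑and‑grid organization can be mimicked in plain C to show how each thread is mapped to one edge or one cell. The sketch below is a serial stand‑in, not CUDA code: `launch_1d`, `blocks_for`, and `count_visit` are hypothetical names, and the block size of 256 threads used in the usage note is an assumption, since the summary does not state the launch configuration.

```c
#include <assert.h>
#include <stddef.h>

/* Number of blocks so that blocks * threads_per_block >= n (ceiling division). */
size_t blocks_for(size_t n, size_t threads_per_block)
{
    assert(threads_per_block > 0);
    return (n + threads_per_block - 1) / threads_per_block;
}

/* Work done by one "thread" for element i. */
typedef void (*kernel_body)(size_t i, void *ctx);

/* Serial emulation of a 1D kernel launch over n elements. */
void launch_1d(size_t n, size_t threads_per_block, kernel_body body, void *ctx)
{
    size_t blocks = blocks_for(n, threads_per_block);
    for (size_t b = 0; b < blocks; ++b) {
        for (size_t t = 0; t < threads_per_block; ++t) {
            /* In CUDA this index is blockIdx.x * blockDim.x + threadIdx.x. */
            size_t i = b * threads_per_block + t;
            /* Guard: the last block is usually only partially filled. */
            if (i < n)
                body(i, ctx);
        }
    }
}

/* Example body: record that element i was processed. */
void count_visit(size_t i, void *ctx)
{
    ((int *)ctx)[i] += 1;
}
```

With 256 threads per block, `blocks_for` returns 5 for the 1 036‑cell mesh and 40 086 for the 10 261 932‑cell mesh. On a GPU the two loops disappear and every index `i` is handled by its own thread.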

Four test cases are defined, ranging from 1 036 to 10 261 932 triangular cells (approximately 1 k to 10 M elements). The physical scenario is a Gaussian water drop in a square basin with reflective boundaries, simulated for up to 2 400 seconds. All simulations are performed in double precision. Accuracy is verified by comparing the final water‑depth fields from CPU and GPU runs; differences are within numerical noise, confirming that the GPU version does not sacrifice solution fidelity.

Performance results show a clear dependence on problem size and GPU hardware. For the smallest mesh (≈1 k cells) the speed‑up is modest (≈1.5–2×) because data‑transfer overhead outweighs computational gains. As the mesh grows to 100 k cells, the K5200 achieves roughly 12× acceleration, while the top‑end K6000 reaches about 25×. The largest mesh (≈10 M cells) exceeds the memory capacity of the lower‑end GPUs; only the K5200 and K6000 (with 8 GB and 12 GB of VRAM, respectively) can execute it, and they maintain the highest speed‑ups. The authors also observe that longer simulation times tend to increase the average speed‑up, although occasional dips suggest thermal throttling or power‑limit effects on the GPUs.
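The dependence on problem size can be pictured with a toy model in which the GPU pays a fixed overhead (transfers, kernel launches) on top of compute time that shrinks by a constant factor. This is not the authors' analysis: the function `modeled_speedup` and all of its parameters are hypothetical, and any values passed to it are illustrative rather than measurements from the paper.

```c
#include <assert.h>

/* Modeled speed-up T_cpu / T_gpu for a mesh of n cells.
   cpu_s_per_cell : CPU time per cell over the whole run (hypothetical)
   kernel_gain    : ratio of CPU to GPU compute time (hypothetical)
   overhead_s     : fixed GPU-side cost independent of n (hypothetical) */
double modeled_speedup(double n, double cpu_s_per_cell,
                       double kernel_gain, double overhead_s)
{
    assert(n > 0.0 && cpu_s_per_cell > 0.0);
    assert(kernel_gain > 0.0 && overhead_s >= 0.0);
    double t_cpu = n * cpu_s_per_cell;
    double t_gpu = overhead_s + t_cpu / kernel_gain;
    return t_cpu / t_gpu;
}
```

In this model the speed‑up grows with `n` and approaches `kernel_gain` from below, because the fixed overhead is amortized over more cells. That is the qualitative trend reported above; the model says nothing about memory limits or thermal effects.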

The discussion highlights that a minimum problem size of roughly 10 k cells is required for a GPU to outperform a single CPU core meaningfully. Performance scales with the number of CUDA cores, memory bandwidth, and available VRAM. High‑end GPUs deliver larger absolute speed‑ups but also exhibit greater variability, indicating that workload balancing and possibly multi‑GPU strategies would be beneficial for robust performance.

In conclusion, the study demonstrates that CUDA‑based GPU acceleration can reduce shallow‑water simulation times by an order of magnitude or more, provided the mesh is sufficiently large and the GPU has adequate memory. The authors suggest future work on multi‑GPU versus multi‑CPU comparisons, exploration of additional physical processes, and optimization of long‑duration runs to mitigate the observed performance fluctuations.
