Approximate Nearest Neighbors in Limited Space

We consider the $(1+\epsilon)$-approximate nearest neighbor search problem: given a set $X$ of $n$ points in a $d$-dimensional space, build a data structure that, given any query point $y$, finds a point $x \in X$ whose distance to $y$ is at most $(1+\epsilon) \min_{x \in X} |x-y|$ for an accuracy parameter $\epsilon \in (0,1)$. Our main result is a data structure that occupies only $O(\epsilon^{-2} n \log(n) \log(1/\epsilon))$ bits of space, assuming all point coordinates are integers in the range $\{-n^{O(1)}, \ldots, n^{O(1)}\}$, i.e., the coordinates have $O(\log n)$ bits of precision. This improves over the best previously known space bound of $O(\epsilon^{-2} n \log(n)^2)$, obtained via the randomized dimensionality reduction method of Johnson and Lindenstrauss (1984). We also consider the more general problem of estimating all distances from a collection of query points to all data points $X$, and provide almost tight upper and lower bounds for the space complexity of this problem.

💡 Research Summary

The paper addresses the fundamental problem of (1 + ε)-approximate nearest neighbor (ANN) search in high‑dimensional Euclidean space under stringent space constraints. Given a dataset X of n points with integer coordinates bounded by poly(n) (hence each coordinate requires O(log n) bits), the goal is to build a compact sketch that enables a query algorithm to return, for any query point y, a point x ∈ X whose distance to y is at most (1 + ε) times the optimal distance. Prior work based on the Johnson‑Lindenstrauss (JL) dimensionality reduction achieved a space bound of O(ε⁻² n log² n) bits, which is suboptimal by a logarithmic factor.
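To make the size of the gap concrete, the two bounds can be compared numerically. The following is only an illustrative sketch: the function names are hypothetical, and the constants hidden by the O-notation are dropped, so only the ratio between the two expressions is meaningful, not the absolute bit counts.

```python
import math

def jl_bits(n, eps):
    """JL-based bound eps^-2 * n * log^2(n), with hidden constants dropped."""
    return n * math.log2(n) ** 2 / eps ** 2

def compressed_bits(n, eps):
    """New bound eps^-2 * n * log(n) * log(1/eps), with hidden constants dropped."""
    return n * math.log2(n) * math.log2(1 / eps) / eps ** 2

def improvement_ratio(n, eps):
    """Ratio of the two bounds; algebraically this is log(n) / log(1/eps)."""
    return jl_bits(n, eps) / compressed_bits(n, eps)

if __name__ == "__main__":
    for n in (2 ** 10, 2 ** 20, 2 ** 30):
        print(n, improvement_ratio(n, 0.25))
```

The ratio grows with log n for fixed ε, which is the logarithmic factor saved by the new construction.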

The authors present a new data structure whose space usage is O(ε⁻² n log n log(1/ε)) bits, essentially matching the lower bound up to a log(1/ε) factor. The construction builds on the hierarchical clustering tree introduced by Indyk and Wagner (IW17). The tree is formed by repeatedly merging clusters whose inter‑cluster distance is at most 2^ℓ at level ℓ, yielding a tree of depth O(log Φ), where Φ = n^{O(1)} bounds the coordinate range.
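The level-by-level merging can be sketched as follows. This is a simplified quadratic-time illustration, not the construction from the paper: it assumes Euclidean distance, treats the clusters at level ℓ as the groups obtained by merging any two clusters that contain points at distance at most 2^ℓ, and uses the hypothetical name `cluster_levels`.

```python
import math
from itertools import combinations

def cluster_levels(points):
    """Return the partition of point indices at levels 0, 1, 2, ...

    At level l, two clusters are merged if they contain points at
    distance <= 2**l. Stops once a single cluster remains.
    """
    n = len(points)
    parent = list(range(n))

    def find(i):
        # Union-find lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    # All pairwise distances, sorted so each pair is processed once.
    pairs = sorted((math.dist(points[i], points[j]), i, j)
                   for i, j in combinations(range(n), 2))
    levels, k, level = [], 0, 0
    while True:
        # Merge every pair whose distance fits under the current threshold.
        while k < len(pairs) and pairs[k][0] <= 2 ** level:
            parent[find(pairs[k][1])] = find(pairs[k][2])
            k += 1
        clusters = {}
        for i in range(n):
            clusters.setdefault(find(i), []).append(i)
        partition = sorted(clusters.values())
        levels.append(partition)
        if len(partition) <= 1:
            return levels
        level += 1

if __name__ == "__main__":
    pts = [(0, 0), (1, 0), (10, 0), (11, 0), (100, 0)]
    for l, part in enumerate(cluster_levels(pts)):
        print(l, part)
```

Consecutive levels with identical partitions correspond to tree nodes with a single child, which is what the path compression step targets.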

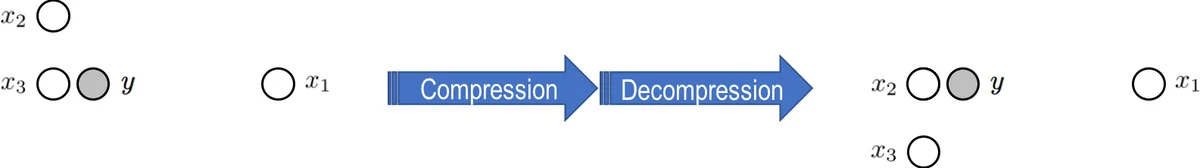

A key innovation is “top‑out compression”. For any node v, the authors define Λ(v) = ⌈log(Δ(v) / (2^{ℓ(v)} ε))⌉, where Δ(v) is the diameter of the cluster at v and ℓ(v) is its level. If a downward path of degree‑1 nodes (nodes with a single child) from a node u to v contains more than Λ(v) edges, all but the lowest Λ(v) edges are replaced by a single “long edge” annotated with the number of collapsed edges. This operation dramatically reduces the number of nodes: the compressed tree contains only O(n log(1/ε)) nodes (Lemma 3.2). The long edges are later used to reconstruct the necessary geometry without storing every intermediate node.
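The compression rule can be illustrated with a small calculation. This is a minimal sketch under stated assumptions: the logarithm is taken base 2, the cluster diameter is positive, negative values of Λ(v) are clamped to zero, and the function names `path_threshold` and `compress_path` are hypothetical.

```python
import math

def path_threshold(diameter, level, eps):
    """Lambda(v) = ceil(log2(diameter / (2**level * eps))).

    Assumes diameter > 0; clamping at 0 is a choice of this sketch.
    """
    return max(0, math.ceil(math.log2(diameter / (2 ** level * eps))))

def compress_path(path_edges, threshold):
    """Split a degree-1 path into (collapsed, kept) edge counts.

    If the path has more than `threshold` edges, only the lowest
    `threshold` edges are kept and the rest become one long edge
    annotated with the number of collapsed edges.
    """
    if path_edges > threshold:
        return path_edges - threshold, threshold
    return 0, path_edges

if __name__ == "__main__":
    lam = path_threshold(diameter=64, level=2, eps=0.25)
    print(lam, compress_path(10, lam))
```

Since each retained path segment has at most Λ(v) edges, the number of stored nodes depends on ε rather than on the full tree depth.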

To support ANN queries, the compressed tree must still retain enough absolute positional information. The authors therefore augment each long‑edge segment with two additional pieces of data: (1) the suffix of the path (i.e., the most significant bits of the positions of points in the collapsed subtree) and (2) a hashed representation of a representative point (the “center”) of the subtree. The center is chosen as the point with the smallest index in the subtree, and its location is quantized onto a coarse grid before being hashed with a pairwise‑independent hash function. This random hashing introduces a small failure probability, but by choosing the hash range appropriately the overall error probability can be bounded by δ.
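The quantize-then-hash step can be sketched as below. This is an illustration, not the paper's exact scheme: the grid cell width, the prime modulus, and the names `quantize` and `make_hash` are assumptions, and the hash is the standard linear family h(x) = (b + Σ aᵢxᵢ) mod p, which is pairwise independent over vectors whose coordinates are distinct modulo p.

```python
import random

P = (1 << 61) - 1  # Mersenne prime 2^61 - 1, assumed larger than the coordinate range

def quantize(point, cell):
    """Map an integer point to the index of its grid cell of side `cell`."""
    return tuple(c // cell for c in point)

def make_hash(dim, seed):
    """Draw one function from the linear family h(x) = (b + sum a_i x_i) mod P."""
    rng = random.Random(seed)
    a = [rng.randrange(P) for _ in range(dim)]
    b = rng.randrange(P)

    def h(cell_index):
        # Coordinates are reduced mod P so negative indices are handled.
        return (b + sum(ai * (xi % P) for ai, xi in zip(a, cell_index))) % P

    return h

if __name__ == "__main__":
    h = make_hash(dim=2, seed=0)
    center = (5, 6)
    query = (7, 4)
    # Points in the same grid cell always produce the same hash value.
    print(h(quantize(center, 4)) == h(quantize(query, 4)))
```

Two points in different cells collide only with probability 1/P under this family; a smaller hash range would trade space for a larger failure probability, which is the kind of trade-off the δ bound controls.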

During a query, the algorithm proceeds top‑down. At each node it uses the hashed center to decide whether the current subtree could contain an approximate nearest neighbor of the query point y. If the hash matches, the algorithm descends into that subtree; otherwise it discards it. Within a retained subtree, the algorithm reconstructs surrogate points using the stored quantized displacements (the “ingresses”) and computes actual distances only for a small candidate set. Because the compression guarantees that any cluster that could affect the nearest‑neighbor answer is kept intact, the algorithm returns a (1 + ε)-approximate nearest neighbor with probability at least 1 − δ, simultaneously for every query in a set of q query points.

The paper also studies a more general problem: estimating all distances between a set of q query points and the n data points (the “all‑cross‑distances” problem). By augmenting each subtree with distance sketches from KOR00 and JL84, the authors obtain a sketch of size O(ε⁻² n log n log(1/ε) + log(d Φ) log(q/δ) + poly(d, log Φ, log(q/δ), log(1/ε))) bits. They prove a nearly matching lower bound of Ω(ε⁻² n log n + log(d Φ) log(q/δ)) bits, showing that the leading terms of their construction are optimal up to the log(1/ε) factor.

Theoretical contributions are complemented by practical considerations. The authors note that a simplified algorithm from IRW17, which is already efficient in practice, can be enhanced with their additional hashing and quantization steps to support out‑of‑sample queries while preserving its empirical speed.

In summary, the paper achieves three major milestones: (1) it reduces the space complexity of ANN search from O(ε⁻² n log² n) to O(ε⁻² n log n log(1/ε)) bits, (2) it provides near‑optimal upper and lower bounds for the all‑cross‑distances problem, and (3) it offers a concrete pathway to implement these ideas in real systems where memory is a limiting factor. The work bridges a gap between theoretical optimality and practical applicability, making it a significant advance for large‑scale, high‑dimensional similarity search under tight memory budgets.
